
5 Restaurant SEO Tips Backed by Diners & Data

Getting diners through the door, then, is a game of ranking well for these types of queries.

Here are a few ways to do this, backed by concrete research and the real experiences of food bloggers and serial diners.

Google Business Profile is a free tool to manage your restaurant’s appearance on Google. You can’t rank in Google Maps or the “local pack” search results without one.

Given how many people use Google to find restaurants, there’s virtually nothing more important than keeping this optimized and updated.

If a listing contains few photos, no menu, or other scanty details, there is little chance that we might eat or order food from it.

If a restaurant or cafe is new, it should have pictures, detailed information, and a menu. Without these, I’m less inclined to give it a try.

Here are a few of the most important things to focus on:

Add your menu

People don’t always search by cuisine. Sometimes, they search for a specific dish. You’ll likely stand a better chance of showing up for their search if the dish they searched for is on the menu uploaded to your Business Profile.

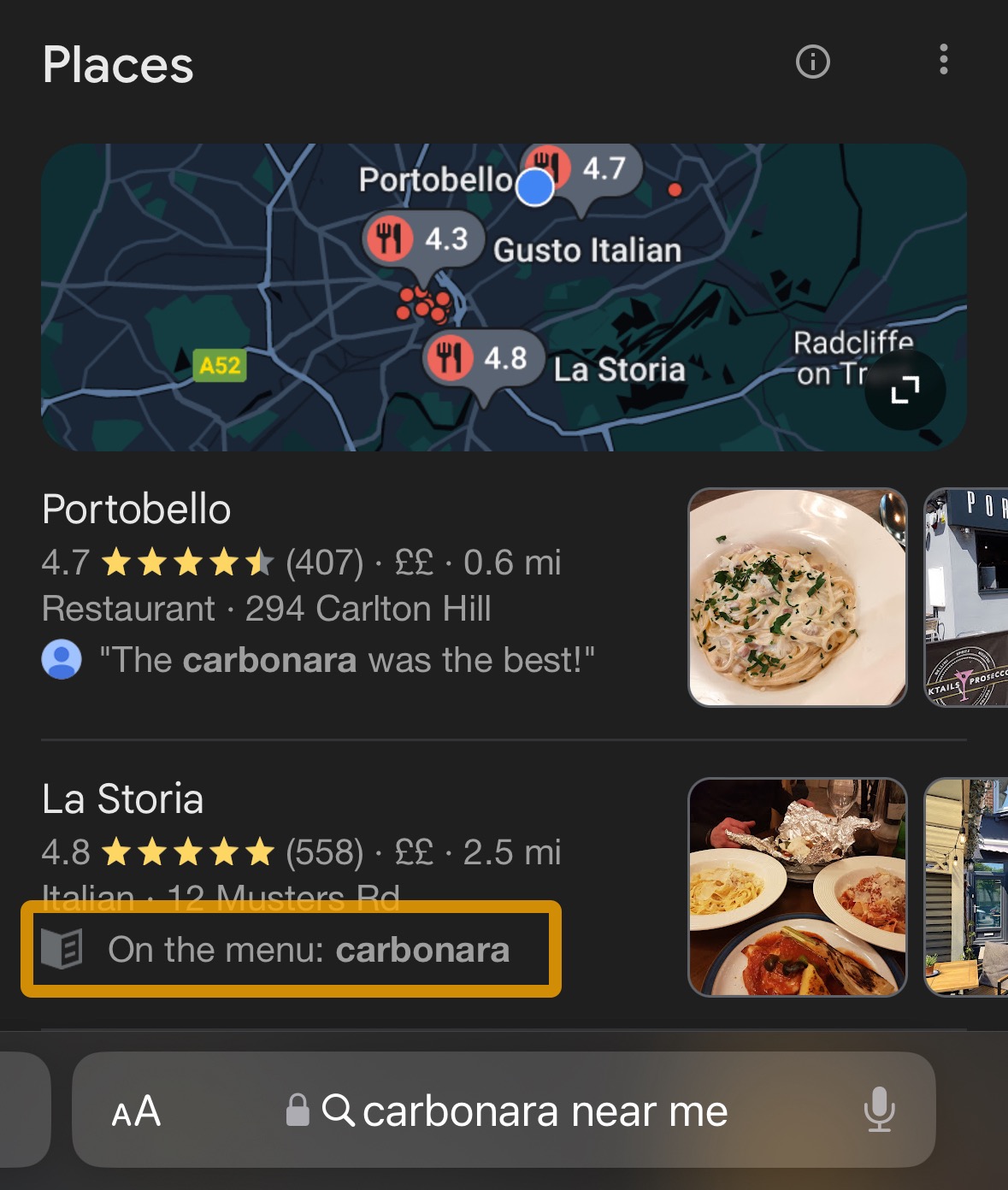

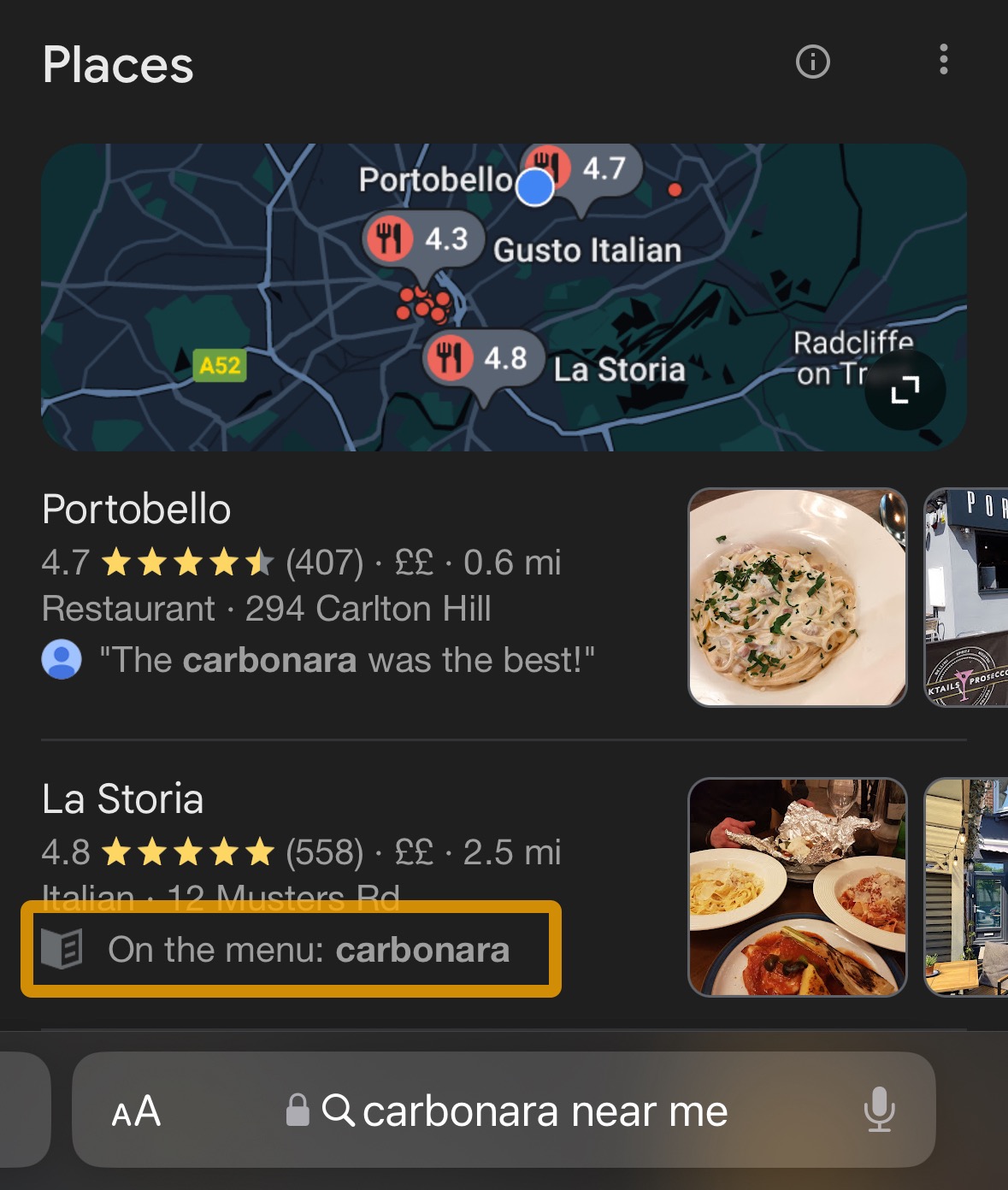

For example, if I search for “carbonara” in Google Maps, this nearby restaurant pops up because this dish is on its menu:

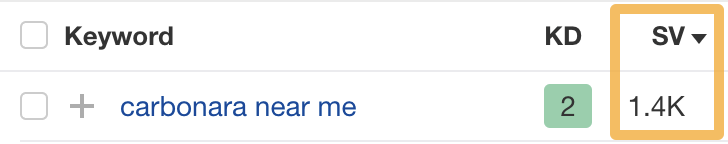

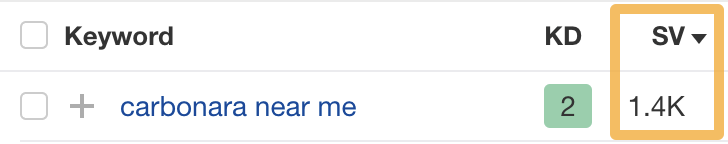

Given that thousands of people across the US search for “carbonara near me” (and many other dishes “near me”) every month, this is an easy but often overlooked way to show up for more searches.

Plus, even when people don’t search this way, 85% of them still use menus to pick new restaurants.

Add photos of food and drink

People eat with their eyes first, which is probably why Google says to add at least three photos of the food and drink you serve.

There’s data to back up the importance of photos, too:

- Businesses with photos receive 42% more requests for driving directions and 35% more website clicks. (Source)

- Businesses with more than 100 photos get 520% more calls, 2,717% more direction requests, and 1,065% more website clicks. (Source)

Add table reservations and/or online ordering

Having the option to book a table on your profile reduces friction and improves your chances of converting searchers to diners. There are plenty of third-party apps that can add this functionality, such as Resy, OpenTable, and Yelp.

For online ordering functionality, there are obvious options like Uber Eats and DoorDash. However, given that 40% of consumers prefer to order directly from a restaurant’s website over third-party apps, it pays to offer a more direct option.

Owner is one solution here. Their platform gives your customers the ability to order food for collection or delivery via your own website and mobile app. I asked their VP of Marketing, David Fallarme, why this is so important:

Your restaurant’s website should be your best sales channel. The best restaurants do this by making it easy for people to order directly from your website. When you do that, you’re satisfying searcher intent.

A painful mistake that restaurants make is having a great website, but then sending people to Uber Eats or DoorDash to make an order. Not only are you giving up commission fees on people who wanted to order directly, but you’re giving Google negative user experience signals. People come to your website, then close the tab because they don’t want to order from third parties, or they just bounce right off and complete a conversion on another website.

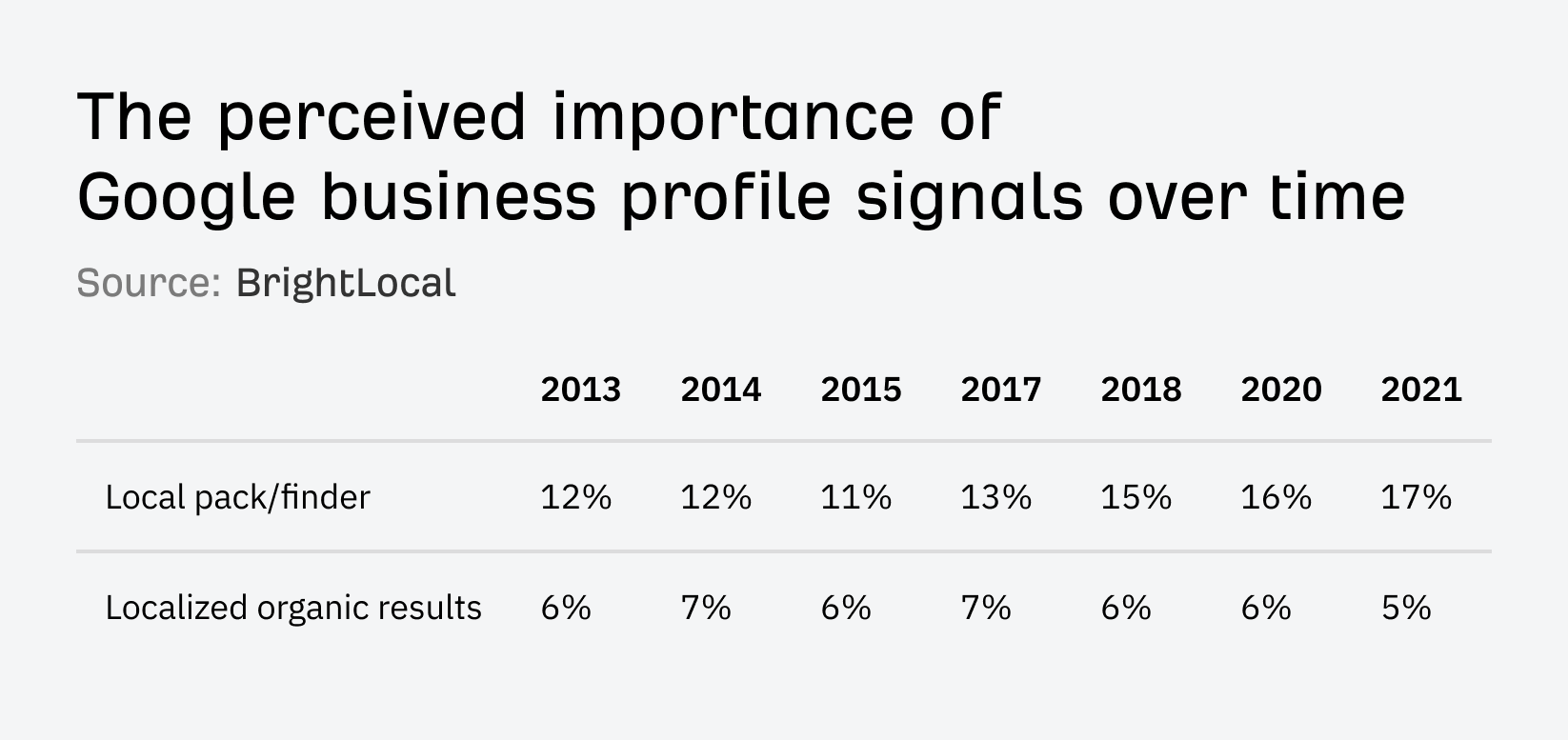

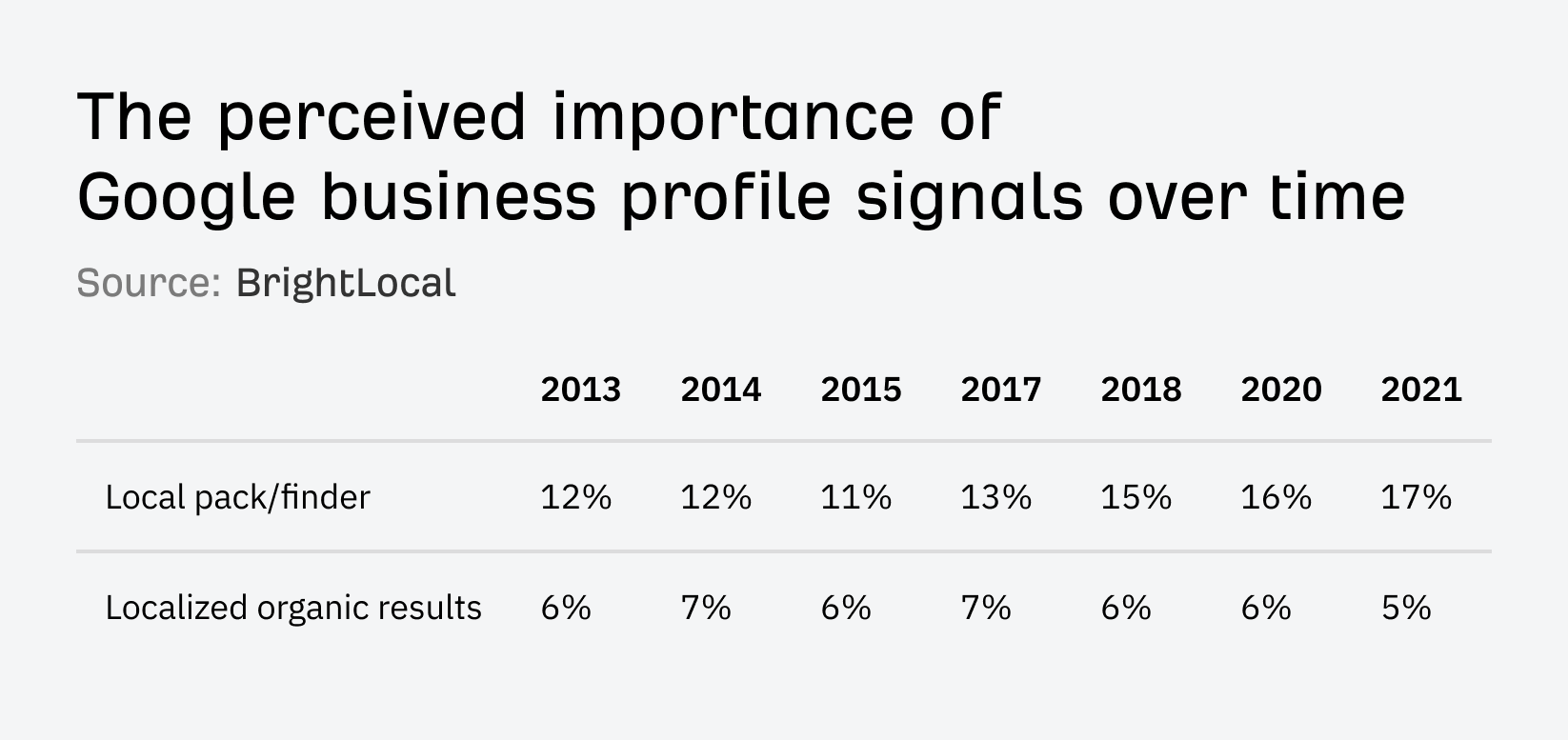

Reviews are believed to be the third most important ranking factor for “local pack” results. Their importance has also grown since 2013.

This is hardly surprising, given how many diners use reviews to help them decide where to eat.

Reviews are crucial in my decision-making process. I definitely lean towards restaurants with better or more reviews. Positive feedback from others gives me confidence in the quality of the food and service, which plays a big part in whether I’ll choose to dine there or order takeout.

While I may not read 20-30 reviews before I make a decision, I definitely would browse through the first few reviews to see what dishes are being recommended.

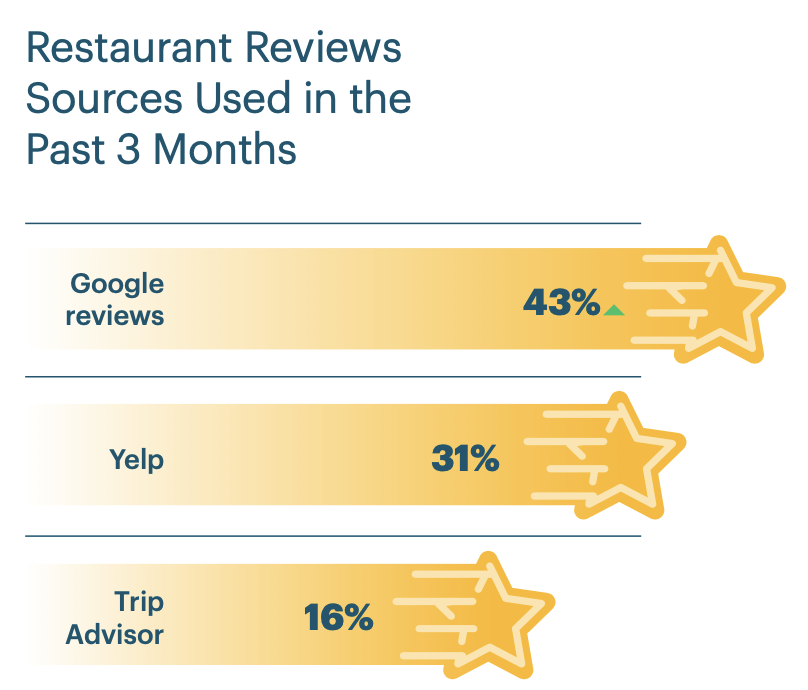

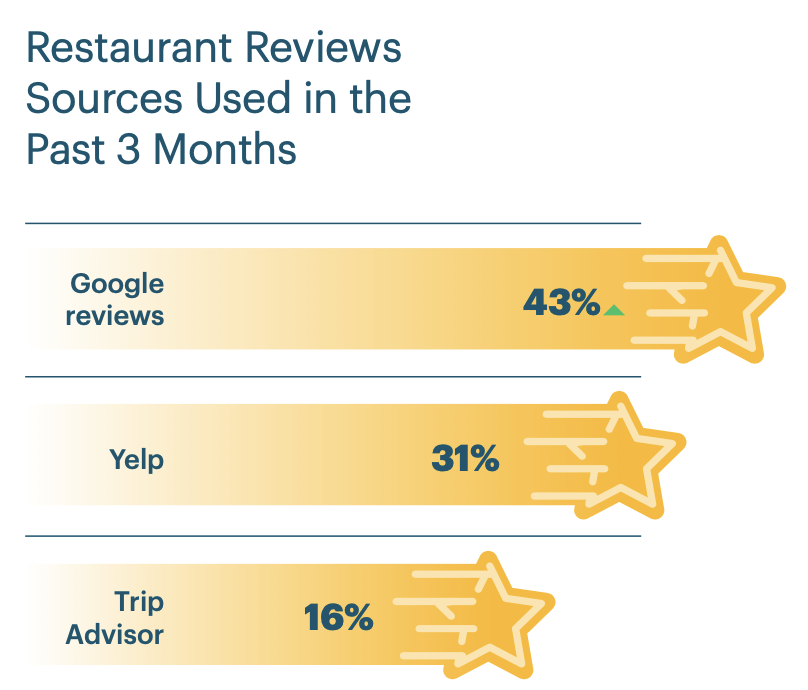

Where should you be trying to get them? Your Business Profile is the most crucial, with 43% of diners reading Google restaurant reviews. Yelp and TripAdvisor are also important, with 31% and 16% of diners reading reviews there.

Here are a couple of tips for getting more reviews:

Remind diners to leave a review after their meal

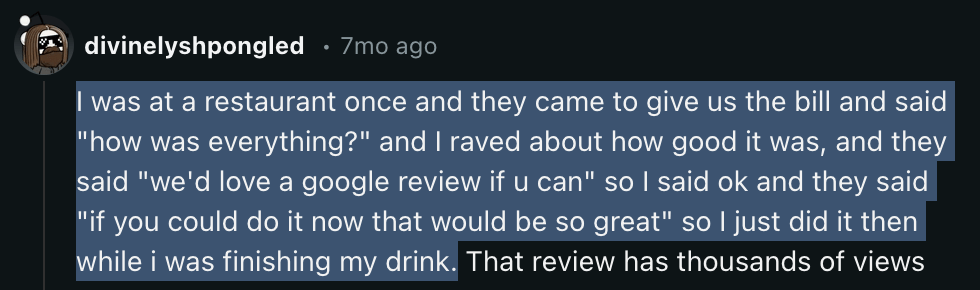

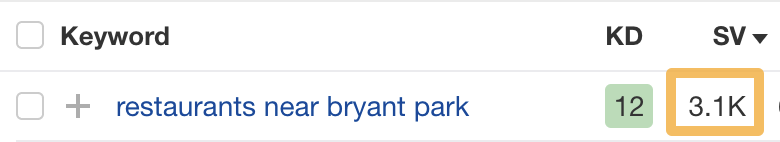

Here’s a genius way to do this I found on Reddit:

If that feels a little pushy for your tastes (it does for mine!), try placing cards on tables with a QR code link to review.

Here’s how to create one of these:

- Use Google’s instructions to find your Business Profile review link

- Search Google for a free QR code generator and paste in your link

- Print the code on cards
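If you’d rather script the first two steps, here’s a minimal sketch. It assumes the review link follows Google’s `writereview?placeid=` URL pattern, so check it against the link shown in your own Business Profile before printing anything. `build_review_link` and the placeholder Place ID are illustrative names, not part of any Google tool.

```python
from urllib.parse import urlencode

# Assumption: this is the "write a review" URL pattern. Verify it against
# the review link shown in your own Business Profile before using it.
REVIEW_BASE_URL = "https://search.google.com/local/writereview"


def build_review_link(place_id: str) -> str:
    """Return a review URL for the given Place ID (hypothetical helper)."""
    place_id = place_id.strip()
    if not place_id:
        raise ValueError("place_id must not be empty")
    # urlencode escapes any characters that are not safe in a query string.
    return f"{REVIEW_BASE_URL}?{urlencode({'placeid': place_id})}"


if __name__ == "__main__":
    # "YOUR_PLACE_ID" is a placeholder, not a real Place ID.
    print(build_review_link("YOUR_PLACE_ID"))
```

Paste the printed link into any QR code generator. If you happen to have the third-party `qrcode` package installed, `qrcode.make(link).save("review-qr.png")` should produce an image you can send to the printer.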

If you want to go the extra mile, you can also buy NFC-enabled cards, which diners can simply tap with their phones.

Sidenote.

If you have the option for diners to book a table online, check your reservation app. Some let you email diners to ask for a review the day after their reservation.

For takeout orders, you can print the QR code on the receipt or just stamp it on your takeout boxes.

Ask diners to review you online

69% of consumers can recall leaving a business review after being prompted by the brand within the last year.

Here are a few places you can do this:

- Email. Place a short call to review (with a link) at the end of marketing emails.

- Website. Link to your TripAdvisor, Yelp, and Google Profiles.

- Social media. Create a post asking for reviews every now and again. People following you have probably dined with you and enjoyed your food before, so they’re unlikely to leave bad reviews.

Diners don’t always search by cuisine or dish. Sometimes, they search for restaurants near a popular attraction they’re visiting.

Recently, a search for “restaurants near The Alhambra” on Google Maps helped us to find recommended spots and choose where to dine.

I often search for restaurants by proximity, especially when I’m in an unfamiliar area or after attending an event.

I often search for restaurants nearby when I’m out and about or traveling. I spend every summer traveling with my family, and many of our days are impromptu, so we rely on Google Maps to find nearby restaurants. Ironically, this is how we ended up at a Cat Cafe in Quebec City this summer!

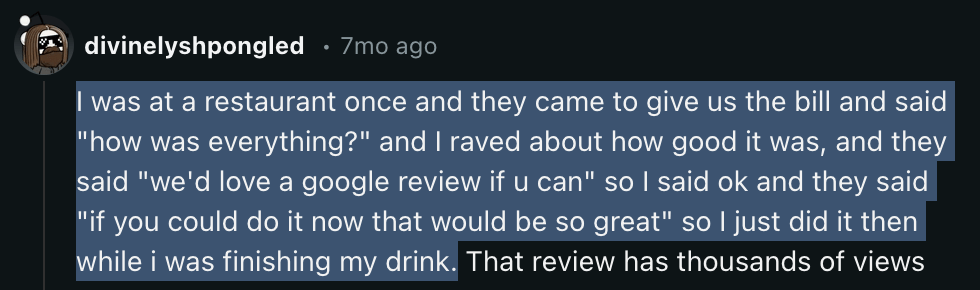

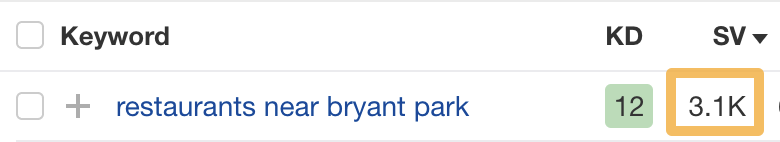

For example, Ahrefs tells us that there are an estimated 3,100 monthly searches for “restaurants near bryant park”:

Most top-ranking pages for these kinds of searches will be listicles from sites like TripAdvisor, OpenTable, and other local guides. But it is possible for restaurant websites to rank on the first page.

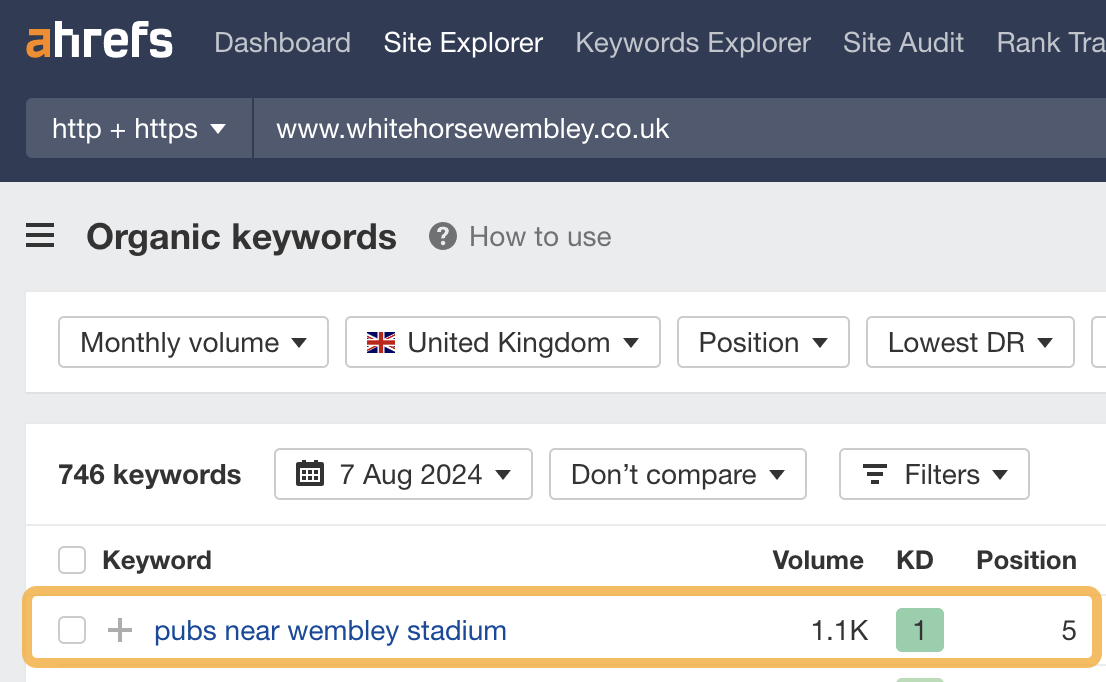

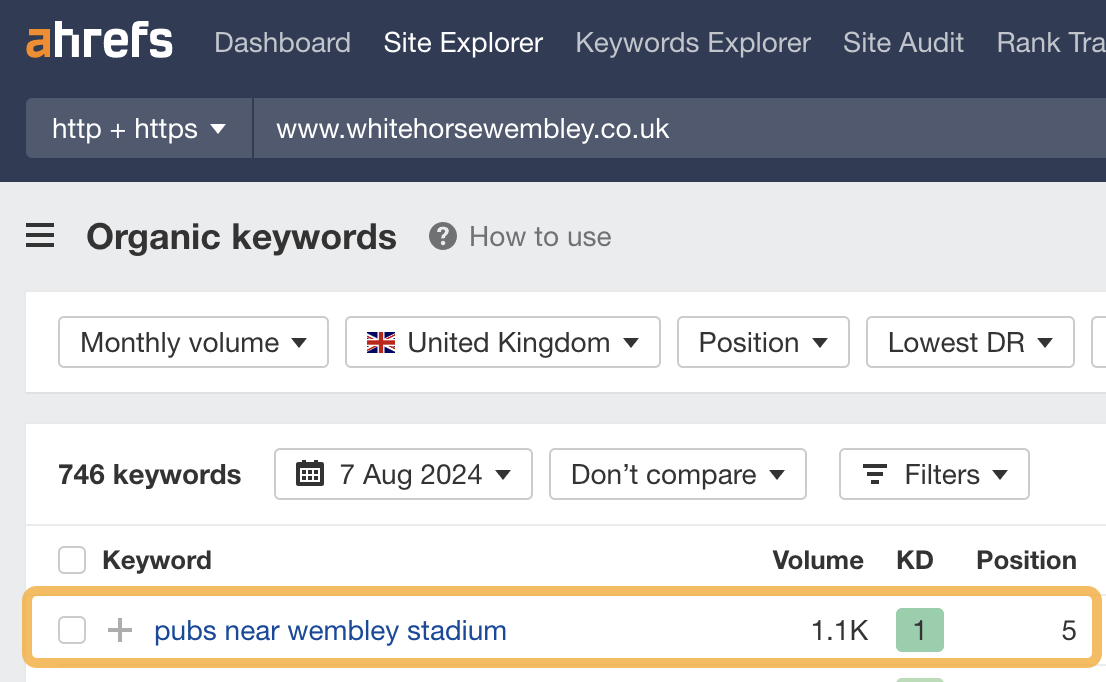

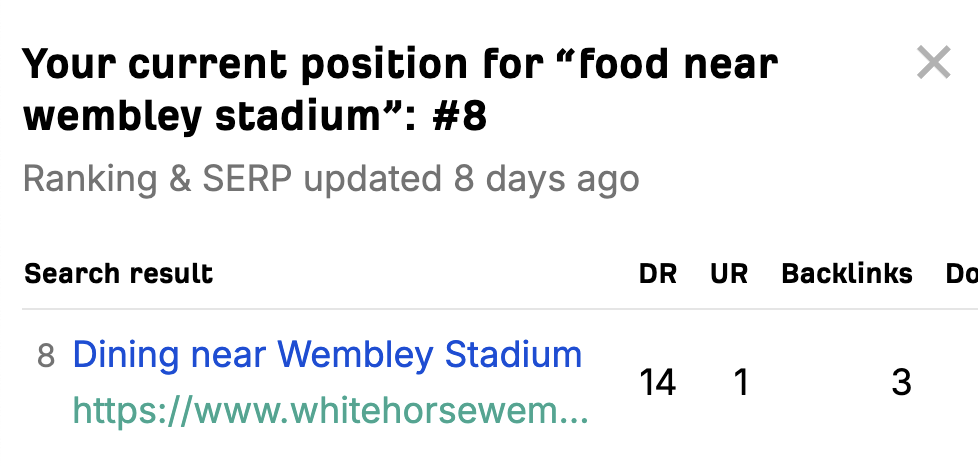

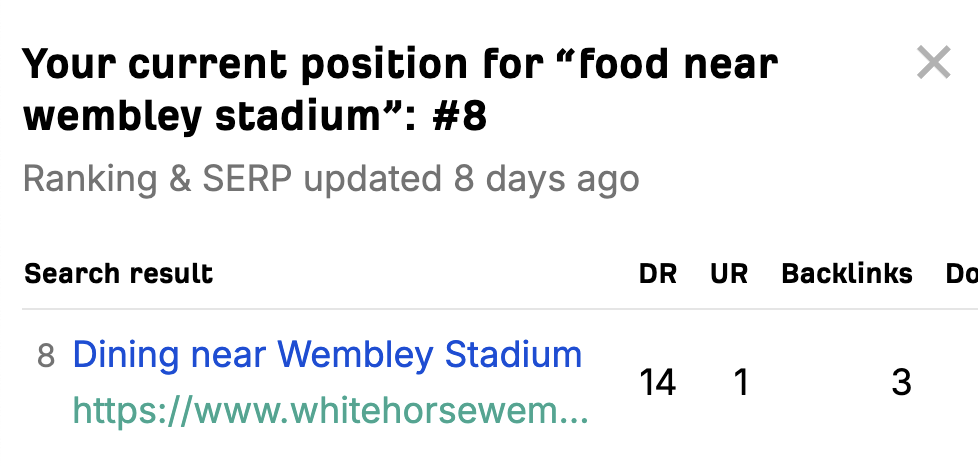

For example, this local pub ranks #5 for “pubs near wembley stadium”:

Here’s how to optimize for these searches in three steps:

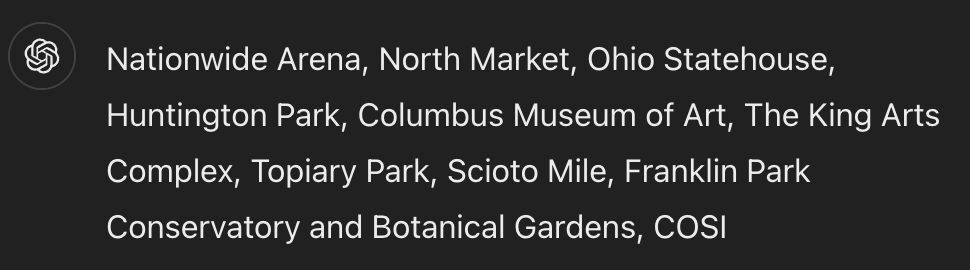

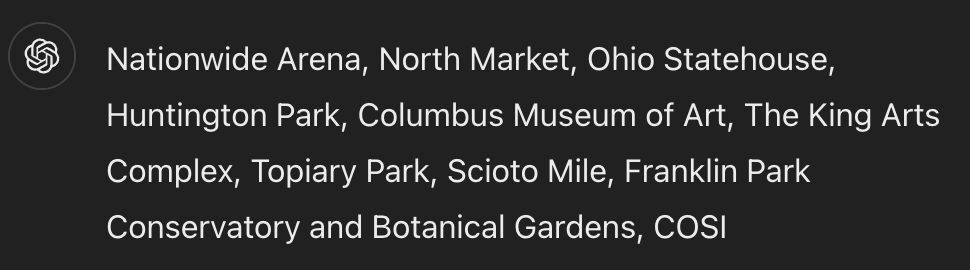

a) Find popular attractions nearby

Most restaurant owners will already know these. If that’s you, note them down and move on to the next step. If you’re doing SEO for a restaurant and have no idea what’s nearby, use this query to ask ChatGPT:

give me a list of 10 popular attractions near: [restaurant address]. don't give me any descriptions, just a comma-separated list of attraction names

It should give you something like this:

b) Check their popularity

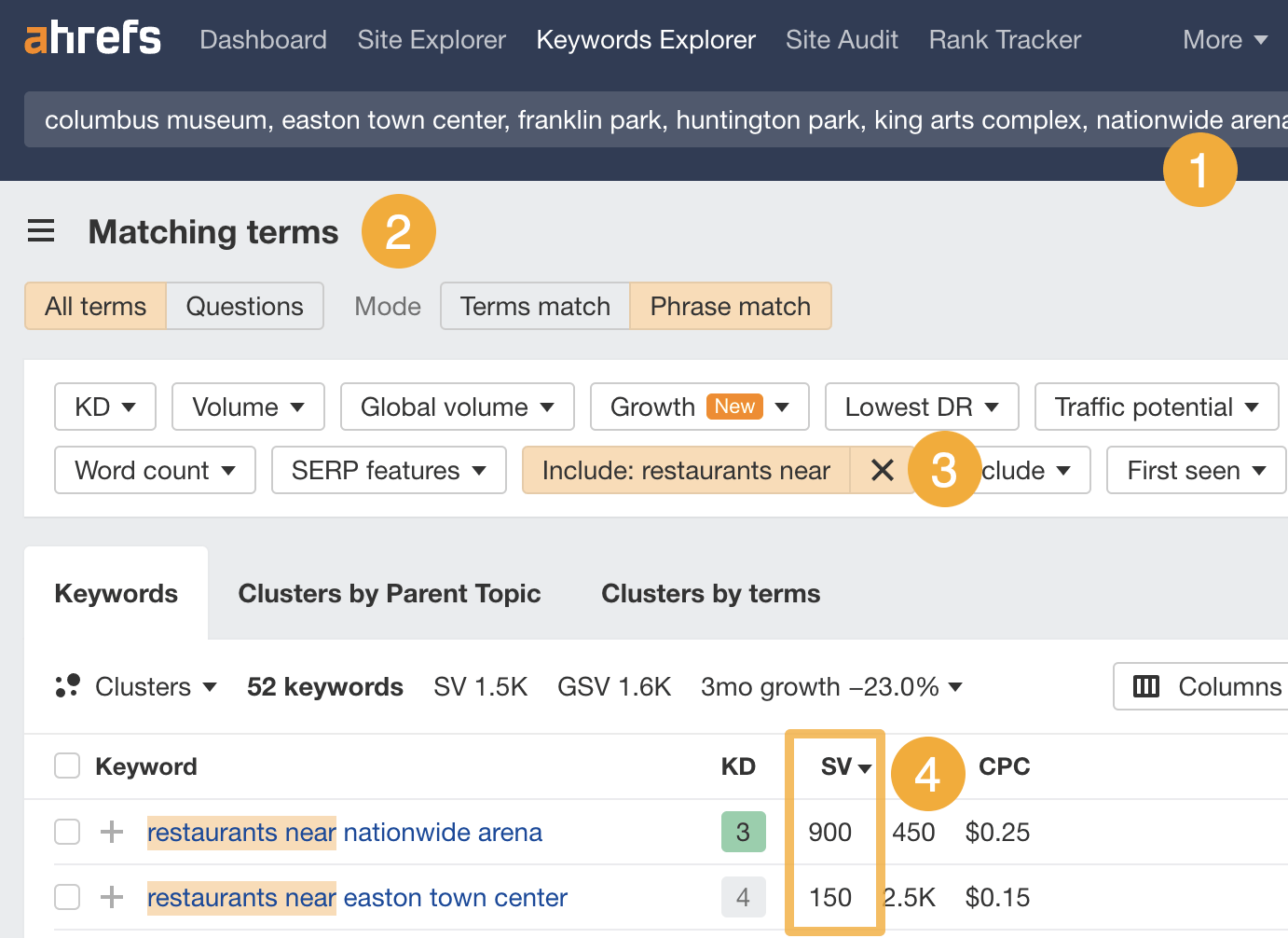

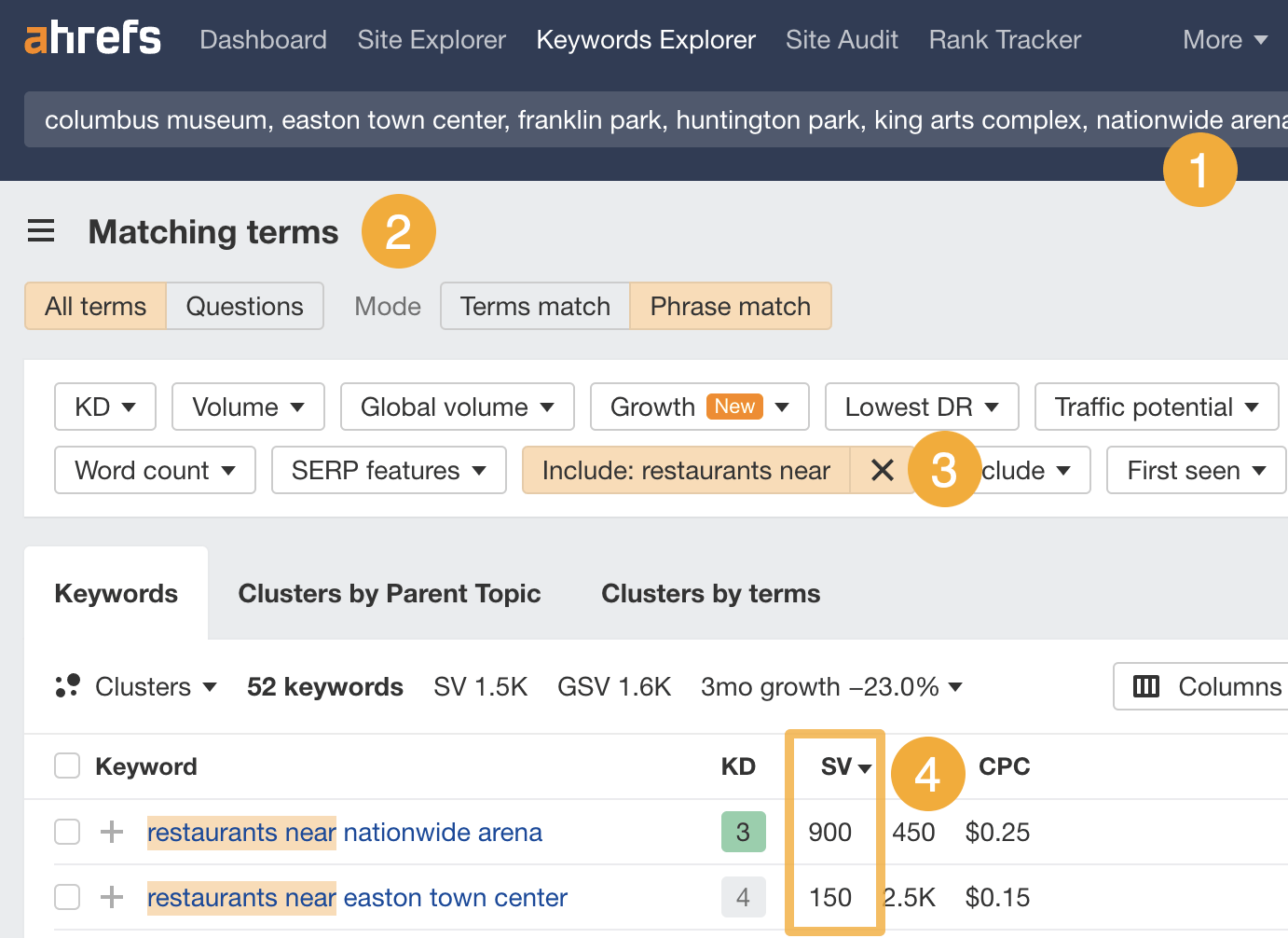

It only makes sense to optimize for attractions where people are searching for nearby restaurants. Here’s how to check which ones those are:

- Paste the list of attractions into Ahrefs’ Keywords Explorer

- Go to the Matching terms report

- Add “restaurants near” to the Include filter

- Look at which attractions get the most searches

In this case, Nationwide Arena is the most popular, but people are also searching for restaurants near Easton Town Center and Franklin Park Zoo.
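If you export a keyword list and want to repeat this filter offline, the logic is simple enough to sketch in a few lines. The column names and search volumes below are placeholders made up for illustration; they are not real Ahrefs data, and a real export will likely use different headers.

```python
import csv
import io

# Hypothetical export: the column names and volumes are placeholders,
# not real Ahrefs data or the real Ahrefs export format.
SAMPLE_EXPORT = """keyword,volume
restaurants near nationwide arena,900
restaurants near easton town center,400
nationwide arena parking,1500
restaurants near franklin park zoo,150
"""


def rank_attraction_keywords(csv_text, phrase="restaurants near"):
    """Keep keywords containing `phrase`, sorted by volume (highest first)."""
    rows = csv.DictReader(io.StringIO(csv_text))
    matches = [
        (row["keyword"], int(row["volume"]))
        for row in rows
        if phrase in row["keyword"].lower()
    ]
    return sorted(matches, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for keyword, volume in rank_attraction_keywords(SAMPLE_EXPORT):
        print(f"{volume:>6}  {keyword}")
```

The “parking” keyword has the highest placeholder volume, but it drops out because it doesn’t contain the phrase. That’s the whole point of the Include filter: you only care about attractions where people are looking for somewhere to eat.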

c) Optimize your website for them

This is really all about mentioning the attractions on your site in natural and helpful ways.

Here are a few ways I’ve seen this done:

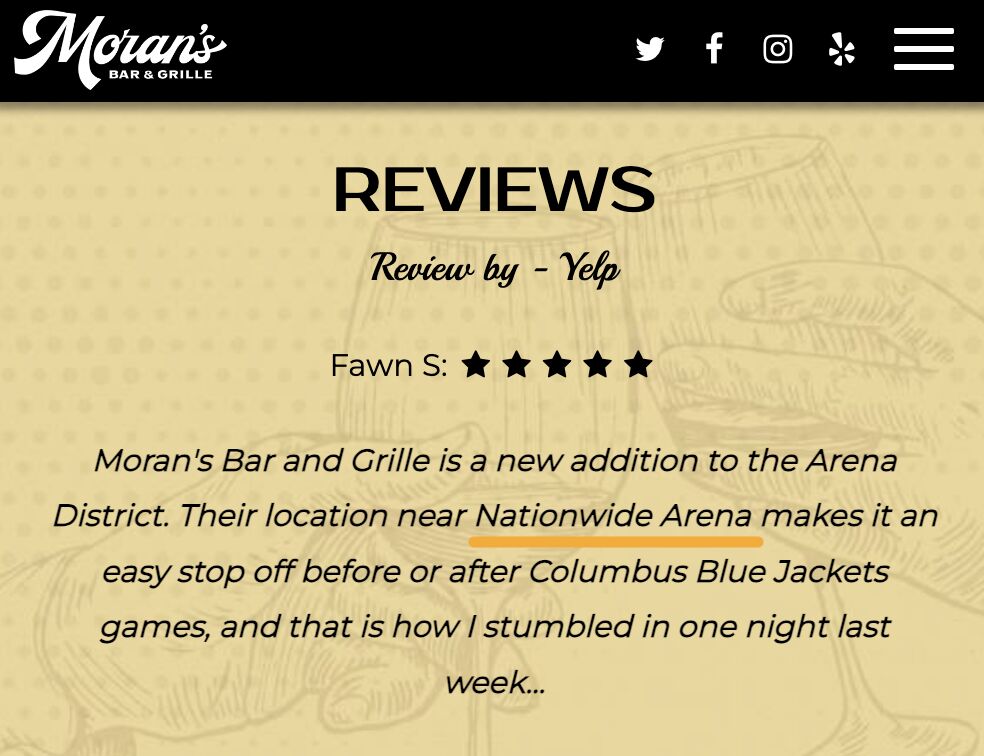

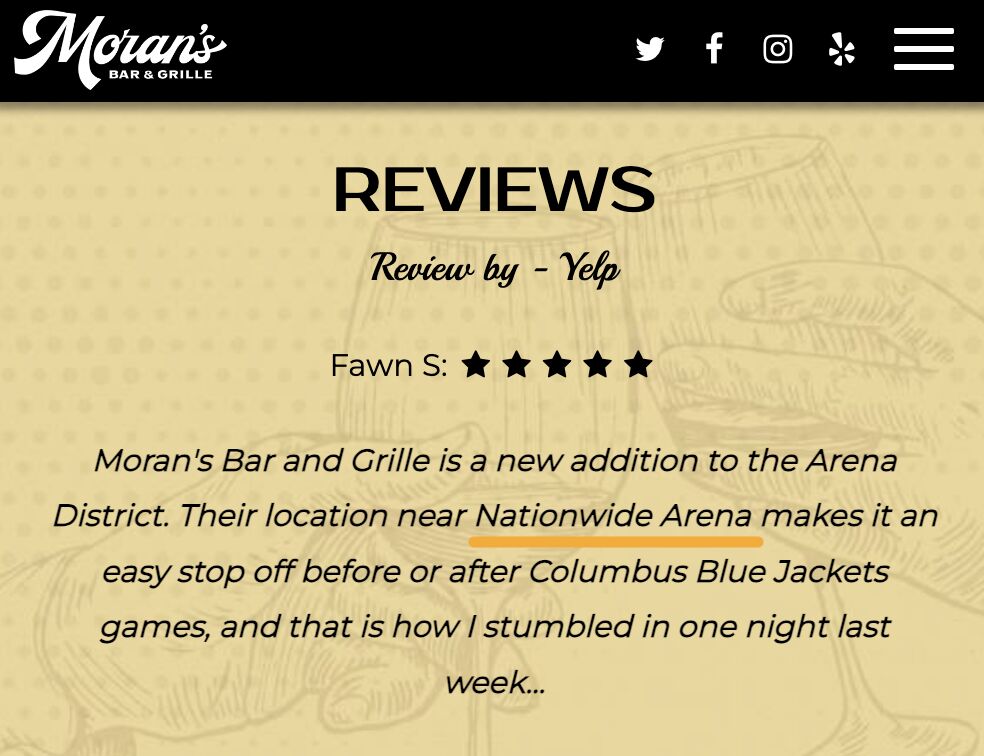

Testimonials

Moran’s Bar & Grille featured a Yelp review that mentions the arena and a few related terms on their homepage:

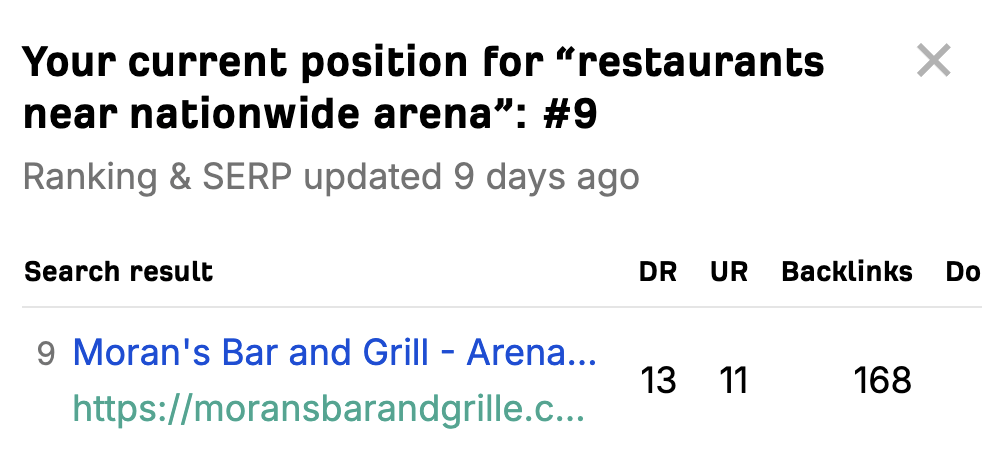

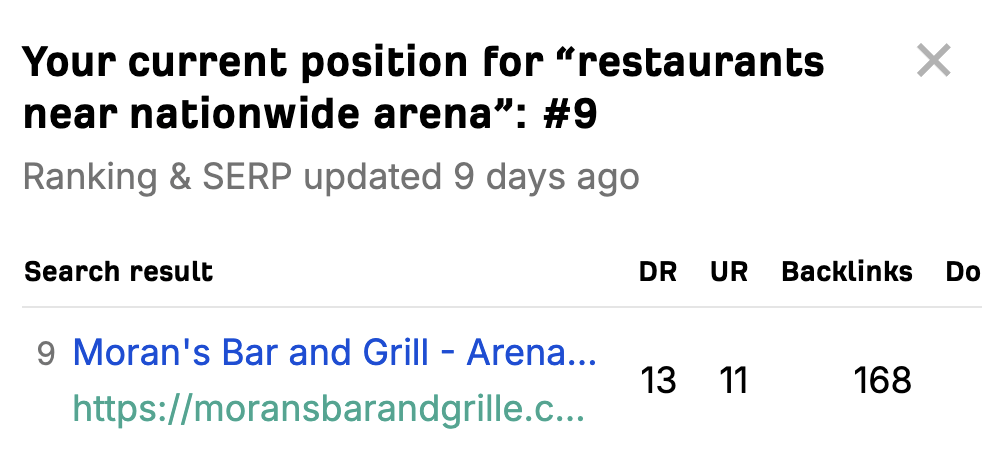

This is likely what helped them to rank #9 for “restaurants near nationwide arena”:

Booking advice

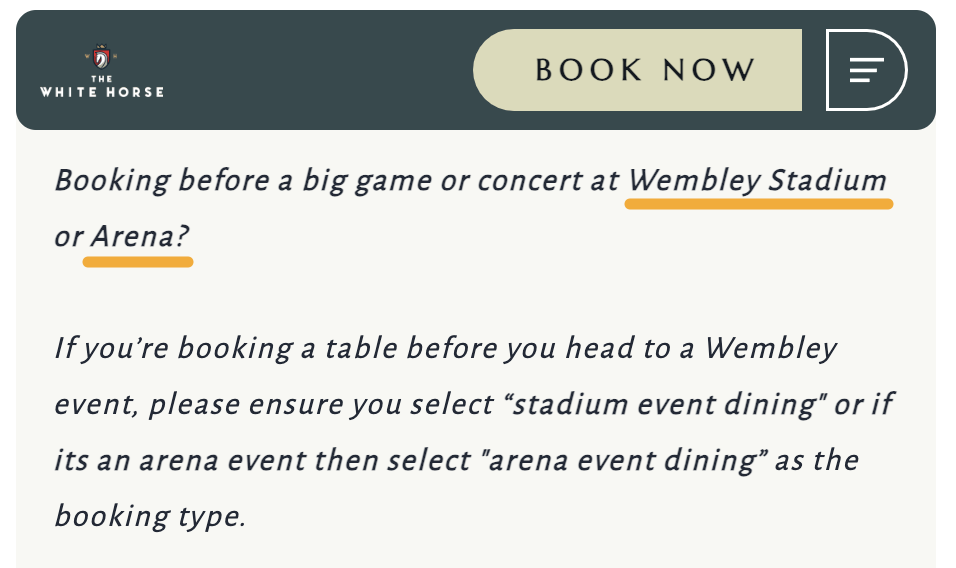

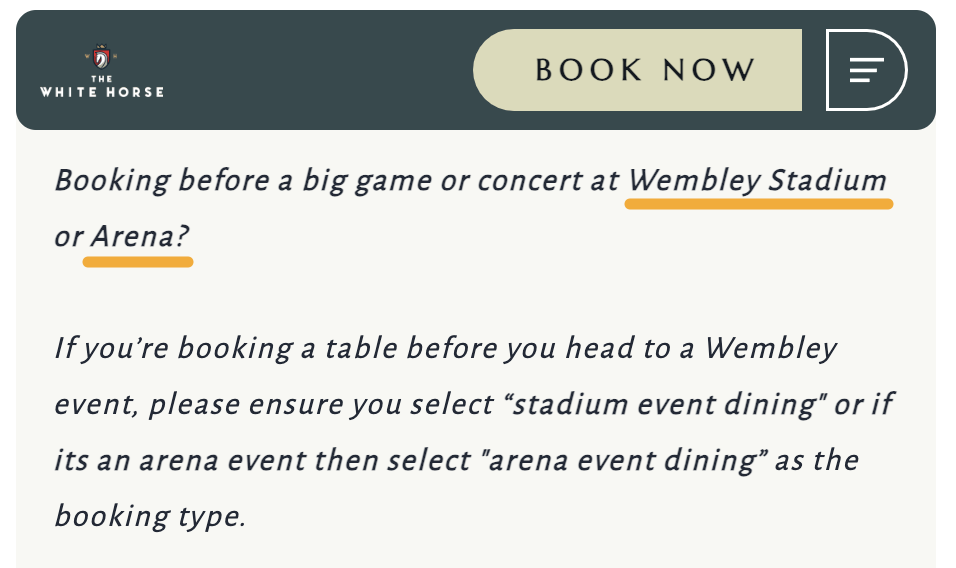

The White Horse Pub has advice for those booking to dine before a big game or concert on their homepage:

This is likely what helped them to rank #8 for “food near wembley stadium”:

Location guide

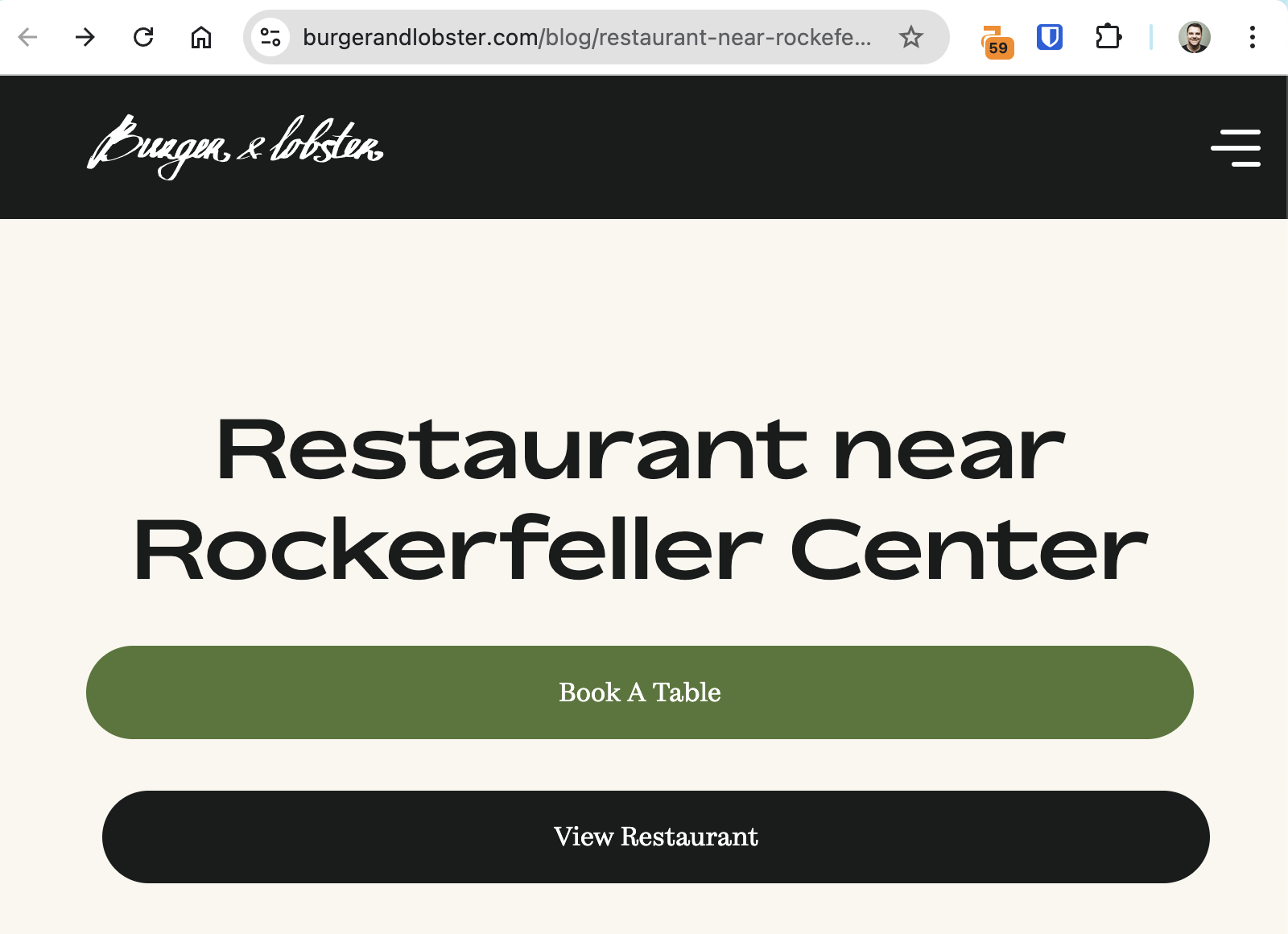

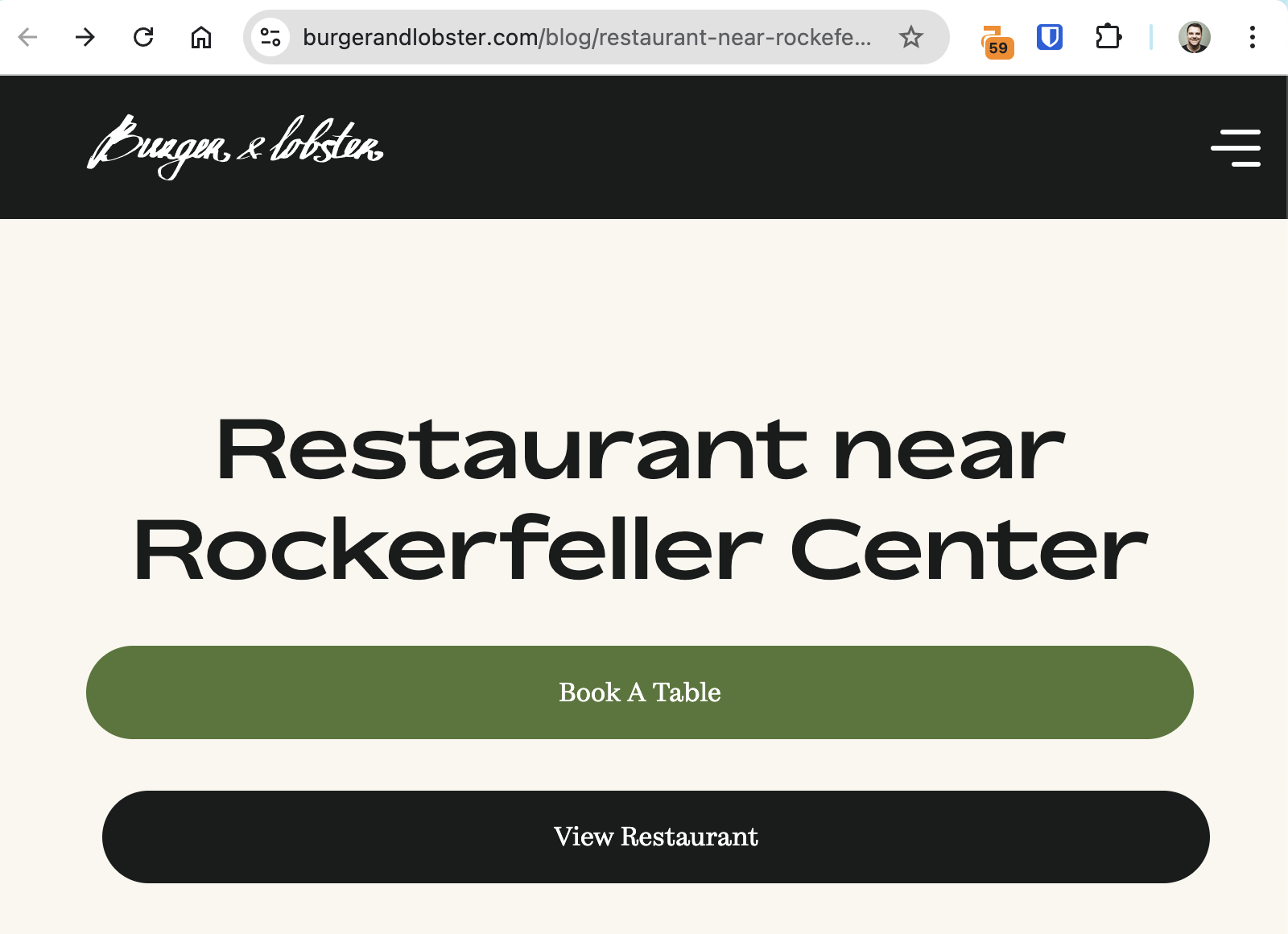

Burger & Lobster created an entire page about restaurants in the area:

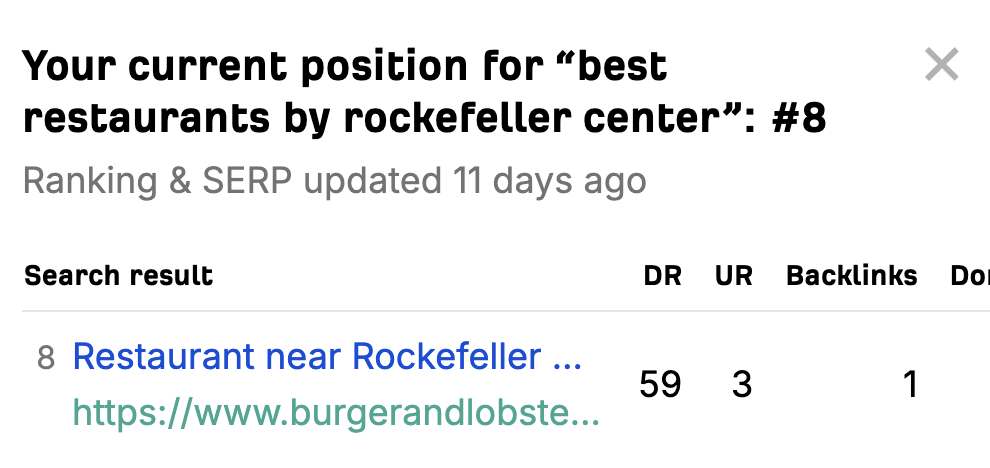

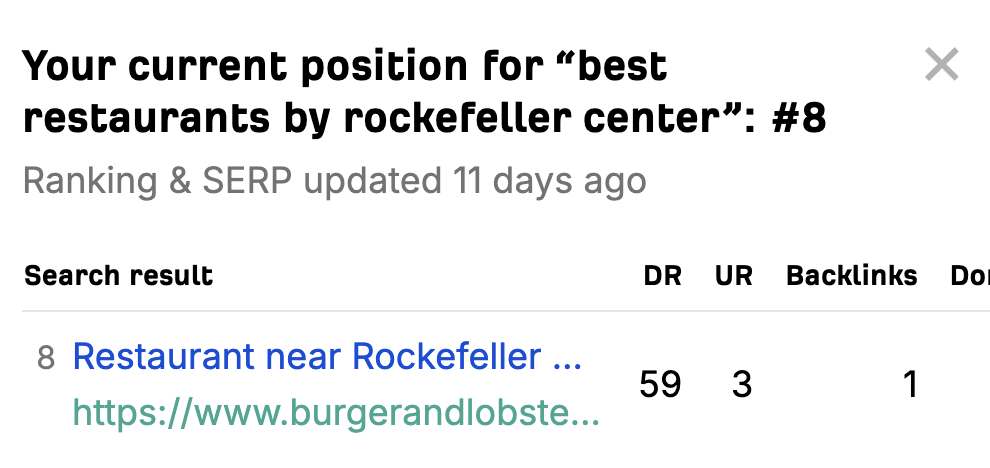

This is likely what helped them to rank #8 for “best restaurants by rockefeller center”:

However, while this seemed to work, the execution is a little spammy for my tastes. Most of the copy is just fluff that ChatGPT could write.

If you want to give this tactic a shot, I’d suggest creating a page with genuine local restaurant recommendations. Your joint can be one of them, but be helpful and feature some of the other places you love, too.

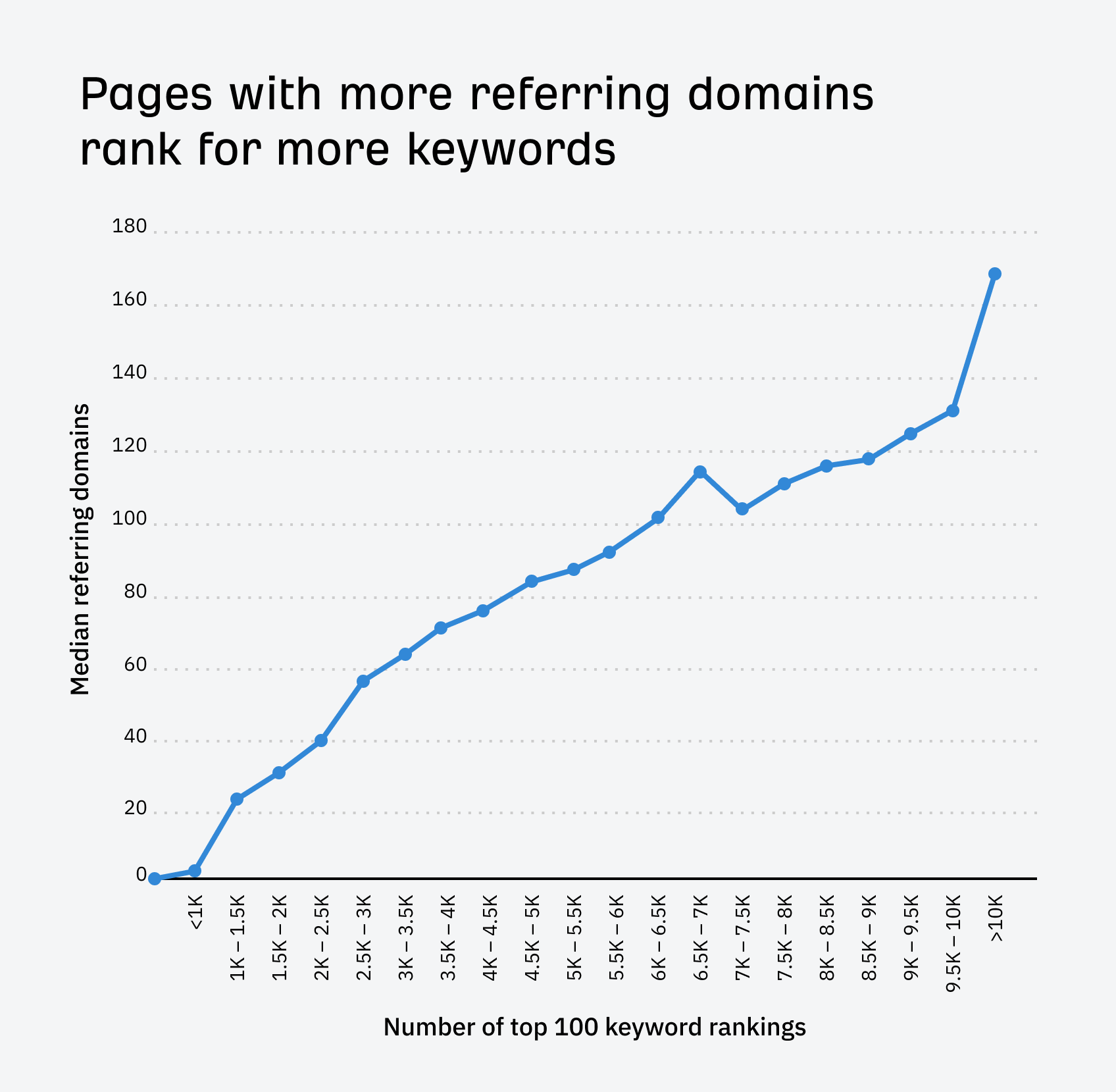

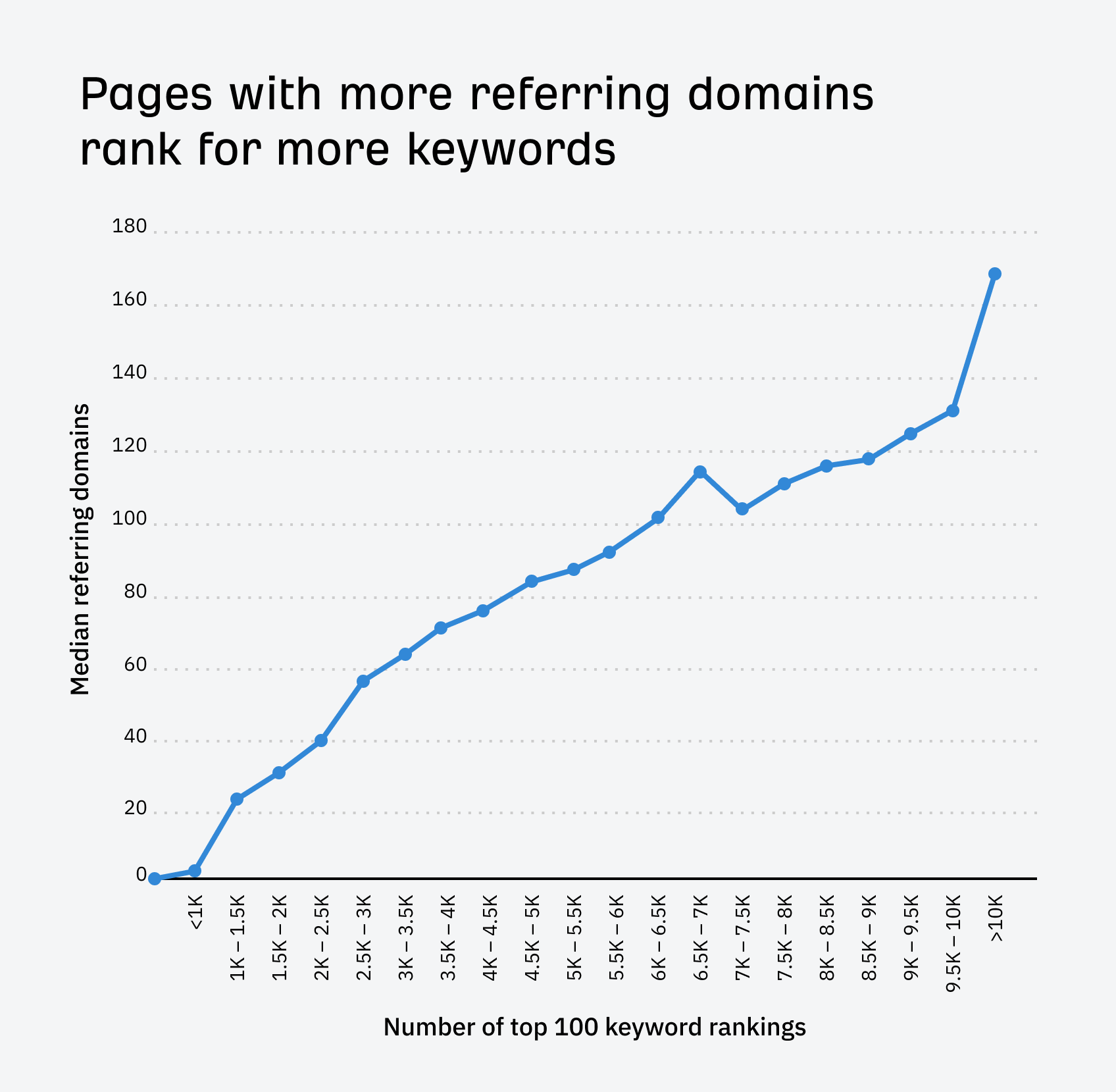

Our analysis of around 14 billion pages found a clear correlation between backlinks and keyword rankings.

Backlinks can also help diners to discover your restaurant on other websites.

I discovered a coffee shop in Boston through a recommendation from a local blogger and have been multiple times since. Her description and photos really sold me on visiting, and it was a great find. I’ve visited a few of her recommendations.

I was able to discover a nice little bistro through a food blogger’s site that gave a detailed review and had a link to the restaurant’s page. Because of this, I had one of the most memorable meals in my life.

I’ve discovered new restaurants from food bloggers’ Instagram accounts before, as well as from blog posts. For example, I learnt about a new Mexican place in Rome from the Instagram page of Wanted in Rome.

Getting backlinks can be challenging, but here are a few tactics to get you started:

Get food bloggers to review you

Food bloggers who review you will usually link to you from their website.

Here’s a quick way to find bloggers to reach out to:

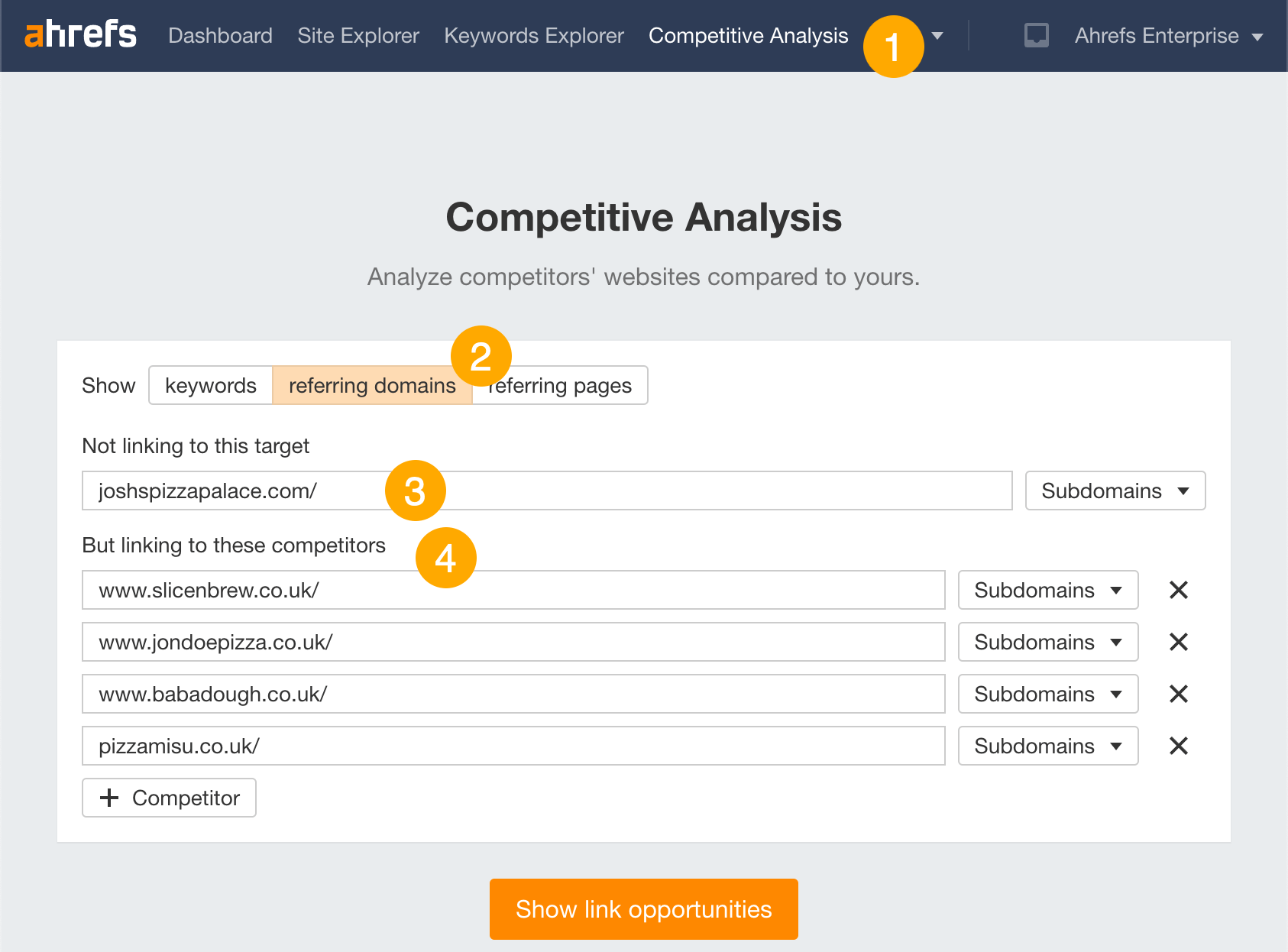

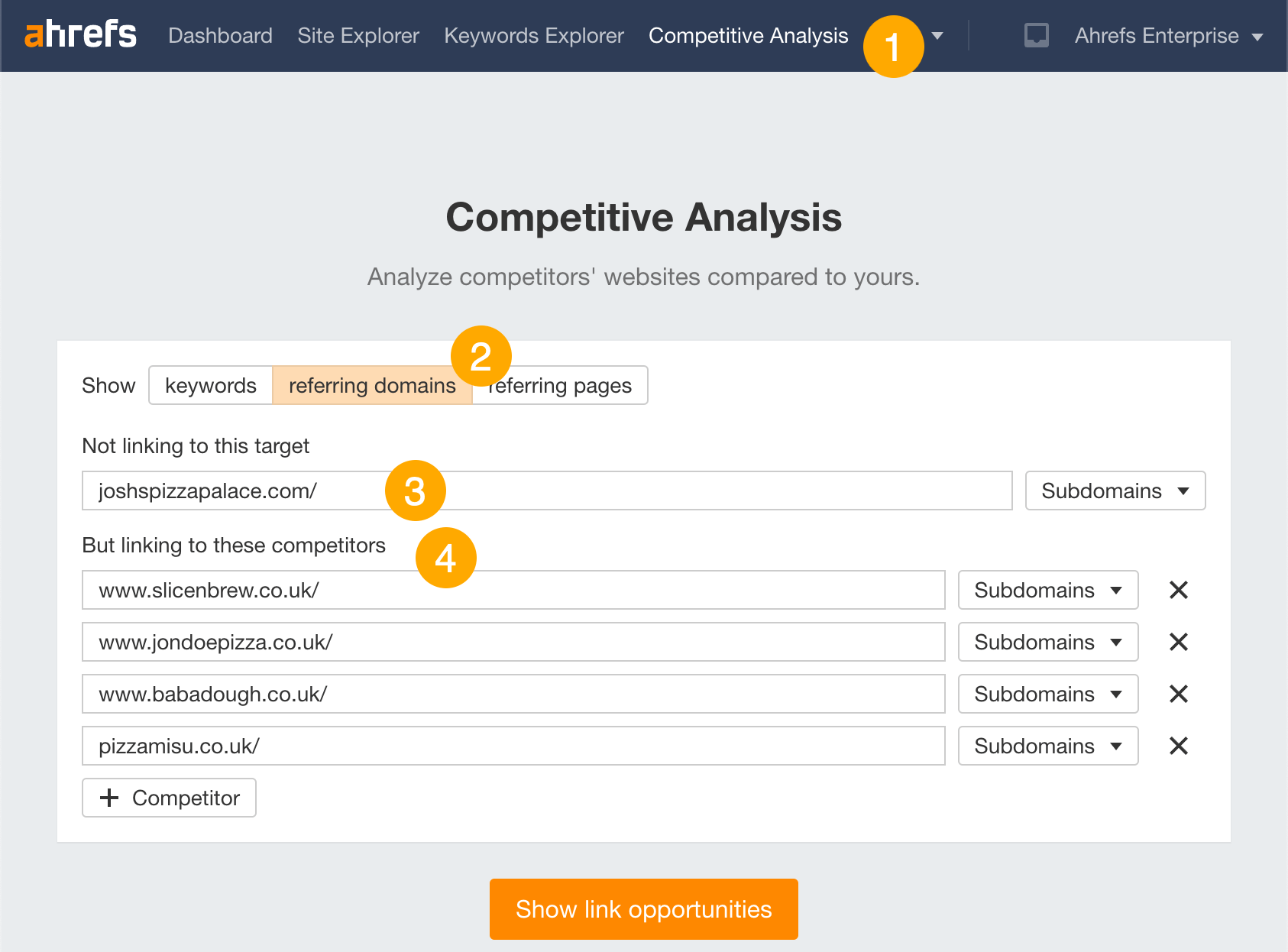

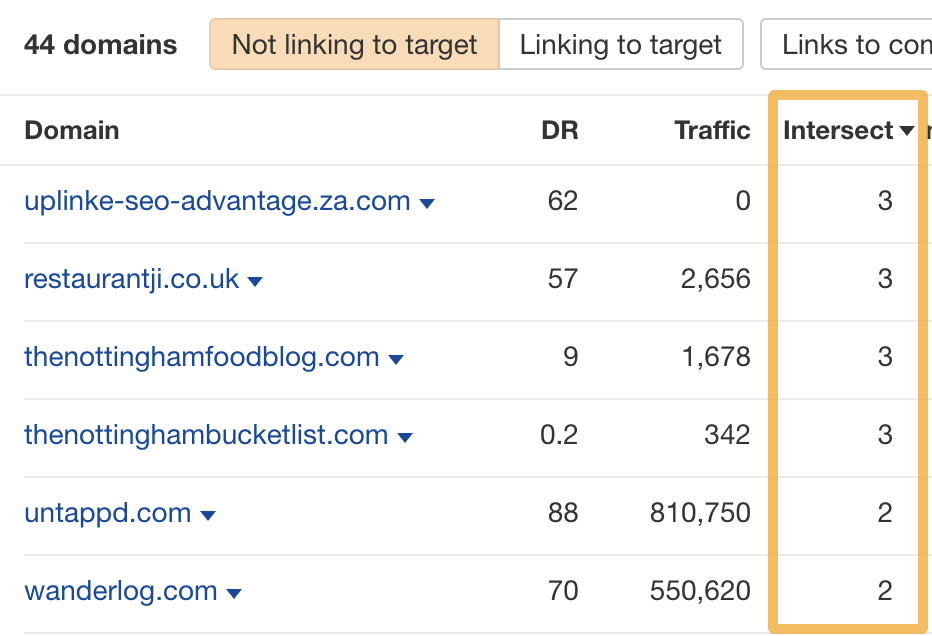

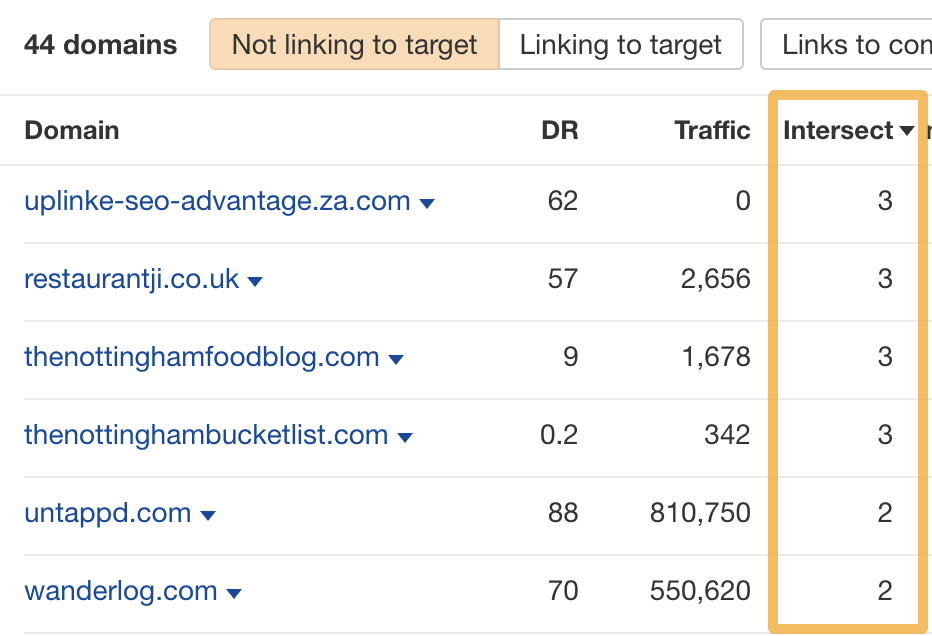

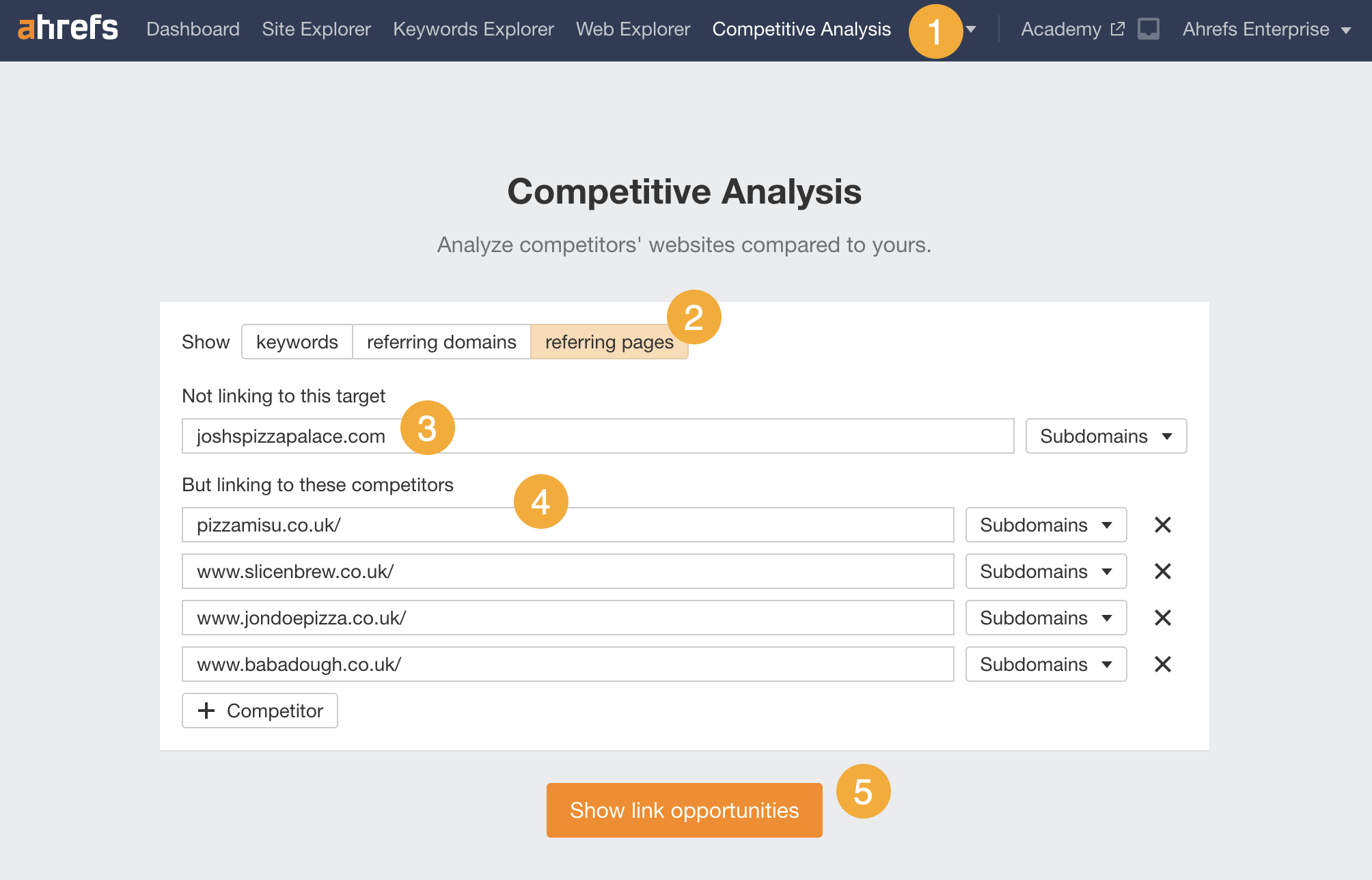

- Go to Ahrefs’ Competitive Analysis tool

- Switch the toggle to “referring domains”

- Enter your website in the “Not linking to this target” field

- Enter a few competitors’ websites in the “But linking to these competitors” fields

Hit “Show link opportunities,” and it’ll show you websites linking to one or more of your competitors but not you.

Eyeball the domains and food bloggers should jump out at you.
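Under the hood, this kind of report boils down to a set comparison: domains that link to your competitors, minus domains that already link to you. Here’s a rough sketch of that idea, assuming you have lists of referring domains from some backlink export. The function name and the `.example` domains are hypothetical, and this isn’t how Ahrefs implements its tool.

```python
def link_opportunities(my_domains, competitor_domains):
    """List domains linking to competitors but not to you.

    Returns (domain, competitor_count) pairs, with domains that link to
    the most competitors first.
    """
    mine = {domain.lower() for domain in my_domains}
    counts = {}
    for domains in competitor_domains.values():
        # Use a set so a domain counts once per competitor.
        for domain in {d.lower() for d in domains}:
            if domain not in mine:
                counts[domain] = counts.get(domain, 0) + 1
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    # Hypothetical data: every domain below is a placeholder.
    mine = ["localnews.example"]
    competitors = {
        "pizzeria-a": ["foodblog.example", "localnews.example"],
        "pizzeria-b": ["foodblog.example", "directory.example"],
        "pizzeria-c": ["foodblog.example"],
    }
    for domain, count in link_opportunities(mine, competitors):
        print(f"{domain} links to {count} competitor(s)")
```

Sorting by how many competitors a domain links to is a design choice: a site that has reviewed three of your rivals is probably a more promising outreach target than one that linked to a single competitor once.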

For example, three popular pizzerias in my city have links from a site that clearly belongs to a food blogger:

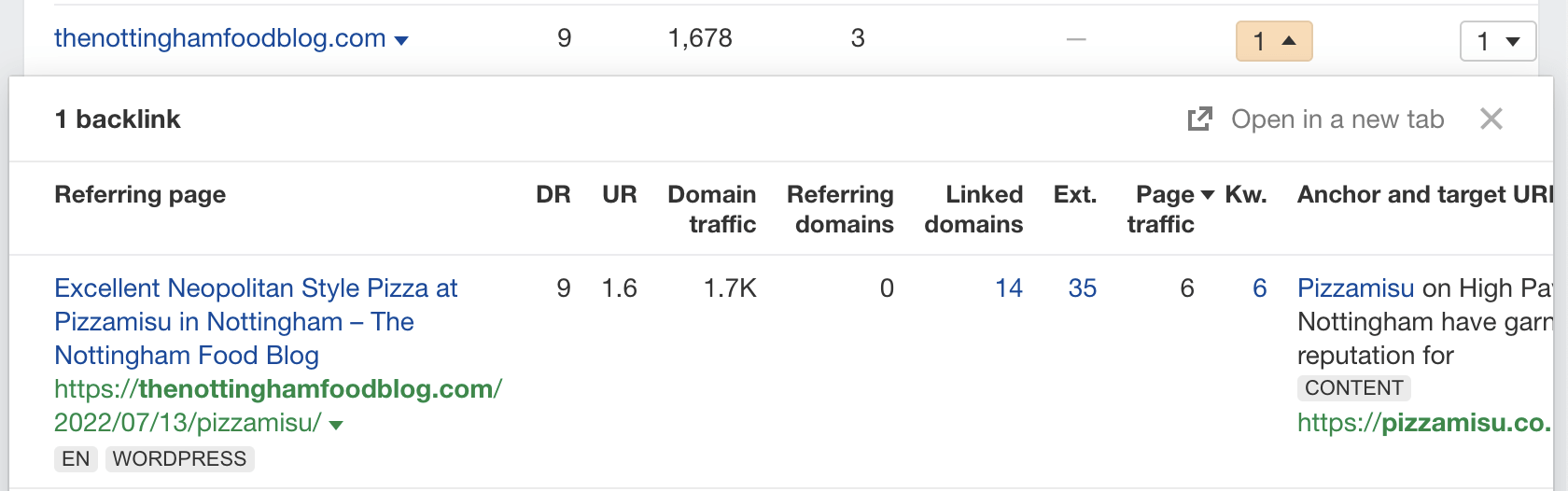

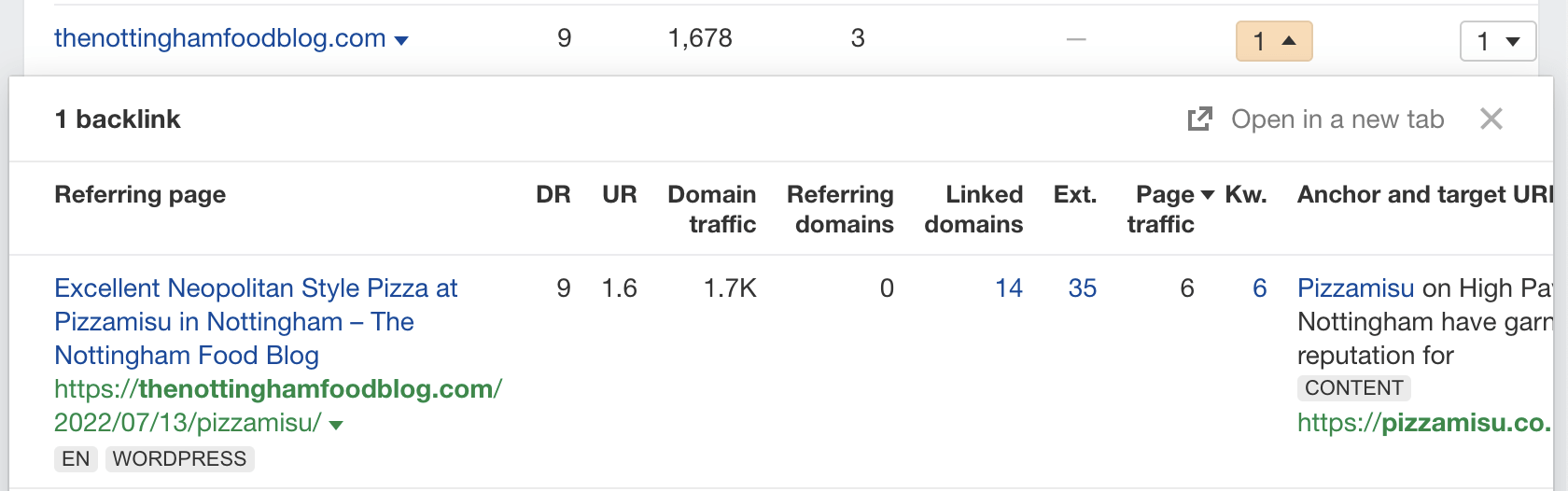

Hit the caret in each competitor column and you’ll see the linking page:

Each linking page in this case is a restaurant review, so it would definitely make sense to invite this blogger down for some grub in return for a review.

Reviews have benefits beyond SEO

Many food bloggers also have large followings on Instagram and TikTok, where they’ll likely also post their reviews.

For example, this blogger in my city has over 11,500 followers on Insta:

Get “supplier” links

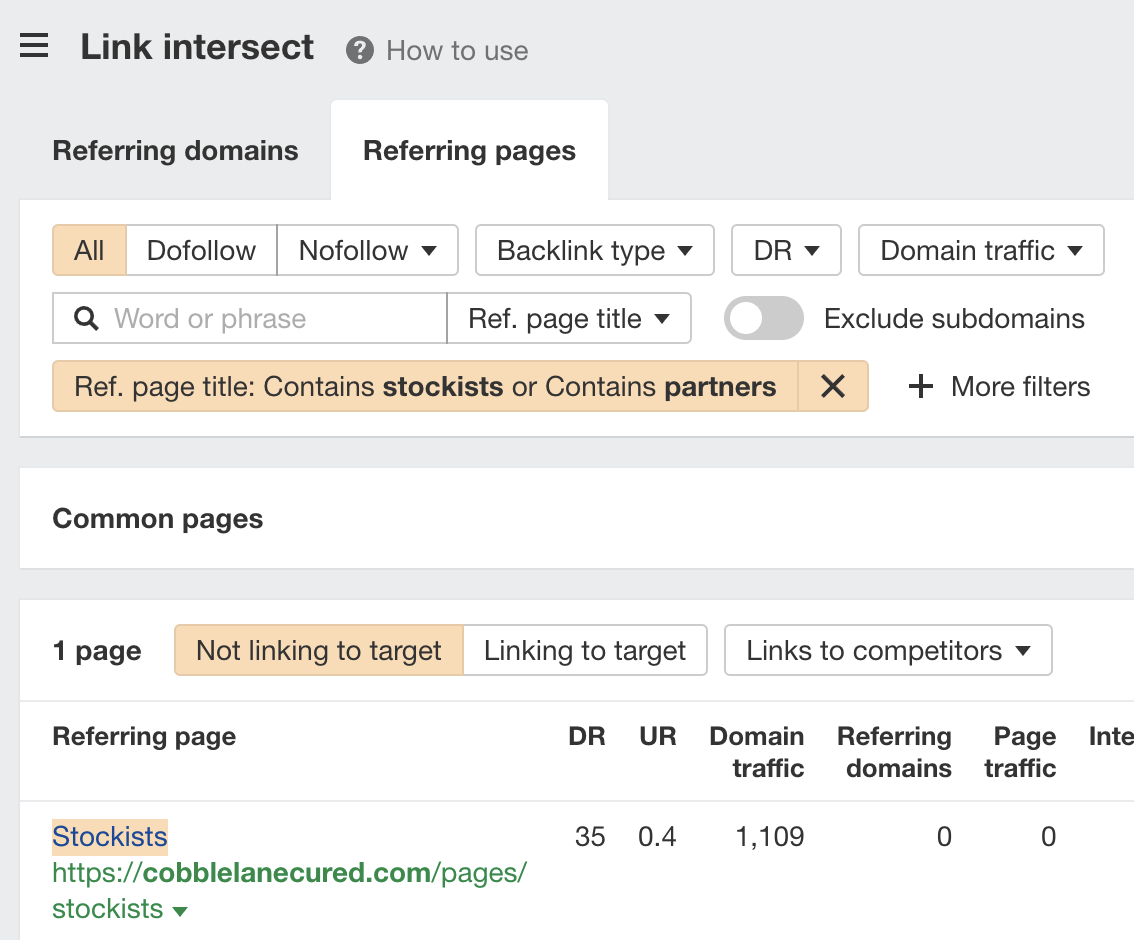

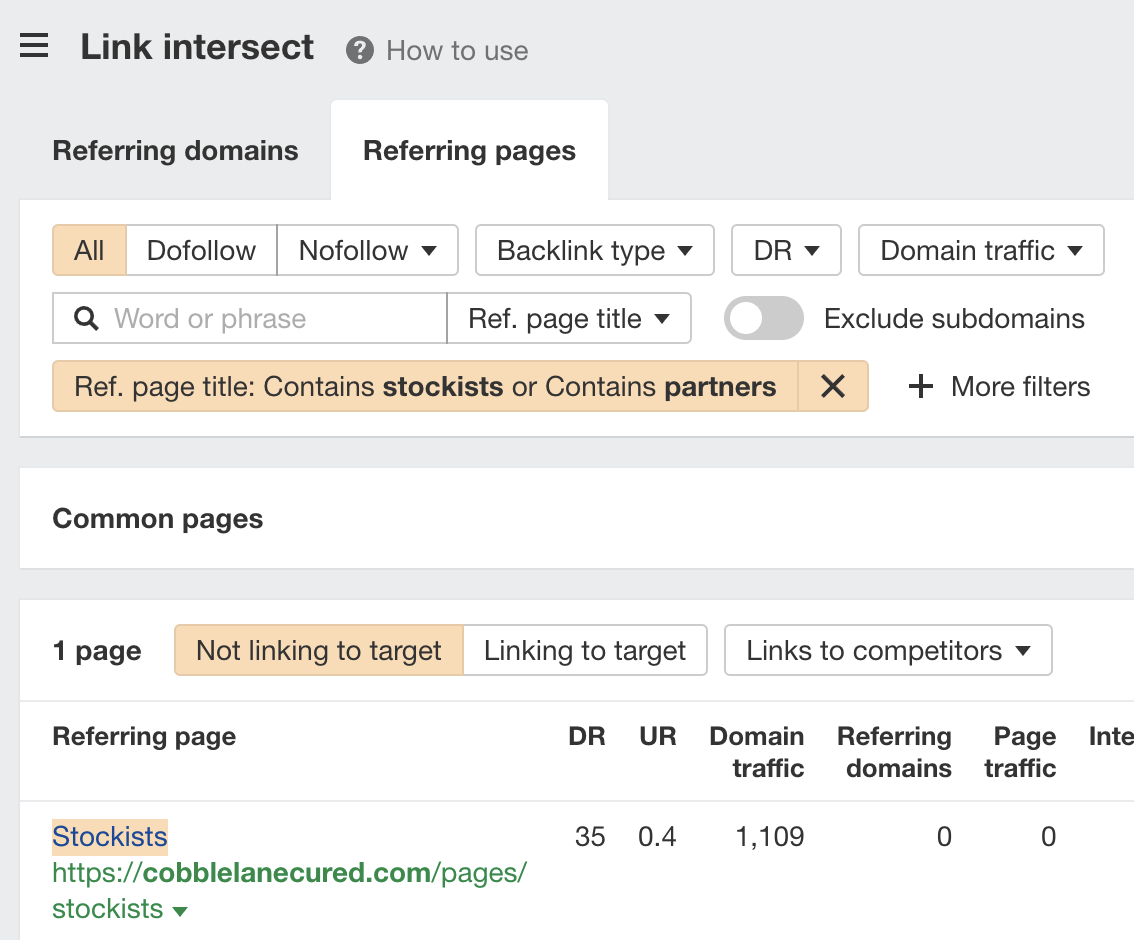

Every restaurant has suppliers, and those suppliers often have pages on their websites listing their “stockists.”

For example, this supplier of cured meats lists and links to all the restaurants they supply:

If your suppliers have a page like this, getting a link is usually as easy as reaching out and asking if they can add you.

To find these opportunities, just search Google for: [supplier name] partners|stockists

If you’re likely to have shared suppliers with competitors, you can also sometimes uncover these opportunities by searching their backlinks with Ahrefs:

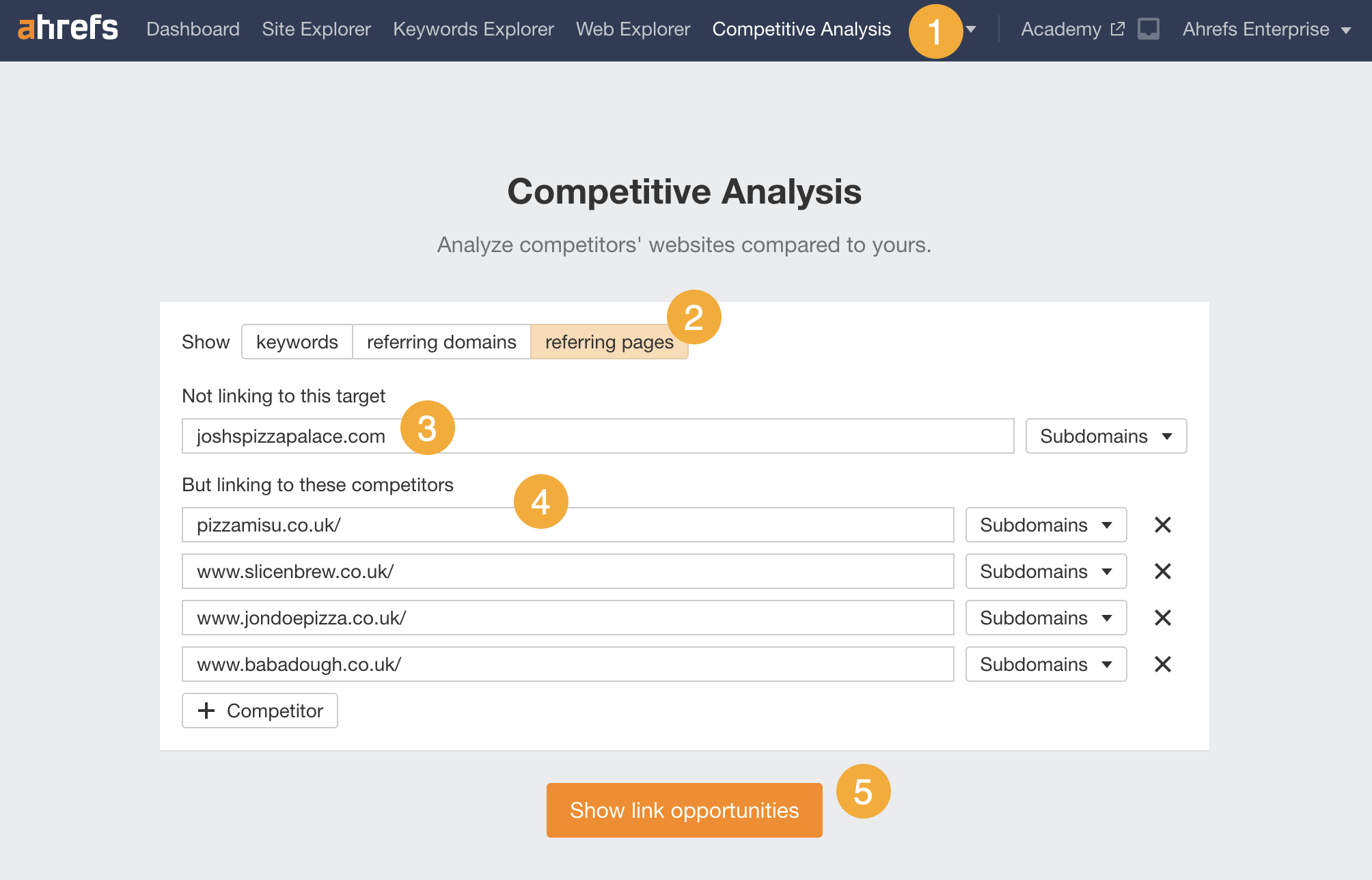

- Go to Ahrefs’ Competitive Analysis tool

- Switch the toggle to “referring pages”

- Enter your website in the “Not linking to this target” field

- Enter a few competitors’ websites in the “But linking to these competitors” fields

- Hit “Show link opportunities”

If you then filter for referring pages with words like “stockists” or “partners” in their titles, you might see some opportunities:

Not finding any supplier pages?

If they have a blog, ask if they’d be interested in doing an interview or spotlight piece with one of their customers.

This is exactly what Adam Atkins, who runs a pizza food truck in the UK, did with one of his suppliers:

Most restaurant websites are a total mess.

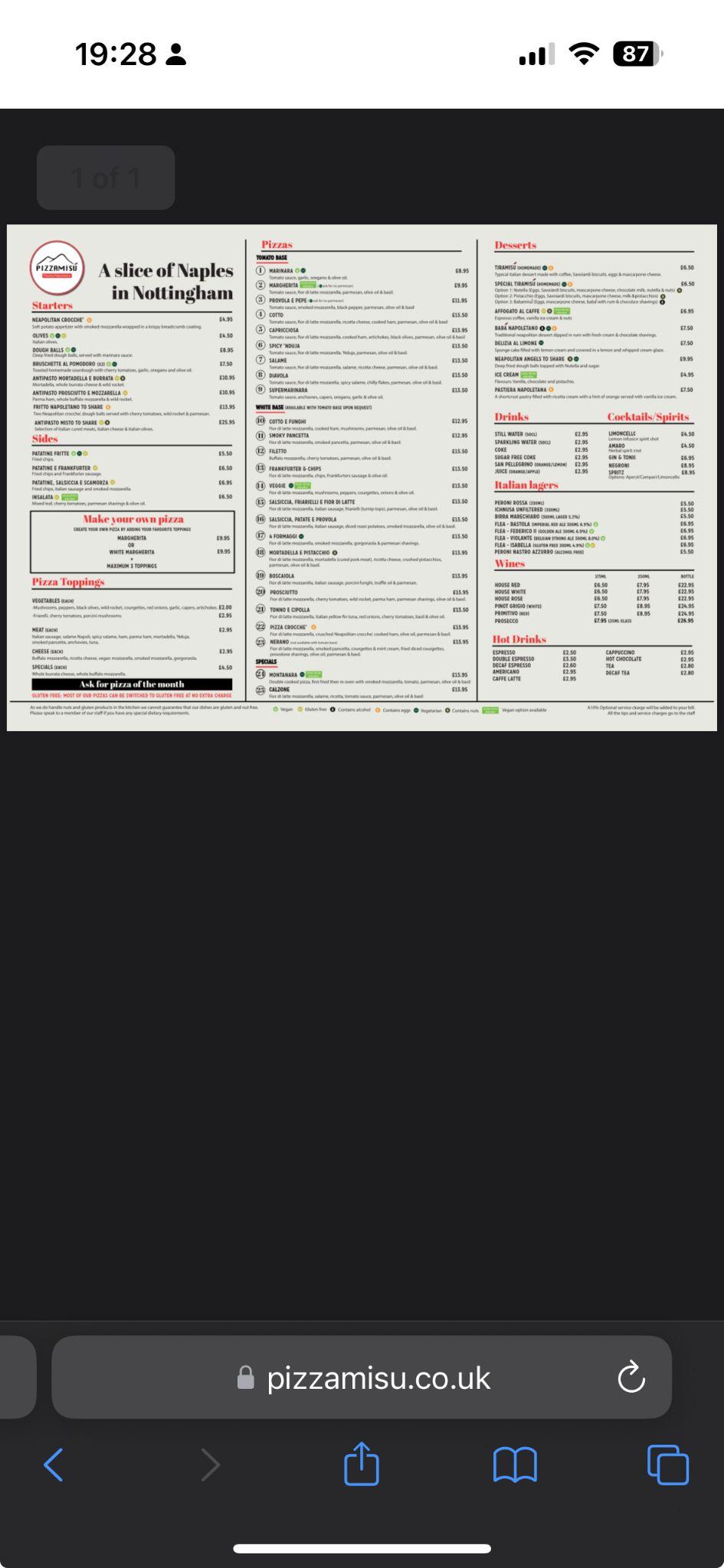

For example, here’s the homepage for one of my favorite pizzerias:

Everything is visually misaligned, there are buttons all over the place, and for some strange reason it’s asking me to buy a T-shirt. (I don’t want a fashion show, I just want to see the menu!)

Other diners agree that a bad website leaves a bad taste in their mouths:

A bad website is a huge turn-off. For example, near where I live, I prefer ordering from Formosa Taipei over Mulan in Waltham/Lexington—even though Mulan might have slightly better food—because Formosa Taipei has a much easier ordering system. A cumbersome website can make the whole experience frustrating, so I’ll often choose convenience over slightly better quality if it means avoiding the hassle.

An awful website can discourage eating out or ordering from certain eateries. Annoying PDF menus or complicated navigation are some examples of such issues that create a bad impression and might imply that the restaurant does not value its customers’ experience; hence, it will impact our decision whether to visit them or not.

Here are a few tips for improving your restaurant’s website for the 80% of searchers who care:

Put your menu on a page, not in a PDF

Google says more people search on mobile than desktop—and that’s across the board. For local searches like nearby restaurants, it’s probably the vast majority.

This is why PDF menus are such a bad idea. They force potential diners to pinch, zoom, and pan their way around an ugly PDF when the same information could easily be put on a mobile-friendly page.

If you really must use a PDF, follow our SEO for PDFs guide to make sure it’s optimized. Google will still index it and it should appear in search results. But I have seen weird things happen with them…

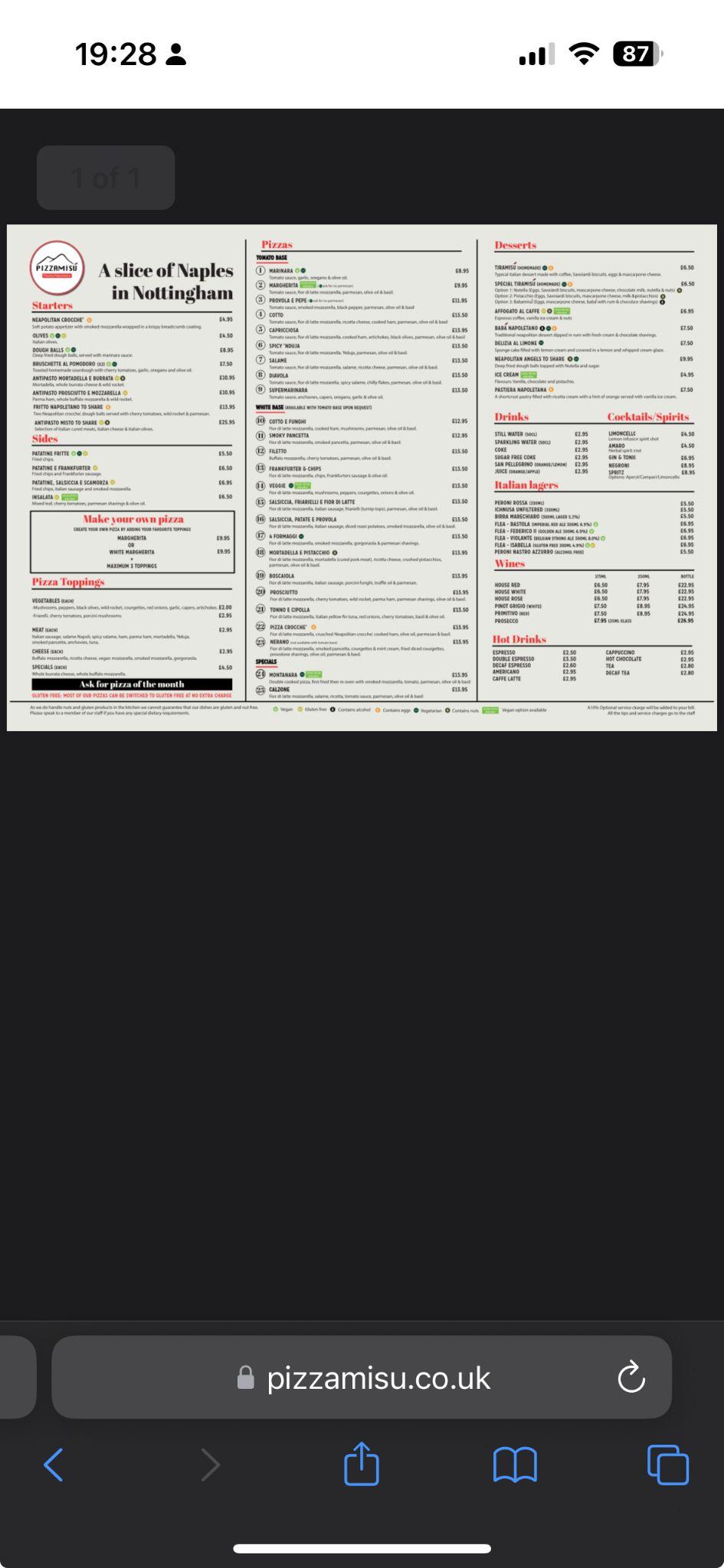

For example, if I search for my favorite pizza restaurant’s menu on Google, this is the result:

Here’s the page this takes me to:

Despite Google having the PDF menu indexed, it chooses to send me to no man’s land—a blank page with a teeny tiny text link to the PDF menu.

If the menu was just on the page and not in an annoying PDF, this wouldn’t happen!
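To show how little it takes, here’s a minimal sketch that turns a list of dishes into plain HTML you could drop into a menu page. The dishes, prices, and the `render_menu` helper are all made up for illustration, and your site builder may well have its own menu feature that makes this unnecessary.

```python
from html import escape

# Placeholder menu: dish names, descriptions, and prices are illustrative.
MENU = [
    ("Carbonara", "Spaghetti, guanciale, egg yolk, pecorino", "$16"),
    ("Fish & Chips", "Beer-battered cod, fries, tartare sauce", "$15"),
]


def render_menu(items):
    """Render (name, description, price) tuples as a simple HTML list."""
    lines = ['<ul class="menu">']
    for name, description, price in items:
        # escape() keeps characters like "&" from breaking the markup.
        lines.append(
            f"  <li><strong>{escape(name)}</strong> "
            f"({escape(price)}): {escape(description)}</li>"
        )
    lines.append("</ul>")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_menu(MENU))
```

Because the dishes end up as ordinary text on the page, diners can read them on a phone without pinching and zooming, and search engines can read them without having to parse a PDF.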

Make sure your site is free of technical issues

Technical issues can wreak havoc on a restaurant’s SEO.

For example, accidentally clicking the wrong button in some backend systems can deindex your entire site. The result is that your site won’t appear in Google search—at all.

Not all technical SEO issues are this serious, of course, but it’s best to keep an eye on them and nip them in the bud as soon as possible.
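One common way a site gets deindexed by accident is a stray `noindex` robots meta tag. As a rough illustration, here’s a sketch that checks a page’s HTML for one using only Python’s standard library. It’s a simplified check under stated assumptions: it looks only at meta tags, and ignores the `X-Robots-Tag` HTTP header and robots.txt, both of which can also keep pages out of search results.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> style tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name.lower(): (value or "") for name, value in attrs}
        # "googlebot" targets Google's crawler specifically.
        if attr_map.get("name", "").lower() in ("robots", "googlebot"):
            content = attr_map.get("content", "")
            self.directives.extend(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def is_noindexed(html: str) -> bool:
    """Return True if the HTML carries a noindex (or none) directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow".
    return "noindex" in parser.directives or "none" in parser.directives


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
    print(is_noindexed(page))
```

A site audit tool does this check (and many more) for every page on every crawl, which is why it’s worth scheduling one rather than relying on spot checks like this.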

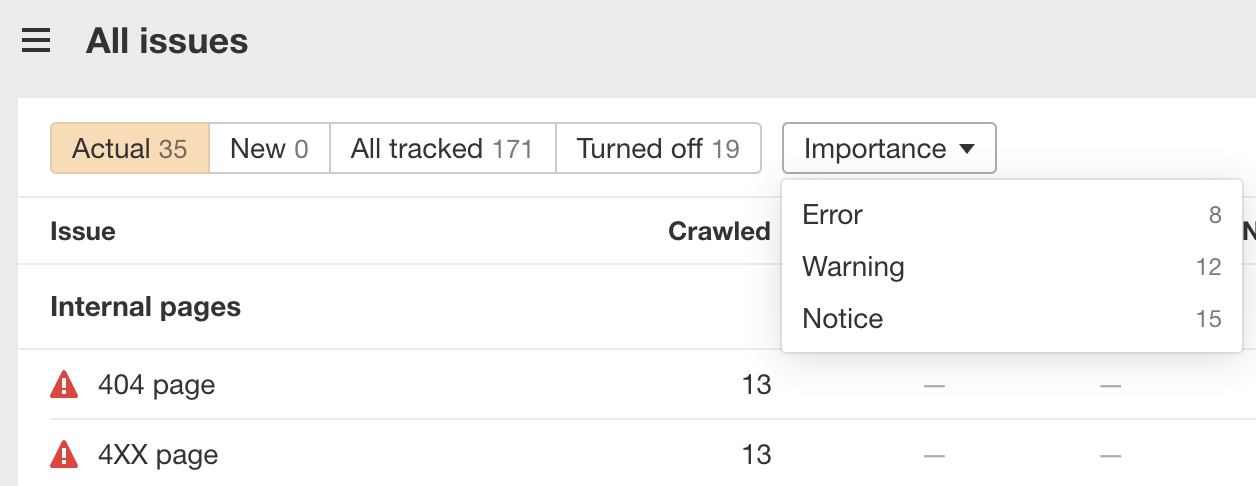

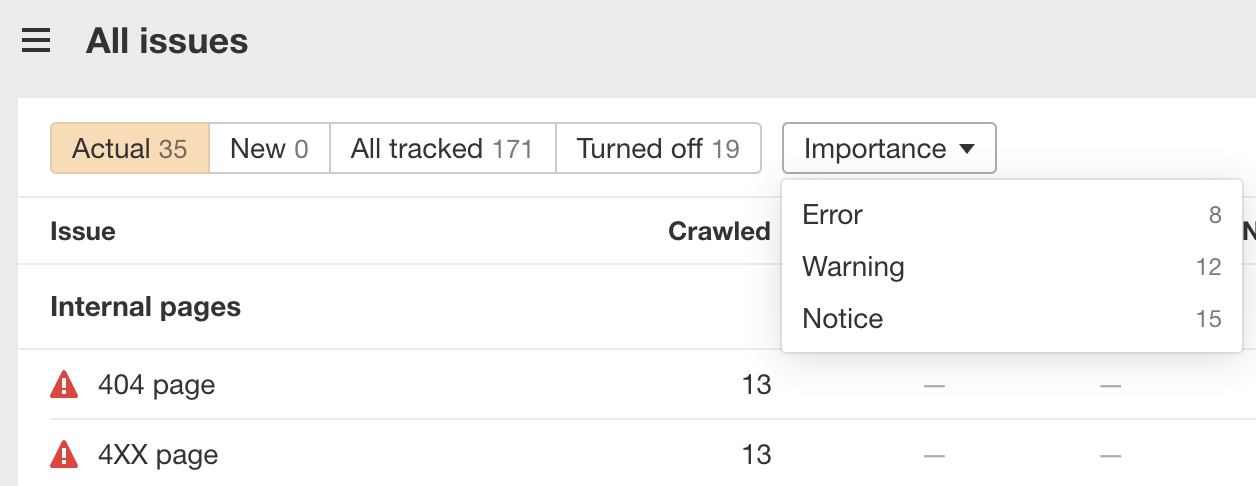

To do this, sign up for a free Ahrefs Webmaster Tools (AWT) account and schedule regular crawls in Site Audit. This checks your site for 170+ SEO issues and groups them by importance:

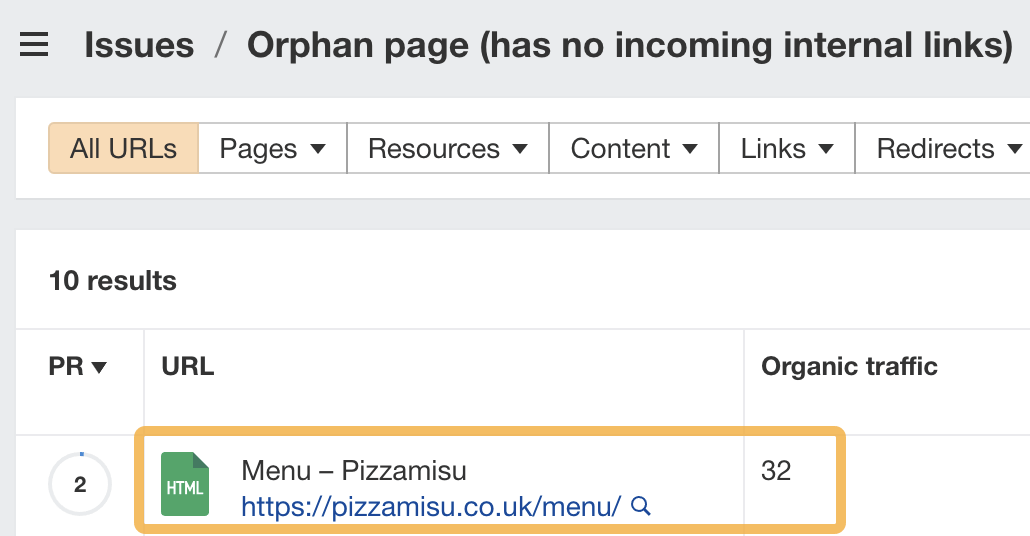

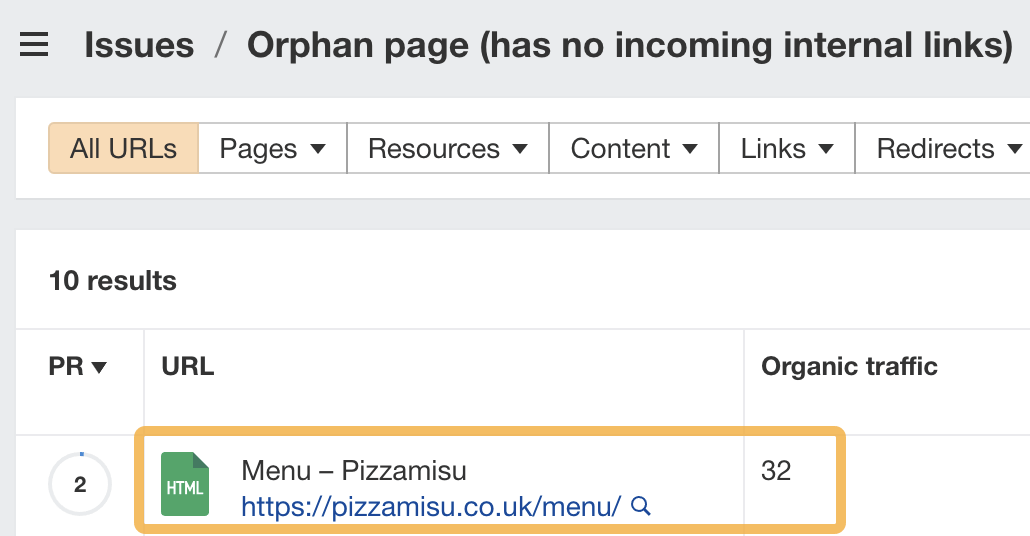

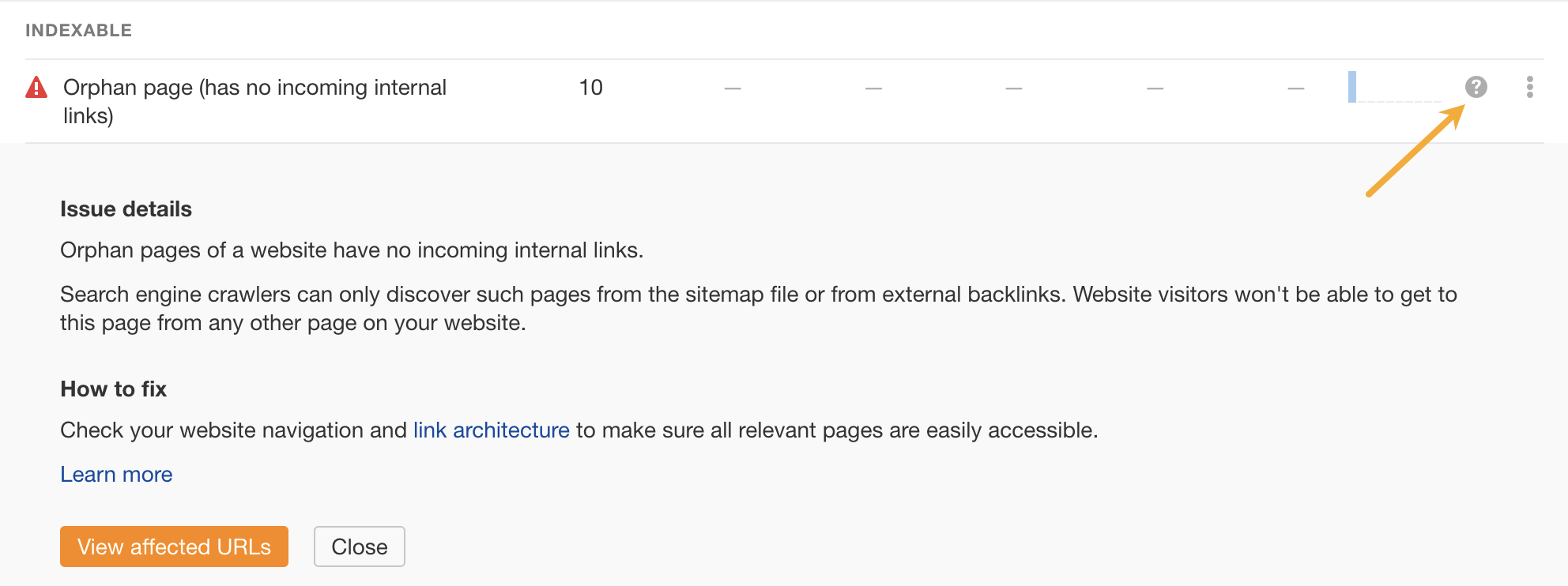

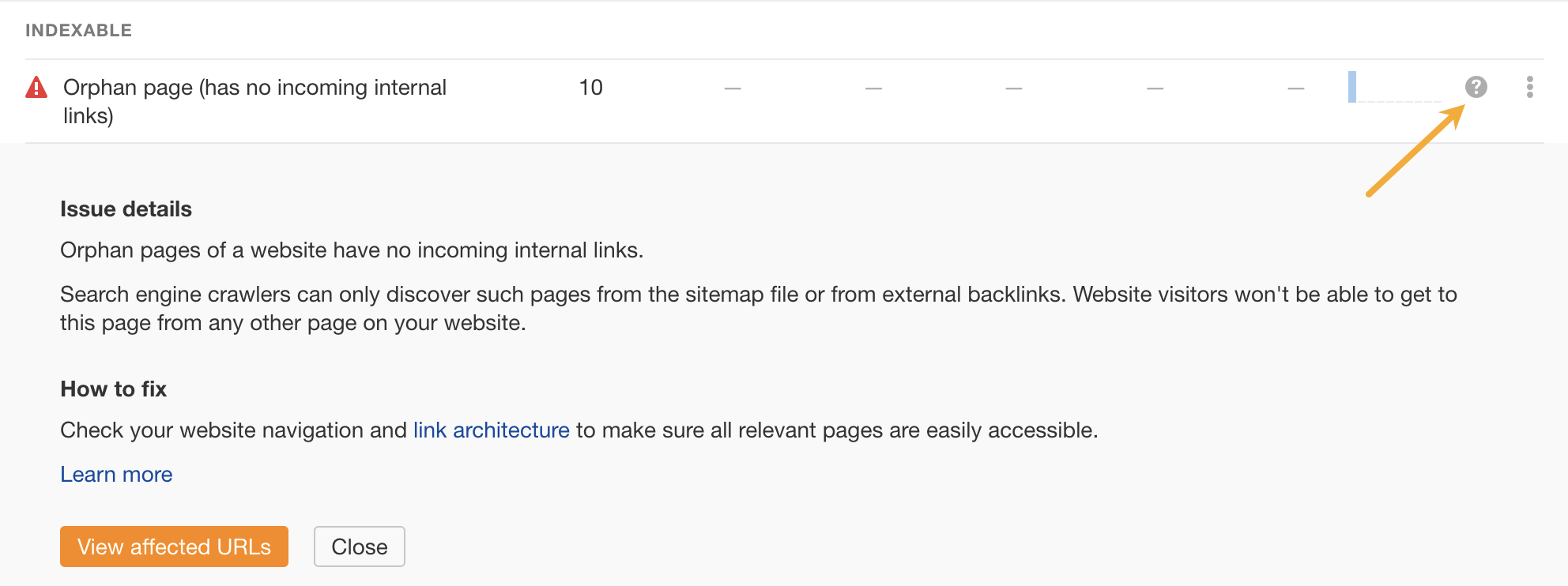

For example, the pizzeria I mentioned above has an orphan page (page with no internal links) getting organic traffic:

Do you recognize the page? That’s right—it’s the dodgy blank menu page from earlier. This is exactly how I found that issue. To solve this, the site should either noindex this page or, ideally, put its menu there (instead of in the ugly PDF) and internally link to it.
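If you’re curious what “orphan page” means in practice, it’s just a page that exists on your site but that no other page links to. Here’s a small sketch of that check, assuming you already have a list of your pages (say, from a sitemap) and a map of which pages link where. The paths and the `find_orphan_pages` helper are hypothetical, and this is a simplification of what a crawler like Site Audit does.

```python
def find_orphan_pages(all_pages, internal_links, homepage="/"):
    """Return pages that no other page links to (the homepage is exempt).

    `internal_links` maps each source page to the pages it links to.
    """
    linked = set()
    for source, targets in internal_links.items():
        for target in targets:
            if target != source:  # a page linking to itself doesn't count
                linked.add(target)
    return sorted(
        page for page in all_pages if page not in linked and page != homepage
    )


if __name__ == "__main__":
    # Hypothetical site structure for illustration only.
    pages = ["/", "/about", "/contact", "/menu"]
    links = {
        "/": ["/about", "/contact"],
        "/about": ["/"],
        "/menu": ["/menu"],
    }
    print(find_orphan_pages(pages, links))
```

In this made-up example, the menu page is the orphan: nothing links to it except itself, so diners (and crawlers) can only reach it if they already know the URL.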

If you’re not sure how to solve an issue that pops up for your site, click on the “?” icon to see advice on how to fix it:

If you’re not SEO-savvy enough to do the fixes yourself, export the affected pages and send them to your developer or a freelancer.

Final thoughts

It doesn’t matter how amazing your food is unless you can get customers through the door. That’s why SEO matters for restaurants. Your food may do the talking once the customer is dining with you, but it’s what they see online that’ll get them there.
