6 Link Building Services That Actually Work (+6 More to Avoid)

Link building is hard work. Landing top-quality links takes a lot of time. Unless you have a dedicated in-house team of link builders, acquiring the links you need can be difficult, especially if you have more than one site you’re working on.

So if you’re a busy business owner or an SEO with multiple clients, how do you get the links you need while dedicating as little time as possible? By using a link building service.

But knowing which type of link building service to look for can be overwhelming. That’s why we’ll walk you through the link building services that actually work and those you should avoid.

First, though, let’s take a look at the link building service providers themselves.

OK, so here’s the thing:

Many link building service providers are beyond sketchy. The majority are selling the digital equivalent of a knock-off Louis Vuitton purse out of the trunk of their virtual car.

Every link building service website, marketplace page, etc., says “manual outreach” and “no PBNs.” But, for the most part, that isn’t the case.

That’s just a quick word of warning for you.

On the bright side, some fantastic link building agencies and freelancers work hard to win top-notch links that you’ll be happy to have on your site and will be proud to show off to your clients.

The tricky part is knowing what to look for to find these gems. Let’s look at some of the things you need to consider.

Reputation

This may seem obvious, but you need to judge an agency’s or freelancer’s reputation on more than the testimonials on its website. Unless those reviews come from well-known companies or industry professionals, I usually assume they’re fake.

Is the company one you’ve heard of before? Are the people behind the company well-known industry professionals?

Before reaching out to them, do some digging. Reading reviews beyond those on social media, which anyone can buy, is a good place to start.

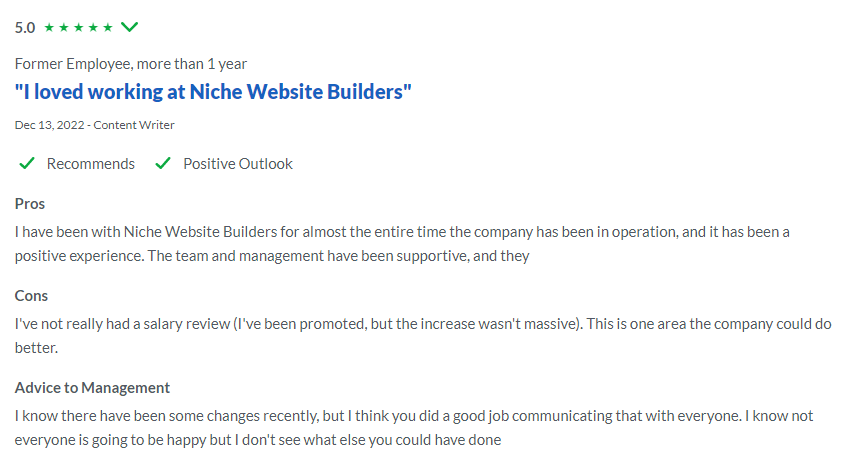

Checking sites like Glassdoor to see what a company’s staff have to say about it can be a great way to evaluate its reputation. However, you should take these reviews with a grain of salt, as even the best company can have a disgruntled ex-employee.

Also, don’t be shy about asking other SEOs for recommendations, either one to one or in groups and forums. Having someone vouch for a service they’ve used is one of the best ways to avoid disappointment.

Quality

Checking the quality of service the company provides is incredibly important, and I’m talking about more than the quality of the links it builds.

The customer service it provides, especially in the initial discovery and onboarding phases, can indicate what the service will look like in the long term.

You want to build a long-lasting relationship with a provider so that you don’t have to go through this process every few months. Knowing that the people you’re working with will take care of you and your sites makes all the difference.

You’ll want to work with a provider that can assess your site and curate a link building strategy designed to meet your individual goals. You’ll also want to work with someone who will get back to you reasonably quickly and is happy to jump on a call when needed.

Scalability

Something else to consider is whether a link building service provider can scale up as the number of links you want to acquire grows.

If you’re paying for link building services because you have more websites than you can manage alone, a freelancer or small agency may run into the same capacity problem.

Even if you plan to start small, if you’re thinking of scaling up in the long term, this is something you should discuss up front. It is better to find a high-quality provider you’re happy with that can grow with you rather than start again later.

Now that we’ve covered what to look for in a service provider, let’s look at some good services that can actually move the needle.

After checking out some of the most popular link building agencies, I’ve found the top services that are most frequently offered across the board, including:

- HARO link building.

- Digital PR.

- Guest posting.

- Niche edits.

- Skyscraper link building.

- Managed link building.

It’s worth noting that most of these services are based on a particular technique or tactic used to acquire links rather than a link building service per se. But as this is how most agencies market them, it’s worth discussing them this way.

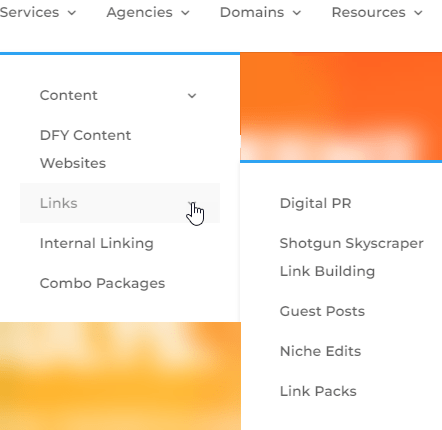

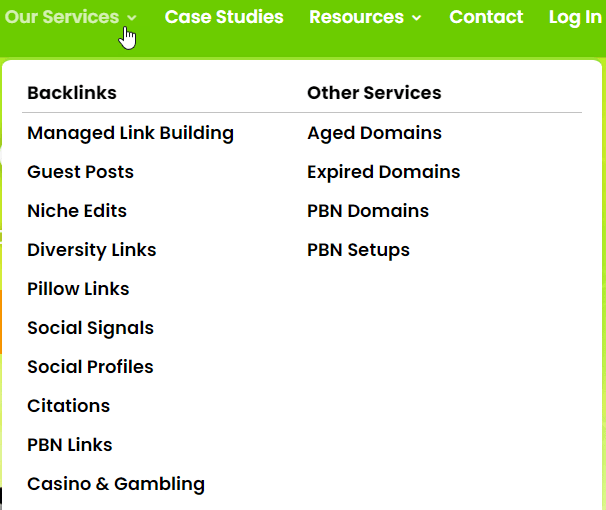

You can see an example from an established link building provider here:

And here:

Let’s look at not only what each service is but also the benefits of having a link building provider acquire those links for you and what you can expect to pay for each service.

1. Help a Reporter Out (HARO) editorial link building

Using HARO and its alternatives to acquire editorial links from highly authoritative websites is the ultimate white hat link building technique.

Sites like Help a Reporter Out, Terkel, Dot Star Media, and the like allow journalists to post queries looking for sources for articles they are currently writing. If you’re an expert on that topic, you can give quality insights and be quoted (with a link to your site) in their article.

Not only can these links move the needle when they come from highly authoritative, niche-relevant sites that pass lots of link equity, but they can also help you demonstrate experience, expertise, authoritativeness, and trustworthiness (E-E-A-T).

But are there benefits to using a service provider to build HARO links?

Well-established agencies that offer this service do a fantastic job of delivering the results you need with fully managed services. You only need to give them some initial information, and they will do the hard work for you.

However, these services are explicitly designed to scale link acquisition across multiple clients. To run a fully managed service at that level, agencies usually have a large team of people pitching, tracking links, managing accounts, liaising with clients, and so on.

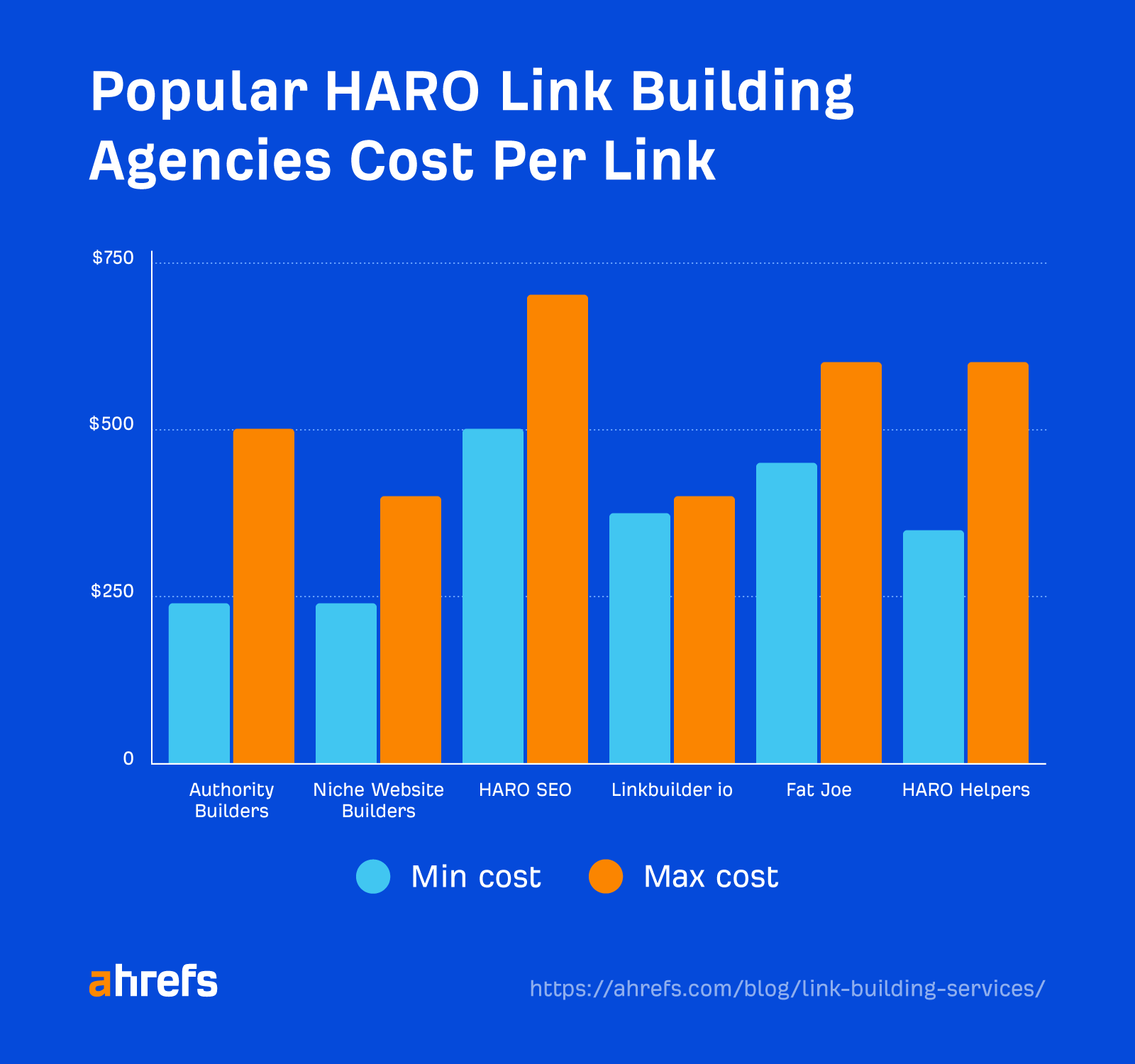

With that kind of team in place comes a higher cost. The average price range for this service with big agencies is $240–$700 per link.

Unless you’re working on a large brand, enterprise site, or portfolio of websites, it probably isn’t worth it. However, if you don’t have the time or have too many sites to manage yourself, you can find a freelancer who can help at a more budget-friendly price.

It’s worth mentioning that most good freelancers will still have the cost of paid tools to cover, and they’ll likely pitch for multiple clients on one platform. Not to mention the hard work and time that go into crafting quality pitches. You can find someone who can do the job well for $200–$350 per link.

2. Digital PR

Like HARO link building, digital PR is one of the best ways to build high-authority links while also gaining brand exposure, driving traffic, and improving E-E-A-T.

But unlike HARO link building, where you respond to journalists who have already advertised their need for sources on a particular topic, digital PR involves creating campaigns and proactively pitching them to journalists to get the word out about your business.

Many different techniques can be used, including:

- Press releases

- Data-led campaigns

- Newsjacking

- Reactive PR

Arguably, digital PR is something you can only do well with the help of an agency. Unless you’re a PR expert yourself and have time to dedicate to it, you’re unlikely to get the results a quality agency can.

Digital PR is an “always-on” tactic that needs a team of people who monitor what’s trending and can put together an entire campaign as soon as something happens.

A great example is how search-first creative agency Rise at Seven quickly responded to a creative campaign from Marmite.

Within 45 minutes of the campaign going live, Rise at Seven executives responded with their own creative version on behalf of their client, Auto Glass, in which the brand virtually fixed the broken windshield.

The high-quality work needed to execute digital PR tactics successfully comes at a high cost. Most digital PR agencies with an SEO focus have a minimum monthly retainer of between $4,000 and $15,000.

3. Guest posting link building

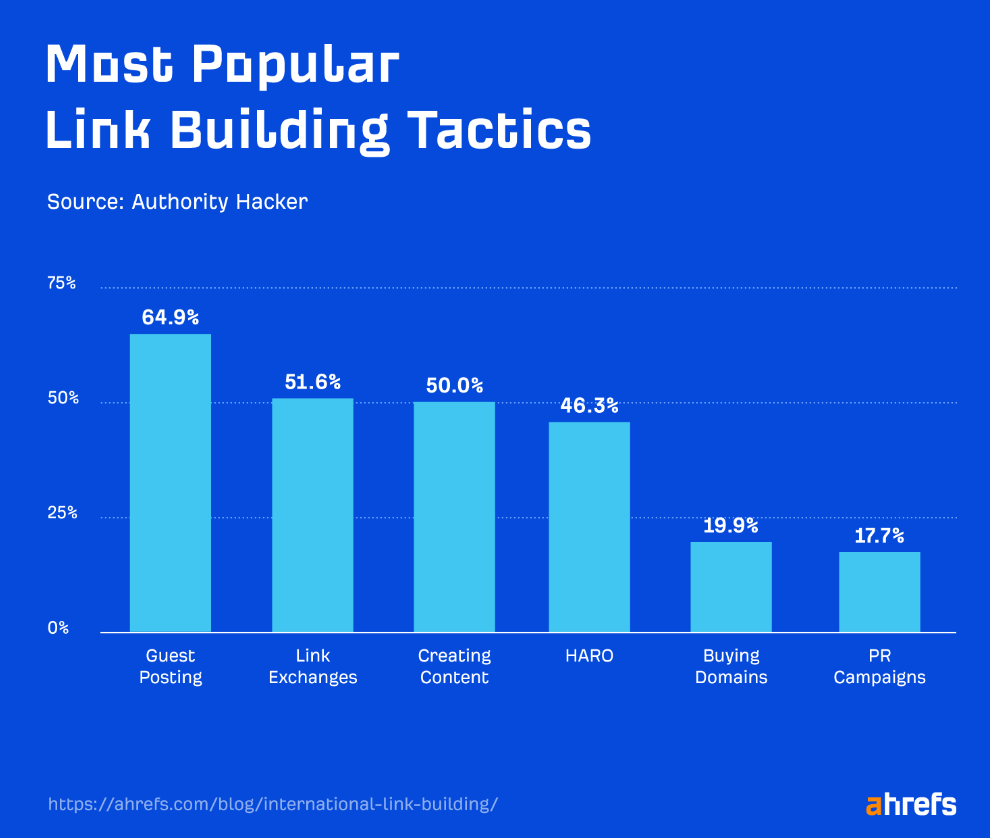

Building links through guest posting is the most popular way to build links among SEO professionals, according to Authority Hacker’s link building survey.

This service requires the provider to find high-quality, niche-relevant websites with a good amount of traffic and a strong backlink profile. The provider then pitches topic ideas for a guest post to the site admins.

The pitch can offer either an updated version of existing content that is currently underperforming or a new but relevant idea.

There’s a reason why guest posting is the most popular link building tactic among SEOs: it works. Links are also relatively easy to land, as the arrangement is usually a win-win for both sites.

Plus, a quality service provider will have built up a database of sites it’s worked with and nurtured relationships with web admins, which means it can often acquire links more easily than you could through hours and hours of outreach.

The thing is, quality comes at a cost. An agency offering guest posts as a service has a team of outreach specialists, content writers, and editors to produce the posts, plus site administration fees (the cost of someone to edit and upload the content) to cover.

If you want to build guest post links properly at scale, the average cost of $250–$400 per link is generally worth it.

4. Niche edits

Niche edits, also known as link placements, are links added to an existing piece of content on a site.

The pitch might be that a current link in the content needs to be updated or fixed, or simply that the site’s audience would benefit from your content as further reading.

Niche edit links can achieve similar results to guest post links but at a more budget-friendly cost (as there is no content to write). For this service, most reputable providers charge between $150 and $300 per link.

Be warned that many link building providers will simply buy these links. Typically, these providers work from a list of sites whose link placement prices fall within a set budget.

For example, I have worked with agencies in the past that were willing to pay up to $100 for any link and continuously did outreach to build up that list. Then, whenever they needed a link, they simply reached out to a relevant site and arranged for a link to be placed.

Obviously, you need to be careful about buying links, as it is against Google’s guidelines (unless the link carries a “nofollow” or “sponsored” link attribute). Many providers simply won’t tell you this is how they do business.

However, some will tell you when a site has asked for an additional fee and ask whether you’re happy to pay it. This is usually only the case for highly authoritative sites asking for $200+. But at least then, you can decide whether this is something you’re happy to do.
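If a provider does place paid links for you, one practical check is whether each placed link actually carries one of the “nofollow” or “sponsored” attributes mentioned above. Here is a minimal sketch using only Python’s standard-library `html.parser`. It assumes you already have the page’s HTML as a string (it does not fetch anything), and the `example.com` domain, the `LinkAuditor` and `audit_links` names, and the simple substring match on `href` are illustrative assumptions rather than a complete audit tool.

```python
from html.parser import HTMLParser

# rel tokens that the guideline above accepts on a paid link.
PAID_LINK_TOKENS = {"nofollow", "sponsored"}


class LinkAuditor(HTMLParser):
    """Records each <a> tag pointing at target_domain and whether its rel
    attribute contains "nofollow" or "sponsored"."""

    def __init__(self, target_domain):
        super().__init__()
        self.target_domain = target_domain
        self.links = []  # list of (href, is_marked) tuples

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)  # attribute values may be None
        href = attributes.get("href") or ""
        # Crude substring match; a real audit would parse the URL's host.
        if self.target_domain not in href:
            return
        # rel is a space-separated token list, e.g. rel="sponsored noopener".
        rel_tokens = set((attributes.get("rel") or "").lower().split())
        self.links.append((href, bool(rel_tokens & PAID_LINK_TOKENS)))


def audit_links(html, target_domain):
    """Return (href, is_marked) for every link to target_domain in html."""
    parser = LinkAuditor(target_domain)
    parser.feed(html)
    parser.close()
    return parser.links


if __name__ == "__main__":
    # Hypothetical page HTML; "example.com" stands in for your own domain.
    page = (
        '<p>See <a href="https://example.com/guide" rel="sponsored">this guide</a>'
        ' and <a href="https://example.com/tool">this tool</a>.</p>'
    )
    for href, marked in audit_links(page, "example.com"):
        print(href, "marked" if marked else "unmarked")
```

Running it prints the guide link as marked and the tool link as unmarked. An unmarked link you paid for is exactly the kind of placement worth raising with the provider.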

5. Skyscraper link building

Skyscraper link building is a technique where you find a popular piece of content with lots of backlinks, and then you create your own (better) high-quality content to attempt to steal your competitor’s links.

When done well, skyscraper link building can land you dozens of high-quality links quickly. The problem is this tactic is a lot for one person to handle. You must do all the research, create content, find sites to reach out to, and then perform email outreach to gain links.

That’s why skyscraper link building is one of the best services you can outsource to an agency. Not only will it have a dedicated team to handle the entire process, but it will also have the professional tools needed to execute the services successfully at scale.

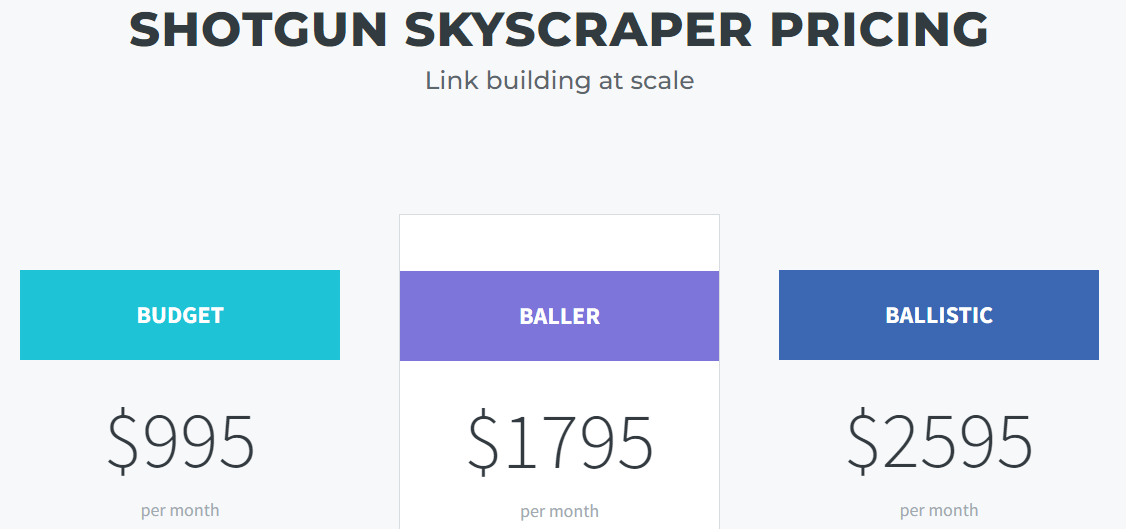

Unsurprisingly, this doesn’t come cheap. Most SEO agencies that offer skyscraper link building have different packages, depending on how many links you’re looking to build. These usually range from around $900 to $3,000.

6. Managed link services

Managed link services and link packs are packaged services that agencies offer to cover all of your link building needs in one place. The idea is you will get a certain number of different types of links per month. For example:

- Five guest post links

- Three niche edit links

- Two HARO links

Some agencies will offer different packs at set prices for you to choose from. Others will charge a set retainer and customize link building packages based on your current backlink profile, competitor analysis, and goals.

If you have a large portfolio of sites, or even a single large enterprise site, and don’t want the hassle of managing and training a dedicated in-house link building team, a managed link building service from a reputable provider is worth it.

Prices can range between $2,500 and $10,000 per month.
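To put those figures in context, you could price the example pack listed above as individual links. The sketch below is a rough back-of-the-envelope comparison, assuming the per-link ranges quoted earlier in this article apply (the big-agency HARO range, plus the guest post and niche edit ranges). Real quotes will vary, and a retainer may also cover the backlink profile and competitor analysis mentioned above. The `a_la_carte_cost` function and the dictionary keys are hypothetical names for illustration.

```python
# Per-link price ranges (low, high) in USD, as quoted earlier in this article.
# The HARO figure uses the big-agency range; freelancers were quoted lower.
PRICE_PER_LINK = {
    "guest_post": (250, 400),
    "niche_edit": (150, 300),
    "haro": (240, 700),
}

# The example monthly pack described above.
EXAMPLE_PACK = {"guest_post": 5, "niche_edit": 3, "haro": 2}


def a_la_carte_cost(pack, prices=PRICE_PER_LINK):
    """Return the (low, high) cost of buying a pack's links one at a time."""
    low = sum(count * prices[kind][0] for kind, count in pack.items())
    high = sum(count * prices[kind][1] for kind, count in pack.items())
    return low, high


if __name__ == "__main__":
    low, high = a_la_carte_cost(EXAMPLE_PACK)
    print(f"${low:,} to ${high:,} per month")  # prints "$2,180 to $4,300 per month"
```

Under those assumptions, the example pack works out to roughly $2,180 to $4,300 if bought link by link, which overlaps the lower end of the $2,500 to $10,000 monthly range.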

Just as there are plenty of bad link building providers, some link building services are questionable at best and downright “black hat” at worst.

Even some well-known providers offer several services that aren’t for everyone, so it’s a good idea to know what you should avoid—even more so than what you’re looking for.

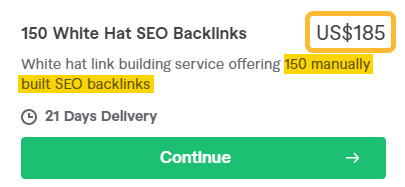

1. Anything cheap

Although paying someone $250 per hour to build links to your site doesn’t guarantee you’ll receive quality service, for the most part, you get what you pay for.

Most agencies will have a team that spends hours performing outreach and producing content, not to mention the paid tools team members need to do their jobs well. So it’s reasonable to expect a certain price tag with a professional service.

Therefore, anyone on platforms like Fiverr, Upwork, or Freelancer offering 500 links for $10 is almost certainly providing a service that either does nothing at all or, worse, does more harm than good.

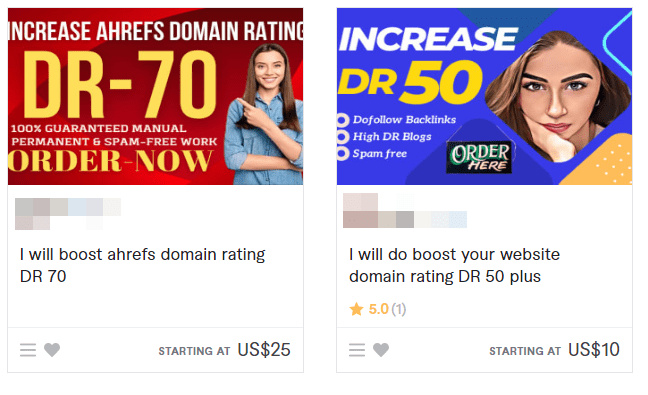

2. DA/DR increasing services

These services can be found across freelancing platforms and promise to push your Ahrefs Domain Rating (DR) or Moz Domain Authority (DA) above a particular value. The idea is to make your site appear more authoritative than it is.

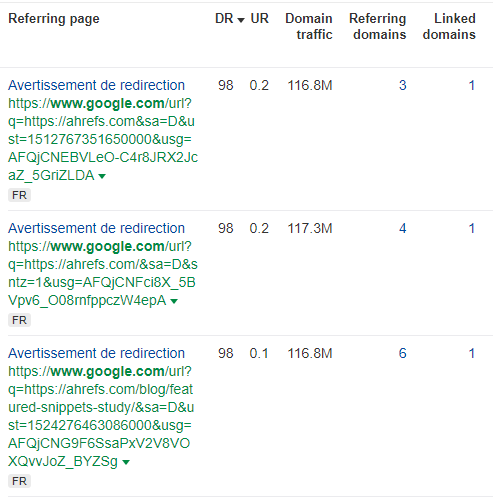

They involve spamming your site with a load of links that can look like this:

It’s worth stressing that Google does not use either DA or DR, and neither has any influence over your rankings. Manipulating your DR or DA serves only to impress other people who check out your site in Ahrefs or Moz.

These attempts at metric manipulation naturally prompt a response from SEO tool providers. Ahrefs, for example, has worked on changes to its DR calculation to try to make these artificial DR boosts ineffective.

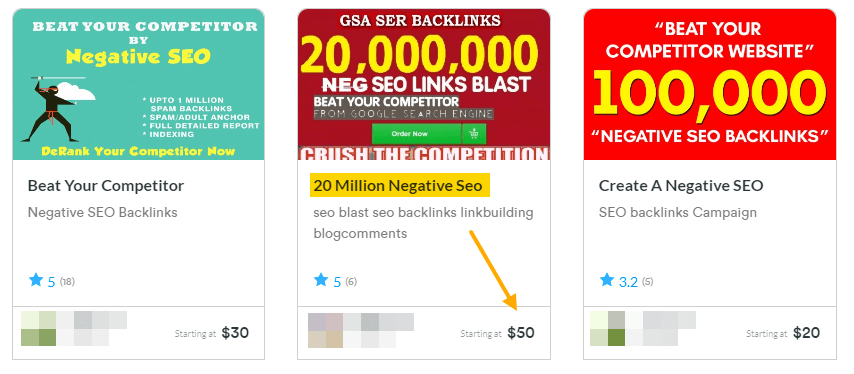

3. Negative SEO links

Negative SEO links, or “SEO attacks,” as they are sometimes called, involve spamming a competitor’s site with poor-quality links in the hope of damaging its rankings and traffic.

Usually, these services spam a site with millions of links at a minimal cost.

Not only is this completely unethical, it is also, quite frankly, downright lazy. And it most likely won’t do anything. If you’re spamming your competitor just to get a leg up on the competition rather than spending time building up your backlink profile, you’re doing SEO wrong.

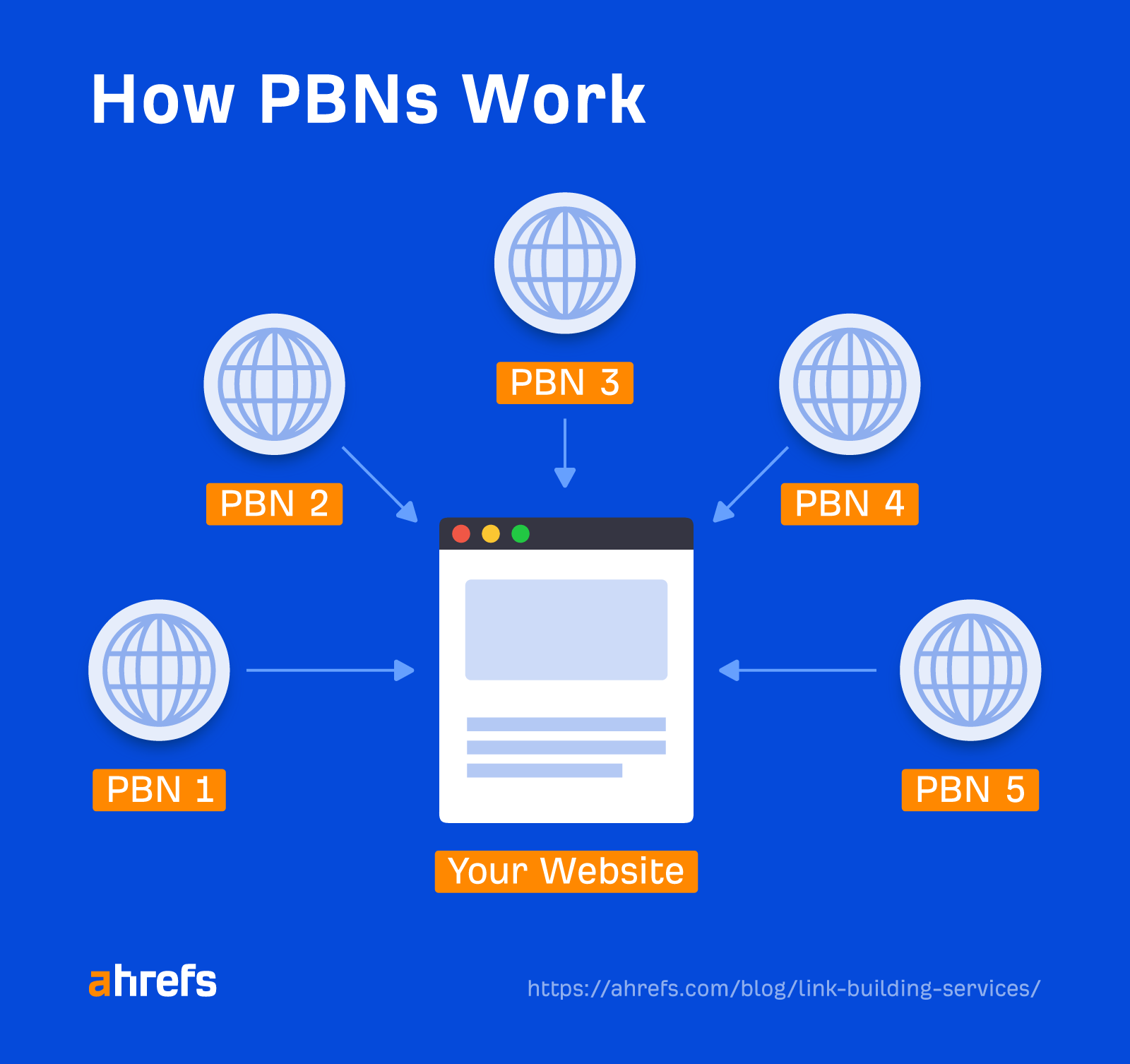

4. Private blog networks (PBNs)

PBNs are groups of websites created specifically to link to one another and create the appearance that a website has “earned” backlinks from other websites.

By linking to your site from every other site in your PBN, you artificially establish trust signals in the hopes that Google will recognize them as legitimate websites and rank you higher.

Private blog networks used for link building are considered link spam and can land you with a Google penalty.

5. Press releases that aren’t newsworthy

A press release can be a genuinely helpful tool for building high-quality links and brand awareness when your company has something newsworthy to share, such as a merger or acquisition.

The issue arises when companies put out a weekly press release solely to build links, covering everything from Karen-from-accounts’ new Labradoodle to “Flip-Flop Fridays.”

Not only is this considered a spammy practice, but these releases also most likely won’t be picked up by the sites you want to obtain links from, which makes them worthless.

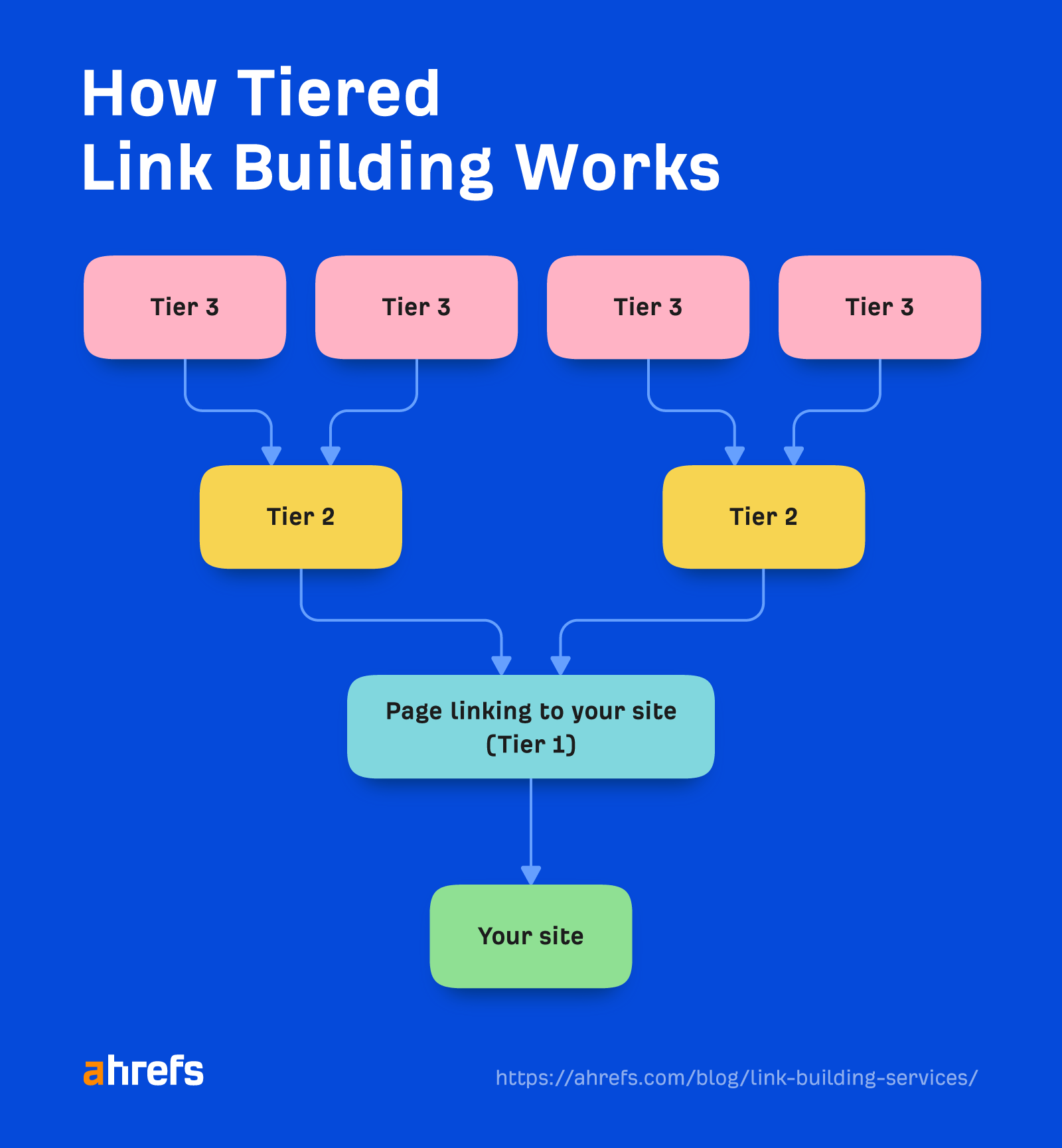

6. Tiered links

Tiered link building is the practice of building links to the pages that already link to your site.

The idea is to increase the link equity to your site by improving the backlink profile of the page linking to yours.

This can work well when done correctly (by manually building high-quality links on each tier). Even so, I would never recommend doing it on a client site. But if you want to try it on your personal sites, that’s your choice.

Most tiered link building services involve spamming the Tier 1 site with low-quality links using automated tools like Money Robot. This unethical practice is used by SEOs who are happy to spam somebody else’s site with poor-quality links.

Final thoughts

Link building services can be the secret to SEO success for anyone who wants to build high-quality links at scale.

The key is finding a high-quality service provider that not only delivers what you need but is also someone you can work with long-term and who can grow with you.

Have you got questions? Ping me on Twitter.
