A Guide to Star Ratings on Google and How They Work

The elusive five-star review used to be something you could only flaunt in a rotating reviews section on your website.

But today, Google has pulled these stars out of the shadows and features them front and center across branded SERPs and beyond.

Star ratings can help businesses earn trust from potential customers, improve local search rankings, and boost conversions.

This is your guide to how they work.

Stars And SERPs: What Is The Google Star Rating?

A Google star rating is a consumer-powered grading system that lets other consumers know how good a business is based on a score of one to five stars.

These star ratings can appear across Google Maps and various Google search properties, such as standard blue-link search listings, ads, rich results like recipe cards, local pack results, third-party review sites, and app store results.

How Does The Google Star Rating Work?

When a person searches Google, they will see star ratings in the results. Google uses an algorithm and an average to determine how many stars are displayed on different review properties.

Google explains that the star score system operates based on an average of all review ratings for that business that have been published on Google.

It’s important to note that this average is not calculated in real-time and can take up to two weeks to update after a new review is created.

When users leave a review, they are asked to rate specific aspects of their customer experience. The aspects offered depend on the type of business being reviewed and the services it has listed.

For example, “plumbers may get ‘Install faucet’ or ‘Repair toilet’ as services to add,” and Google also allows businesses to add custom services that aren’t listed.

When customers are prompted to give feedback, they can give positive or critical feedback, or they can choose not to select a specific aspect to review, in which case this feedback aspect is considered unavailable.

This combination of feedback is what Google uses to determine a business’s average score by “dividing the number of positive ratings by the total number of ratings (except the ones where the aspect was not rated).”
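The two calculations described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic as Google describes it, not Google's actual implementation; the function names and the sample data are hypothetical.

```python
from typing import Optional, Sequence


def average_stars(star_ratings: Sequence[int]) -> float:
    """Plain average of all published star ratings (1 to 5)."""
    return sum(star_ratings) / len(star_ratings)


def aspect_score(feedback: Sequence[Optional[bool]]) -> Optional[float]:
    """Positive ratings divided by total ratings for one aspect.

    True = positive, False = critical, None = aspect not rated.
    Unrated entries are left out of the total, as the quote describes.
    """
    rated = [f for f in feedback if f is not None]
    if not rated:
        return None  # nobody rated this aspect, so there is no score
    return sum(rated) / len(rated)


# Hypothetical sample data
print(average_stars([5, 4, 5, 3]))                         # 4.25
print(aspect_score([True, True, False, None, True, None]))  # 0.75
```

In the second call, two reviewers skipped the aspect, so the score is three positive ratings out of four, not out of six.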

Google star ratings do have some exceptions in how they function.

For example, the UK and EU have certain restrictions that don’t apply to other regions, following recent scrutiny of fake reviews by the EU Consumer Protection Cooperation network and the UK Competition and Markets Authority.

Additionally, the type of rating search property will determine the specifics of how it operates and how to gather and manage reviews there.

Keep reading to get an in-depth explanation of each type of Google star rating available on the search engine results pages (SERPs).

How To Get Google Star Ratings On Different Search Properties

As mentioned above, there are different types of Google star ratings available across search results, including the standard blue-link listings, ads, local pack results, rich snippets, third-party reviews, and app store results.

Here’s what the different types of star-rating results look like in Google and how they work on each listing type.

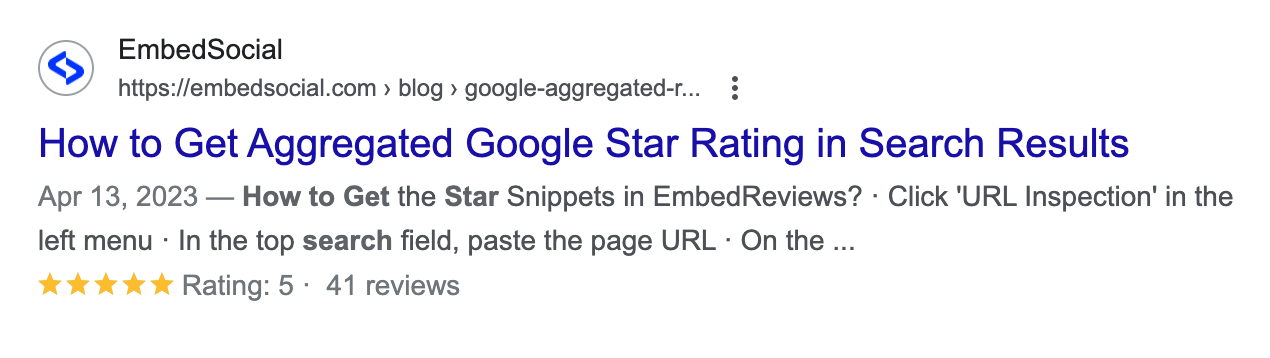

Standard “Blue Link” Listings And Google Stars

In 2021, Google started testing star ratings in organic search and has since kept this SERP feature intact.

Websites can stand out from their competitors by getting stars to show up next to their organic search listings.

How To Get Google Stars On Organic SERPs

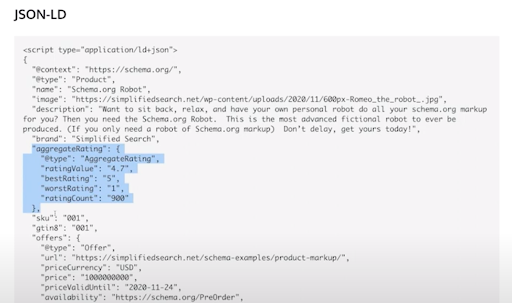

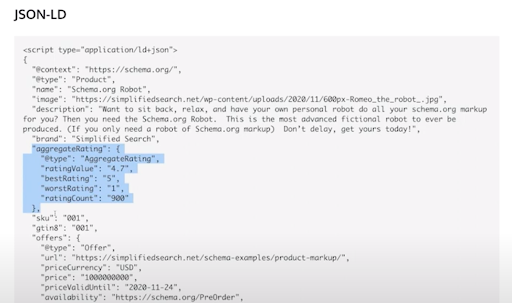

If you want stars to show up on your organic search results, add schema markup to your website.

Keep in mind that you need actual reviews for your structured data markup to show.

Then, you can work with your development team to input the code on your site that indicates your average rating, highest, lowest, and total rating count.
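As a rough sketch of what that code could look like, the Python below builds schema.org `AggregateRating` markup and wraps it in a JSON-LD script tag. The product name, the ratings, and the helper function are made up for illustration. Note that in schema.org, `bestRating` and `worstRating` describe the top and bottom of the rating scale, which is assumed here to be 5 and 1.

```python
import json


def aggregate_rating_jsonld(item_name, ratings, best=5, worst=1):
    """Build schema.org AggregateRating markup for one reviewed item."""
    if not ratings:
        raise ValueError("markup should only be published for real reviews")
    return {
        "@context": "https://schema.org",
        "@type": "Product",  # assumption: the reviewed item is a product
        "name": item_name,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": round(sum(ratings) / len(ratings), 1),
            "bestRating": best,
            "worstRating": worst,
            "ratingCount": len(ratings),
        },
    }


# Hypothetical item and ratings
markup = aggregate_rating_jsonld("Example Widget", [5, 4, 5, 3, 5])
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

The printed script tag is what would go into the page's HTML. Generating it from the stored ratings, rather than typing the numbers by hand, keeps the markup in step with the reviews visible on the page.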

Screenshot JSON-LD script on Google Developers, August 2021

Once you add the markup to your site, there is no clear timeline for when stars will start appearing in the SERPs; that’s up to Google.

In fact, Google specifically mentions that reviews in properties like search can take longer to appear, and often, this delay is caused by business profiles being merged.

When you’re done, you can check your work with Google’s Rich Results Test, which replaced the retired Structured Data Testing Tool.

Adding schema is strongly encouraged. But even without it, if you own a retail store with ratings, Google may still show your star ratings in the search engine results.

They do this to ensure searchers are getting access to a variety of results. Google says:

“content on your website that’s been crawled and is related to retail may also be shown in product listings and annotations for free across Google.”

If you want star ratings to show up on Shopping Ads, you’ll have to pay for that.

Paid Ads And Google Stars

When Google Stars appear in paid search ads, they’re known as seller ratings, “an automated extension type that showcases advertisers with high ratings.”

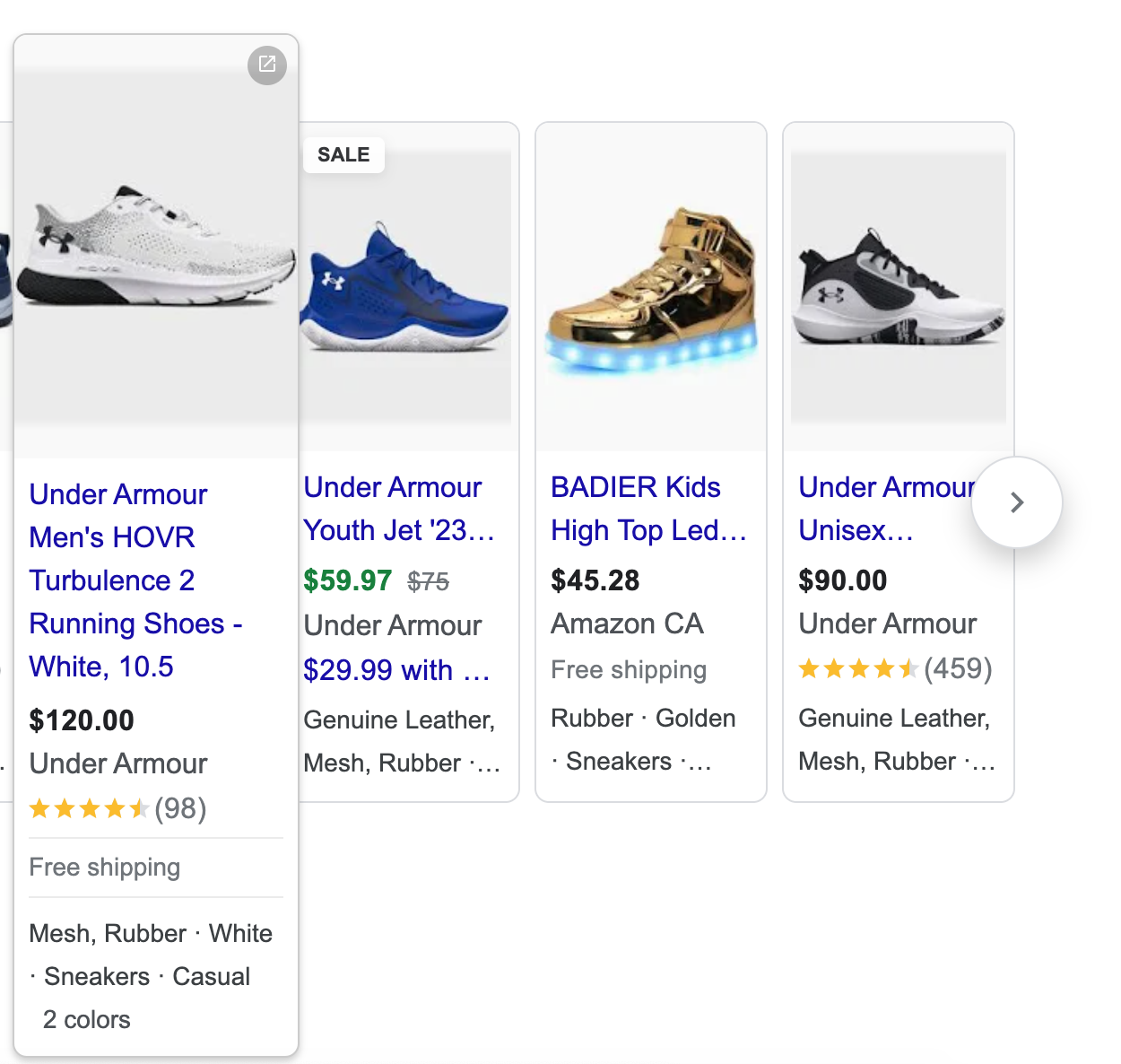

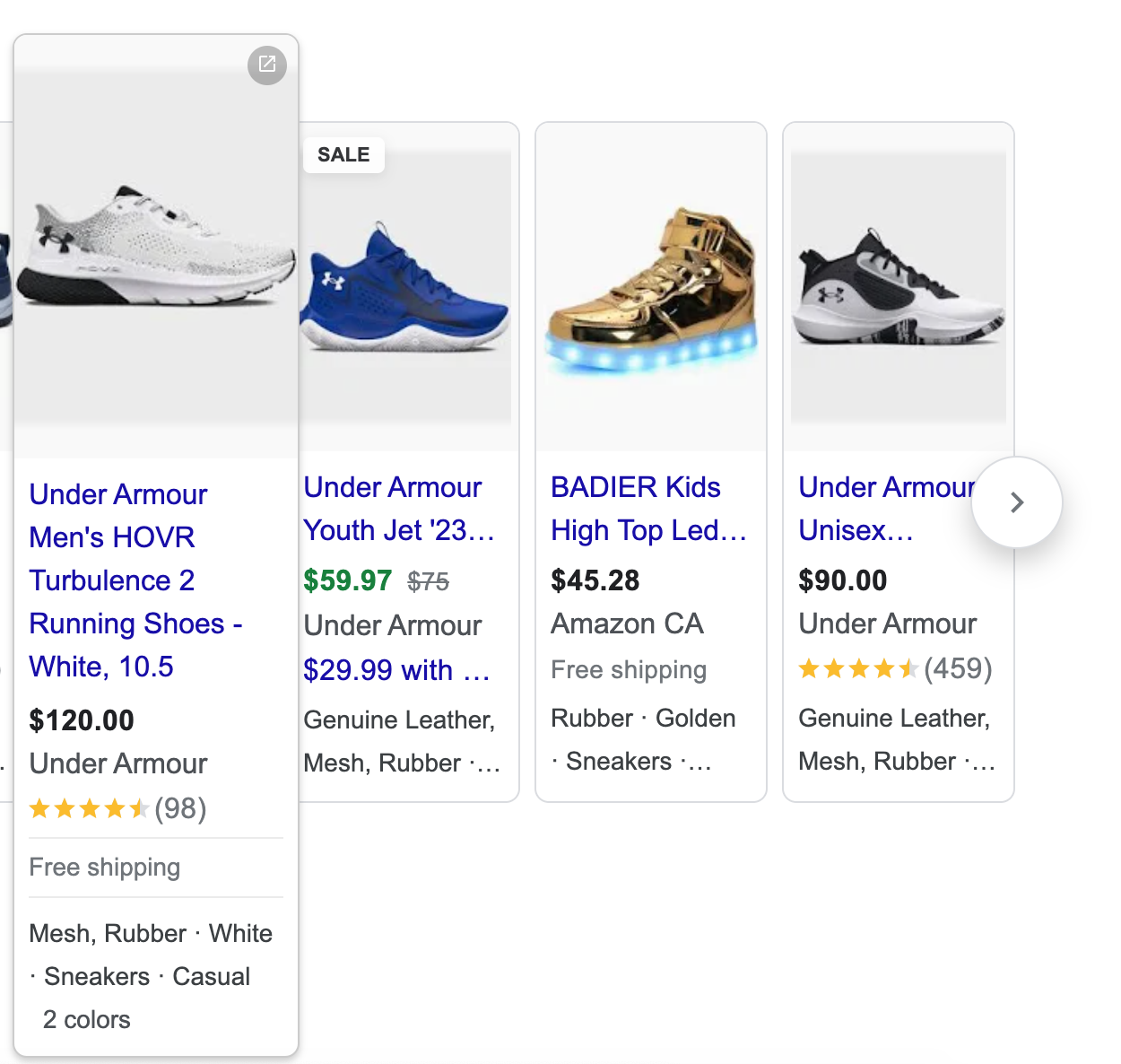

These can appear in text ads, shopping ads, and free listings. Both the star rating and the total number of votes or reviews are displayed.

In addition to Google star ratings, shopping ads may include additional product information such as shipping details, color, material, and more, as shown below.

Screenshot from SERPs ads, Google, February 2024

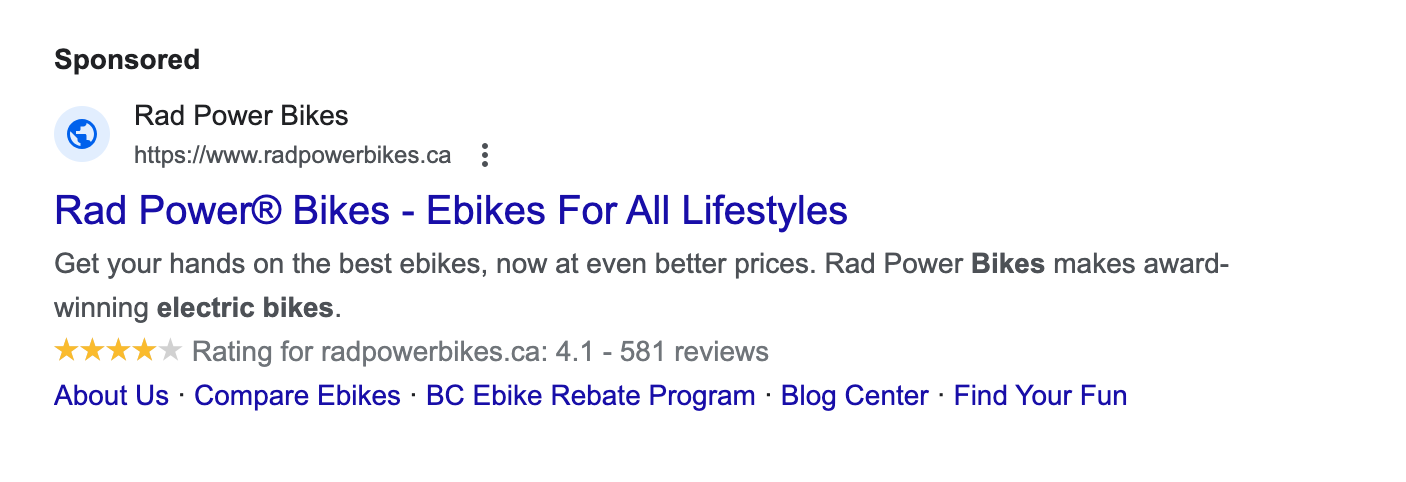

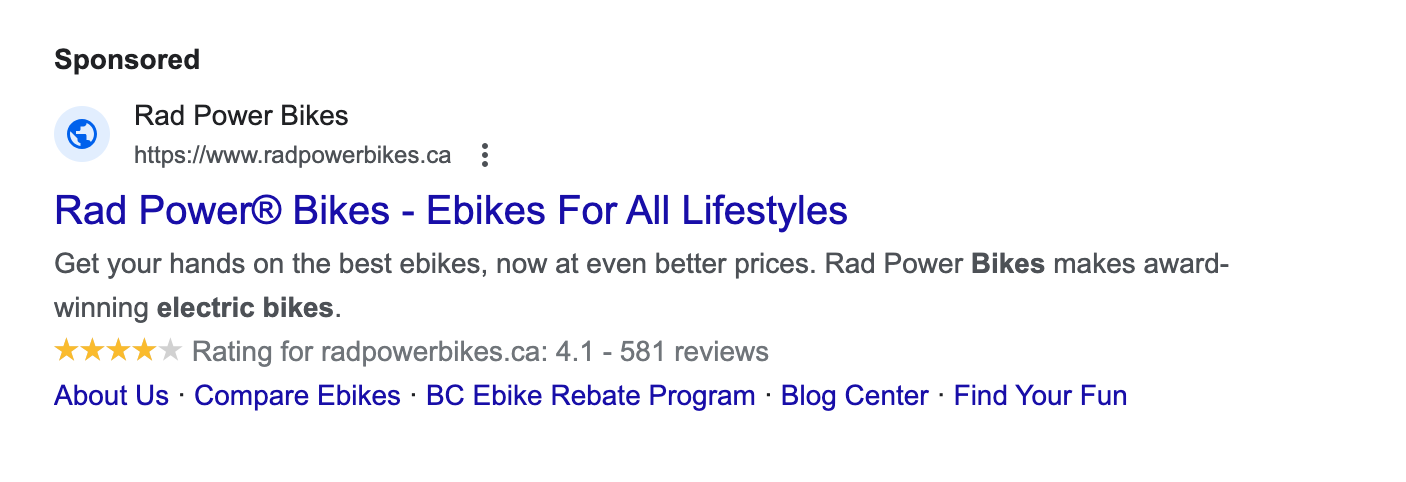

Paid text ads were previously labeled “Ad” and now carry a “Sponsored” label, as shown below.

Screenshot from SERPs ads, Google, February 2024

How To Get Google Stars On Paid Ads

To participate in free listings, sellers have to do three things:

- Follow all the required policies around personally identifiable information, spam, malware, legal requirements, return policies, and more.

- Submit a feed through the Google Merchant Center or have structured data markup on their website (as described in the previous section).

- Add their shipping settings.

Again, some ecommerce sellers who do not have schema markup may still have their content show up in the SERPs.

For text ads and shopping ads to show star ratings, sellers are typically required to have at least 100 reviews in the last 12 months.

Paid advertisers must also meet a minimum number of stars for seller ratings to appear on their text ads. This helps higher-quality advertisers stand out from the competition.

For example, text ads have to have a minimum rating of 3.5 for the Google star ratings to show.

Google treats reviews on a per-country basis, so the minimum threshold of 100 reviews applies to one country at a time.

For star ratings to appear on a Canadian ecommerce company’s ads, for example, they would have to have obtained a minimum of 100 reviews from within Canada in the last year.
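The thresholds above can be expressed as a simple check. The sketch below assumes the figures quoted in this article (100 reviews per country in the last 12 months and a 3.5 average for text ads). The `Review` class and function name are hypothetical, and Google's real eligibility logic may weigh other factors.

```python
from dataclasses import dataclass
from datetime import date, timedelta

MIN_REVIEWS = 100              # per country, per the article
MIN_AVERAGE = 3.5              # minimum average for text ads
WINDOW = timedelta(days=365)   # "the last 12 months", approximated


@dataclass
class Review:
    country: str   # e.g. "CA"
    stars: int     # 1 to 5
    created: date


def seller_rating_eligible(reviews, country, today):
    """Check the review-count and average thresholds for one country."""
    recent = [
        r for r in reviews
        if r.country == country
        and timedelta(0) <= today - r.created <= WINDOW
    ]
    if len(recent) < MIN_REVIEWS:
        return False
    average = sum(r.stars for r in recent) / len(recent)
    return average >= MIN_AVERAGE


# Hypothetical data: 100 recent Canadian reviews, 50 recent US reviews
today = date(2024, 2, 1)
reviews = (
    [Review("CA", 4, date(2023, 9, 1)) for _ in range(100)]
    + [Review("US", 5, date(2023, 9, 1)) for _ in range(50)]
)
print(seller_rating_eligible(reviews, "CA", today))  # True
print(seller_rating_eligible(reviews, "US", today))  # False
```

The US reviews have a perfect average but still fail, because the count is evaluated separately for each country.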

Google considers reviews from its own Google Customer Reviews and also from approved third-party partner review sites from its list of 29 supported review partners, which makes it easier for sellers to meet the minimum review threshold each year.

Google also requests:

- The domain that has ratings must be the same as the one that’s visible in the ad.

- Google or its partners must conduct a research evaluation of your site.

- The reviews included must be about the product or service being sold.

Local Pack Results And Google Stars

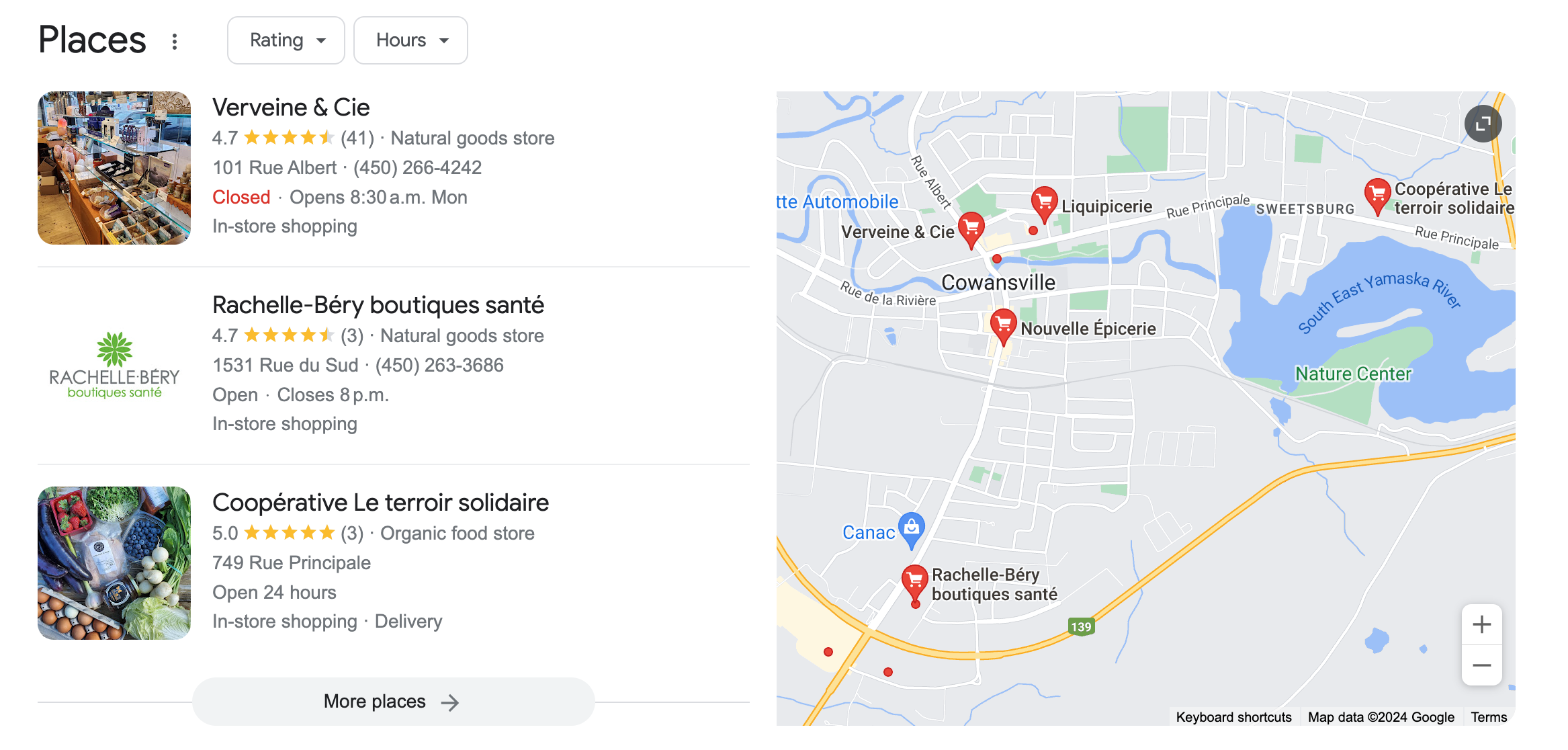

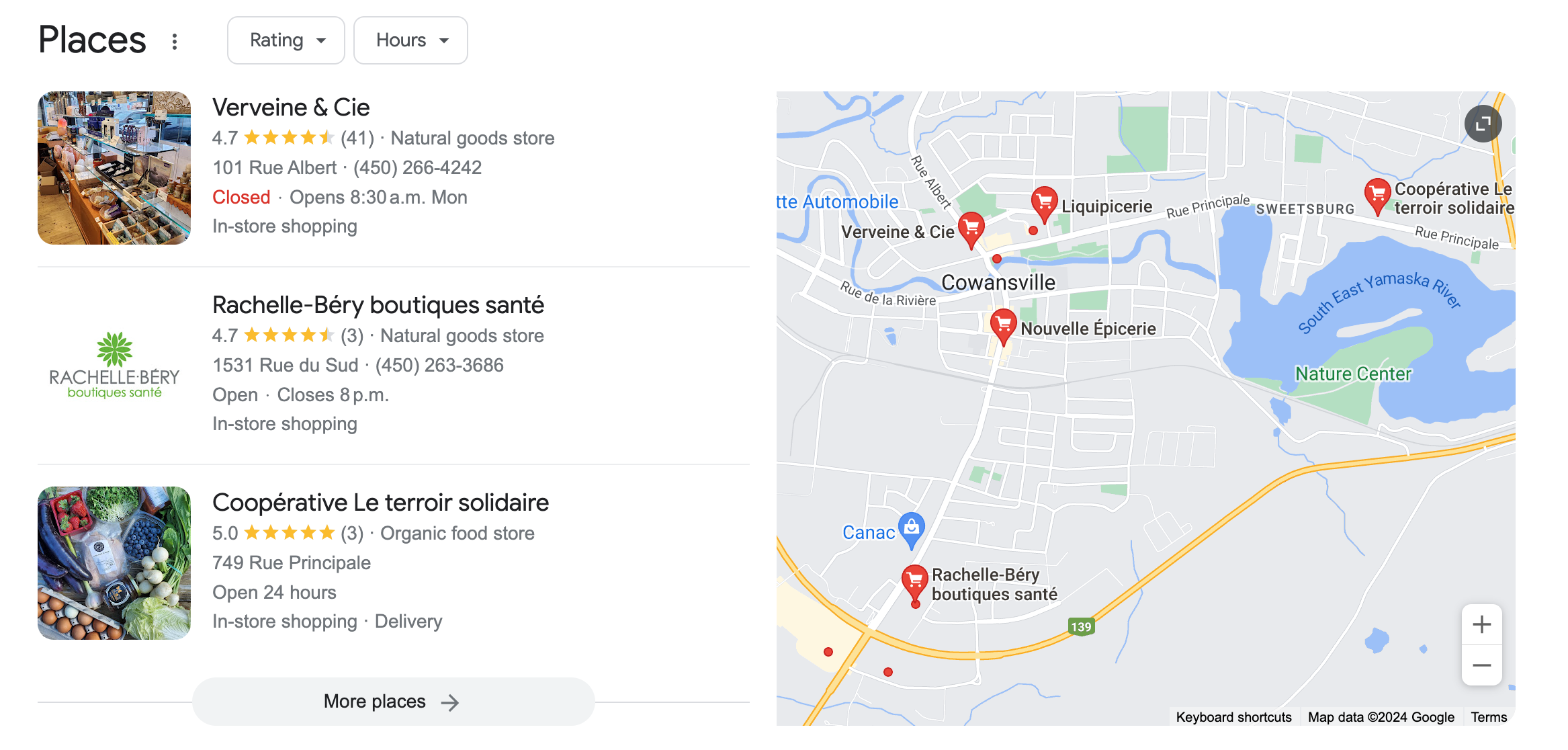

Local businesses have a handful of options for their business to appear on Google via Places, local map results, and a Google Business Profile page – all of which can show star ratings.

Consumers even have the option to sort local pack results by their rating, as shown in the image example below.

Screenshot from SERPs local pack, Google, February 2024

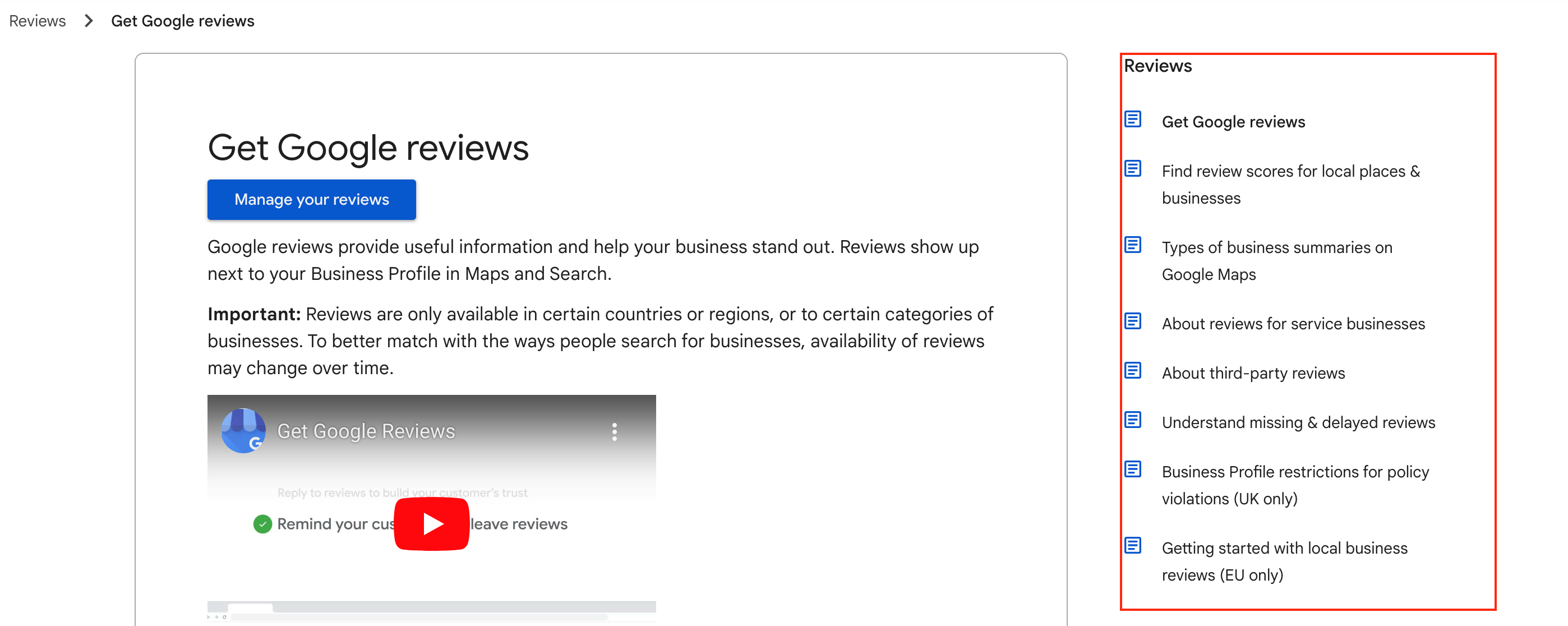

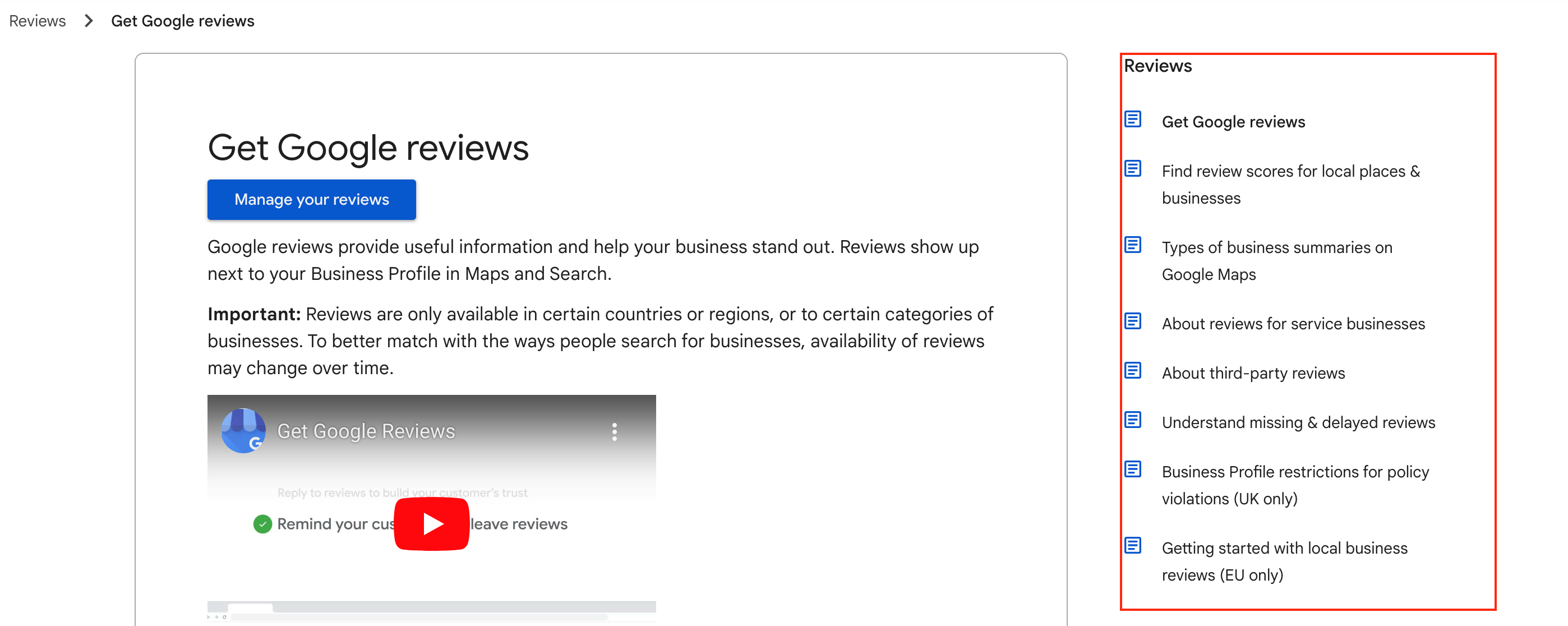

How To Get Google Stars On Local Search Results

To appear in local search results, a Google Business Profile is required.

Customers may leave reviews directly on local business properties without being asked, but Google also encourages business owners to solicit reviews from their customers and shares best practices, including:

- Asking your customers to leave you a review and making it easy for them to do so by providing a link to your review pages.

- Making review prompts desktop and mobile-friendly.

- Replying to customer reviews (ensure you’re a verified provider on Google first).

- Not offering incentives for reviews.

Customers can also leave star ratings on other local review sites, as Google can pull from both to display on local business search properties. It can take up to two weeks to get new local reviews to show in your overall score.

Once customers are actively leaving reviews, Google Business Profile owners have a number of options to help them manage these:

Screenshot from Google Business Profile Help, Google, February 2024

Rich Results, Like Recipes, And Google Stars

Everybody’s gotta eat, and we celebrate food in many ways — one of which is recipe blogs.

While restaurants rely more on local reviews, organic search results, and even paid ads, food bloggers seek to have their recipes rated.

Similar to other types of reviews, recipe cards in search results show the average review rating and the total number of reviews.

Screenshot from search for [best vegan winter recipes], Google, February 2024

The outcome has become a point of contention among the food blogging community, since only three recipes per search can be seen on Google desktop results (as shown in the image above), and four on a mobile browser.

These coveted spots will attract clicks, leaving anyone who hasn’t mastered online customer reviews in the dust. That means that the quality of the recipe isn’t necessarily driving these results.

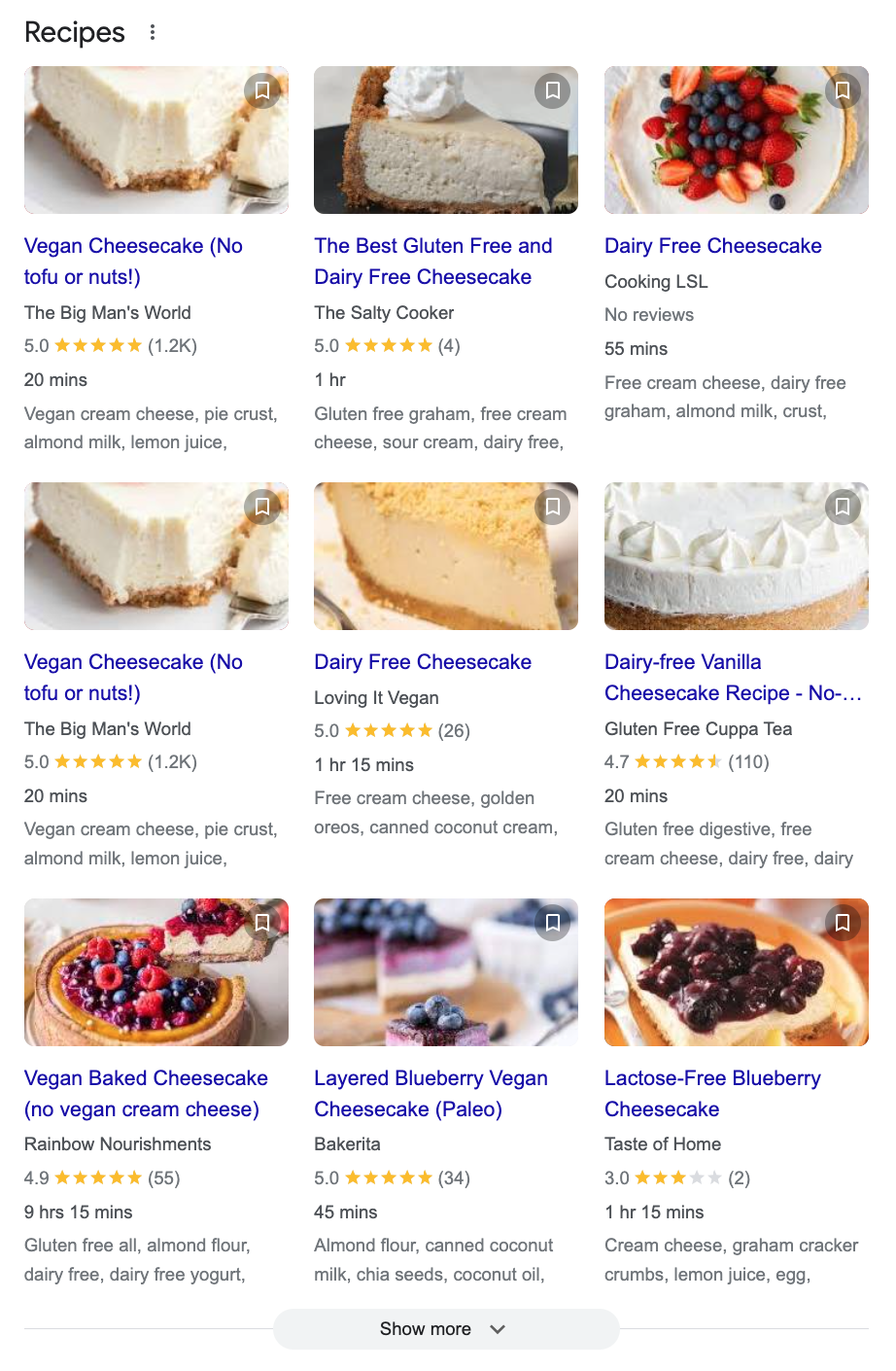

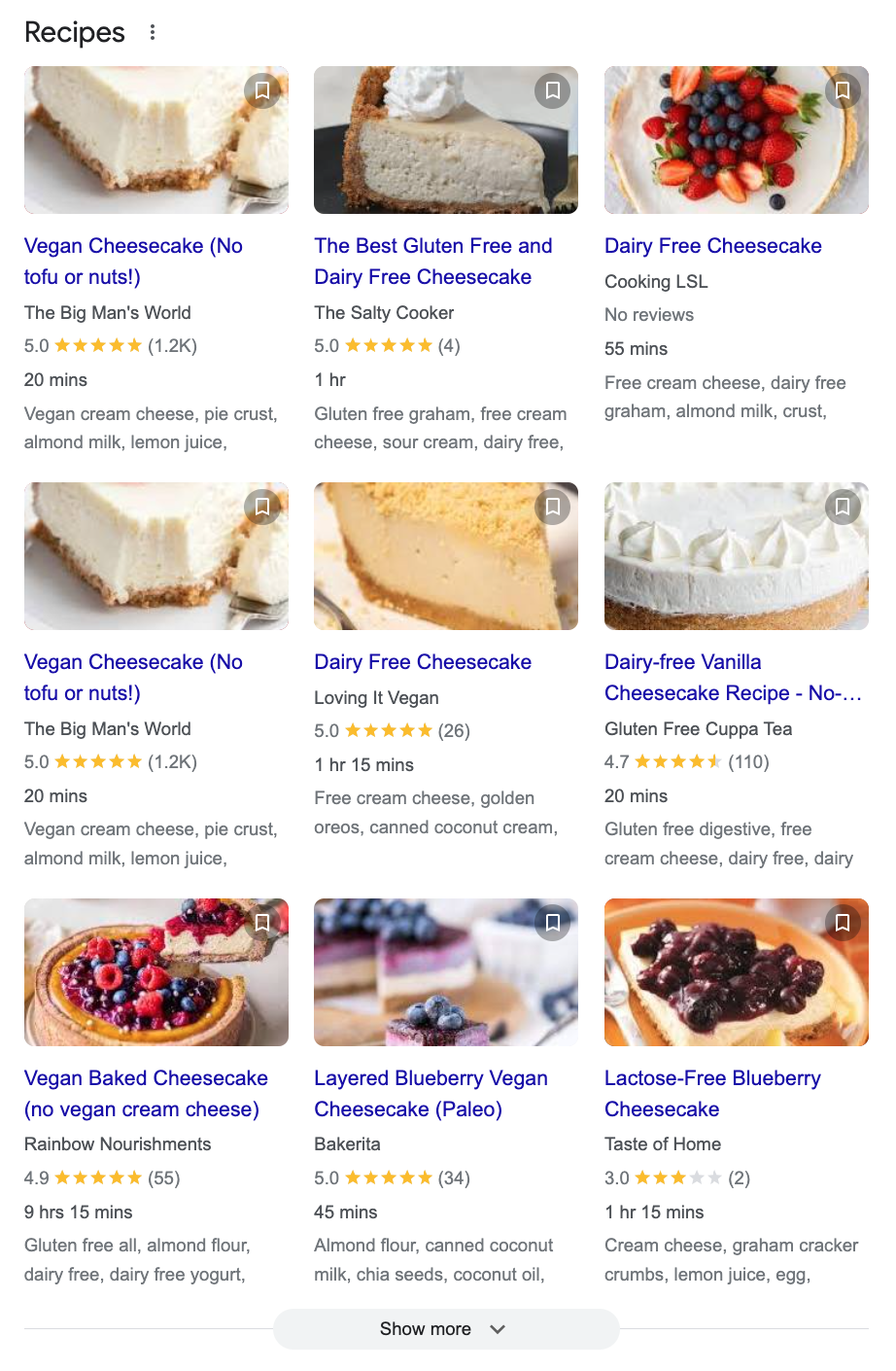

Google gives users the option to click “Show more” to see two additional rows of results:

Screenshot from SERPs, Google, February 2024

Searchers can continue to click the “Show more” button to see additional recipe results.

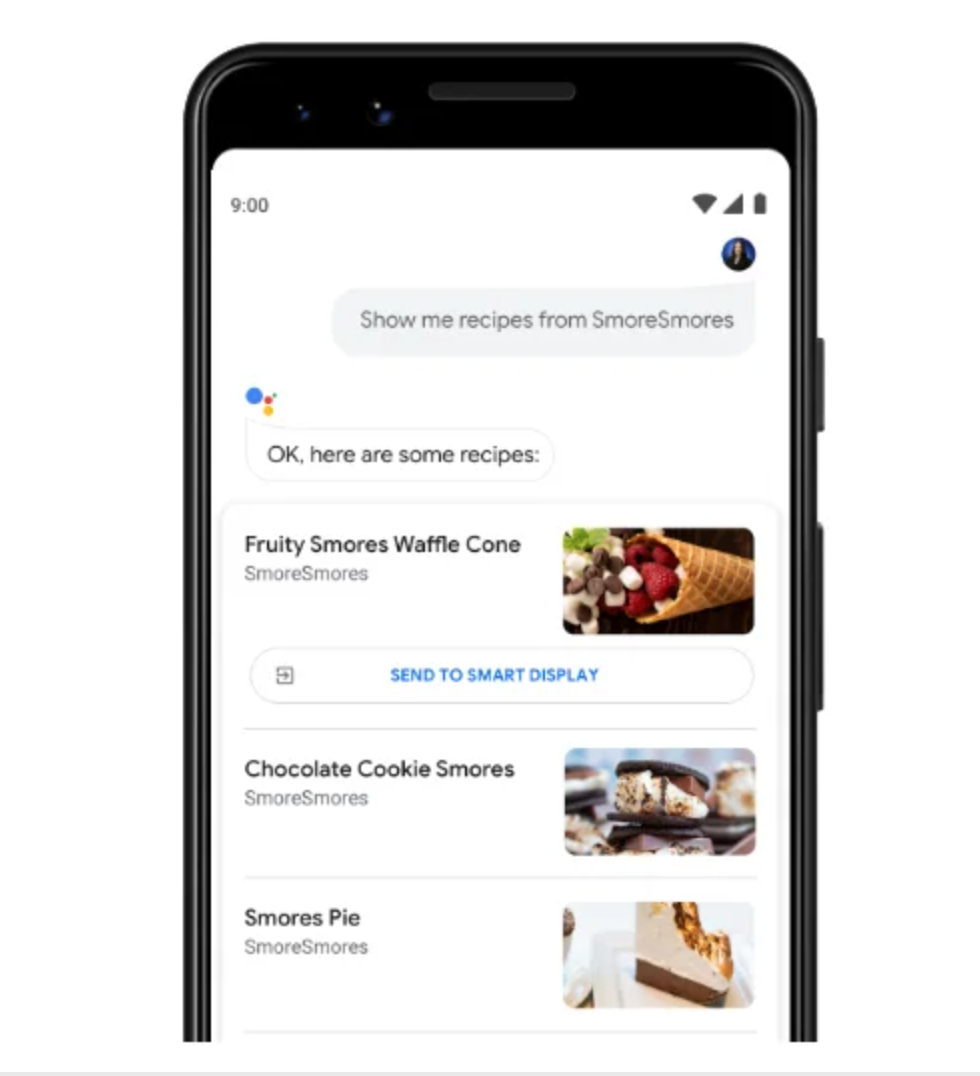

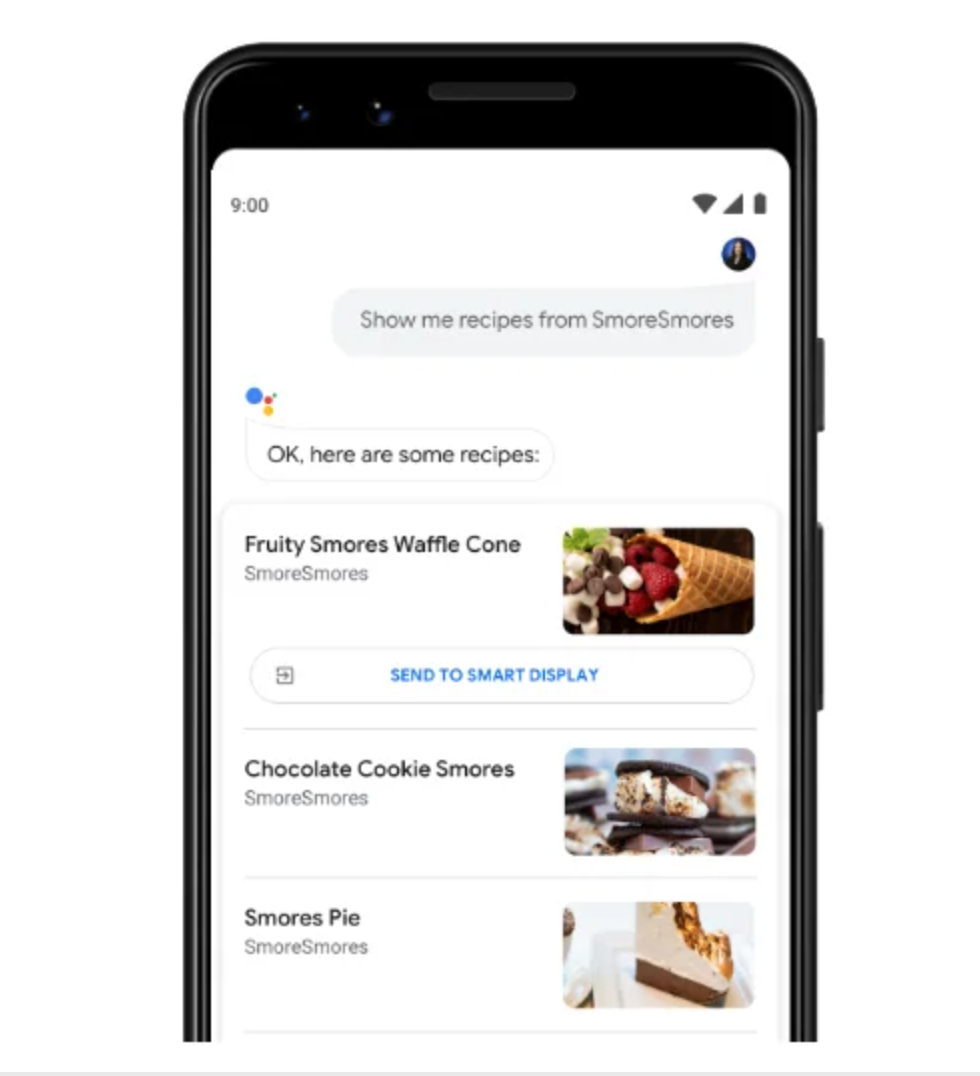

Anyone using Google Home can search for a recipe and get results through their phone:

Screenshot from Elfsight, February 2024

Similarly, recipe search results can be sent from the device to the Google Home assistant. Both methods will enable easy and interactive step-by-step recipe instructions using commands like “start recipe,” “next step,” or even “how much olive oil?”

How To Get Google Stars On Recipe Results

Similar to the steps to have stars appear on organic blue-link listings, food bloggers and recipe websites need to add schema to their websites in order for star ratings to show.

However, it’s not as straightforward as listing the average and the total number of ratings. Developers should follow Google’s instructions for recipe markup.

There is both required and recommended markup:

Required Markup For Recipes

- Name of the recipe.

- Image of the recipe in a BMP, GIF, JPEG, PNG, WebP, or SVG format.

Recommended Markup For Recipes

- Aggregate rating.

- Author.

- Cook time.

- Date published.

- Description.

- Keywords.

- Nutrition information.

- Prep time.

- Recipe category by meal type, like “dinner.”

- Region associated with the recipe.

- Ingredients.

- Instructions.

- Yield or total serving.

- Total time.

- Video (and other related markup, if there is a video in the recipe).

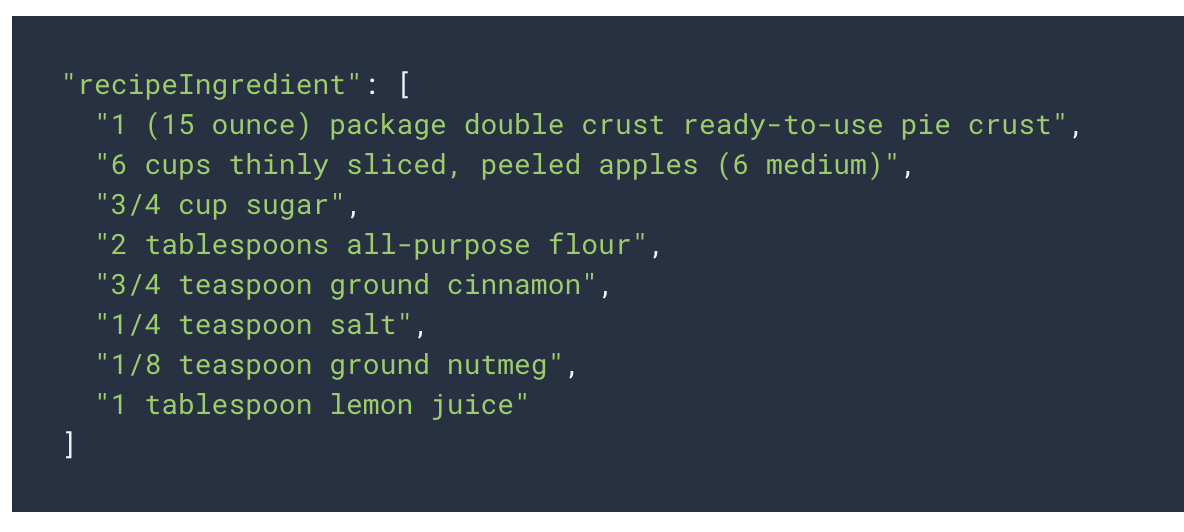

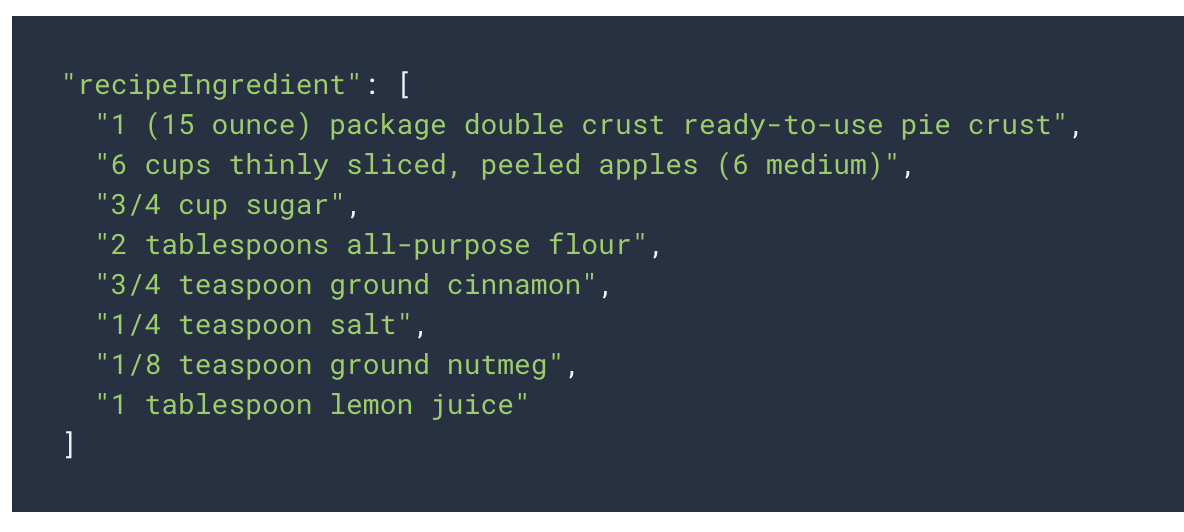

To have recipes included in Google Assistant Guided Recipes, the following markup must be included:

- recipeIngredient

- recipeInstructions

- To have the video property, add the contentUrl.
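To make the lists above concrete, here is a minimal sketch of recipe markup built in Python and serialized as JSON-LD. The recipe, URL, and rating figures are invented for illustration, and the `missing_properties` helper is a hypothetical convenience, not part of any Google tool. The property names (`name`, `image`, `aggregateRating`, `recipeIngredient`, `recipeInstructions`) come from schema.org's `Recipe` type.

```python
import json

REQUIRED = ("name", "image")
GUIDED_RECIPES = ("recipeIngredient", "recipeInstructions")

# Hypothetical recipe used only as an example
recipe = {
    "@context": "https://schema.org",
    "@type": "Recipe",
    "name": "Example Vegan Chili",
    "image": ["https://example.com/photos/vegan-chili.jpg"],
    "aggregateRating": {
        "@type": "AggregateRating",
        "ratingValue": 4.8,
        "ratingCount": 125,
    },
    "recipeIngredient": [
        "2 cans kidney beans",
        "1 tablespoon olive oil",
        "1 onion, diced",
    ],
    "recipeInstructions": [
        {"@type": "HowToStep", "text": "Heat the olive oil and cook the onion."},
        {"@type": "HowToStep", "text": "Add the beans and simmer for 30 minutes."},
    ],
}


def missing_properties(markup, names):
    """Return the property names that are absent or empty in the markup."""
    return [name for name in names if not markup.get(name)]


print(missing_properties(recipe, REQUIRED))        # []
print(missing_properties(recipe, GUIDED_RECIPES))  # []
print(json.dumps(recipe, indent=2))
```

A check like this can run before publishing, so a recipe missing its image or ingredient list is caught before Google crawls the page.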

For example, here’s what the structured markup would look like for the recipeIngredient property:

Screenshot from Google Developer, February 2024

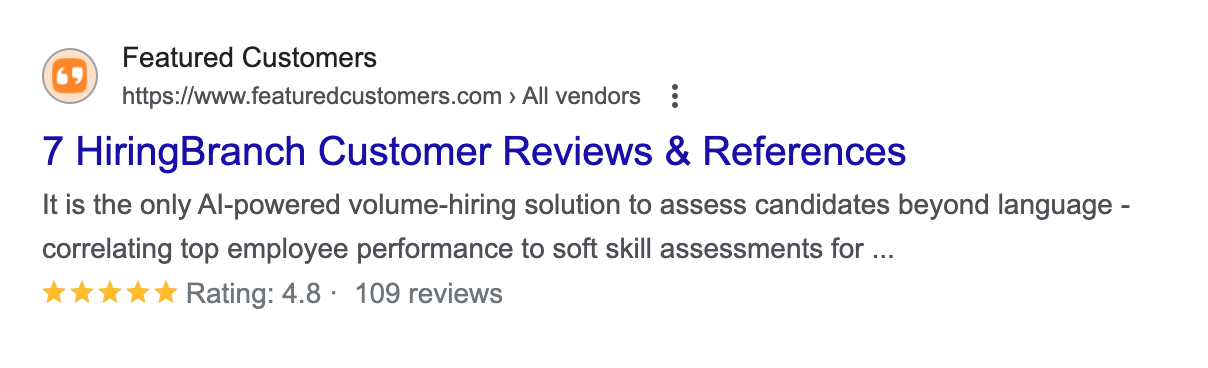

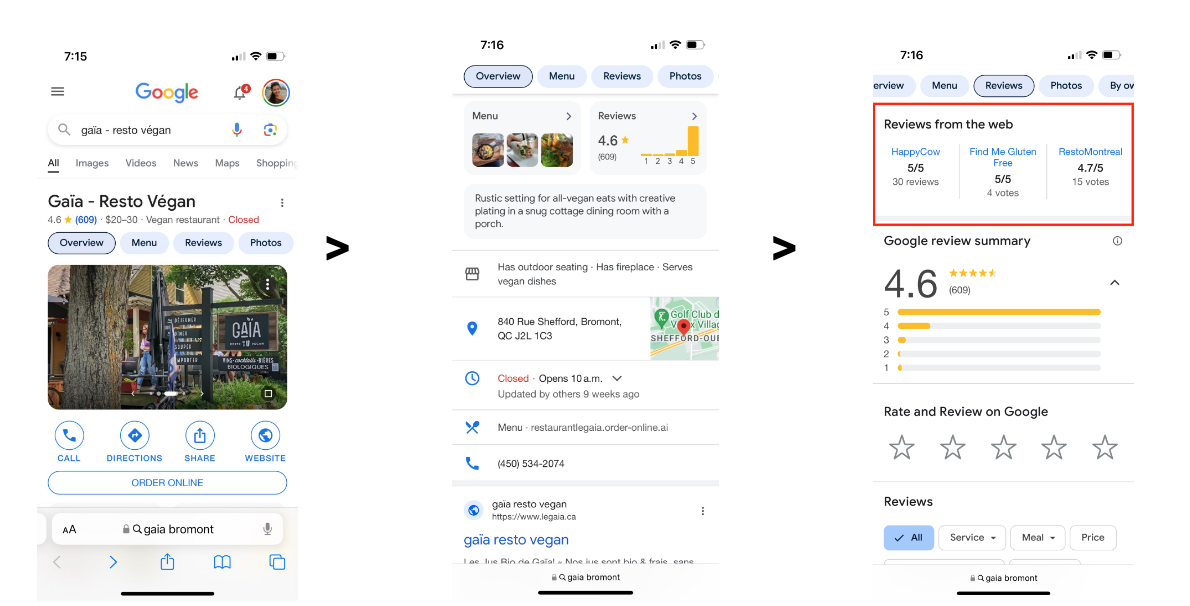

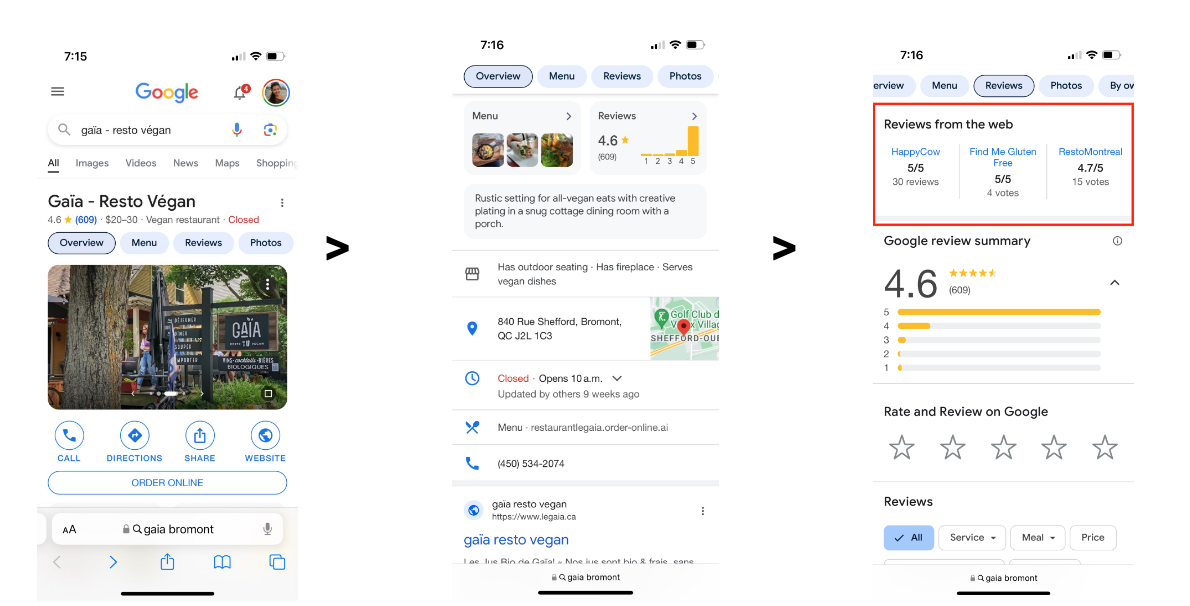

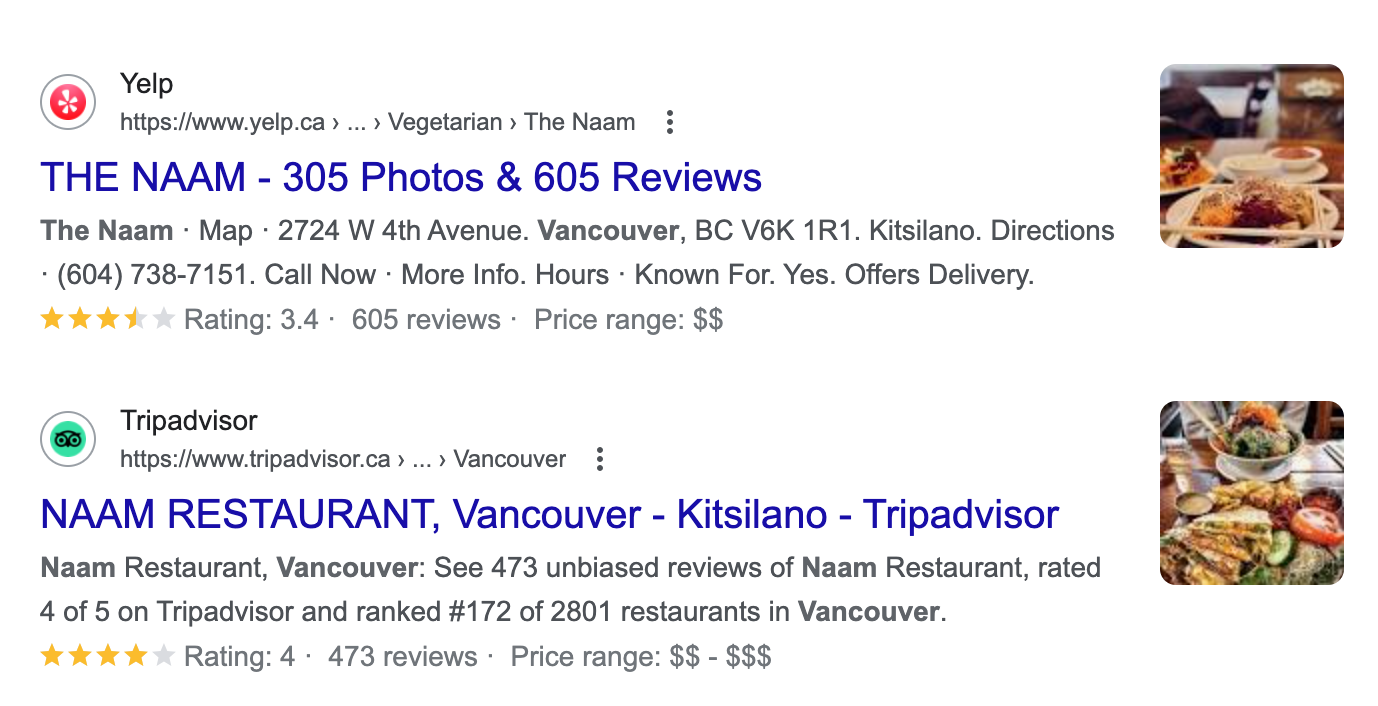

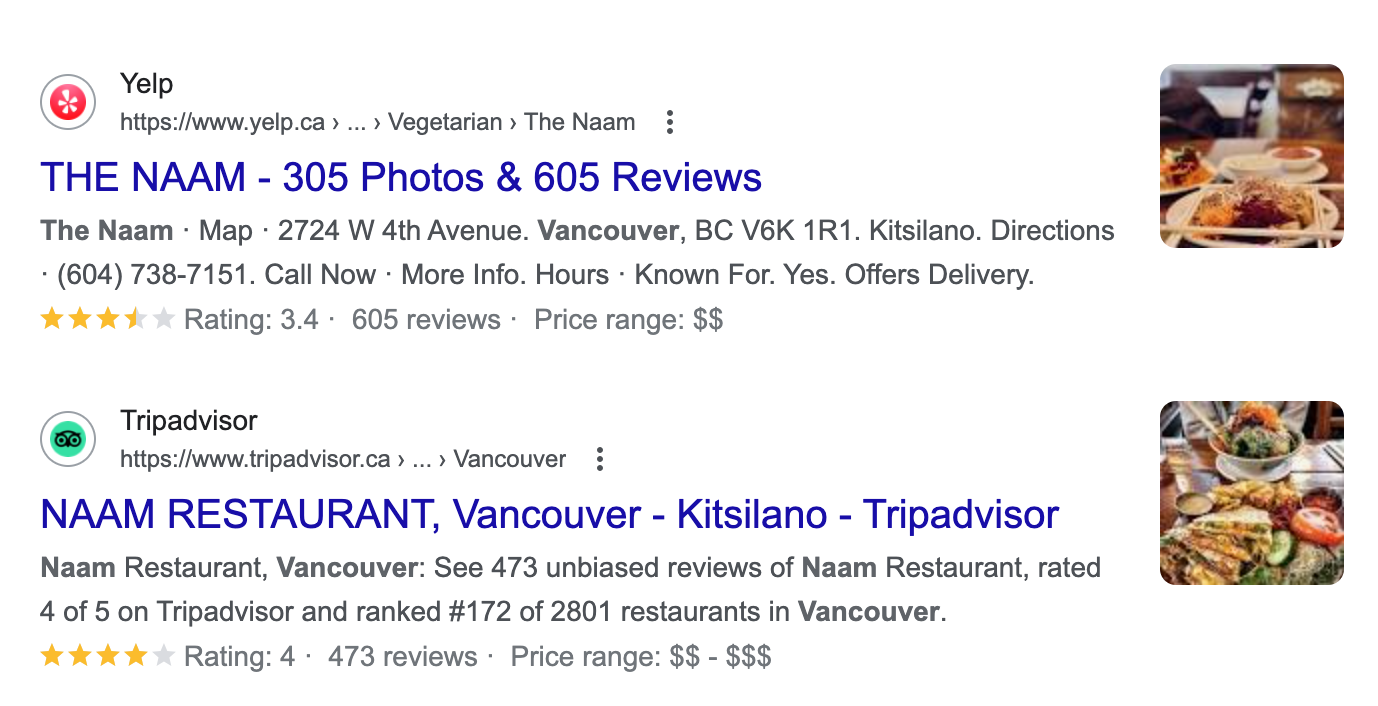

Third-Party Review Sites And Google Stars

Many software companies rely on third-party review sites to help inform their customers’ purchasing decisions.

Third-party review sites include any website a brand doesn’t own where a customer can submit a review, such as Yelp, G2, and many more.

Many of these sites, like Featured Customers shown below, can display star ratings within Google search results.

Screenshot from SERPs listing of a review site, Google, February 2024

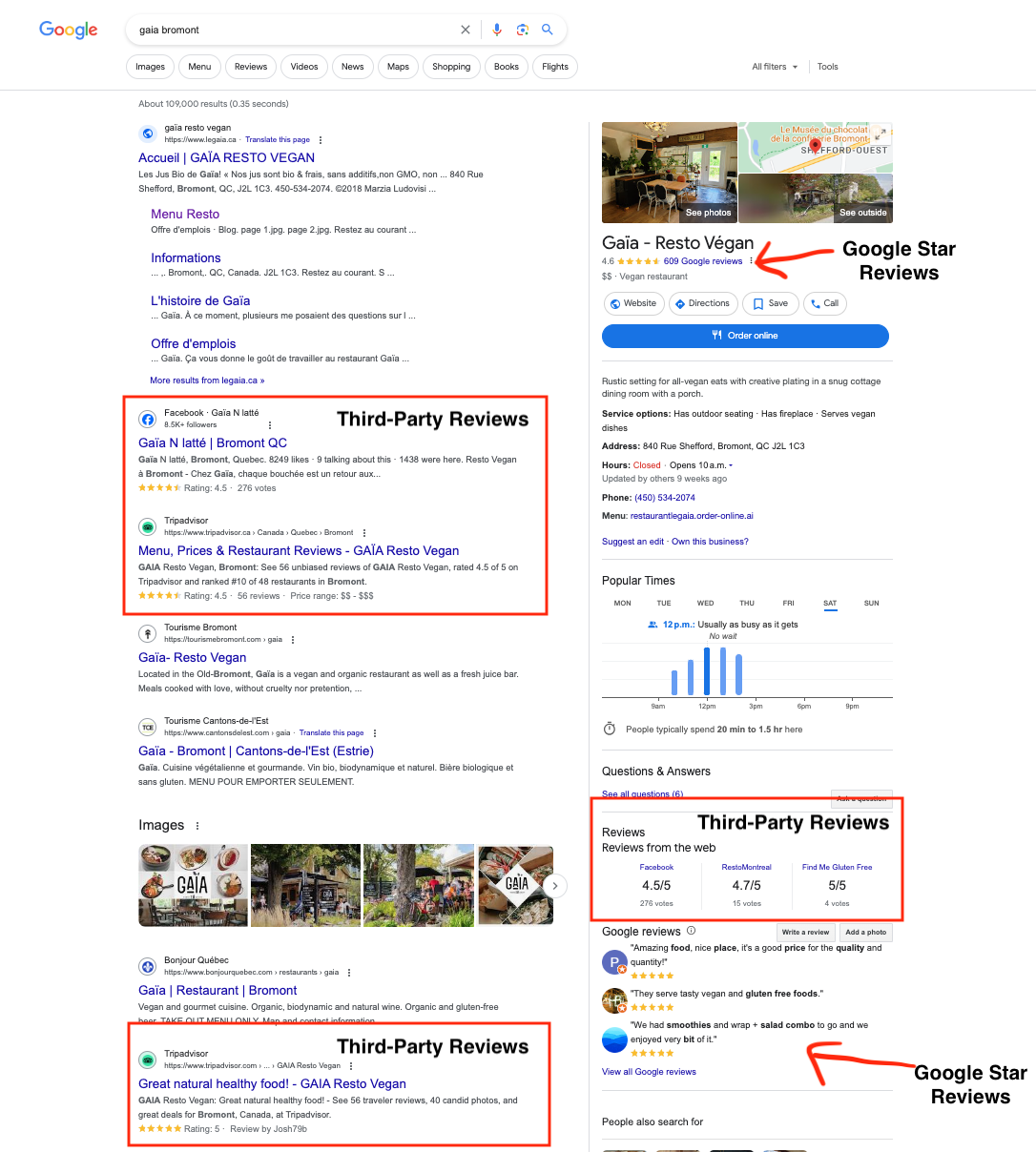

Rich snippets from third-party reviews, such as stars, summary info, or ratings, can also appear on a Google Business Profile or map view from approved sites.

For local businesses, Google star ratings appear in different locations than the third-party reviews on desktop:

Screenshot from SERPs listing of a review site, Google, February 2024

On mobile, ratings are displayed on a company’s Google Business Profile. Users need to click on Reviews or scroll down to see the third-party reviews:

Screenshot from SERPs listing of a review site, Google, February 2024

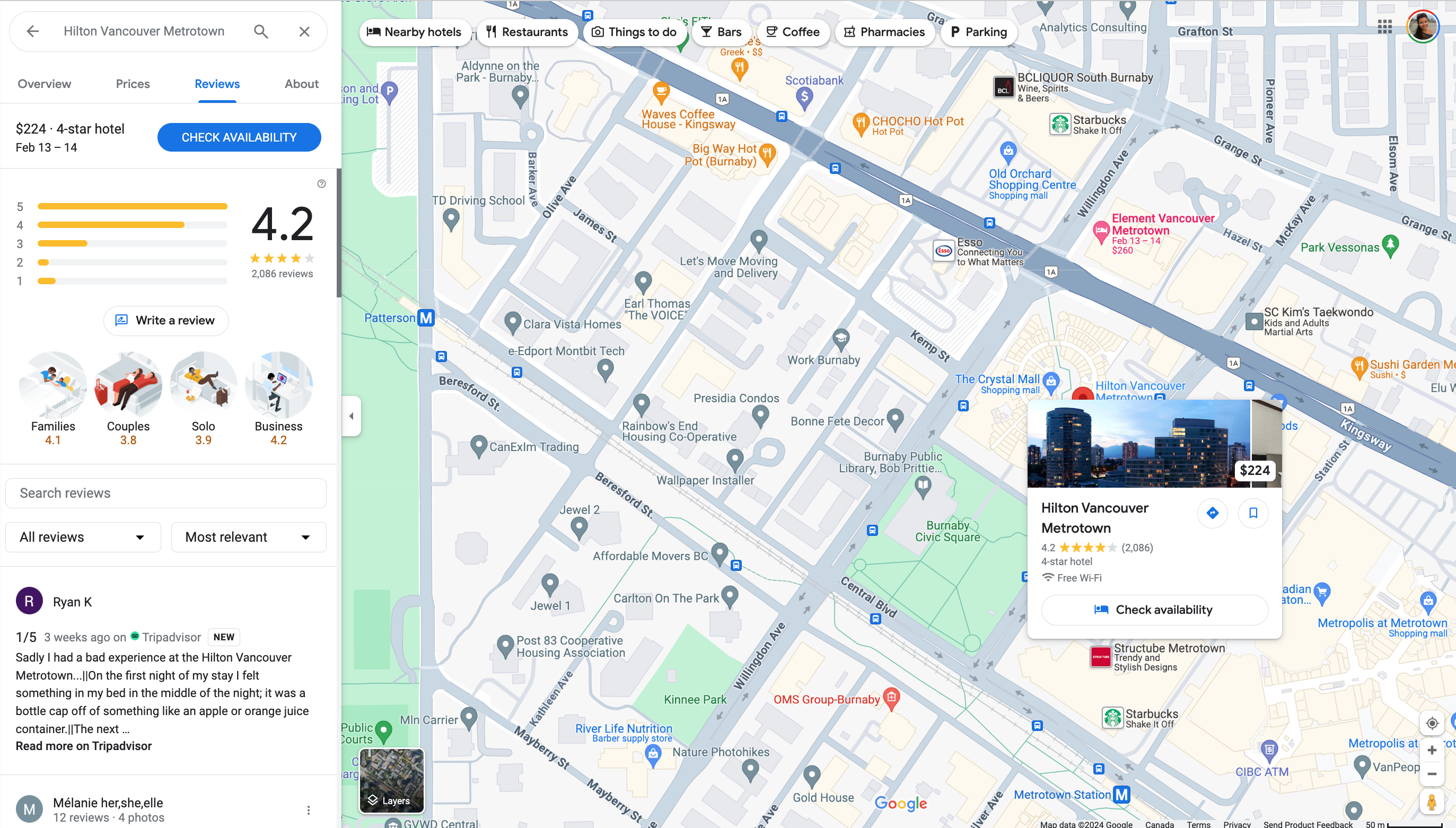

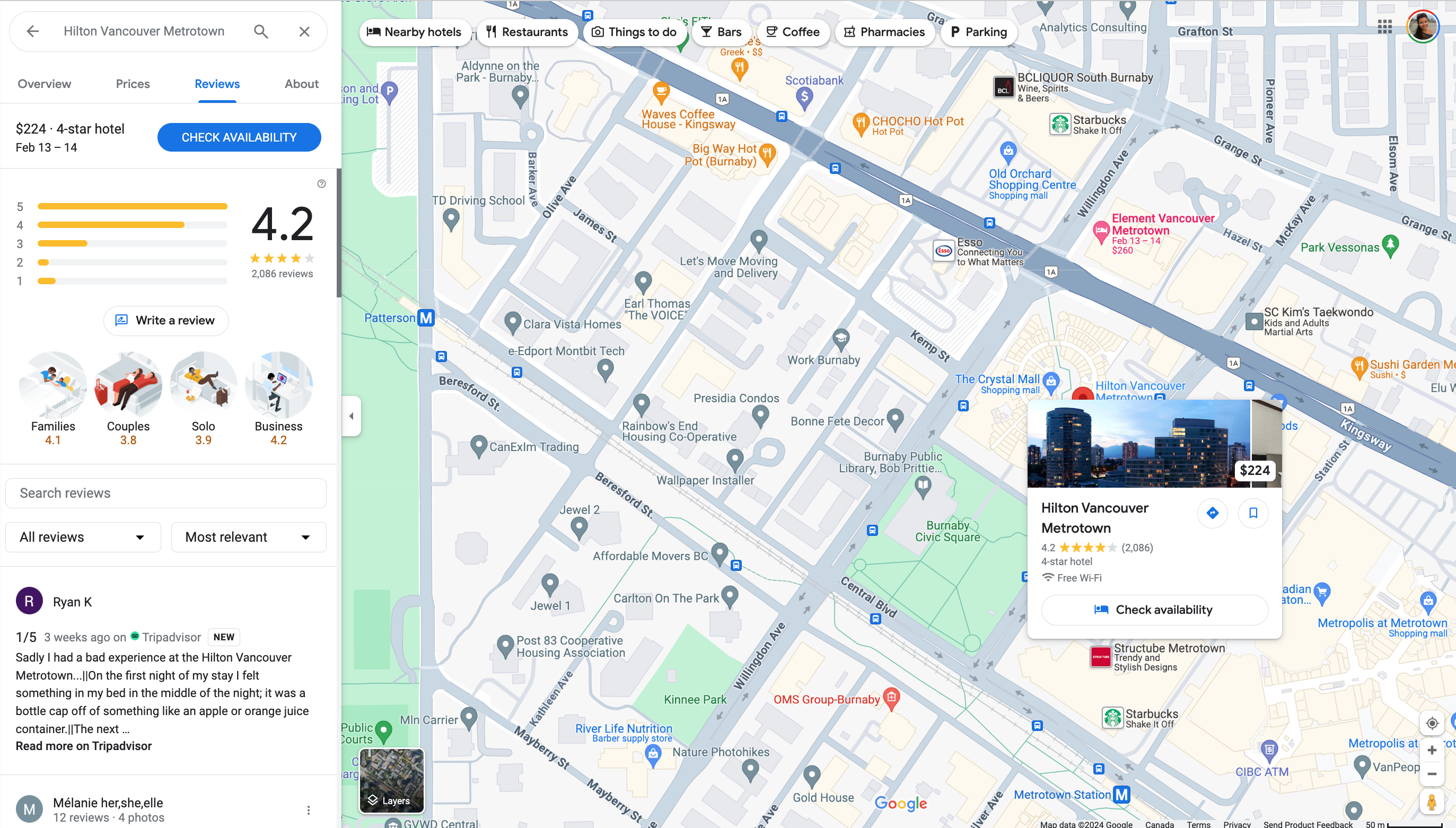

On a map, the results from third parties may be more prominent, like the Tripadvisor review that shows up for a map search of The Hilton in Vancouver (although it does not display a star rating even though Tripadvisor does provide star ratings):

Screenshot from SERPs listing of a review site, Google, February 2024

How To Get Google Stars On Third-Party Review Sites

The best way to get a review on a third-party review site depends on which site is best for the brand or the business.

For example, if you have active customers on Yelp or Tripadvisor, you may choose to engage with customers there.

Screenshot from SERPs listing of a review site, Google, February 2024

Similarly, if a software review site like Trustpilot shows up for your branded search, you could do an email campaign with your customer list asking them to leave you a review there.

Here are a few of the third-party review websites that Google recognizes:

- Trustpilot.

- Reevoo.

- Bizrate – through Shopzilla.

When it comes to third-party reviews, Google reminds businesses that there is no way to opt out of third-party reviews, and they need to take up any issues with third-party site owners.

App Store Results And Google Stars

When businesses have an application as their core product, they typically rely on App Store and Google Play Store downloads.

Right from the SERPs, searchers can see an app’s star ratings, as well as the total votes and other important information, like whether the app is free or not.

Screenshot from SERP play store results, Google, February 2024

How To Get Google Stars On App Store Results

Businesses can list their iOS apps in the App Store and their Android apps on the Google Play Store, prompt customers to leave reviews there, and also respond to them.

Does The Google Star Rating Influence SEO Rankings?

John Mueller confirmed that Google does not factor star ratings or customer reviews into web search rankings. However, Google is clear that star ratings influence local search results and rankings:

“Google review count and review score factor into local search ranking. More reviews and positive ratings can improve your business’ local ranking.”

Even though they are not a ranking factor for non-local organic search, star ratings can serve as an important conversion element, helping you display social proof, build credibility, and increase your click-through rate from search engines (which may indirectly impact your search rankings).

For local businesses, both Google stars and third-party ratings appear in desktop and mobile searches, as seen above.

These ratings not only help local businesses rank above their competitors for key phrases, but they also help convince more customers to click, which is the goal of every company’s search strategy.

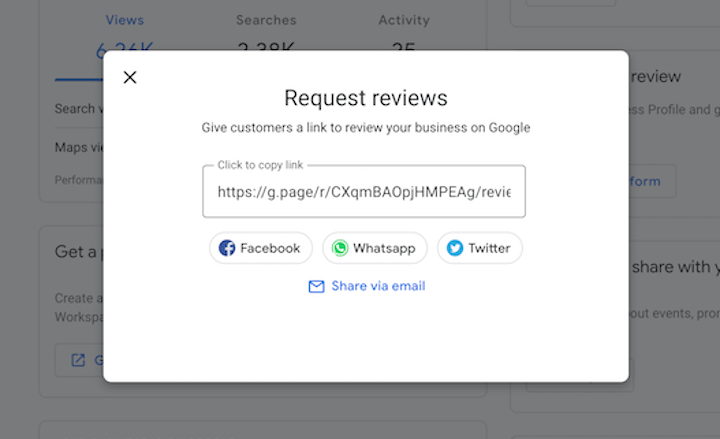

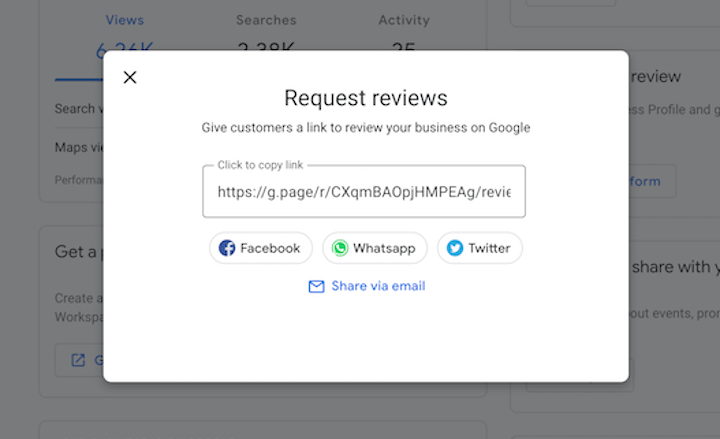

How Do I Improve My Star Rating?

Businesses that want to improve their Google star rating should start by claiming their Google Business Profile and making sure all the information is complete and up to date.

If a company has already taken these steps and still has a poor rating, it will need more positive reviews to pull the average up.
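A quick calculation shows how many new reviews that can take. The sketch below assumes the rating is a plain average of all reviews, as described earlier, and that every new review is five stars, which is the best case. The function name and the numbers are illustrative only.

```python
import math
from fractions import Fraction


def five_star_reviews_needed(count, average, target):
    """Fewest new 5-star reviews that lift a plain average to the target.

    Solves (count * average + 5 * k) / (count + k) >= target for k.
    Fractions are used to avoid floating-point rounding surprises.
    """
    avg = Fraction(str(average))
    goal = Fraction(str(target))
    if not (1 <= avg <= 5) or not (1 <= goal < 5):
        raise ValueError("average must be 1 to 5 and target must be below 5")
    if avg >= goal:
        return 0
    return math.ceil(count * (goal - avg) / (5 - goal))


# Hypothetical business: 40 reviews averaging 3.6 stars
print(five_star_reviews_needed(40, 3.6, 4.0))  # 16
print(five_star_reviews_needed(40, 3.6, 4.5))  # 72
```

Moving from 3.6 to 4.0 takes 16 perfect reviews, while reaching 4.5 takes 72, so the closer the target is to five stars, the steeper the climb.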

Companies can get more Google reviews by making it easy for customers to leave one. The first step for a company is to get the link to leave a review inside their Google Business Profile:

Screenshot from Wordstream, February 2024

From there, companies can send this link out to customers directly (there are four options displayed right from the link as seen above), include it on social media, and even dedicate sections of their website to gathering more reviews and/or displaying reviews from other users.

It isn’t clear whether or not responding to reviews will help improve a local business’s ranking; however, it’s still a good idea for companies to respond to reviews on their Google Business Profile in order to improve their ratings overall.

That’s because responding to reviews can entice other customers to leave a review since they know they will get a response and because the owner is actually seeing the feedback.

For service businesses, Google provides the option for customers to rate aspects of the experience.

This is helpful since giving reviewers this option allows anyone who had a negative experience to rate just one aspect negatively rather than giving a one-star review overall.

Does Having A Star Rating On Google Matter? Yes! So Shoot For The Stars

Stars indicate quality to consumers, so they almost always improve click-through rates wherever they are present.

Consumers tend to trust and buy from brands with higher star ratings in local listings, paid ads, or even app downloads.

Many studies have demonstrated this phenomenon time and again. So, don’t hold back when it comes to reviews.

Do an audit of where your brand shows up in SERPs and get stars next to as many placements as possible.

The most important part of star ratings across Google, however, will always be the service and experiences companies provide that fuel good reviews from happy customers.

Feature Image: BestForBest/Shutterstock

All screenshots taken by author
