
Building An Integrated Search Strategy

Digital transformation is here.

But is it really here for search marketers?

According to Google:

“Now is the time to reset, pivot, and think big to transform your business operations to match new digital expectations.”

Search marketers need to transform.

Looking at all your audiences and connecting them to relevant search engines – not just Google – truly allows digital marketers to transform.

Every search engine is different in its results, modes of search, and keyword intent (i.e., the keywords we use vary from one search engine to the next).

You cannot duplicate what you are doing on Google and expect it to work on YouTube or Amazon (or any other search engine, for that matter).

As you begin your integrated search strategy, looking at one search engine can help you start to think about the others.

Generally speaking, we use:

- Google to find.

- Amazon to buy.

- YouTube to watch.

When it comes to integrated search strategies, let’s begin by identifying our focus engine(s).

Identify Your Core Focus Areas Based On Your Audience And Analyze What The Search Engine Displays

Amazon is all about products.

Get to know your product performance. For brands, this isn’t just looking at Brand Analytics via Amazon Seller Central.

Here are the core and secondary focus areas of the ‘big three’ search engines:

| Search engine | Core focus | Secondary focus |
| --- | --- | --- |
| Amazon | | |
| Google | | |
| YouTube | | |

Review your Amazon performance and visibility (i.e., how well you rank) on Google. This will help you start to build out your keyword strategy.

You can do this by looking at which keywords Google ranks Amazon for.

Are any of these URLs your Amazon products? Do any of these keywords have an Amazon “double bubble”?

This may be a strong indication that the keyword has high commercial intent, so much so that Google ranks Amazon more than once.

Amazon double bubbles are even more important on Google when they occur in higher-ranked positions.
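If you can export Google rankings (for example, from a rank tracker) as rows of keyword, position, and URL, the double-bubble check can be automated. The sketch below is a minimal illustration under stated assumptions: the `RANKINGS` rows are made-up sample data, the function names are hypothetical, only amazon.com hosts are matched (other Amazon marketplaces would need adding), and `top_n=3` is an arbitrary cut-off for "higher-ranked positions."

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical rank-tracker export: (keyword, Google position, ranking URL).
# Made-up sample data for illustration only.
RANKINGS = [
    ("portable air conditioner", 1, "https://www.amazon.com/dp/EXAMPLE1"),
    ("portable air conditioner", 2, "https://www.amazon.com/s?k=portable+air+conditioner"),
    ("portable air conditioner", 3, "https://www.example-retailer.com/portable-ac"),
    ("lg air conditioner", 1, "https://www.lg.com/us/air-conditioners"),
    ("lg air conditioner", 6, "https://www.amazon.com/dp/EXAMPLE2"),
]


def is_amazon(url: str) -> bool:
    """True if the URL's host is amazon.com or a subdomain of it (assumption: .com only)."""
    host = urlparse(url).hostname or ""
    return host == "amazon.com" or host.endswith(".amazon.com")


def amazon_positions(rankings):
    """Map each keyword to the sorted Google positions held by Amazon URLs."""
    positions = defaultdict(list)
    for keyword, position, url in rankings:
        if is_amazon(url):
            positions[keyword].append(position)
    return {kw: sorted(pos) for kw, pos in positions.items()}


def double_bubbles(rankings, top_n=3):
    """Keywords where Amazon ranks at least twice.

    'top' is True when Amazon's two best positions both fall within top_n
    (an arbitrary threshold for "higher-ranked positions").
    """
    result = {}
    for keyword, pos in amazon_positions(rankings).items():
        if len(pos) >= 2:
            result[keyword] = {"positions": pos, "top": pos[1] <= top_n}
    return result


if __name__ == "__main__":
    for keyword, info in double_bubbles(RANKINGS).items():
        print(keyword, info)
```

Keywords where Amazon ranks only once (such as "lg air conditioner" in the sample) still show up in `amazon_positions`, which is useful on its own for spotting which of your Amazon product URLs Google already ranks.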

Equally, if you see YouTube ranking predominantly on Google (e.g., with YouTube timestamps displayed), this may be an indication that these keywords are better suited to a video platform.

Keep an eye on YouTube ranking as a traditional result, too. It’s not always about timestamps.

When you see the usual diverse Google layout, with many different search engine results page (SERP) features, this is an indication that the keyword requires a Google platform focus.

Since we are talking about integrated search, let’s not just look at Google.

Google competes with many search engines, including YouTube, even though both are part of the same company, Alphabet.

According to eMarketer:

“Amazon’s first-party data on consumer shopping and purchase habits offer it an advantage over the more general online behavioral data that Facebook and Google provide.”

This is why Google has created products like Google Shopping and Google Jobs: to stop other large search engines, such as Amazon, Indeed, and Glassdoor, from becoming the most dominant.

Each engine has unique data, search behavior, and results. Every engine your audience uses needs its own strategy.

Overreliance on a single engine, and underinvestment in analyzing each search engine’s data, will limit your ability to create an integrated search strategy.

On-site search is beneficial.

On-Site Search (OSS)

Because an interface (and the functions it offers) affects our search behavior, your top internal site search keywords (i.e., the keywords visitors type into your own website’s search bar) will never fully align with what you see in, for example, Google Search Console.

It is, however, a good idea to keep monitoring your internal site searches so your keyword strategy continues to evolve. There are nuggets in there that can shape the content you write.
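Since the two sources will never fully align, one simple way to surface those nuggets is to compare the lists directly. The following is a minimal sketch, assuming you have exported your top internal site search terms and your Google Search Console queries as plain lists of strings; the function names and sample terms are hypothetical, and the normalization (lowercasing, collapsing whitespace) is a deliberately basic assumption.

```python
def normalize(term: str) -> str:
    """Lowercase and collapse whitespace so 'Portable  AC' matches 'portable ac'."""
    return " ".join(term.lower().split())


def compare_keywords(site_search_terms, gsc_queries):
    """Split keywords into those seen in both sources and those seen in only one."""
    site = {normalize(t) for t in site_search_terms}
    gsc = {normalize(q) for q in gsc_queries}
    return {
        "both": sorted(site & gsc),
        # Terms users type into your own search bar but that don't show up in
        # Search Console: candidate content ideas.
        "site_search_only": sorted(site - gsc),
        "gsc_only": sorted(gsc - site),
    }


if __name__ == "__main__":
    # Hypothetical sample exports, for illustration only.
    SITE_SEARCH = ["Portable AC", "air conditioner filter", "returns policy"]
    GSC = ["portable ac", "lg air conditioner"]
    for bucket, terms in compare_keywords(SITE_SEARCH, GSC).items():
        print(bucket, terms)
```

Exact matching is intentionally strict; in practice you may want to review the `site_search_only` bucket by hand, since near-duplicates (plurals, misspellings) will land there too.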

Internal site search, a form of on-site search (OSS), is powerful because it is based on real user behavior data.

Whenever you can, look at OSS by search engine.

Disclaimer: I work for Similarweb, which offers features that use OSS data.

Let’s take the product “air conditioner” as an example.

On Google, we search for a wide range of keywords, but most of them are branded, for example, “LG air conditioner” or “Mitsubishi air conditioner.” Overall, these keywords are broad and less specific.

On Amazon, we are more specific and tend to use more non-branded keywords – for example, “portable air conditioner” or “sliding window air conditioner.”

On YouTube, we are interested in both inspirational content at the pre-sale stage (e.g., “best portable air conditioner”) and content at the post-sale stage (e.g., “mini split air conditioner installation”).

Image from author, January 2023

Image from author, January 2023Any business that ranks on Google and produces videos needs to start creating separate Google and YouTube strategies. Retailers also need to do this for Amazon.

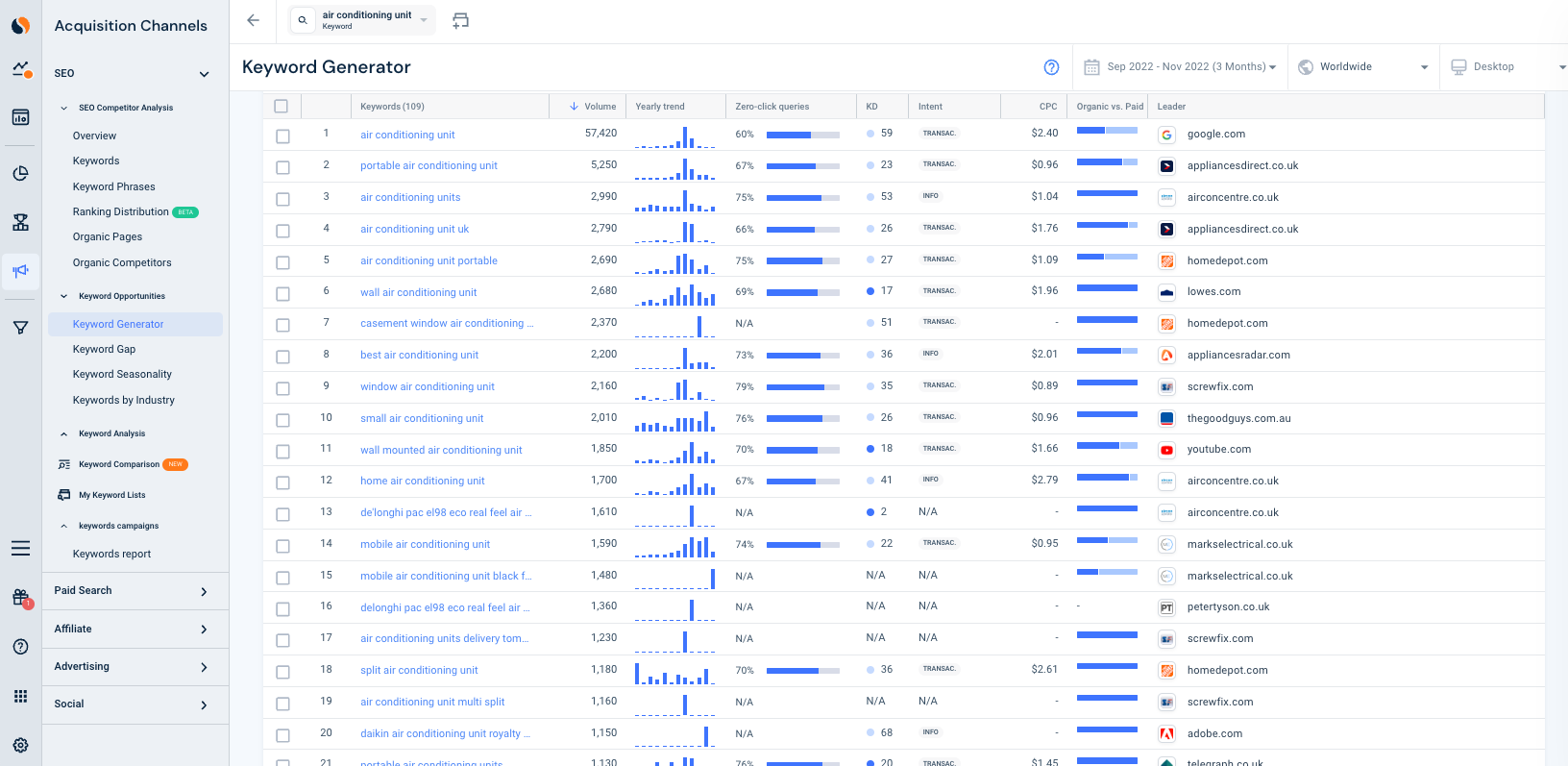

Let’s take a look at how keyword research compares across sites.

Google keyword research:

Screenshot by author, January 2023

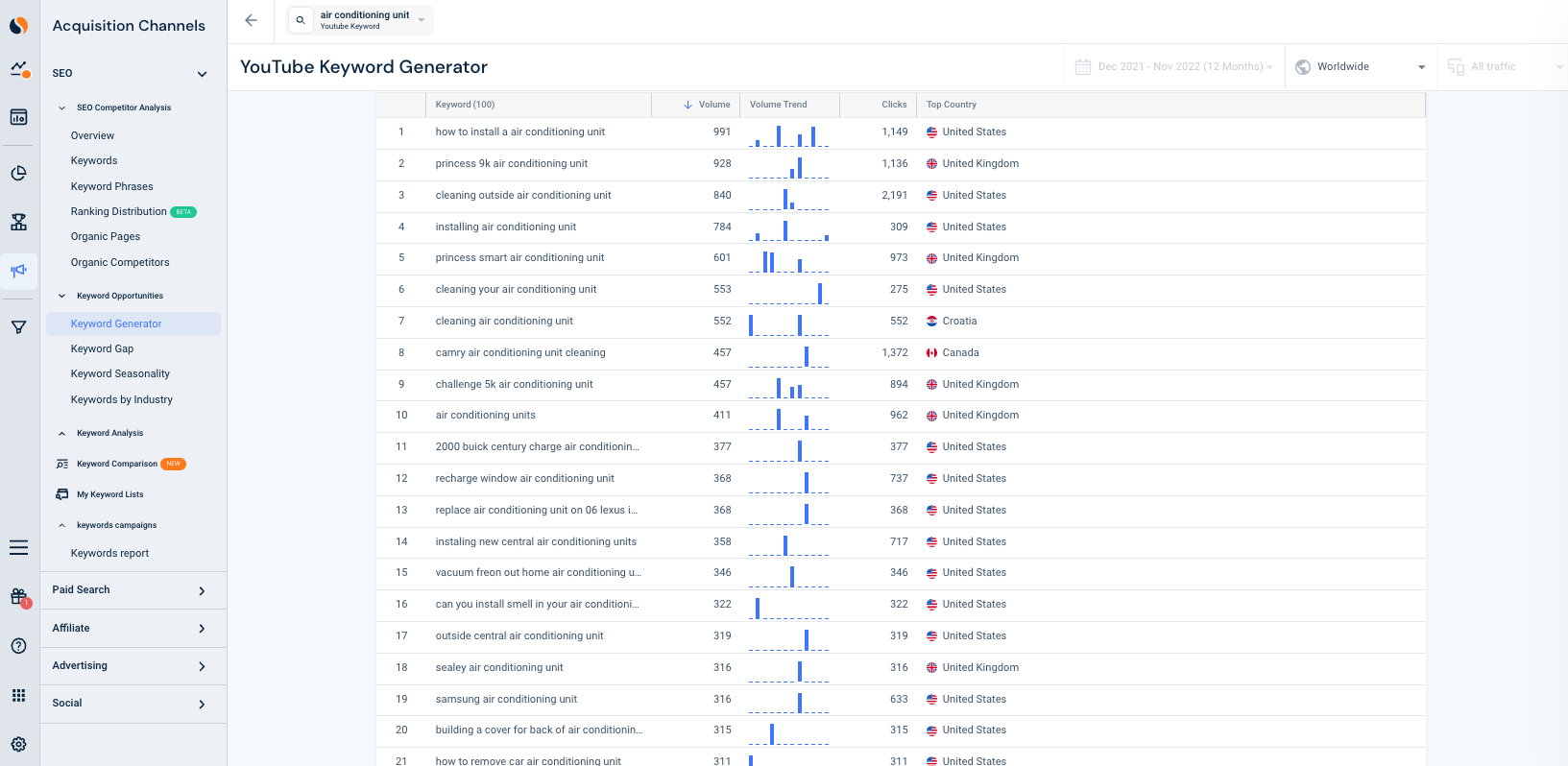

Screenshot by author, January 2023YouTube keyword research:

Screenshot by author, January 2023

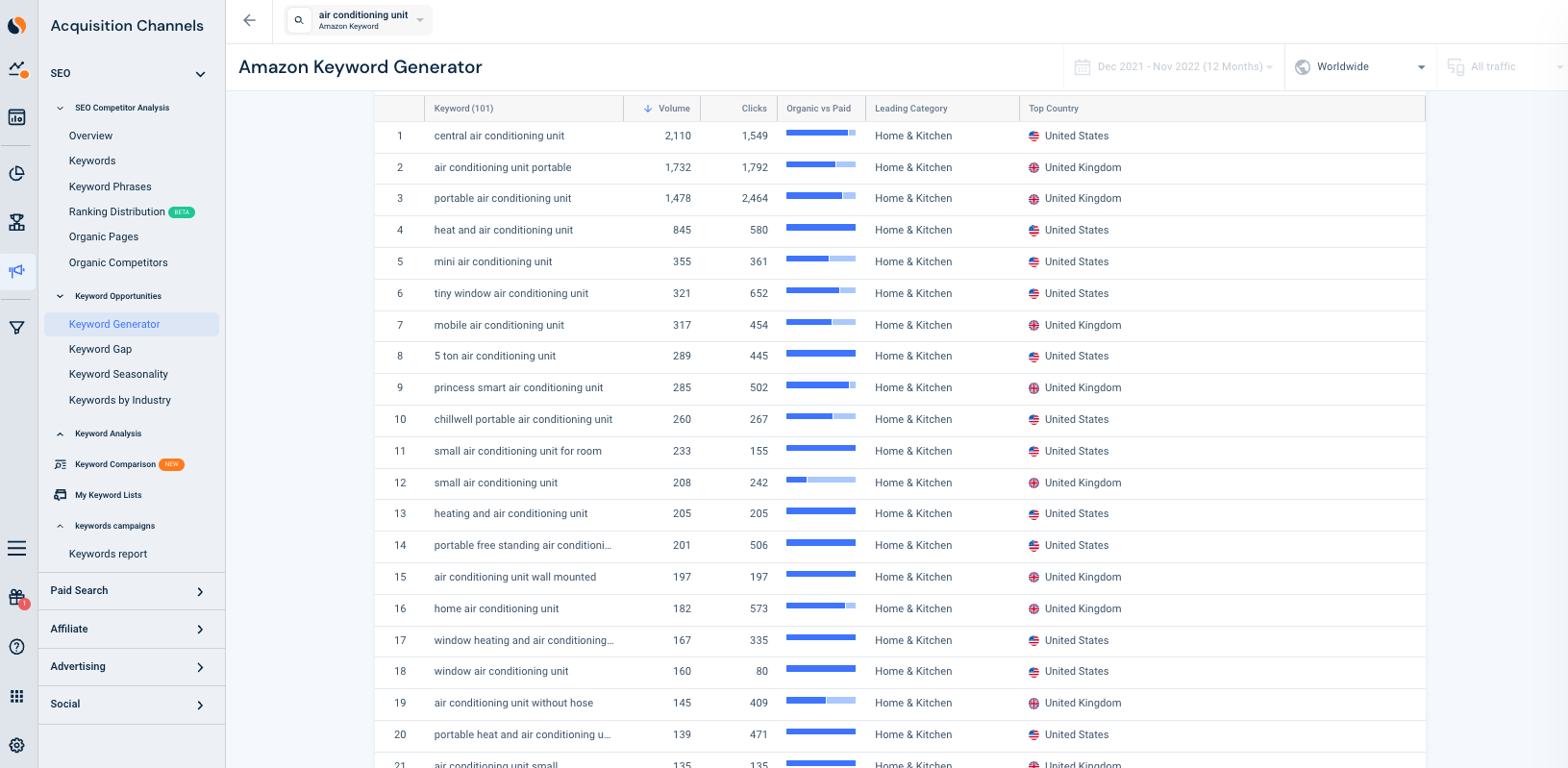

Screenshot by author, January 2023 Screenshot by author, January 2023

Screenshot by author, January 2023Buyer’s Journey

Traditionally, long before digital acceleration was a thing, we grouped keywords as “informational,” “navigational,” and “transactional.”

These categories are outdated for integrated search, as the groupings do not easily align with search engines.

Group your keywords into these stages to better align them with the buyer’s journey and with each stage’s core search engine:

| Buyer’s journey phase | Core search engine | Secondary search engine | Keyword example |
| --- | --- | --- | --- |
| Awareness | Google | Amazon and YouTube | “Paper” |
| Consideration | Amazon, Google, and YouTube | | “Printer paper” |
| Decision | Amazon | Google | “A4 printer paper” |
| Inspiration | YouTube | Amazon and YouTube | “How to make a paper airplane” |

Awareness

- Core search engine: Google (sometimes Amazon and YouTube).

- Keyword example: “Paper”.

Consideration

- Core search engines: Amazon, Google, and YouTube.

- Keyword example: “Printer paper”.

Decision

- Core search engine: Amazon (sometimes Google).

- Keyword example: “A4 printer paper”.

Inspiration

- Core search engine: YouTube (sometimes Amazon and YouTube).

- Keyword example: “How to make an airplane out of printer paper”.
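If you want a rough first pass before categorizing by hand, the stages above can be approximated with a few text rules. This is a minimal sketch, not a method from the article: the rules (a "how to" or "diy" prefix means inspiration, a token containing a digit such as "a4" means decision, any other multi-word keyword means consideration, a single word means awareness) are hypothetical heuristics chosen only because they reproduce the four paper examples. The `CORE_ENGINES` mapping follows the core search engines listed for each stage.

```python
# Core search engine(s) per stage, as listed for each buyer's journey stage.
CORE_ENGINES = {
    "awareness": ["Google"],
    "consideration": ["Amazon", "Google", "YouTube"],
    "decision": ["Amazon"],
    "inspiration": ["YouTube"],
}

# Hypothetical heuristics; tune them against your own keyword data.
INSPIRATION_PREFIXES = ("how to ", "diy ")


def classify_stage(keyword: str) -> str:
    """Assign a keyword to a buyer's journey stage using simple text rules."""
    kw = " ".join(keyword.lower().split())
    if kw.startswith(INSPIRATION_PREFIXES):
        return "inspiration"
    # A specification token such as "a4" suggests a near-purchase query.
    if any(ch.isdigit() for ch in kw):
        return "decision"
    if len(kw.split()) > 1:
        return "consideration"
    return "awareness"


if __name__ == "__main__":
    for kw in ["Paper", "Printer paper", "A4 printer paper", "How to make a paper airplane"]:
        stage = classify_stage(kw)
        print(f"{kw!r}: {stage} -> {', '.join(CORE_ENGINES[stage])}")
```

Rules this crude will misfile plenty of keywords (a multi-word brand query, for instance, lands in consideration), so treat the output as a starting point for the manual categorization, not a replacement for it.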

Benefits Of The Buyer’s Journey

The buyer’s journey offers easier alignment with search engines and better performance insights.

Let’s take Google SERP features, for example. Are there more Instant Answers for awareness keywords compared to decision keywords?

SERP features help develop your Google strategy and your digital assets on Google. However, keywords with video SERP features should not direct your YouTube strategy.
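Once keywords carry a stage label, the Instant Answers question becomes a simple share-per-group calculation. The sketch below assumes hypothetical records in which each keyword has a stage and a set of SERP feature labels you have recorded for it; the feature names and the sample data are made up for illustration and are not real SERP observations.

```python
from collections import defaultdict

# Hypothetical records: keyword, buyer's journey stage, and the Google SERP
# features observed for it. Made-up sample data for illustration only.
KEYWORDS = [
    {"keyword": "paper", "stage": "awareness", "features": {"instant_answer", "images"}},
    {"keyword": "what is paper made of", "stage": "awareness", "features": {"instant_answer"}},
    {"keyword": "a4 printer paper", "stage": "decision", "features": {"shopping"}},
    {"keyword": "a4 paper 500 sheets", "stage": "decision", "features": {"shopping", "instant_answer"}},
]


def feature_share_by_stage(records, feature):
    """Return {stage: fraction of that stage's keywords whose SERP shows `feature`}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for rec in records:
        totals[rec["stage"]] += 1
        if feature in rec["features"]:
            hits[rec["stage"]] += 1
    # Only stages that have at least one keyword appear, so no division by zero.
    return {stage: hits[stage] / totals[stage] for stage in totals}


if __name__ == "__main__":
    print(feature_share_by_stage(KEYWORDS, "instant_answer"))
```

Comparing shares rather than raw counts keeps stages with many keywords from dominating the comparison.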

The buyer’s journey helps you to start building an integrated search strategy across the big three search engines of Amazon, Google, and YouTube.

If limited SEO resources keep you from focusing on this, try to set aside 30 minutes a week to gradually categorize keywords for your most important business unit.

Over the following weeks, you will start to gain valuable insights into an important line of business.

These insights will naturally attract attention from other internal stakeholders, which will help you make a business case for more SEO activity.

This is SEO evangelism at its best: engaging internal stakeholders.

The buyer’s journey is much easier to understand for non-digital audiences compared to a specific search engine’s ranking factors.

E-A-T Content Is Required For Every Search Engine Strategy, But Some Less Than Others

If you need a rundown (or refresher) on E-A-T, the SEJ guide explains it beautifully. Read it here: What Exactly Is E-A-T & Why Does It Matter to Google?

Now, let’s look at E-A-T (expertise, authoritativeness, and trustworthiness) from an integrated search perspective.

Google places more emphasis on E-A-T compared to Amazon and YouTube.

Amazon uses E-A-T the least, as it’s still playing catch-up on improving its content-specific algorithms.

But since content exists on all three search engines, best practice is to think about E-A-T for each of them.

Do not use generic product descriptions on Amazon. If you are reselling, do not copy content from external sources, including the supplier.

Be responsive on Amazon to user-generated content, in particular to the reviews and questions/answers you receive.

Unlike a piece of text or video consumed on Google or YouTube, a product bought on Amazon can be sent back. So keep an eye on order defect rates, stock levels, price, and conversion trends.

On Amazon and Google, keep an eye on reviews; authentic, specialist reviews are important.

As mentioned earlier, Google is the most algorithmically advanced when it comes to E-A-T. But really, E-A-T principles are like those of a good university essay.

Key questions to ask:

- Is the content unique? If so, to what extent, compared with what else has been seen (in a search engine’s case, what it has already indexed)?

- Who else has published on the topic? What are their strengths and weaknesses? How does this domain compare?

- What research has been conducted? Are there any references, and to which resources or author profiles?

- What questions were addressed? Which questions or angles are missing?

- What methods and formats were used?

- What were the key points?

YouTube, like Amazon, factors more real-life metrics into its algorithm. In the case of Amazon, it’s defect rates and stock levels. On YouTube, it’s views, likes, authentic subscriber growth, and comments.

Shareability is important to all three search engines but more important to Google and YouTube.

Google, for the most part, uses backlinks to monitor shareability.

YouTube may also use backlinks, but video shares are more important. Make sure you enable video sharing under options/settings. Engagement is key for YouTube.

How Can I Build An Integrated Search Strategy?

Identify who your audience is and what their core focus is.

If you are targeting younger audiences who consume video, your keyword strategy needs to differ from one that simply targets video consumers.

If your company is currently only doing SEO, review Amazon and YouTube performance on Google. This is the easiest way to get teams thinking about, and understanding, how each engine differs.

Understanding Amazon performance on Google at a keyword level can add another dimension to keyword intent.

Categorize your keywords into the four stages of the buyer’s journey: awareness, consideration, decision, and inspiration. This will help you align your keywords by search engine, too.

Keyword grouping of the buyer’s journey will also add another interesting layer to your understanding of SERP features.

Apply E-A-T to your content on every search engine you operate in. Some engines place less emphasis on it, but remember that every engine is getting more sophisticated, some more slowly than others.

Stay ahead of the game.


Featured Image: Costello77/Shutterstock
