How to Persuade Your Boss to Send You to Ahrefs Evolve

There’s one thing standing between you and several days of SEO, socializing, and Singaporean sunshine: your boss (and their Q4 budget 😅).

But don’t worry—we’ve got your back. Here are 5 arguments (and an example message) you can use to persuade your boss to send you to Ahrefs Evolve.

About Ahrefs Evolve

SEO is changing at a breakneck pace. Between AI Overviews, Google’s rolling update schedule, its huge API leak, and all the documents released during its antitrust trial, it’s hard to keep up. What works in SEO today?

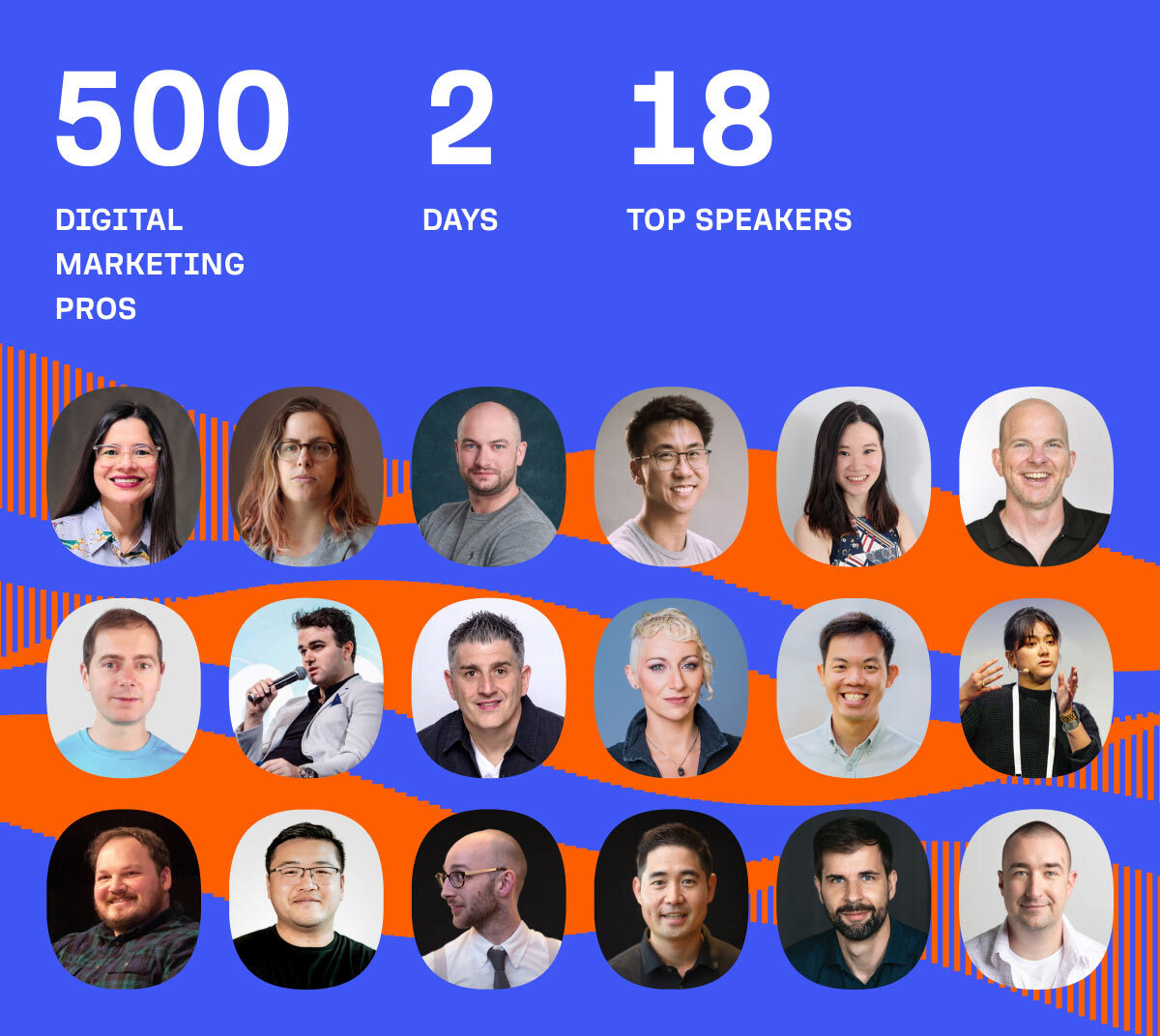

You could watch a YouTube video or two, maybe even attend an hour-long webinar. Or, much more effective: you could spend two full days learning from a panel of 18 international SEO experts, discussing your takeaways live with other attendees.

Our world-class speakers are tackling the hardest problems and best opportunities in SEO today. The talk agenda covers topics like:

- Responding to AI Overviews: Amanda King will show you how to adapt to AI Overviews, Google Gemini, and other AI search features.

- Surviving (and thriving after) Google’s algo updates: Lily Ray will walk through Google’s recent updates and share data-driven recommendations for what’s working in search today.

- Planning for the future of SEO: Bernard Huang will talk through the failures of AI content and the path to better results.

(And attendees will get video recordings of each session, so you can share the knowledge with your teammates too.)

View the full talk agenda here.

There’s no substitute for meeting with influencers, peers, and partners in real life.

Conferences create serendipity: chance encounters and conversations that can have a huge positive impact on you and your business. By way of example, these are some of the real benefits that have come my way from attending conferences:

- Conversations that led to new customers for our business,

- Invitations to speak at events,

- New business partnerships and co-marketing opportunities, and

- Meeting people that we went on to hire.

There’s a “halo” effect that lingers long after the event is over: the people you meet will remember you for longer, think more highly of you, and be more likely to help you out, should you ask.

(And let’s not forget: there’s a lot of information, particularly in SEO, that only gets shared in person.)

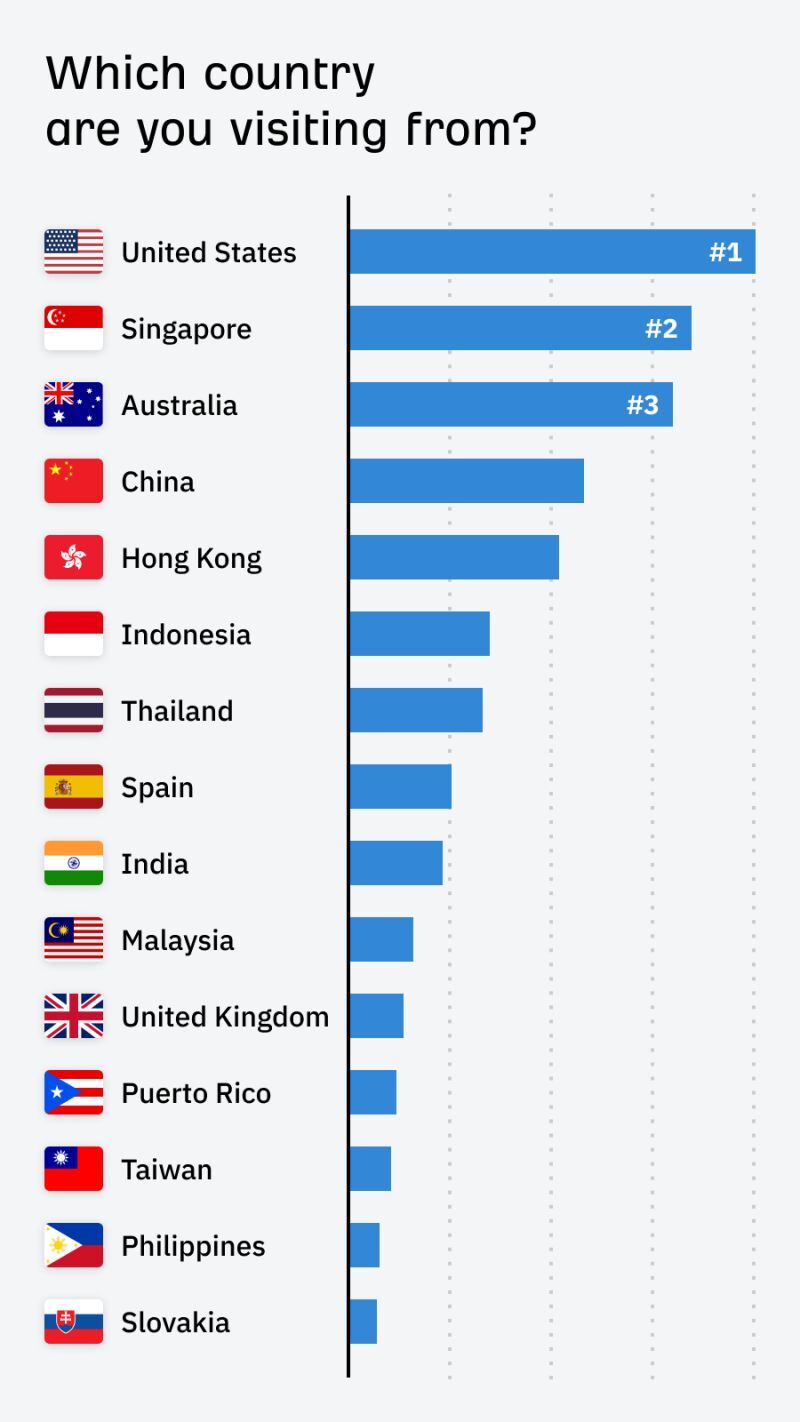

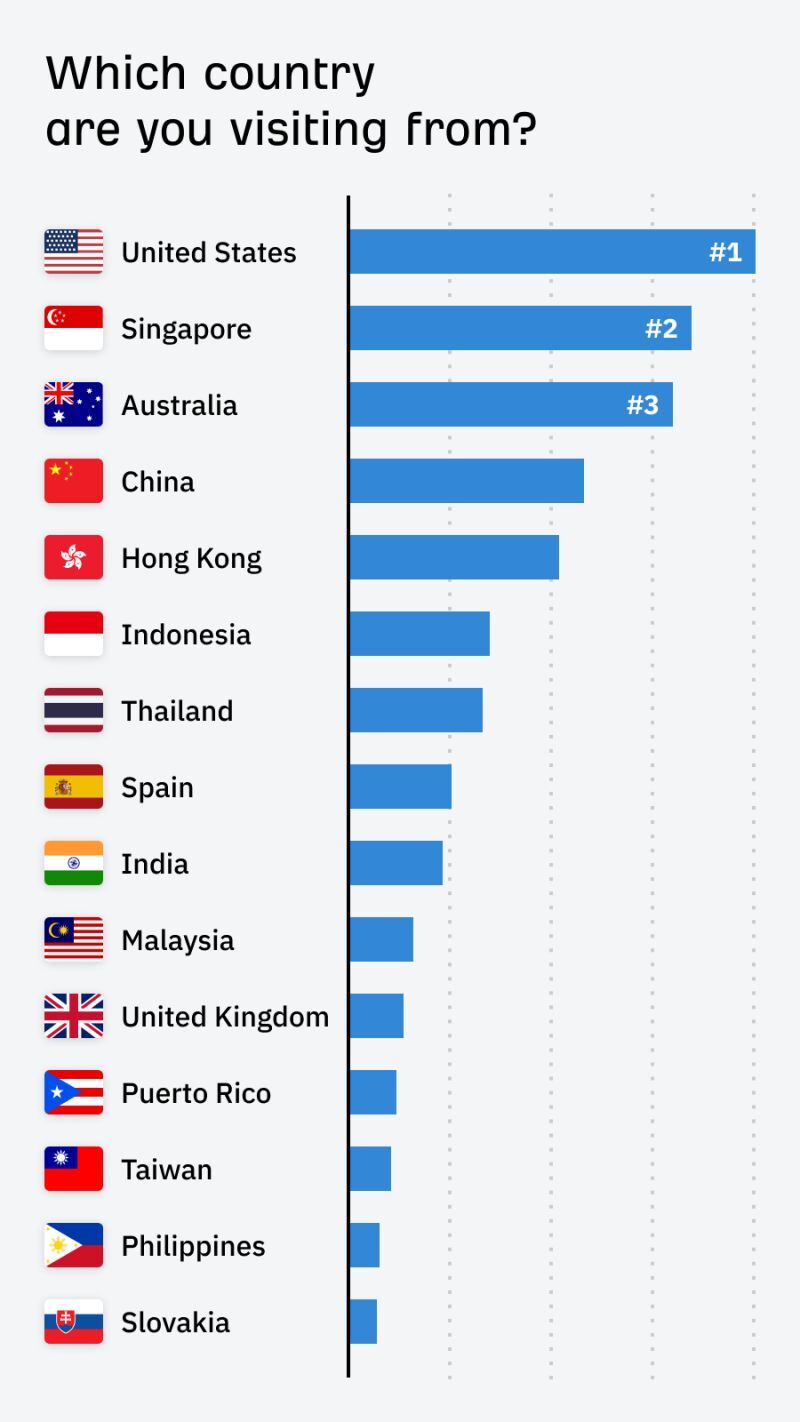

The “international” part of Evolve matters too. Evolve draws a different crowd from your local run-of-the-mill conference. It’s a chance to meet people from markets you wouldn’t normally reach, from Australia to Indonesia and beyond.

If you’re an Ahrefs customer (thank you!), you’ll learn tons of tips, tricks and workflow improvements from attending Evolve. You’ll have opportunities to:

- Attend talks from the Ahrefs team, showcasing advanced features and strategies that you can use in your own business.

- Pick our brains at the Ahrefs booth, where we’ll offer informal 1:1 coaching sessions and previews of upcoming releases (like our new content optimization tool 🤫).

- Join dedicated Ahrefs training workshops, hosted by the Ahrefs team and Ahrefs power users (tickets for these workshops will be sold separately).

As a manager myself, I need two questions answered before I approve an expense:

- Is this a reasonable cost?

- Will we see a return on this investment?

To answer those questions: early bird tickets for Evolve start at $570. For context, “super early bird” tickets for MozCon (another popular SEO conference) this year cost $999, roughly 75% more.

There’s a lot included in the ticket price too:

- World-class international speakers,

- 5-star hotel venue,

- 5-star hotel food (two tea breaks with snacks, plus lunch),

- Networking afterparty, and

- Full talk recordings to later share with your team.

SEO is a crucial growth channel for most businesses. If you can improve your company’s SEO performance after attending Evolve (and we think you will), you’ll very easily see a positive return on the investment.

Traveling to tropical Singapore (and eating tons of satay) is great for you, but it’s also great for your team. Attending Evolve is a chance to break with routine, reignite your passion for marketing, and come back to your job reinvigorated.

This would be true for any international conference, but it goes double for Singapore. It’s a truly unique place: an ultra-safe, high-tech city that brings together dozens of different cultures.

You’ll discover different beliefs, working practices, and ways of doing business. And if you’re anything like me, you’ll come back a richer, wiser person for the experience.

If you’re nervous about pitching your boss on attending Evolve, remember: the worst that can happen is a polite “not this time”, and you’ll be no worse off than you are now.

So here goes: take this message template, tweak it to your liking, and send it to your boss over email or Slack… and I’ll see you in Singapore 😉

Email template

Hi [your boss’ name],

Our SEO tool provider, Ahrefs, is holding an SEO and digital marketing conference in Singapore in October. I’d like to attend, and I think it’s in the company’s interest:

- The talks will help us respond to all the changes happening in SEO today. I’m particularly interested in the talks about AI and recent Google updates.

- I can network with my peers. I can discover what’s working at other companies, and explore opportunities for partnerships and co-marketing.

- I can learn how we can use Ahrefs better across the organization.

- I’ll come back reinvigorated with new ideas and motivation, and I can share my top takeaways and talk recordings with my team after the event.

Early bird tickets are $570. Given how important SEO is to the growth of our business, I think we’ll easily see a return from the spend.

Can we set up time to chat in more detail? Thanks!