W3C Validation: What It Is & Why It Matters For SEO

You may have run across the W3C in your web development and SEO travels.

The W3C is the World Wide Web Consortium, and it was founded by the creator of the World Wide Web, Tim Berners-Lee.

This web standards body creates coding specifications for web standards worldwide.

It also offers a validator service to ensure that your HTML (among other code) is valid and error-free.

Making sure that your page validates is one of the most important things one can do to achieve cross-browser and cross-platform compatibility and provide an accessible online experience to all.

Invalid code can result in glitches, rendering errors, and long processing or loading times.

Simply put, if your code doesn’t do what it was intended to do across all major web browsers, this can negatively impact user experience and SEO.

W3C Validation: How It Works & Supports SEO

Web standards are important because they give web developers a standard set of rules for writing code.

If all code used by your company is created using the same protocols, it will be much easier for you to maintain and update this code in the future.

This is especially important when working with other people’s code.

If your pages adhere to web standards, they will validate correctly against W3C validation tools.

When you use web standards as the basis for your code, you make it far more likely that your pages are user-friendly and accessible.

When it comes to SEO, validated code is generally preferable to poorly written code, though not because of any direct ranking effect.

According to Google’s John Mueller, Google doesn’t care how your code is written. That means a W3C validation error won’t cause your rankings to drop.

You won’t rank better with validated code, either.

But there are indirect SEO benefits to well-formatted markup:

- Eliminates Code Bloat: Validating code means that you tend to avoid code bloat. Validated code is generally leaner and more compact than unvalidated code.

- Faster Rendering Times: Leaner code could potentially translate to better render times because the browser needs less processing, and we know that page speed is a ranking factor.

- Indirect Contributions to Core Web Vitals Scores: When you pay attention to coding standards, such as adding the width and height attributes to your images, the browser can reserve space for each image before it loads instead of rearranging the page afterward. Less layout shifting and faster rendering can contribute to your Core Web Vitals scores, improving these important metrics overall.
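As a rough illustration of the width and height point, here is a minimal Python sketch that lists images lacking either attribute. It uses only the standard library’s html.parser; the class name, function name, and sample markup are made up for this example, and it is a quick audit rather than a replacement for the validator.

```python
from html.parser import HTMLParser


class ImageSizeAudit(HTMLParser):
    """Collects <img> tags that lack an explicit width or height attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []  # (src, line_number) pairs

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        names = {name for name, _ in attrs}
        if not {"width", "height"} <= names:
            line, _ = self.getpos()
            self.missing.append((dict(attrs).get("src"), line))


def images_missing_dimensions(html):
    audit = ImageSizeAudit()
    audit.feed(html)
    audit.close()
    return audit.missing


# Hypothetical page used only to exercise the audit.
sample = """<!DOCTYPE html>
<html lang="en">
<head><title>Demo</title></head>
<body>
<img src="hero.jpg" alt="Hero" width="1200" height="630">
<img src="logo.png" alt="Logo">
</body>
</html>"""

for src, line in images_missing_dimensions(sample):
    print(f"{src} (line {line}) has no width/height")
```

Only the second image is reported, because the first one already declares both dimensions.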

Roger Montti compiled these six reasons Google still recommends code validation, because it:

- Could affect crawl rate.

- Affects browser compatibility.

- Encourages a good user experience.

- Ensures that pages function everywhere.

- Is useful for Google Shopping Ads.

- Prevents invalid HTML in the head section from breaking hreflang.
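The hreflang point is easiest to see with an example. Browsers and crawlers close the head section as soon as they meet an element that does not belong there, so any hreflang link that follows ends up outside the head. The Python sketch below is a simplified check under that assumption: it reads tags in source order with the standard library’s html.parser, and the names and sample markup are hypothetical. It ignores finer rules, such as what is allowed inside noscript, and it only works when the head tag is written out explicitly.

```python
from html.parser import HTMLParser

# Elements the HTML standard allows as metadata content inside <head>.
HEAD_ALLOWED = {"title", "base", "link", "meta", "style", "script",
                "noscript", "template"}


class HeadAudit(HTMLParser):
    def __init__(self):
        super().__init__()
        self.in_head = False
        self.offenders = []       # (tag, line) pairs that do not belong in <head>
        self.hreflang_after = []  # hreflang values declared after an offender

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
            return
        if not self.in_head:
            return
        if tag not in HEAD_ALLOWED:
            self.offenders.append((tag, self.getpos()[0]))
        elif tag == "link" and self.offenders:
            hreflang = dict(attrs).get("hreflang")
            if hreflang:
                self.hreflang_after.append(hreflang)

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def audit_head(html):
    audit = HeadAudit()
    audit.feed(html)
    audit.close()
    return audit


# Hypothetical head section: the tracking pixel is the offender.
sample_head = """<html><head>
<title>Demo</title>
<link rel="alternate" hreflang="en" href="https://example.com/">
<img src="pixel.gif" alt="">
<link rel="alternate" hreflang="de" href="https://example.com/de/">
</head><body></body></html>"""

report = audit_head(sample_head)
print("Not allowed in head:", report.offenders)
print("hreflang at risk:", report.hreflang_after)
```

In this sample the German annotation is flagged as at risk because it comes after the stray image.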

Multiple Device Accessibility

Valid code also supports better cross-browser and cross-platform compatibility: because it conforms to current web standards, browsers can process it predictably.

This leads to an improved user experience for people who access your site from different devices.

A site that validates is far more likely to render correctly regardless of the device or platform being used to view it.

That is not to say that code which fails validation never works across browsers and platforms, but unvalidated code is more prone to rendering differences between them.

Common Reasons Code Doesn’t Validate

Of course, validating your web pages won’t solve all problems with rendering your site as desired across all platforms and all browsing options. But it does go a long way toward solving those problems.

If a page does fail validation, you have a baseline from which to begin troubleshooting.

You can go into your code and see what is making it fail.

These problems are easier to find and troubleshoot on a site that otherwise validates, because you know where to start looking.

Having said that, there are several reasons pages may not validate.

Browser Specific Issues

It may be that something in your code only works in one browser or on one platform, but not another.

This kind of problem needs to be addressed by the developer of the offending script.

That means editing the code itself so that it validates and works on all platforms and browsers instead of just some of them.

You Are Using Outdated Code

The W3C’s validation service has only been around for the past couple of decades.

If your page was written for a browser that predates current standards (IE 6 or earlier, for example), it will not pass today’s validation checks because it was written with older technologies and formats in mind.

While this is a relatively rare issue, it still happens.

This problem can be fixed by reworking the code to make it W3C compliant, but if you want to maintain compatibility with older browsers, you may need to keep using the code that works and forgo passing validation completely.

Both problems could potentially be solved with a little trial and error.

With some work and effort, both types of sites can validate across multiple devices and platforms without issue – hopefully!

Polyglot Documents

In this article, “polyglot documents” means any document that was carried over from an older version of HTML and never reworked to be compatible with the new version. (The W3C itself uses the term “polyglot markup” more narrowly, for documents deliberately written to work as both HTML and XHTML.)

In other words, the document combines code written for one document type with a document declared as another (say, HTML 4.01 Transitional markup inside a document with an XHTML document type).

Make no mistake: Even though both may be “HTML” per se, they follow different syntax rules and need to be treated as such.

You can’t copy and paste one over and expect things to be all fine and dandy.

What does this mean?

For example, you may have validated a page and found that nearly every single line of the document has something wrong with it according to the W3C validator.

This could be due to somebody transferring over code from another version of the site, and not updating it to reflect new coding standards.

Either way, the only way to repair this is to rework the code line by line (an extraordinarily tedious process).
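Before reworking anything line by line, it can help to know which document type each page or template actually declares. The following Python sketch reads the doctype with the standard library’s html.parser and sorts it into a few rough buckets. The function name and bucket labels are invented for this example, and the string matching is deliberately crude.

```python
from html.parser import HTMLParser


class DoctypeSniffer(HTMLParser):
    def __init__(self):
        super().__init__()
        self.doctype = None

    def handle_decl(self, decl):
        # decl is the text between "<!" and ">", e.g. "DOCTYPE html".
        if self.doctype is None and decl.lower().startswith("doctype"):
            self.doctype = " ".join(decl.split())


def classify_doctype(html):
    """Return a rough label for the declared document type."""
    sniffer = DoctypeSniffer()
    sniffer.feed(html)
    sniffer.close()
    decl = (sniffer.doctype or "").lower()
    if not decl:
        return "missing"
    if decl == "doctype html":
        return "html5"
    if "xhtml" in decl:
        return "xhtml"
    if "html 4" in decl:
        return "html4"
    return "other"


html4_page = ('<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN" '
              '"http://www.w3.org/TR/html4/loose.dtd"><html></html>')
xhtml_page = ('<!DOCTYPE html PUBLIC "-//W3C//DTD XHTML 1.0 Strict//EN" '
              '"http://www.w3.org/TR/xhtml1/DTD/xhtml1-strict.dtd"><html></html>')

print(classify_doctype(html4_page))
print(classify_doctype(xhtml_page))
print(classify_doctype("<!DOCTYPE html><html></html>"))
```

If templates that feed the same page come back with different labels, that mismatch is a likely source of a wall of validator errors.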

How W3C Validation Works

The W3C validator is this author’s validator of choice for making sure that your code is valid and behaves consistently across a wide variety of platforms and systems.

The W3C validator is free to use, and you can access it at validator.w3.org.

With the W3C validator, it’s possible to validate your pages by page URL, file upload, and Direct Input.

- Validate Your Pages by URL: This is relatively simple. Just copy and paste the URL into the Address field, and you can click on the check button in order to validate your code.

- Validate Your Pages by File Upload: When you validate by file upload, you upload the HTML files of your choice one file at a time. Caution: if you’re using Internet Explorer or certain versions of Windows XP, this option may not work for you.

- Validate Your Pages by Direct Input: With this option, all you have to do is copy and paste the code you want to validate into the editor, and the W3C validator will do the rest.
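The URL and direct input options can also be driven from a script. The sketch below assumes the W3C’s Nu HTML Checker web service at validator.w3.org/nu/, which accepts a doc parameter for checking by URL and returns JSON when out=json is set. The function names and the User-Agent string are hypothetical, and error handling is left out to keep the example short.

```python
import json
import urllib.parse
import urllib.request

# Public endpoint of the W3C Nu HTML Checker; out=json asks for JSON output.
CHECKER_URL = "https://validator.w3.org/nu/?out=json"


def url_check_address(page_url):
    """Address that asks the checker to fetch and check a live page (by URL)."""
    query = urllib.parse.urlencode({"doc": page_url, "out": "json"})
    return "https://validator.w3.org/nu/?" + query


def build_check_request(html, checker_url=CHECKER_URL):
    """Build (but do not send) a POST request carrying markup (direct input)."""
    return urllib.request.Request(
        checker_url,
        data=html.encode("utf-8"),
        headers={
            "Content-Type": "text/html; charset=utf-8",
            "User-Agent": "markup-check-sketch/0.1",  # hypothetical name
        },
        method="POST",
    )


def check_markup(html, timeout=30):
    """Send the markup to the checker and return its list of messages."""
    request = build_check_request(html)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response).get("messages", [])
```

Calling check_markup(page_source) sends the request and returns the checker’s messages as a list of dictionaries. Keeping the request builder separate from the network call makes it easy to inspect what will be sent before anything leaves your machine.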

While some professionals claim that some W3C errors have no rhyme or reason, in the vast majority of cases there is one.

If errors seem to appear without rhyme or reason throughout the entire document, then you may want to refer to the section on polyglot documents above as a potential cause.

HTML Syntax

Let’s start at the top with HTML syntax. Because HTML is the backbone of the World Wide Web, it is the code you will run into most often as an SEO professional.

The W3C published HTML5 as a formal Recommendation in 2014. Today the specification is maintained as the HTML Living Standard by the WHATWG, under a 2019 agreement with the W3C.

This document explains how HTML should be written so that browsers process it consistently.

If you go to the W3C’s site, you can use its validator to make sure that your code is valid according to this spec.

They even give examples of some of the rules that they look for when it comes to standards compliance.

This makes it easier than ever to check your work before you publish it!

Validators For Other Languages

Now let’s move on to some of the other languages that you may be using online.

For example, you may have heard of CSS3.

The W3C publishes the standards for CSS as well. Rather than a single “CSS3 standard,” CSS beyond level 2 is split into separate modules, each with its own specification.

This means that there is even more opportunity for validation!

You can validate your HTML with the markup validator and then validate your stylesheets with the W3C’s CSS Validation Service to ensure conformity across platforms.

While it may seem like overkill to validate your code against several standards, each check is another chance to catch problems before your visitors do.

And for those of you who only work in one language, you now have the opportunity to expand your horizons!

It can be incredibly difficult if not impossible to align everything perfectly, so you will need to pick your battles.

You may also just need something checked quickly online without having the time or resources available locally.

Common Validation Errors

You will need to be aware of the most common validation errors as you go through the validation process, and it’s also a good idea to know what those errors mean.

This way, if your page does not validate, you will know exactly where to start looking for possible problems.

Some of the most common validation errors (and their meanings) include:

- Type Mismatch: You run the risk of getting this message when your code supplies one kind of value where another kind is expected (e.g., submitting a number as text). This error usually signals a coding mistake. The solution is to find where that mistake was made and fix it so that the code validates.

- Parse Error: This error tells you that the validator could not make sense of the code at some point, but the reported location is not always where the real mistake is. If this happens, you may have to do some sleuthing to find where your code went wrong.

- Syntax Errors: These errors mostly involve careless mistakes in coding syntax. Either the syntax is typed incorrectly, or it is used in the wrong context. Either way, these errors will show up in the W3C validator.

The above are just some examples of errors that you may see when you’re validating your page.

Unfortunately, the list goes on and on – as does the time spent trying to fix these problems!
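When the list of messages gets long, sorting it can show you where to start. The Python sketch below assumes messages shaped like the JSON output of the W3C’s Nu HTML Checker, where each message has a type, an optional subType, a lastLine, and a message text. The sample messages are illustrative only and are not real validator output.

```python
from collections import Counter


def severity(msg):
    # The checker reports warnings as type "info" with subType "warning".
    if msg.get("type") == "info" and msg.get("subType") == "warning":
        return "warning"
    return msg.get("type", "unknown")


def summarize(messages):
    """Count messages by severity and return the errors in source order."""
    counts = Counter(severity(m) for m in messages)
    errors = sorted(
        (m for m in messages if severity(m) == "error"),
        key=lambda m: m.get("lastLine", 0),
    )
    return counts, errors


# Illustrative messages only, not real validator output.
sample_messages = [
    {"type": "error", "lastLine": 12, "message": "Stray end tag."},
    {"type": "info", "subType": "warning", "lastLine": 3,
     "message": "Unnecessary attribute."},
    {"type": "error", "lastLine": 4, "message": "Missing required attribute."},
]

counts, errors = summarize(sample_messages)
print(dict(counts))
for err in errors:
    print(f"line {err.get('lastLine', '?')}: {err['message']}")
```

Working through errors from the top of the file down is usually the most efficient order, because one early mistake, such as an unclosed tag, can trigger many of the messages that follow it.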

More Specific Errors (And Their Solutions)

You may find more specific messages that apply to your site, such as ones that reference the type attribute on a tag.

This refers to tags such as the JavaScript script tag written as follows: <script type="text/javascript">.

The type attribute is no longer needed on this tag when the script is JavaScript, and it is now considered legacy coding.

If you still use that kind of coding, you may end up unintentionally triggering validation warnings all over the place in certain validators.
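To get a feel for how widespread the legacy attribute is on a page, a short script can list the affected tags. This Python sketch uses the standard library’s html.parser and only looks for the exact value text/javascript; the names and sample markup are hypothetical.

```python
from html.parser import HTMLParser


class ScriptTypeAudit(HTMLParser):
    """Records the line of each <script> that sets type="text/javascript"."""

    def __init__(self):
        super().__init__()
        self.redundant = []  # line numbers

    def handle_starttag(self, tag, attrs):
        if tag != "script":
            return
        value = (dict(attrs).get("type") or "").strip().lower()
        if value == "text/javascript":
            self.redundant.append(self.getpos()[0])


def redundant_script_types(html):
    audit = ScriptTypeAudit()
    audit.feed(html)
    audit.close()
    return audit.redundant


# Hypothetical markup: only the first tag carries the legacy attribute.
sample_scripts = """<script type="text/javascript" src="legacy.js"></script>
<script type="module" src="app.js"></script>
<script src="plain.js"></script>"""

print(redundant_script_types(sample_scripts))
```

Values such as module are left alone because they change how the script is loaded, so removing them would change behavior.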

Did you know that leaving out alternative text (alt text), which some people call alt tags, is a validation issue? The validator reports an image without an alt attribute as an error, and missing alt text also falls short of the W3C’s accessibility guidelines.

Alternative text is the text supplied in an image’s alt attribute.

It is primarily used by screen readers, which people who are blind or have low vision rely on.

If a screen reader user visits your site, and your images have no alternative text (or no meaningful alternative text), then they will be unable to use your site effectively.

Screen readers speak aloud the alternative text coded into images, so that users can rely on their sense of hearing to understand what’s on your web page.

If your page is not very accessible in this regard, this could potentially lead to another sticky issue: that of accessibility lawsuits.

This is why it pays to pay attention to accessibility standards and validate your code against them.
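A quick way to spot images without alternative text is to scan the markup yourself. The Python sketch below separates images that have no alt attribute at all from images with an empty one, since an empty alt is acceptable for purely decorative images. The names and sample markup are made up for this example, and a script cannot judge whether the text that is present is meaningful.

```python
from html.parser import HTMLParser


class AltAudit(HTMLParser):
    def __init__(self):
        super().__init__()
        self.missing = []  # images with no alt attribute at all
        self.empty = []    # images with alt="" (fine only if decorative)

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr = dict(attrs)
        src = attr.get("src")
        if "alt" not in attr:
            self.missing.append(src)
        elif not (attr["alt"] or "").strip():
            self.empty.append(src)


def audit_alt_text(html):
    audit = AltAudit()
    audit.feed(html)
    audit.close()
    return audit.missing, audit.empty


# Hypothetical markup covering the three cases.
sample_images = """<img src="team.jpg" alt="Our support team at work">
<img src="divider.png" alt="">
<img src="chart.png">"""

missing, empty = audit_alt_text(sample_images)
print("No alt attribute:", missing)
print("Empty alt (check that these are decorative):", empty)
```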

Other types of common errors include using tags out of context.

For code context errors, you will need to make sure they are repaired according to the W3C documentation so these errors are no longer thrown by the validator.

Preventing Errors From Impacting Your Site Experience

The best way to prevent validation errors from happening is by making sure your site validates before launch.

It’s also useful to validate your pages regularly after they’re launched so that new errors do not crop up unexpectedly over time.

If you think about it, validation errors are the equivalent of spelling mistakes in an essay: once they are published, everyone who visits sees them until they are fixed, so they need to be fixed as soon as humanly possible.

If you adopt the habit of always running your code through the W3C validator, then you can, in essence, catch these coding mistakes before they ever reach your live site.

Heads Up: There Is More Than One Way To Do It

Sometimes validation won’t go as planned according to all standards.

And there is more than one way to accomplish the same goal.

For example, suppose you want a button that works as a link, so you place an <a> element with an href attribute inside a <button> element. The HTML standard does not allow this, because interactive elements may not be nested inside one another.

The same behavior is achievable with JavaScript, however, which offers its own ways to send a visitor to another URL when a button is clicked.
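To make the nesting rule concrete, here is a Python sketch that flags interactive elements placed inside a link or a button. It is not the snippet tested in this article; the markup, names, and the simplified list of interactive elements are assumptions made for illustration.

```python
from html.parser import HTMLParser

# Simplified subset of what the HTML standard treats as interactive content.
ALWAYS_INTERACTIVE = {"button", "details", "embed", "iframe", "label",
                      "select", "textarea"}


def is_interactive(tag, attrs):
    if tag in ALWAYS_INTERACTIVE:
        return True
    if tag == "a":
        return "href" in attrs
    if tag == "input":
        return (attrs.get("type") or "").lower() != "hidden"
    return False


class NestingAudit(HTMLParser):
    def __init__(self):
        super().__init__()
        self.open_hosts = []  # currently open <a> / <button> elements
        self.problems = []    # (inner_tag, outer_tag) pairs

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if self.open_hosts and is_interactive(tag, attrs):
            self.problems.append((tag, self.open_hosts[-1]))
        if tag in ("a", "button"):
            self.open_hosts.append(tag)

    def handle_endtag(self, tag):
        if tag in self.open_hosts:
            # Close the most recently opened matching element.
            idx = len(self.open_hosts) - 1 - self.open_hosts[::-1].index(tag)
            del self.open_hosts[idx:]


def nested_interactive(html):
    audit = NestingAudit()
    audit.feed(html)
    audit.close()
    return audit.problems


# Hypothetical markup: the first line nests a link inside a button.
print(nested_interactive('<button><a href="/pricing">Pricing</a></button>'))
print(nested_interactive('<a href="/pricing" class="button">Pricing</a>'))
```

The second line of markup shows one standards-friendly alternative: a plain link that is styled to look like a button.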

This is an example of how we create this particular code and insert it into the direct input of the W3C validator:

When we validate it, the validator reports at least four errors in this snippet alone, which indicates that the line is not well coded:

Screenshot from W3C validator, February 2022

While validation, on the whole, can help you immensely, it is not always going to be 100% complete.

This is why it’s important to familiarize yourself by coding with the validator as much as you can.

Some adaptation will be needed. But it takes experience to achieve the best possible cross-platform compatibility while also remaining compliant with today’s browsers.

The ultimate goal here is improving accessibility and achieving compatibility with all browsers, operating systems, and devices.

Not all browsers and devices are created equal, and validating against a shared set of standards helps your page behave consistently enough across all of them.

When in doubt, always err on the side of proper code validation.

By making sure that you work to include the absolute best practices in your coding, you can ensure that your code is as accessible as it possibly can be for all types of users.

On top of that, validating your HTML against W3C standards helps you achieve cross-platform compatibility between different browsers and devices.

By working to always ensure that your code validates, you are on your way to making sure that your site is as safe, accessible, and efficient as possible.

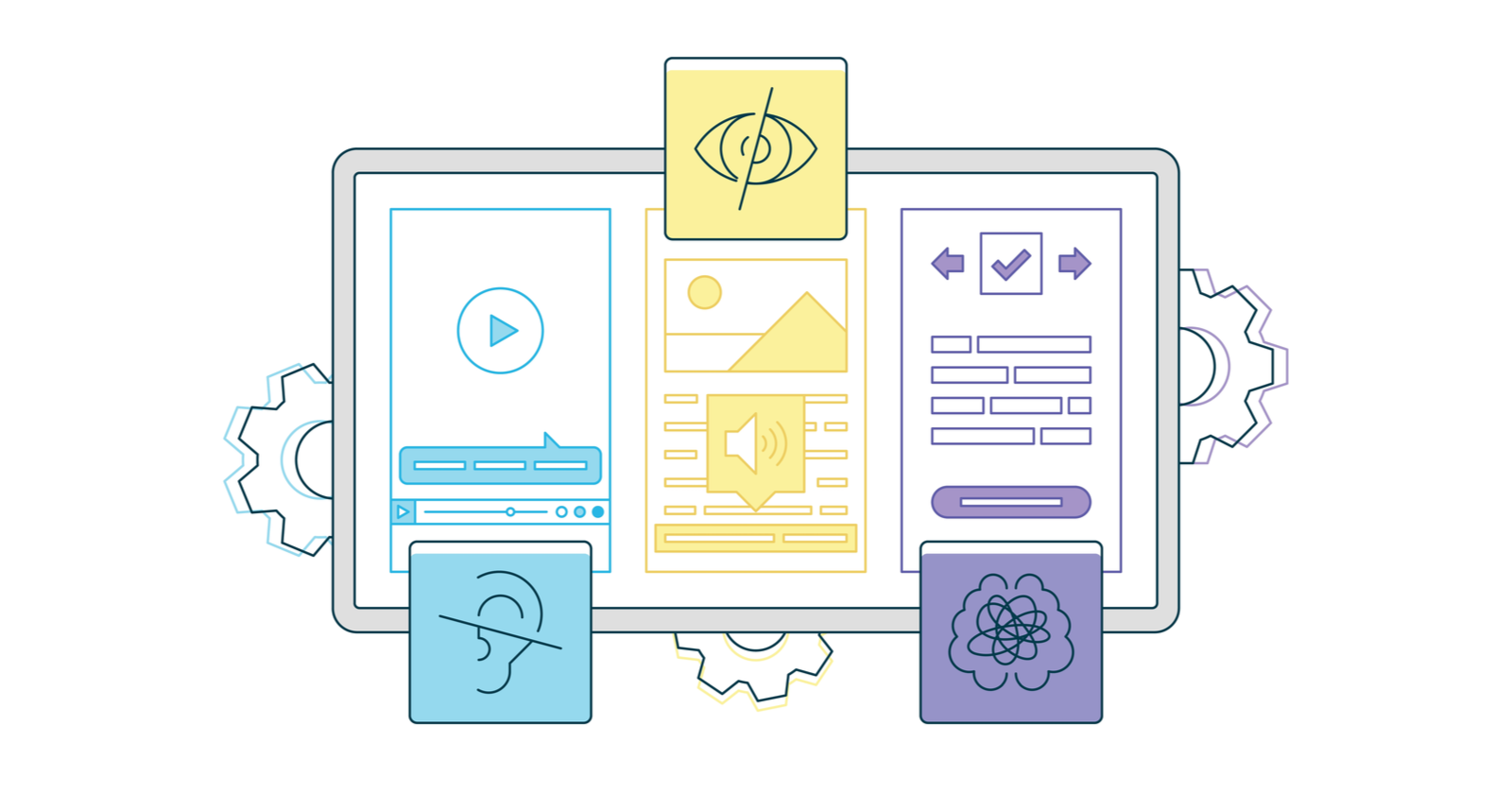

Featured Image: graphicwithart/Shutterstock
