Facebook Announces Official Timeline for Trump Ban, Changes to Rules Around Political Speech

The door has been opened for former President Donald Trump to potentially return to Facebook, his key promotional platform of choice. However, he will have to wait two years (backdated to January 7th), and he will then have to undergo an assessment to decide whether his accounts should be reinstated.

The announcement comes as part of Facebook’s response to the latest ruling from its independent Oversight Board on its decision to ban Trump back in January, in the wake of the Capitol riot. Following the incident, which Facebook says Trump both instigated and incited via social media, The Social Network cut off Trump’s access to both Facebook and Instagram, a penalty that it has maintained ever since.

Trump has been seeking to regain access to the platform, and his 32 million Facebook followers, and the Oversight Board afforded Trump an opportunity to share his perspective on the ban as part of its assessment process.

And now, Facebook has announced the next steps it will take in relation to the Oversight Board’s findings.

Here’s how Facebook’s rules around political leaders, and what they can share on Facebook, will change as a result.

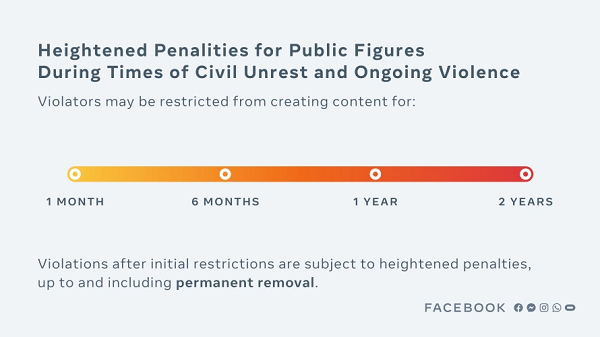

First off, Facebook will now implement clearly defined penalties for suspensions, even those relating to significant incidents that could lead to broad-ranging social unrest.

As explained by Facebook:

“The [Oversight Board] criticized the open-ended nature of [Trump’s] suspension, stating that ‘it was not appropriate for Facebook to impose the indeterminate and standardless penalty of indefinite suspension.’ The board instructed us to review the decision and respond in a way that is clear and proportionate, and made a number of recommendations on how to improve our policies and processes.”

Based on this, Facebook has now established a clear framework around future incidents, with escalating penalties of up to two years for the most significant violations.

Given the nature of the Trump ban, Facebook puts this incident in its ‘most severe’ category, meaning it garners the most significant penalty available. Hence, Trump is now banned for two years, effective from the date of the initial suspension on January 7th.

But that doesn’t definitively mean that Trump will be able to start posting again on January 7th 2023:

“At the end of this period, we will look to experts to assess whether the risk to public safety has receded. We will evaluate external factors, including instances of violence, restrictions on peaceful assembly and other markers of civil unrest. If we determine that there is still a serious risk to public safety, we will extend the restriction for a set period of time and continue to re-evaluate until that risk has receded.”

So Trump could return to Facebook in 2023, just in time for a re-election campaign, with the top job up again in 2024. But Facebook could also decide that he still poses a serious risk to public safety.

And going by Trump’s official response to today’s ruling, that seems like a strong possibility.

Despite it all, Trump is still pushing the ‘rigged election’ narrative, which is what sparked the Capitol riot in the first place. Given this, he may well have trouble regaining Facebook access, even in 2023. And without Facebook’s platform to push re-election promotions and campaign material, that would be a big blow to Trump’s chances if he were to run again in 2024.

So while Trump could return to the platform in two years, it’s not a given that he will. In fact, right now, the odds look slim.

But the key point here is that Facebook has established clearer, more transparent rules around such cases, and around what the maximum penalties will be from now on, which is critical to providing clearer guidance on its official processes.

Beyond this, Facebook has also set down more specific parameters around what politicians can say on the platform, and how its rules will apply to public figures, which has been another point of contention.

Until now, Facebook has allowed certain ‘newsworthy’ content that might otherwise violate its rules to remain on its platform, in the interests of public debate and transparency.

Facebook CEO Mark Zuckerberg defended this approach in a speech at Georgetown University in 2019, explaining that:

“I don’t think it’s right for a private company to censor politicians or the news in a democracy. […] We don’t do this to help politicians, but because we think people should be able to see for themselves what politicians are saying.”

But now, Facebook is re-assessing this.

In line with the Oversight Board’s recommendations to establish clearer rules for all users, Facebook will now evaluate all content under the same parameters, even if it’s from a politician or public figure.

“We grant our newsworthiness allowance to a small number of posts on our platform. Moving forward, we will begin publishing the rare instances when we apply it. Finally, when we assess content for newsworthiness, we will not treat content posted by politicians any differently from content posted by anyone else. Instead, we will simply apply our newsworthiness balancing test in the same way to all content, measuring whether the public interest value of the content outweighs the potential risk of harm by leaving it up.”

There is still some room for leniency here. As Facebook notes, it will continue to allow some exemptions under its ‘newsworthy content’ provisions. But the rules around those exemptions will now be much clearer, and all users will essentially face the same penalties and restrictions.

That’s a significant change in approach, but the idea here is that it will provide more transparency over the various assessments and decisions, ensuring that all users understand what’s acceptable, and what’s not, and what the penalties can be in each case.

Will that stop people from abusing the massive reach of Facebook’s platforms to spread divisive messaging, and maximize their own political interests?

No – in fact, if anything, the latest data suggests that more political regimes are now recognizing the potential of Facebook in this regard, and are using the platform for domestic influence campaigns.

It seems, in some ways, that the Trump campaign’s reliance on Facebook to expand its reach and messaging has shone a light on this type of usage, which has led to more, smaller-scale efforts to manipulate voters.

Facebook’s new rules will play a part in providing more transparency around such activity, but the stats indicate that this will be an ongoing concern, with Facebook’s unmatched reach providing a big lure for politically affiliated groups looking to boost their messaging.

Facebook is also well aware that these updates won’t address every concern:

“We know today’s decision will be criticized by many people on opposing sides of the political divide – but our job is to make a decision in as proportionate, fair and transparent a way as possible, in keeping with the instruction given to us by the Oversight Board.”

In this respect, these are good changes, which reflect that Facebook’s independent board does indeed have significant influence on the company’s decisions, and will act as a valuable, outside voice in guiding its rules, even in the highest-profile cases.

That has been a key concern about the Oversight Board: that it would essentially be a ‘toothless tiger’, and that Facebook would simply ignore the rulings it doesn’t like in order to carry on as it always has.

But thus far, that hasn’t been the case. Facebook has listened to its independent experts and is working to align with their rulings in almost all respects, with the board providing much-needed input into its policy decision-making.

Because it shouldn’t come down to what Zuckerberg believes. At Facebook’s scale, it needs outside voices in the room.

And Facebook has, once again, reiterated that this should go even further:

“In the absence of frameworks agreed upon by democratically accountable lawmakers, the board’s model of independent and thoughtful deliberation is a strong one that ensures important decisions are made in as transparent and judicious a manner as possible. The Oversight Board is not a replacement for regulation, and we continue to call for thoughtful regulation in this space.”

Yes, social media platforms should be regulated in relation to what can and cannot be posted, and Facebook itself advocates for this. In this respect, the Trump decision underlines the value of independent oversight, and why broader rulings like this should apply to all platforms, taking such decisions out of the hands of business managers who have a clear vested interest and placing them under the guidance of elected officials.

That’s a more complex journey, but the process here points to the value of outside perspective.