Facebook will reconsider Trump’s ban in two years

The clock is ticking on former President Donald Trump’s ban from Facebook, formerly indefinite and now set at two years, the maximum penalty under a newly revealed set of rules for suspending public figures. But when the time comes, the company will reevaluate the ban and decide whether to end or extend it, rendering it indefinitely definite.

The ban of Trump in January was controversial in different ways to different groups, but the issue on which Facebook’s Oversight Board stuck as it chewed over the decision was that nothing in the company’s rules supported an indefinite ban. Either remove him permanently, the board said, or else put a definite limit on the suspension.

Facebook has chosen… neither, really. The two-year limit on the ban (backdated to January) is largely decorative, since the option to extend it is entirely Facebook’s prerogative, as VP of global affairs Nick Clegg writes:

> At the end of this period, we will look to experts to assess whether the risk to public safety has receded. We will evaluate external factors, including instances of violence, restrictions on peaceful assembly and other markers of civil unrest. If we determine that there is still a serious risk to public safety, we will extend the restriction for a set period of time and continue to re-evaluate until that risk has receded.

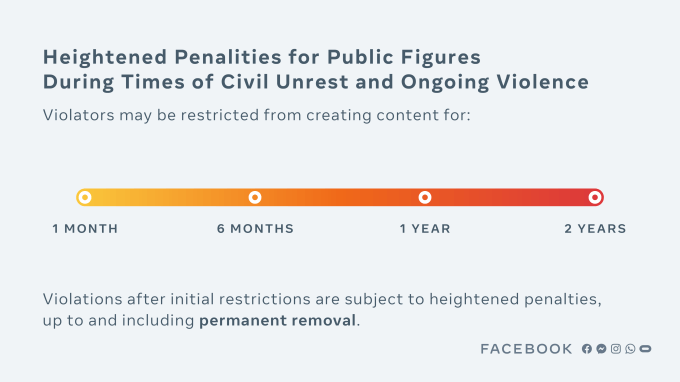

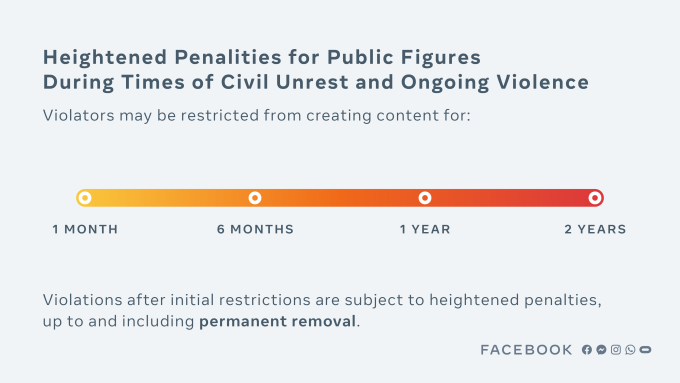

> When the suspension is eventually lifted, there will be a strict set of rapidly escalating sanctions that will be triggered if Mr. Trump commits further violations in future, up to and including permanent removal of his pages and accounts.

It sort of fulfills the recommendation of the Oversight Board, but truthfully Trump’s position is no less precarious than before. A ban that can be rescinded or extended whenever the company chooses is certainly “indefinite.”

In a statement, Trump called the ruling “an insult.”

That said, Facebook’s decision here does reach beyond the Trump situation. Essentially, the Oversight Board suggested that Facebook needs a rule defining how it acts in situations like Trump’s, so the company has created a standard… of sorts.

This highly specific “enforcement protocol” is sort of like a visual representation of Facebook saying “we take this very seriously.” While it gives the impression of some kind of sentencing guidelines by which public figures will systematically be given an appropriate ban length, every aspect of the process is arbitrarily decided by Facebook.

What circumstances justify the use of these “heightened penalties”? What kind of violations qualify for bans? How is the severity decided? Who picks the duration of the ban? When that duration expires, can it simply be extended if “there is still a serious risk to public safety”? What are the “rapidly escalating sanctions” these public figures will face post-suspension? Are there time limits on making decisions? Will they be deliberated publicly?

It’s not exactly that we must assume Facebook will be inconsistent, self-deal or make bad decisions on any of these questions and the many more that come to mind (though that is a real risk). It’s that this neither adds nor exposes any machinery of the Facebook moderation process during moments of crisis, when we most need to see it working.

Despite the new official-looking punishment gradient and re-re-reiterated promise to be transparent, everything involved in what Facebook proposes seems just as obscure and arbitrary as the decision that led to Trump’s ban.

“We know that any penalty we apply — or choose not to apply — will be controversial,” writes Clegg. True, but while some people will be happy with some decisions and others angry, all are united in their desire to have the processes that lead to said penalties elucidated and adhered to. Today’s policy changes do not appear to accomplish that, regarding Trump or anyone else.