Meta Faces Another Antitrust Challenge, with the FTC’s Case Against the Company Approved for the Next Stage

Meta is facing another antitrust probe in the US, with the FTC today being granted permission to present its case against the company over its acquisitions of Instagram and WhatsApp, which the FTC says were specifically aimed at eliminating competition in the market.

Which, in some ways, they likely were, but at the same time, there’s also a strong case to suggest that Meta has built both apps into what they are as a result of its investment in each, using its resources and reach to boost them both beyond a billion users. Now a court will need to decide which is the more substantive motivator, and whether Meta’s conduct is in breach of the antitrust law.

The ruling is a reversal of the Federal Court’s initial finding in June last year, which saw it dismiss the FTC’s case against Meta, due to the FTC failing to present strong enough arguments to suggest that Meta had acquired either or both apps as an anti-competitive move.

As per the June ruling:

“The FTC has failed to plead enough facts to plausibly establish a necessary element of all of its Section 2 claims – namely, that Facebook has monopoly power in the market for Personal Social Networking (PSN) Services.”

So it’s not that the court disagreed with the suggestion that Meta (then Facebook) potentially acted in an anti-competitive way, but the FTC’s case failed to clearly show that Meta had gained a significant market advantage as a result, as there are various other social apps and platforms that have succeeded in spite of Meta’s efforts.

The judge in that initial instance gave the FTC an opportunity to re-submit its case, which has now led to this new ruling in its favor, opening the door for a new legal push which, if successful, could force Meta to divest both WhatsApp and Instagram, making them independent entities once again.

Though that does seem like a long shot at this stage.

In anticipation of such investigations, Meta has been working to reform its business and meld together its various elements, which would make it much harder to separate each one out if it were ordered to do so.

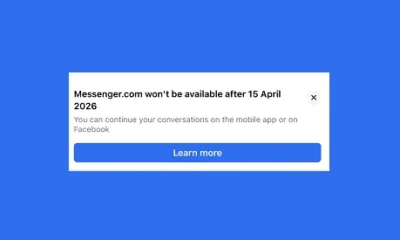

For the past three years, Meta has been merging its messaging back-end to facilitate interoperability, which will mean that, eventually, the messaging elements of Messenger, Instagram and WhatsApp will all run on the same system, and will therefore no longer be operable separately.

Meta has also changed its corporate name and added clear, distinctive branding to all of its apps, another move designed to merge its services into one connected entity.

Of course, each platform operated separately once before, so they could, theoretically, do so again. But it seems that Meta has been working to solidify its internal systems, so that there’s no simple way to break them all apart, which will likely form a key part of its legal defense.

Meta also has the benefit of time. It originally acquired Instagram in 2012, and WhatsApp in 2014, with both deals passing all the necessary regulatory requirements each time. Given that we’re now a decade on, that will also work in Meta’s favor, and it’s also worth noting that the judge dismissed another element of the FTC’s complaint – that Meta changed its platform policies to cut off services to rivals – because the issue is now too far in the past.

Time doesn’t change the facts of Meta’s conduct, but again, Meta will no doubt argue that all of its acquisitions were approved by the necessary regulatory groups, each of which assessed potential antitrust concerns and found no cause to stop the process. And after a decade of development, it will argue, it’s now too late to revise the terms of past deals.

It does seem like a fairly fraught case, with some clearly relevant points, though likely not enough to prove, definitively, that Meta acted in an anti-competitive way. In some ways – well, really, the only way – Meta would actually be glad for the presence of TikTok at this time, because the success of TikTok shows that Meta doesn’t have monopoly control of the social media market, while Google holds a significant enough stake in digital advertising to also counter that element.

But maybe, had Meta’s attempts to purchase Snapchat been successful, it would have been in a less defensible spot. It’s no surprise that Meta has slowed its acquisition momentum significantly of late.

There’s still some way to go, and many, many pages of court documents and rulings to read, but at this stage, it seems more likely that Meta’s empire will remain unchanged at the end of the proceedings.

Though it is interesting, and relevant, to note that the Federal Court has even approved such a case, which points to further scrutiny of similar tech acquisitions in future.
