
8 Free SEO Reporting Tools

There’s no shortage of SEO reporting tools to choose from—but what are the core tools you need to put together an SEO report?

In this article, I’ll share eight of my favorite SEO reporting tools to help you create a comprehensive SEO report for free.

Google Search Console

Price: Free

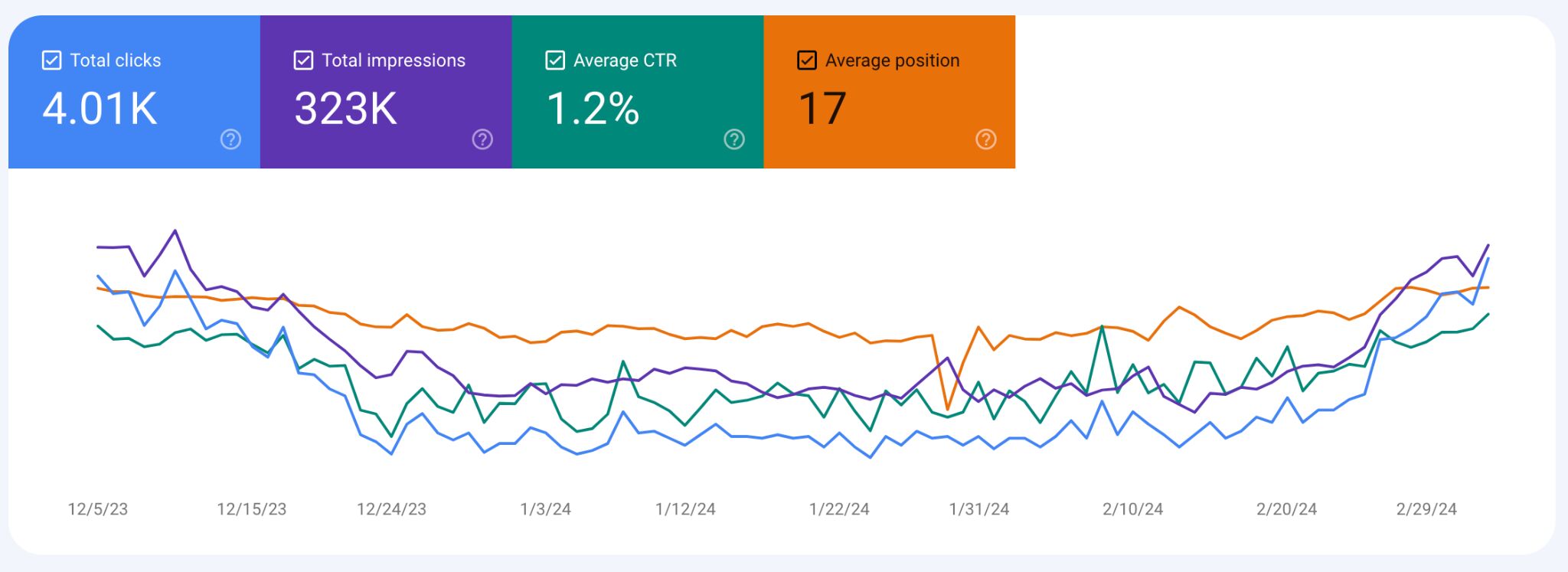

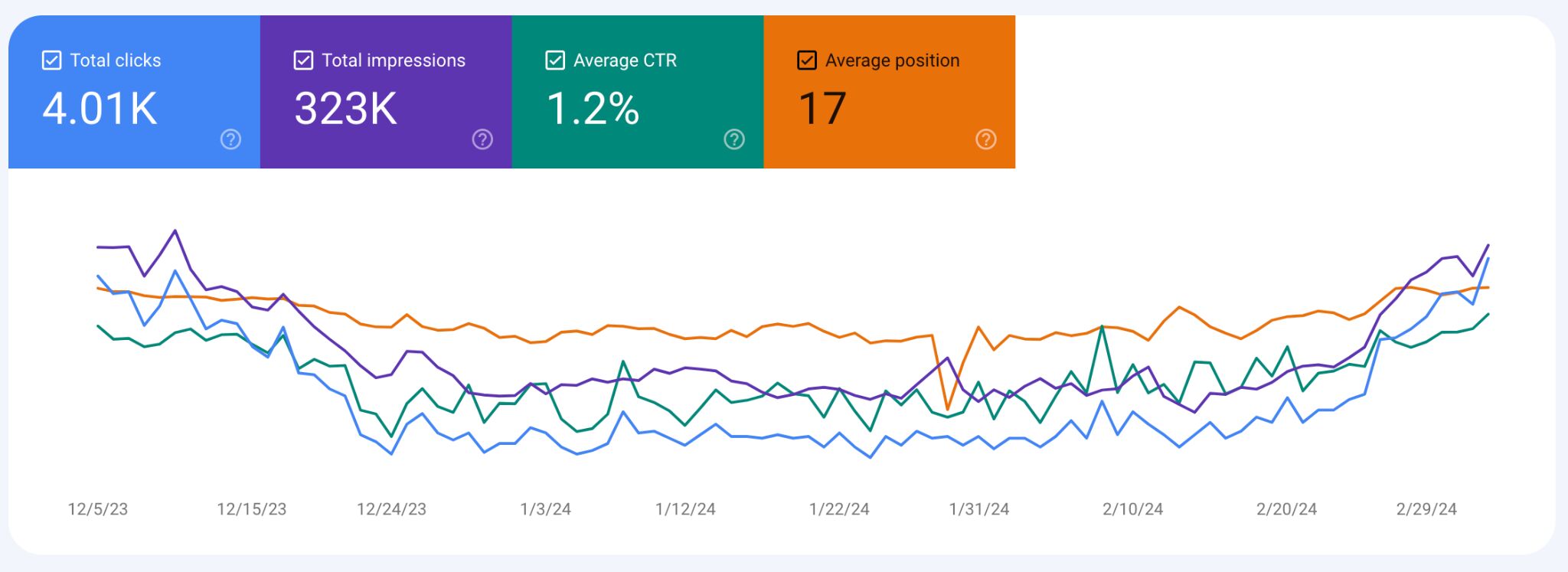

Google Search Console, often called GSC, is one of the most widely used tools to track important SEO metrics from Google Search.

Most common reporting use case

GSC has a ton of data to dive into, but the first metric most SEOs check is Clicks on the main Overview dashboard.

Because the data comes directly from Google, SEOs consider it a good barometer of organic search performance. In addition to clicks, the Performance report lets you track:

- Total Impressions

- Average CTR

- Average Position
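GSC defines CTR as clicks divided by impressions, so when you roll rows up for a report (for example, several queries or pages), it’s safer to recompute CTR from the totals than to average each row’s CTR. Here’s a minimal Python sketch, assuming you already have (clicks, impressions) pairs from an export; the function name and the numbers are hypothetical:

```python
def aggregate_ctr(rows):
    """Return overall CTR (0-1) from (clicks, impressions) pairs.

    Summing clicks and impressions first weights every row by its
    impressions, which matches clicks / impressions for the combined data.
    """
    total_clicks = sum(clicks for clicks, _ in rows)
    total_impressions = sum(impressions for _, impressions in rows)
    if total_impressions == 0:
        return 0.0  # no impressions means no meaningful CTR
    return total_clicks / total_impressions


rows = [(90, 1000), (10, 9000)]  # illustrative numbers only
print(f"{aggregate_ctr(rows):.1%}")  # 1.0%

# Averaging per-row CTRs overstates the low-traffic row's influence
naive = sum(clicks / impressions for clicks, impressions in rows) / len(rows)
print(f"{naive:.1%}")  # 4.6%
```

The gap between the two numbers is why a report’s headline CTR should come from totals.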

Tip

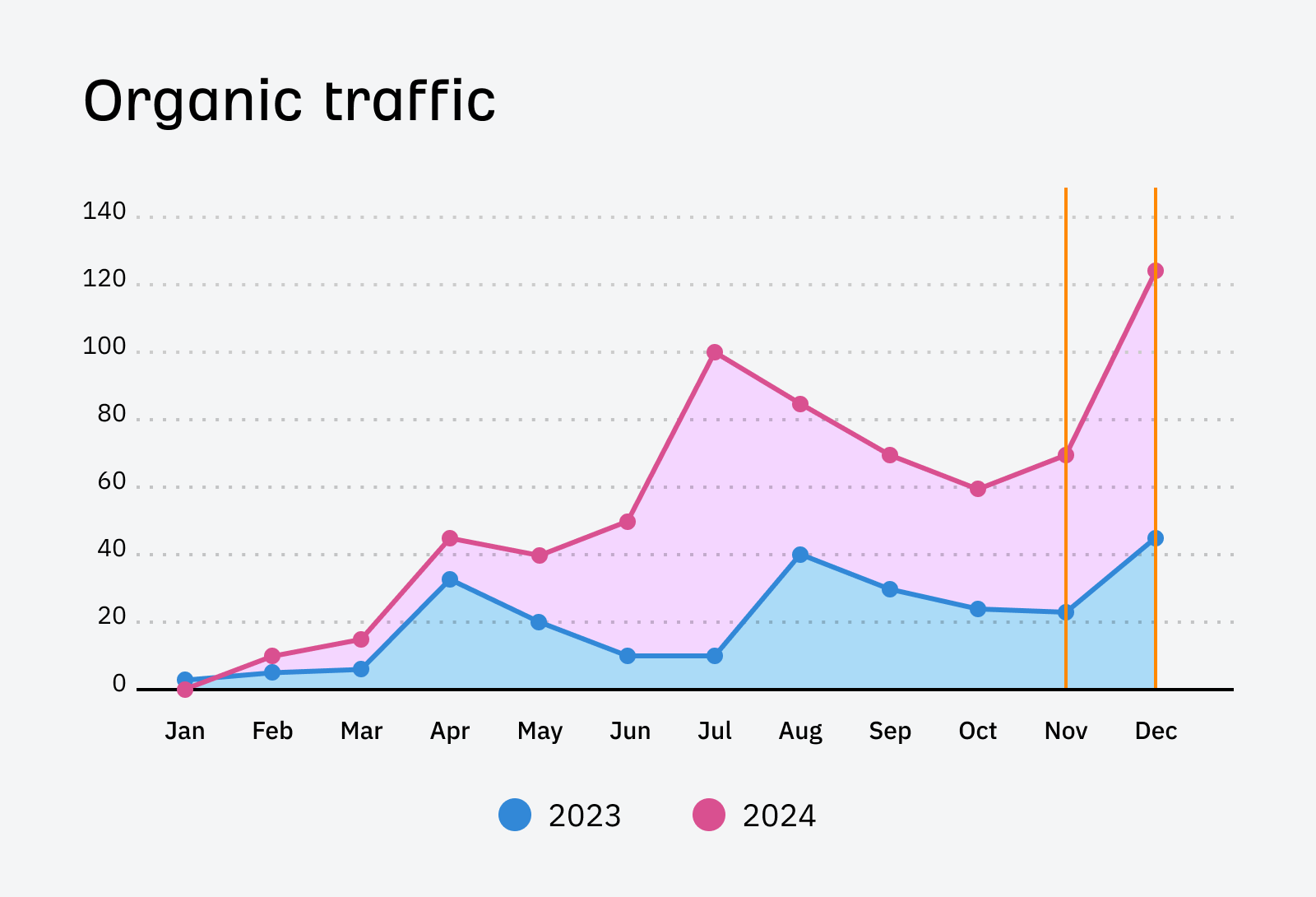

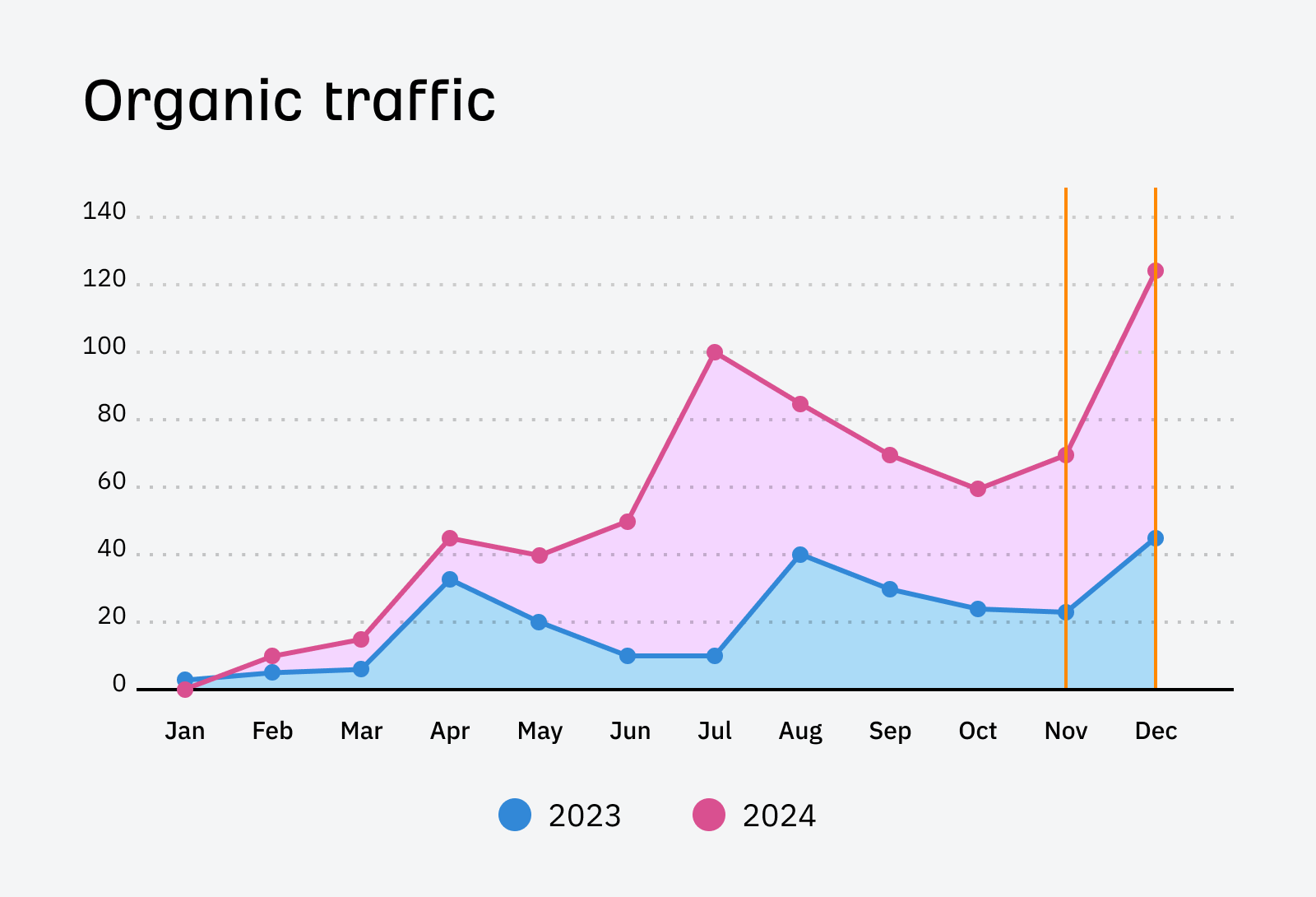

For most SEO reporting, though, GSC clicks data is exported to a spreadsheet and turned into a chart that shows year-over-year performance.
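If you’d rather script the year-over-year comparison than build it by hand in a spreadsheet, the sketch below sums daily clicks by month and compares each month with the same month a year earlier. It assumes the export is a CSV with a `Date` column in YYYY-MM-DD format and a `Clicks` column; check your own export’s headers, since the column names here are an assumption and the function names are hypothetical:

```python
import csv
import io
from collections import defaultdict


def monthly_clicks(csv_text):
    """Sum daily clicks into {(year, month): clicks}.

    Assumes a header row with 'Date' (YYYY-MM-DD) and 'Clicks' columns.
    """
    totals = defaultdict(int)
    for row in csv.DictReader(io.StringIO(csv_text)):
        year, month, _day = row["Date"].split("-")
        totals[(int(year), int(month))] += int(row["Clicks"])
    return dict(totals)


def yoy_change(totals, year, month):
    """Percent change vs. the same month one year earlier, or None if no baseline."""
    previous = totals.get((year - 1, month))
    current = totals.get((year, month))
    if not previous or current is None:
        return None  # missing or zero baseline: YoY % is undefined
    return (current - previous) * 100 / previous


sample = """Date,Clicks,Impressions
2023-06-01,100,2000
2023-06-02,100,2100
2024-06-01,150,2500
2024-06-02,150,2600
"""
totals = monthly_clicks(sample)
print(yoy_change(totals, 2024, 6))  # 50.0
```

The sample rows are made up; the monthly totals can feed straight into a chart.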

Favorite feature

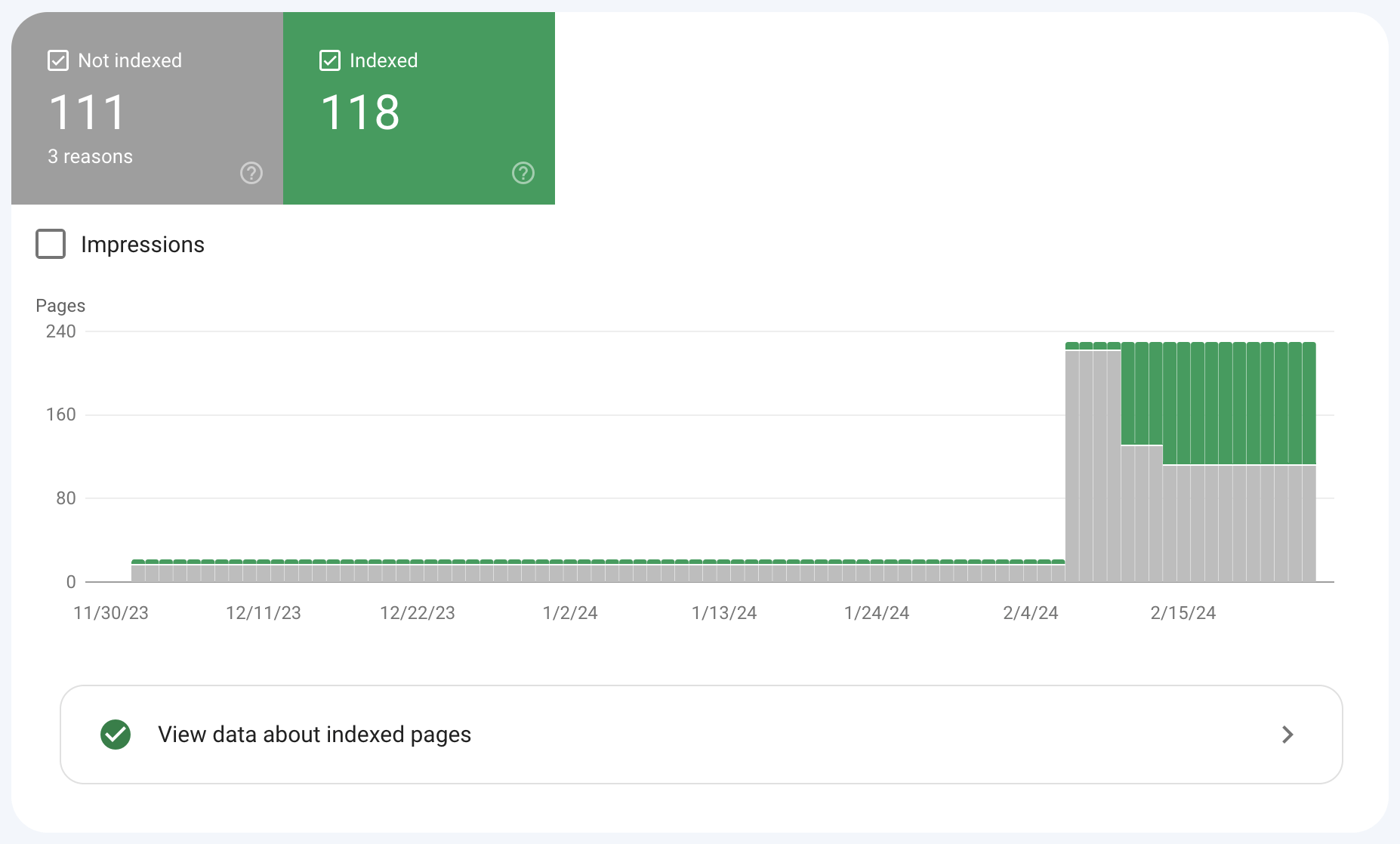

One of my favorite reports in GSC is the Indexing report. It’s useful for SEO reporting because you can share the ratio of indexed to non-indexed pages in your SEO report.

If the website has many non-indexed pages, it’s worth reviewing them to understand why they haven’t been indexed.
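To put the indexing numbers into a report, you can express them as a simplified ratio and as a percentage of all known pages. A minimal sketch, assuming you read the two counts off the Indexing report yourself; the function name and counts are hypothetical:

```python
from math import gcd


def indexing_ratio(indexed, not_indexed):
    """Return (simplified 'indexed:not_indexed' ratio, percent of pages indexed)."""
    total = indexed + not_indexed
    if total == 0:
        return "0:0", 0.0  # no known pages yet
    divisor = gcd(indexed, not_indexed)
    ratio = f"{indexed // divisor}:{not_indexed // divisor}"
    return ratio, indexed * 100 / total


ratio, percent = indexing_ratio(800, 200)  # hypothetical counts
print(f"{ratio} indexed to non-indexed ({percent:.1f}% indexed)")
# 4:1 indexed to non-indexed (80.0% indexed)
```

The percentage tends to be easier for stakeholders to track month to month than the raw ratio.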

Google Looker Studio

Price: Free

Google Looker Studio (GLS), previously known as Google Data Studio (GDS), is a free tool that helps visualize data in shareable dashboards.

Most common reporting use case

Dashboards are an important part of SEO reporting, and GLS’s integrations give you a complete view of search performance across multiple sources.

Out of the box, GLS can connect to many different data sources, such as:

- Marketing products – Google Ads, Google Analytics, Display & Video 360, Search Ads 360

- Consumer products – Google Sheets, YouTube, and Google Search Console

- Databases – BigQuery, MySQL, and PostgreSQL

- Social media platforms – Facebook, Reddit, and Twitter

- Files – CSV file upload and Google Cloud Storage

Sidenote.

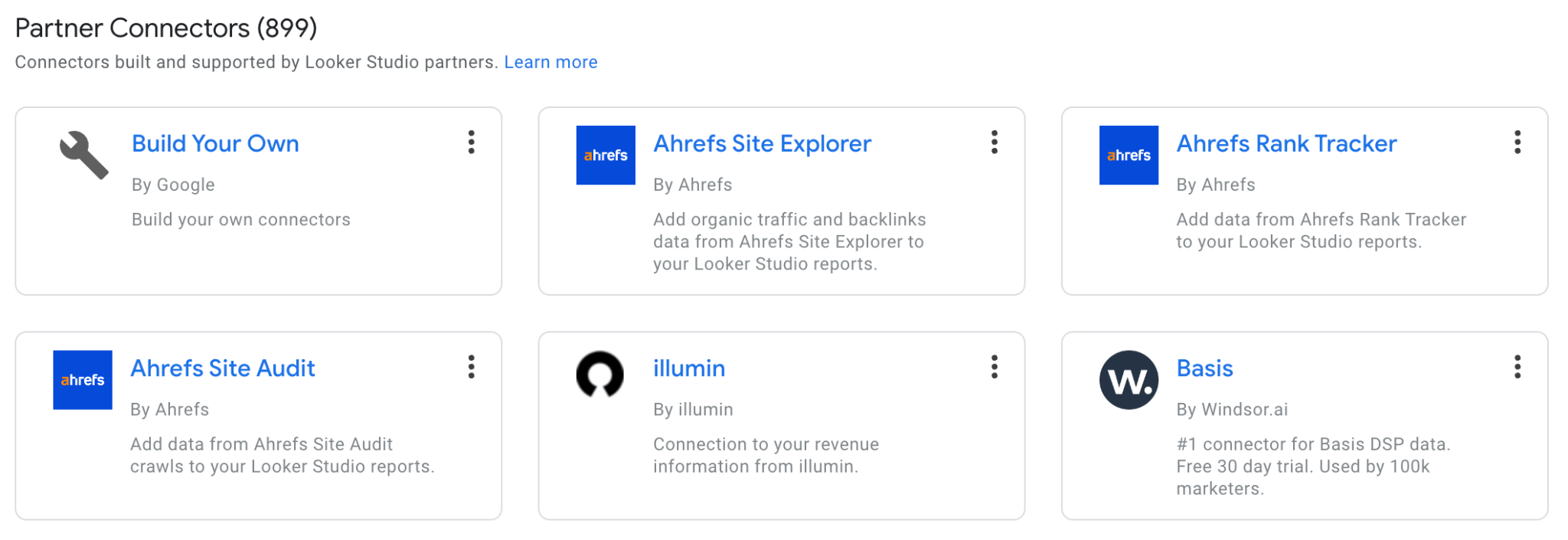

If you don’t have time to create your own report manually, Ahrefs has three Google Looker Studio connectors that can help you create automated SEO reporting for any website in a few clicks.

Here’s what a dashboard in GLS looks like:

With this type of dashboard, you can share easy-to-understand reports with clients or other stakeholders.

Favorite feature

The ability to blend and filter data from different sources, like GA and GSC, means you can build an overview of your total search performance tailored to your website.
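Conceptually, blending joins two sources on a shared key, such as date or landing page. The sketch below shows a left join in plain Python, which is roughly how I understand a default blend to behave: every row from the first source is kept, and missing values from the second become empty. The per-date GSC clicks and GA sessions, and the `blend_left` name, are hypothetical; this isn’t how Looker Studio is implemented internally:

```python
def blend_left(left, right, right_fields):
    """Left-join two {key: {field: value}} tables on their keys.

    Every key from `left` is kept; any of `right_fields` missing from
    `right` is filled with None, like a left outer join.
    """
    blended = {}
    for key, left_row in left.items():
        right_row = right.get(key, {})
        merged = dict(left_row)  # copy so the input table isn't modified
        for field in right_fields:
            merged[field] = right_row.get(field)
        blended[key] = merged
    return blended


gsc = {"2024-06-01": {"clicks": 120}, "2024-06-02": {"clicks": 95}}  # made-up data
ga = {"2024-06-01": {"organic_sessions": 140}}  # made-up data
for date, row in sorted(blend_left(gsc, ga, ["organic_sessions"]).items()):
    print(date, row)
```

Dates that exist only in the second source are dropped, which is the trade-off of choosing a left join.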

Screaming Frog

Price: Free (up to 500 URLs)

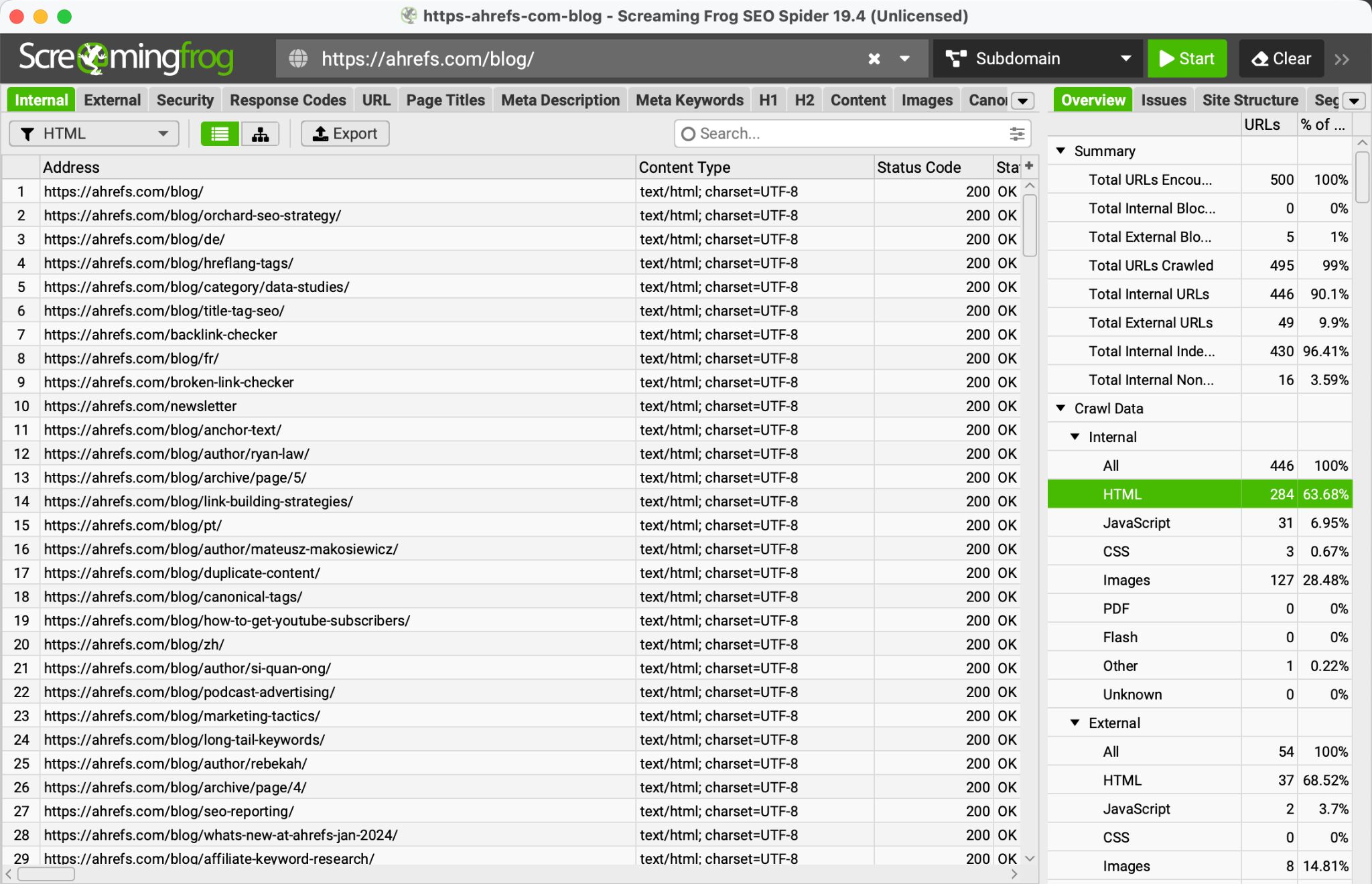

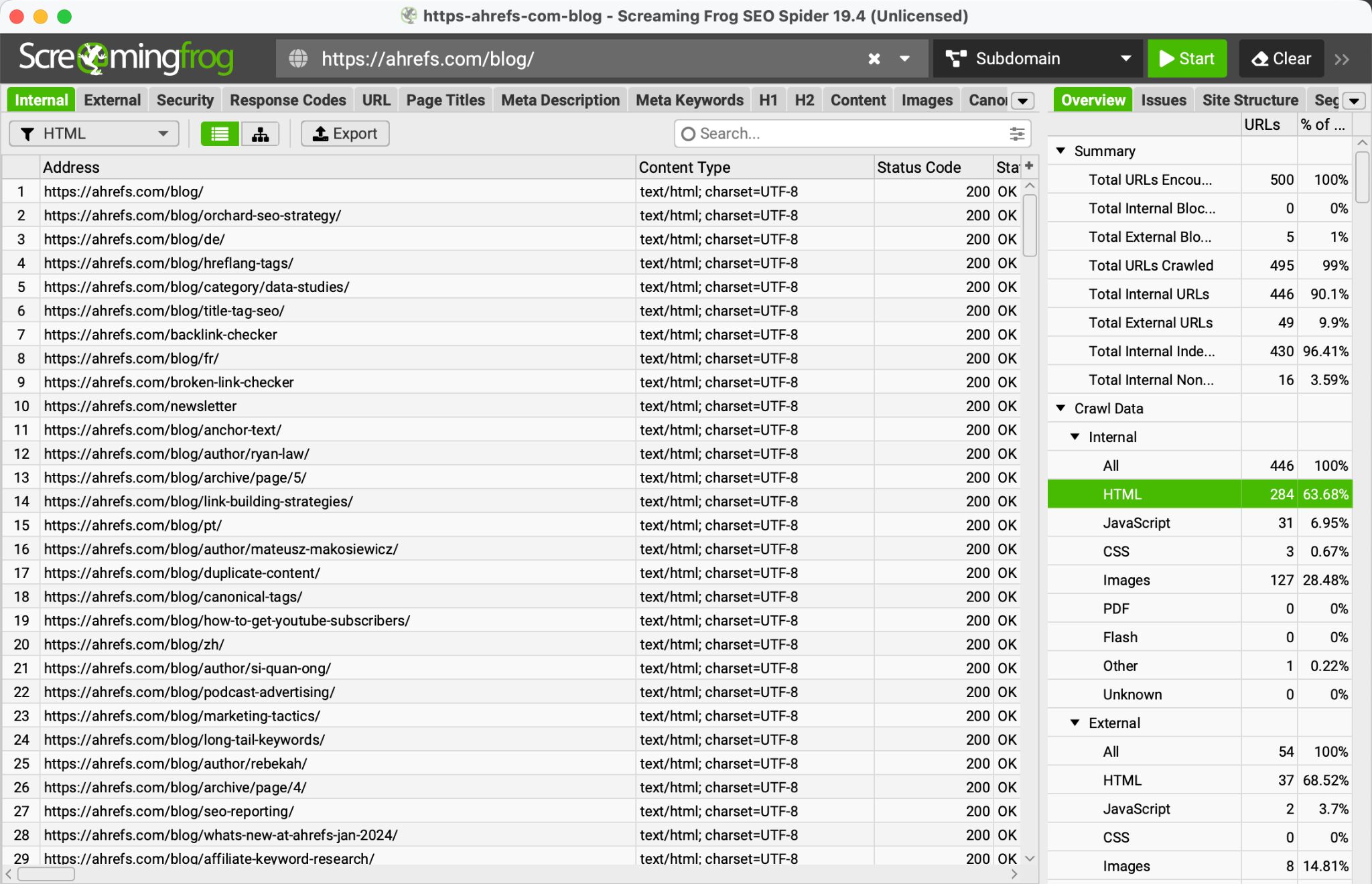

Screaming Frog is a website crawler that helps you audit your website.

The free version of Screaming Frog’s crawler is perfect if you want to run a quick audit on a handful of URLs. It’s limited to 500 URLs, which makes it ideal for crawling smaller websites.

Most common reporting use case

When it comes to reporting, the Reports menu in Screaming Frog SEO Spider contains a wealth of information covering the technical aspects of your website, such as redirects, canonicals, pagination, hreflang, structured data, and more.

Once you’ve crawled your site, it’s just a matter of downloading the reports you need and identifying the main issues to summarize in your SEO report.
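One quick way to pull headline numbers out of a crawl export is to count URLs by HTTP status code and list everything that didn’t return 200. A minimal sketch, assuming a CSV export with `Address` and `Status Code` columns; confirm the headers in your own export, since they’re an assumption here, and the function name is hypothetical:

```python
import csv
import io
from collections import Counter


def summarize_status_codes(csv_text):
    """Count crawled URLs per status code and collect the non-200 URLs.

    Assumes 'Address' and 'Status Code' columns in the export.
    """
    counts = Counter()
    problems = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        code = row["Status Code"]
        counts[code] += 1
        if code != "200":
            problems.append((row["Address"], code))
    return counts, problems


export = """Address,Status Code
https://example.com/,200
https://example.com/old-page,301
https://example.com/missing,404
"""
counts, problems = summarize_status_codes(export)
print(dict(counts))
for url, code in problems:
    print(code, url)
```

The counts make a compact summary line, and the problem list is a ready-made to-do list for the report.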

Favorite feature

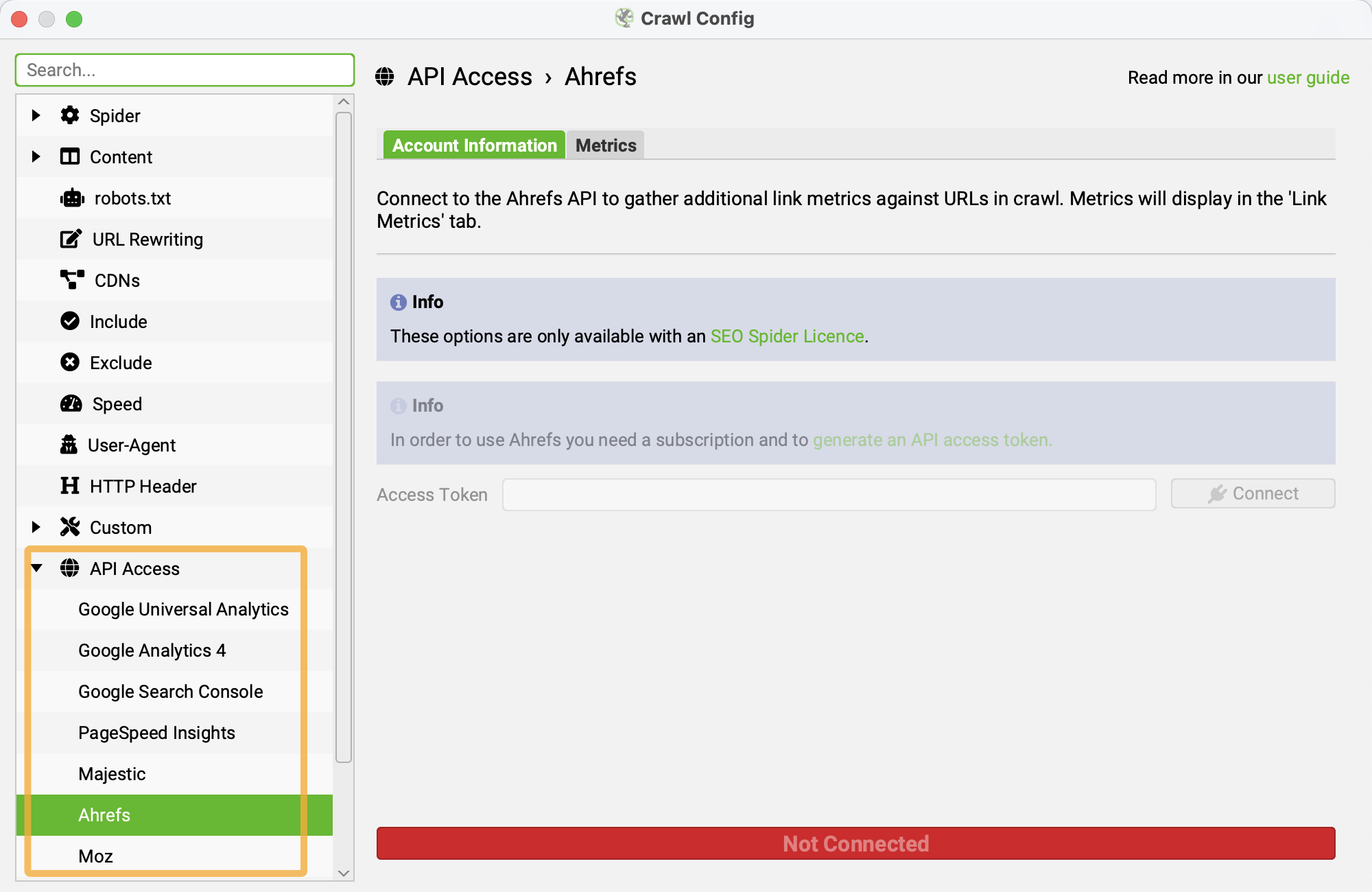

Screaming Frog can pull in data from other tools, including Ahrefs, using APIs.

If you already have API access to a few SEO tools, you can pull data from all of them directly into Screaming Frog. This is useful if you want to combine crawl data with performance data or data from other third-party tools.

Even if you’ve never configured an API, connecting other tools to Screaming Frog is straightforward.

Ahrefs’ free SEO tools

Price: Free

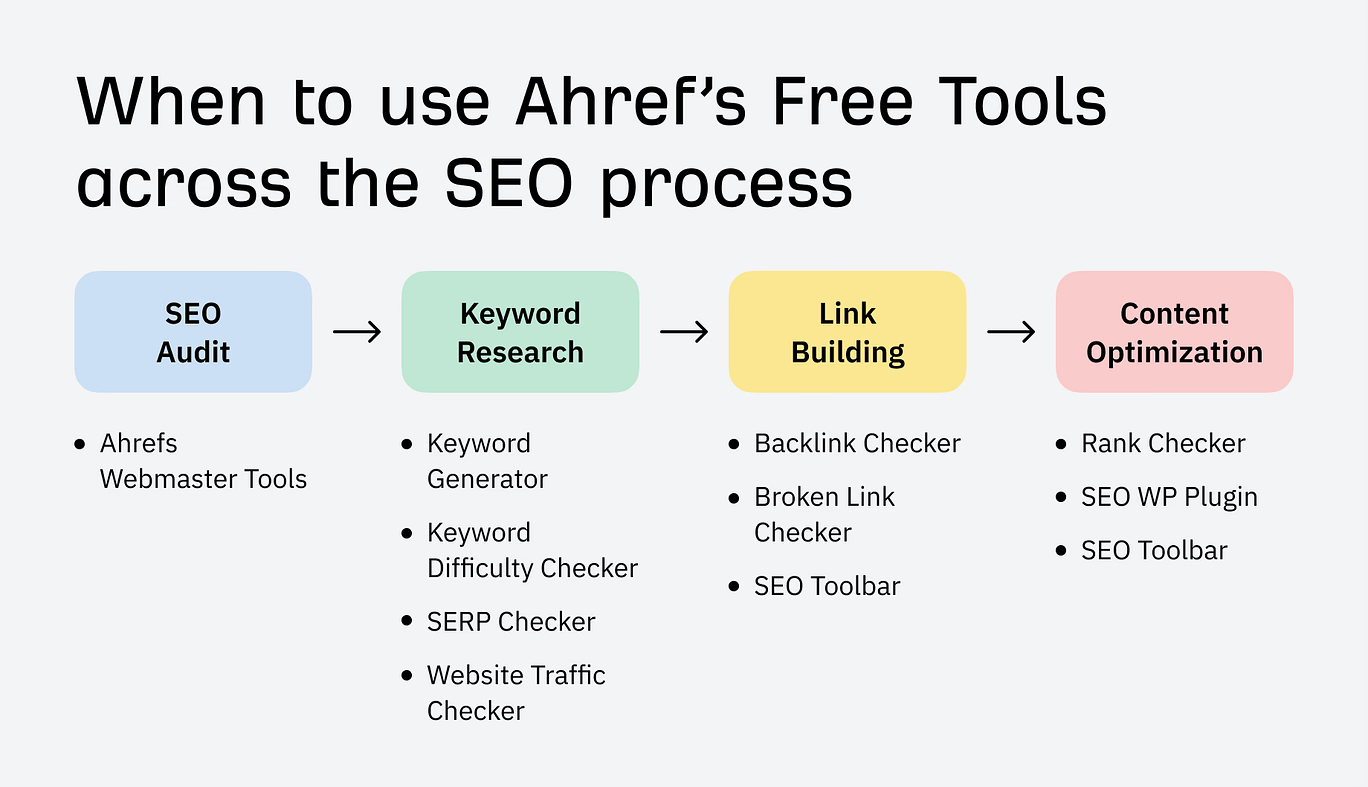

Ahrefs has a large selection of free SEO tools to help you at every stage of your SEO campaign, and many of these can be used to provide insights for your SEO reporting.

For example, you could use our:

Most common reporting use case

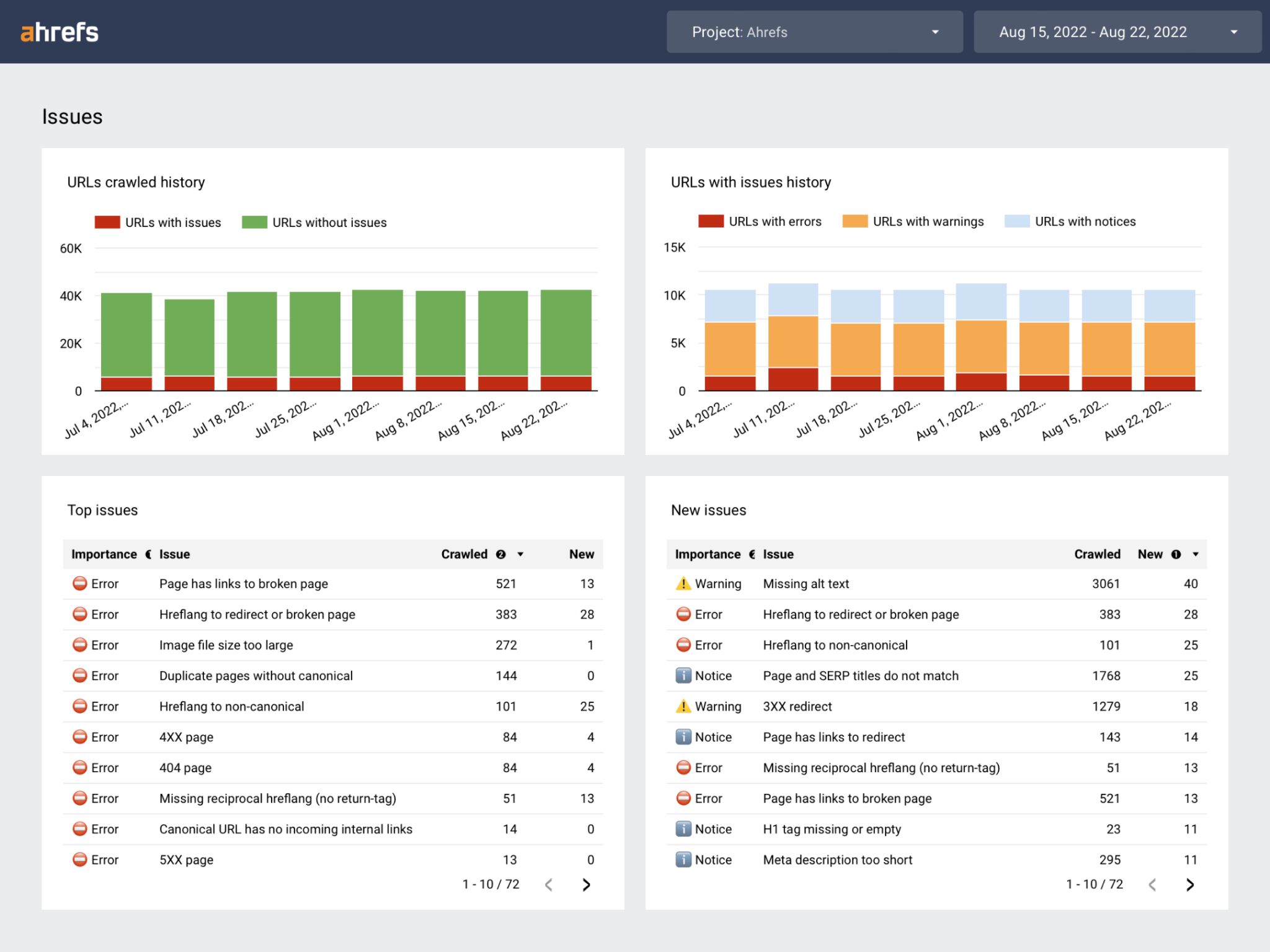

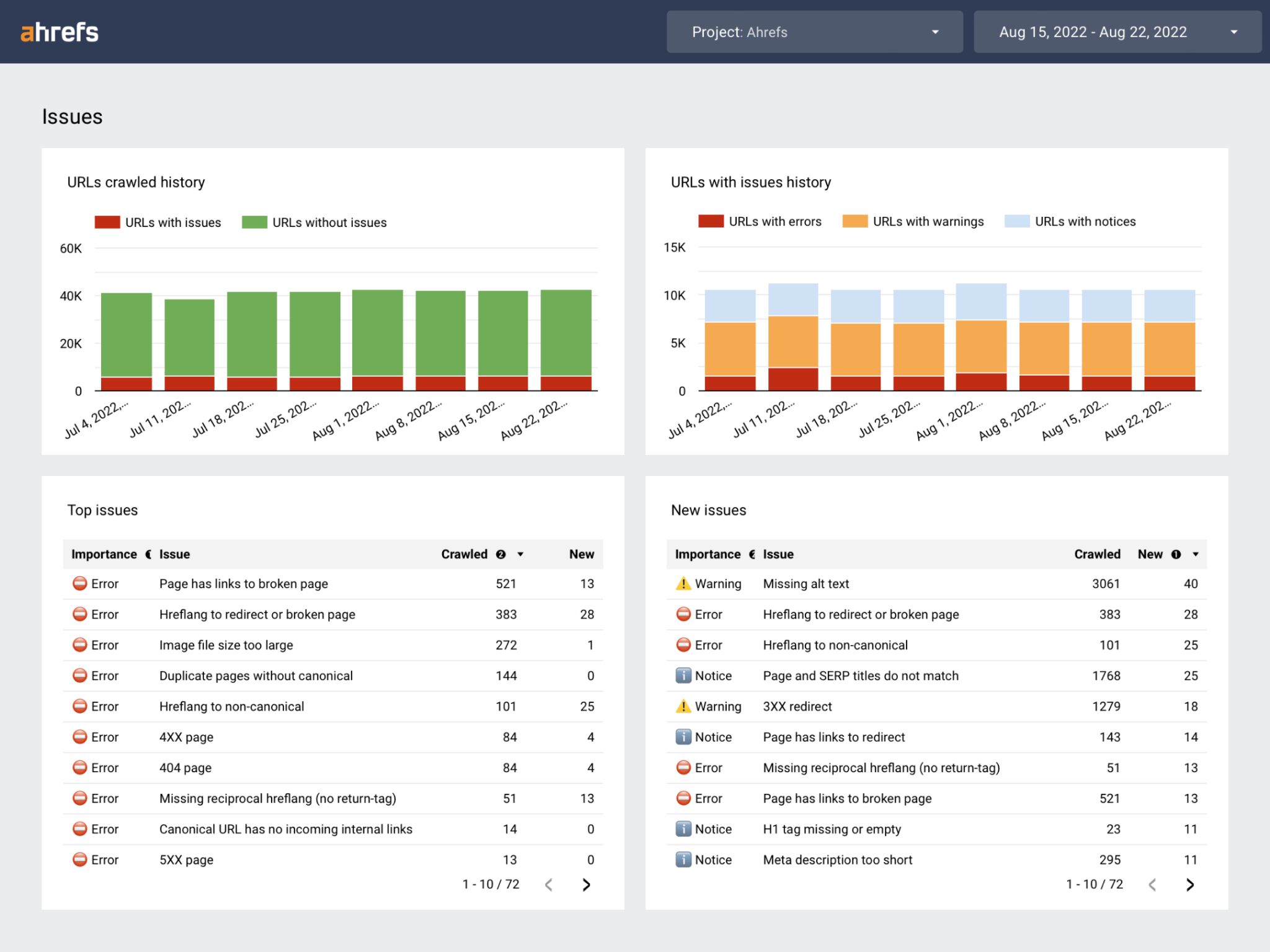

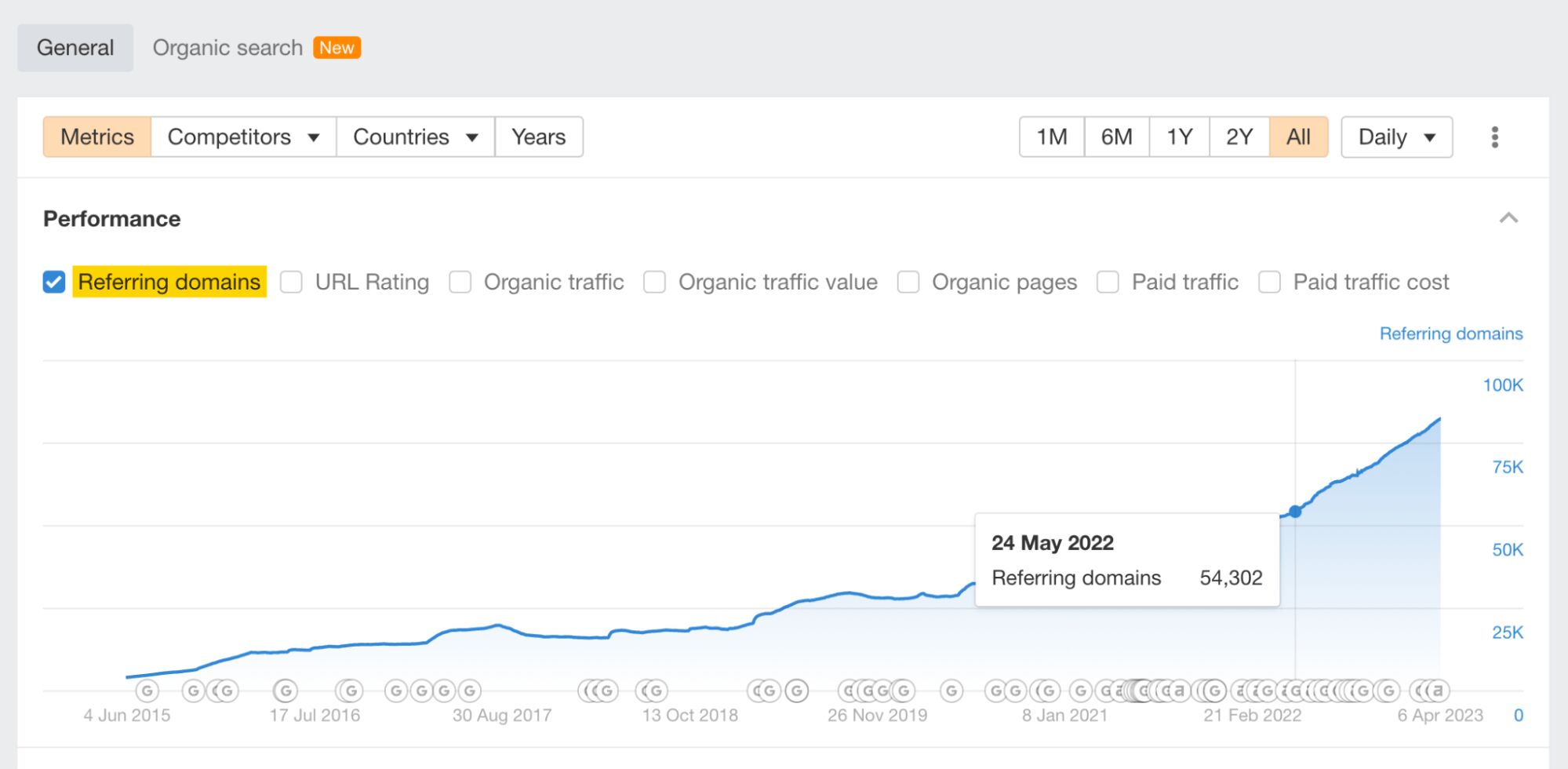

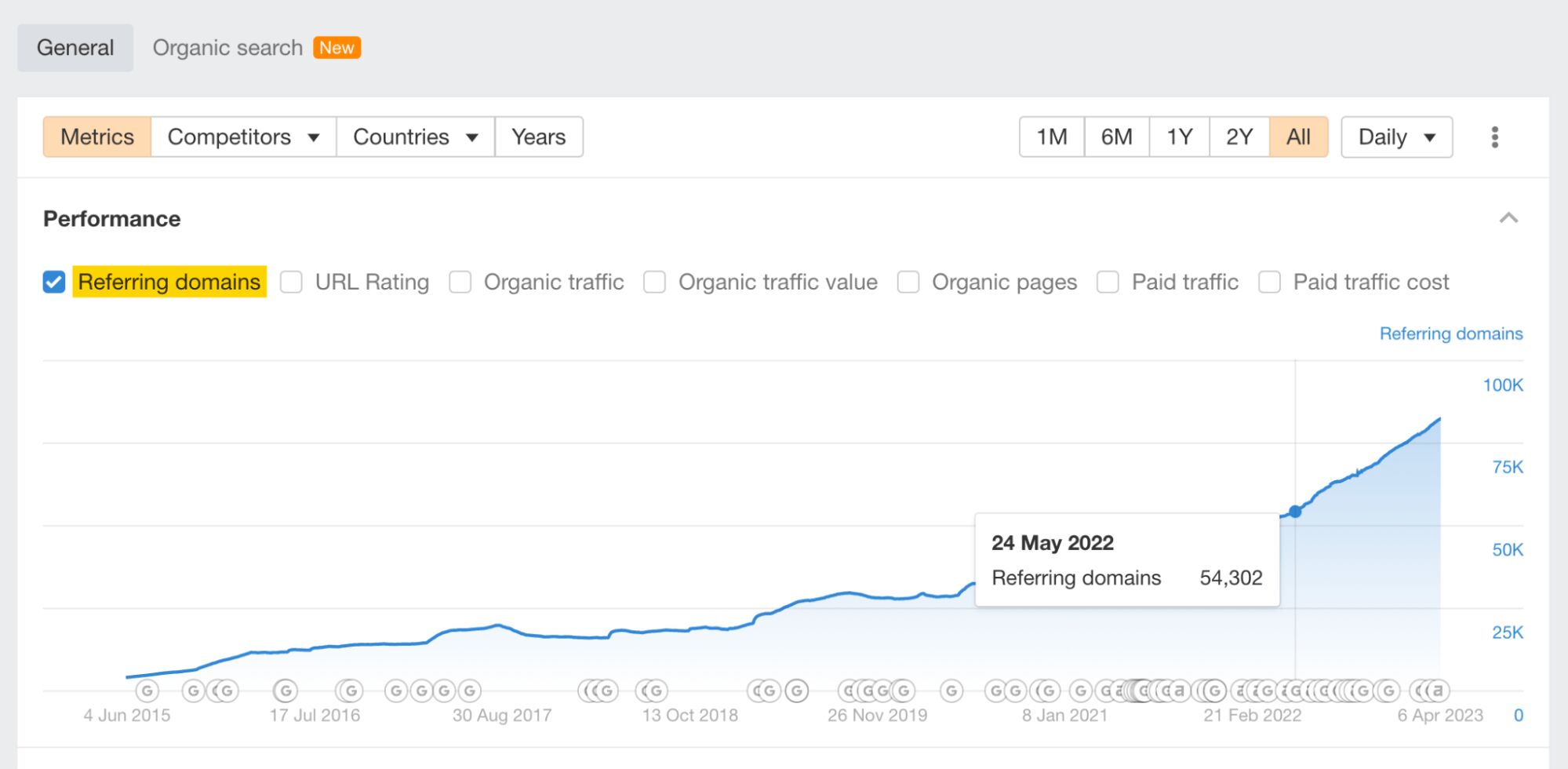

One of our most popular free SEO tools is Ahrefs Webmaster Tools (AWT), which you can use for your SEO reporting.

With AWT, you can:

- Monitor your SEO health over time by setting up scheduled SEO audits

- See the performance of your website

- Check all known backlinks for your website

Favorite feature

Of all Ahrefs’ free tools, AWT is my favorite, and within it, site auditing is the feature I like most. Once you’ve set it up, it’s a completely hands-free way to keep track of your website’s technical performance and monitor its health.

If you already have access to Google Search Console, it’s a no-brainer to set up a free AWT account and schedule a technical crawl of your website(s).

Ahrefs’ SEO Toolbar

Price: Free

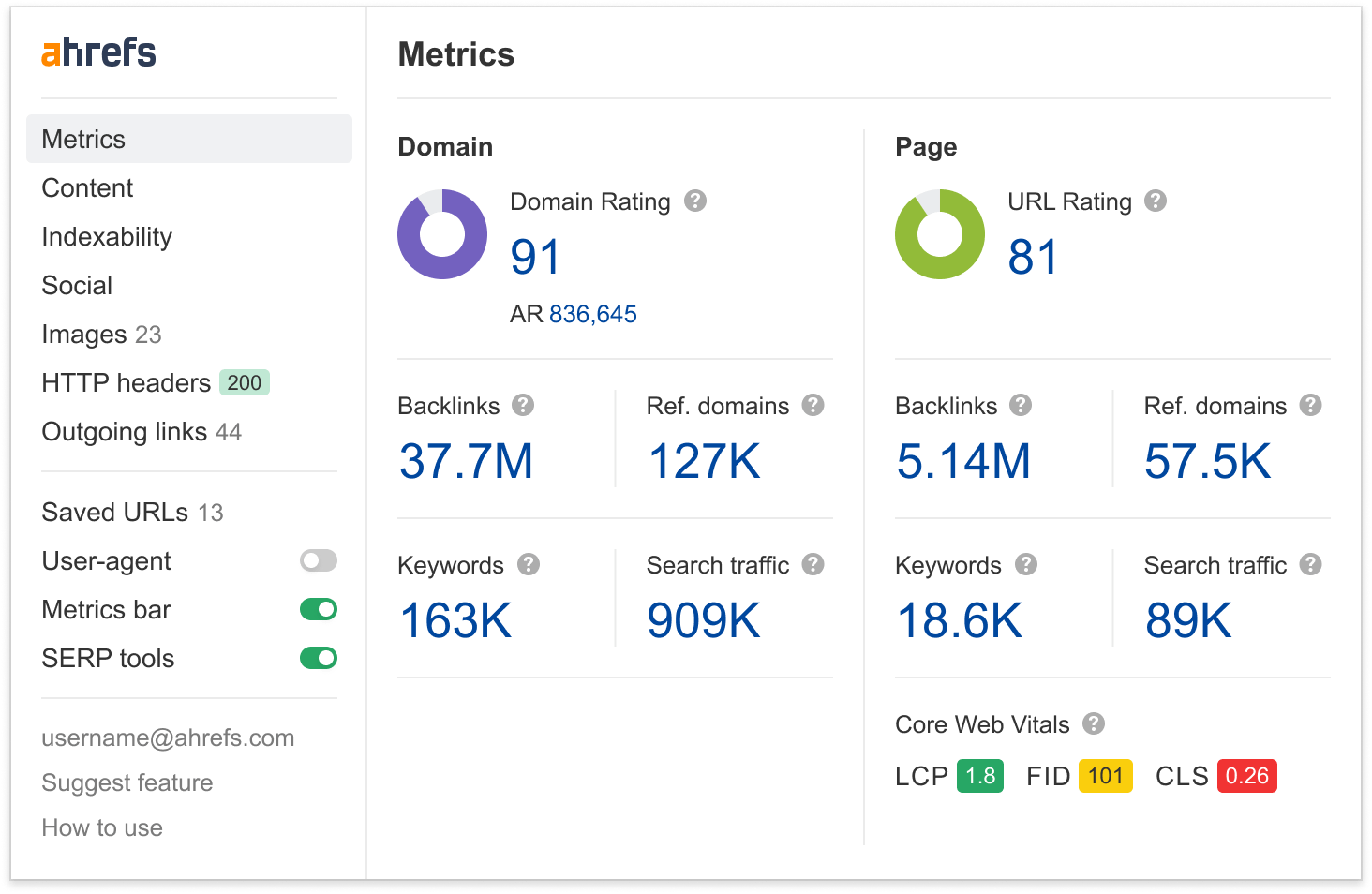

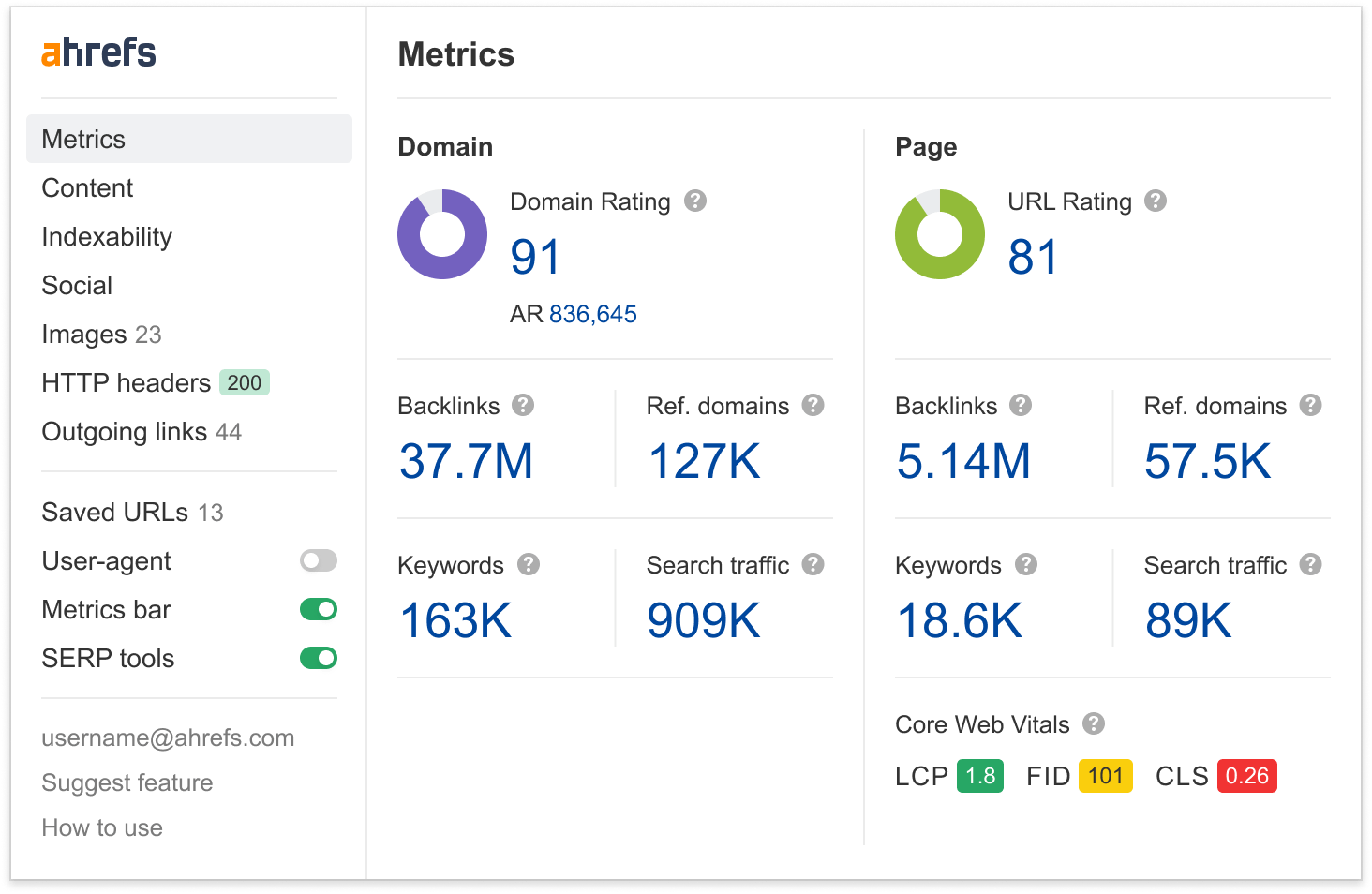

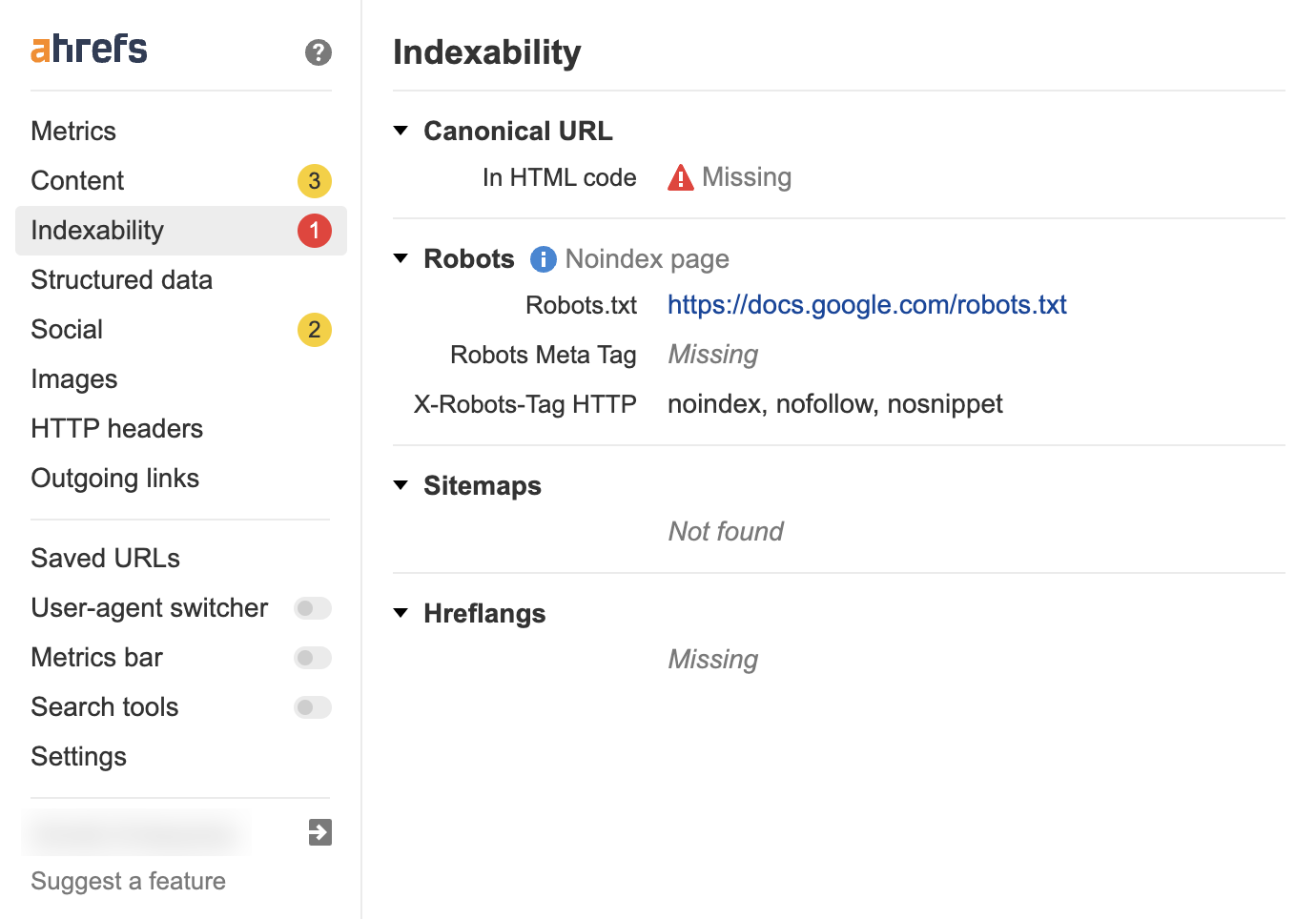

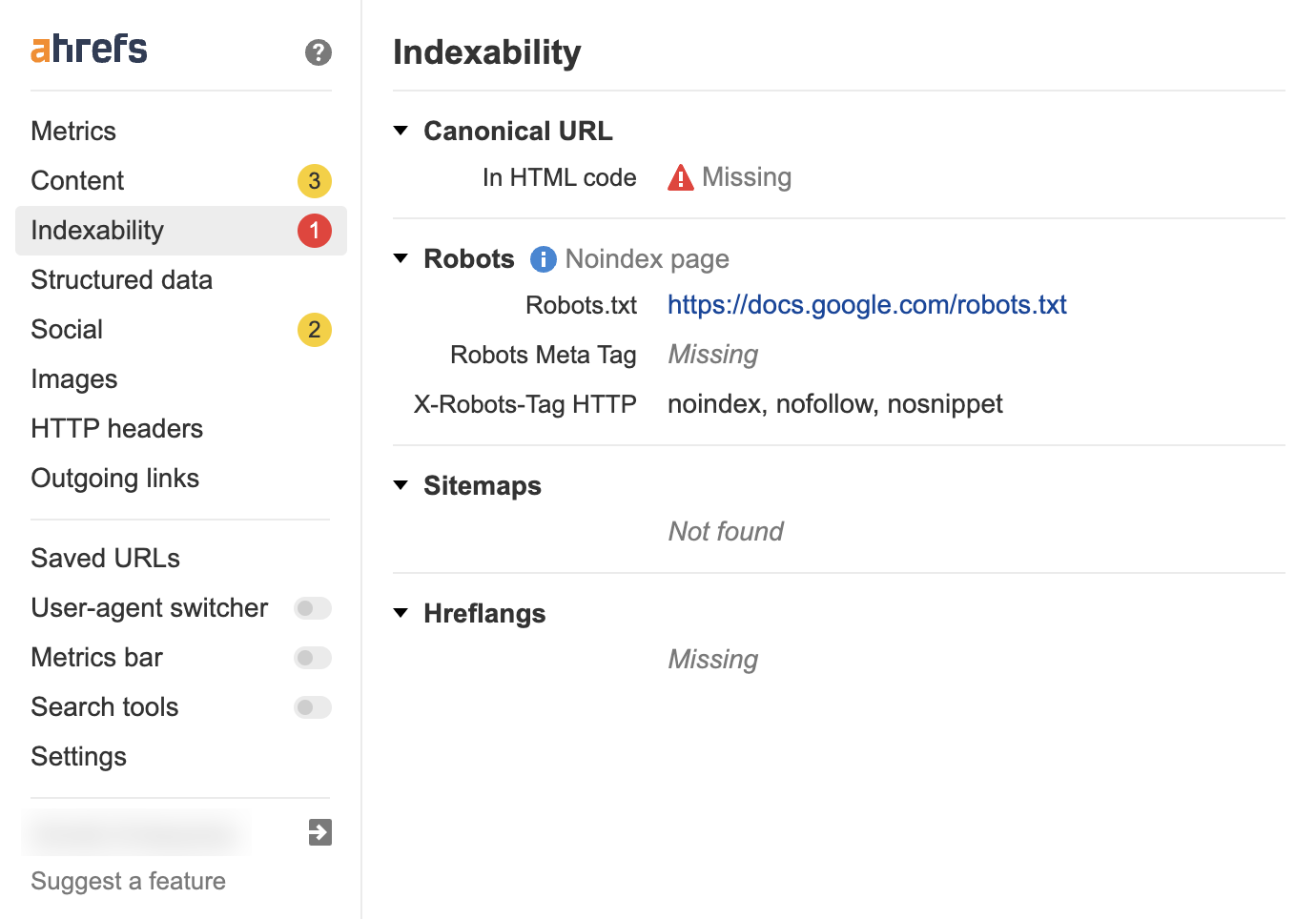

Ahrefs’ SEO Toolbar is a free Chrome and Firefox extension useful for diagnosing on-page technical issues and performing quick spot checks on your website’s pages.

Most common reporting use case

For SEO reporting, it’s useful to run an on-page check on your website’s top pages to ensure there aren’t any serious on-page issues.

With the free version, you get the following features:

- On-page SEO report

- Redirect tracer with HTTP Headers

- Outgoing links report with link highlighter and broken link checker

- SERP positions

- Country changer for SERP

The SEO Toolbar is excellent for spot-checking issues with pages on your website. If you aren’t confident inspecting the code, it can also give you valuable pointers on which elements you need to include on your pages to make them search-friendly.

If anything is wrong with the page, the toolbar highlights it, with red indicating a critical issue.

Favorite feature

The section I use most often in the SEO Toolbar is the Indexability tab. Here, you can see whether Google can crawl and index the page.

Although you can do this by inspecting the code manually, using the toolbar is much faster.
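For a sense of what “inspecting the code manually” involves, here’s a minimal Python sketch of one part of that check: looking for a `noindex` (or `none`) directive in the page’s robots meta tags or its `X-Robots-Tag` header. It’s a simplification under stated assumptions: it ignores robots.txt, canonicals, and user-agent-specific header values like `googlebot: noindex`, and the class and function names are hypothetical, not how the toolbar works:

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attrs = {name: (value or "") for name, value in attrs}
        if attrs.get("name", "").lower() in ("robots", "googlebot"):
            content = attrs.get("content", "")
            self.directives.extend(d.strip().lower() for d in content.split(","))


def is_noindexed(html, x_robots_tag=""):
    """Return True if meta robots tags or the X-Robots-Tag header block indexing."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header = [d.strip().lower() for d in x_robots_tag.split(",")]
    blocking = {"noindex", "none"}  # "none" is shorthand for noindex, nofollow
    return any(d in blocking for d in parser.directives + header)


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(is_noindexed(page))  # True
```

Even this partial check shows why a one-click tool is faster than reading the source page by page.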

Google Analytics

Price: Free

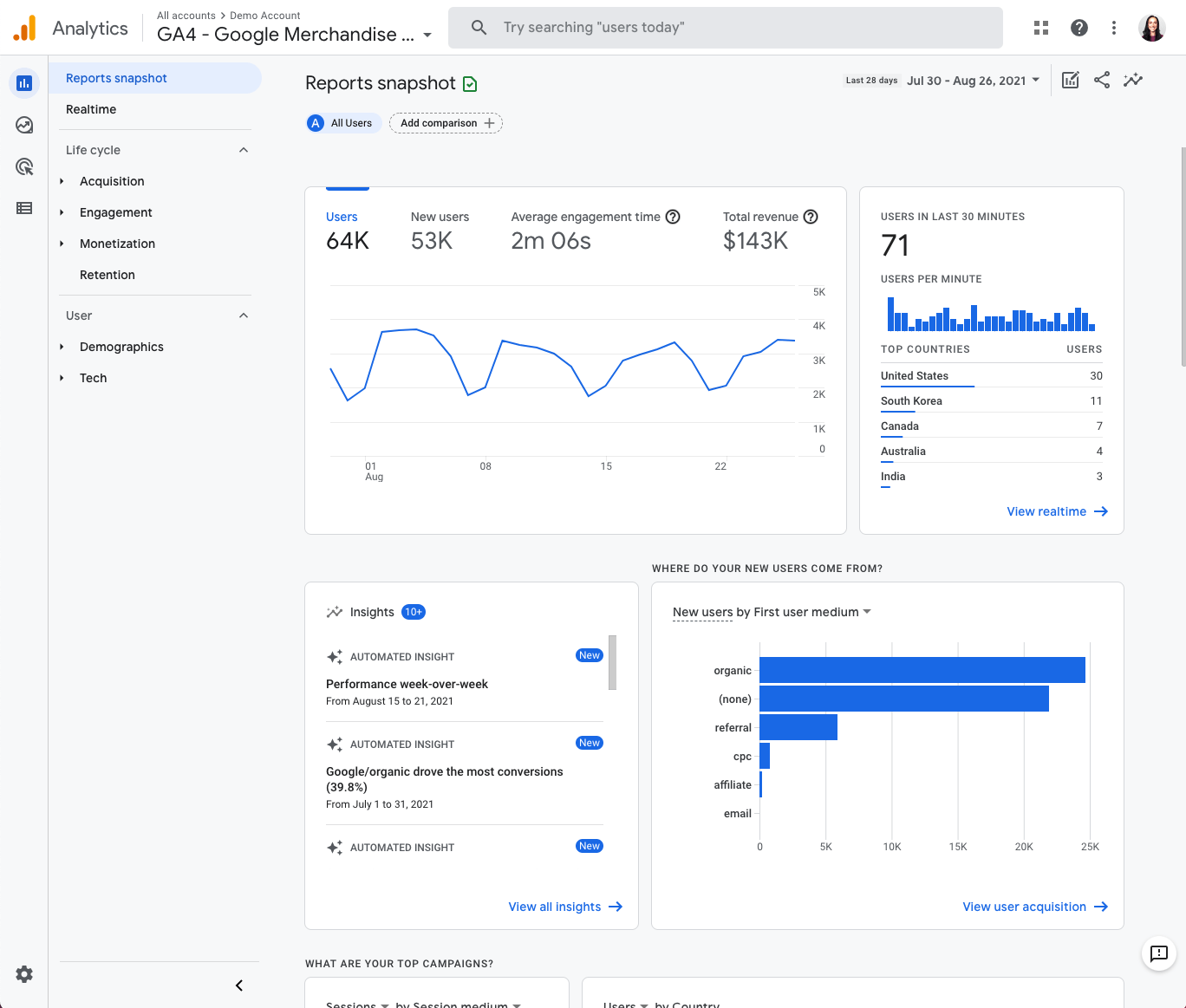

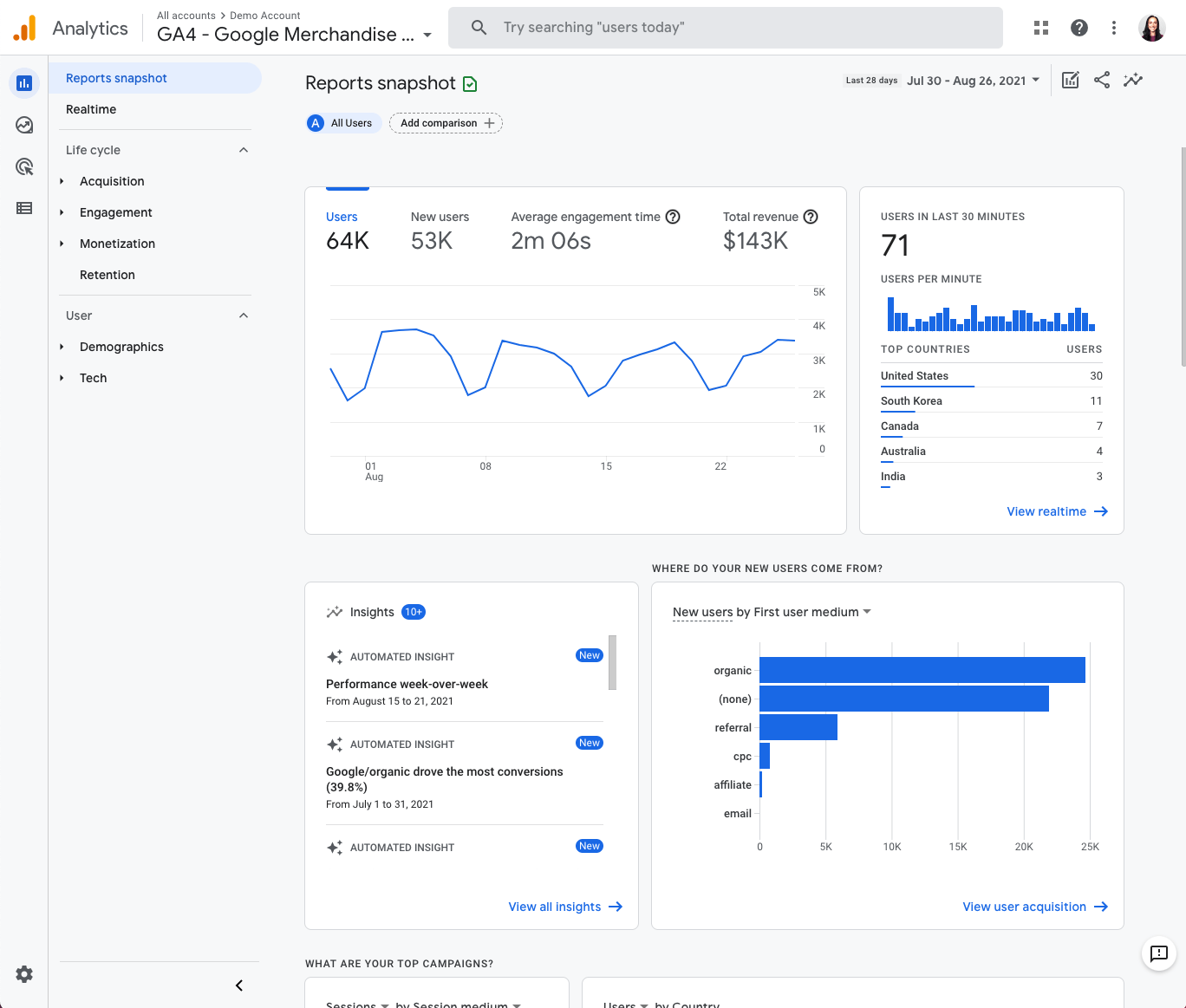

Like GSC, Google Analytics (GA) lets you track your website’s performance, including sessions, conversions, and much more.

Most common reporting use case

GA gives you a complete view of website traffic across different sources, such as direct, social, organic search, paid, and more.
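A common way to summarize that channel view in a report is each channel’s share of total sessions. A minimal sketch, assuming you’ve already exported session counts per channel; the channel names and numbers below are hypothetical placeholders, and so is the function name:

```python
def channel_shares(sessions_by_channel):
    """Return each channel's share of total sessions as a percentage."""
    total = sum(sessions_by_channel.values())
    if total == 0:
        return {channel: 0.0 for channel in sessions_by_channel}
    return {
        channel: sessions * 100 / total
        for channel, sessions in sessions_by_channel.items()
    }


sessions = {  # hypothetical session counts
    "Organic Search": 5000,
    "Direct": 3000,
    "Paid Search": 1500,
    "Organic Social": 500,
}
# Print channels from largest to smallest share
for channel, share in sorted(channel_shares(sessions).items(), key=lambda kv: -kv[1]):
    print(f"{channel}: {share:.1f}%")
```

Reporting shares alongside raw sessions makes it clear whether organic growth is real or just part of a site-wide traffic change.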

Favorite feature

You can create and track up to 300 events and 30 conversions with GA4. Previously, with Universal Analytics, you could only track 20 conversions. This makes conversion and event tracking easier in GA4.

Google Slides

Price: Free

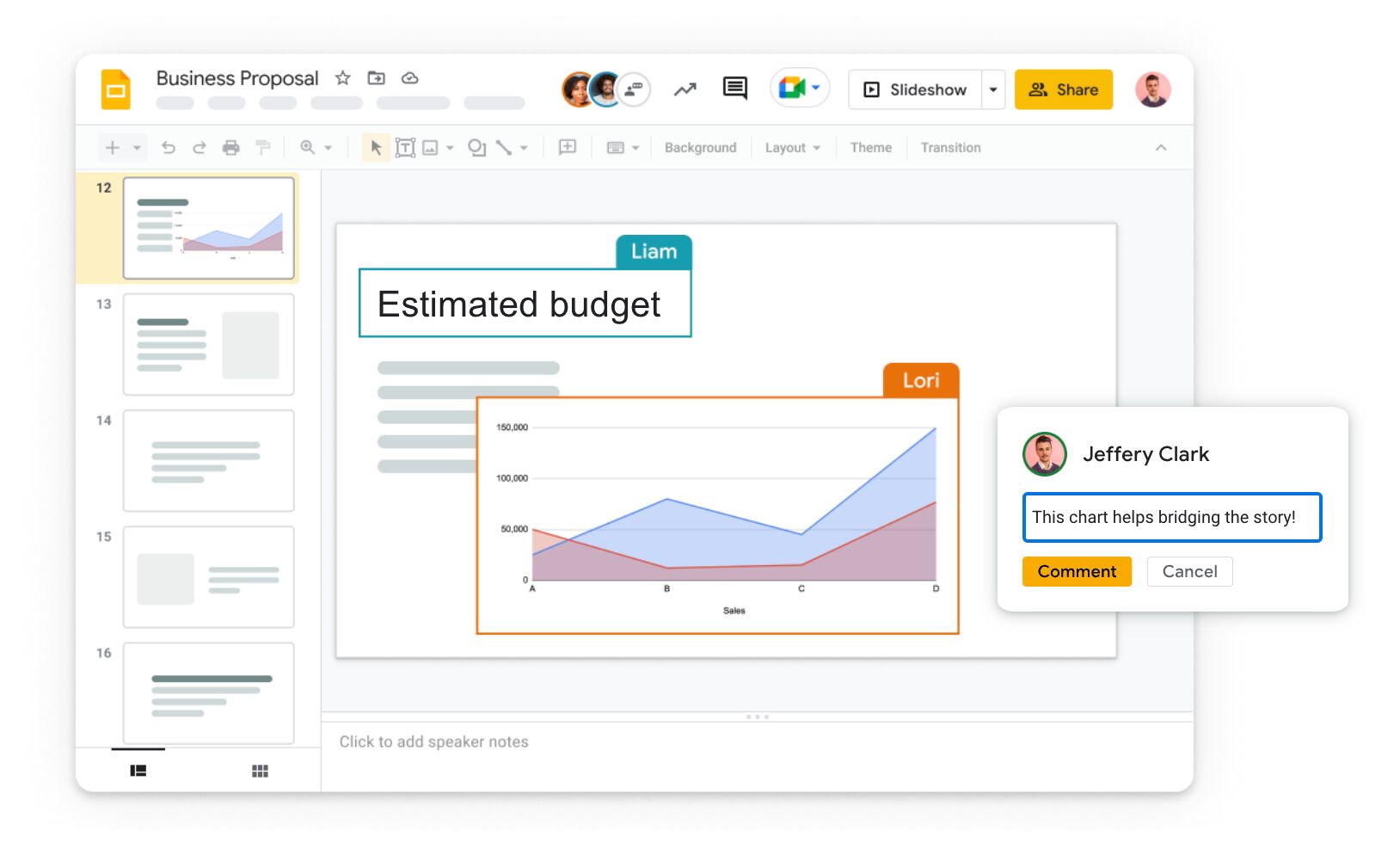

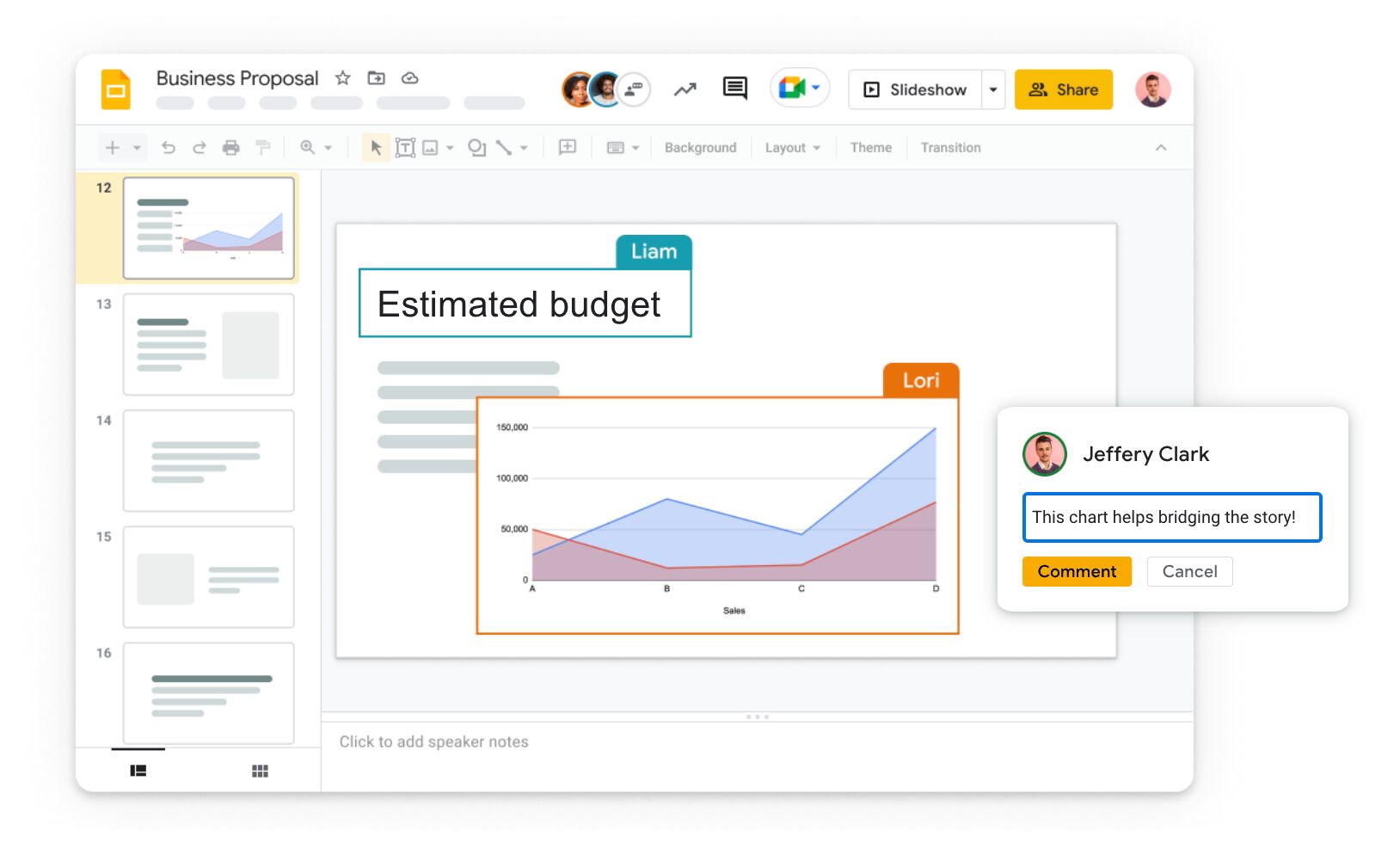

Google Slides is Google’s version of Microsoft PowerPoint. If you don’t have a dashboard set up to report on your SEO performance, the next best thing is to assemble a slide deck.

Many SEO agencies present their reports through dashboards and PowerPoint presentations. However, if you don’t have access to PowerPoint, Google Slides is an excellent (free) alternative.

Most common reporting use cases

The most common use of Google Slides is to create a monthly SEO report. If you don’t know what to include in a monthly report, use our SEO report template.

Favorite feature

One of my favorite features is the ability to share your presentation in a video call directly from Google Slides. You can do this by clicking the camera icon in the top right.

This is useful if you’re working with remote clients, since it makes sharing your reports easy.

Google Trends

Price: Free

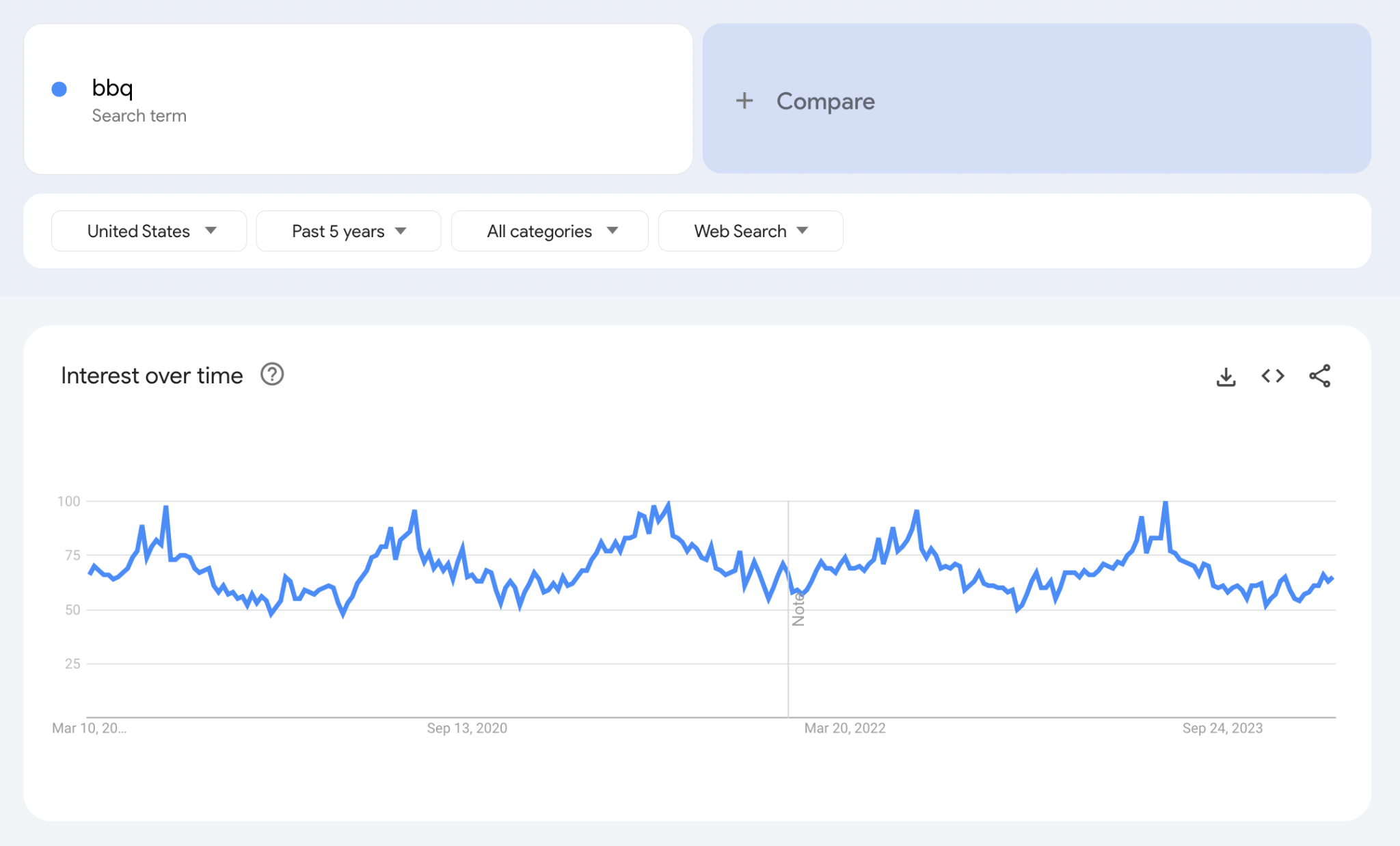

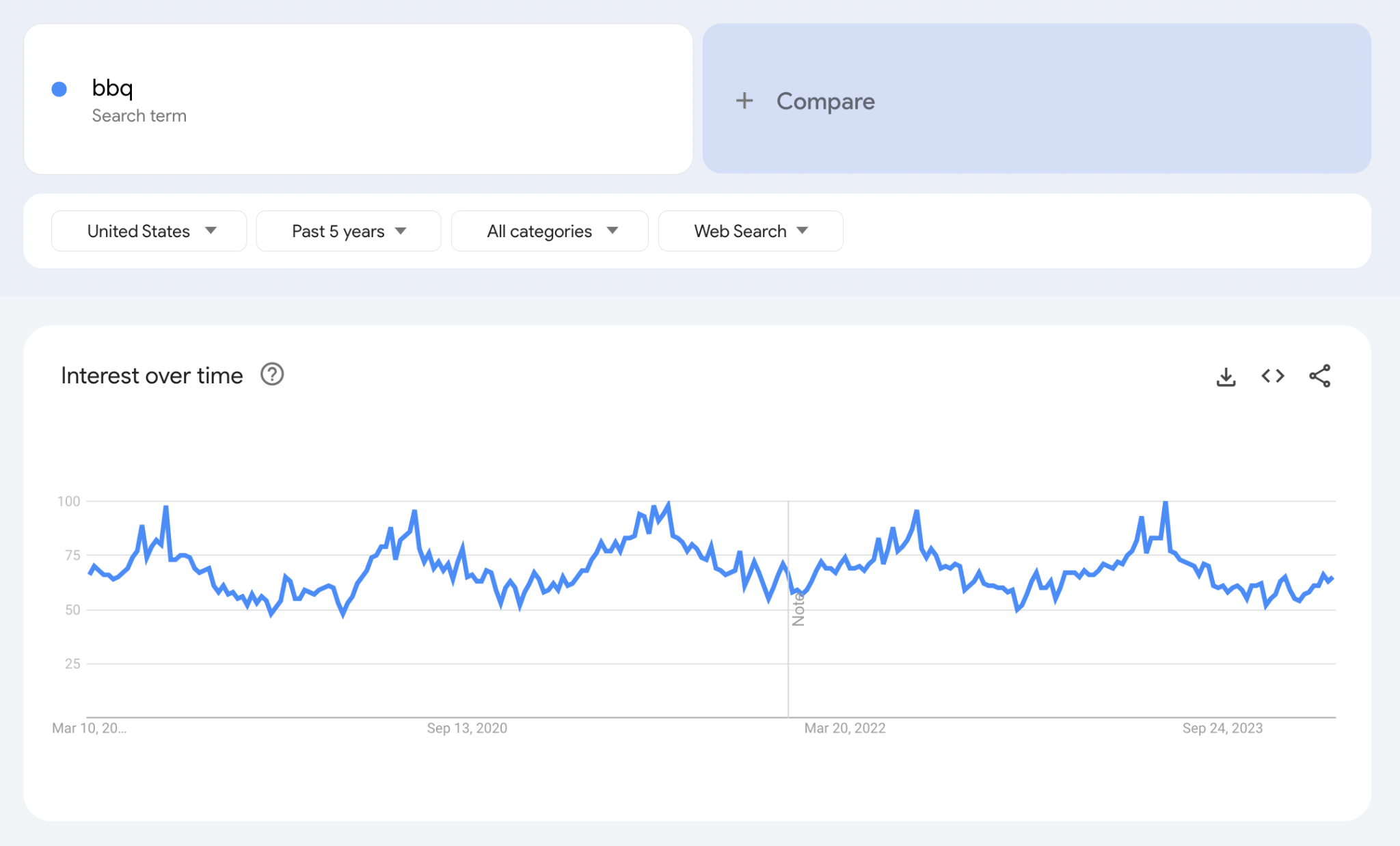

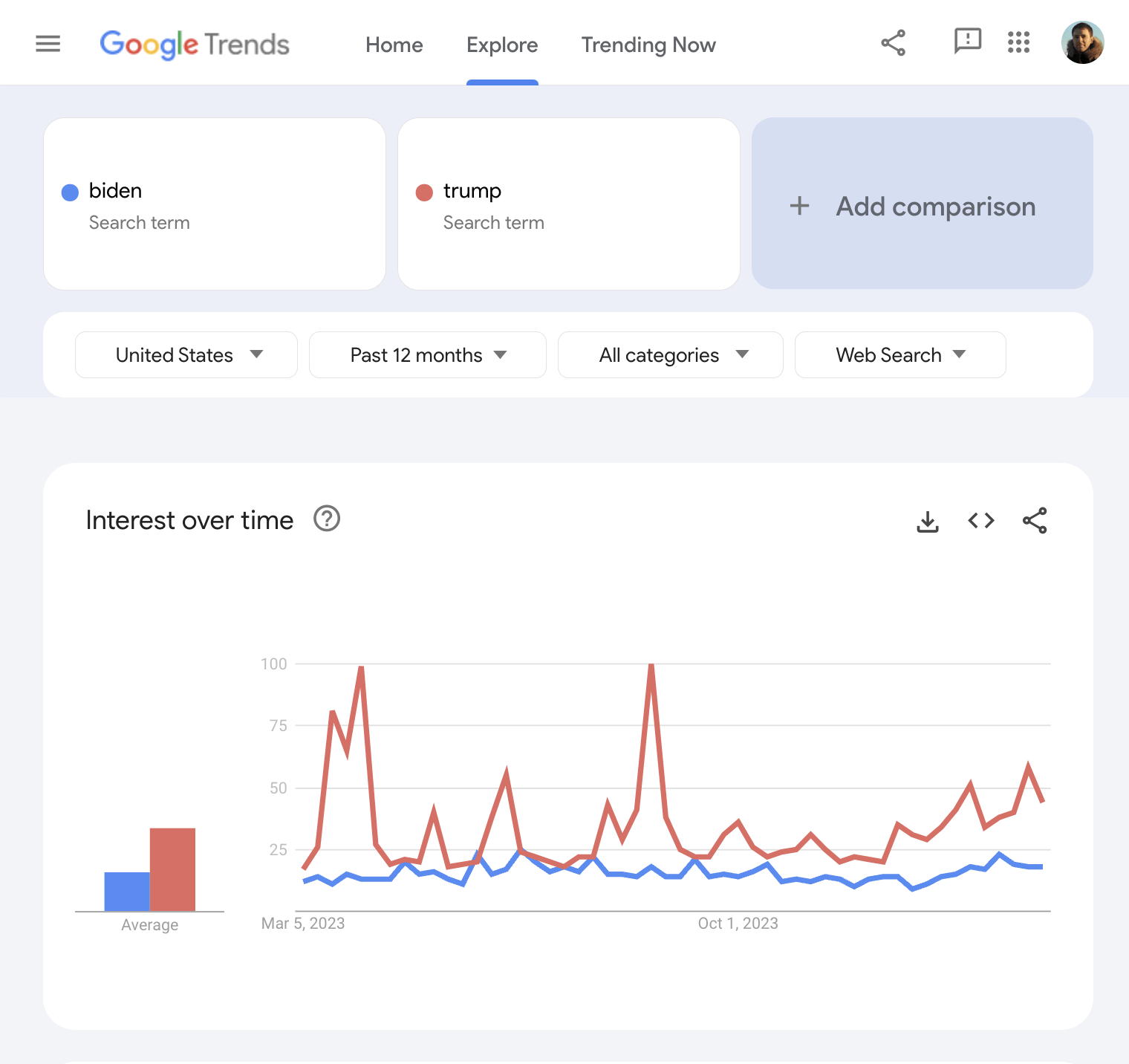

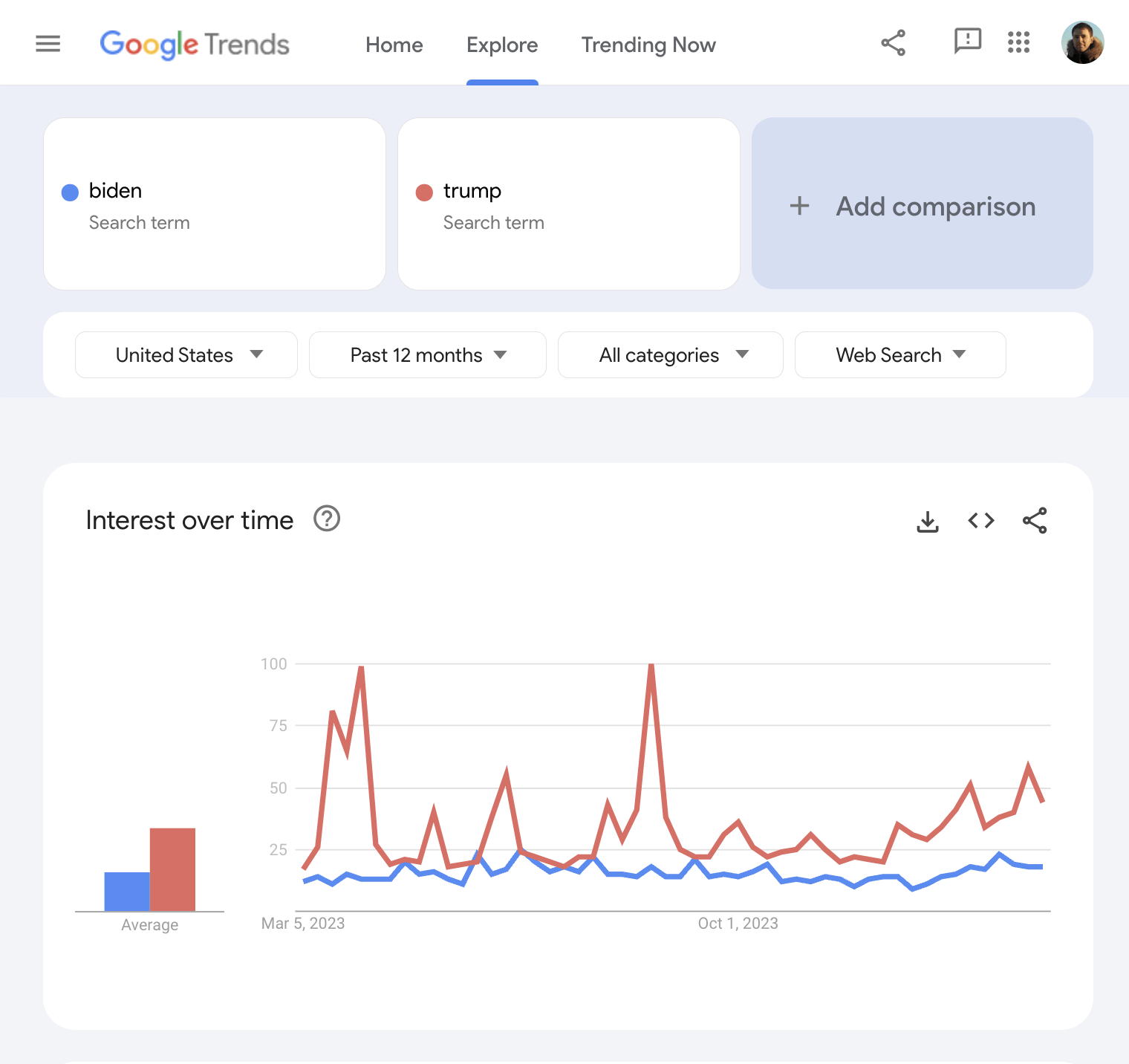

Google Trends lets you view a keyword’s popularity over time in any country. The data shows relative popularity on a scale of 0 to 100, not the absolute volume of search queries.
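To see why the 0 to 100 scale says nothing about absolute volume, here’s a sketch of the final scaling step: the highest point in the chosen time range and location becomes 100, and every other point is expressed relative to it. This is an approximation under stated assumptions; it starts from values you’d already have (which Trends doesn’t expose), skips Google’s sampling and normalization by total searches, and the function name is hypothetical:

```python
def scale_to_100(values):
    """Scale a series so its peak becomes 100, rounded to whole numbers."""
    peak = max(values)
    if peak == 0:
        return [0 for _ in values]  # no interest at all in the range
    return [round(v * 100 / peak) for v in values]


# Two series with very different absolute sizes...
print(scale_to_100([10, 20, 40]))        # [25, 50, 100]
print(scale_to_100([1000, 2000, 4000]))  # [25, 50, 100]
# ...produce identical Trends-style curves.
```

That’s why Trends data works best in a report for showing shape and timing, with keyword tools supplying actual volumes.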

Most common reporting use cases

Google Trends is useful for showing how the popularity of certain searches can increase or decrease over time. If you work with a website that often has trending products, services, or news, it can be useful to illustrate this visually in your SEO report.

Google Trends makes it easy to spot seasonal trends for product categories. For example, people want to buy BBQs when the weather is sunny.

Using Google Trends, we can see that peak demand for BBQs usually happens in June-July every year.

With data from the last five years, we can be fairly confident about when BBQ season starts and ends.
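If you export several years of monthly interest, you can check the seasonal pattern programmatically: find each year’s peak month, then see which month peaks most often. A minimal sketch, assuming you’ve already parsed the data into a `{"YYYY-MM": interest}` dict; the function names and test values are hypothetical, not real BBQ data:

```python
from collections import Counter


def peak_months(monthly_interest):
    """Return {year: peak month} from {"YYYY-MM": interest} data."""
    best = {}
    for key, value in monthly_interest.items():
        year, month = (int(part) for part in key.split("-"))
        # Keep the first month seen if two months tie for a year's peak
        if year not in best or value > best[year][1]:
            best[year] = (month, value)
    return {year: month for year, (month, _) in best.items()}


def typical_peak(monthly_interest):
    """Return the month that is most often the yearly peak."""
    counts = Counter(peak_months(monthly_interest).values())
    return counts.most_common(1)[0][0]


data = {"2022-06": 90, "2022-07": 100, "2023-06": 100, "2023-07": 95, "2024-07": 100}
print(peak_months(data))
print(typical_peak(data))
```

Looking at the peak month for each year, rather than only an average, also shows how consistent the season is.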

Favorite feature

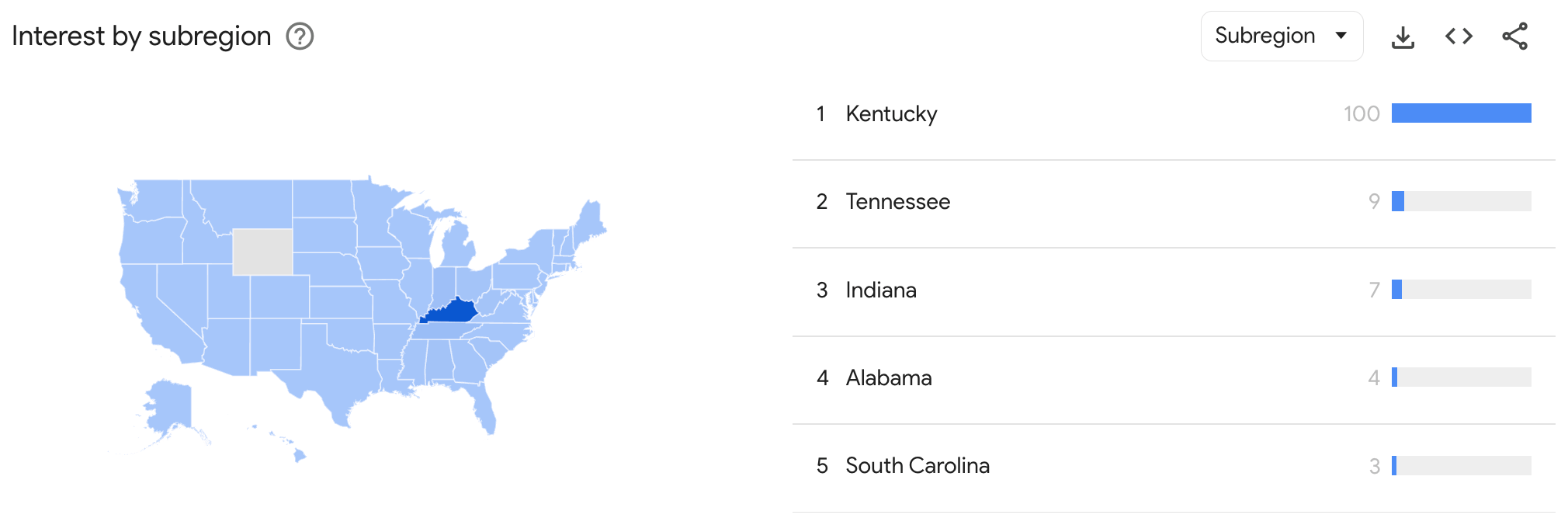

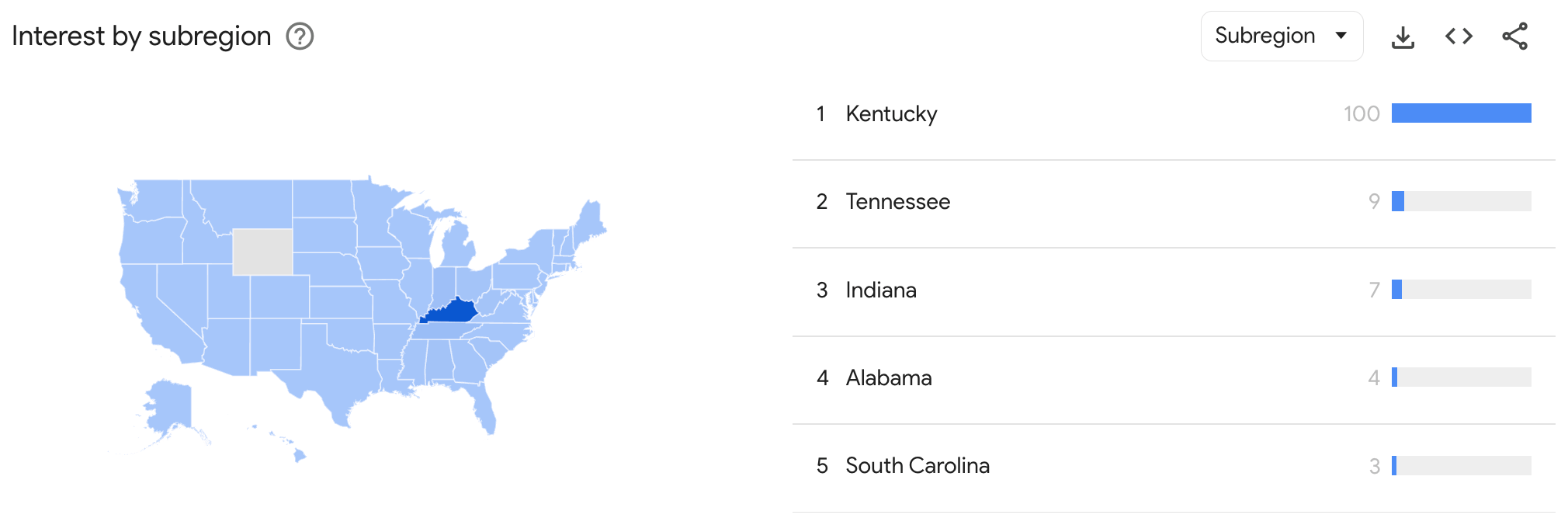

Comparing two or more search terms against each other over time is one of my favorite uses of Google Trends, since the comparison often tells its own story.

Adding Trends data to your report gives you further insight into market trends.

You can even dig into trends at a regional level if you need to.

Final thoughts

These free tools will help you put together the foundations for a well-rounded SEO report.

The tools you use for SEO reporting don’t have to be expensive. Even large companies use many of the free tools mentioned here to create insights for their clients’ SEO reports.

Got more questions? Ping me on X 🙂