Pinterest Provides New Overview of its Evolving Scam Detection and Deactivation Processes

With malicious actors increasingly using social media platforms to spread misinformation, run phishing scams and pursue other nefarious ends, it’s important that every platform keeps evolving its detection processes, both to protect users from exploitation and to keep their feeds free of unwanted distractions, which in turn helps to maximize engagement.

This week, Pinterest provided an overview of its evolving efforts to detect and remove spam and malicious content. The overview details how it detects problematic Pins at the domain level, based on the websites that scammers and spammers are trying to send Pinners to when they click through from on-platform content.

As explained by Pinterest:

“One tactic malicious actors enact is misusing a Pin’s image and linking to a malicious external website. Our models detect spam vectors, like Pin links, as well as users engaging in spammy behaviors. We quickly limit distribution of Pins with spam links and take direct action against users identified with a high confidence to be engaging in spammy behavior.”

Pinterest’s visual nature, along with its increasing focus on shopping, which has changed how people use the app, makes it a less receptive platform for straight spam messaging. Even so, as Pinterest notes, scammers will still try to lure Pinners to their websites via misleading means.

That’s why Pinterest’s domain-level approach is a key deterrent. By focusing on detecting scam websites, Pinterest can systematically eliminate all links to each site, quickly deactivating those links and protecting users.

“We perform a manual review for those identified with low confidence to limit false positives, and we notify users of our actions to maintain transparency and also provide an option of appeal against our decision. To maximize impact, our model learns to classify a domain as spam rather than a link. We apply the same enforcement to all Pins with links belonging to the same domain.”

This is a good approach. Not all platforms apply the same domain-level strategy, but in Pinterest’s case, it’s an effective way to respond to such threats more rapidly and provide more protection for users.
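To make the domain-level idea concrete, here is a minimal Python sketch of how one spam score per domain could drive the same enforcement for every Pin that links to that domain. It is an illustration only, not Pinterest’s implementation: the `SPAM_THRESHOLD` value, the action names and the `enforce_by_domain` helper are all assumptions, and the domain scores are presumed to come from some upstream model.

```python
from urllib.parse import urlparse

# Hypothetical cut-off; the real threshold is not given in Pinterest's overview.
SPAM_THRESHOLD = 0.9


def link_domain(url):
    """Reduce a Pin link to a bare host name, dropping a leading 'www.'."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def enforce_by_domain(pins, domain_scores):
    """Map each Pin id to an action based on the score of its link's domain.

    pins: list of dicts with 'id' and 'link' keys (hypothetical shape).
    domain_scores: dict of domain -> spam score in [0, 1], assumed to be
    produced by an upstream domain classifier.
    """
    actions = {}
    for pin in pins:
        score = domain_scores.get(link_domain(pin["link"]), 0.0)
        # Every Pin that points at the same domain receives the same action.
        if score >= SPAM_THRESHOLD:
            actions[pin["id"]] = "limit_distribution"
        else:
            actions[pin["id"]] = "none"
    return actions


pins = [
    {"id": 1, "link": "https://www.bad.example/offer"},
    {"id": 2, "link": "http://bad.example/other?x=1"},
    {"id": 3, "link": "https://good.example/recipe"},
]
scores = {"bad.example": 0.97, "good.example": 0.02}
print(enforce_by_domain(pins, scores))
```

Because the decision is keyed on the domain rather than the individual link, Pins 1 and 2 are handled together even though their URLs differ, which is the efficiency gain the domain-level approach is meant to deliver.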

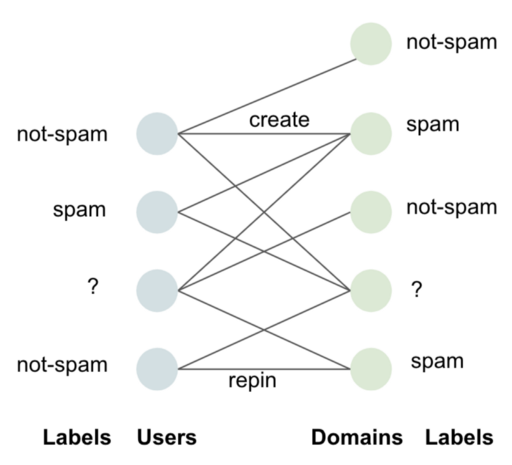

Pinterest also utilizes a similar process in detecting problematic individuals.

“We use features created from user attributes and their past behaviors as inputs. We also use user-domain interaction, summarized as a domain scores distribution for each user where domain scores are reused from the spam domain model, as an input.”

So again, Pinterest uses broader, domain-level detection to weed out potentially problematic individuals, then marks them for potential enforcement.
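As a rough illustration of the “domain scores distribution” input Pinterest mentions, the Python sketch below turns the spam scores of the domains a user has linked to into a small normalized histogram that could be fed to a user-level model. The bin count, the function name and the data shapes are assumptions made for this example, not details from Pinterest’s overview.

```python
def domain_score_distribution(user_domains, domain_scores, bins=4):
    """Summarize a user's linked domains as a normalized histogram of scores.

    user_domains: domains the user has linked to (hypothetical input).
    domain_scores: dict of domain -> spam score in [0, 1], reused from a
    domain-level model.
    bins: number of equal-width score buckets (an arbitrary choice here).
    """
    counts = [0] * bins
    for domain in user_domains:
        score = domain_scores.get(domain)
        if score is None:
            continue  # skip domains the domain model has not scored
        # A score of exactly 1.0 falls into the last bucket.
        index = min(int(score * bins), bins - 1)
        counts[index] += 1
    total = sum(counts)
    if total == 0:
        return [0.0] * bins
    return [count / total for count in counts]


scores = {"a.example": 0.95, "b.example": 0.9, "c.example": 0.1, "d.example": 0.6}
user_links = ["a.example", "b.example", "c.example", "d.example"]
print(domain_score_distribution(user_links, scores))  # [0.25, 0.0, 0.25, 0.5]
```

A user whose histogram is weighted heavily toward the highest bucket has mostly linked to domains that already score as spam, which is the kind of signal a user-level classifier could pick up alongside account attributes and past behavior.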

“We cluster users on attributes which can successfully isolate suspicious groups with high accuracy. Experts identify these attributes by exploring the behavior of suspicious users and their use of resources for creating spammy content.”
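Pinterest does not say which clustering method or attributes it uses, so the Python sketch below substitutes the simplest possible stand-in: grouping users whose expert-chosen attributes match exactly, then keeping only groups large enough to look coordinated. The attribute names (`email_domain`, `signup_hour`) and the `min_size` cut-off are hypothetical.

```python
from collections import defaultdict


def cluster_by_attributes(users, attributes, min_size=3):
    """Group users that share the same values for the chosen attributes.

    users: list of dicts, each with an 'id' plus attribute fields
    (hypothetical shape).
    attributes: attribute names picked by a reviewer as good at isolating
    suspicious groups.
    min_size: smallest group worth surfacing (an arbitrary choice here).
    """
    groups = defaultdict(list)
    for user in users:
        key = tuple(user.get(name) for name in attributes)
        groups[key].append(user["id"])
    # Keep only the groups large enough to suggest coordinated activity.
    return {key: ids for key, ids in groups.items() if len(ids) >= min_size}


users = [
    {"id": 1, "email_domain": "mail.example", "signup_hour": 3},
    {"id": 2, "email_domain": "mail.example", "signup_hour": 3},
    {"id": 3, "email_domain": "mail.example", "signup_hour": 3},
    {"id": 4, "email_domain": "other.example", "signup_hour": 14},
]
print(cluster_by_attributes(users, ["email_domain", "signup_hour"]))
```

Exact-match grouping is far cruder than a production clustering system would be, but it shows the basic idea: once experts have found attributes that separate suspicious accounts from ordinary ones, accounts sharing those attributes can be reviewed or actioned as a group.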

Through these expanded detection systems, Pinterest can take a more wide-reaching, blanket approach to eliminating spammy behavior, stamping out spam campaigns before they can have any real impact, which better protects both users and the user experience.

It seems like an effective approach to stopping Pin misuse for such activity, with broad-reaching enforcement by domain facilitating faster shutdowns of scam networks.

Of course, no process is perfect, and there are still examples of Pins being used to spread misinformation and other scams. But this system makes it much more difficult for scammers to operate, by quickly deactivating large swathes of Pins referring to problematic domains in one step.

You can read more about Pinterest’s evolving spam detection processes here.
