
How to Get on the First Page of Google in 2023

If you rank on page two of Google or beyond, you’re practically invisible.

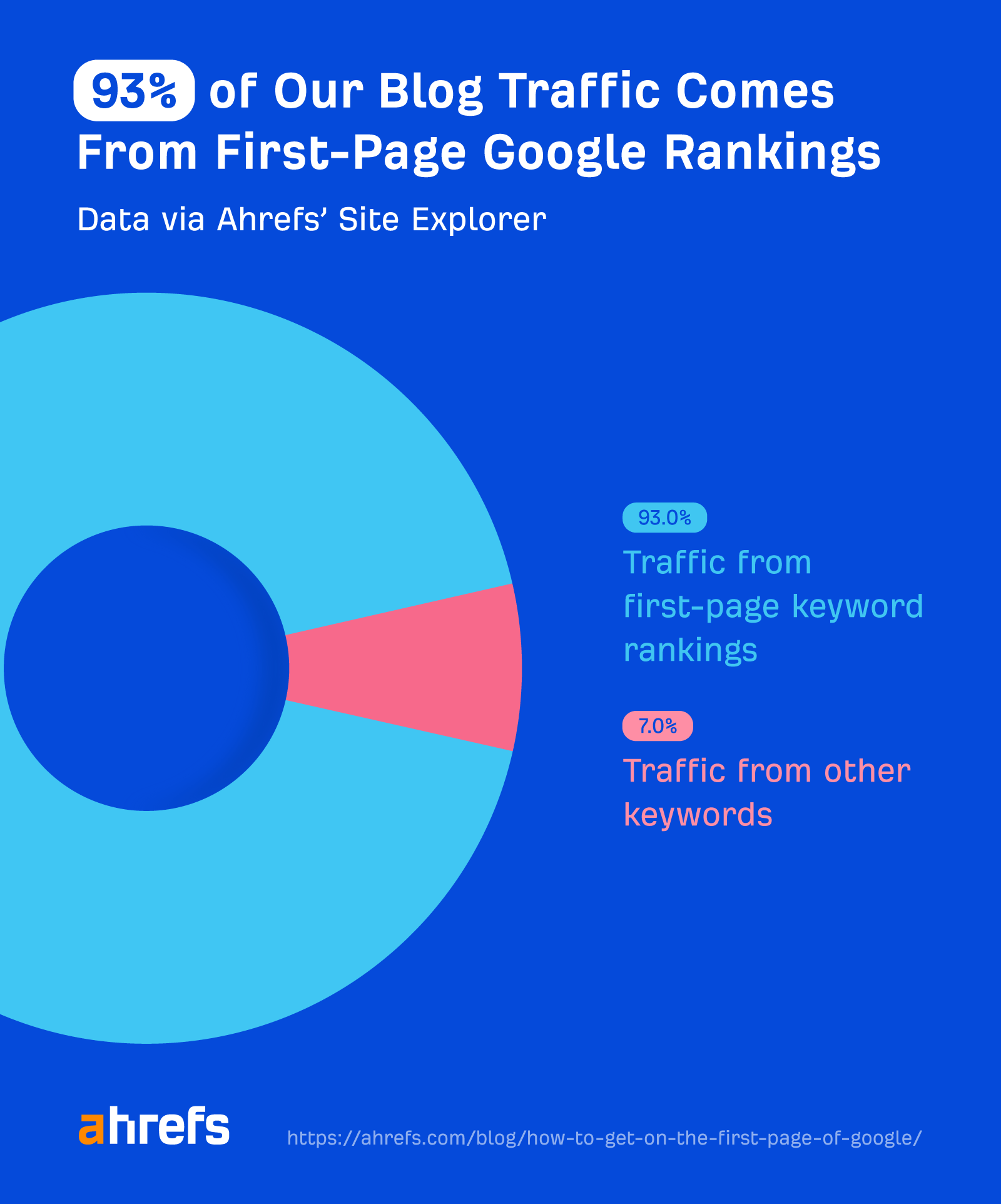

In fact, almost all of the traffic to our blog comes from first-page Google rankings:

Unfortunately, nobody can guarantee first-page Google rankings. But you can improve your chances of getting them by following a logical process.

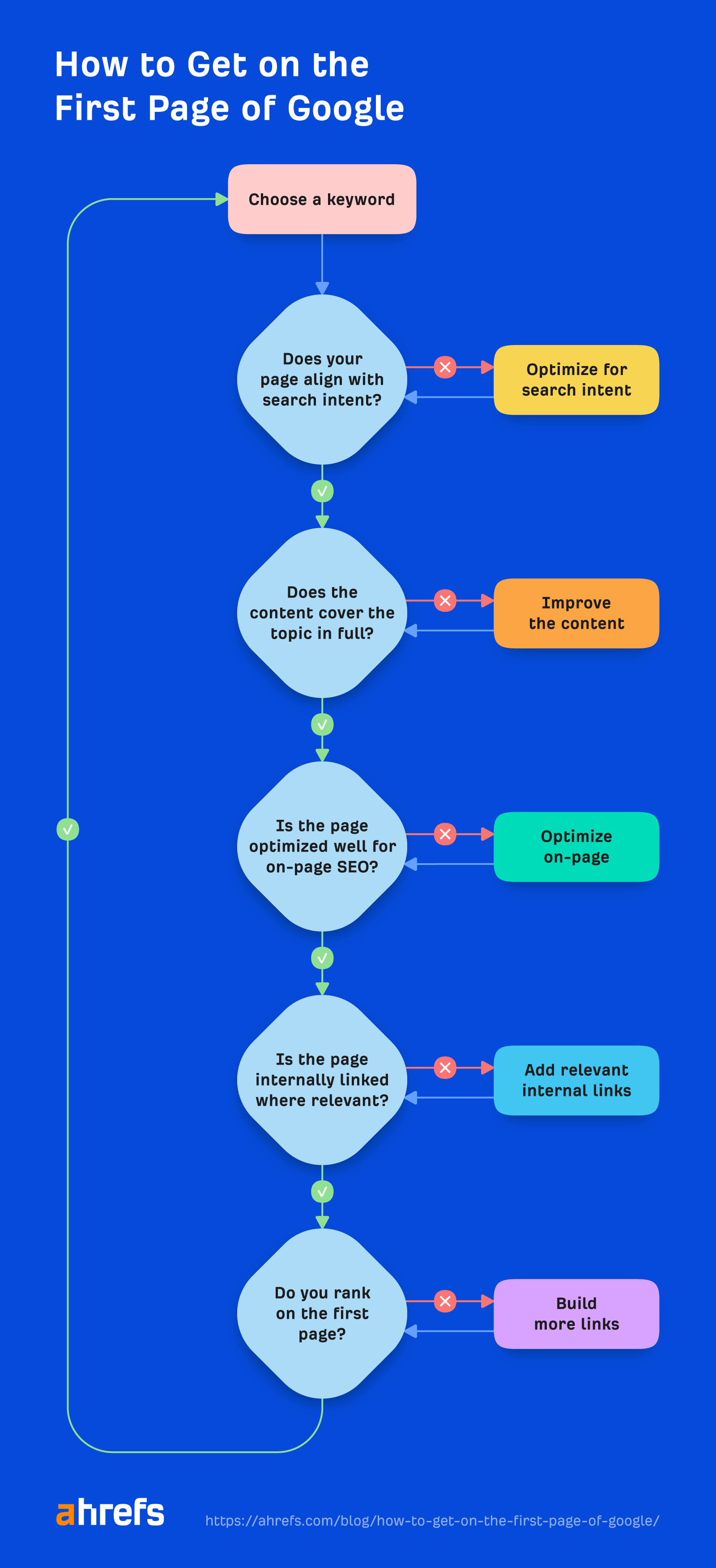

Here it is:

Let’s go through it step by step.

Sidenote.

If you run a local business, read our guide to local SEO instead, as there are two main ways to rank on the first page for local searches.

Google wants to rank the type of pages that searchers are looking for. Unless your page aligns with the searcher’s intent, it’ll be near impossible to rank on the first page.

Unfortunately, it’s impossible to say for sure what searchers want. But as the point of Google is to rank the most relevant results, you can get a good idea by looking for the most common type, format, and angle of the pages ranking on page one.

Content type

The results you see ranking on the first page will usually be one of these:

- Blog posts

- Interactive tools

- Videos

- Product pages

- Category pages

For example, all first-page results for “days between dates” are interactive calculators:

For “sweaters,” they’re all e-commerce category pages:

Content format

This applies mainly to blog posts. If blog posts dominate the first page, check which of these formats appears most often:

- Step-by-step tutorials (i.e., how to do x)

- Listicles

- Opinion pieces

- Reviews

- Comparisons (e.g., x vs. y)

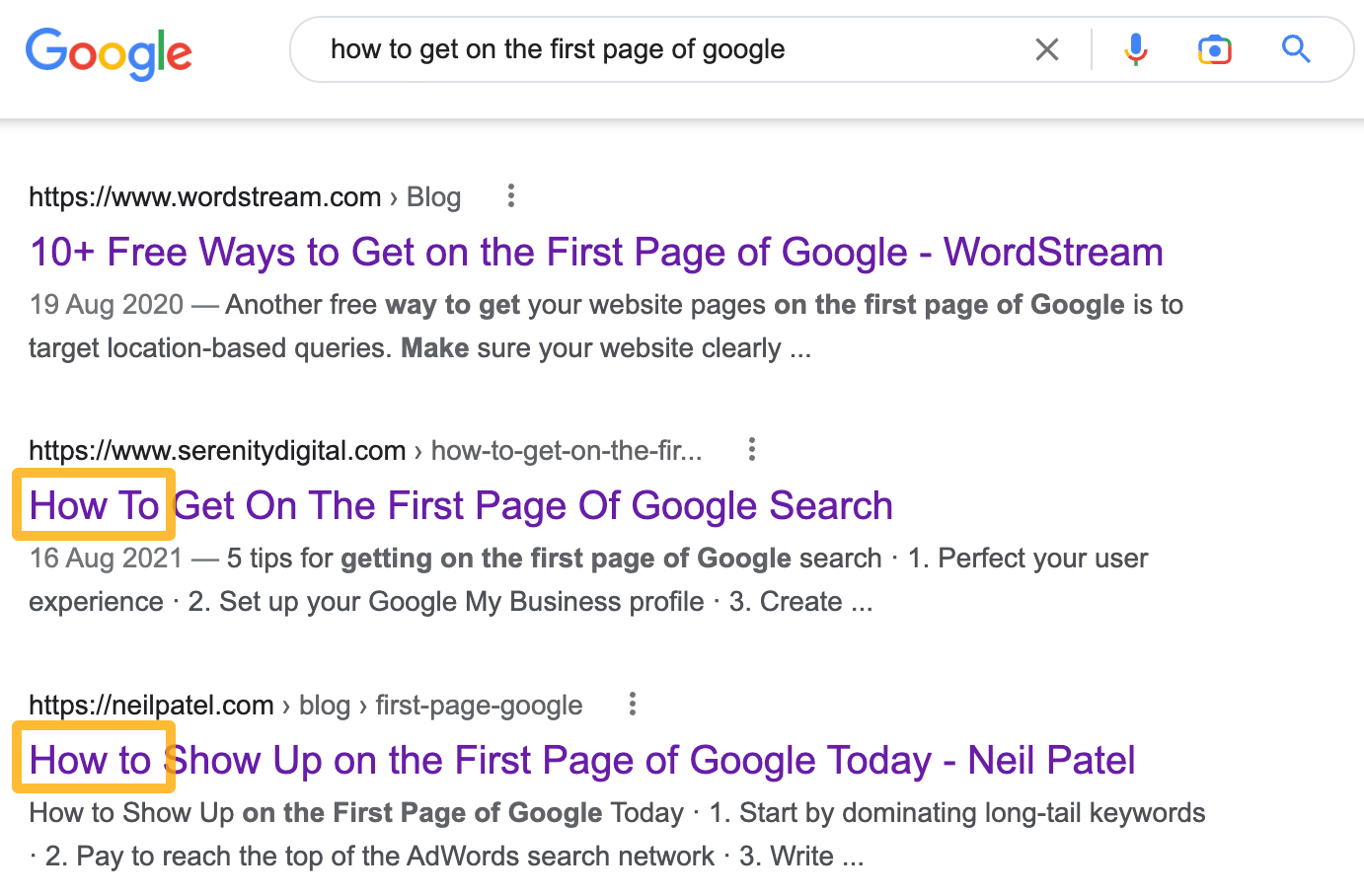

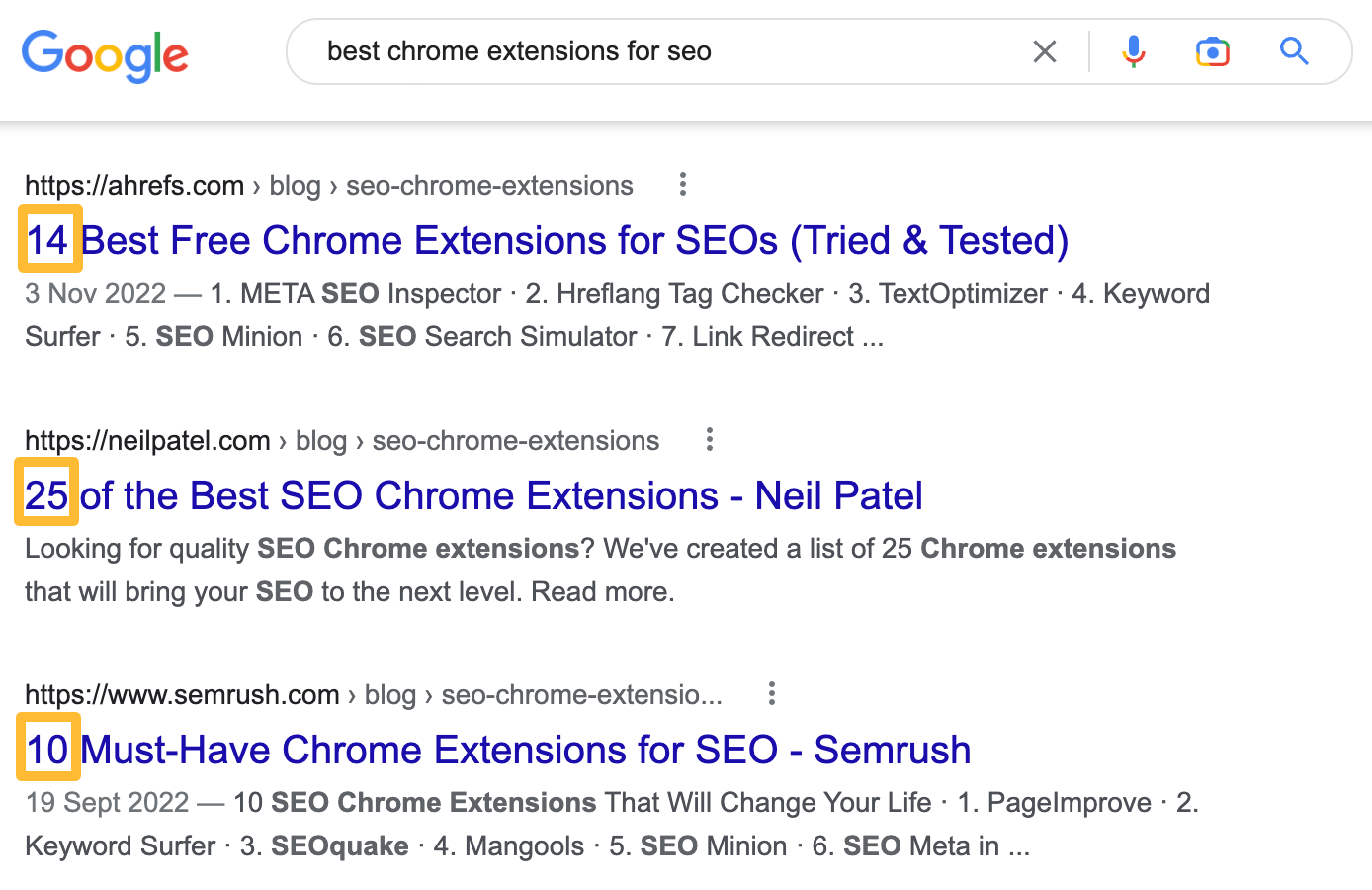

For example, you can tell that most results for “how to get on the first page of google” are step-by-step tutorials from the page titles:

For “best chrome extensions for seo,” on the other hand, they’re mostly listicles:

Content angle

This is harder to quantify than type and format, but it’s basically the unique selling proposition that the top-ranking pages share most often.

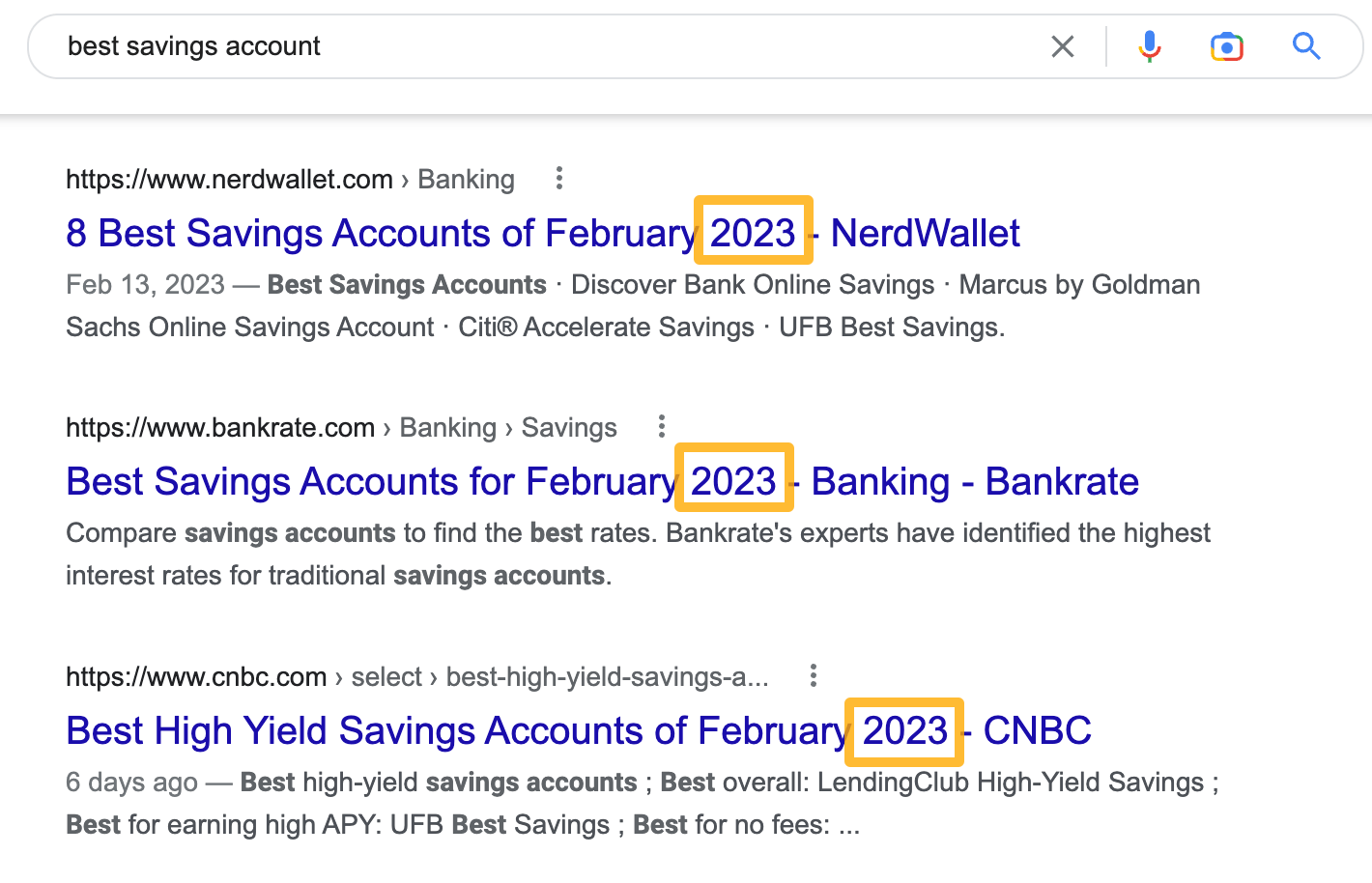

For example, almost all first-page results for “best savings account” have 2023 in their titles:

This indicates that searchers are looking for fresh information.

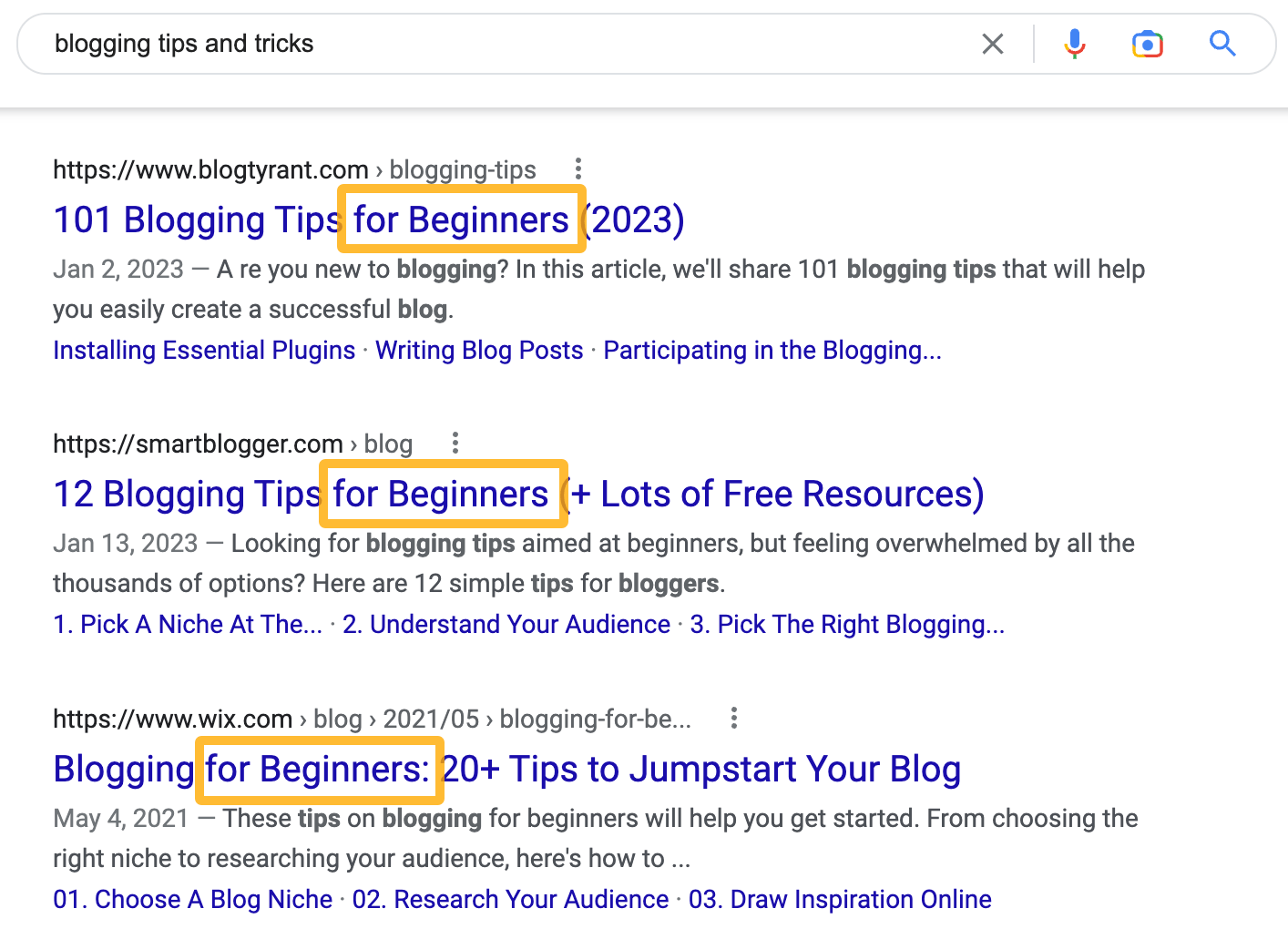

On the other hand, most first-page results for “blogging tips and tricks” are aimed at beginners:

Can’t align your page with search intent?

It’s best to switch gears and target a more relevant keyword. If you try to force an irrelevant page to rank, you’ll be fighting a losing battle.
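If you'd rather tally formats than eyeball them, the title check described above can be roughly sketched in code. This is a minimal sketch, not a definitive classifier: the patterns and the `guess_format` and `dominant_format` helpers are hypothetical, and you'd need to supply the first-page titles yourself.

```python
import re
from collections import Counter

# Hypothetical title patterns, checked in order (first match wins).
FORMAT_PATTERNS = {
    "tutorial": re.compile(r"\bhow to\b", re.I),
    "comparison": re.compile(r"\bvs\.?\b", re.I),
    "review": re.compile(r"\breview(s|ed)?\b", re.I),
    "listicle": re.compile(r"^\s*\d+\b|\b(best|top)\b", re.I),
}


def guess_format(title):
    """Guess a blog post's format from its title alone."""
    for name, pattern in FORMAT_PATTERNS.items():
        if pattern.search(title):
            return name
    return "other"


def dominant_format(titles):
    """Return the most common guessed format plus the full tally."""
    counts = Counter(guess_format(title) for title in titles)
    return counts.most_common(1)[0][0], counts
```

Titles won't always give the format away, so treat the result as a starting point and still open a few of the pages.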

Having content that broadly aligns with search intent isn’t enough. It also needs to cover everything searchers want to know or expect to see.

For example, every first-page result for “mens sneakers” has a size filter:

This is because searchers will inevitably want to filter for shoes that actually fit.

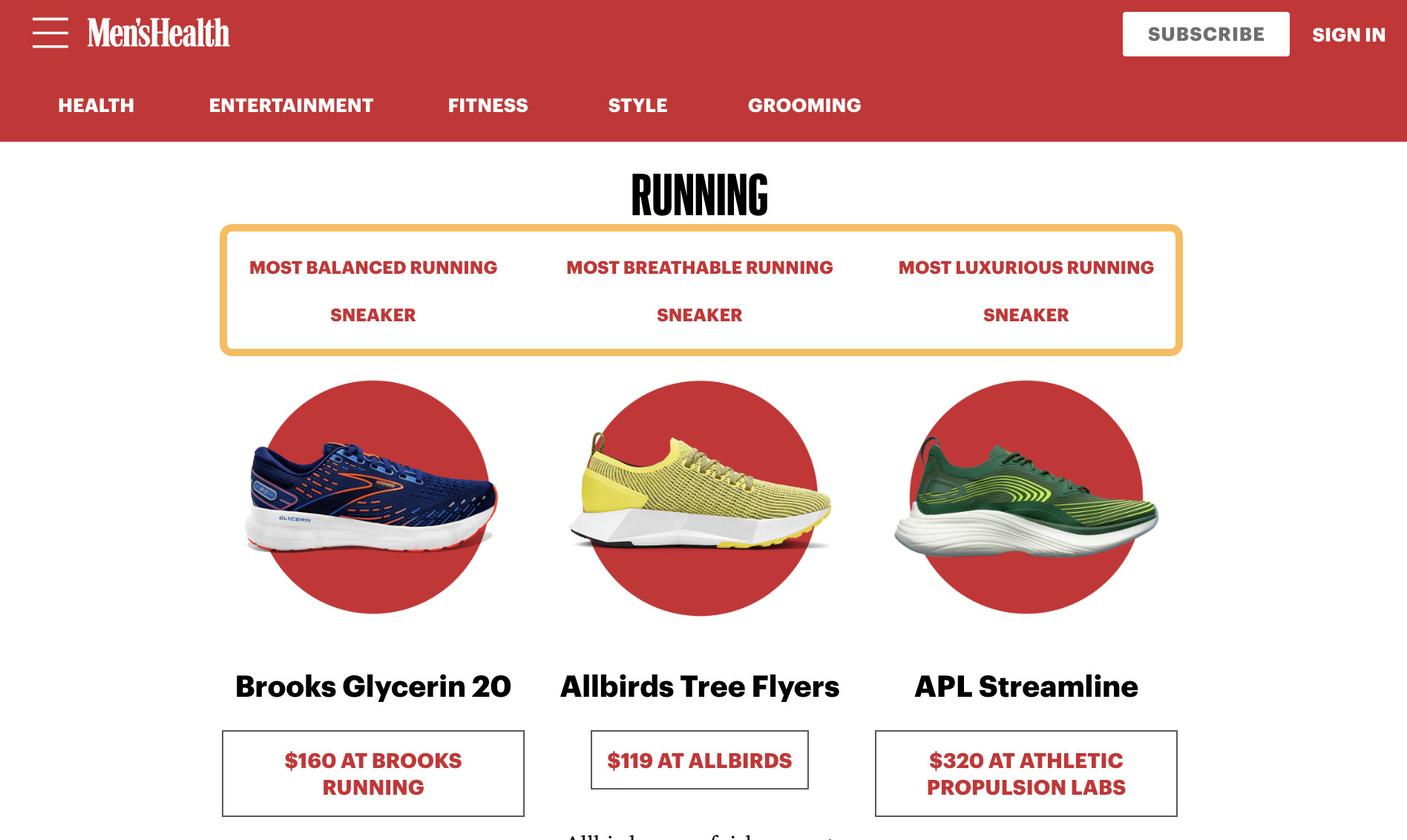

Similarly, all first-page results for “best mens sneakers” break down recommendations into categories like the best for walking, running, or cross training.

This is because the “best” sneakers depend on the activity you need them for.

Here are a few ways to find what searchers may be expecting to see covered on your page:

Look for commonalities among first-page results

This is a manual process where you open and eyeball the pages that rank.

For example, many first-page results for “best running shoes for flat feet” talk about the best budget option:

Look for common keyword mentions on first-page results

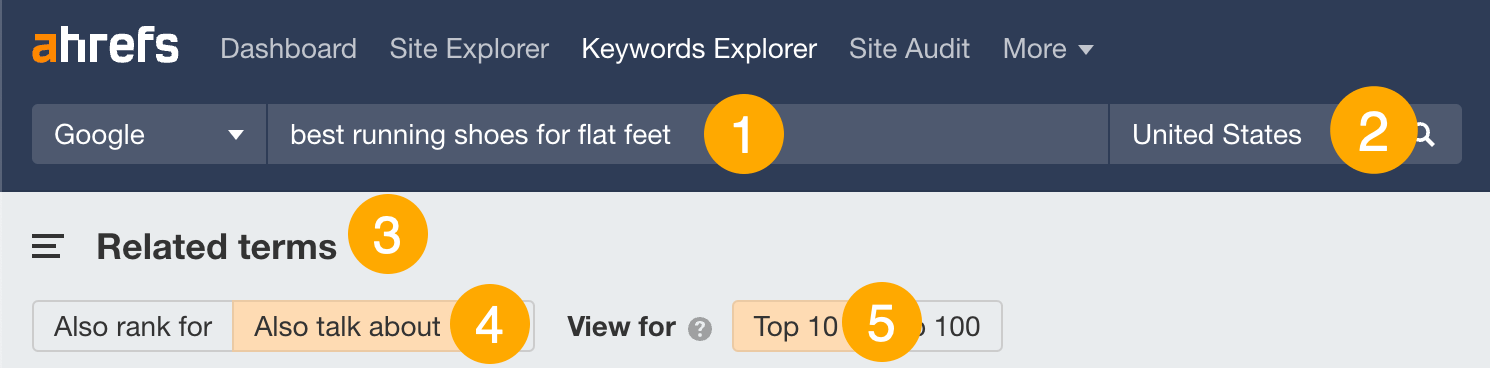

Here’s how to do this with Ahrefs’ Keywords Explorer:

- Enter your keyword

- Choose your target country

- Go to the Related terms report

- Toggle “Also talk about”

- Toggle “Top 10”

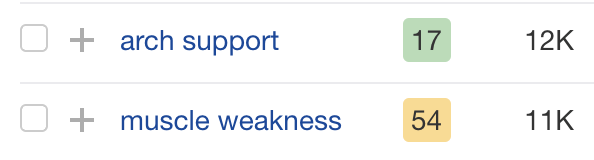

For example, many first-page results for “best running shoes for flat feet” mention “arch support” and “muscle weakness”:

These are obviously problems that folks with flat feet care about, so your content should address them.
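If you don't have access to the report, a very rough stand-in is to count how many top-ranking pages mention each phrase you suspect matters. The sketch below is an assumption-heavy simplification, not how Ahrefs calculates "Also talk about": the `common_mentions` helper, the candidate phrases, and the 50% threshold are all hypothetical, and you'd need to collect the page text yourself.

```python
from collections import Counter


def common_mentions(page_texts, candidate_terms, min_share=0.5):
    """Return the candidate terms mentioned on at least min_share of pages."""
    counts = Counter()
    for text in page_texts:
        lowered = text.lower()
        for term in candidate_terms:
            # Count each term at most once per page.
            if term.lower() in lowered:
                counts[term] += 1
    threshold = min_share * len(page_texts)
    return [term for term in candidate_terms if counts[term] >= threshold]
```

Plain substring matching will miss variations like plurals, so it works best for short, specific phrases.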

Look for common keyword rankings among first-page results

Here’s how to do this with Ahrefs’ Keywords Explorer:

- Enter your keyword

- Choose your target country

- Go to the Related terms report

- Toggle “Also rank for”

- Toggle “Top 10”

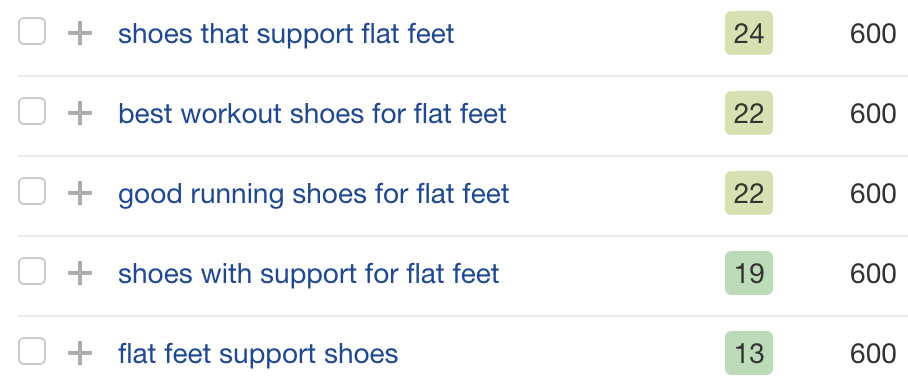

For example, the first-page results for “best running shoes for flat feet” frequently also rank for keywords related to support:

This is clearly an important quality that flat-footed searchers are looking for in a pair of running shoes.

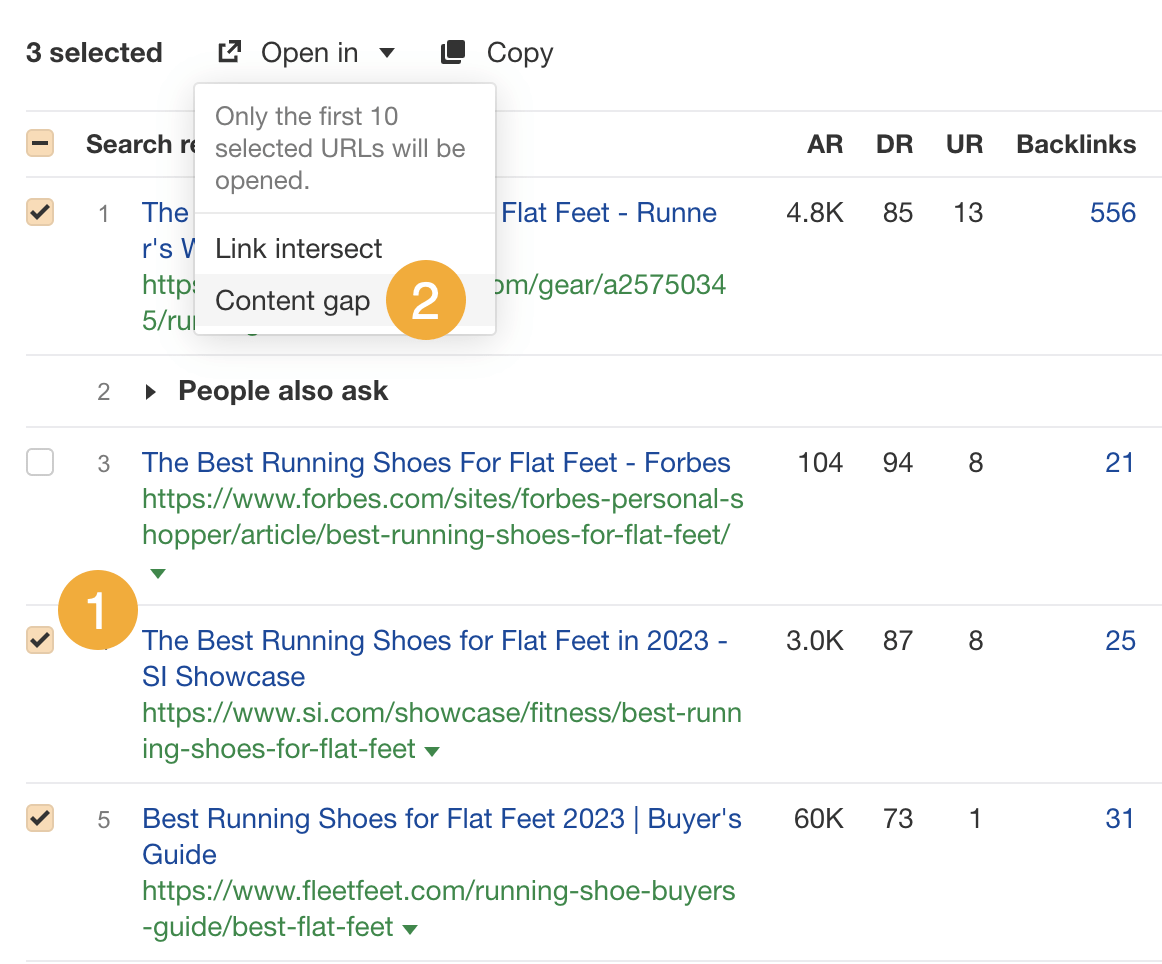

If you’d prefer to see common keyword rankings for specific top-ranking pages, use the Content Gap tool in Ahrefs’ Site Explorer. The quickest way to do this is to enter your keyword in Keywords Explorer, scroll to the SERP overview, and then:

- Select which first-page results to include in the gap analysis.

- Click “Open in” and choose “Content gap.”

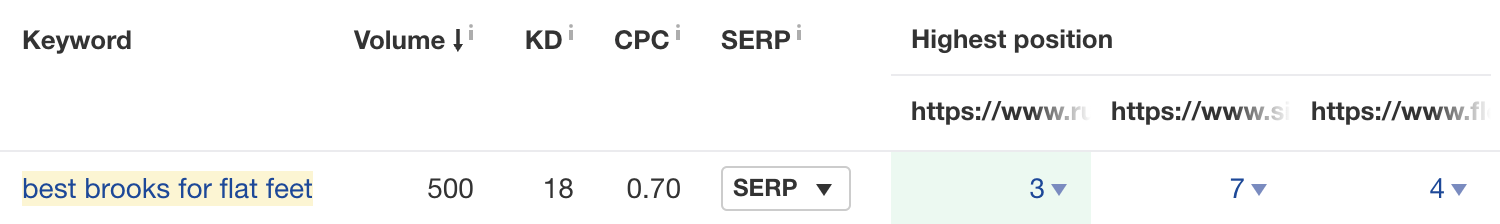

For example, these three pages all rank on the first page for “best brooks for flat feet”:

This tells you that some searchers are looking for the best options from this brand (Brooks), so you should probably include them in your post.

Google looks at things on the page itself to help decide if it should rank. This is where on-page SEO comes in.

Most on-page signals are only small ranking factors. However, as most of them are quick to change and fully within your control, they’re worth optimizing.

Let’s look at a few easy things you can do to improve on-page SEO.

Mention your keyword in the URL

Google says to avoid lengthiness and use words that are relevant to your site’s content in your URLs. This doesn’t mean that you have to use your target keyword. But using it makes sense, as it’s short and describes your page.

For example, the target keyword for this post is “how to get on the first page of google,” so that’s what we used for the URL.
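Turning a keyword into a URL slug is simple enough to sketch. The `slugify` helper below is hypothetical and only handles basic ASCII keywords; your CMS likely has its own slug logic.

```python
import re


def slugify(keyword):
    """Lowercase the keyword and join its words with hyphens."""
    slug = keyword.lower()
    # Collapse every run of non-alphanumeric characters into one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", slug)
    return slug.strip("-")


print(slugify("how to get on the first page of google"))
# prints: how-to-get-on-the-first-page-of-google
```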

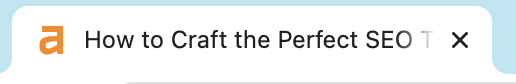

Mention your keyword in the title tag

A title tag is a bit of HTML code that wraps around the page title. You’ll often see it displayed in search engine results, social networks like Twitter, and browser tabs.

Google’s John Mueller says it’s only a tiny ranking factor, but we think it’s still a good place to mention your keyword. Just make sure to do it naturally.

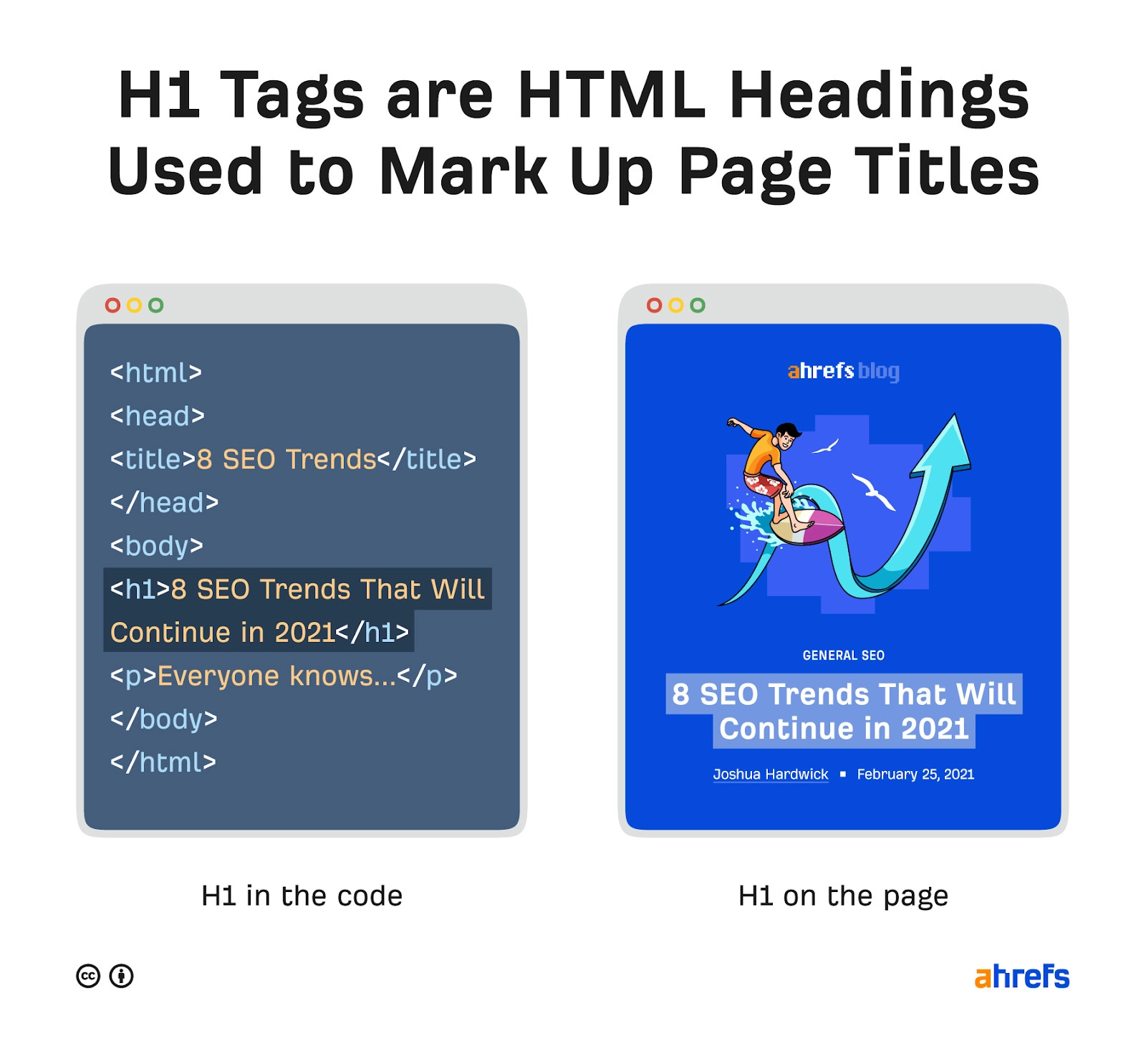

Wrap the visible page title in an H1 tag

H1 tags are HTML code used to mark up page titles.

Google is a bit unclear on the importance of H1 tags. John Mueller is on record saying that they’re not critical for search ranking, but Google’s official documentation says to “place the title of your article in a prominent spot above the article body, such as in a <h1> tag.”

Our advice is to use one per page for the page title and to include your keyword where relevant.
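You can spot-check the title tag and H1 advice on any page with a few lines of code. This is a minimal sketch using Python's standard `html.parser` module. The `TitleH1Parser` class and `check_on_page` function are hypothetical names, and the check is a plain substring match, so it can't tell whether the keyword reads naturally.

```python
from html.parser import HTMLParser


class TitleH1Parser(HTMLParser):
    """Collect the text of the <title> tag and of every <h1> tag."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.h1s = []
        self._current = None  # "title" or "h1" while inside that tag

    def handle_starttag(self, tag, attrs):
        if tag in ("title", "h1"):
            self._current = tag
            if tag == "h1":
                self.h1s.append("")

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        if self._current == "title":
            self.title += data
        elif self._current == "h1":
            self.h1s[-1] += data


def check_on_page(html, keyword):
    """Report whether the keyword is in the title and H1, and count H1s."""
    parser = TitleH1Parser()
    parser.feed(html)
    kw = keyword.lower()
    return {
        "keyword_in_title": kw in parser.title.lower(),
        "h1_count": len(parser.h1s),
        "keyword_in_h1": any(kw in h1.lower() for h1 in parser.h1s),
    }
```

An `h1_count` other than 1 is worth a second look, given our advice to use one H1 per page.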

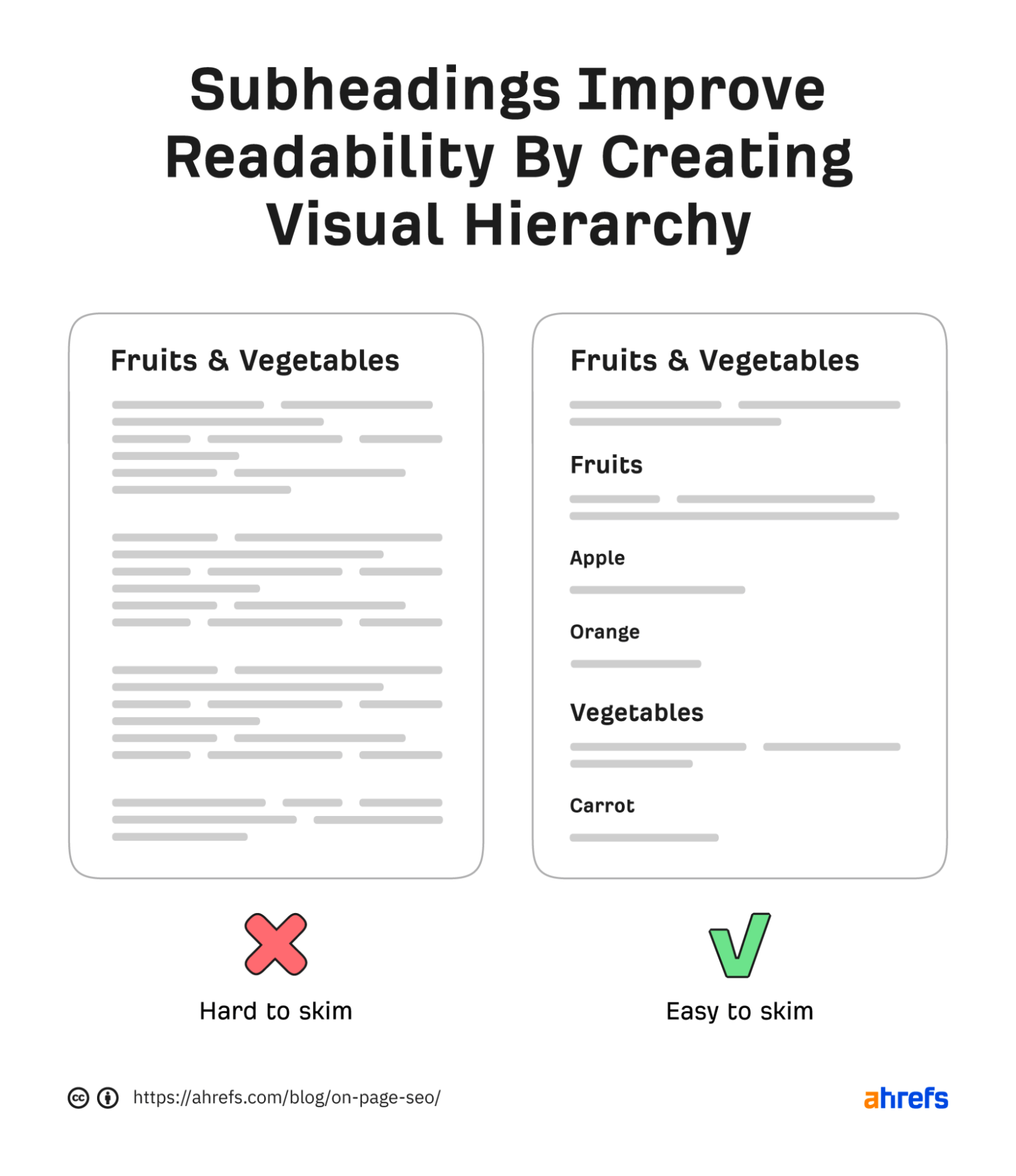

Use subheadings to improve readability

Google uses subheadings to try to better understand the content on the page.

This doesn’t necessarily mean they’re a ranking factor, but they improve your content by making it easier to digest and skim. That can have an indirect impact on SEO.

Our advice is to use subheadings for important subtopics.

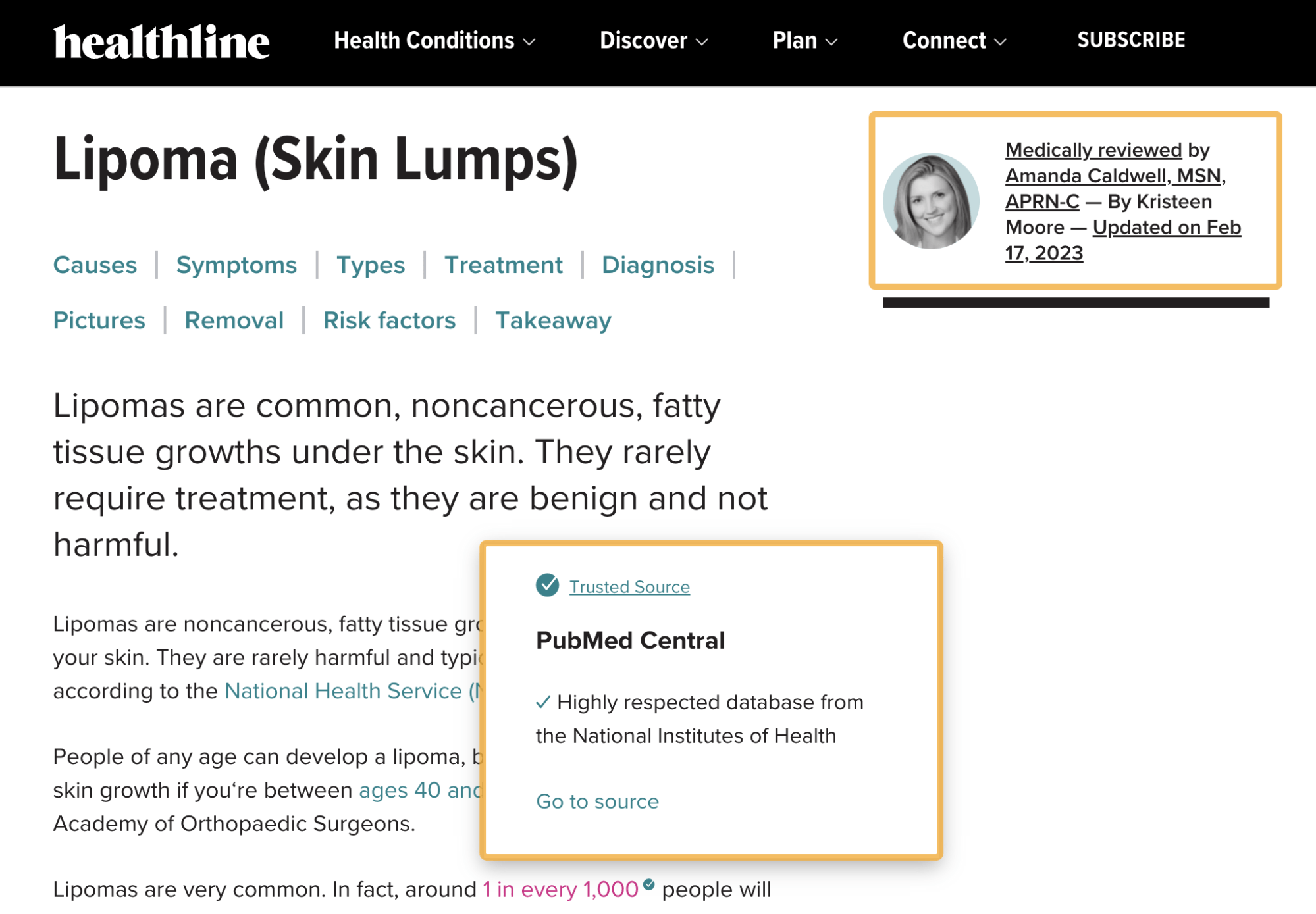

Showcase the author’s expertise

Google wants to rank content written by experts, so it’s important to demonstrate that expertise on the page.

Here are a few ways Google suggests to do that:

- Provide clear sourcing

- Provide background information about the author

- Keep the content free of easily verified factual errors

Here’s a great example from Healthline:

Internal links are backlinks from one page on your website to another.

Generally speaking, the more of these a page has, the more PageRank (PR) it will receive. That’s good because Google still uses PR to help rank webpages.

Let’s look at a few ways to find relevant internal linking opportunities.

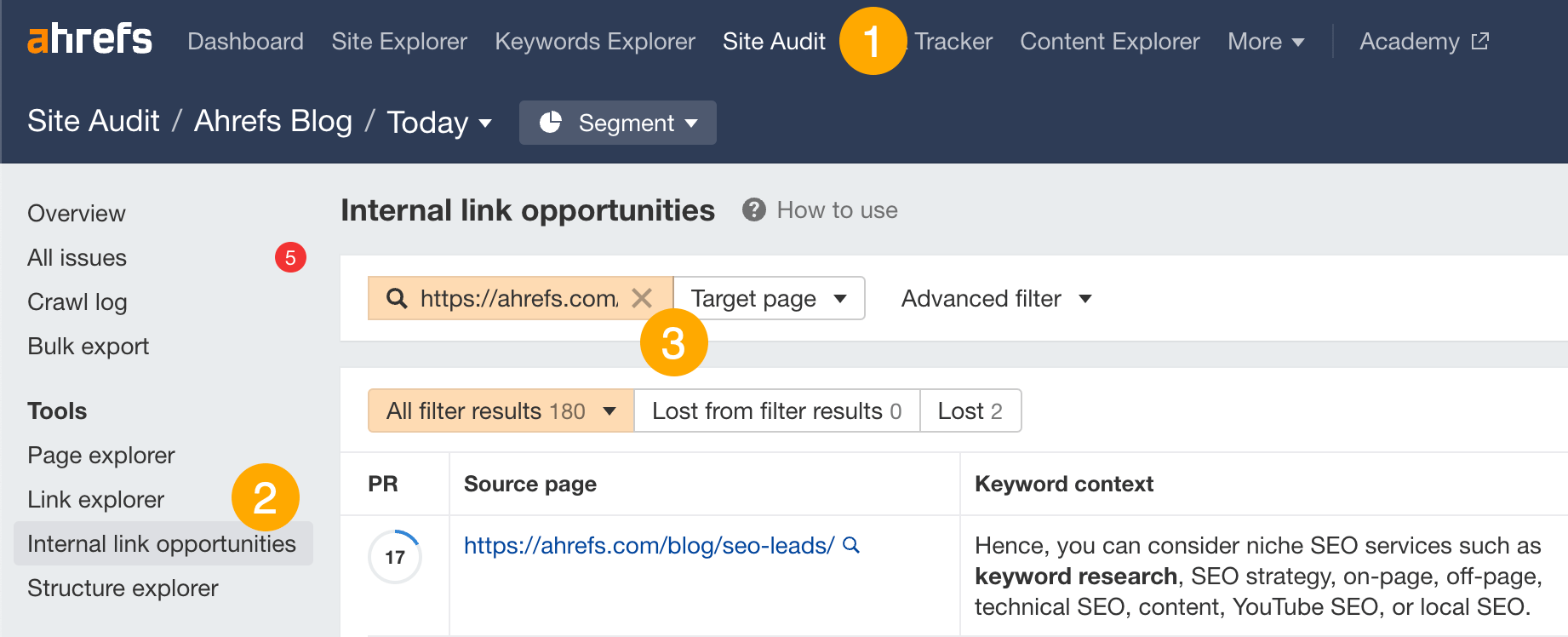

Use the Internal Link Opportunities report in Ahrefs’ Site Audit

This report finds on-site mentions of words and phrases your page already ranks for. It’s free to use with an Ahrefs Webmaster Tools (AWT) account.

Here’s how to use it:

- Go to Site Audit (and choose your project)

- Click the Internal link opportunities report

- Search for the URL of the page you want to rank on the first page, and choose “Target URL” from the dropdown

For example, as our keyword research guide ranks for “keyword research,” the report finds unlinked mentions of that keyword on our site. We can then internally link those words and phrases to our guide.

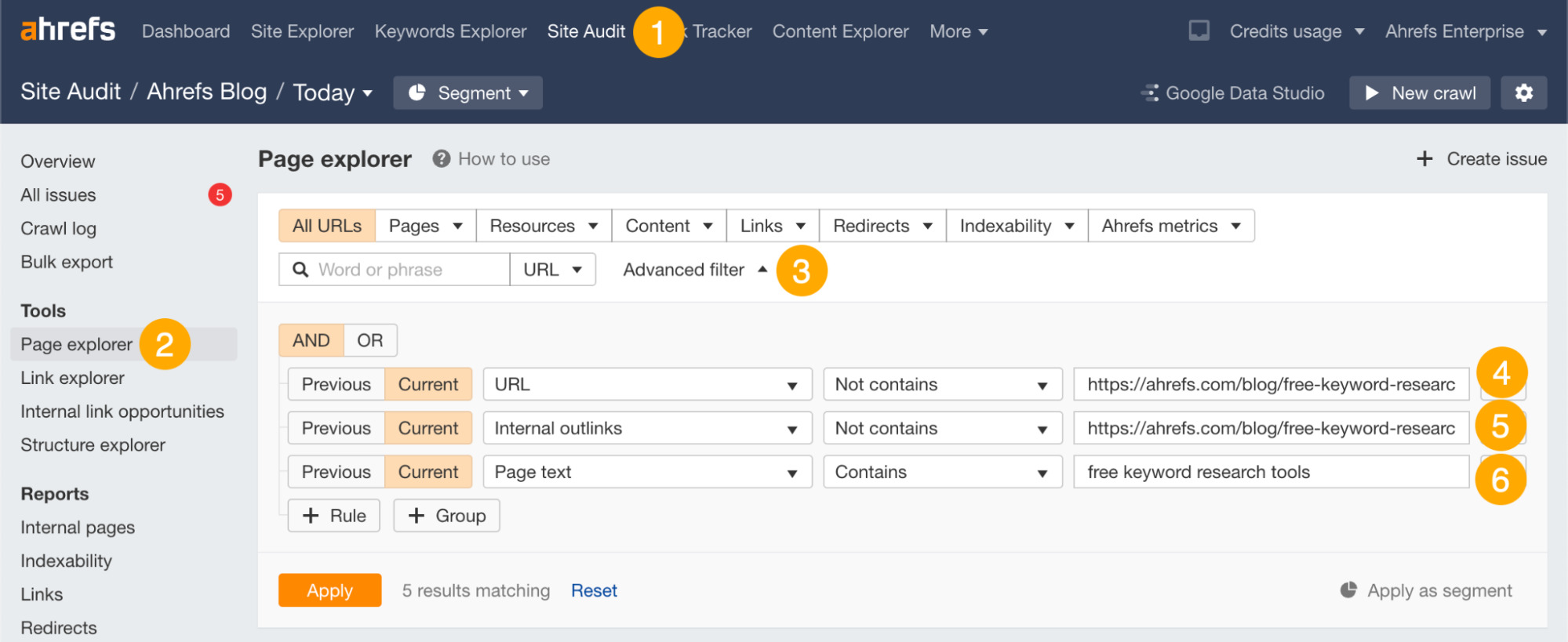

Use the Page Explorer tool in Ahrefs’ Site Audit

This tool shows all kinds of data about the pages on your website, but you can apply filters to find internal linking opportunities. It’s free to use with Ahrefs Webmaster Tools (AWT).

Here’s how to use it:

- Go to Site Audit (and choose your project)

- Click the Page Explorer tool

- Click “Advanced filter”

- Set the first rule to URL Not contains [URL of the page you want to add internal links to]

- Set the second rule to Internal outlinks Not contains [URL of the page you want to add internal links to]

- Set the third rule to Page text Contains [keyword you want to rank for on the first page]
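The logic behind those three rules can be sketched as a simple filter. This is only an illustration of what the rules do, not how Site Audit works internally: the `Page` structure, the `link_opportunities` helper, and the idea that you already have crawl data in this shape are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Page:
    """Hypothetical crawl record for one page on your site."""
    url: str
    text: str
    internal_outlinks: list = field(default_factory=list)


def link_opportunities(pages, target_url, keyword):
    """Return URLs of pages that mention the keyword but don't link to the target."""
    kw = keyword.lower()
    return [
        page.url
        for page in pages
        # Rule 1: skip the target page itself.
        if target_url not in page.url
        # Rule 2: skip pages that already link to the target.
        and not any(target_url in link for link in page.internal_outlinks)
        # Rule 3: keep only pages whose text mentions the keyword.
        and kw in page.text.lower()
    ]
```

Each URL returned is a page where you could add an internal link to the target.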

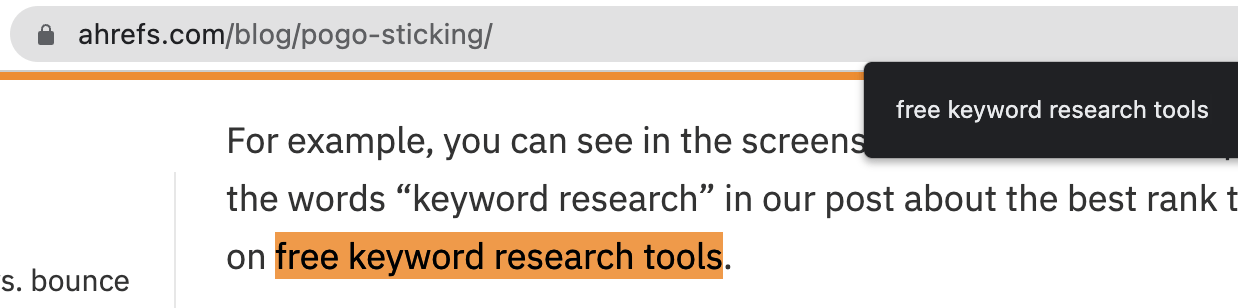

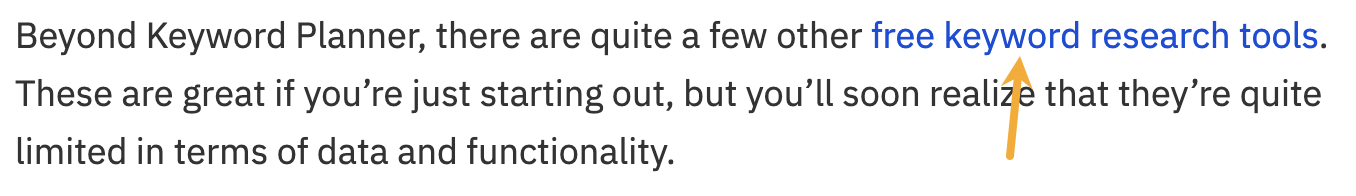

For example, the tool tells us that our pogo-sticking guide mentions the keyword “free keyword research tools” but doesn’t link to our list of free keyword tools.

If we open the page and search for this keyword, we see a clear opportunity for a relevant internal link:

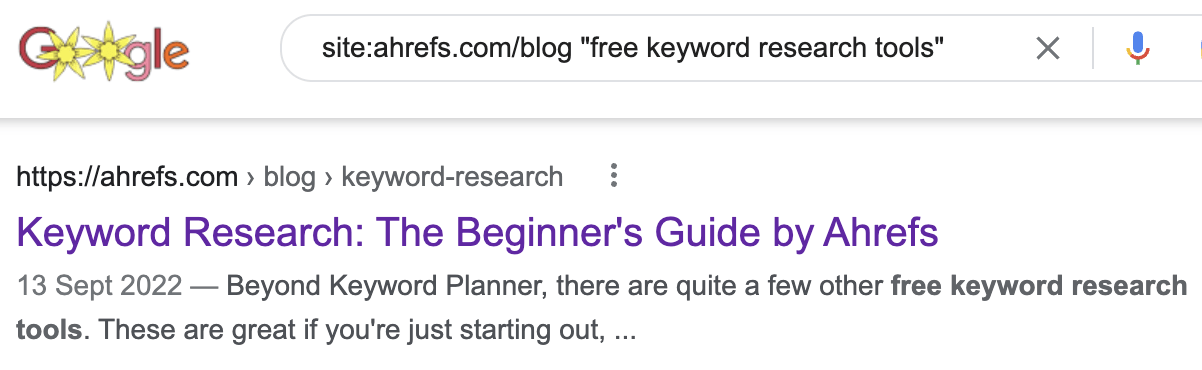

Use Google

If you search Google for site:yourwebsite.com "keyword", you’ll see all pages on your website that mention the keyword.

For example, it tells us that our keyword research guide mentions “free keyword research tools”:

The problem with this tactic is that it doesn’t tell you whether there’s already an internal link.

In fact, in this case, the internal link is already there:

This makes it time-consuming and inefficient compared to the previous methods.

Backlinks are an important ranking factor. If you don’t rank on the first page of Google by now, it’s probably because you don’t have enough of them.

But how many backlinks do you need, and how do you get them?

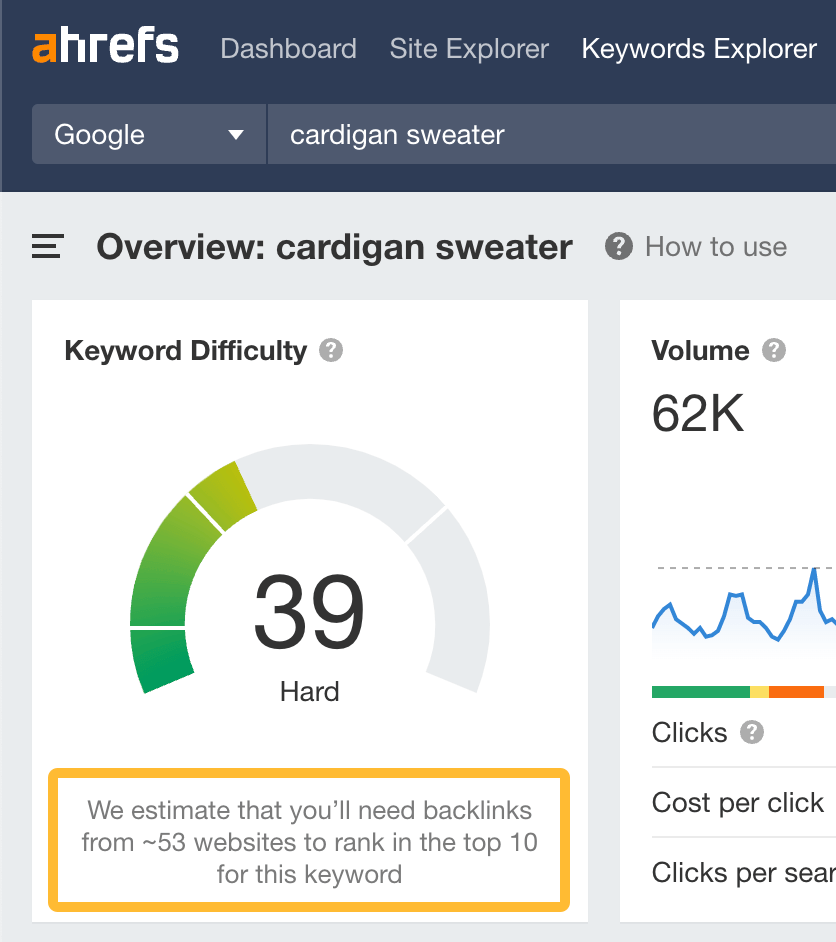

Given that some backlinks are more powerful than others, it’s impossible to say exactly how many you’ll need to rank on the first page. However, we do offer a rough estimate below the Keyword Difficulty score shown in Ahrefs’ Keywords Explorer.

Just remember to take this number with a very large pinch of salt, as it’s far from an exact science.

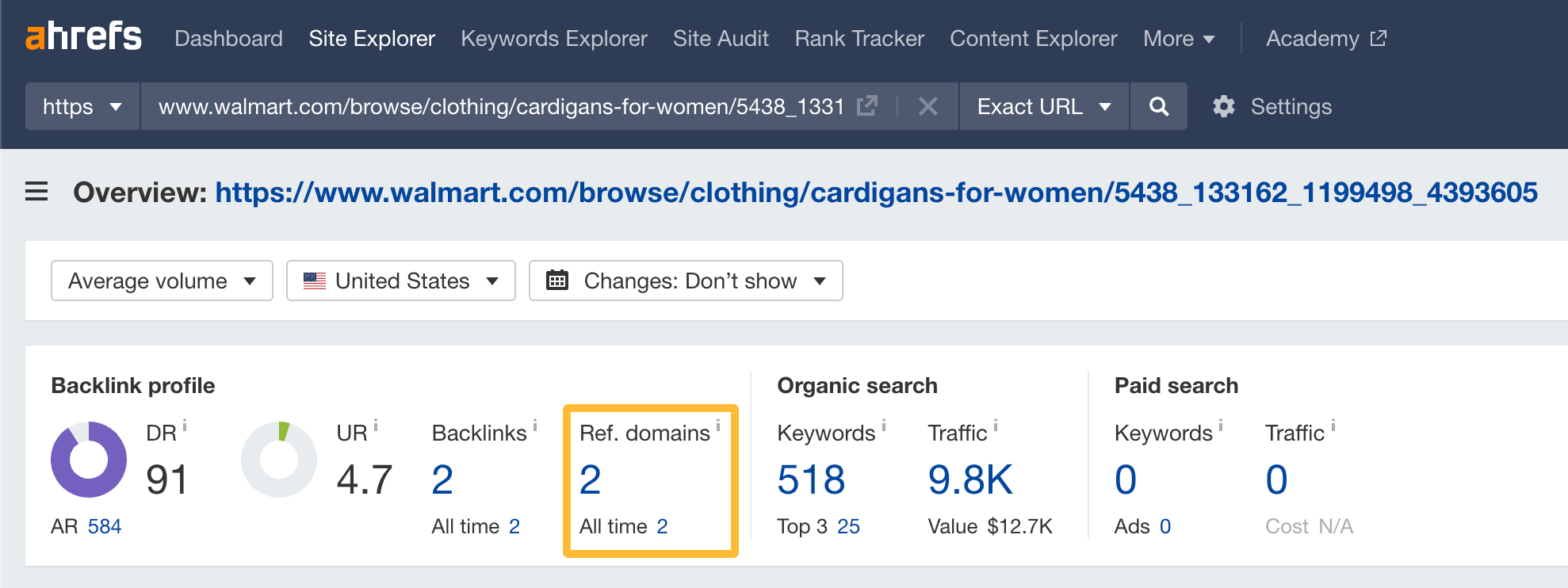

For example, Ahrefs estimates that you’ll need backlinks from ~53 websites to rank on the first page for “cardigan sweater.” But if you plug one of the first-page results into Ahrefs’ Site Explorer (or our free backlink checker), you’ll see it only has links from two referring domains.

This happens for two reasons:

- Backlinks aren’t the only ranking factor – Other factors, like search intent and on-page signals, matter too.

- Some backlinks are more powerful than others – You’ll need fewer of these to rank.

If you think you need more backlinks to rank, check out the resources below or take our free link building course. Just know that building backlinks can be challenging, so it may take a while to build enough to rank for competitive keywords.

Final thoughts

Following this process should help you rank on the first page of Google, but it still takes time.

How much time? It’s hard to say. But our poll of 4,300 SEOs revealed that 83.8% think SEO takes three months or more to show results.

It’s also true that unless you rank high on Google’s first page, you likely won’t get much traffic.

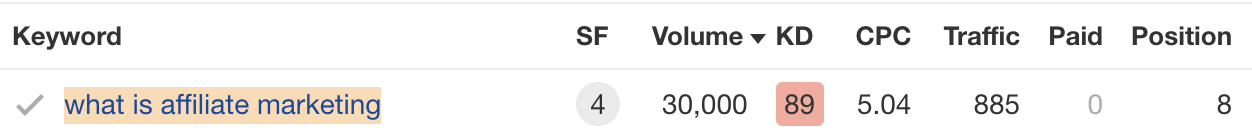

For example, we rank #8 for “what is affiliate marketing.” But despite having a monthly search volume of 30K, the keyword only sends us an estimated 885 monthly visits from the U.S.
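To put those numbers in perspective, here's the back-of-the-envelope arithmetic. Treat it as a rough sketch: the 30K search volume is rounded, the visits figure is an estimate for the U.S. only, and the `visit_share` helper is a hypothetical name.

```python
def visit_share(monthly_visits, monthly_search_volume):
    """Share of monthly searches that turn into visits to the page."""
    return monthly_visits / monthly_search_volume


share = visit_share(885, 30_000)
print(f"{share:.2%}")  # prints: 2.95%
```

In other words, roughly 3 in every 100 searches end up on our page.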

So once you’re on the first page, your goal should be to rank #1.

Following these two guides will help:

Got questions? Leave a comment or ping me on Twitter.
