How to Use Keywords for SEO: The Complete Beginner’s Guide

In this guide, I’ll cover in detail how to make the best use of keywords in three steps:

- Finding good keywords: keywords that are rankable and bring value to your site.

- Using keywords in content and meta tags: how to use the target keyword to structure and write content that will satisfy readers and send relevance signals to search engines.

- Tracking keywords: monitoring your (and your competitors’) performance.

There’s really a lot you can do with just a single keyword, so at the end of the article, you’ll find a few advanced SEO tips.

Once you know how to find one good keyword, you will be able to create an entire list of keywords.

1. Pick relevant seed keywords to generate keyword ideas

Seed keywords are words or phrases that you can use as the starting point in a keyword research process to unlock more keywords. For example, for our site, these could be general terms like “seo,” “organic traffic,” “digital marketing,” “keywords,” and “backlinks.”

There are many good sources of seed keywords, and it’s not a bad idea to try them all:

- Brainstorming. This involves gathering a team or working solo to think deeply about the terms your potential customers might use when searching for your products or services.

- Your competitors’ website navigation. The labels they use in their navigation menus, headers, and footers often highlight critical industry terms and popular products or services that you might also want to target.

- Your competitors’ keywords. Tools like Ahrefs can help you discover which keywords your competitors are targeting in their SEO and paid ad campaigns. I’ll cover that in a bit.

- Your website and promo materials. Review your website’s text, especially high-performing pages, as well as any promotional materials like brochures, ads, and press releases. These sources can reveal the terms that already resonate with your audience.

- Generative AI. AI tools can generate keyword ideas based on brief descriptions of your business, products, or industry (example below).

Here’s what you can ask any generative AI tool, whether that’s Copilot, ChatGPT, or Perplexity.

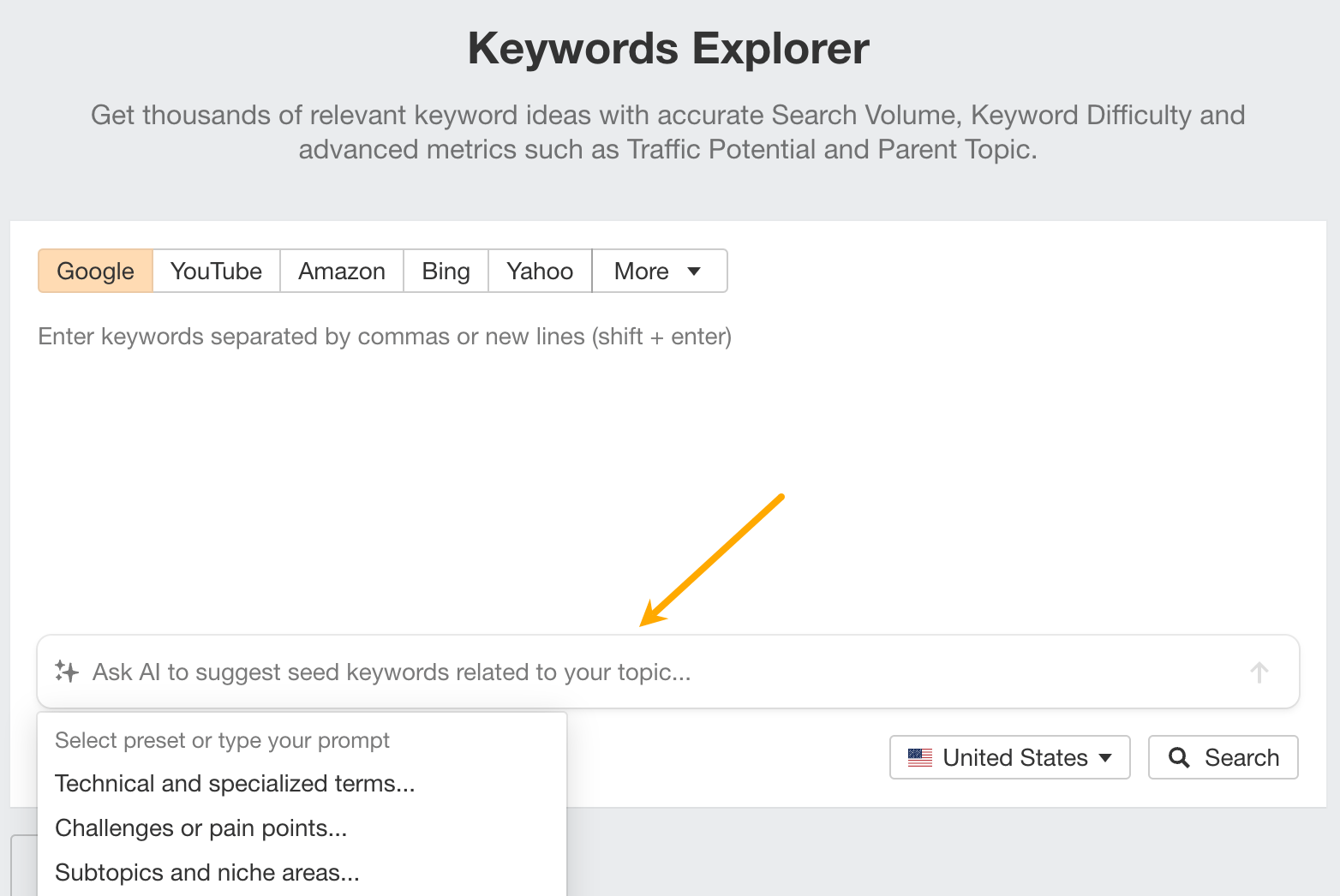

Next, paste your seed keywords into a tool like Ahrefs Keywords Explorer to generate keyword ideas. If you’re using Ahrefs, you can go straight into Keywords Explorer, get AI suggestions there, and start researching right away.

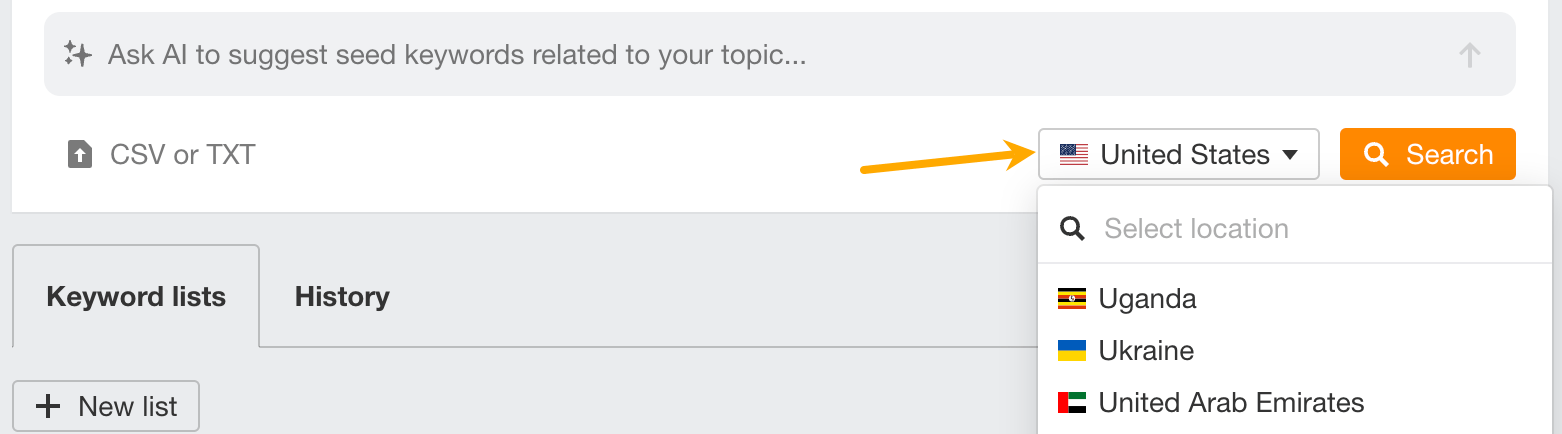

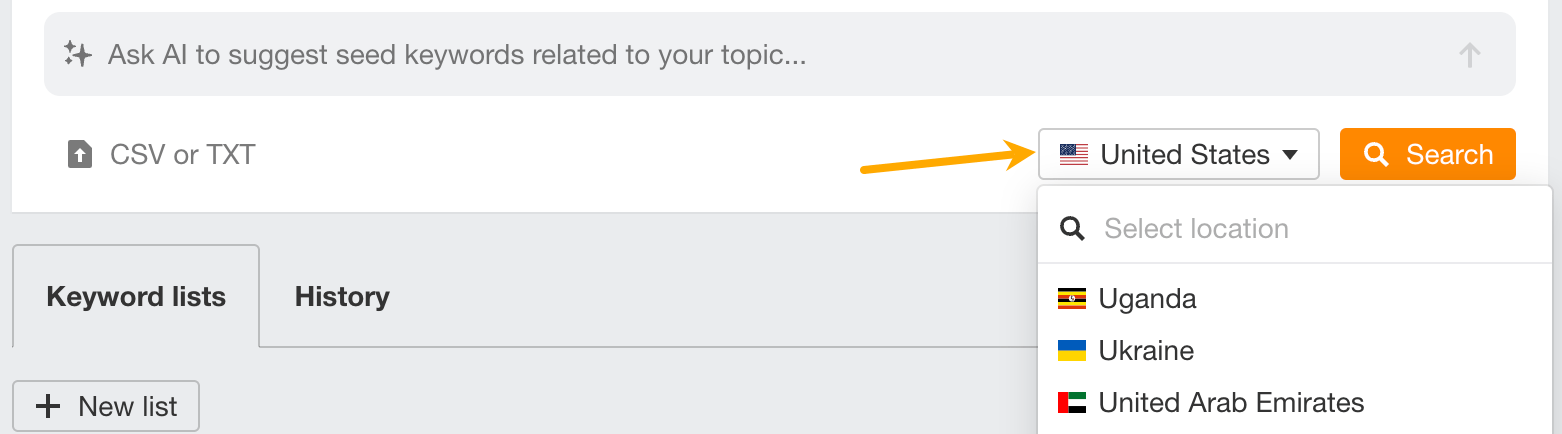

Next, make sure you’ve selected the country where you want to rank, and hit “Search”.

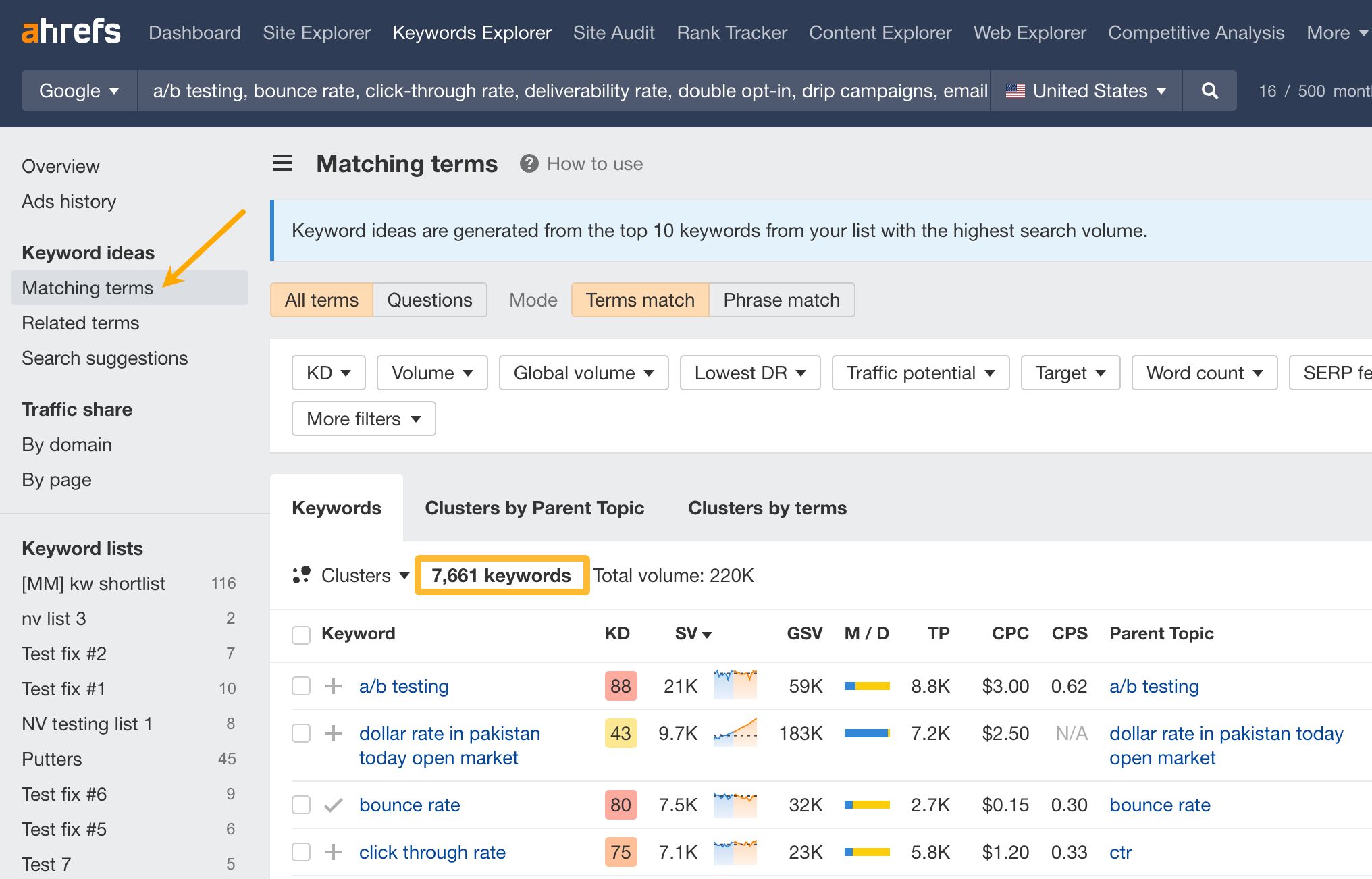

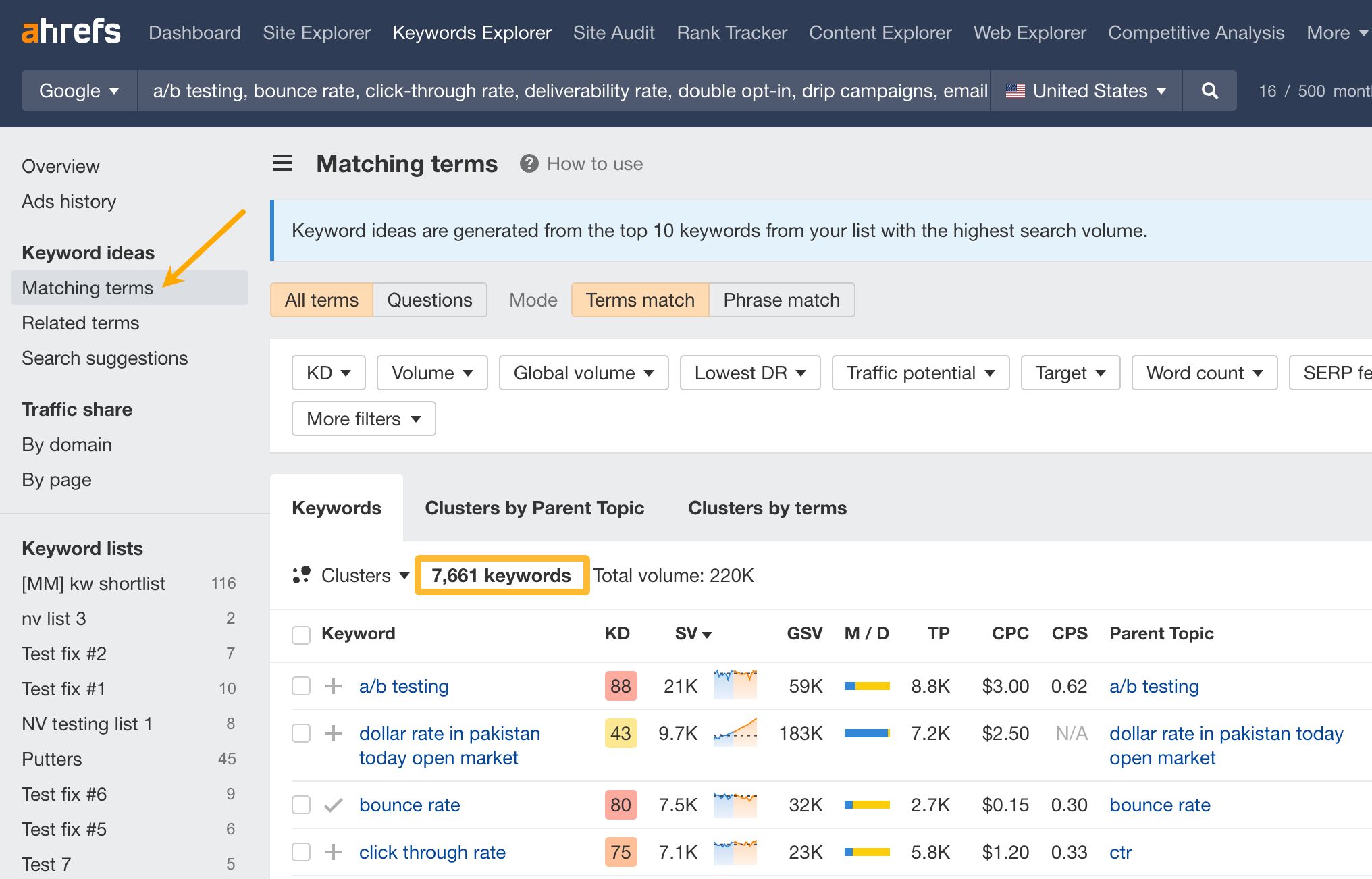

After hitting the “Search” button, go to the Matching terms report. You will see a big list of keywords.

The list you’ll get will be quite raw: not all keywords will be equally good, and the list will likely be too big to manage. The next steps are all about refining it to find target keywords, the keywords that will become the topics of your content.

2. Refine the list and cluster

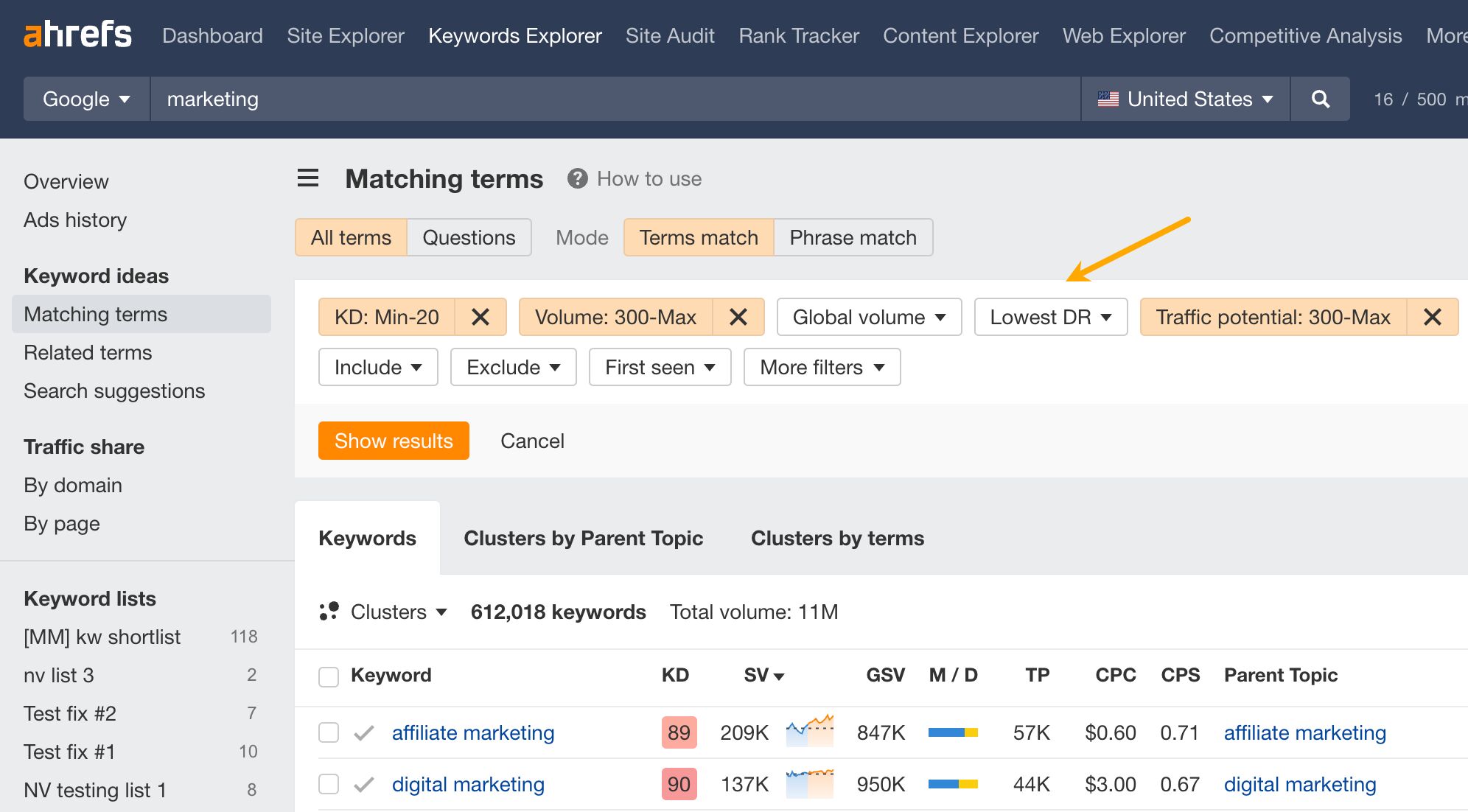

The next step is to refine your list using filters.

Some useful basic filters are:

- KD (Keyword Difficulty): how difficult it would be to rank on the first page of Google for a given keyword.

- Traffic potential: traffic you can get for ranking #1 for that keyword and other relevant keywords (based on the page that currently ranks #1).

- Lowest DR (Domain Rating): plug in the DR of your site to see keywords where another site with the same DR already ranks in the top 10. In other words, it helps to find “rankable” keywords.

- Target: one of the main use cases is excluding keywords you already rank for.

- Include/Exclude: see keywords that contain specific words to increase relevancy/hide keywords with irrelevant words.

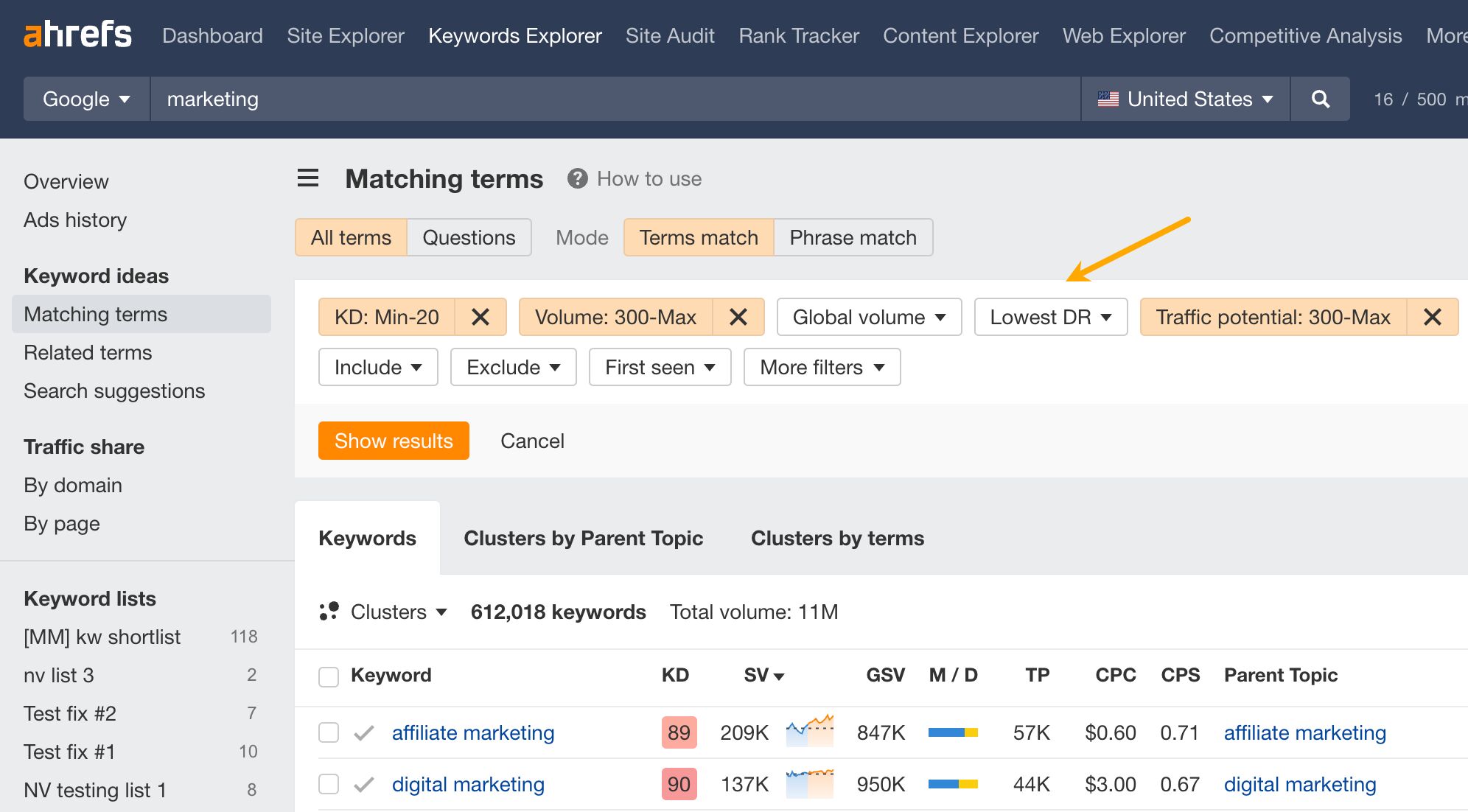

For example, here’s how to find potentially rankable keywords with traffic potential above 300 monthly visits. Go to the Matching terms report in Keywords Explorer and set three filters: set the Keyword Difficulty (KD) filter to a maximum of your site’s Domain Rating, and set both the Traffic potential and Volume filters to a minimum of 300.
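If you export a keyword list and want to apply the same kind of filtering outside the tool, the logic is simple. Here’s a minimal sketch in Python. The `Keyword` class, its field names, and the sample rows are all my own assumptions for illustration; they are not an Ahrefs export format or API.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    # Hypothetical record; field names are assumptions, not an Ahrefs schema.
    phrase: str
    kd: int                 # Keyword Difficulty, 0-100
    traffic_potential: int  # estimated monthly visits for ranking #1
    volume: int             # monthly search volume


def filter_keywords(keywords, max_kd, min_traffic_potential=300, min_volume=300):
    """Keep keywords at or below max_kd that meet both minimums."""
    return [
        kw for kw in keywords
        if kw.kd <= max_kd
        and kw.traffic_potential >= min_traffic_potential
        and kw.volume >= min_volume
    ]


# Made-up sample rows, for illustration only.
sample = [
    Keyword("affiliate marketing for beginners", kd=25, traffic_potential=1200, volume=900),
    Keyword("digital marketing", kd=90, traffic_potential=50000, volume=40000),
    Keyword("seo haiku", kd=2, traffic_potential=40, volume=30),
]

# Assume a site with a Domain Rating of 30, so KD is capped at 30.
shortlist = filter_keywords(sample, max_kd=30)
print([kw.phrase for kw in shortlist])
```

With these made-up numbers, only the first keyword survives: the second is too difficult, and the third has too little traffic potential and volume.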

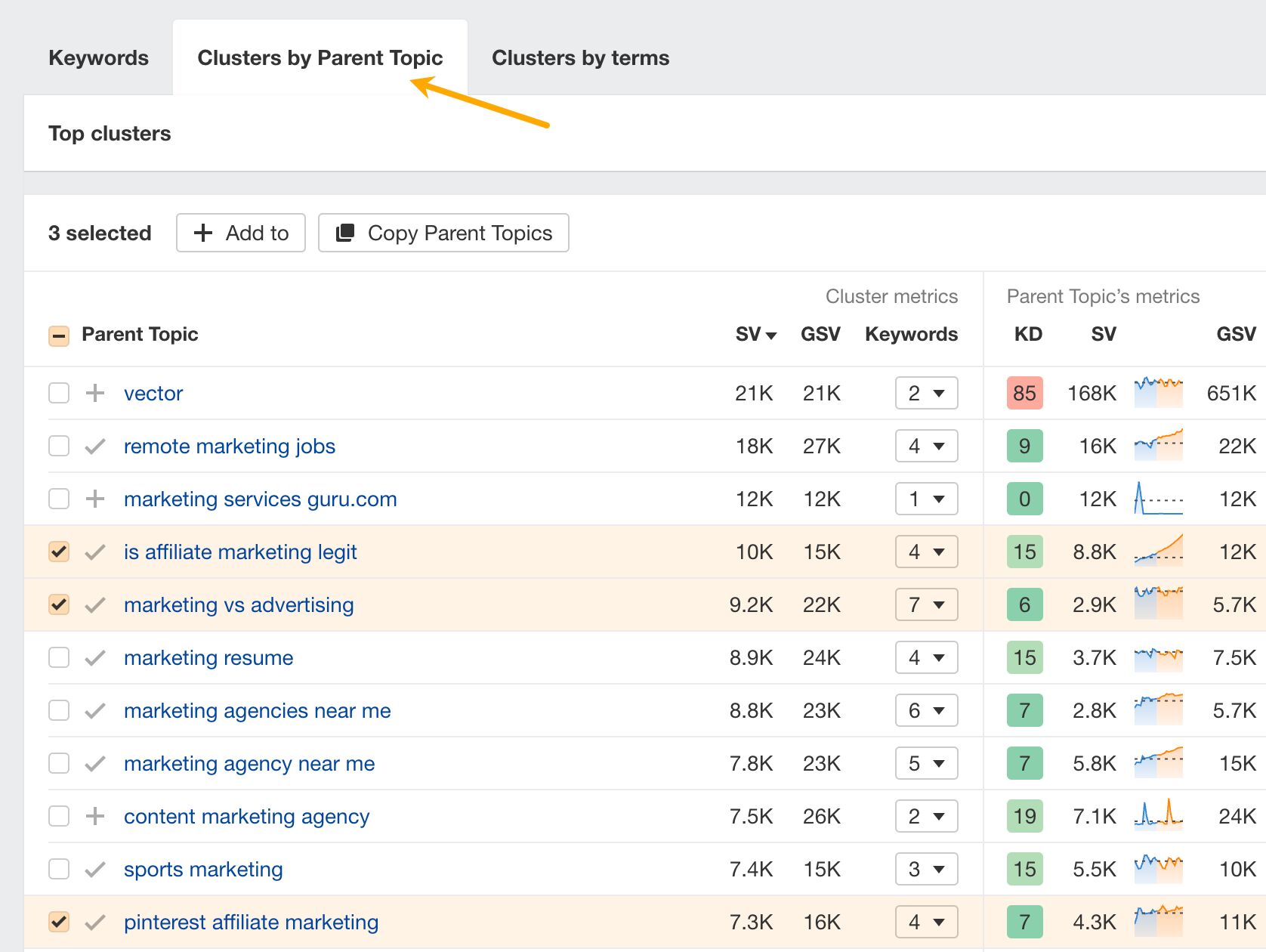

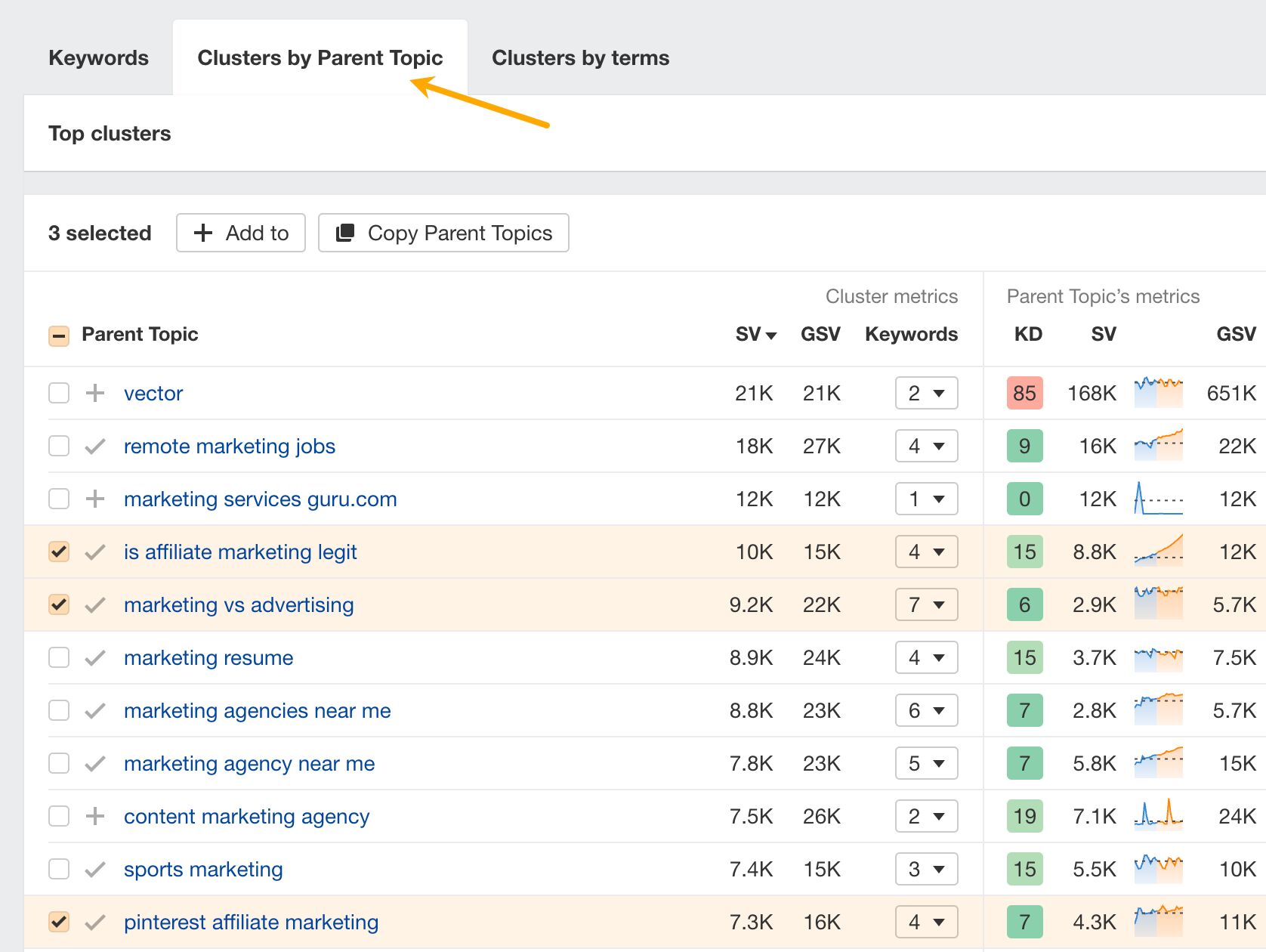

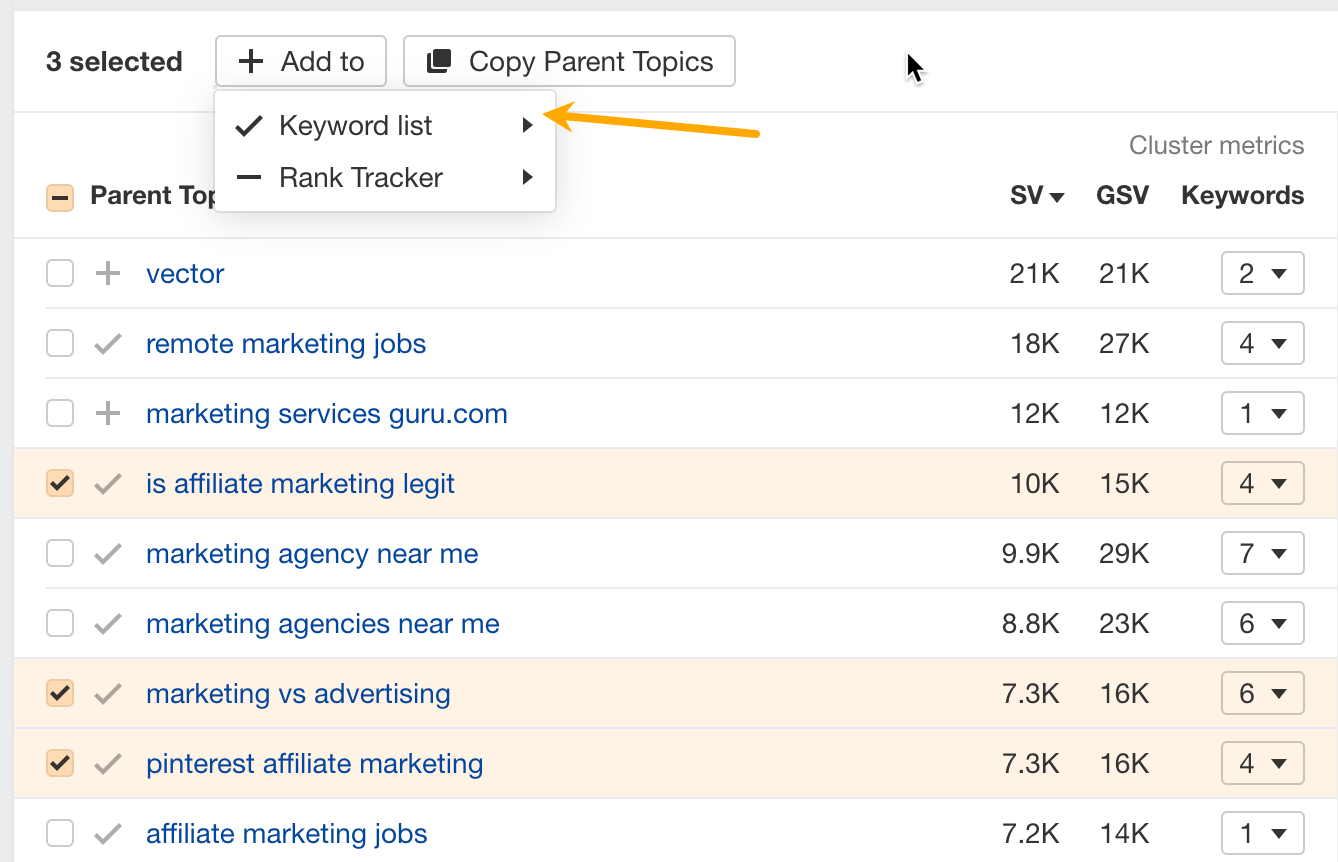

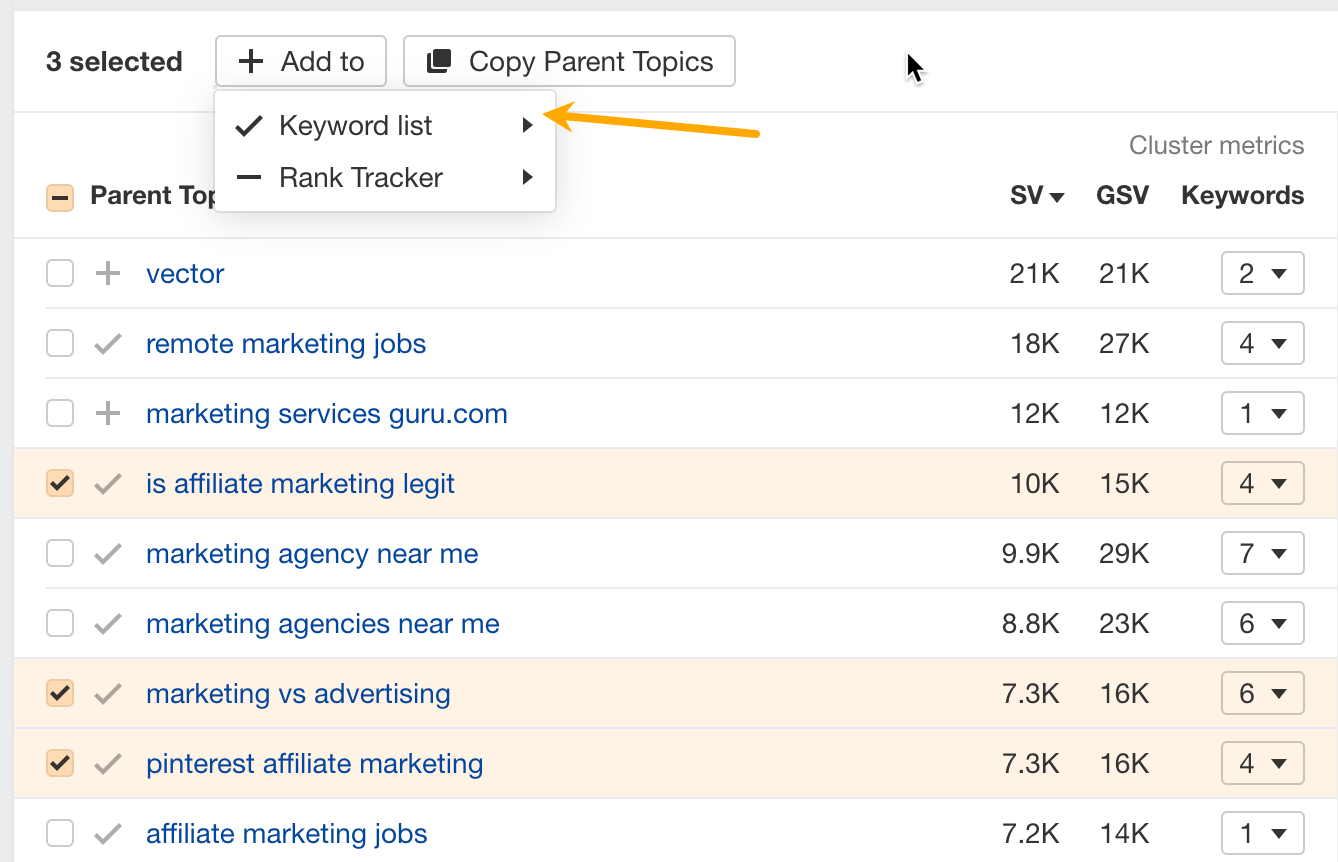

Clustering is another step to refine your list. It shows you if there is another keyword you could target to get more traffic (aka parent topic). At the same time, it shows which keywords most likely belong on the same page.

For example, here are some clusters distilled from low-competition topics about marketing.

Pro tip

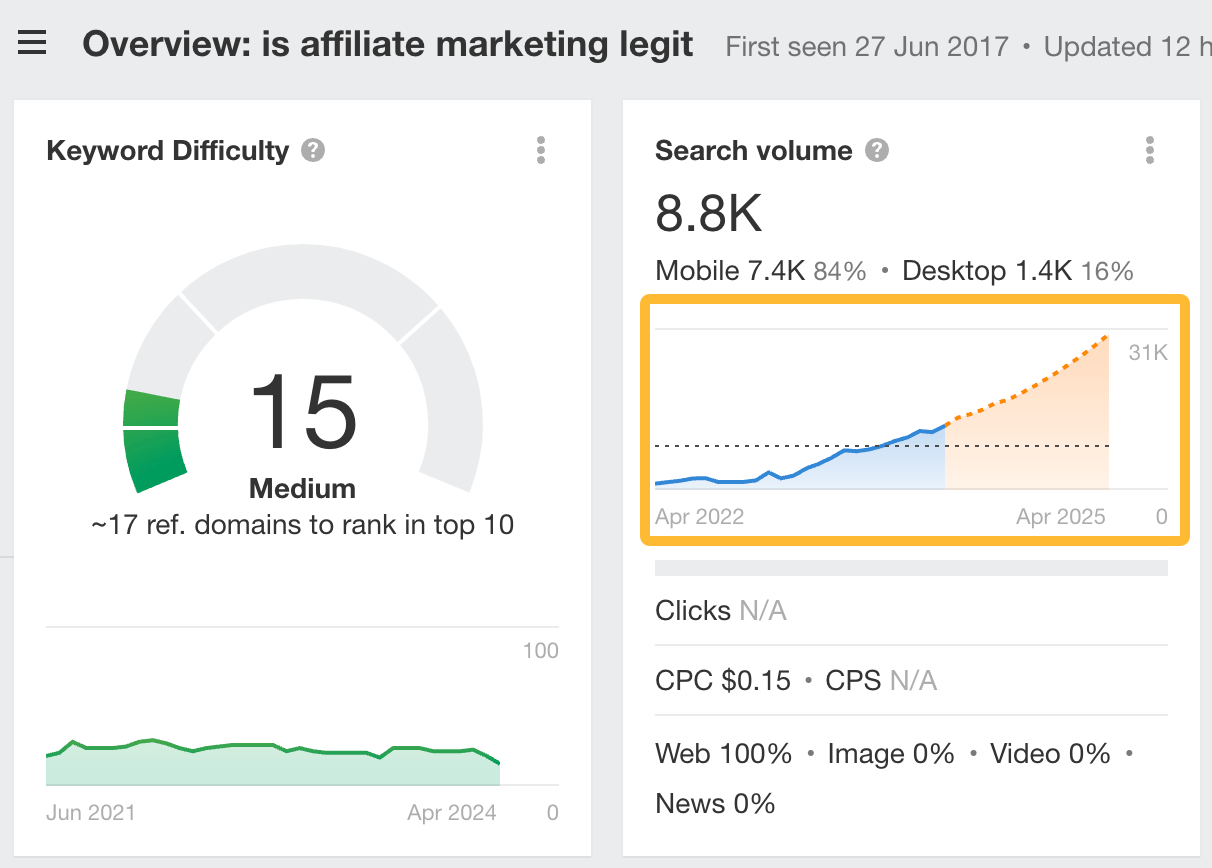

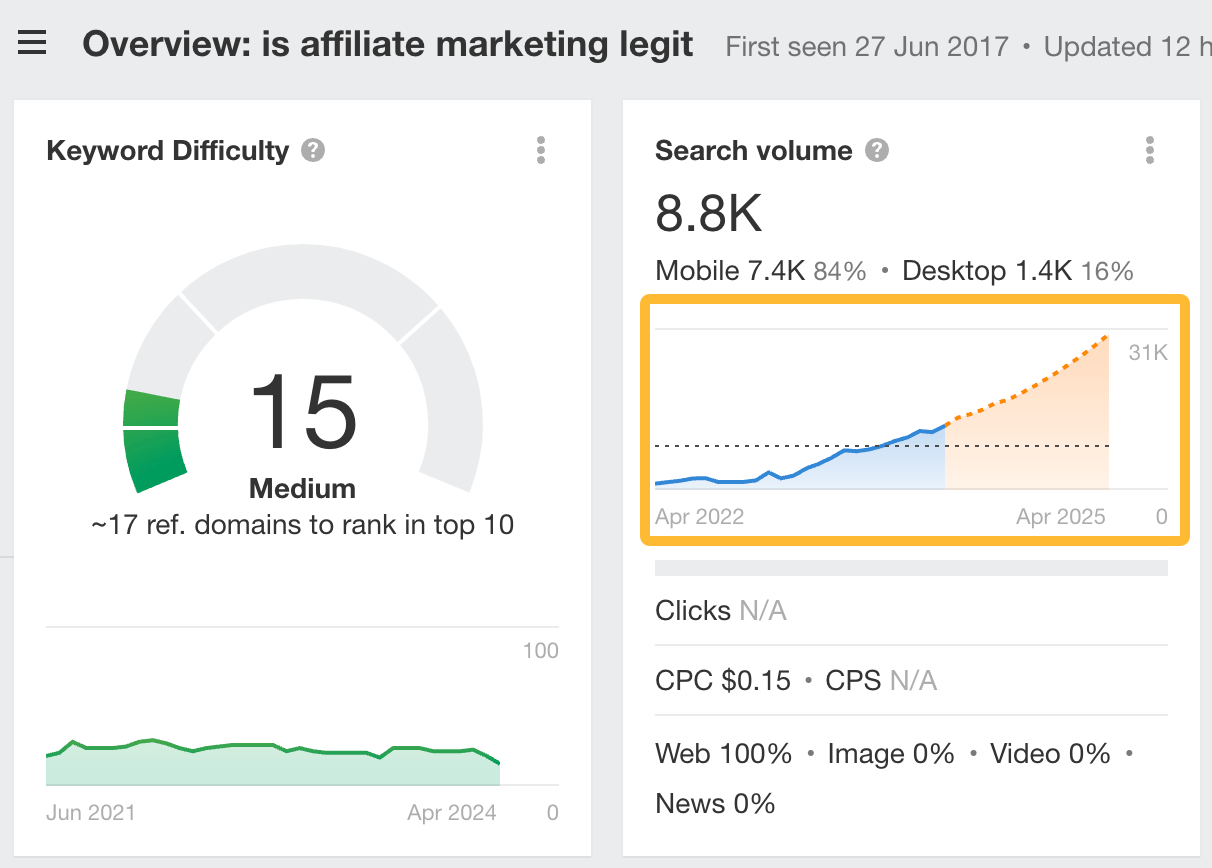

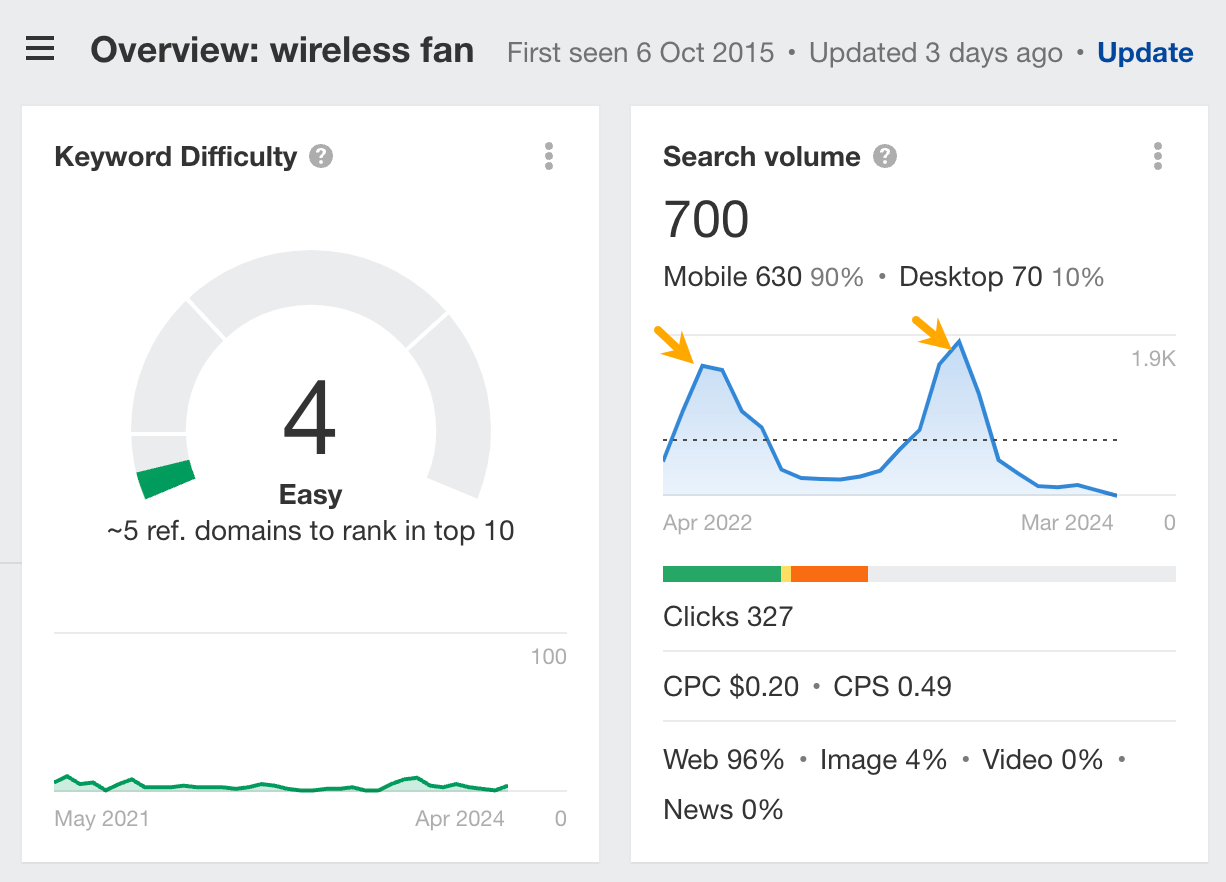

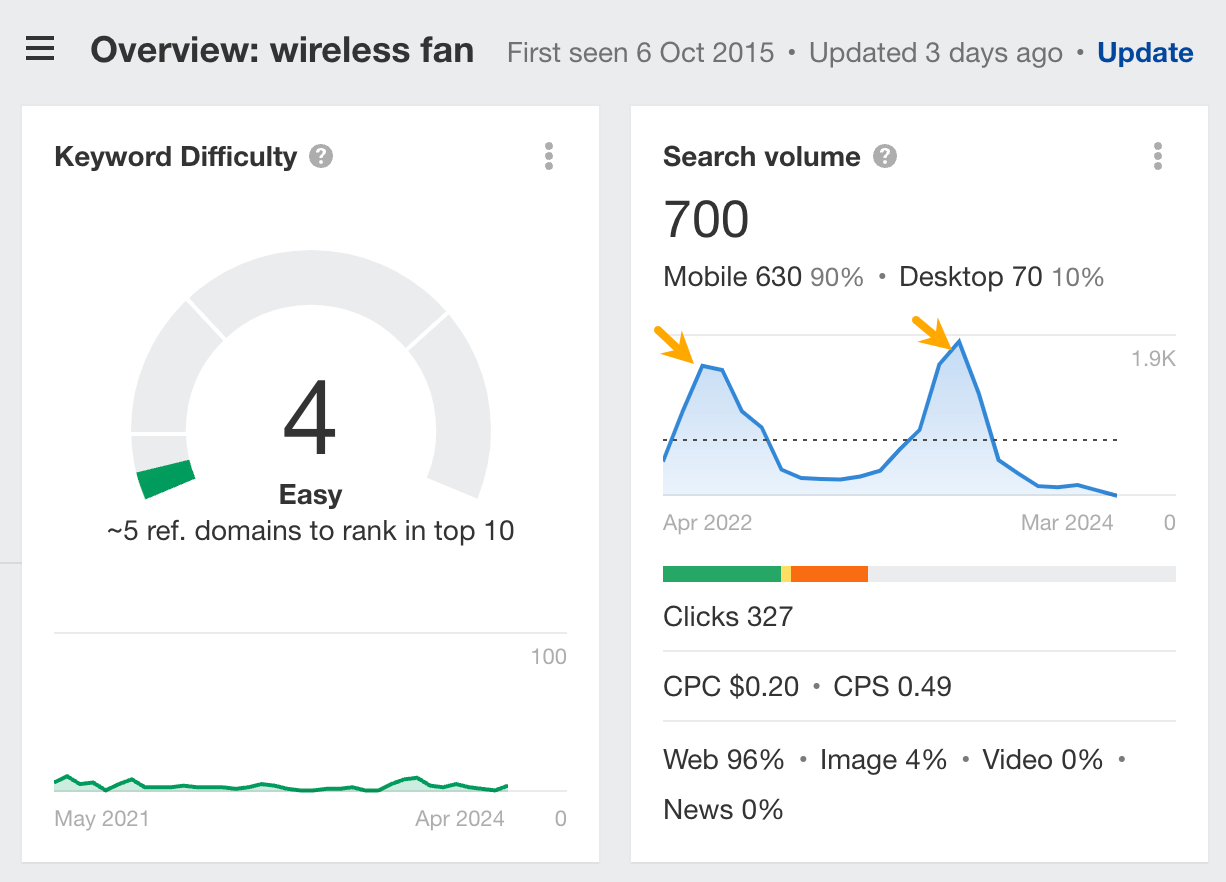

Take keyword trends into account when choosing keywords.

For example, the keyword “is affiliate marketing legit” is at 8.8k monthly search volume right now, but based on our forecast in Keywords Explorer (the orange part of the chart), it should more than triple by next year if it continues at its current growth rate.

The graphs will also show you if the search volume is affected by seasonality (fluctuations in search volume throughout different times of the year).

4. Identify search intent and determine value for your site

Before investing time in content, make sure you can give searchers what they want and that the keywords will attract the right kind of audience.

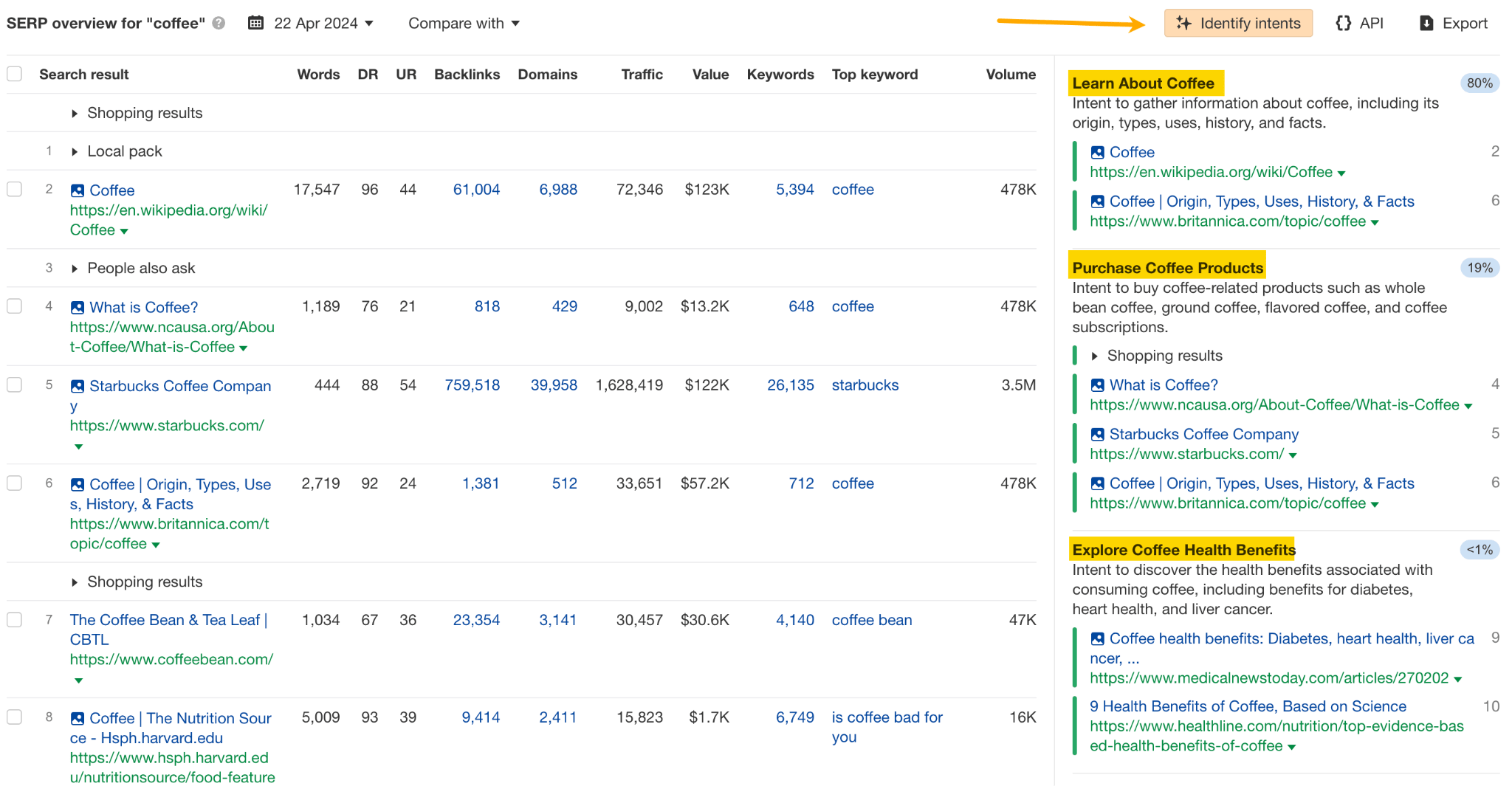

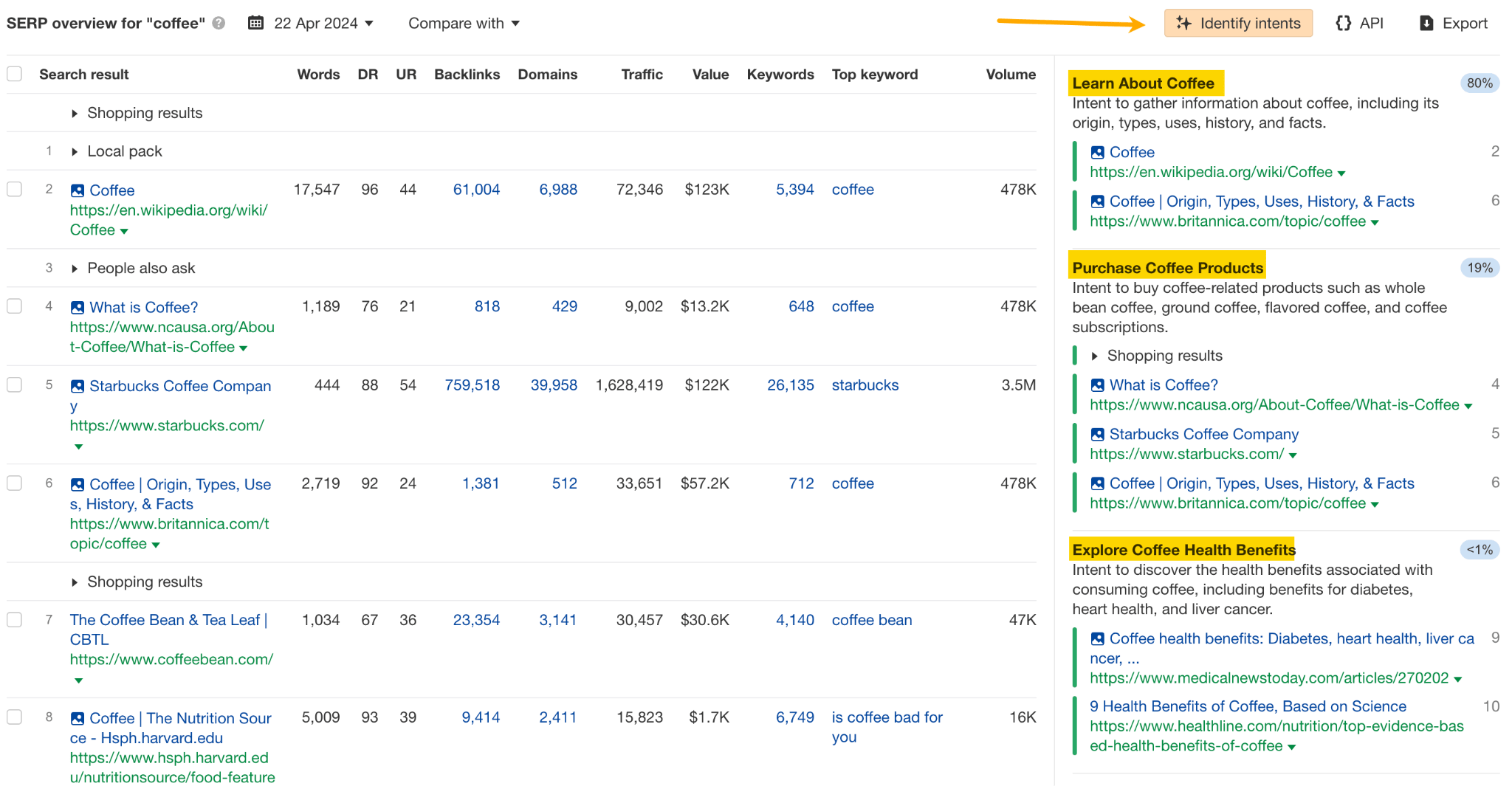

To identify the type of page you need to create to satisfy searchers, look at the top-ranking pages to see what purpose they serve (are they more informational or commercial), or simply use the Identify intents AI feature in Keywords Explorer.

So, for example, if the top-ranking pages are ecommerce pages and you’re not offering products on your site, it’s going to be very hard to rank.

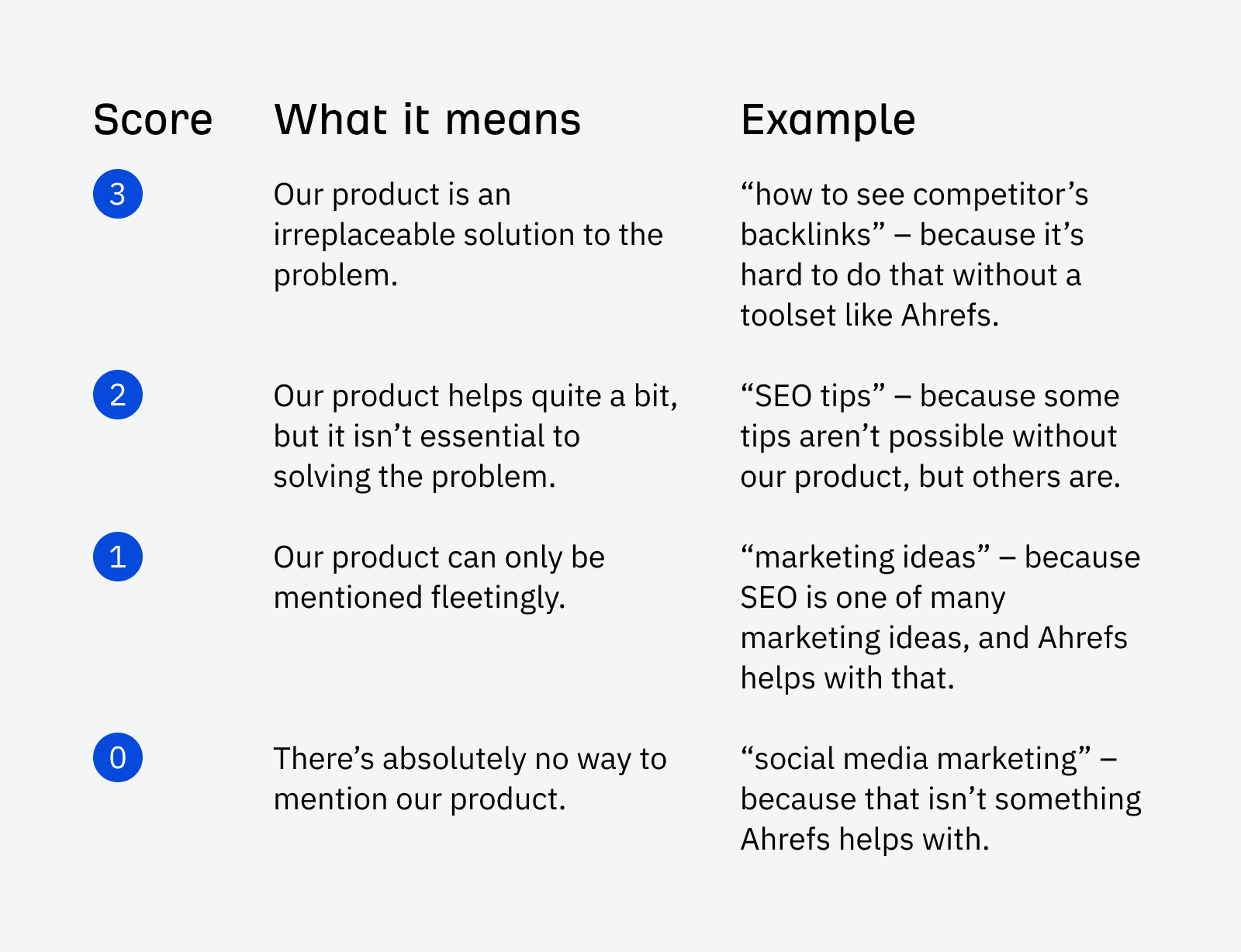

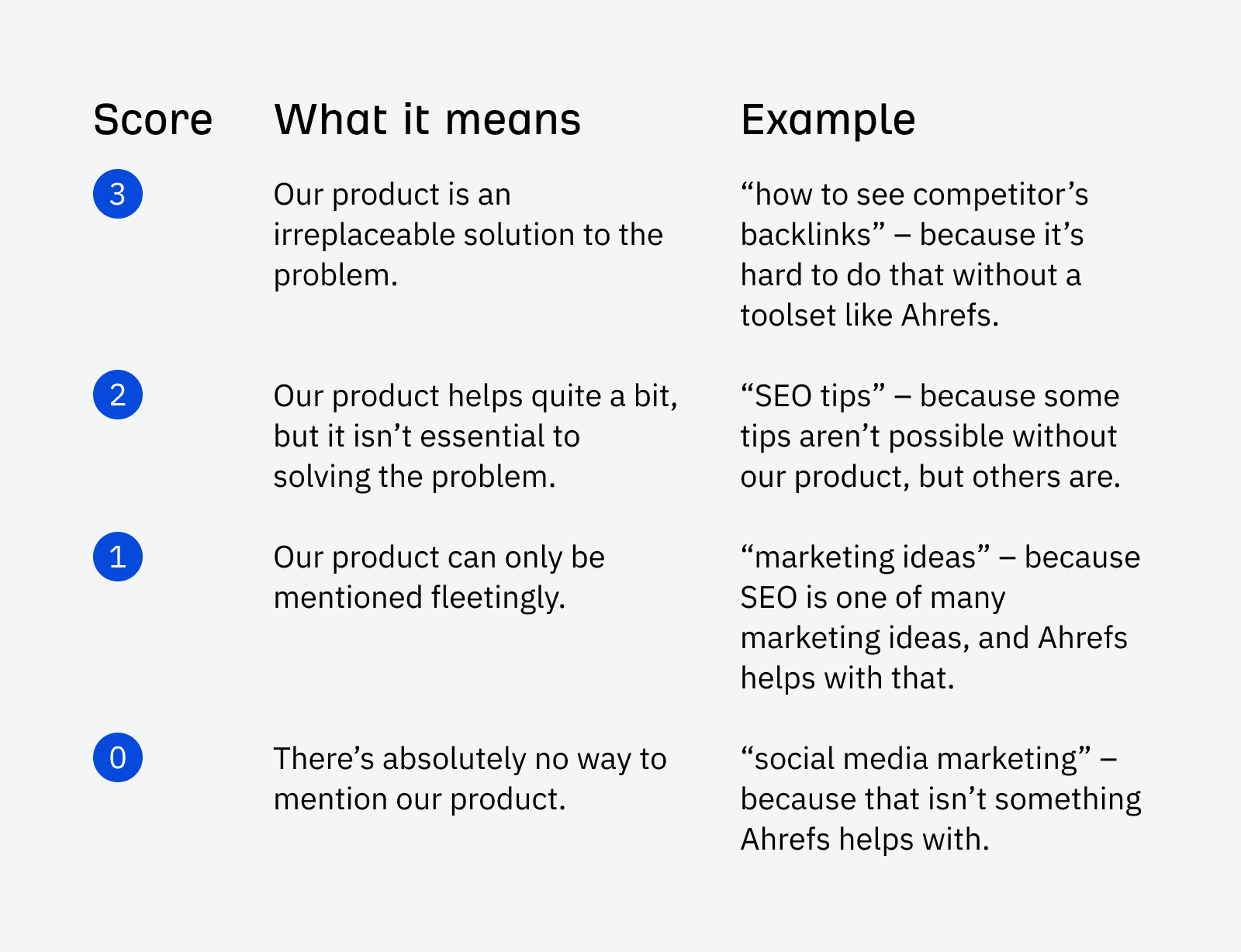

Then, ask yourself if visitors attracted by a keyword will be valuable to your business — whether they’re likely to subscribe to your newsletter or make a purchase. At Ahrefs, we use a business potential score to evaluate this.

Finally, if a keyword checks all boxes, add it to a keyword list.

Now you’ve got a list of relevant, valuable target keywords with traffic potential ready for content creation. You can repeat the process as many times as you like with different seed keywords or different filters and find new ideas.

There’s one more great source of keywords — competitors.

5. Enrich the list with your competitors’ keywords

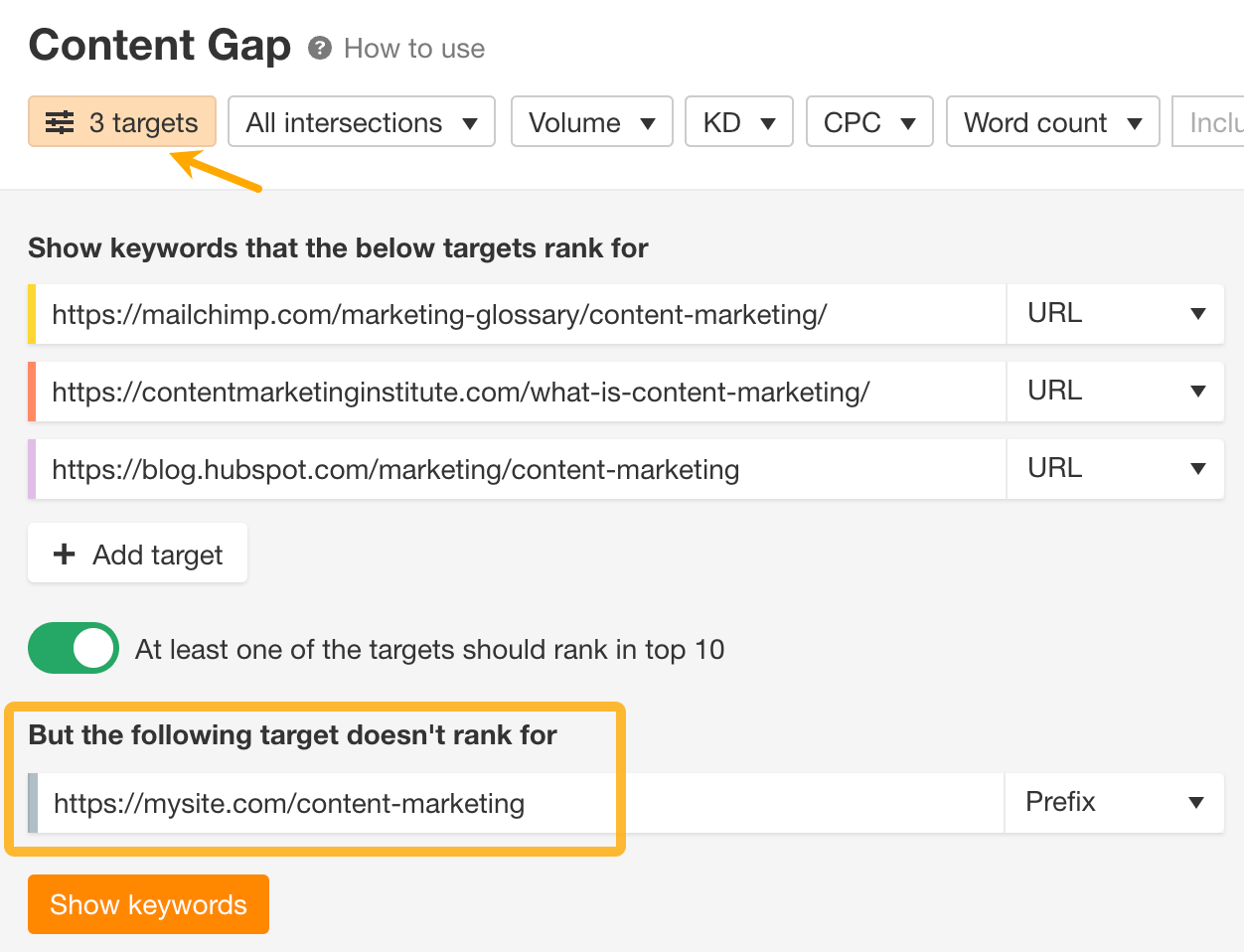

In this step, we’ll do a content gap analysis to find keywords your competitors already rank for, but you don’t.

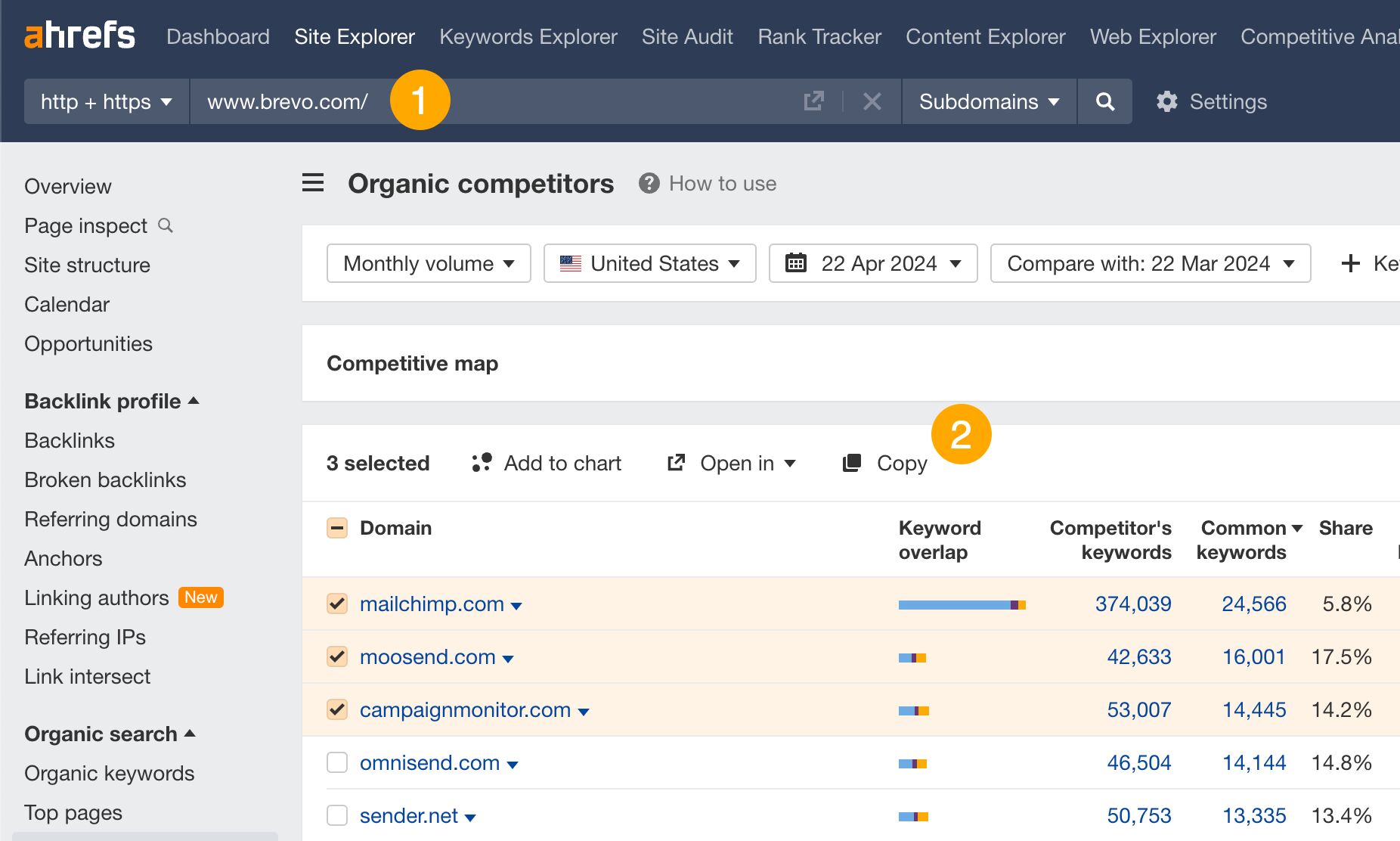

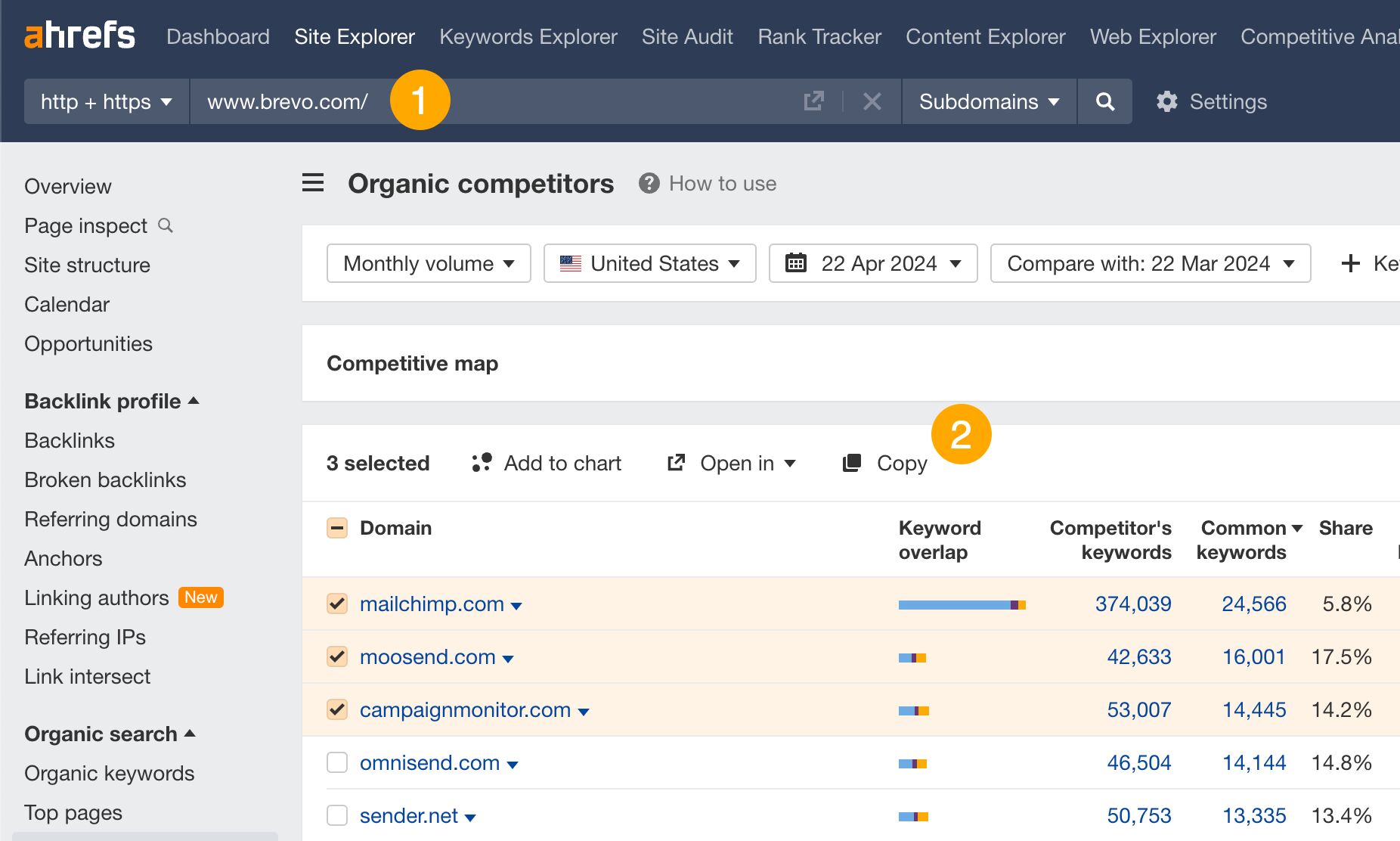

First, let’s find your competitors.

- Enter your domain in Ahrefs’ Site Explorer and go to the Organic competitors report.

- Select the most relevant competitors and click on Copy (this copies URLs — we’ll use them in another tool).

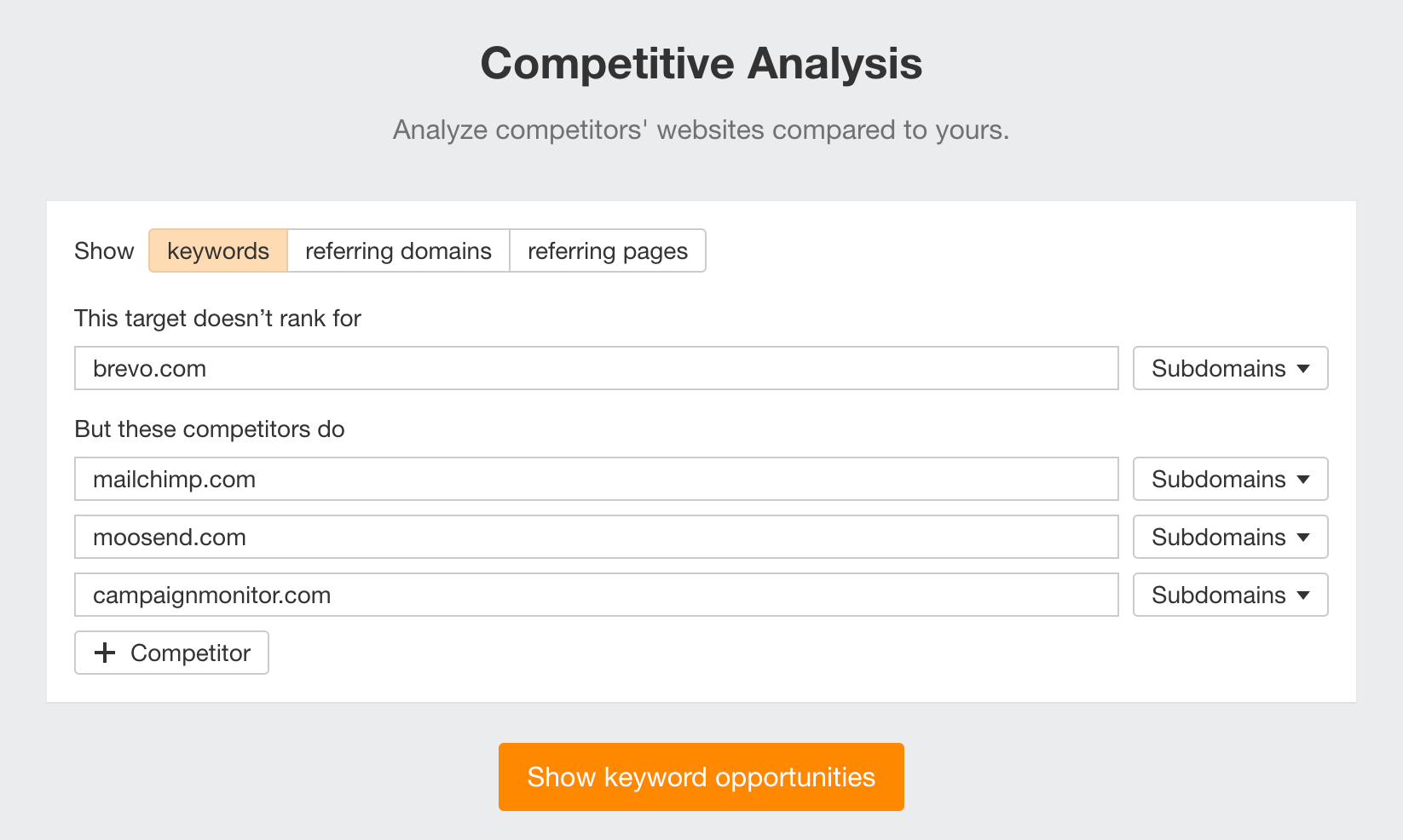

Next, we’ll see which keywords you’re missing.

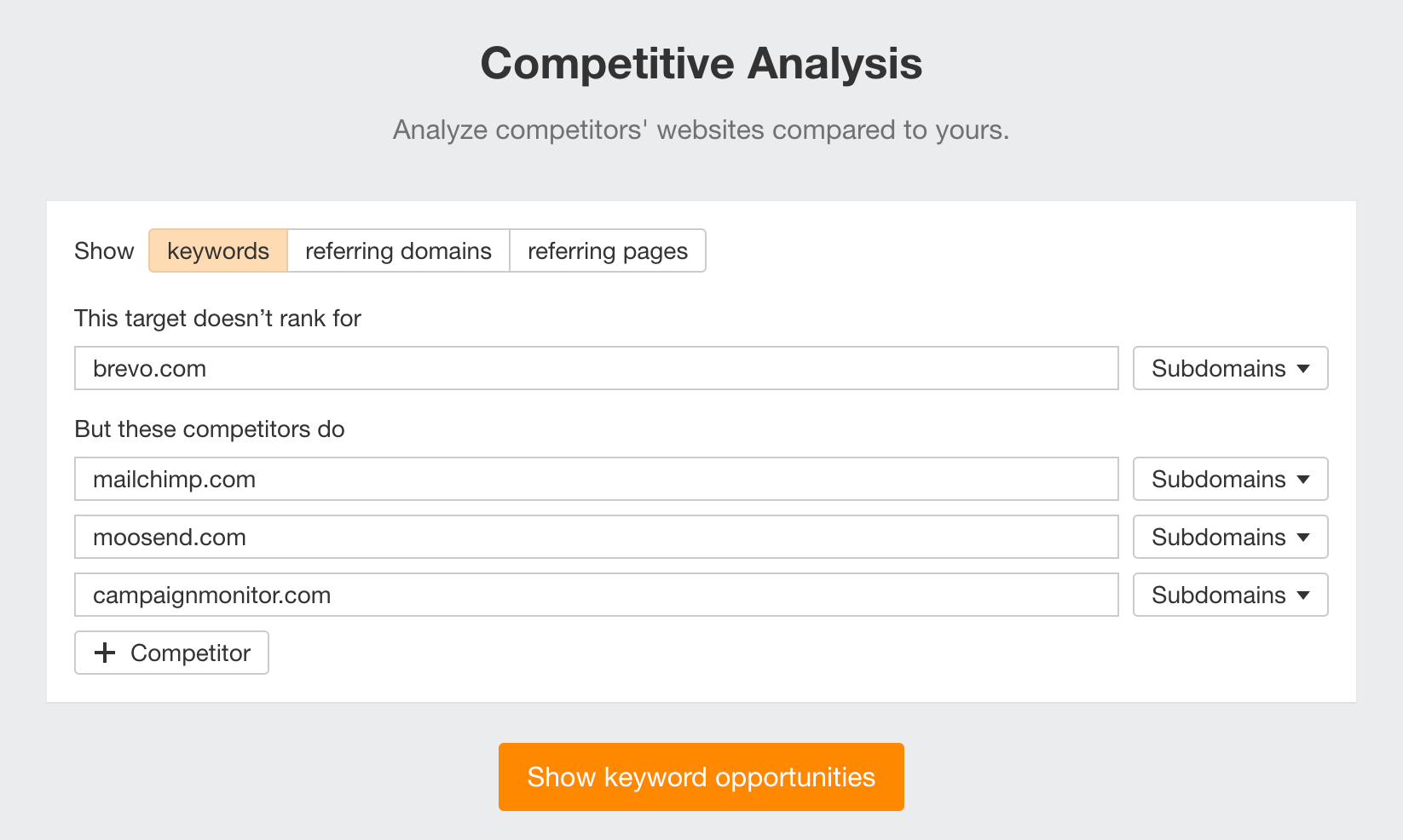

- Go to Ahrefs’ Competitive Analysis tool, paste the previously copied URLs, enter your domain on top and hit Show keyword opportunities.

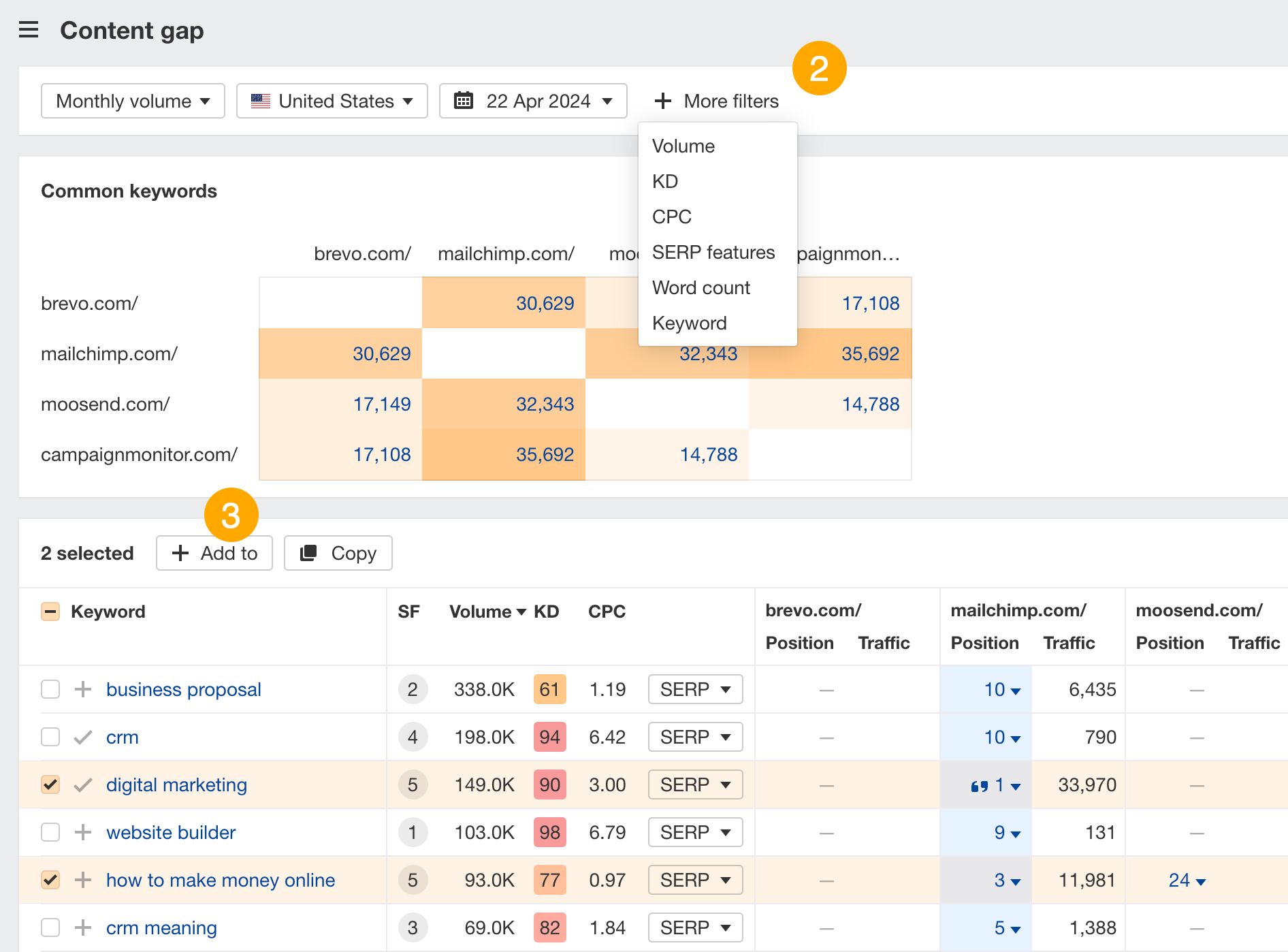

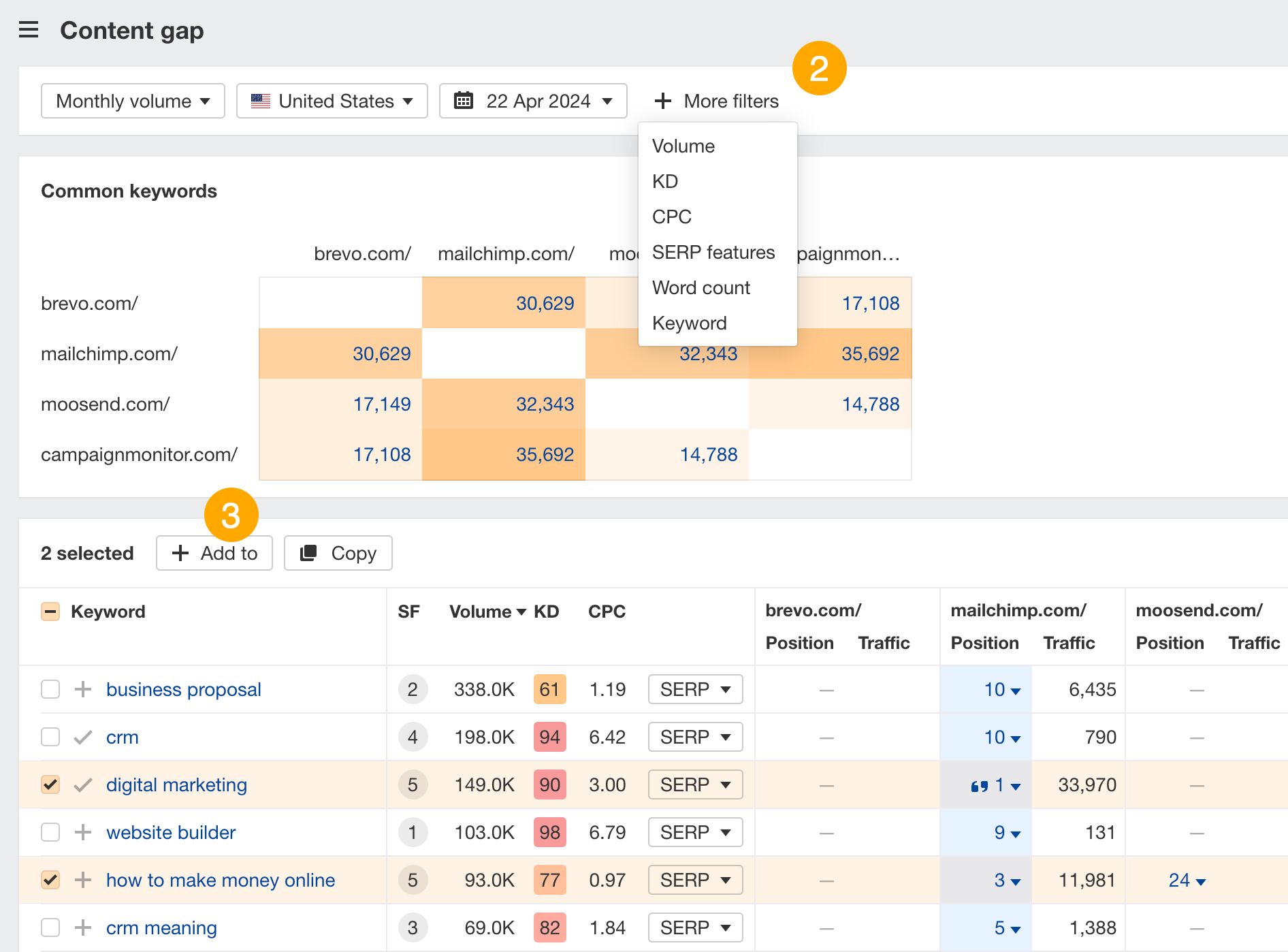

- In the Content gap report, use filters to refine the report.

- Select keywords and add them to your list.

Pro tip

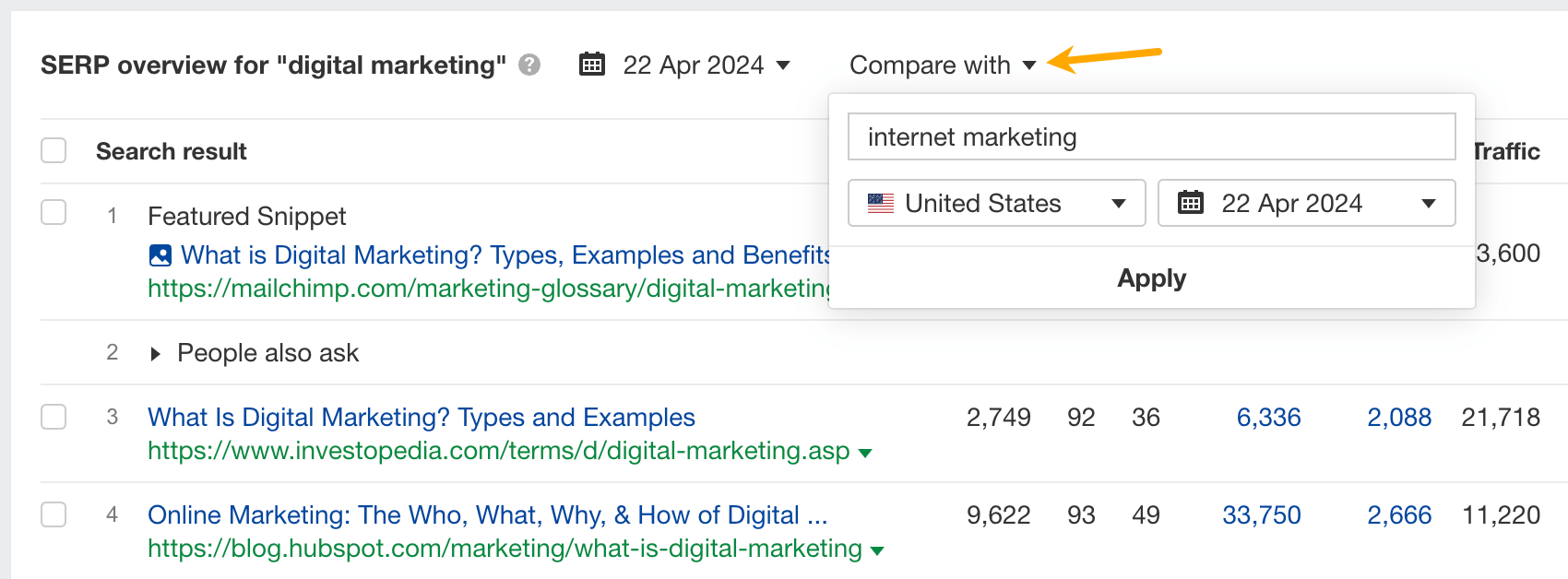

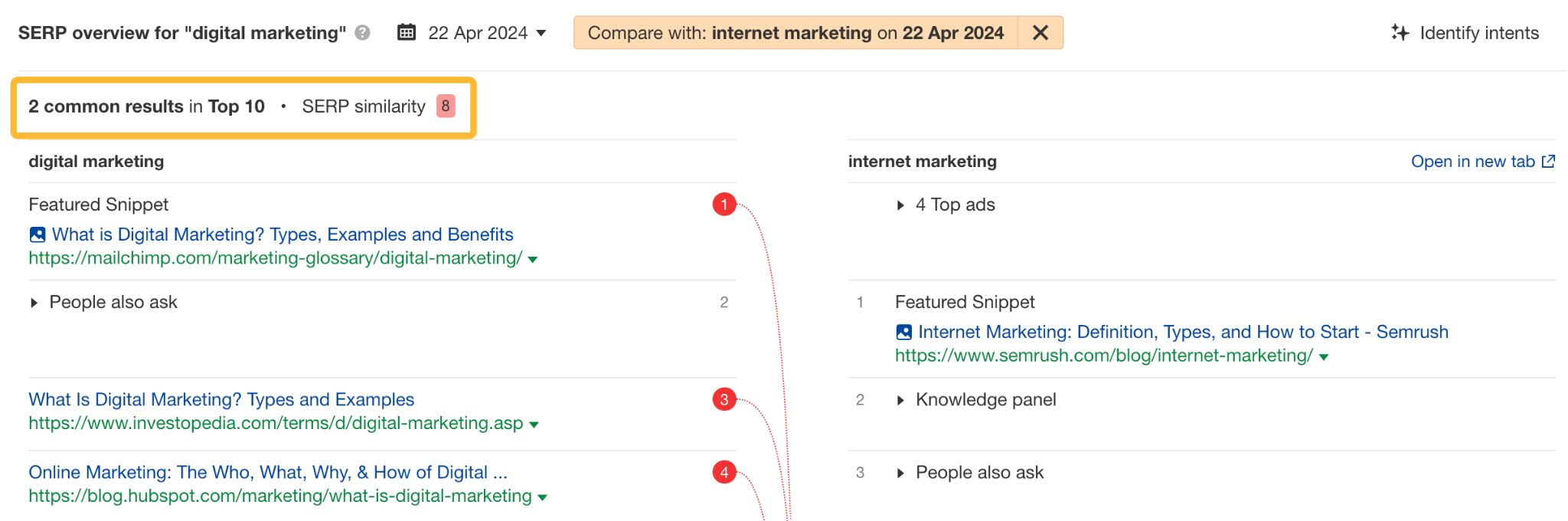

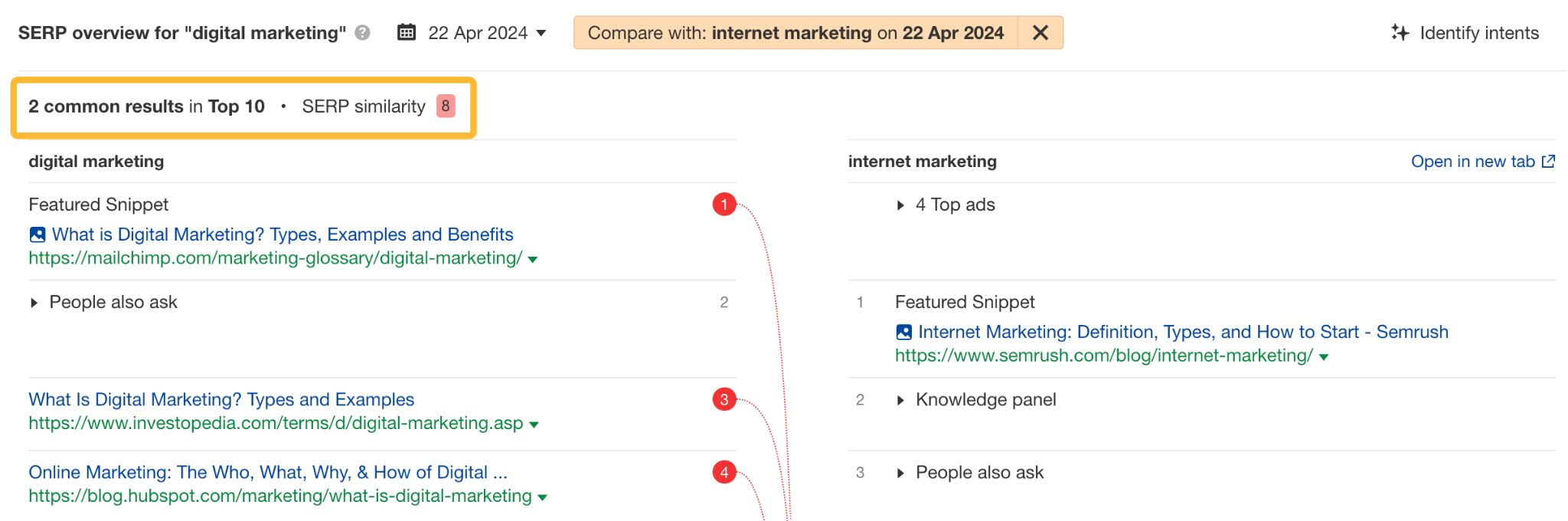

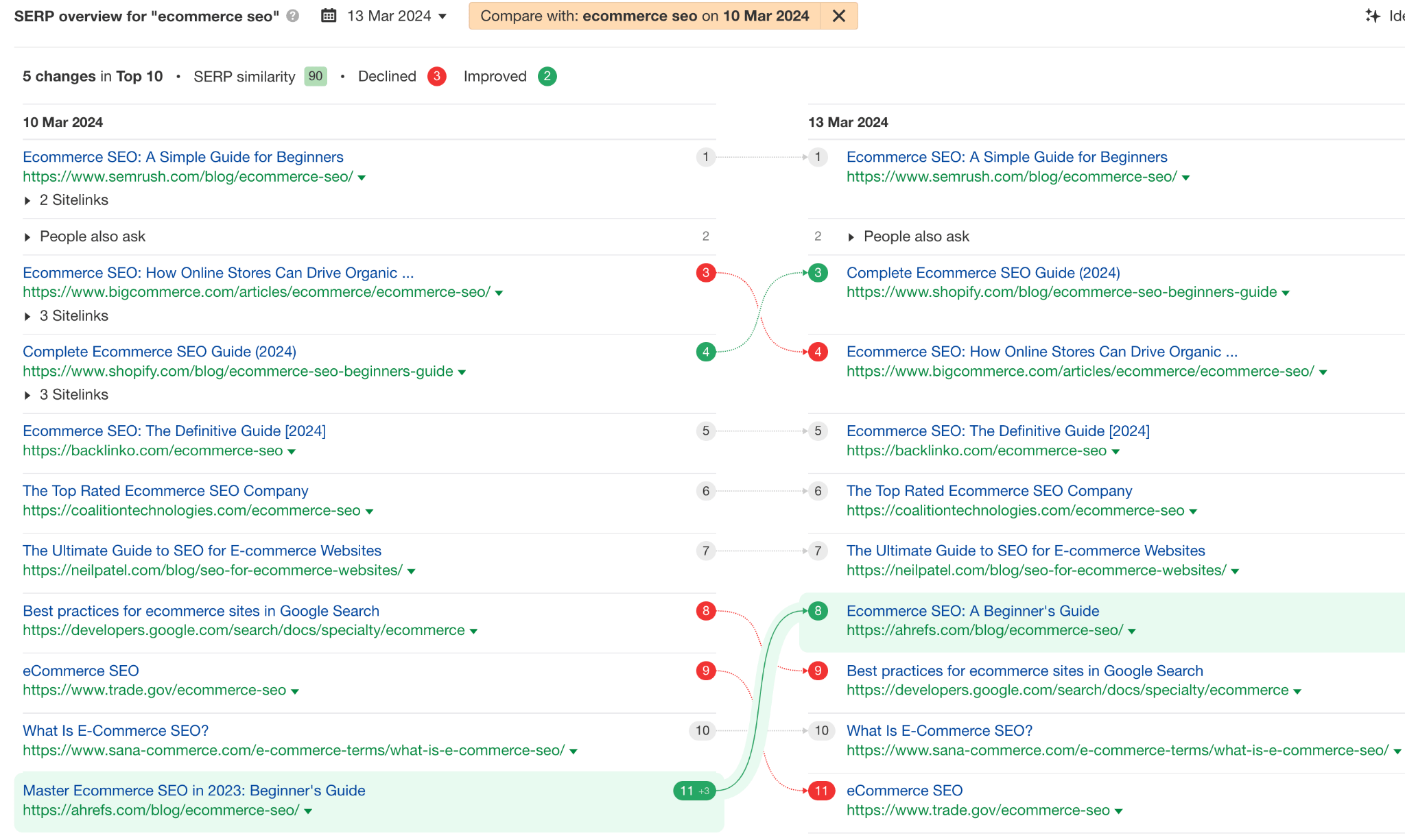

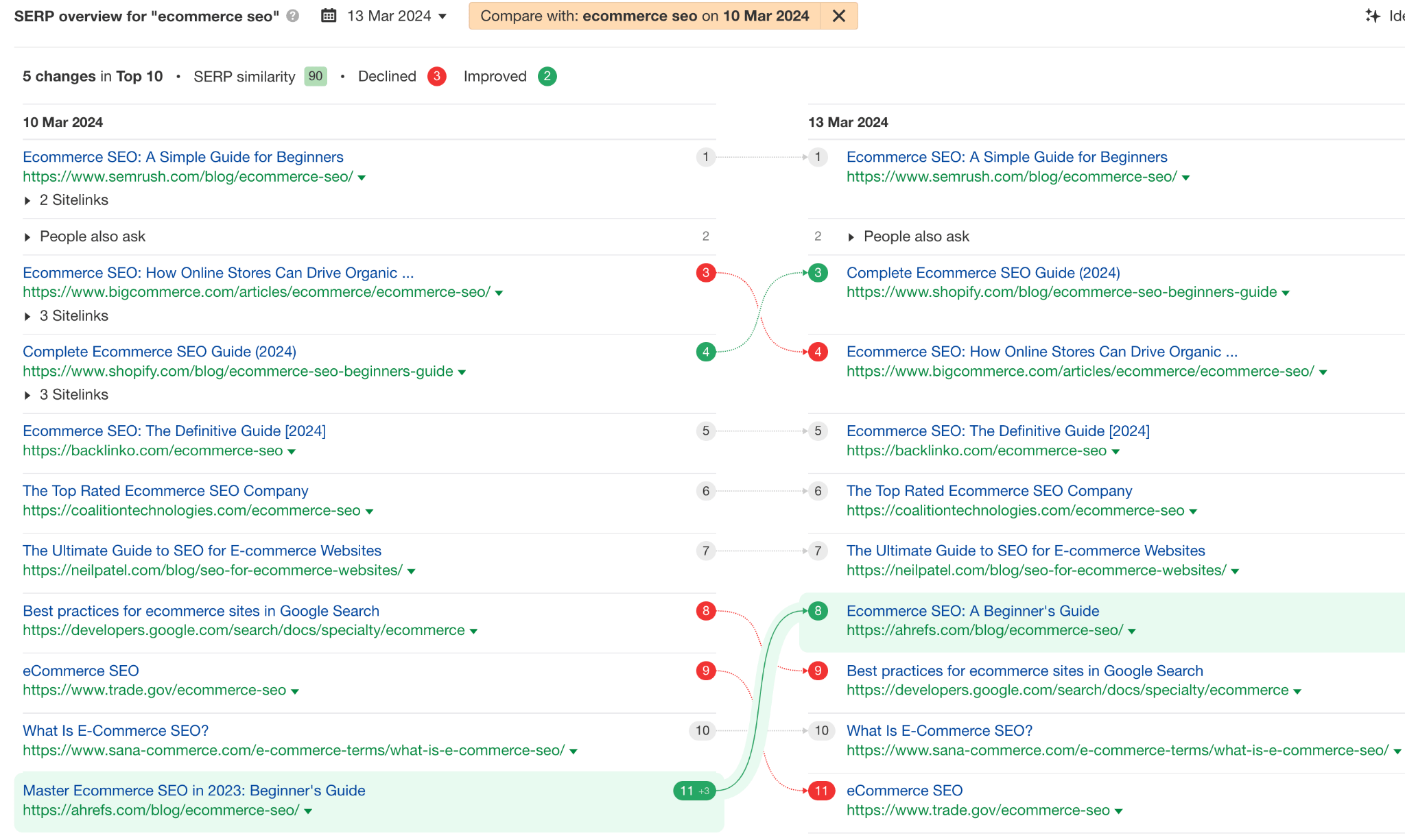

If you stumble across two similar keywords there’s an easy way to determine if they belong on the same page.

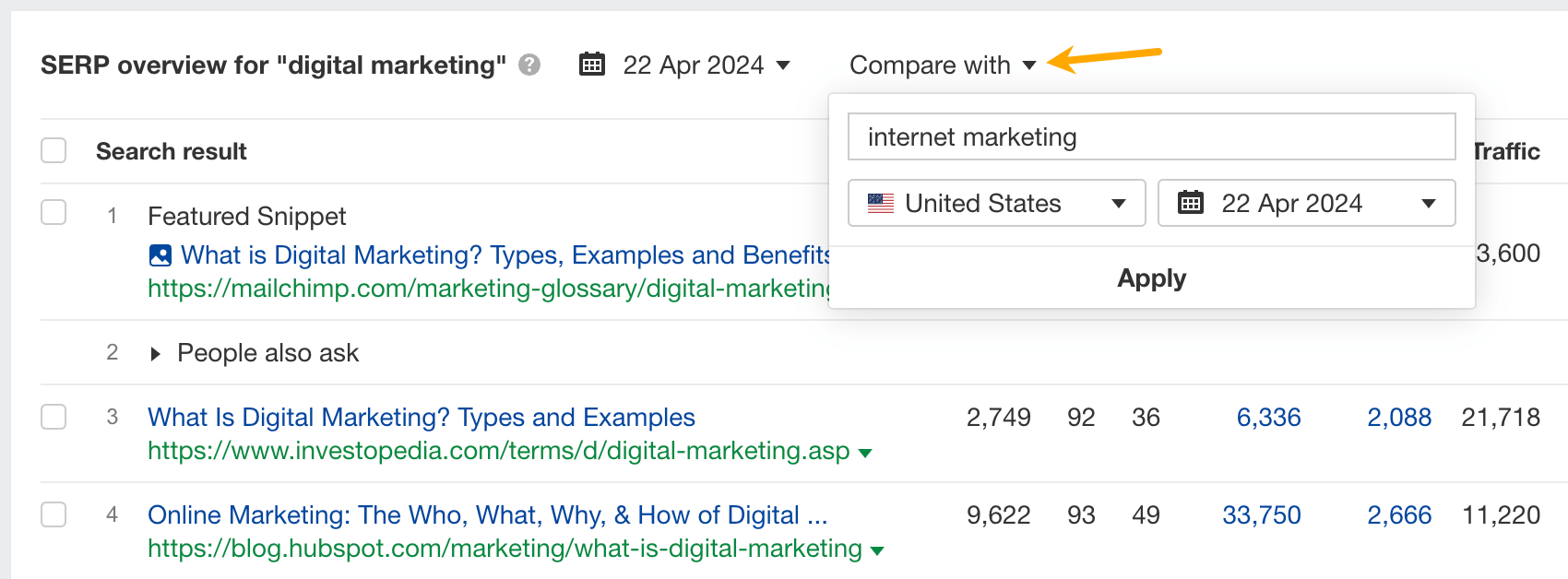

- Enter the keyword in Keywords Explorer.

- Scroll to SERP overview, click Compare with, and enter the keyword to compare with.

- Few common results and low SERP (search engine results page) similarity mean the two keywords should be targeted with separate pages.
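To make the idea of “common results” concrete, here’s a rough sketch that compares two lists of top-ranking URLs. It’s a plain overlap count plus a Jaccard ratio, which is my own simplification and not how Ahrefs calculates its SERP similarity score. The URLs are placeholders.

```python
def common_results(serp_a, serp_b):
    """Return URLs that appear in both top-ranking lists, in serp_a order."""
    in_b = set(serp_b)
    return [url for url in serp_a if url in in_b]


def overlap_ratio(serp_a, serp_b):
    """Share of unique URLs found in both lists (Jaccard index, 0.0 to 1.0)."""
    a, b = set(serp_a), set(serp_b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


# Placeholder SERPs for two similar keywords (not real ranking data).
serp_one = [
    "https://example.com/guide",
    "https://example.org/tips",
    "https://example.net/tutorial",
]
serp_two = [
    "https://example.org/tips",
    "https://example.com/pricing",
    "https://example.net/reviews",
]

shared = common_results(serp_one, serp_two)
print(shared)
print(round(overlap_ratio(serp_one, serp_two), 2))
```

A ratio near 1 suggests Google treats both keywords as the same topic, so one page can target both. A ratio near 0 points to separate pages. Where exactly to draw the line is a judgment call.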

Once you have your target keyword, you can include it in relevant places in your on-page content, including:

- Key elements of search intent (content type, format, and angle).

- URL slug.

- Title and H1.

- Meta description.

- Subheadings (H2 – H6).

- Main content.

- Anchor text for links.

And, just so we’re on the same page, the target keyword is the topic of the content and the main keyword you’ll be optimizing for and tracking later on.

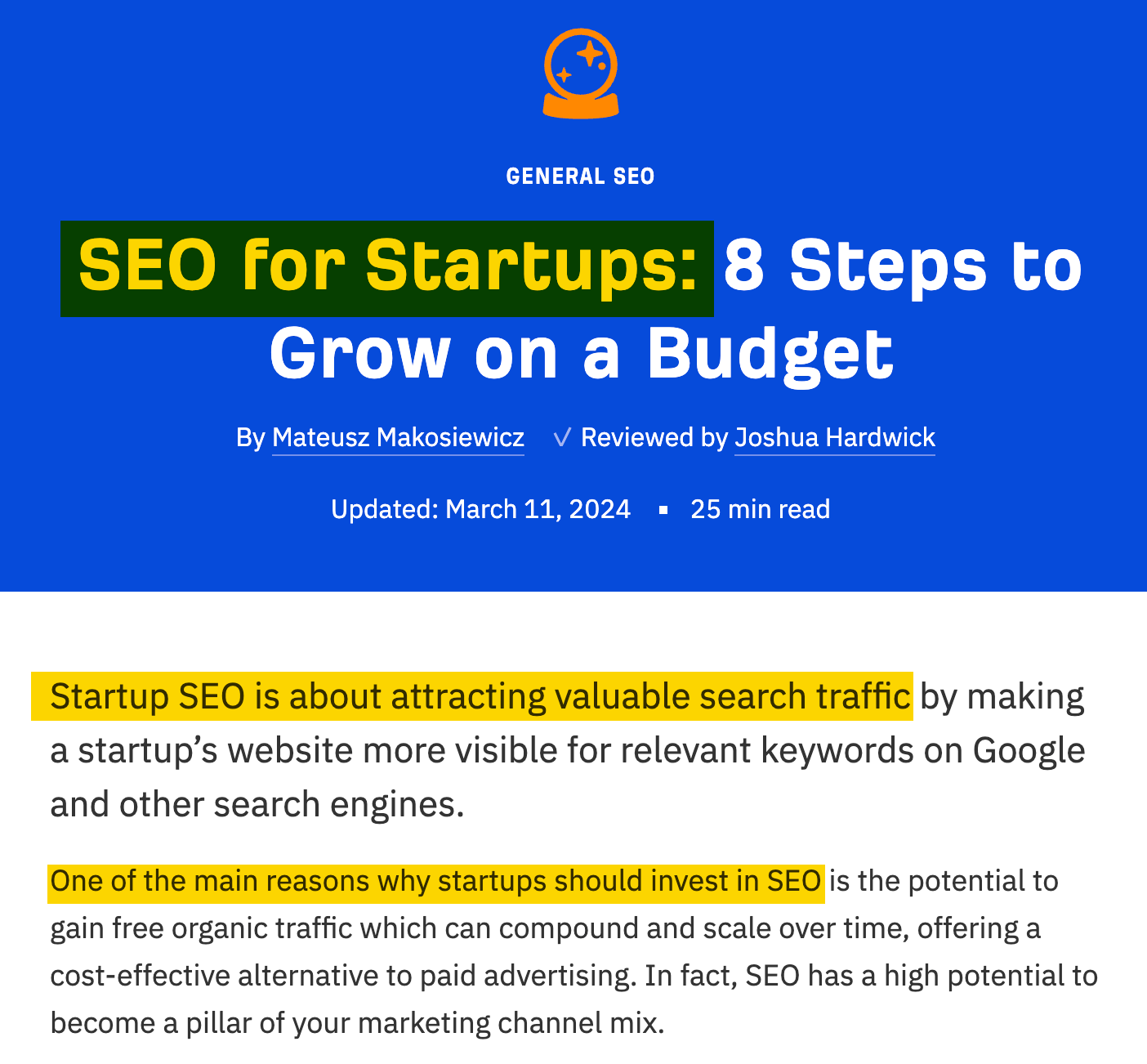

Use the target keyword to determine the search intent

Search intent is the reason behind the search. Understanding it tells you what users are looking for and what you need to deliver in your content.

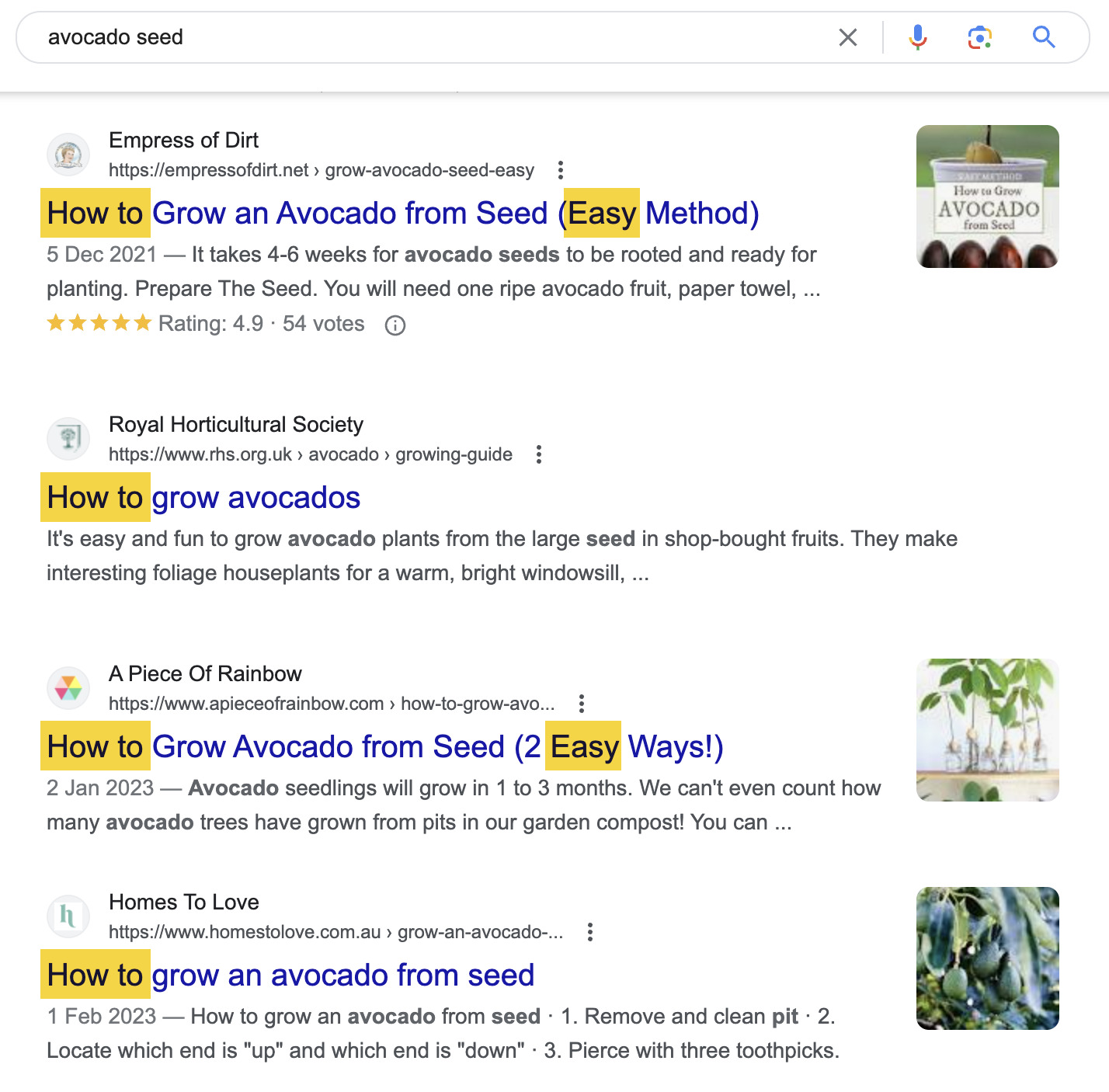

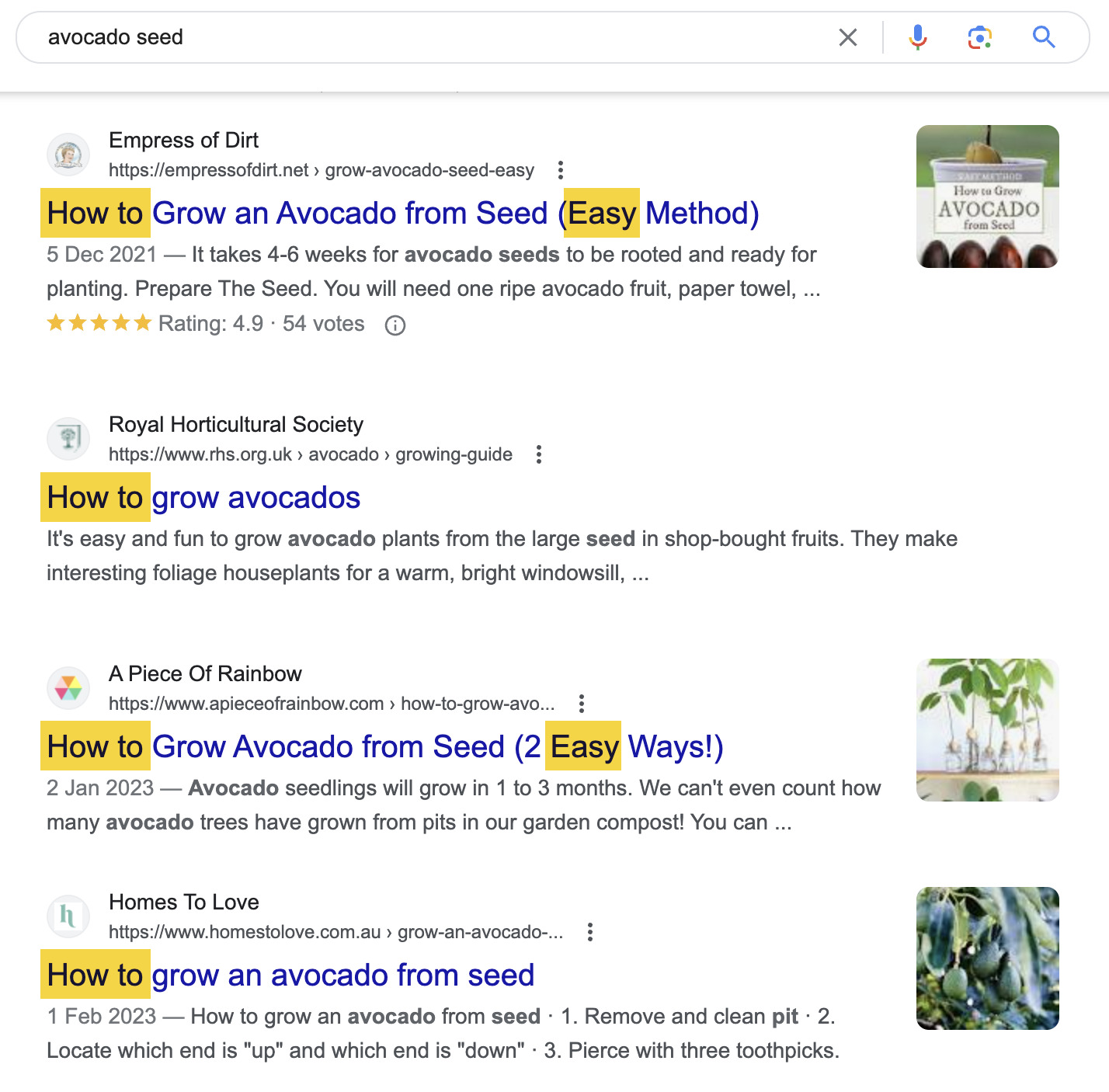

To identify search intent, look at the top-ranking results for your target keyword on Google and identify the three Cs of search intent:

- Content type – What is the dominating type of content? Is it a blog post, product page, video, or something else? If you’ve already done that during the keyword research phase (highly recommended), there are only two elements to go.

- Content format – Some common formats include how-to guides, list posts, reviews, comparisons, etc.

- Content angle – The unique selling point of the top-ranking pages, e.g., “best,” “cheapest,” “for beginners,” etc. It provides insight into what searchers value in a particular search.

For example, most top-ranking pages for “avocado seed” are blog posts serving as how-to guides for planting the seed. The use of “easy” and “simple” angles indicates that searchers are beginners looking for straightforward advice.

Use the target keyword in the URL slug

A URL slug is the part of the URL that identifies a specific page on a website in a form readable by both users and search engines.

If you look at the URL of the page you’re on, that will be the last part, “how-to-use-keywords-seo”.

https://ahrefs.com/blog/how-to-use-keywords-seo

Google says to use words that are relevant to your content inside page URLs (source). Usually, the easiest way to do that is to set your target keyword in the slug part of the URL.
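If your CMS doesn’t generate slugs for you, turning a keyword or title into a slug takes only a few lines. Here’s a minimal sketch; the `slugify` function is my own helper, not part of any particular CMS, and it only handles ASCII letters and digits (accented characters are simply dropped).

```python
import re


def slugify(text):
    """Lowercase the text and join runs of letters/digits with hyphens."""
    text = text.lower()
    # Replace every run of characters that isn't a-z or 0-9 with one hyphen.
    text = re.sub(r"[^a-z0-9]+", "-", text)
    # Trim hyphens left over at the start or end.
    return text.strip("-")


print(slugify("How to Use Keywords for SEO"))
```

This keeps every word from the input. Whether to shorten the result further (for example, by dropping words like “for”) is an editorial choice, so the sketch doesn’t try to do it.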

Use the target keyword in the title and match it with the H1 tag

A title tag is a bit of HTML code used to specify the title of a webpage.

<title>How to Use Keywords for SEO: A-Z Guide For Beginners</title>

The H1 tag is an HTML heading that’s most commonly used to mark up a web page title.

<h1>How to Use Keywords for SEO: A-Z Guide For Beginners</h1>

Both are very important to Google and searchers. Since they both indicate what the page is about, you can just match them, like I did in this article.

Titles help Google understand the context of a page. What’s more, even a slight improvement to your title can improve your rankings.

Google advises focusing on creating good titles, which should be “unique to the page, clear and concise, and accurately describe the contents of the page” (source). It’s hard to think of a better way to accurately describe the contents other than using the target keyword.

If it makes sense for the title, aim for an exact match of the keyword. But if you need to insert a preposition or break the phrase, this won’t make Google think your page is less relevant. Google understands close variations of the keyword really well, so there’s no need to stuff in similar keywords, misspellings, etc.
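If you want to spot-check a page, here’s a small sketch that pulls the title tag and the first H1 out of an HTML string and reports whether they match and whether each contains the target keyword. It uses only Python’s standard `html.parser` module. The `TitleH1Parser` class and `check_title_and_h1` function are names I made up for this example, and the keyword test is a deliberately simple exact-phrase check, so a “False” for a close variation isn’t necessarily a problem.

```python
from html.parser import HTMLParser


class TitleH1Parser(HTMLParser):
    """Collect the text of the <title> tag and the first <h1> tag."""

    def __init__(self):
        super().__init__()
        self._current = None   # tag we are currently collecting text for
        self._h1_done = False  # only the first <h1> is recorded
        self.title = ""
        self.h1 = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title" or (tag == "h1" and not self._h1_done):
            self._current = tag

    def handle_endtag(self, tag):
        if tag == self._current:
            if tag == "h1":
                self._h1_done = True
            self._current = None

    def handle_data(self, data):
        if self._current == "title":
            self.title += data
        elif self._current == "h1":
            self.h1 += data


def check_title_and_h1(html, keyword):
    """Report title/H1 agreement and exact-phrase keyword presence."""
    parser = TitleH1Parser()
    parser.feed(html)
    title, h1 = parser.title.strip(), parser.h1.strip()
    return {
        "title_matches_h1": title == h1,
        "keyword_in_title": keyword.lower() in title.lower(),
        "keyword_in_h1": keyword.lower() in h1.lower(),
    }


page = (
    "<html><head>"
    "<title>How to Use Keywords for SEO: A-Z Guide For Beginners</title>"
    "</head><body>"
    "<h1>How to Use Keywords for SEO: A-Z Guide For Beginners</h1>"
    "</body></html>"
)
print(check_title_and_h1(page, "how to use keywords for seo"))
```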

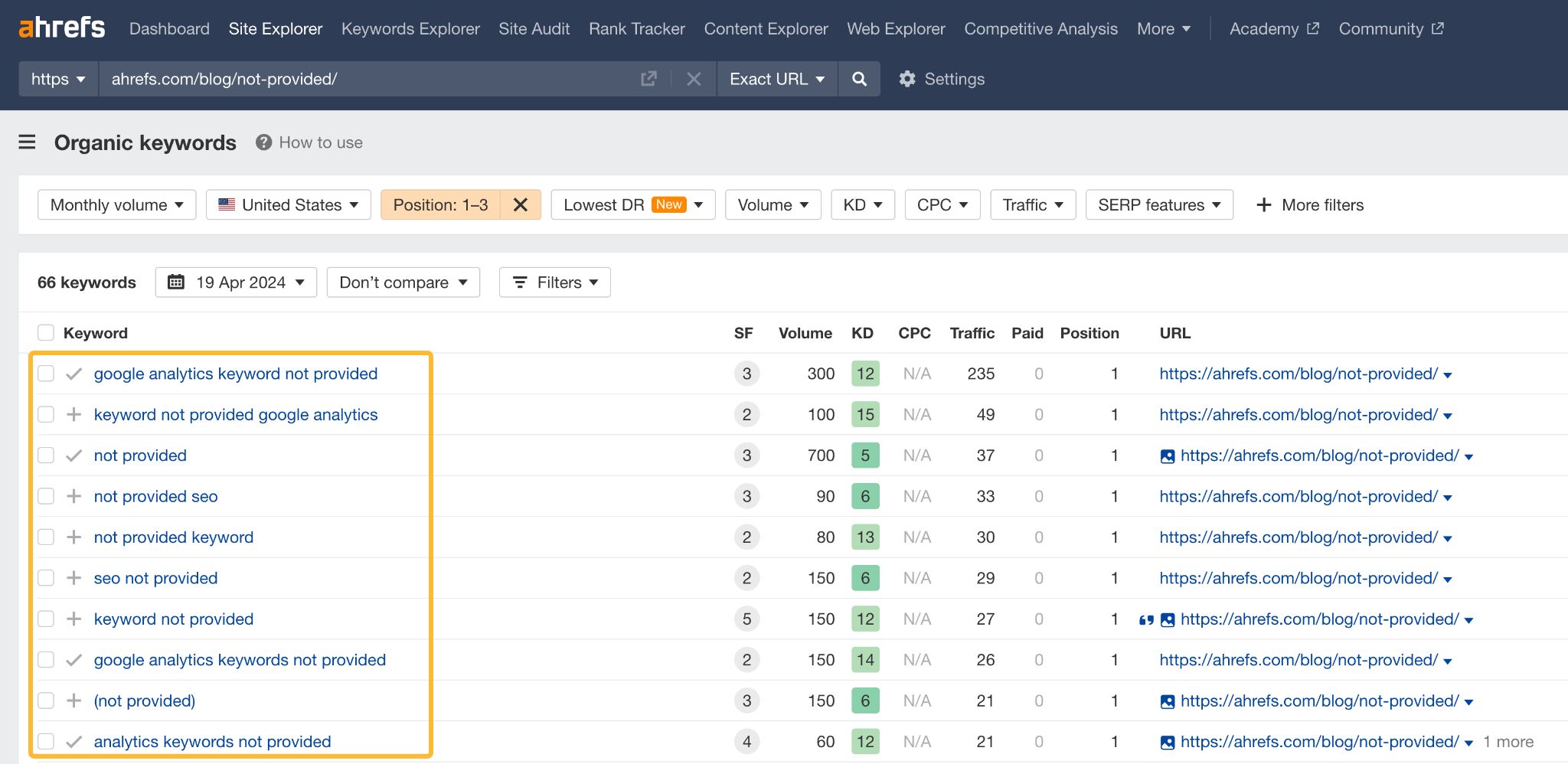

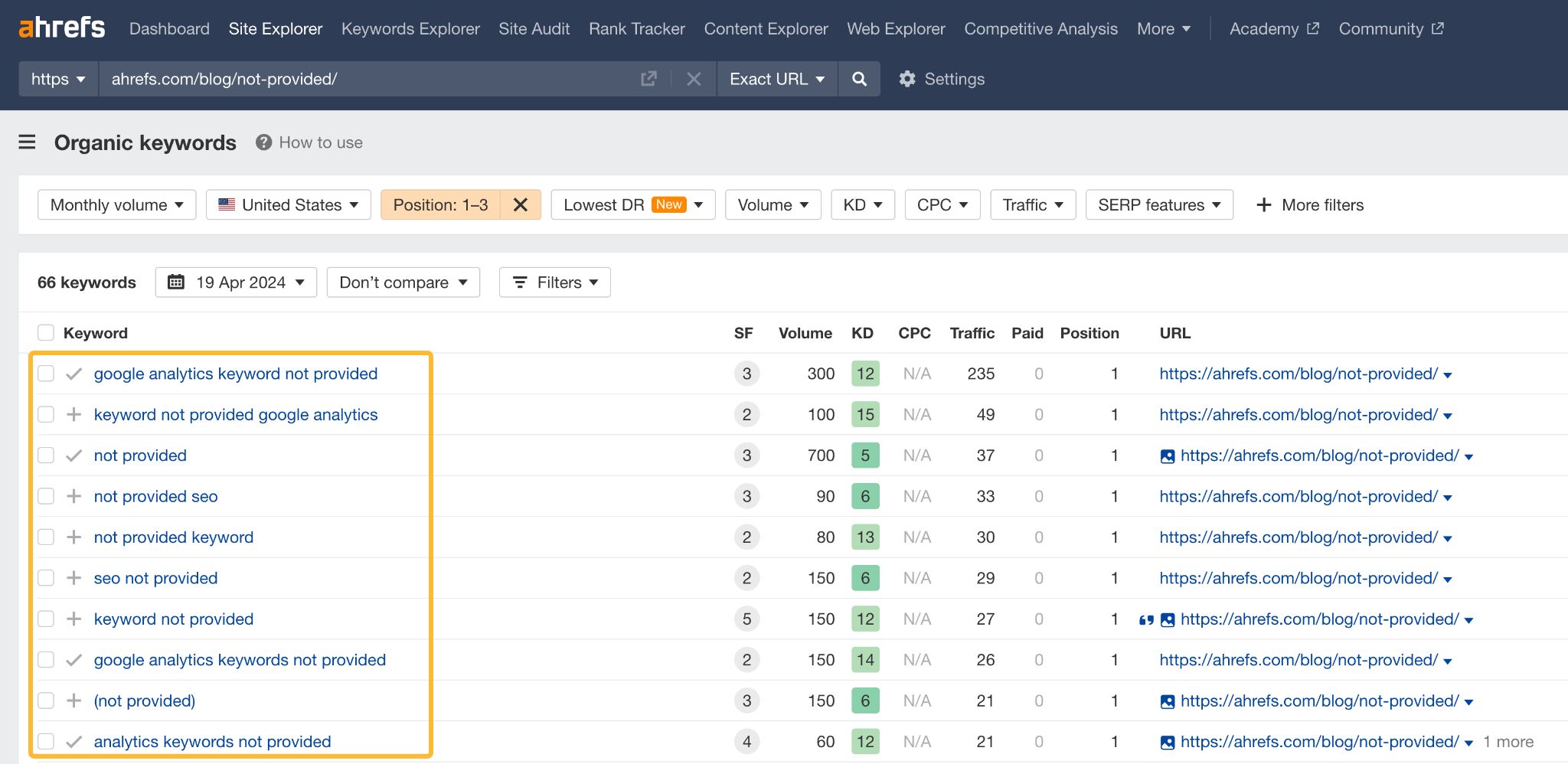

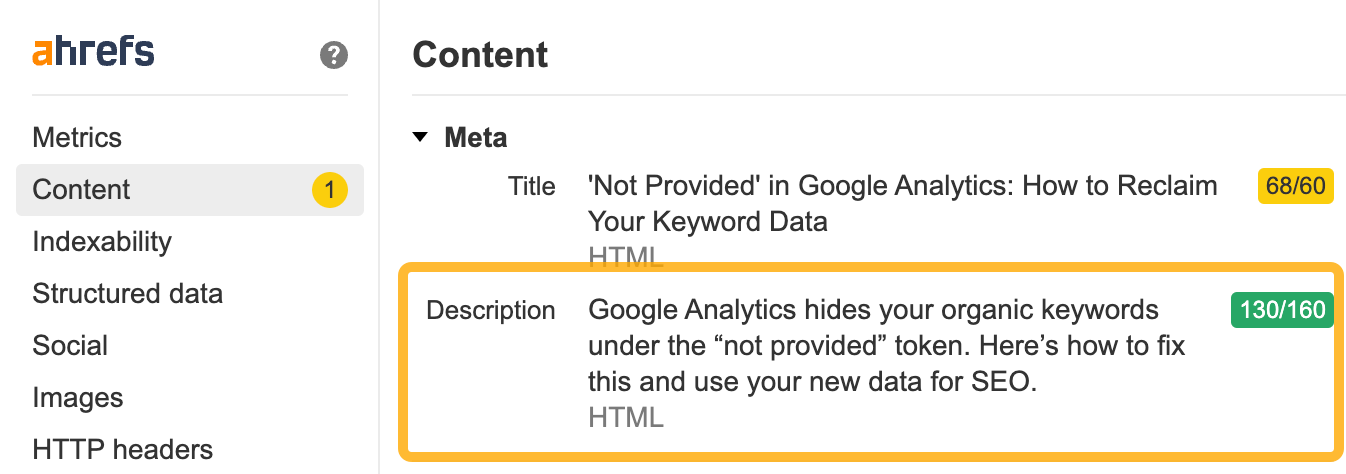

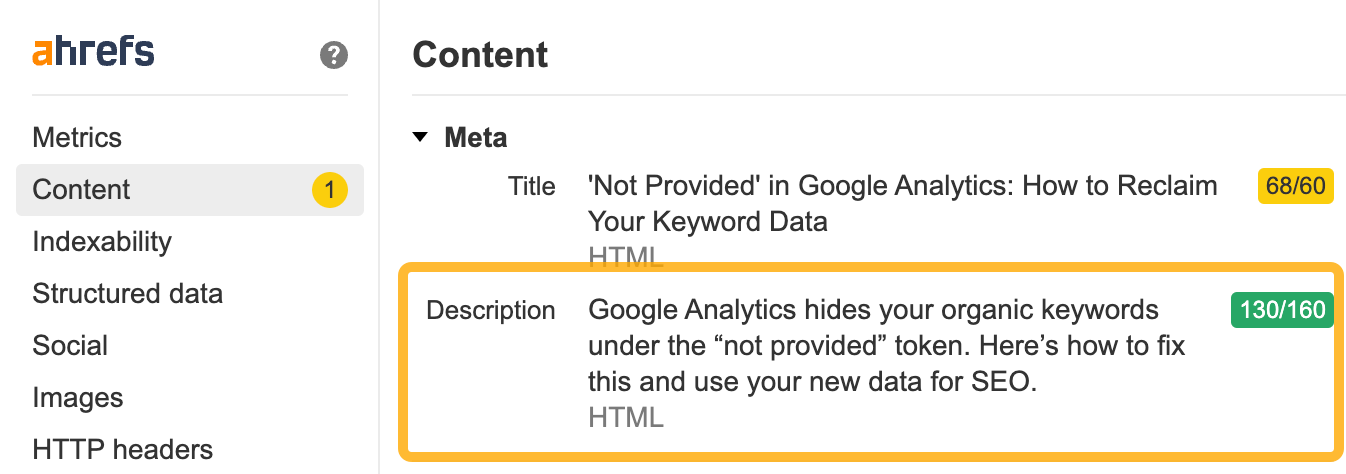

To illustrate, my old article on how to see keywords that Google Analytics won’t show ranks #1 for many variations of the phrase in the title.

Use the keyword in the meta description

Don’t write meta descriptions solely for Google; Google rewrites them more than half of the time (study) and doesn’t use them for ranking purposes. Instead, focus on crafting meta descriptions for searchers.

These descriptions appear in the SERPs, where users can read them. If your description is relevant and compelling, it can increase the likelihood of users clicking on your link.

Including your target keyword in the meta description usually happens naturally. For instance, consider the description of the article mentioned earlier. Working the keyword into the sentences is simply the most complete way to describe the issue.

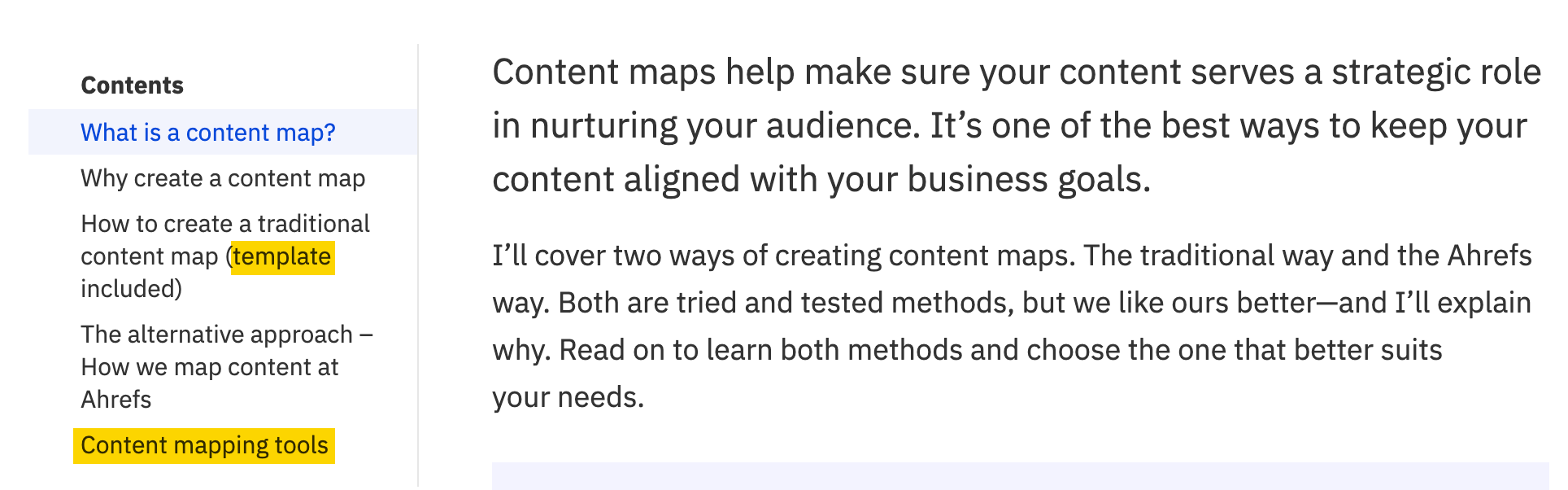

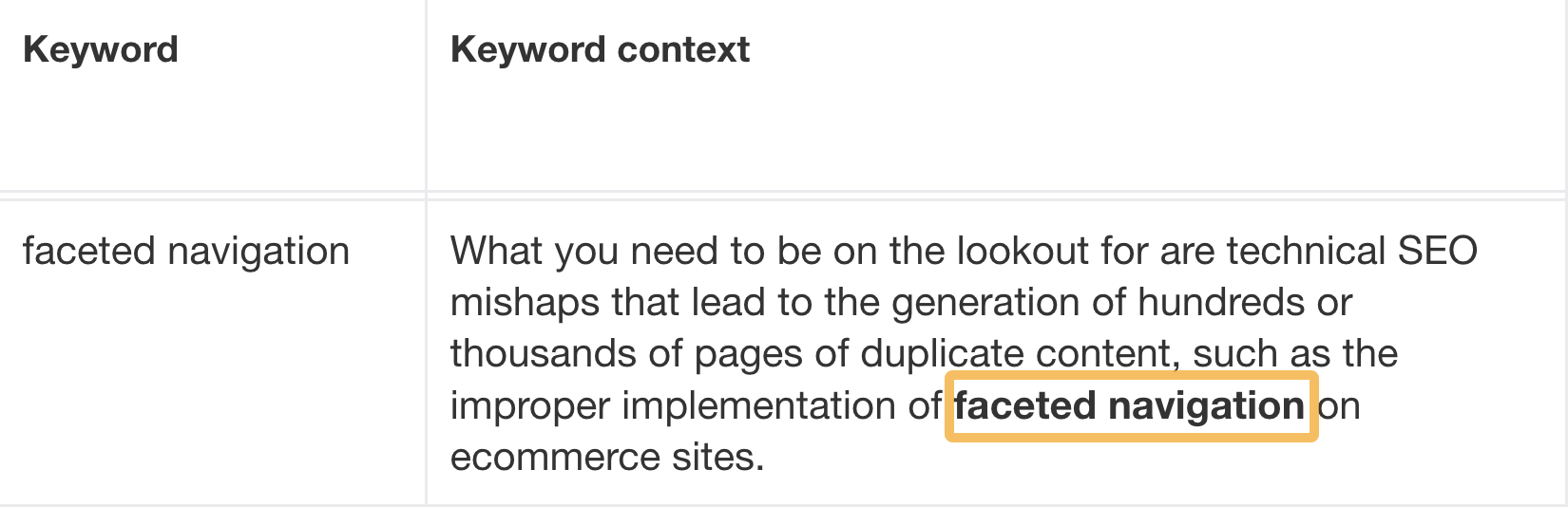

Use the target keyword to find secondary keywords

Secondary keywords are any keywords closely related to the primary keyword that you’re targeting with your page.

You can find them through your primary keyword and use them as subheadings (H2 to H6 tags) and talking points throughout the content. Here’s how.

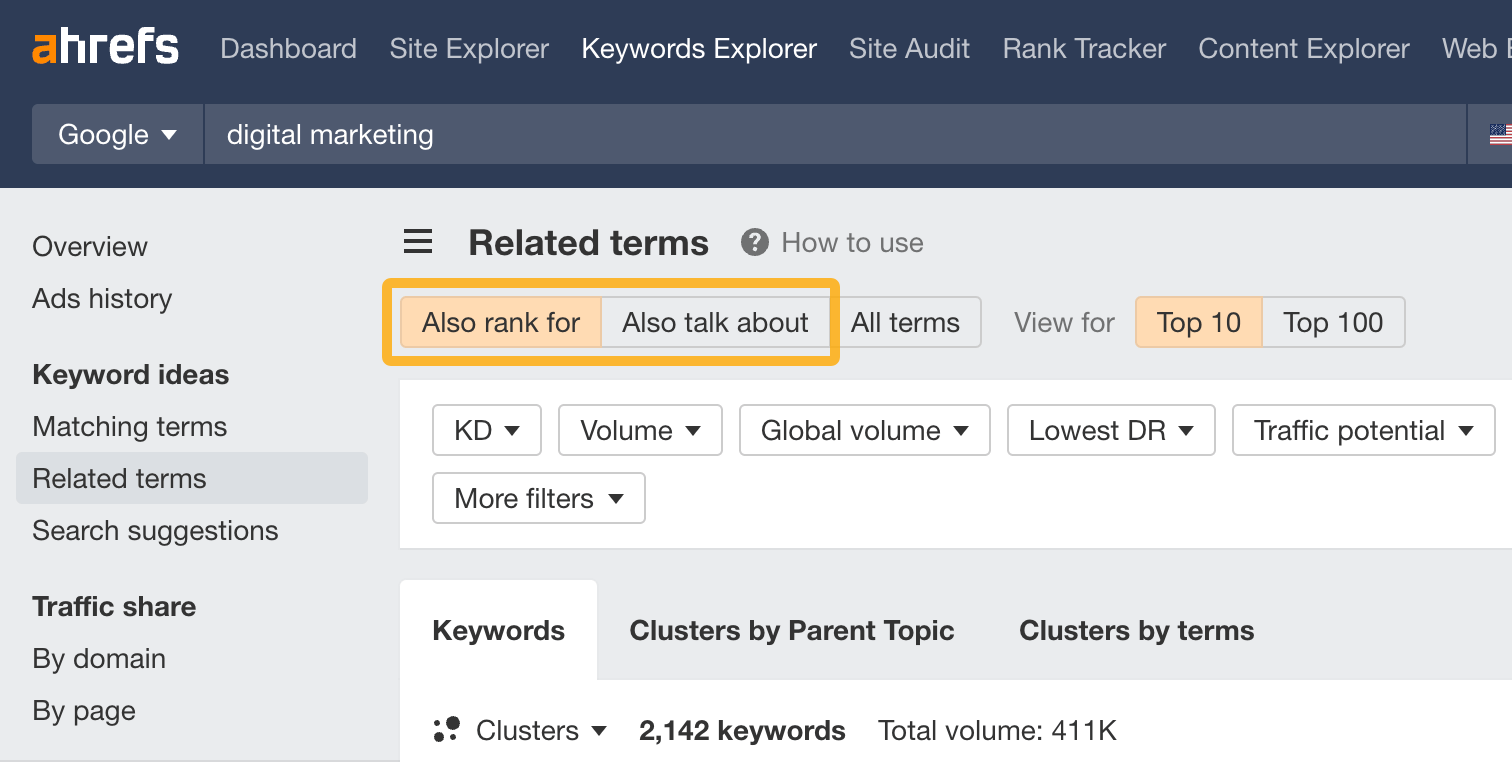

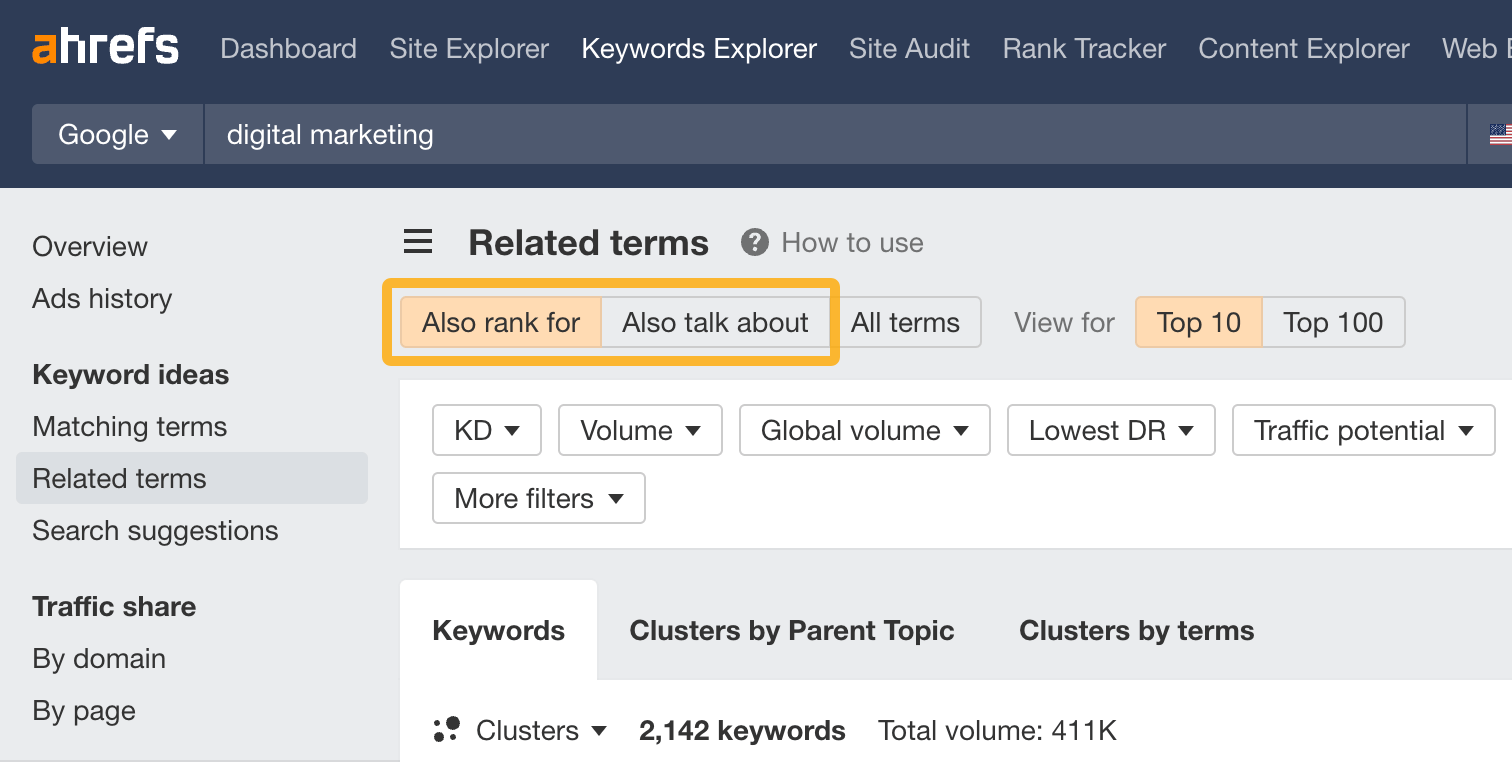

Go to Keywords Explorer and plug in your target keyword. From there, head on to the Related terms report and toggle between:

- Also rank for: keywords that the top 10 ranking pages also rank for.

- Also talk about: keywords frequently mentioned by top-ranking articles.

To see how to use these keywords in your text, look through the top-ranking pages and note how and where they cover them.

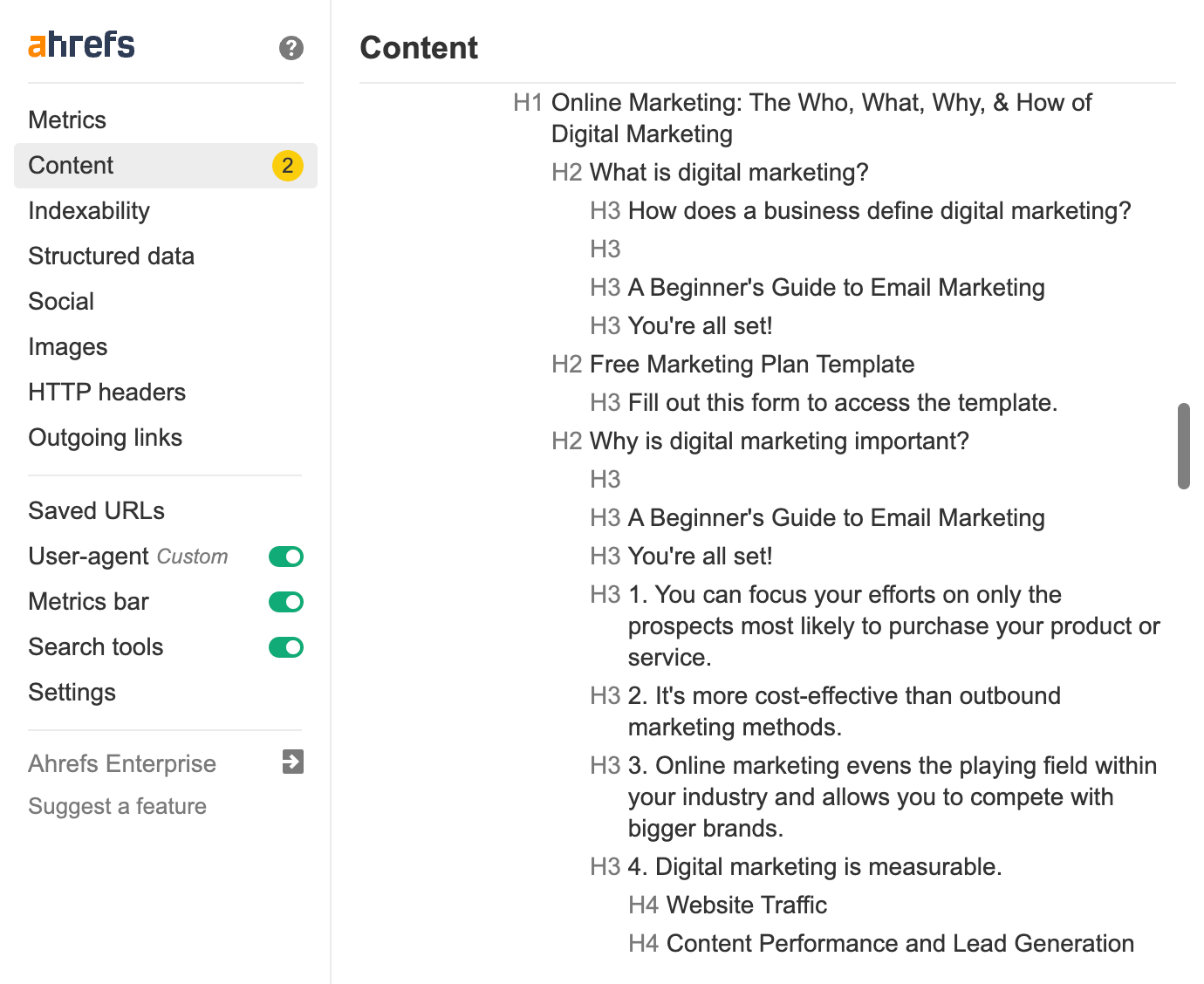

For example, looking at one of the top articles for “digital marketing”, we can see right away that some of the most important aspects are the definition, a template and importance. You can use the free Ahrefs SEO Toolbar to break down the structure of any page instantly.

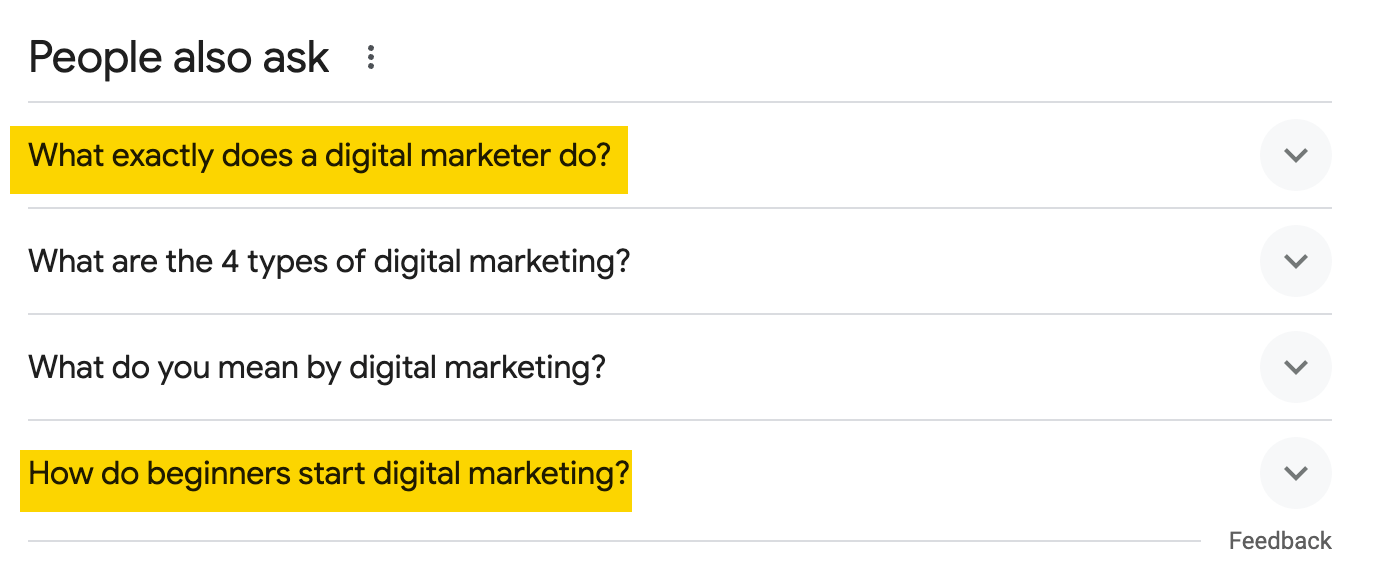

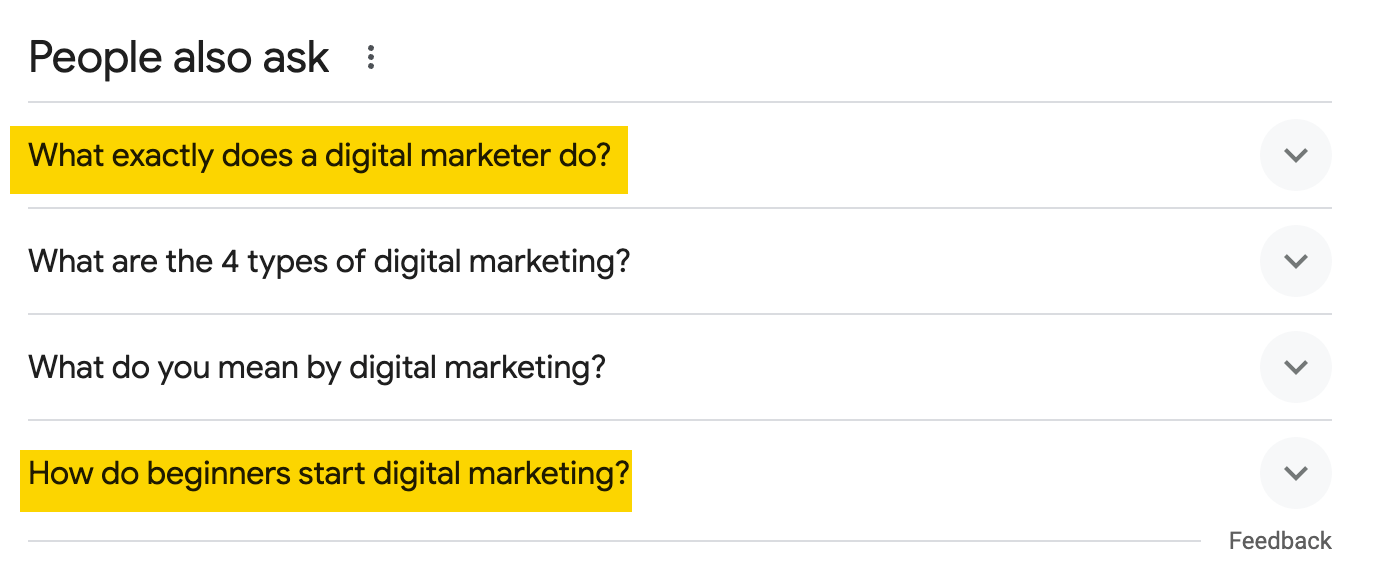

Another place you can look for inspiration is the People Also Ask box in the SERPs. Use it to find words and subtopics that may be worth adding to the article.

Pro tip

Optimizing an existing article?

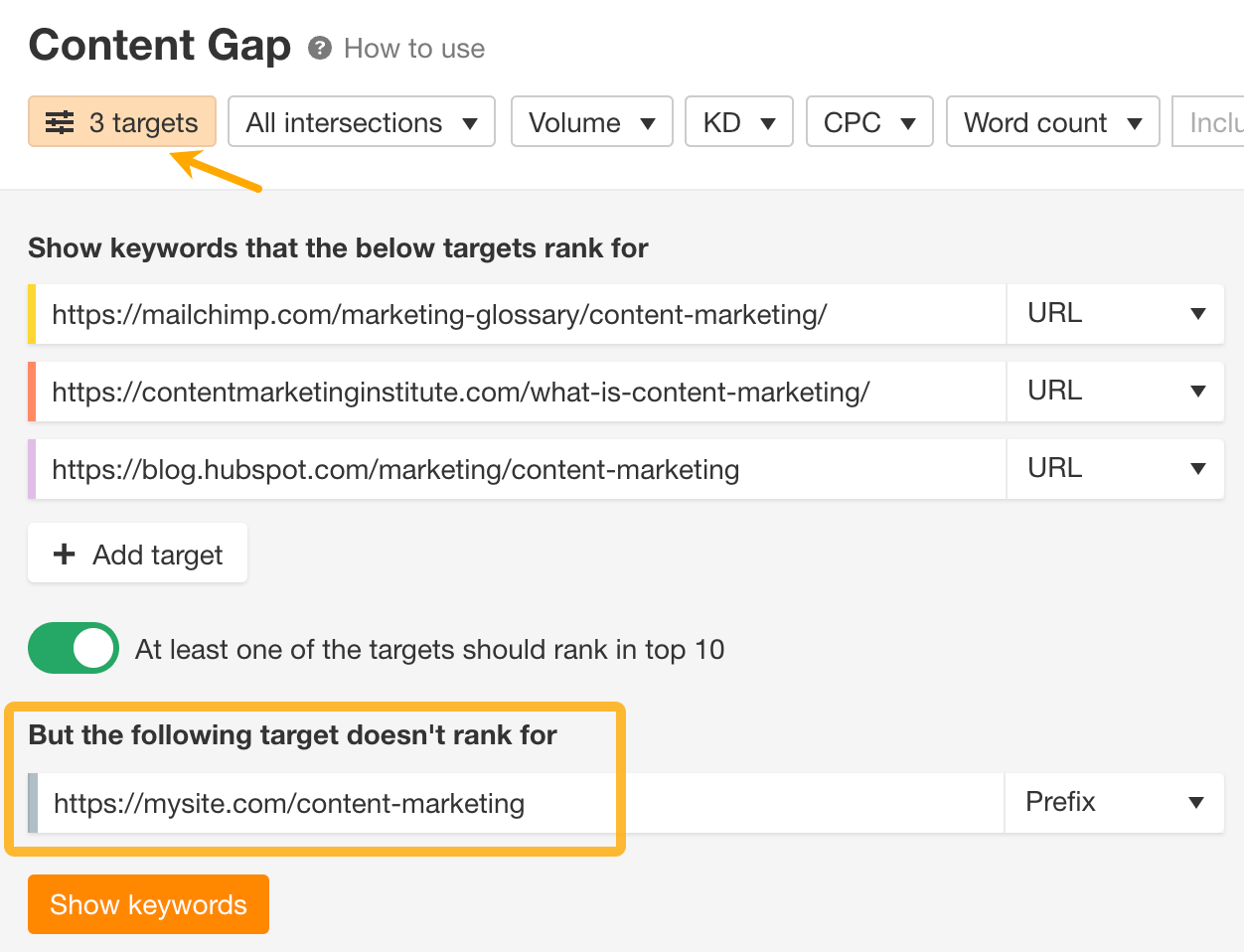

Use the Content Gap tool to find subtopics you may be missing. The tool shows keywords that your competitors’ pages rank for, and your page doesn’t.

- Go to Keywords Explorer and enter your target keyword.

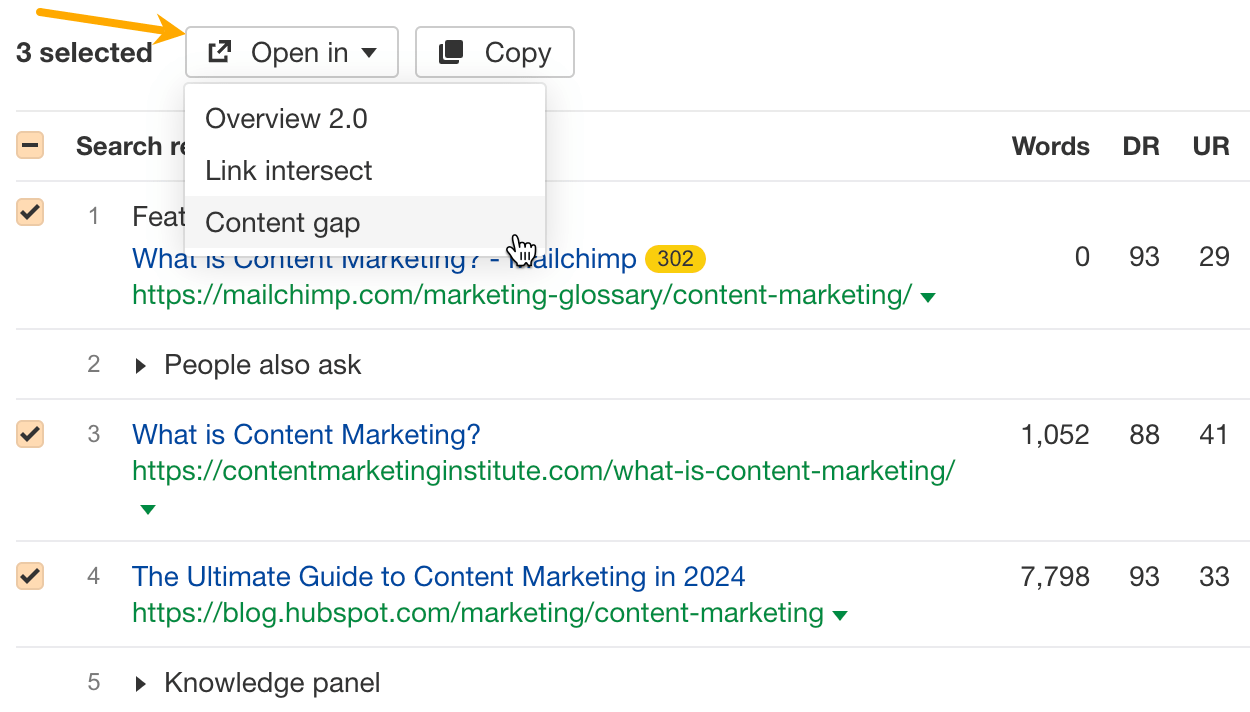

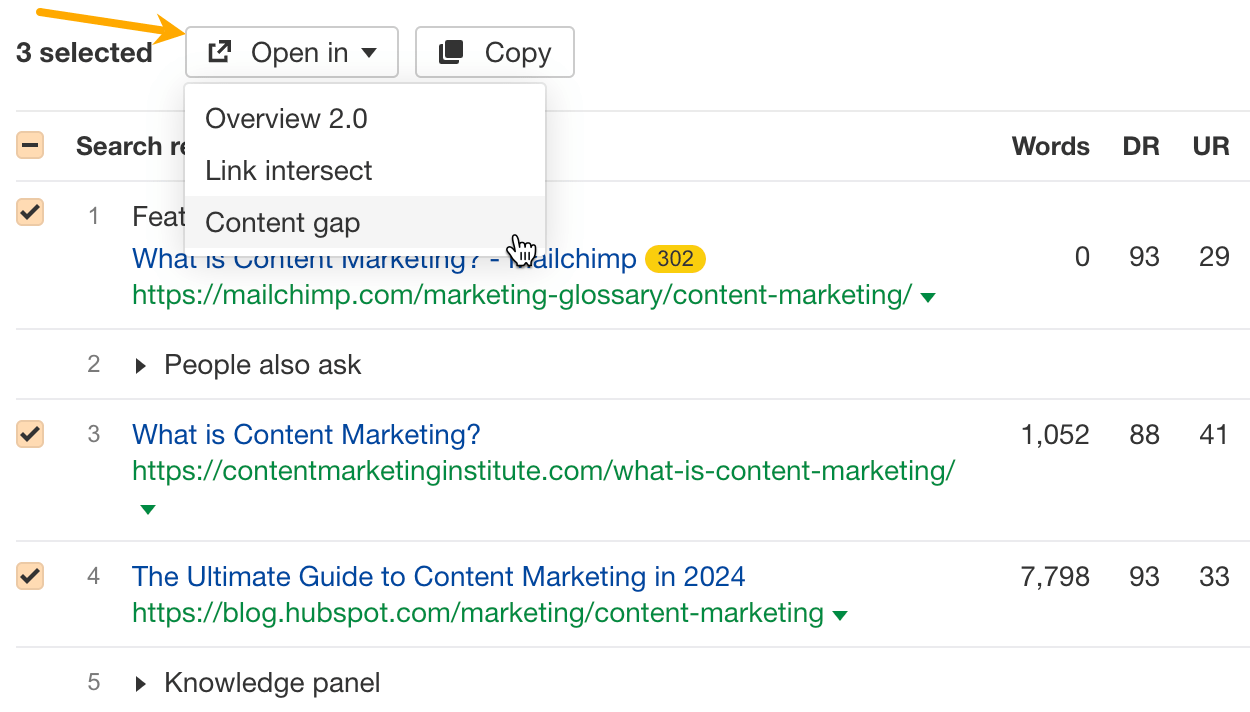

- Scroll down to the SERP overview, select a few top pages, and click Open in Content Gap.

- In Content Gap, click on Targets and add the page you’re optimizing in the last field.

Use primary and secondary keywords in the main content

To rank high on search engines, it’s important to include your keywords in your text. Even though Google is good at understanding similar words and variations, it still helps to use the specific keywords people might search for. Google explains that in their short guide to how search works:

The most basic signal that information is relevant is when content contains the same keywords as your search query. For example, with webpages, if those keywords appear on the page, or if they appear in the headings or body of the text, the information might be more relevant.

When writing, it’s important to incorporate keywords naturally. Start your content with the most relevant information that people are likely to search for. This ensures that key points are immediately visible to your readers and search engines.

If you have a secondary keyword that’s less critical but still relevant, consider giving it a dedicated section. This approach allows you to explore the topic in detail, rather than briefly mentioning it at the end of your content.

However, avoid overemphasizing the frequency of your keywords. Effective SEO involves more than just repeating keywords. If SEO were simply about keyword density, it would be straightforward, but such strategies don’t lead to long-term success and can make your content feel spammy.

For instance, if ‘content strategy’ is a central theme of your discussion and you mention it only once, Google might perceive your content as incomplete. On the other hand, stuffing your article with the term ‘content strategy’ more than necessary won’t help you outperform your competitors and could potentially lead to your site being flagged as spam.

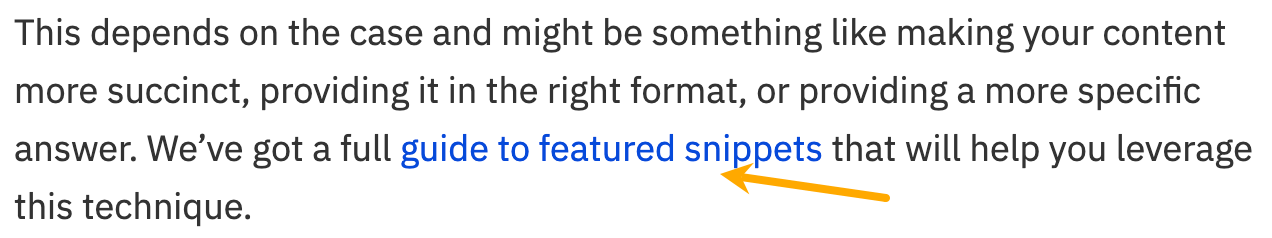

Use the target keyword in link anchor text and/or surrounding text

The anchor text or link text is the clickable text of an HTML hyperlink.

Google uses anchors to understand the page’s context. There even seems to be a consensus that anchor text is a ranking factor, although, according to our study, it is likely a weak one.

In situations like these, it’s just best to stick with Google’s advice:

Good anchor text is descriptive, reasonably concise, and relevant to the page that it’s on and to the page it links to. It provides context for the link, and sets the expectation for your readers. (…)

Remember to give context to your links: the words before and after links matter, so pay attention to the sentence as a whole.

So use the target keyword in the anchor text and/or the surrounding text, but keep it natural: add links only on pages that are related to the page you’re linking to, and use text that will help readers understand where and why you’re linking.

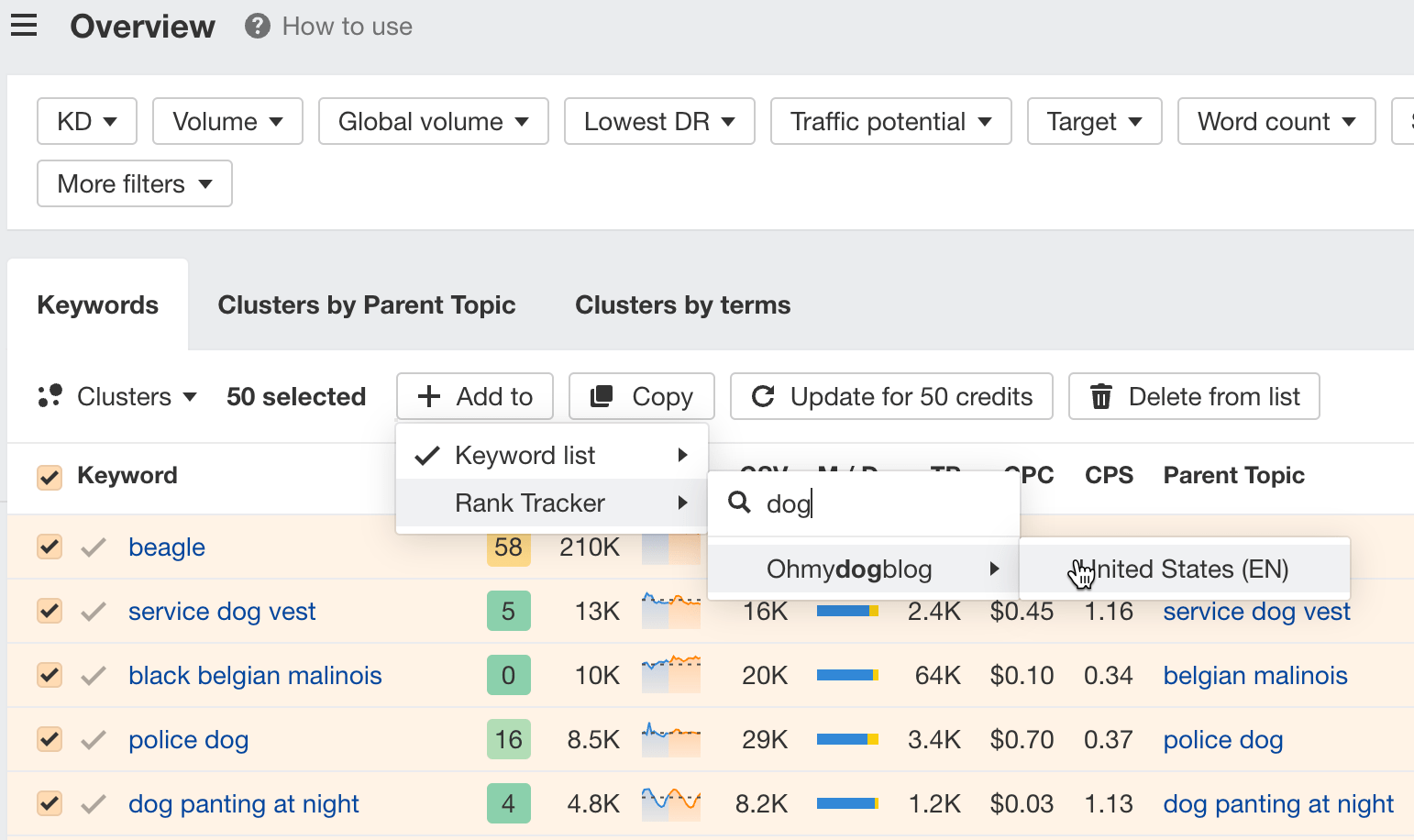

Rank tracking refers to monitoring the positions of a website’s pages in search engine results for specific keywords.

It’s pretty much an automated process; everything can be handled by a tool like Ahrefs’ Rank Tracker. No need to check rankings manually and note them down in a spreadsheet.

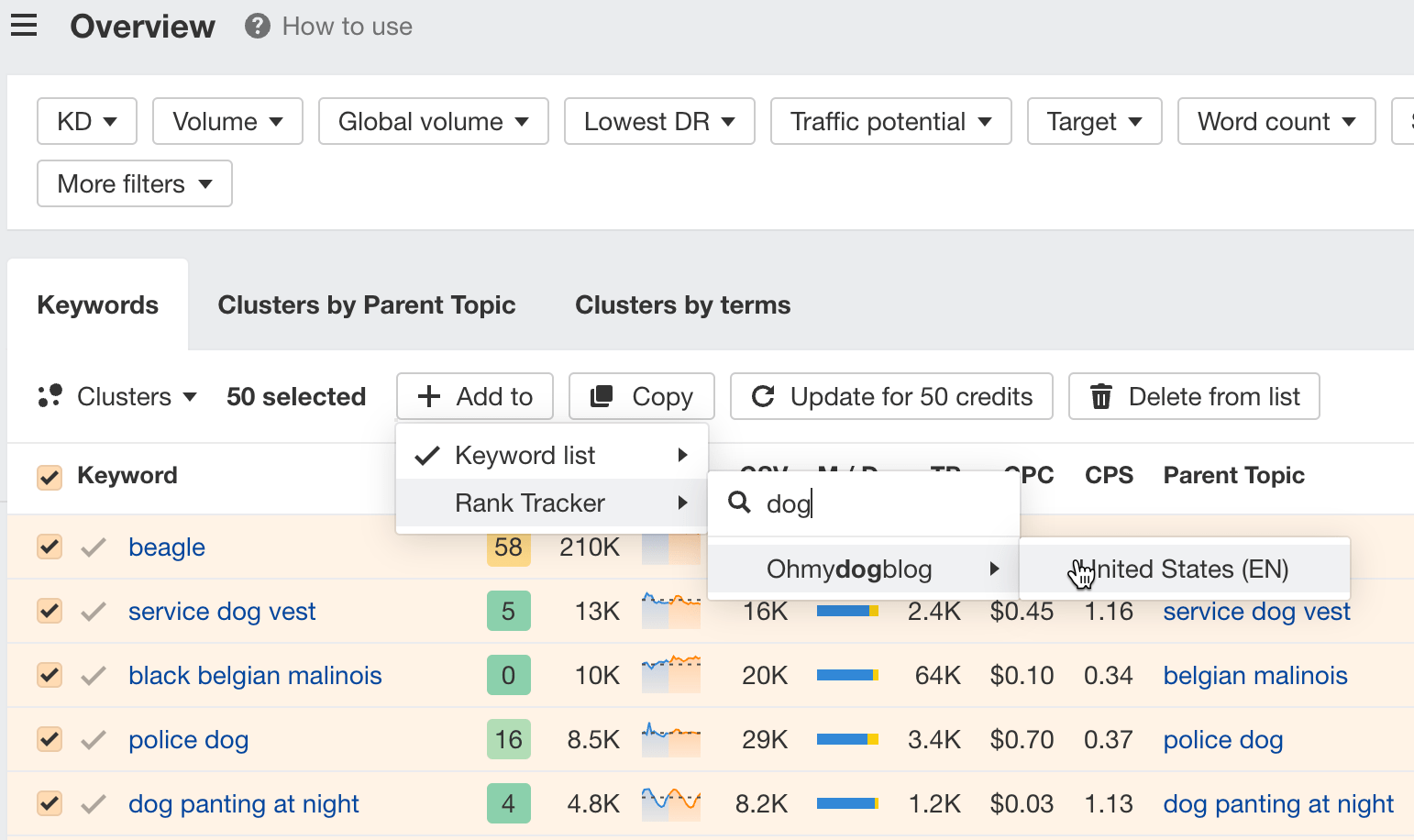

If you have a keyword list ready, all you need to do is add that list to Rank Tracker.

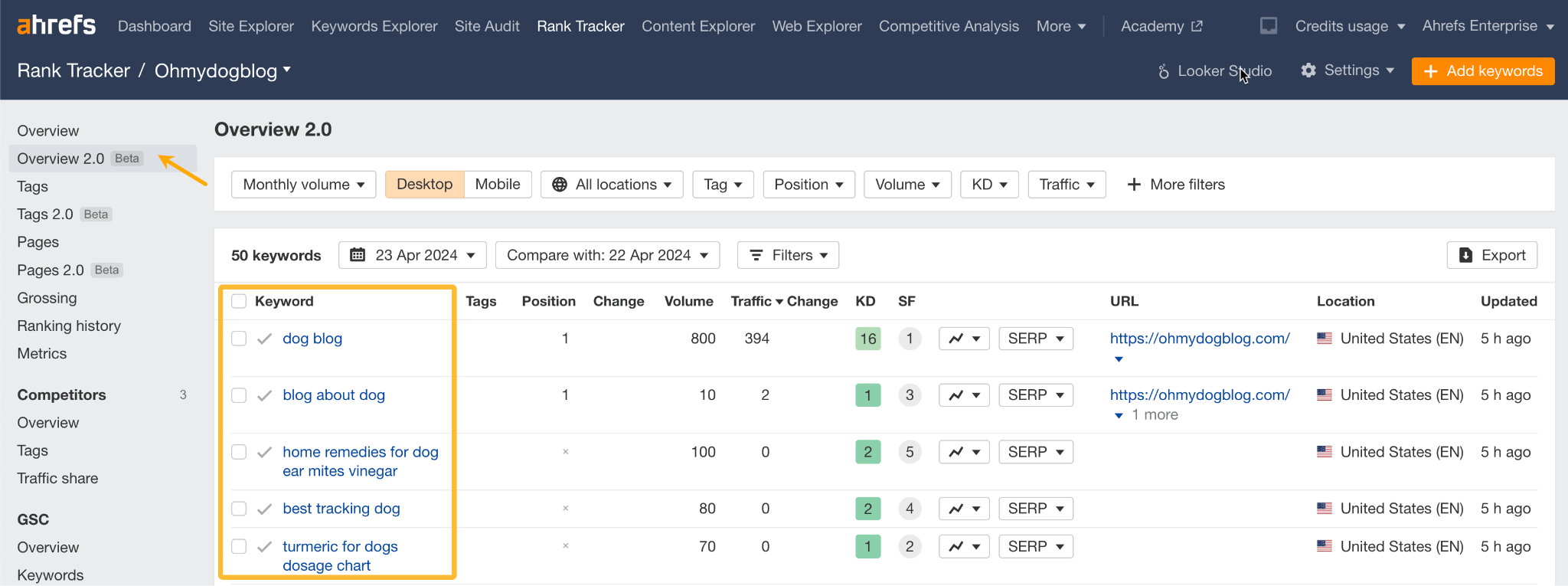

The keywords will appear in Rank Tracker’s Overview report.

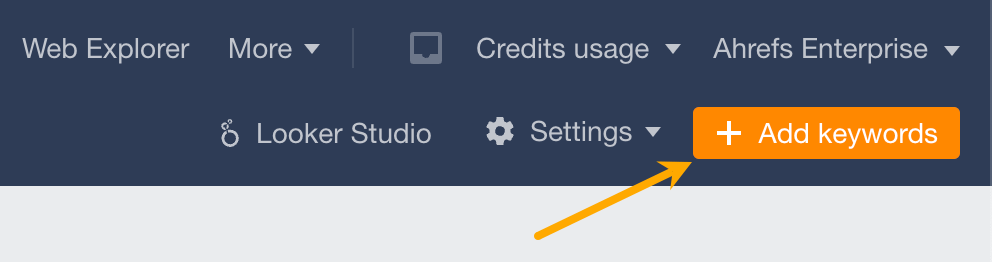

Another way to add keywords is to hit Add keywords in the top right corner (best for adding single keywords or importing a list from a document).

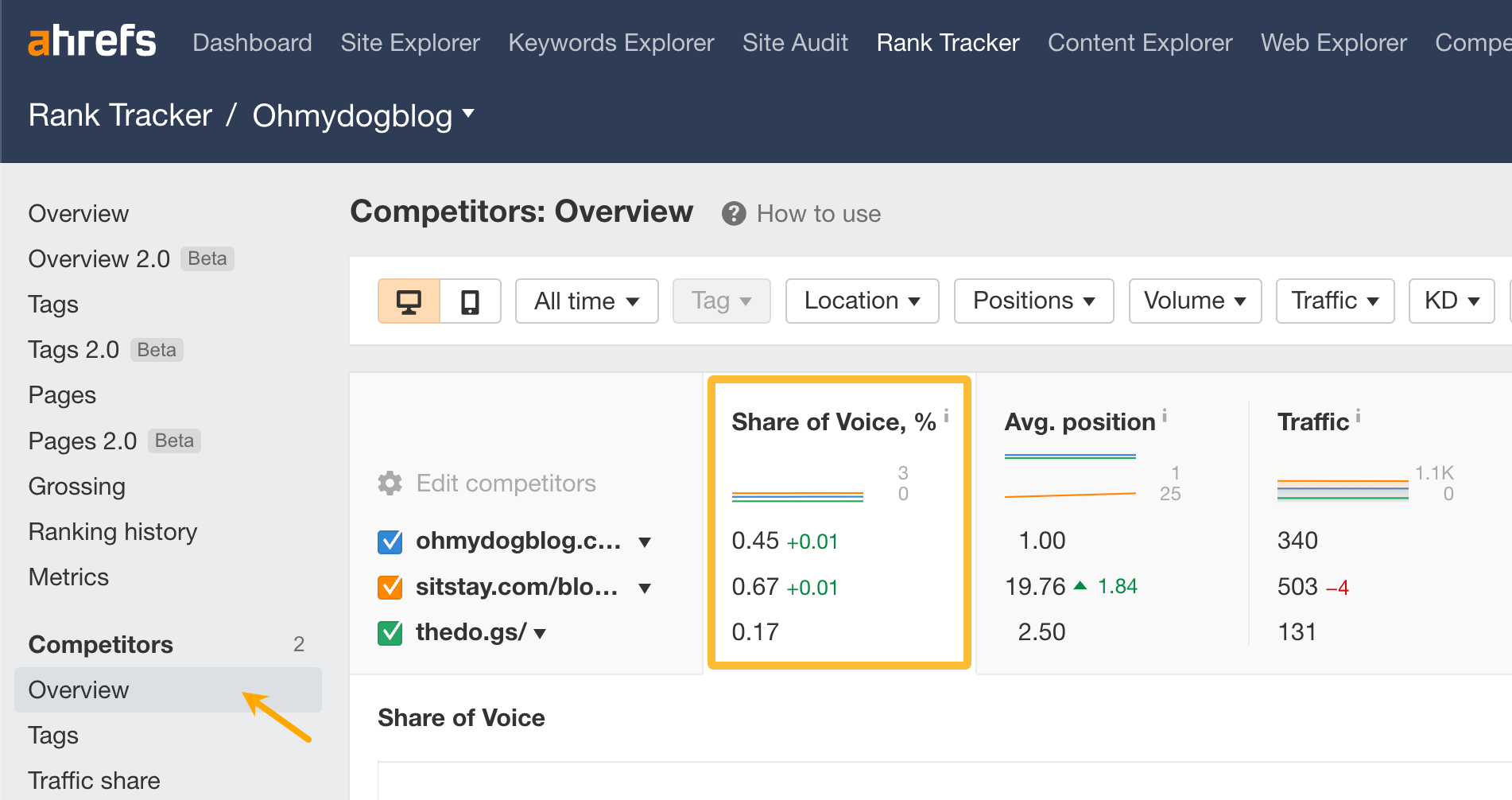

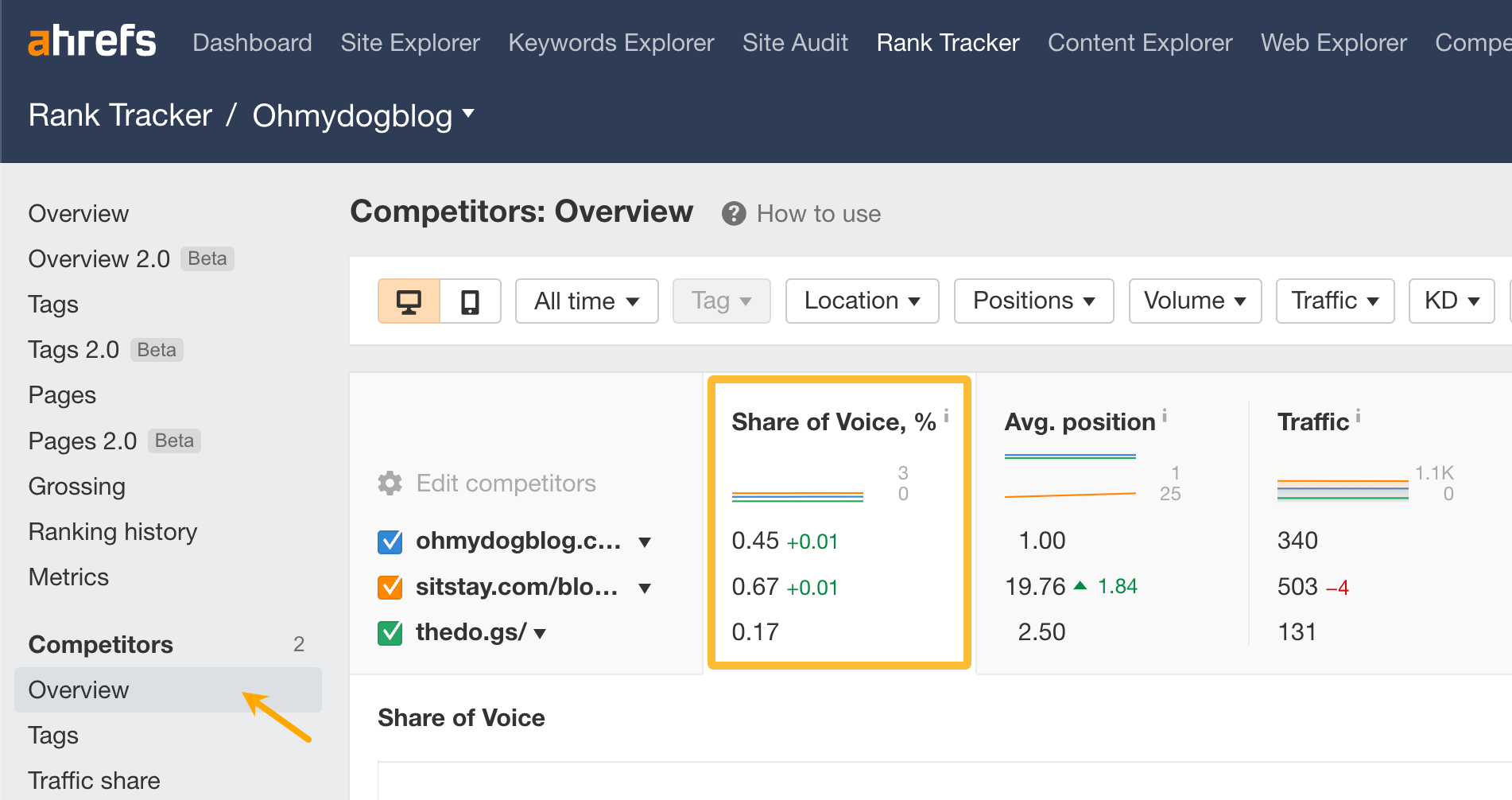

Now, to compare your performance against competitors, just go to the Competitors report. The metric I recommend tracking is SOV (Share of Voice). It shows what share of clicks goes to your pages compared with your competitors’ pages.

One of the key advantages of SOV is that it accounts for fluctuations in search volume trends. Therefore, if you notice a decrease in traffic but maintain a high SOV, it indicates that the drop is due to a decrease in the overall popularity of the keywords, not a decline in your SEO effectiveness.
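Here’s a back-of-the-envelope illustration of why SOV is robust to swings in demand. The sketch below treats SOV as each site’s percentage of the total estimated clicks across the sites you’re comparing. That’s a simplification of mine, not the exact calculation Rank Tracker uses, and the domains and click numbers are invented.

```python
def share_of_voice(clicks_by_site):
    """Return each site's percentage of the total estimated clicks."""
    total = sum(clicks_by_site.values())
    if total == 0:
        return {site: 0.0 for site in clicks_by_site}
    return {site: 100 * clicks / total for site, clicks in clicks_by_site.items()}


# Invented numbers: search demand halves from one month to the next.
last_month = {"yoursite.example": 4000, "rival-a.example": 4000, "rival-b.example": 2000}
this_month = {"yoursite.example": 2000, "rival-a.example": 2000, "rival-b.example": 1000}

print(share_of_voice(last_month)["yoursite.example"])
print(share_of_voice(this_month)["yoursite.example"])
```

Your clicks dropped by half, but your share is unchanged in both months, which tells you the market shrank rather than your rankings.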

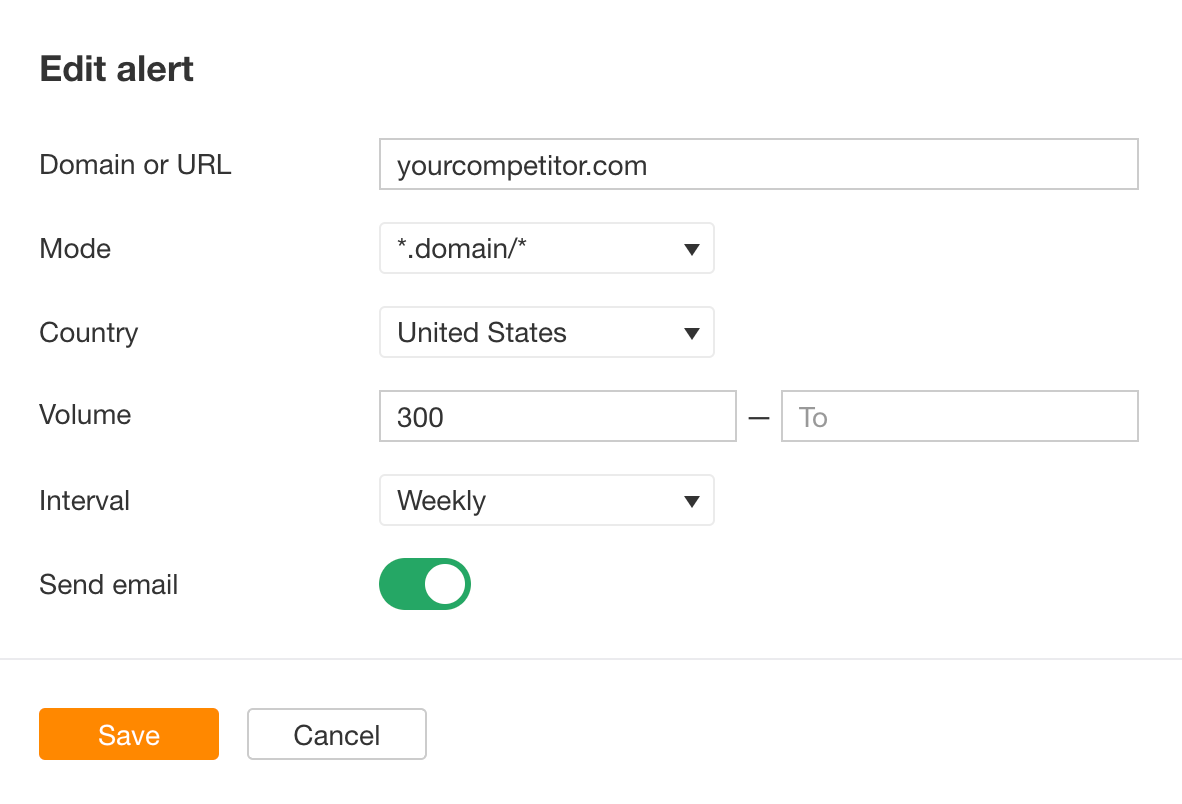

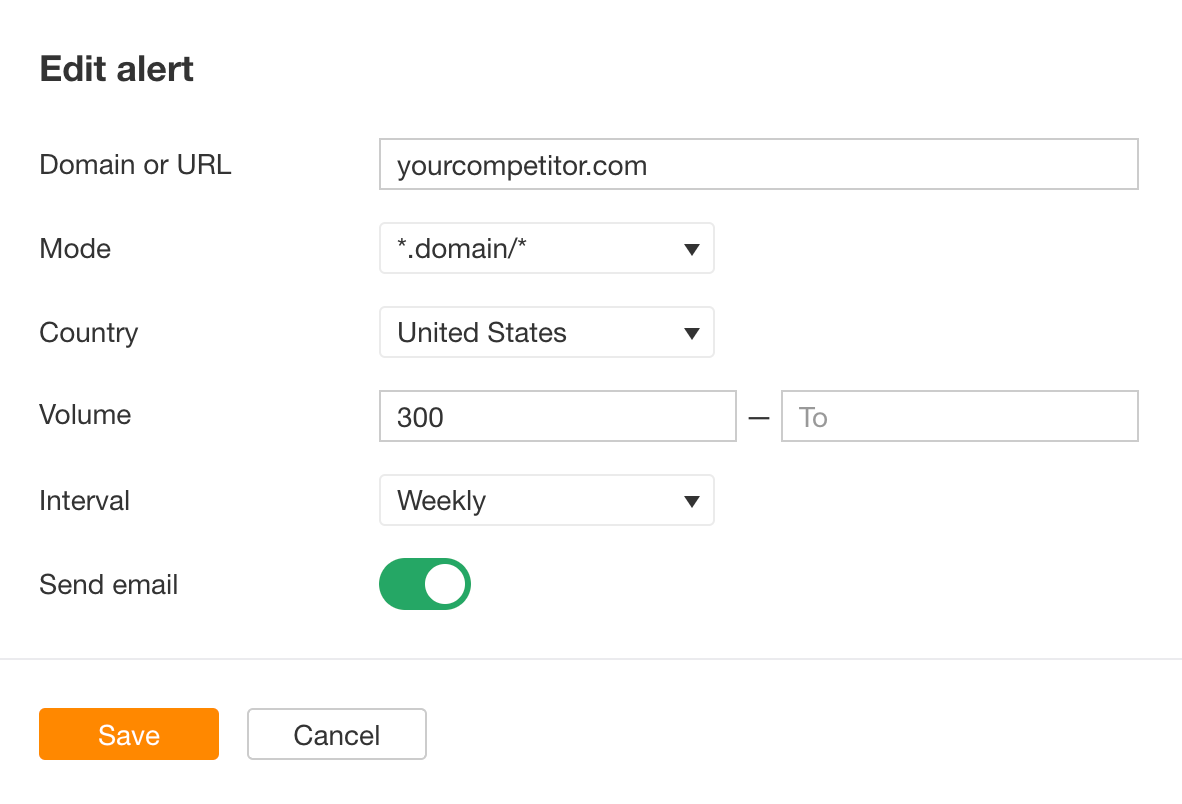

You can do more than track your competitors’ current keywords; you can also monitor them for new ones. Use a tool like Ahrefs Alerts to get notified whenever a competitor starts ranking for a new keyword.

Just go to the Alerts tool in Ahrefs and fill in the details.

There’s even more you can do with keywords and a bit more you should know to avoid some common mistakes.

1. Use keywords to find guest blogging opportunities

Guest blogging is the practice of writing and publishing a blog post on another person or company’s website.

It’s one of the most popular link building tactics, with a few other benefits like exposure to a new, targeted audience.

Here’s how to find relevant, high-quality sites to pitch:

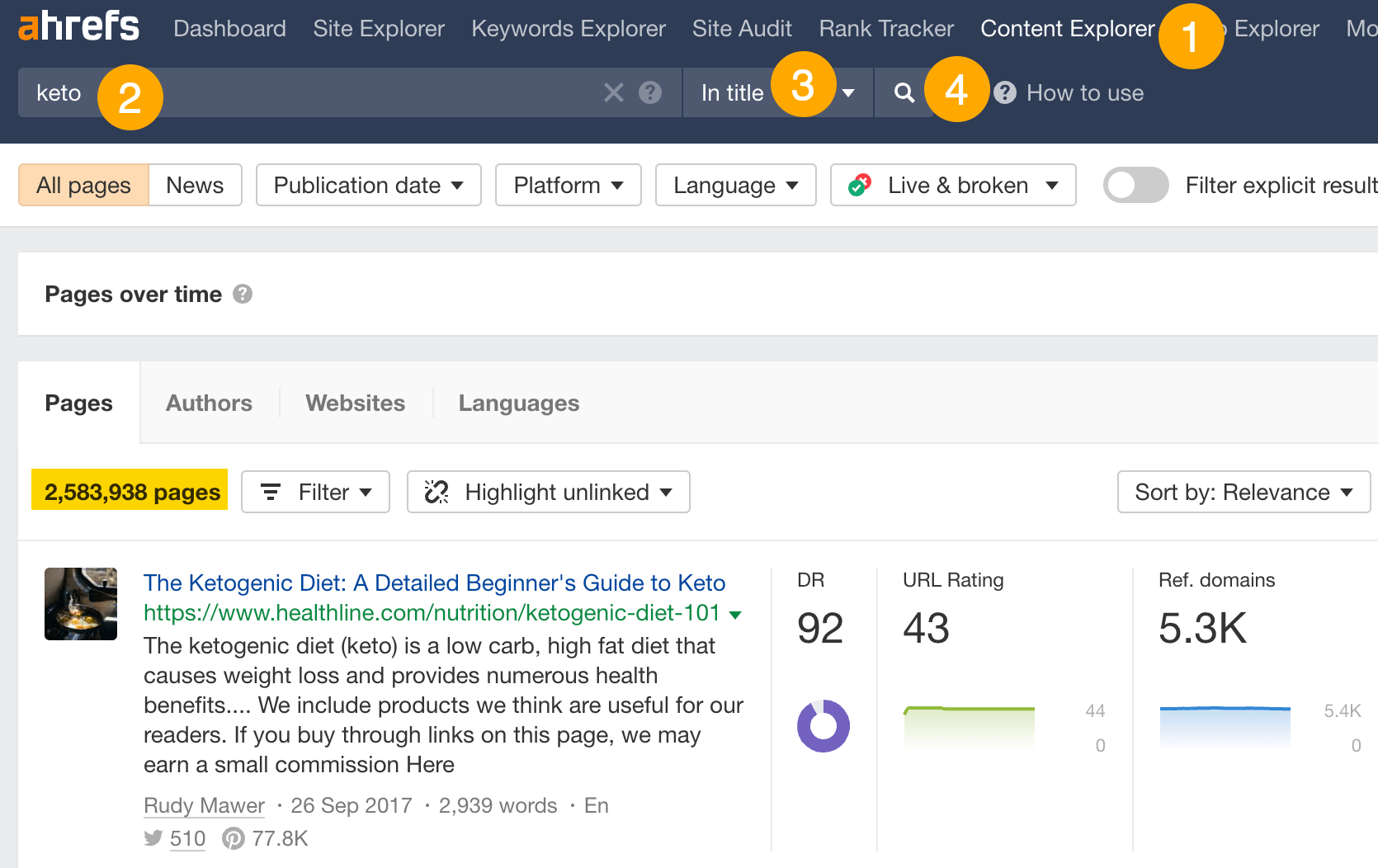

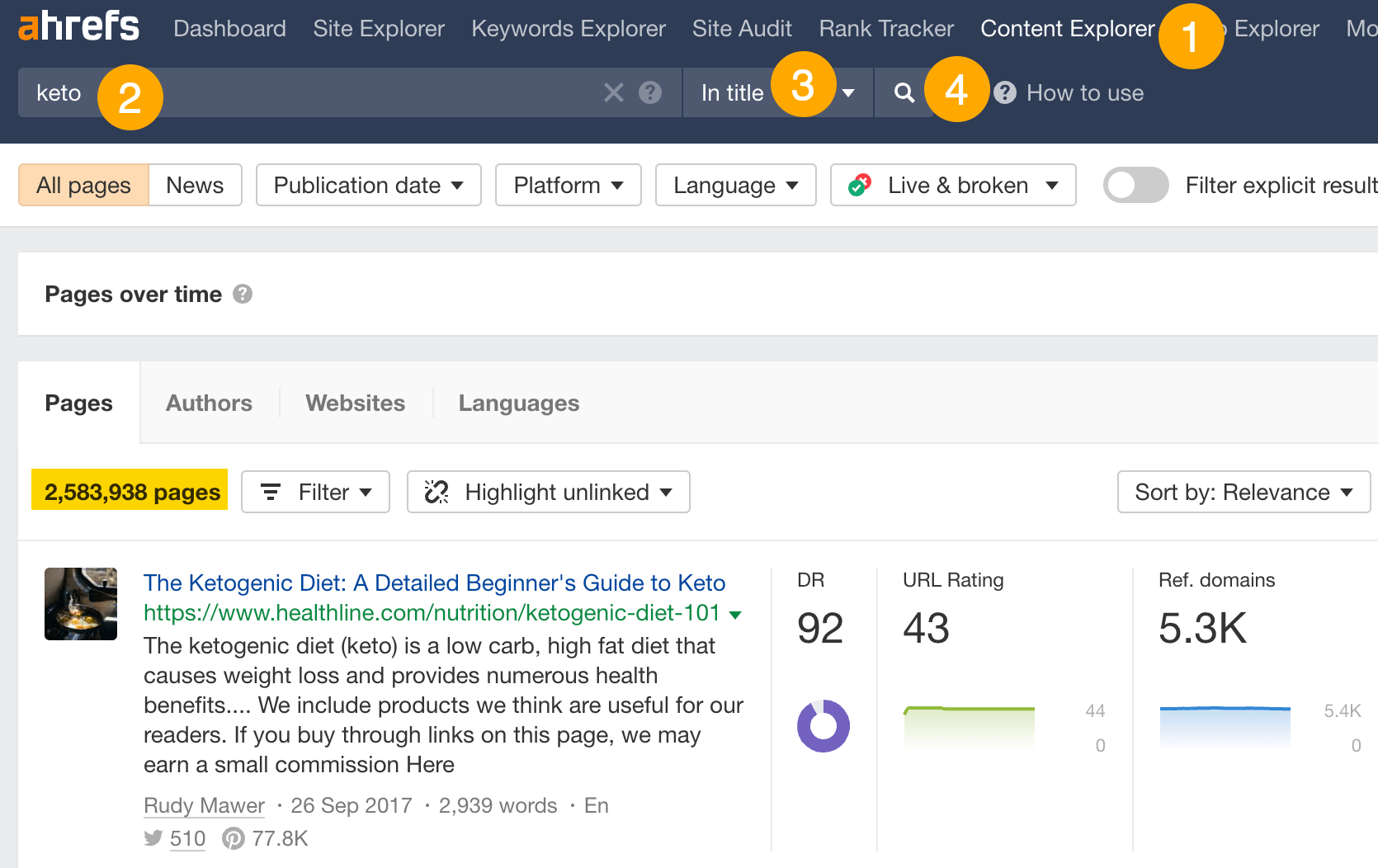

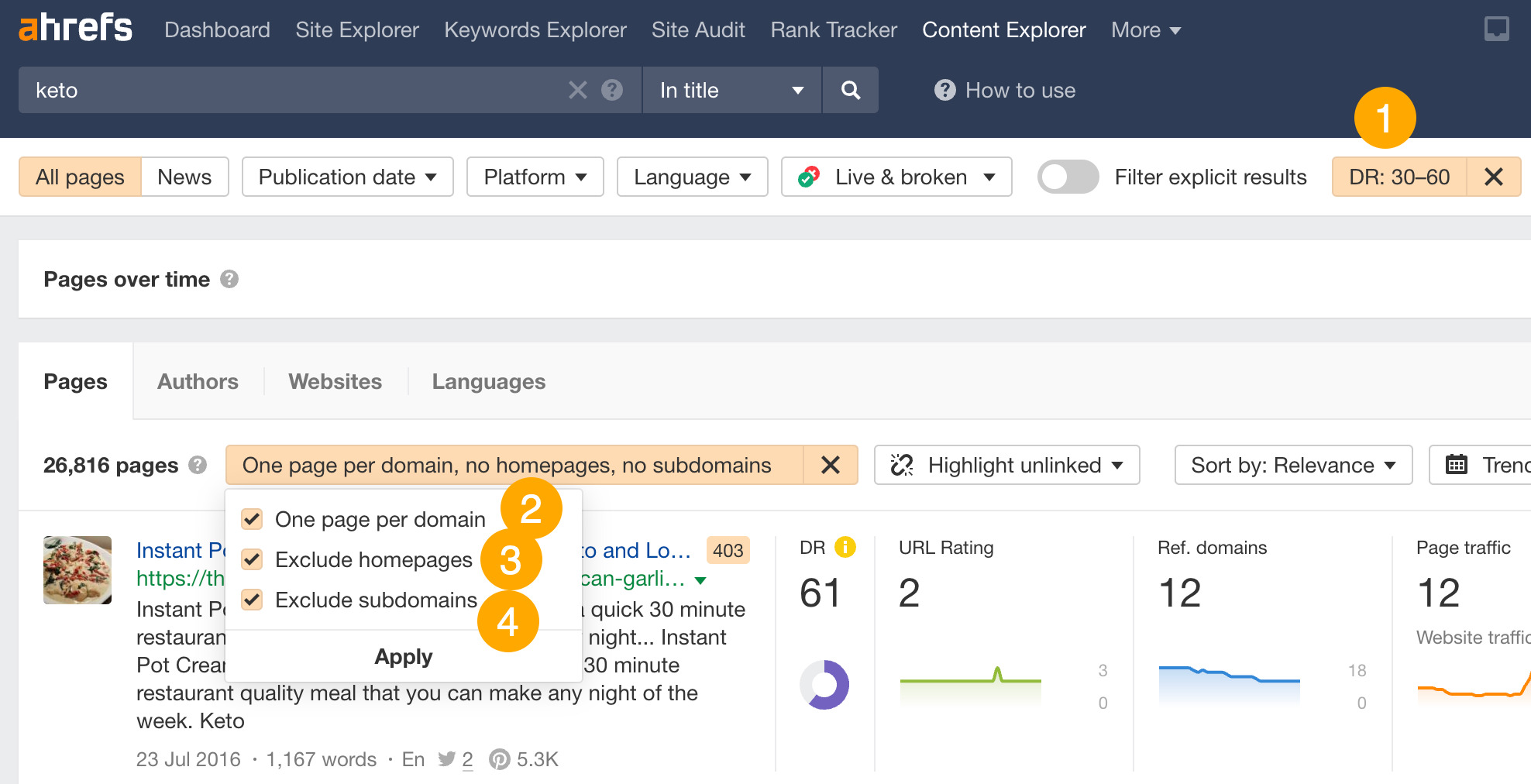

- Go to Ahrefs’ Content Explorer.

- Enter a broad keyword or phrase related to your niche.

- Select In title from the drop-down menu.

- Run the search.

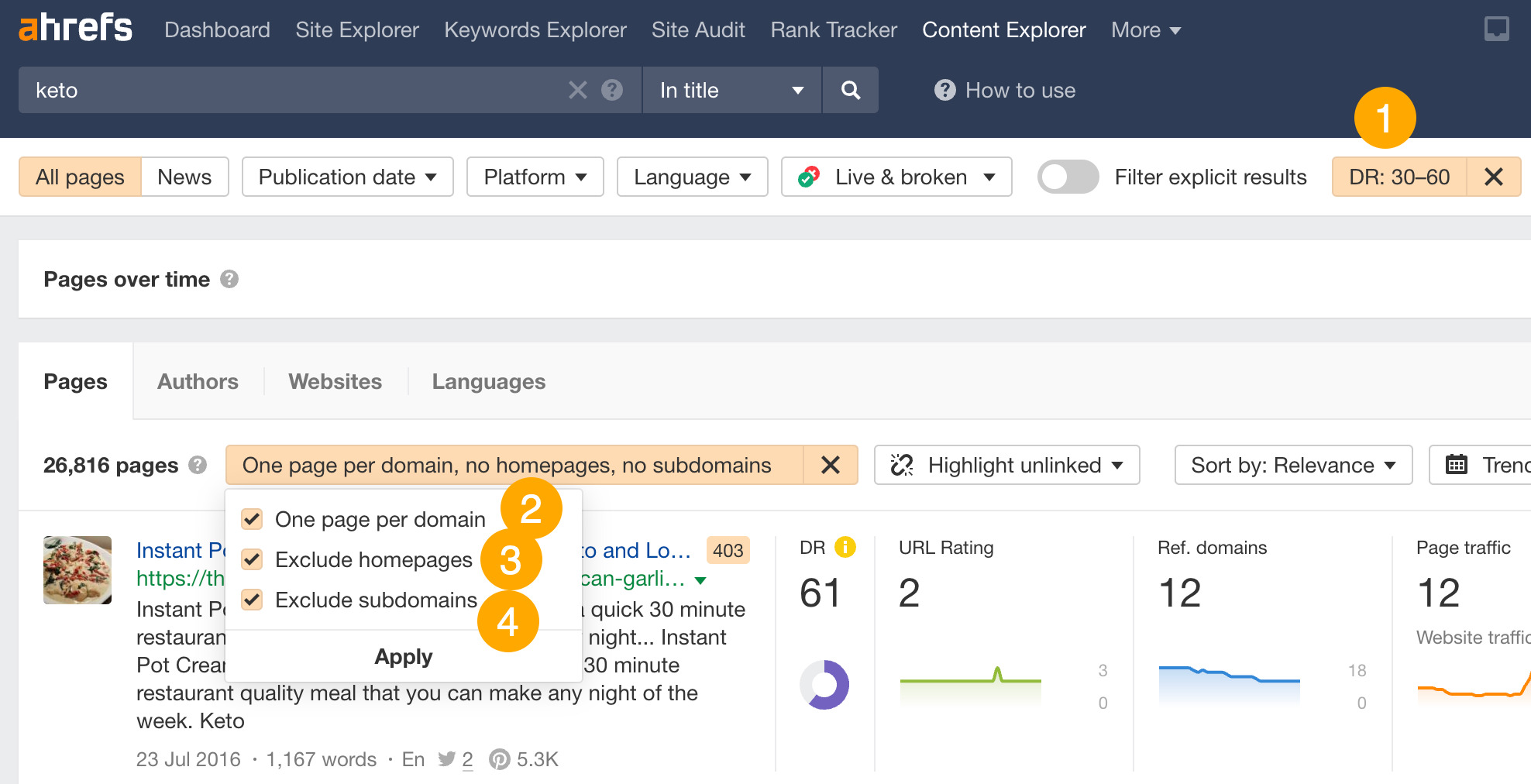

Next, refine the list by applying these filters:

- Domain Rating (DR) from 30 to 60.

- Click the One page per domain filter.

- Click the Exclude homepages filter.

- Click the Exclude subdomains filter.

Finally, click on the Websites tab to see potential websites you could guest blog for.

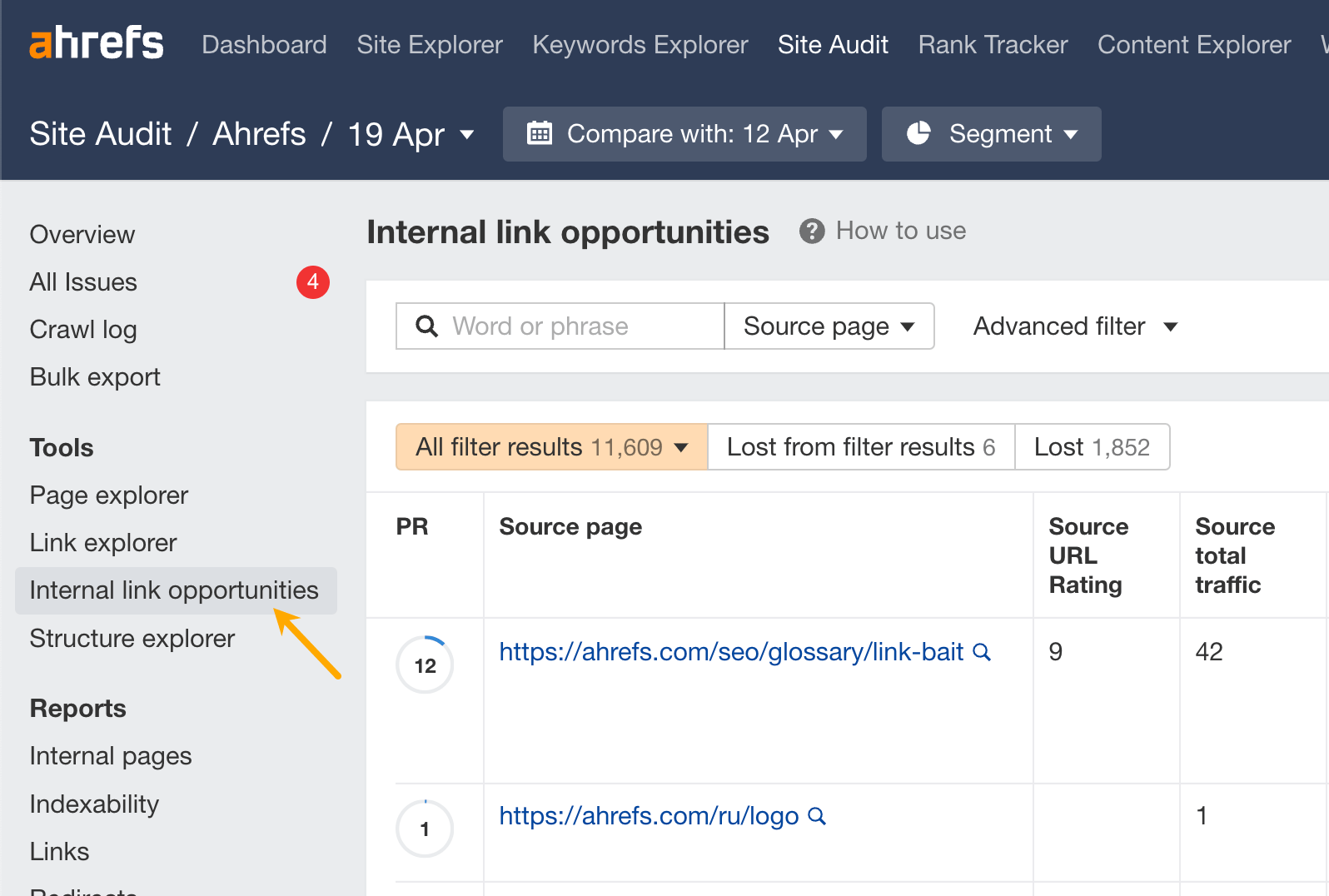

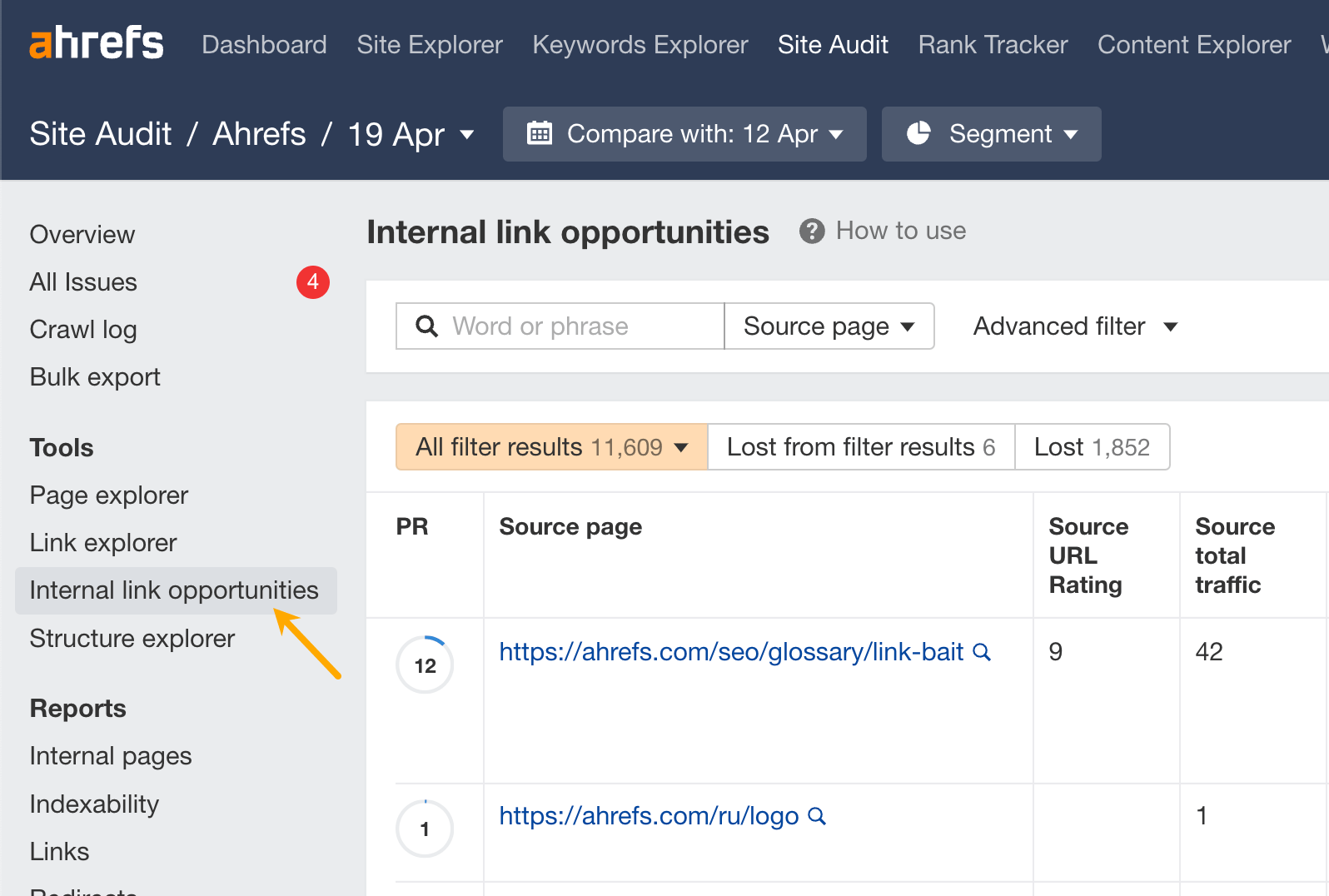

2. Use keywords to find internal link opportunities

Internal links take visitors from one page to another on your website. Their main purpose is to help visitors easily navigate your website, but they can also help boost SEO by aiding the flow of link equity.

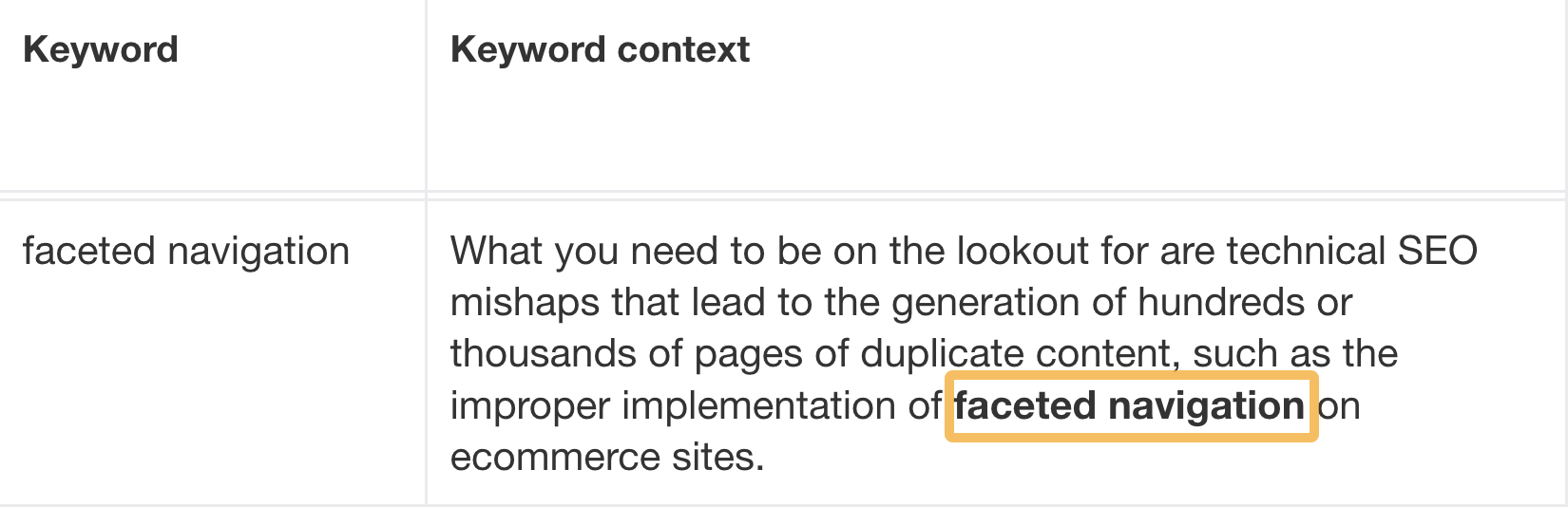

Finding new internal link opportunities is a time-consuming process if done manually, but you can identify them in bulk using Ahrefs’ Site Audit. The tool takes the top 10 keywords (by traffic) for each crawled page, then looks for mentions of those on your other crawled pages.

Click on the Internal link opportunities report in Site Audit.

You’ll see a bunch of suggestions on how to improve your internal linking using new links. The tool even suggests exactly where to place the internal link.
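The underlying idea is easy to sketch: for each page’s top keywords, look for mentions of them in the text of your other pages. The version below is my own rough approximation of that idea, not Site Audit’s actual implementation. The function name, the data shapes, and the sample pages are all assumptions, and it doesn’t check whether a link already exists.

```python
import re


def find_link_opportunities(top_keywords, page_text):
    """
    top_keywords: {url: [keywords that page ranks for]}
    page_text:    {url: plain text of that page}
    Returns (source_url, keyword, target_url) tuples where the source page
    mentions a keyword that belongs to a different (target) page.
    """
    opportunities = []
    for target_url, keywords in top_keywords.items():
        for keyword in keywords:
            # Whole-phrase, case-insensitive match.
            pattern = re.compile(r"\b" + re.escape(keyword) + r"\b", re.IGNORECASE)
            for source_url, text in page_text.items():
                if source_url != target_url and pattern.search(text):
                    opportunities.append((source_url, keyword, target_url))
    return opportunities


# Invented two-page site, for illustration only.
top_keywords = {
    "/blog/keyword-research": ["keyword research"],
    "/blog/link-building": ["link building"],
}
page_text = {
    "/blog/keyword-research": "Keyword research comes before link building.",
    "/blog/link-building": "Link building earns backlinks.",
}

for source, keyword, target in find_link_opportunities(top_keywords, page_text):
    print(f"{source}: link the phrase '{keyword}' to {target}")
```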

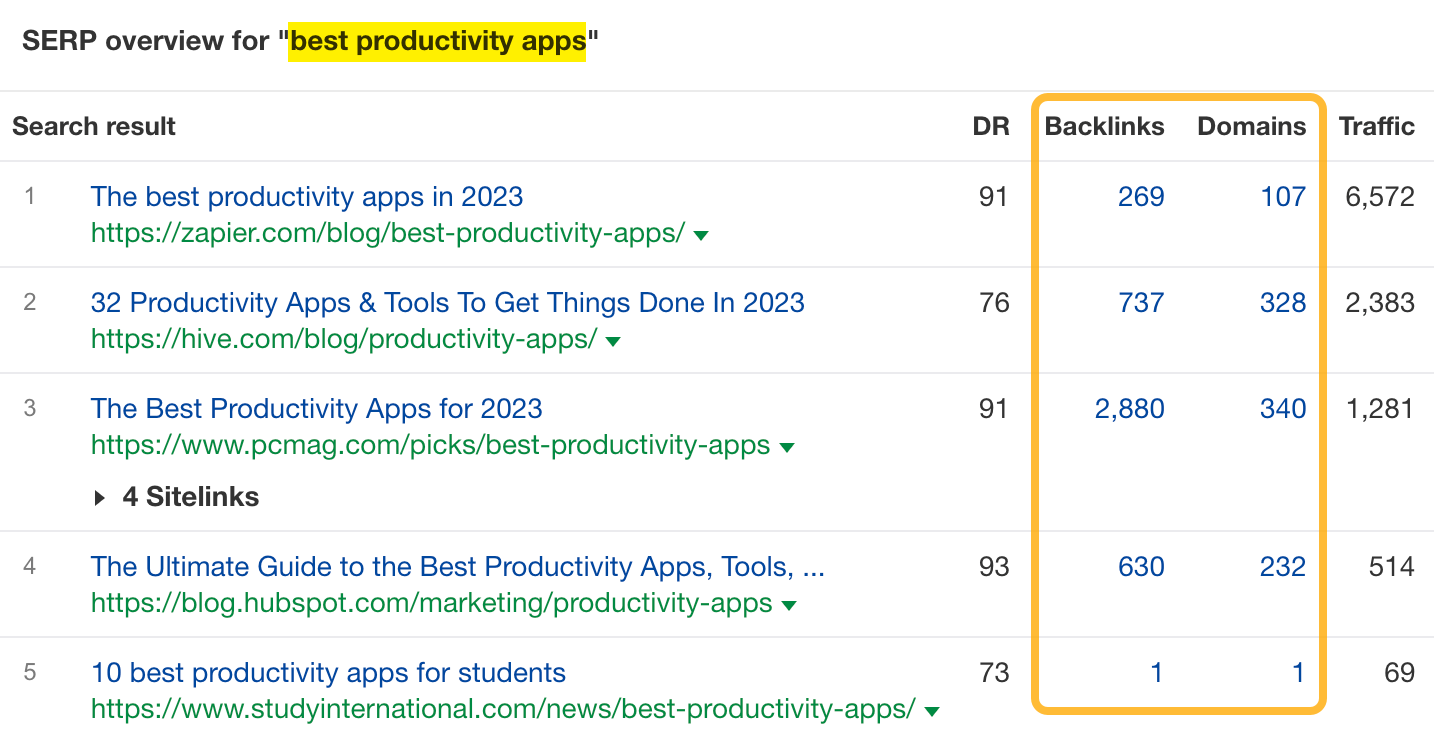

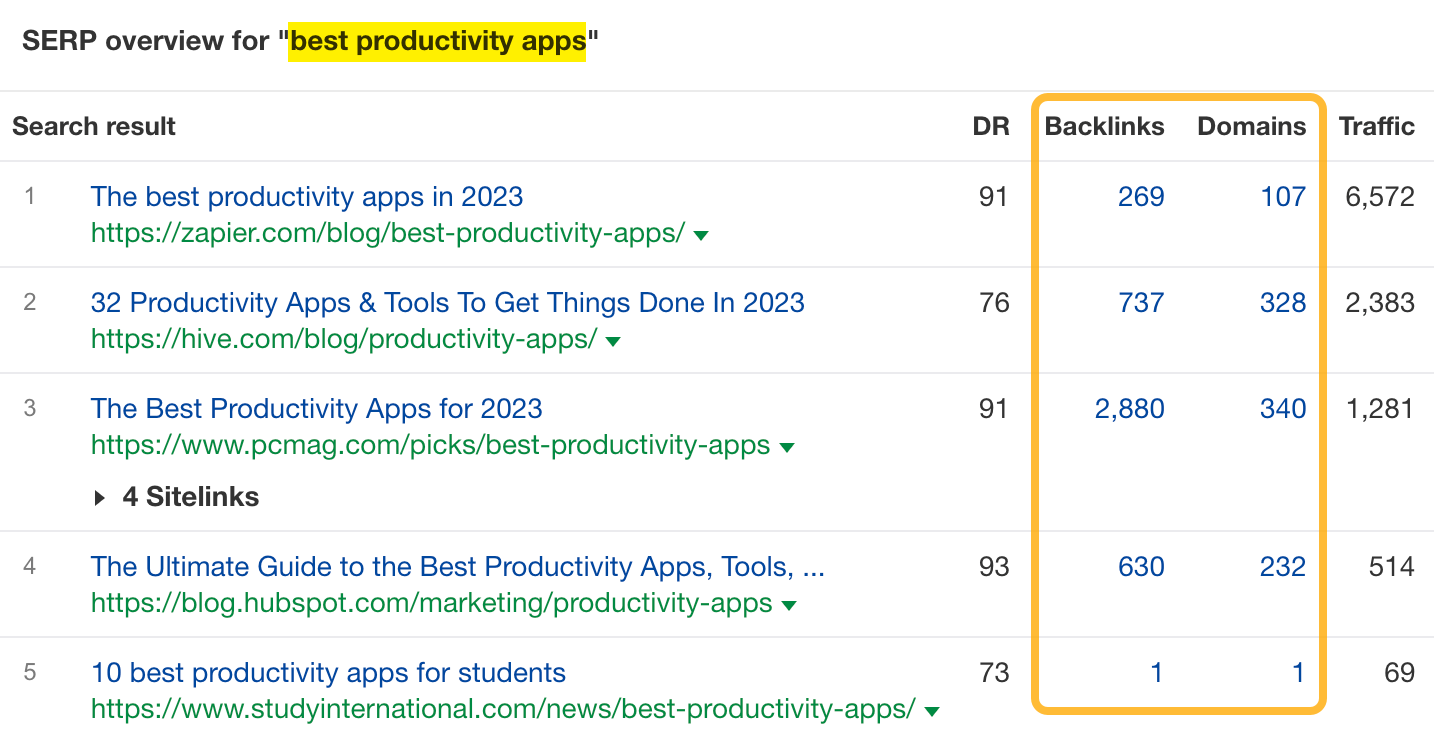

3. Use keywords to find link building opportunities

Link building is the process of getting other websites to link to pages on your website. Its purpose is to boost the “authority” of your pages in the eyes of Google and help your pages rank.

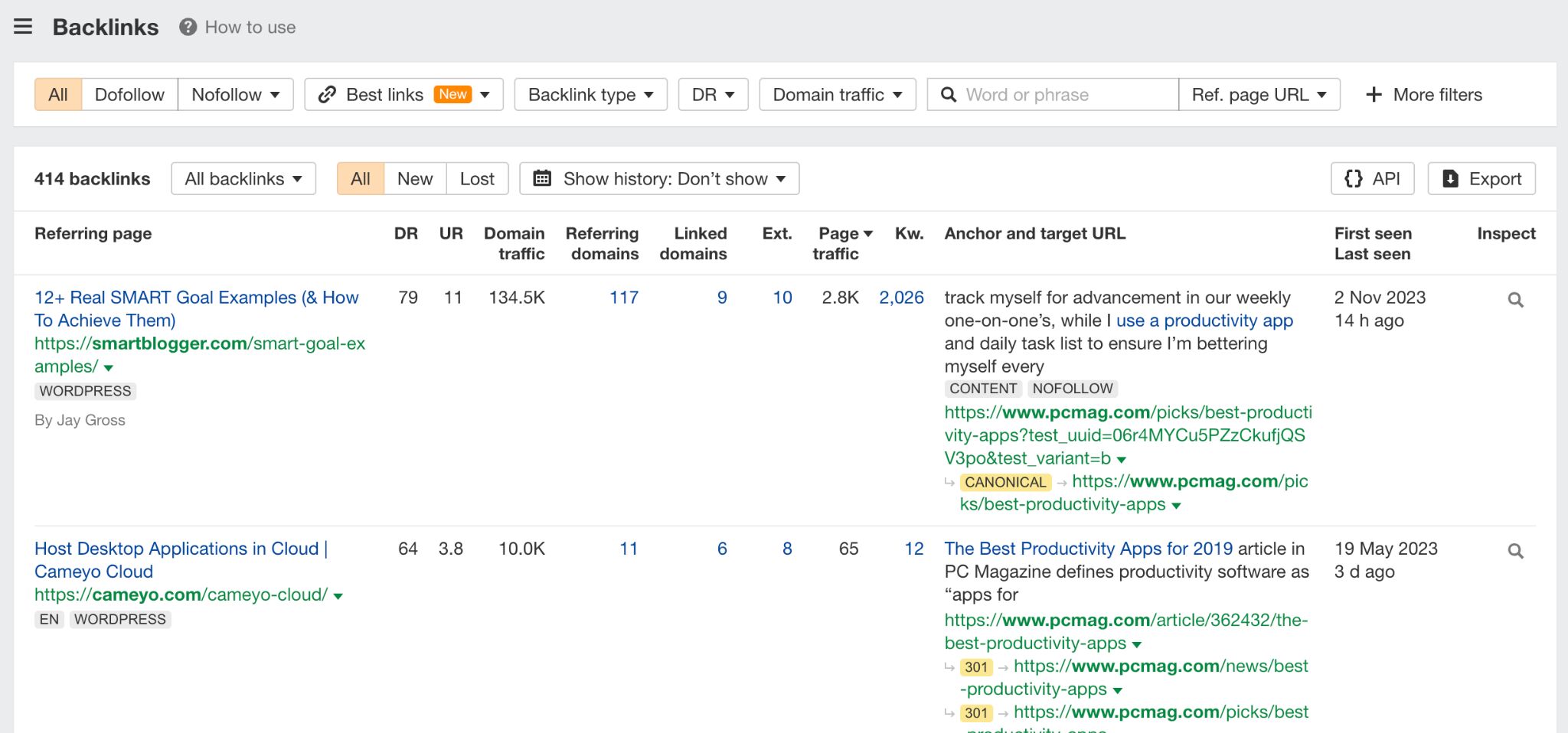

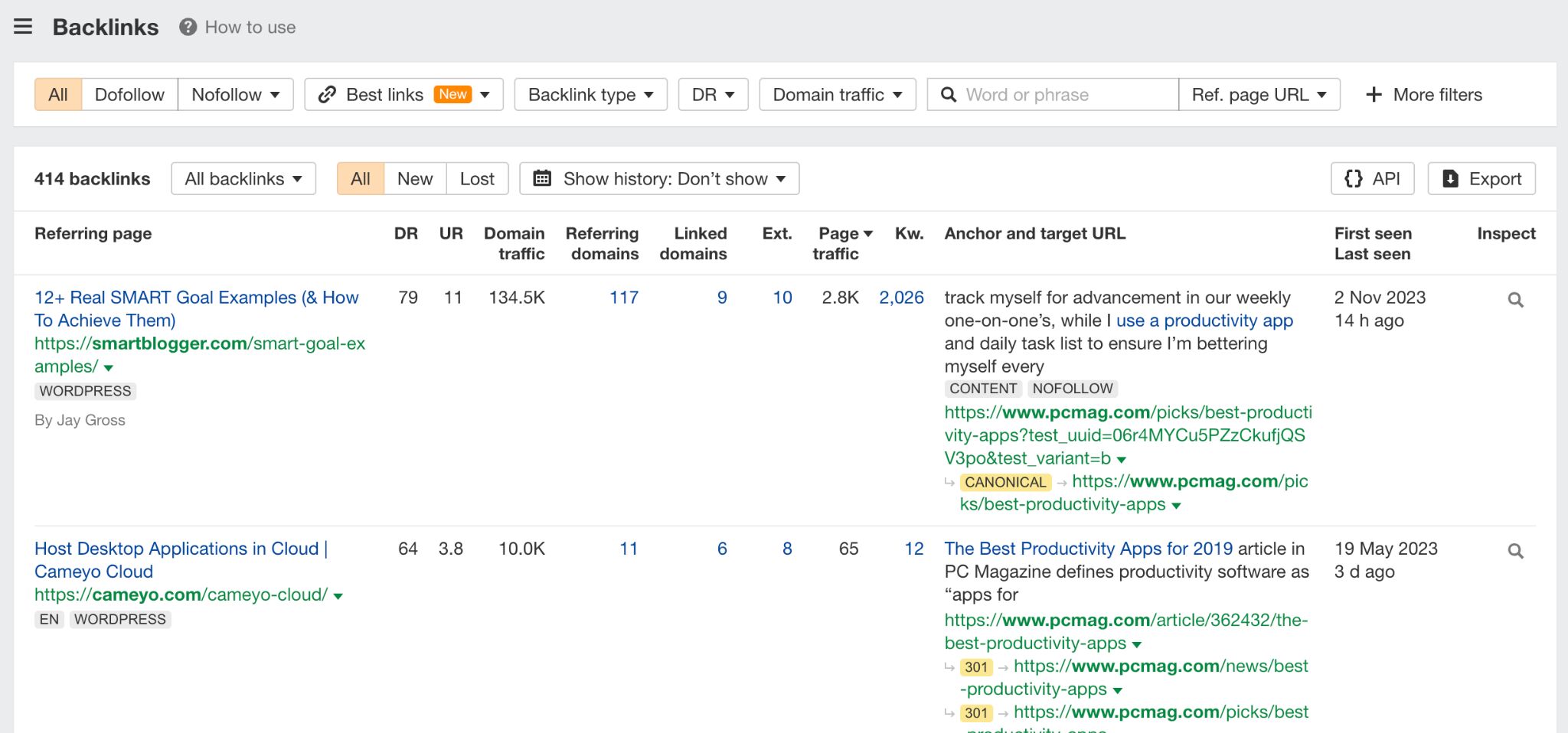

A good place to start is to pull up the top-ranking pages for your target keyword and research where they got their links from.

Put your keyword into Keywords Explorer and scroll down to the SERP overview. You’ll see the top-ranking pages and their number of backlinks (and linking domains).

Once you click on any of the backlink numbers, you’ll be redirected to a list of backlinks of a given page in Site Explorer.

From that point, the typical process involves identifying sites with the highest potential to boost your SEO and contacting their owners if you think they’d be willing to link to your content. We’re covering the details of this process and everything else you need to know to start with link building in this guide.

4. Avoid common keyword pitfalls

Here are four big don’ts of using keywords:

- Don’t use the same keyword excessively on a page. Repeating a keyword too frequently within a single page can lead to keyword stuffing, which is treated as spam by Google.

- Don’t use the same focus keyword across multiple pages. Each page on your website should have a unique focus keyword. Using the same keyword across multiple pages can lead to keyword cannibalization, where pages compete against each other in search results.

- Don’t sacrifice quality content for keyword usage. While keywords are essential for SEO, prioritize high-quality, informative content above all else. Don’t make your content read unnaturally or run too long by cramming in keywords. This won’t help you rank and will decrease content quality.

- Don’t use keywords just for the sake of using them. This means two things. First, don’t target keywords that aren’t related to your website or business; this will only bring you useless traffic. Second, don’t try to hit a keyword frequency goal (often suggested by content optimization tools) by mentioning the keyword without adding any substance. SEO doesn’t work that way anymore.
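The second don’t is the easiest one to check mechanically. If you keep a sheet of pages and their focus keywords, a few lines of Python can flag keywords assigned to more than one page. This is a minimal sketch; the function name and the sample mapping are hypothetical, and it only catches identical keywords (after lowercasing and trimming), not close variations.

```python
from collections import defaultdict


def find_shared_focus_keywords(focus_keywords):
    """
    focus_keywords: {url: focus keyword}
    Returns {keyword: [urls]} for keywords assigned to more than one page.
    """
    pages_by_keyword = defaultdict(list)
    for url, keyword in focus_keywords.items():
        pages_by_keyword[keyword.strip().lower()].append(url)
    return {kw: urls for kw, urls in pages_by_keyword.items() if len(urls) > 1}


# Invented content plan, for illustration only.
content_plan = {
    "/blog/content-strategy": "content strategy",
    "/blog/content-strategy-guide": "Content Strategy",
    "/blog/link-building": "link building",
}

print(find_shared_focus_keywords(content_plan))
```

Any keyword that shows up in the result is a candidate for merging pages or picking a different focus keyword for one of them.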

Final thoughts

This article focused on general SEO for text-based content. For using keywords in other types of content and SEO, see these guides: