
Internal Links for SEO: An Actionable Guide

Internal links are to backlinks what Robin is to Batman—they’re crucial to SEO success yet receive little to none of the credit.

In this guide, I’ll explain what internal links are, how to set up an internal linking structure, and how to strategically use them in SEO.

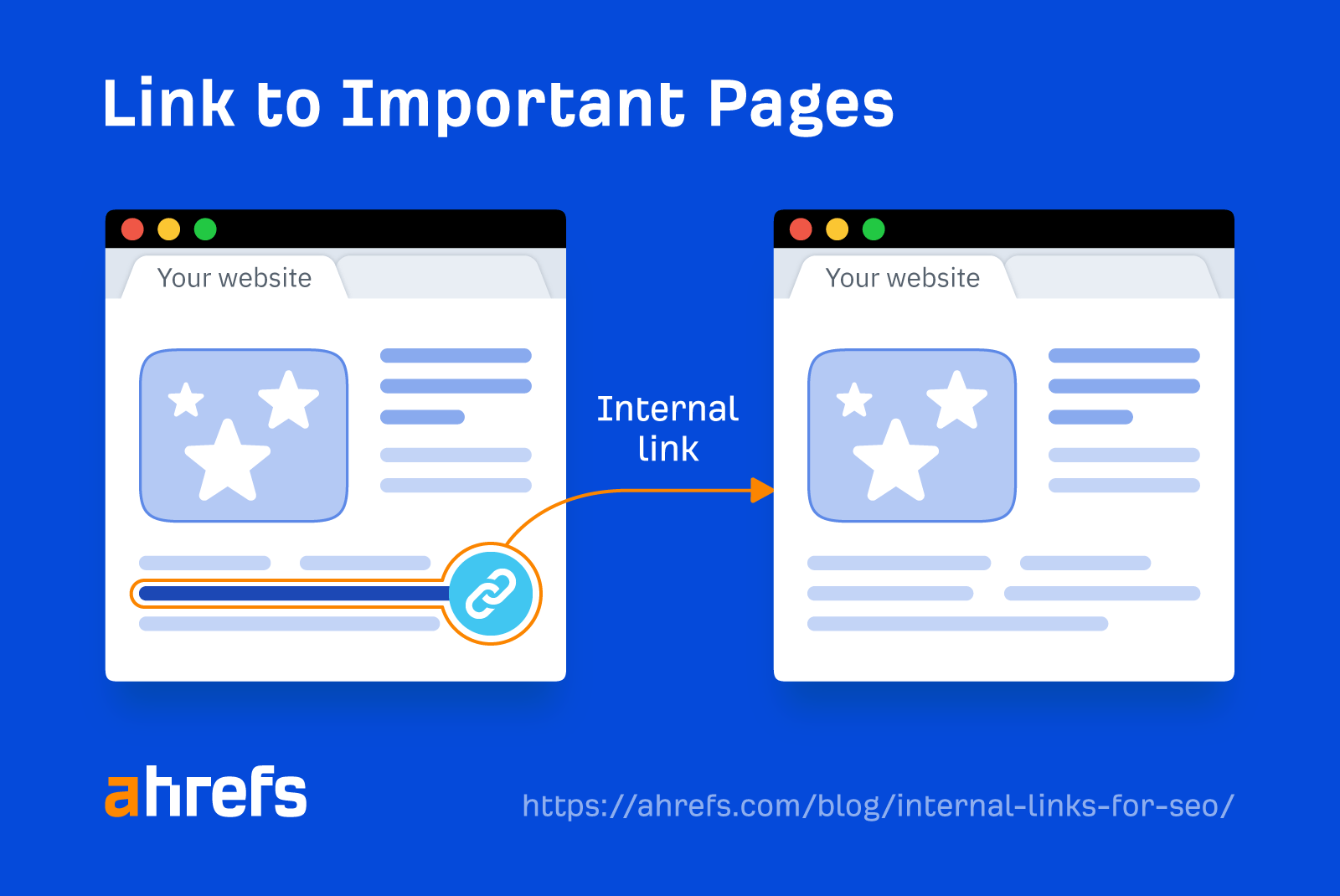

Internal links take visitors from one page to another on your website. Their main purpose is to help visitors easily navigate your website, but they can also help boost SEO.

Here’s a simplified view of what an internal link looks like:

And here’s what an internal link looks like in HTML code:

<a href="https://example.com/">Internal Linking</a>
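If you want to tell internal links apart from external ones programmatically, here is a minimal Python sketch. It assumes a link counts as "internal" when it resolves to the same host as the page it sits on; the `LinkCollector` class, the `internal_links` function, and the sample URLs are illustrative names, not part of any tool mentioned in this guide.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag in an HTML document."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def internal_links(html, page_url):
    """Return absolute URLs of links that stay on the same host as page_url."""
    collector = LinkCollector()
    collector.feed(html)
    site_host = urlparse(page_url).netloc
    # Resolve relative hrefs such as "/blog/" against the page URL first.
    resolved = [urljoin(page_url, href) for href in collector.hrefs]
    return [url for url in resolved if urlparse(url).netloc == site_host]


sample_html = """
<a href="https://example.com/">Internal Linking</a>
<a href="/blog/">Blog</a>
<a href="https://other-site.test/">Elsewhere</a>
"""
print(internal_links(sample_html, "https://example.com/guide/"))
```

Comparing hosts is a simplification: subdomains or `www` variants would need extra handling on a real site.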

Internal links serve the practical purpose of getting your website’s visitors from A to B, but they also play an important role in SEO.

When asked in a “Google SEO office-hours” video whether internal linking was still important for SEO, John Mueller said:

Yes, absolutely… Internal linking is super critical for SEO. It’s one of the biggest things you can do on a website to guide Google and visitors to the pages that you think are important… What you think is important is totally up to you.

So if internal links are used strategically in SEO, they can help boost the performance of the pages you’re linking to.

Internal links do this by directing the flow of PageRank around your site. Even though the PageRank toolbar disappeared in 2016, PageRank is still a signal that Google uses.

Tip

You can use URL Rating as a replacement metric, as it has a lot in common with Google PageRank.

Generally speaking, the more internal links point to a page, the higher its PageRank. However, it’s not all about quantity. The quality of each link, meaning how much authority the linking page has to pass on, also plays a vital role.
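To make the idea of PageRank flowing through internal links more concrete, here is a rough sketch of the classic textbook PageRank iteration applied to a tiny, made-up site. This is not Google's current algorithm and not how URL Rating is calculated; the damping factor of 0.85, the `simple_pagerank` function, and the example URLs are all assumptions for illustration.

```python
def simple_pagerank(links, damping=0.85, iterations=50):
    """links maps each page to the list of pages it links to.

    Every link target must also appear as a key in links.
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page starts each round with a small baseline score.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, targets in links.items():
            if not targets:
                # A page with no outgoing links spreads its score evenly.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
            else:
                # Otherwise its score is split equally among its links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
        rank = new_rank
    return rank


site = {
    "/": ["/products", "/blog"],
    "/products": ["/", "/products/red-dress"],
    "/blog": ["/", "/products/red-dress"],
    "/products/red-dress": ["/"],
}
scores = simple_pagerank(site)
for page, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

In this toy graph, the homepage ends up with the highest score because every other page links to it, which is the "more incoming links, more PageRank" effect in miniature.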

As well as passing authority, internal links let visitors jump straight to the content you want to show them, which gives you control over the user experience.

For example, if you run an e-commerce store, you may want to link to your best-selling or seasonal products directly from your homepage. This is helpful for visitors who want to jump straight to the products and purchase them, and also creates a good user experience.

You should look at it in a strategic way and think about what do you care about the most, and how can you highlight that with your internal links.

Google and other search engines also use internal links as signposts to help discover new pages on your website.

For example, let’s say that you publish a new webpage and forget to link to it from elsewhere on your site. Assuming the page isn’t in your XML sitemap and doesn’t have any backlinks, Google will have a hard time discovering it.

Here’s what Google has to say about this:

Some pages are known because Google has already crawled them before. Other pages are discovered when Google follows a link from a known page to a new page.
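Link-based discovery can be pictured as a simple graph traversal. The sketch below is a simplification that ignores sitemaps and backlinks: it starts from pages a crawler already knows and follows links until nothing new turns up. The `discoverable_pages` function and the site map are hypothetical.

```python
from collections import deque


def discoverable_pages(links, known_pages):
    """Return every page reachable by following links from known_pages."""
    discovered = set(known_pages)
    queue = deque(known_pages)
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in discovered:
                discovered.add(target)
                queue.append(target)
    return discovered


site_links = {
    "/": ["/blog", "/products"],
    "/blog": ["/blog/old-post"],
    "/products": [],
    "/blog/old-post": [],
    "/blog/new-post": ["/"],  # published, but nothing links to it
}
found = discoverable_pages(site_links, ["/"])
# Pages that exist on the site but were never reached by following links.
print(sorted(set(site_links) - found))
```

The new post links out to the homepage, but because no known page links to it, the traversal never reaches it.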

Internal links can also provide context for search engines like Google. They do this through anchor text.

In other words, if you have a page about red dresses and have multiple internal links pointing to that page using anchor text like “dresses,” “red dresses,” and “red maxi dresses,” those help Google to understand the context of the linked page.
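If you already have a list of your internal links, you can summarize the anchor text each page receives with a few lines of Python. This sketch assumes your link data is available as `(source, target, anchor_text)` tuples; that shape, and the `anchor_text_by_target` function, are my own invention for illustration.

```python
from collections import Counter, defaultdict


def anchor_text_by_target(links):
    """links is an iterable of (source_url, target_url, anchor_text) tuples."""
    summary = defaultdict(Counter)
    for _source, target, anchor in links:
        # Normalize case and whitespace so "Red dresses" matches "red dresses".
        summary[target][anchor.strip().lower()] += 1
    return summary


internal_link_data = [
    ("/", "/red-dresses", "Red dresses"),
    ("/dresses", "/red-dresses", "red dresses"),
    ("/blog/summer-style", "/red-dresses", "red maxi dresses"),
    ("/blog/summer-style", "/red-dresses", "click here"),
]
summary = anchor_text_by_target(internal_link_data)
print(summary["/red-dresses"].most_common())
```

A summary like this makes generic anchors such as "click here" easy to spot, since they say nothing about the linked page.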

Here are the main types of internal links you’ll see on the web.

Navigational links

Most visitors to your website find their way around using your site navigation. These are some of the most important links on your website.

Here’s what they look like on the Ahrefs website.

Contextual links

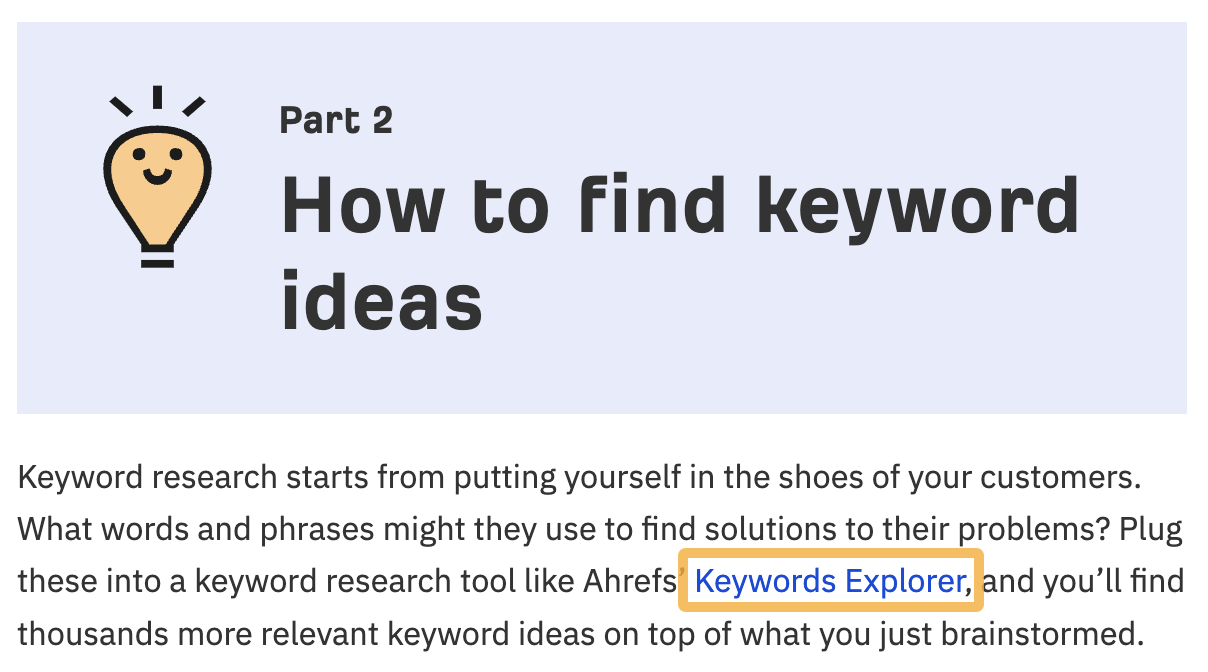

Contextual links appear in the main body of the content on a webpage. They’re typically used to expand on ideas, refer to resources, define terms, or direct readers to other relevant content.

Here’s an example of a contextual link to our keyword research tool:

Breadcrumb links

Internal links can also be used to indicate relationships between pages. One of the best examples of this is breadcrumbs. Breadcrumbs allow users to trace their journey back to the homepage.

They’re typically placed at the top of internal pages like product pages or blog posts.

Google has also indicated that it treats them as normal links (as part of PageRank computation).
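As a rough illustration of how a breadcrumb trail maps onto a site's hierarchy, the sketch below builds one from a URL. It assumes the URL path mirrors the site structure, which isn't true of every website, and the `breadcrumb_trail` function and labels are hypothetical.

```python
from urllib.parse import urlparse


def breadcrumb_trail(url):
    """Return (label, href) pairs from the homepage down to the given URL."""
    segments = [s for s in urlparse(url).path.split("/") if s]
    trail = [("Home", "/")]
    path = ""
    for segment in segments:
        path += "/" + segment
        # Turn a slug like "red-maxi-dress" into a readable label.
        label = segment.replace("-", " ").capitalize()
        trail.append((label, path + "/"))
    return trail


for label, href in breadcrumb_trail(
    "https://example.com/products/dresses/red-maxi-dress/"
):
    print(f"{label} -> {href}")
```

Each entry in the trail is an internal link to a page one level higher up, which is why breadcrumbs strengthen the connection between a page and its parent categories.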

Footer links

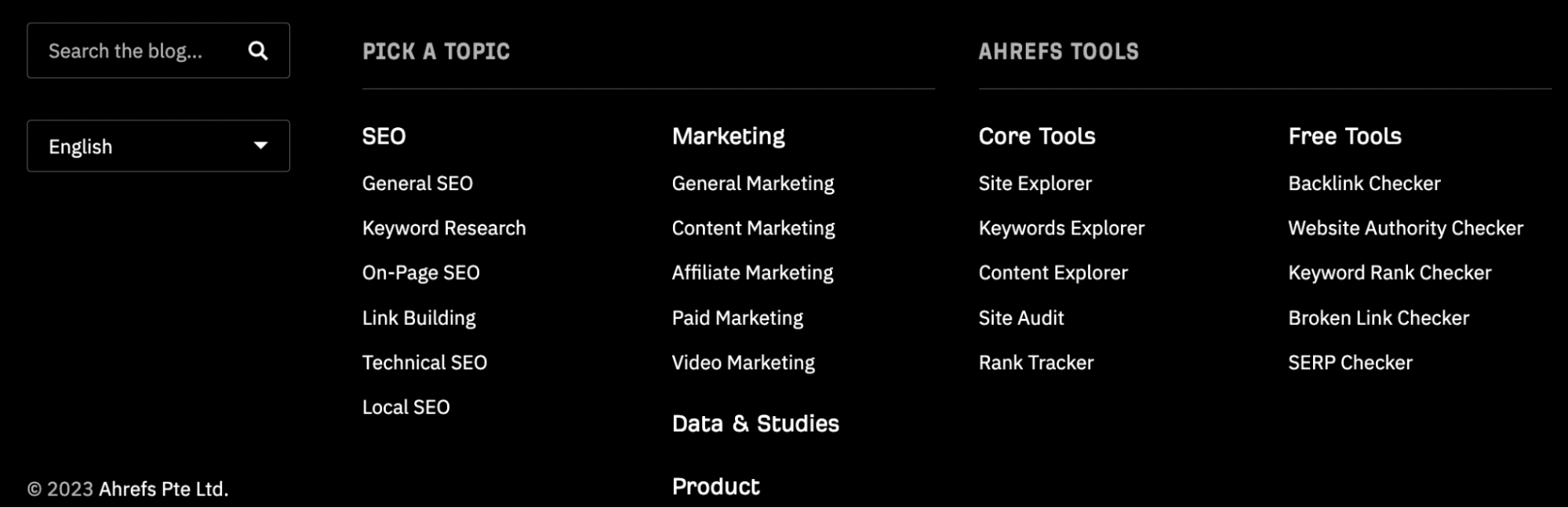

These appear at the bottom of the page. Here’s an example of the footer links in Ahrefs’ blog.

Footer links typically include links to your contact page, privacy policy, and other important pages on your website.

While footer links are useful for extra detail, they’re not the primary method of navigation on most websites.

Setting up a solid internal linking structure helps your website rise through the ranks by directing authority to the right places on your website.

Here’s how to do it.

1. Plan your internal linking structure

If you’re starting a new site or even restructuring an old one, the first step is to plan your internal linking structure.

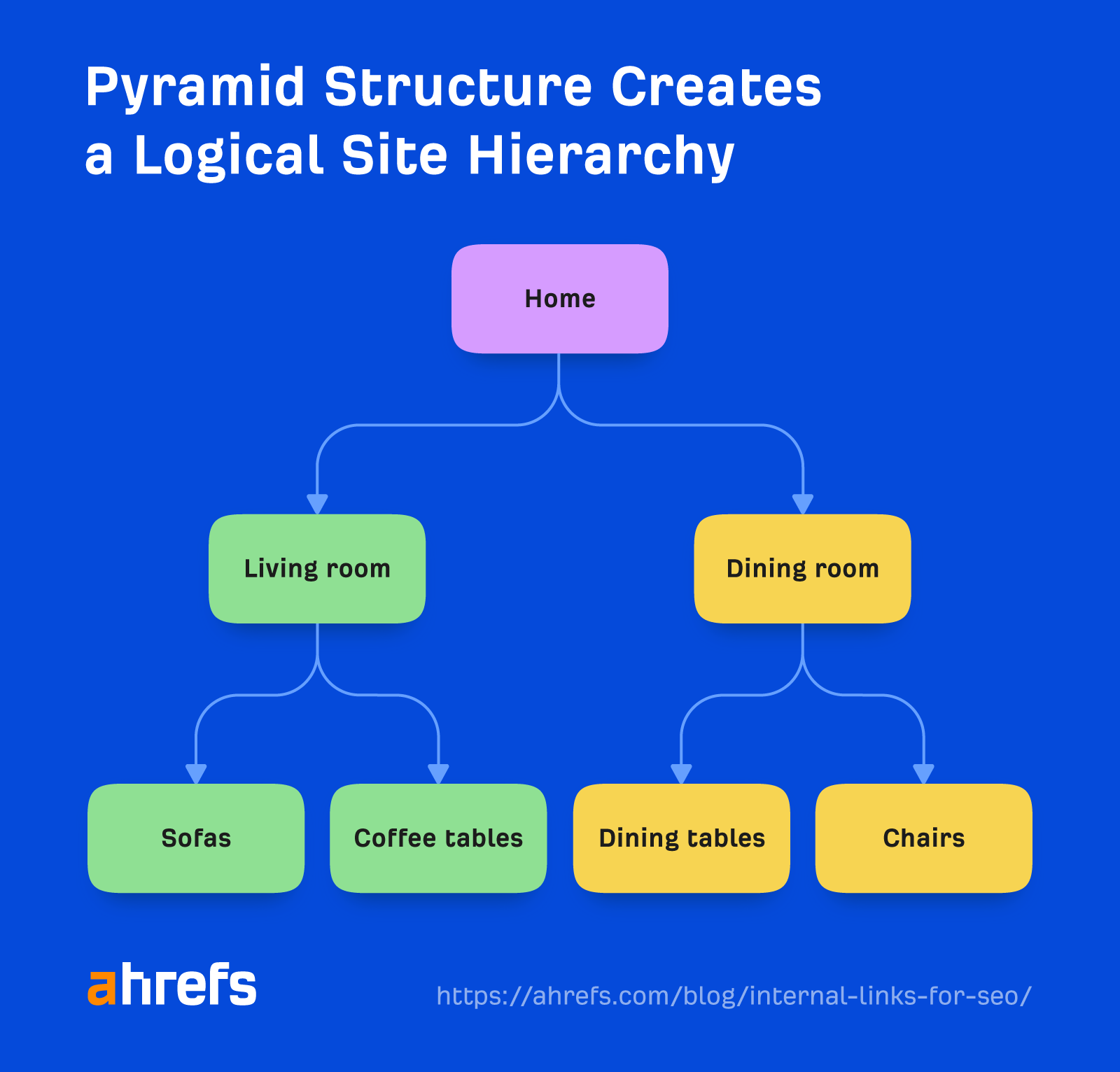

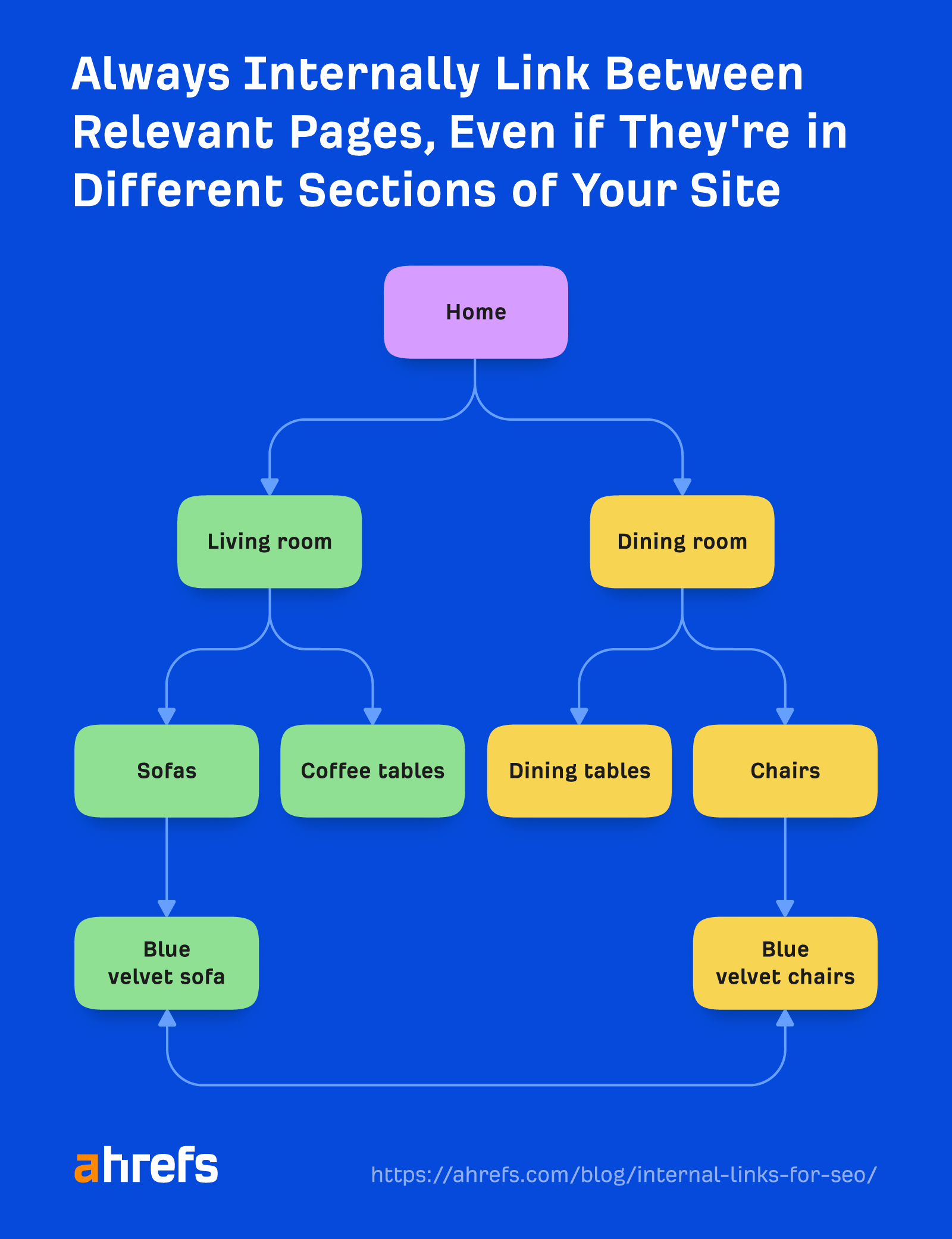

The pyramid structure is one of the most popular structures for internal linking, as it naturally creates a top-down internal linking structure.

Here’s an example of what it looks like. The arrows show the internal links from page to page.

Creating a basic internal link structure is the first stage in starting a successful internal linking strategy. This approach has also been recommended by John:

The top-down approach or pyramid structure helps us a lot more to understand the context of individual pages within the site.
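One way to sanity-check a pyramid structure is to measure how many clicks each page sits from the homepage. The sketch below does this with a breadth-first search over a hypothetical link map; the `click_depths` function and the URLs are assumptions for illustration.

```python
from collections import deque


def click_depths(links, homepage="/"):
    """Return the minimum number of clicks from the homepage to each page."""
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


pyramid = {
    "/": ["/seo", "/marketing"],
    "/seo": ["/seo/internal-links", "/seo/keyword-research"],
    "/marketing": ["/marketing/email"],
    "/seo/internal-links": ["/seo/keyword-research"],
}
for page, depth in sorted(click_depths(pyramid).items(), key=lambda item: item[1]):
    print(depth, page)
```

In a tidy pyramid, category pages sit at depth 1 and individual articles or products at depth 2. Pages that come out much deeper, or that don't appear in the result at all, are worth a closer look.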

As well as the top-down linking approach, you can also add breadcrumbs to make it easier to navigate around your website.

Breadcrumb links enable visitors to understand where they are on the website and to trace their journey back to the homepage.

2. Link to internal pages you care about

Once you’ve planned the basics of your internal linking structure, it’s time to start linking to the internal pages of your website you want to highlight.

For e-commerce businesses, it could mean their key products or services. For publications, it could be their most important content on a particular topic.

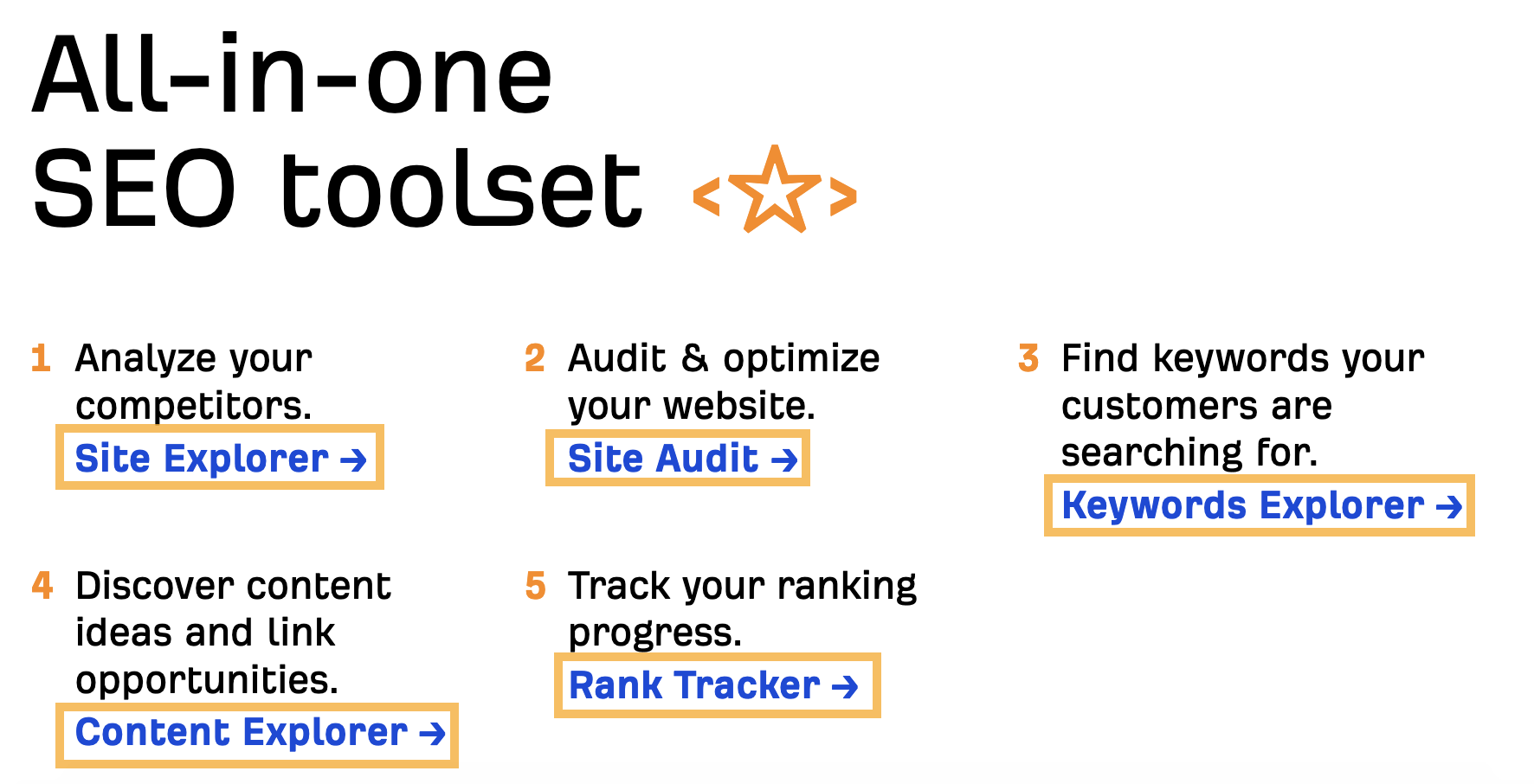

At Ahrefs, we usually internally link to our SEO tools from our key pages.

Here’s an example of this from Ahrefs’ homepage, showing our core SEO tools are prominently linked to.

This approach helps guide visitors to some of the most important parts of our website.

You can link to whatever you like, but it’s best to link to the things on your website that you care about the most.

3. Link to relevant content

Internally linking to content that’s contextually relevant on your site helps provide extra information for the readers about the topic you’re writing about.

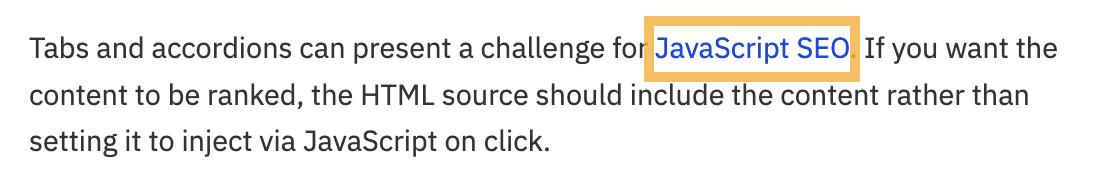

For example, when writing about SEO, the reader may encounter some technical jargon that they may not necessarily understand—but the internal link helps to provide context.

Here’s a quick example:

This approach enables the reader to click on the link to learn more about that topic.

On our blog, we also link to relevant content through a “Further Reading” box that looks like this:

This is another method you can use to help point readers to relevant content on your website.

Another consideration with internal linking is the context of the link. Gael Breton believes that:

In content, as long as it contextually makes sense to link to another page of your site, you should do it.

Here’s an example of what this can look like on an e-commerce website.

As well as being contextually relevant, it’s worth considering how powerful the pages you’re linking from are.

Let’s say you’ve just written a new post and you want to add some powerful internal links to it. What’s the best way to do it?

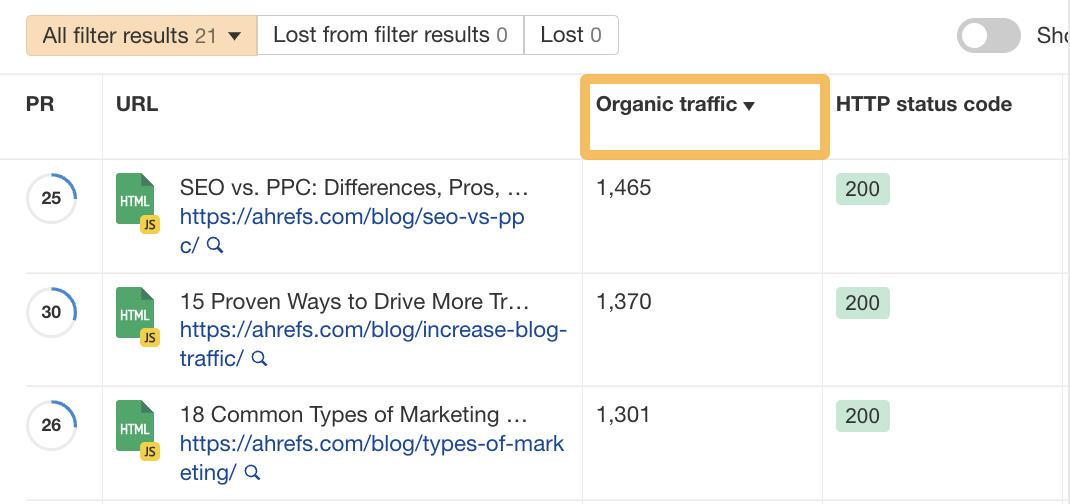

Here’s how I’ll approach it for free using Ahrefs Webmaster Tools:

- Head over to your most recent Site Audit and click on Page Explorer

- Enter your keyword into the search bar (e.g., “online advertising”)

- Change the filter to Page text (page should update once you’ve clicked it)

In this example, there are 21 results. You can then sort the pages by organic traffic to see the pages with the most traffic first, as these are likely to be your most powerful pages.

Once you’ve got your list, it’s just a question of working through it and adding internal links from these powerful pages to your new page.
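If you have crawl data exported from your own site, the same filter-and-sort logic can be sketched in Python. The page dictionaries below, with `url`, `text`, `links`, and `organic_traffic` keys, are an assumed data shape rather than an actual Site Audit export format, and `link_source_candidates` is a hypothetical helper.

```python
def link_source_candidates(pages, keyword, new_page_url):
    """Find pages mentioning keyword that don't yet link to new_page_url."""
    keyword = keyword.lower()
    matches = [
        page
        for page in pages
        if keyword in page["text"].lower()
        and page["url"] != new_page_url
        and new_page_url not in page["links"]
    ]
    # Highest-traffic pages first, as a proxy for the most powerful pages.
    return sorted(matches, key=lambda page: page["organic_traffic"], reverse=True)


new_page = "/blog/online-advertising-guide"
pages = [
    {"url": "/blog/ppc-basics", "text": "Online advertising starts with a budget.",
     "links": [], "organic_traffic": 1200},
    {"url": "/blog/seo-vs-ppc", "text": "Compare SEO with online advertising.",
     "links": [new_page], "organic_traffic": 5000},
    {"url": "/blog/email-tips", "text": "Email is not dead.",
     "links": [], "organic_traffic": 9000},
    {"url": "/blog/marketing-channels", "text": "Channels include online advertising.",
     "links": [], "organic_traffic": 3400},
]
for page in link_source_candidates(pages, "online advertising", new_page):
    print(page["url"], page["organic_traffic"])
```

Unlike the manual steps, this sketch also skips pages that already link to the new post, so the list only contains pages where a link is still missing.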

To stay on top of your SEO game, you’ll need to audit your internal links on a periodic basis.

Manually checking your internal links one by one is time-consuming and, for bigger sites, almost impossible.

The best solution is to use a tool like Site Audit, which allows you to schedule the crawls of your website on a daily, weekly, or monthly basis.

With this tool, you can:

- Fix broken internal links to 4XX pages.

- Identify opportunities for new internal links.

- Fix orphan pages.

Let’s take a look at how to do these things.

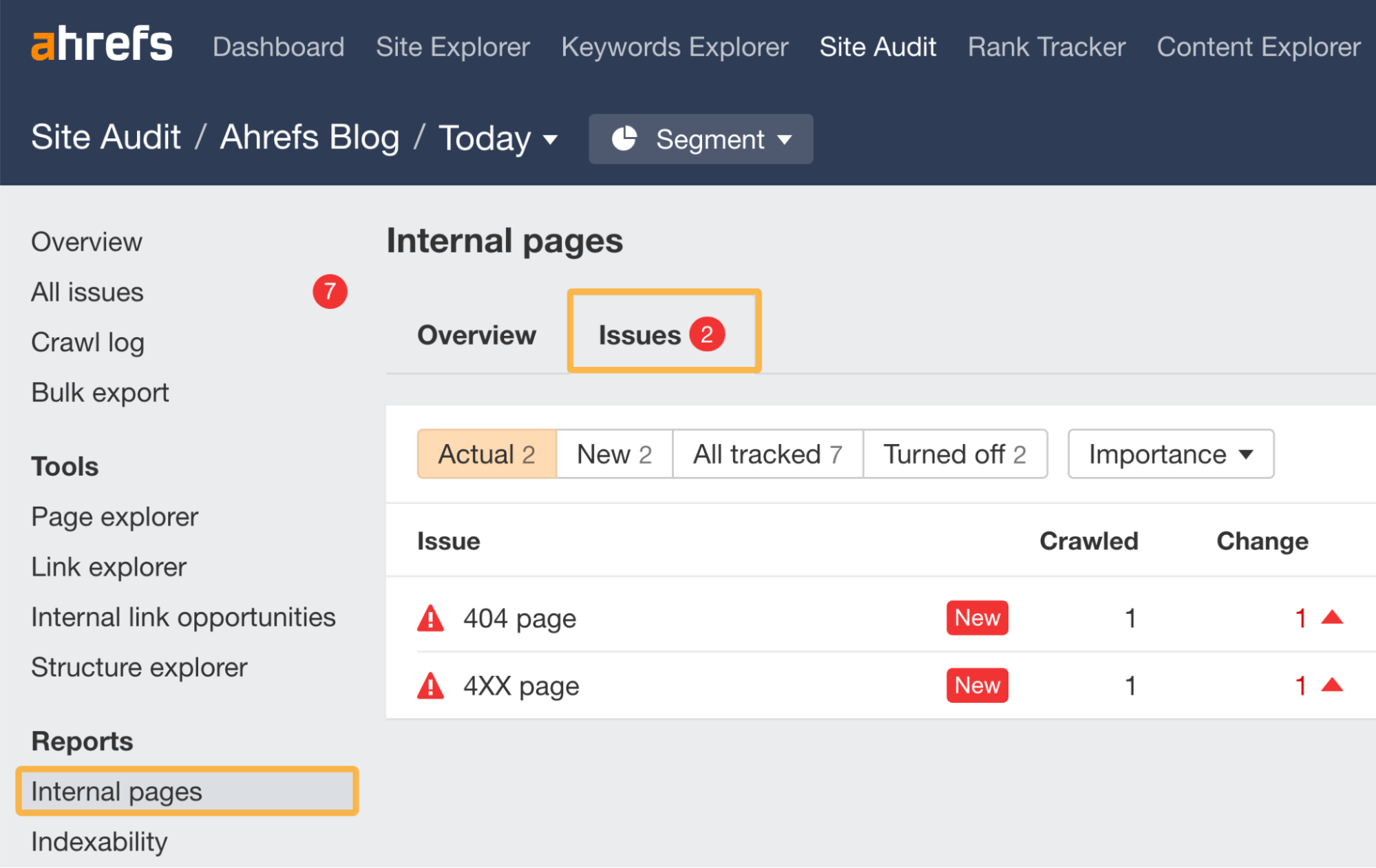

1. Fix broken internal links to reclaim authority

Once you’ve run a Site Audit crawl, head over to the Internal pages report and click on “Issues.”

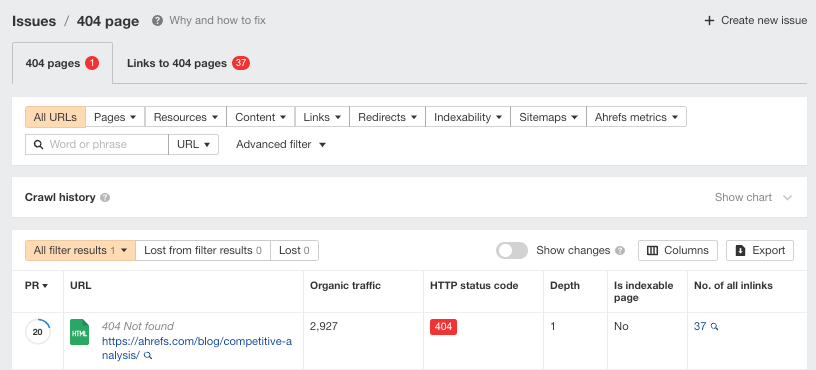

In the above example, we can see there are some issues. Let’s click on the “4XX page” to look at one issue in more detail.

We can see this issue has been caused by a blog post that we took down but that still has 37 internal links pointing to it.

If the page was permanently removed, you’d need to remove the internal links pointing to this 404 page.
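To show the logic behind this kind of report, here is a small sketch that groups the pages linking to each broken URL. It assumes you already have a list of `(source, target)` internal links and an HTTP status code for each target from a crawl; the `broken_internal_links` function and the data are hypothetical and don't reflect how Site Audit works internally.

```python
def broken_internal_links(links, status_codes):
    """Map each 4XX target URL to the list of pages that link to it."""
    broken = {}
    for source, target in links:
        status = status_codes.get(target)
        if status is not None and 400 <= status < 500:
            broken.setdefault(target, []).append(source)
    return broken


links = [
    ("/blog/a", "/blog/removed-post"),
    ("/blog/b", "/blog/removed-post"),
    ("/blog/a", "/pricing"),
]
status_codes = {"/blog/removed-post": 404, "/pricing": 200}

for target, sources in broken_internal_links(links, status_codes).items():
    print(f"{target} is linked from {len(sources)} page(s): {sources}")
```

The list of source pages for each broken URL is effectively your to-do list: each one needs its link removed or updated.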

Tip

If the 404 page had important external links pointing to it, then you might want to consider 301 redirecting the page. To check this, you can use Ahrefs’ Site Explorer’s Broken backlinks report.

If we plug in the exact URL and then head over to the Broken backlinks report, we can see this page actually has quite a few external links pointing to it.

As a result of this check, we might want to consider 301 redirecting this page to a near equivalent.

2. Fix orphan pages

Orphan pages are pages with no incoming internal links.

If you have important pages on your site that are classified as orphan pages in your Site Audit report, then you have an issue.

The good news is that it can be solved by simply adding a new internal link to the orphan page(s) in question.

Once you’ve run your audit, you can see if you have any orphan pages by:

- Clicking on the Links report.

- Then selecting the “Issues” tab.

Site Audit > Links > Issues tab > Orphan page (has no incoming internal links)

In the example below, we can see this site has one orphan page.

No important pages should be orphaned for two reasons:

- Google won’t be able to find them (unless you submit your sitemap via Google Search Console or they have backlinks from crawled pages on other sites).

- No PageRank will be transferred via internal links—as there are none.

Skim the list and make sure no important pages appear here.

If you have a lot of pages on your site, try sorting the list by organic traffic from high to low.

Orphaned pages that still receive organic traffic would likely get even more traffic if internally linked to.
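The orphan check itself boils down to comparing the pages you know exist against the pages that receive at least one internal link. Here is a minimal sketch, assuming your full page list comes from something like a sitemap and that traffic figures are available as a simple dictionary; the `orphan_pages` function and the data are hypothetical.

```python
def orphan_pages(all_pages, links, traffic=None, homepage="/"):
    """Return pages with no incoming internal links, highest traffic first."""
    traffic = traffic or {}
    # A page linking to itself doesn't count as an incoming link.
    linked_to = {target for source, target in links if source != target}
    orphans = [p for p in all_pages if p != homepage and p not in linked_to]
    return sorted(orphans, key=lambda p: traffic.get(p, 0), reverse=True)


all_pages = ["/", "/blog", "/blog/post-a", "/landing/old-campaign", "/landing/webinar"]
links = [("/", "/blog"), ("/blog", "/blog/post-a"), ("/blog/post-a", "/")]
traffic = {"/landing/old-campaign": 40, "/landing/webinar": 310}

print(orphan_pages(all_pages, links, traffic))
```

The homepage is excluded on the assumption that it's the entry point to the site. Sorting by traffic puts the orphans most worth fixing at the top.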

3. Identify opportunities for new internal links

Finding new internal link opportunities is another time-consuming process if it’s done manually, but you can identify them in bulk using Site Audit.

To do this, click the Internal link opportunities report in Site Audit.

You’ll see a bunch of suggestions on how to improve your internal linking using new links.

The best bit about this report, in my opinion, is that it suggests exactly where to place the internal link.

In the example above, Site Audit is suggesting that we add a link to our page on faceted navigation from this passage of text.

I’d advise reviewing the recommendations and adding links to the most important pages you want to highlight.
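I don't know exactly how Site Audit generates these suggestions, but the general idea can be approximated: look for pages that mention a target page's keyword without linking to it, and return the sentence where the mention occurs. The sketch below is that approximation only; the page dictionaries, the `link_opportunities` function, and the naive sentence splitting are all assumptions.

```python
import re


def link_opportunities(pages, target_url, keyword):
    """Return (source_url, sentence) pairs where keyword appears unlinked."""
    results = []
    pattern = re.compile(re.escape(keyword), re.IGNORECASE)
    for page in pages:
        # Skip the target itself and pages that already link to it.
        if page["url"] == target_url or target_url in page["links"]:
            continue
        # Naive sentence split: break after ., ! or ? followed by whitespace.
        for sentence in re.split(r"(?<=[.!?])\s+", page["text"]):
            if pattern.search(sentence):
                results.append((page["url"], sentence))
                break  # one suggestion per page is enough
    return results


pages = [
    {"url": "/blog/ecommerce-seo", "links": ["/blog/keyword-research"],
     "text": "Large stores need clean URLs. Faceted navigation can create "
             "duplicate pages. Plan for it early."},
    {"url": "/blog/technical-seo", "links": ["/blog/faceted-navigation"],
     "text": "We cover faceted navigation in depth elsewhere."},
    {"url": "/blog/faceted-navigation", "links": [],
     "text": "Faceted navigation explained."},
]
for source, sentence in link_opportunities(
    pages, "/blog/faceted-navigation", "faceted navigation"
):
    print(f"{source}: {sentence}")
```

Returning the surrounding sentence rather than just the page URL mirrors what makes the report useful: you can see at a glance where the link would go.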

Final thoughts

Internal linking isn’t technically difficult, but it takes time and patience to execute your plan. Also, making changes to your site can cause more issues—which, if left undiagnosed, can impact your site’s performance.

In my opinion, the only sustainable way to monitor your internal links is by using a tool like Ahrefs’ Site Audit.

Got questions? Ping me on Twitter.