
7 Popular White Hat Link Building Techniques (That SEOs Are Still Using Today)

Every year, the SEO agency Aira conducts a State of Link Building survey.

In the most recent edition, 270 SEOs voted for the link-building techniques they still use today.

Let’s look at how to do these seven tactics.

Also known as creating link bait, the goal is to create something so valuable and interesting that other people want to link to it.

How to do it

There’s no surefire way to do this. If it were that easy, everyone would be doing it. That said, there are ways to find link-bait ideas. One is to piggyback on what’s already working for your competitors.

Here’s how:

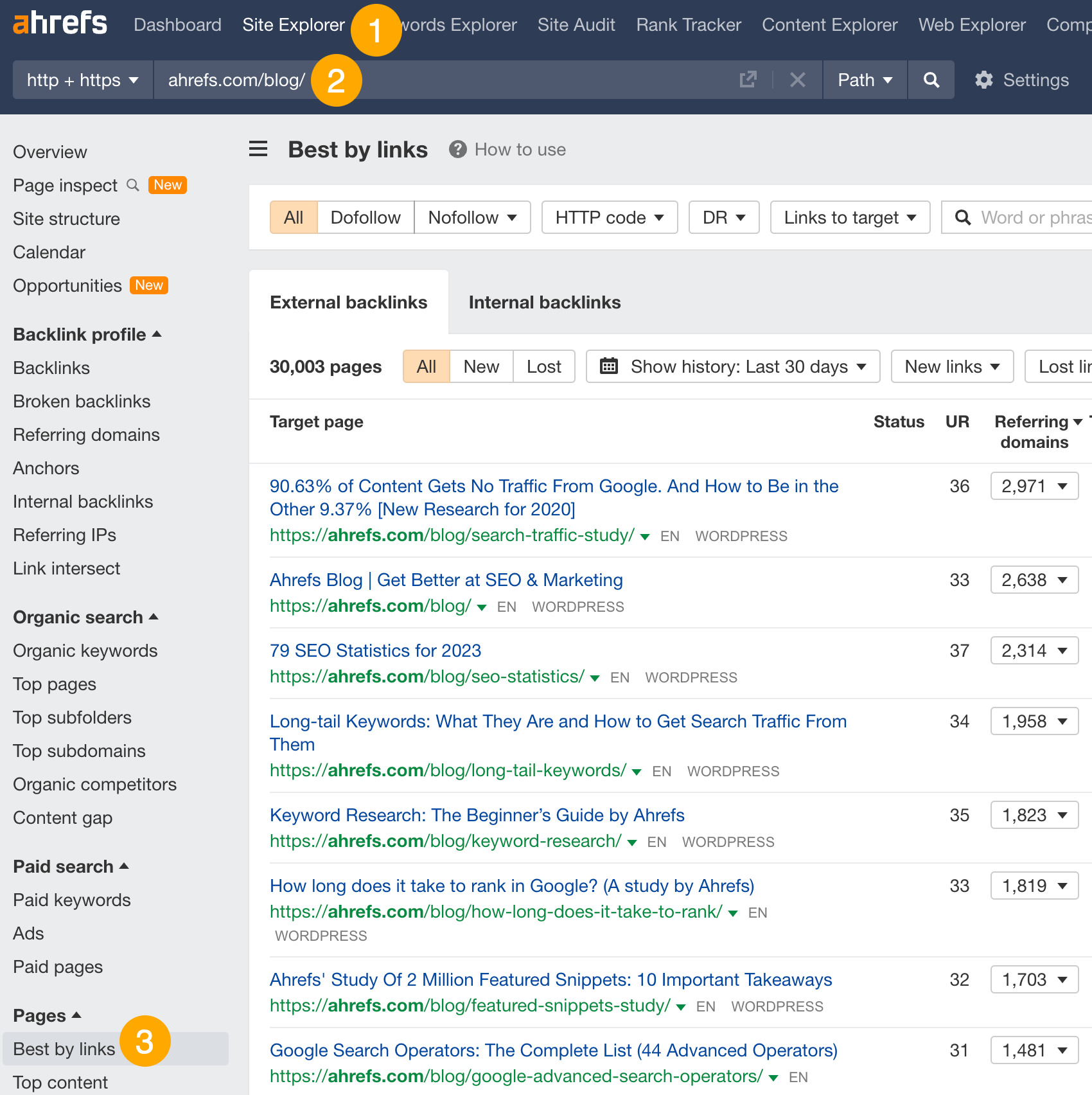

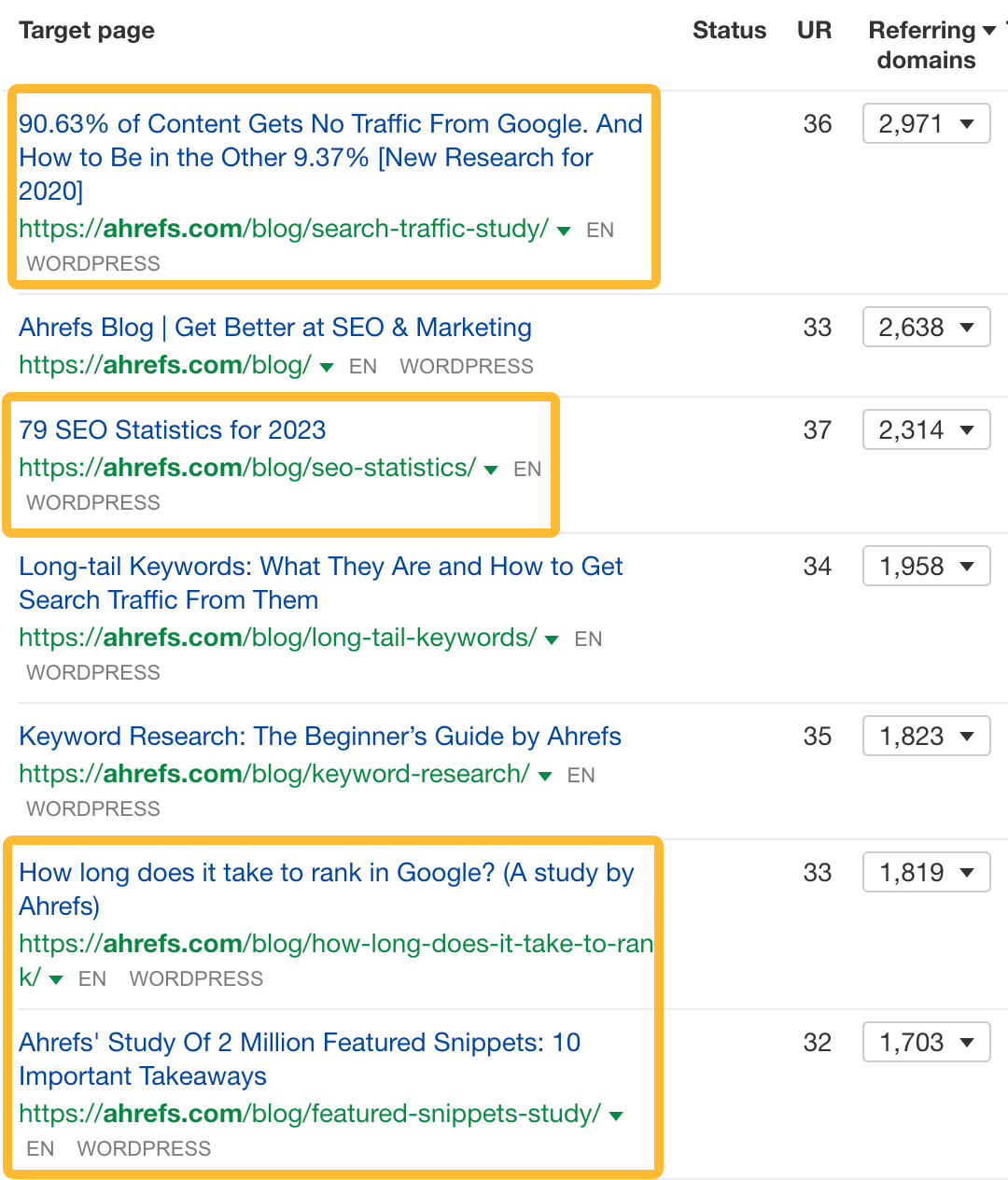

- Go to Ahrefs’ Site Explorer

- Enter your competitor’s domain

- Go to the Best by links report

This report shows which of your competitor’s pages attracted the most backlinks. Eyeball the list to see which topics and formats resonate in your industry.

For example, data studies and statistics are popular in the SEO niche:

If you’re in this space, you can probably attract links to this kind of content, too.

Just remember that creating content “designed to entice links” doesn’t mean you’ll automatically get links. People won’t magically find your content and link to it; you need to promote it. Learn how in my content promotion post.

This technique is about looking at how your competitors get links and how you can do the same for your website.

How to do it

First, you’ll need to check the backlink profile of your competitors’ websites.

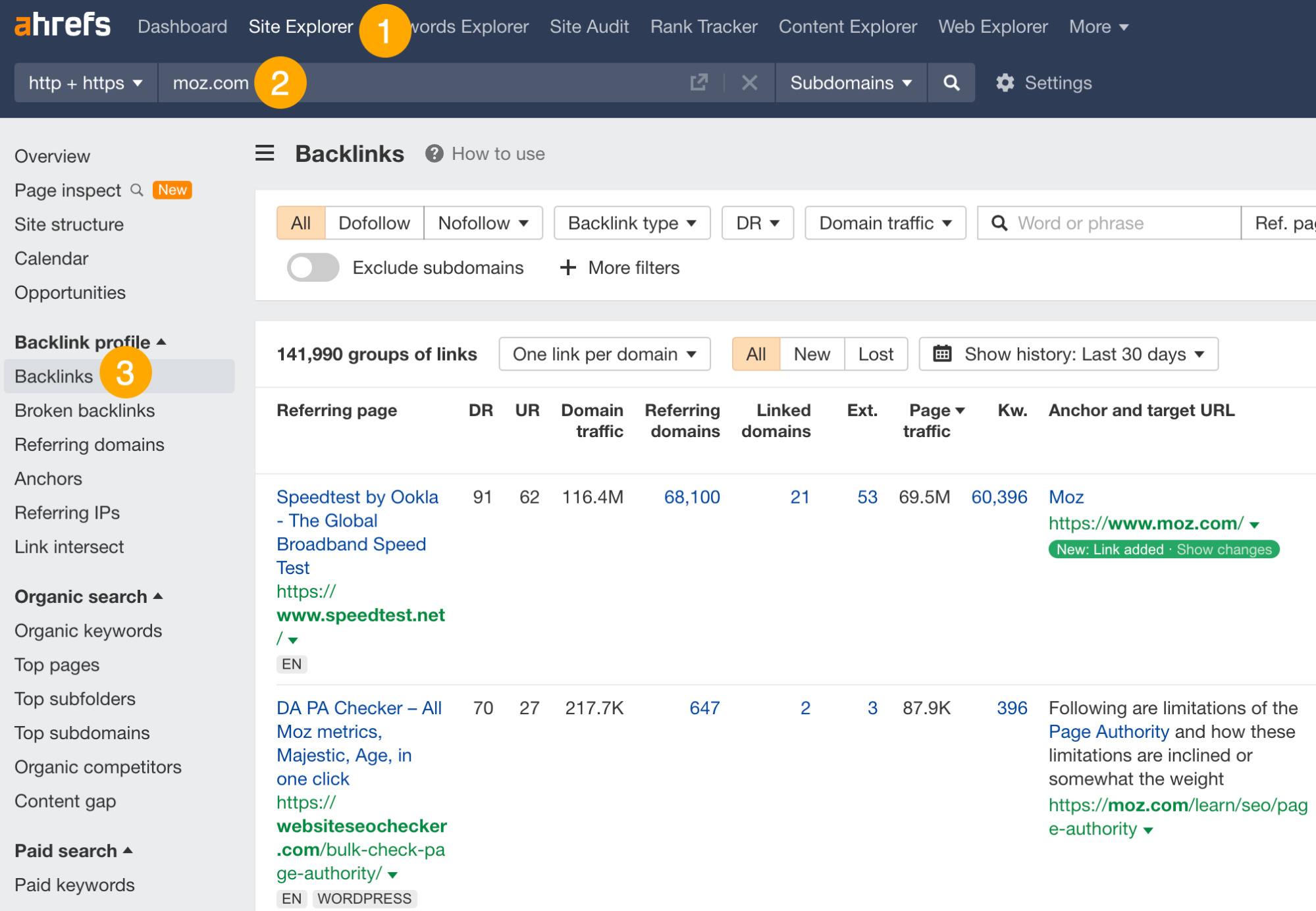

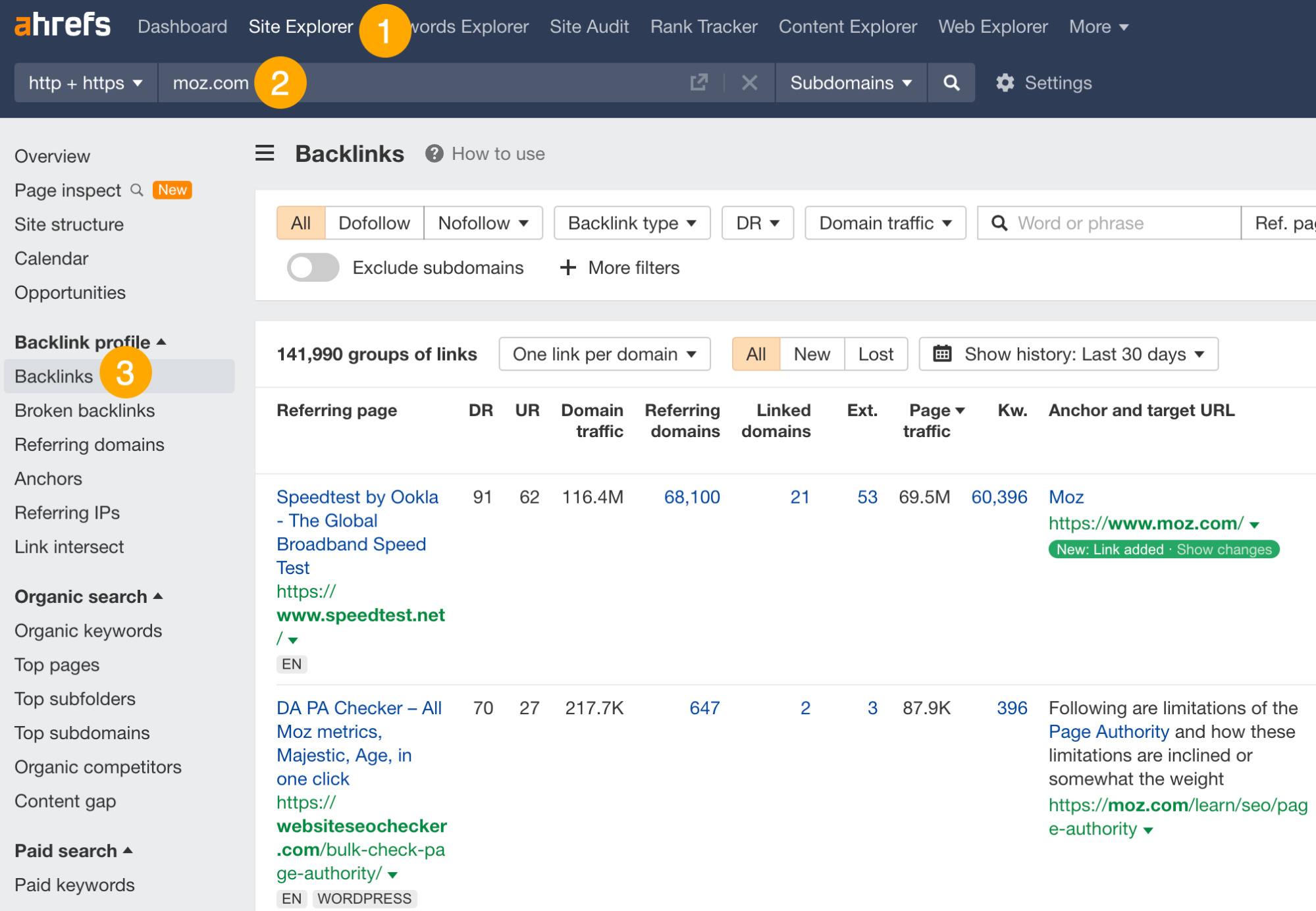

Here’s how:

- Go to Ahrefs’ Site Explorer

- Enter your competitor’s domain

- Go to the Backlinks report

Rather than identifying specific links to replicate, look for patterns. This will help you spot link-building strategies that your competitors are using successfully.
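If you export the referring page URLs from the Backlinks report, one rough first pass at spotting patterns is to tag each URL by keywords in its path and count the tags. This is a minimal Python sketch, not an Ahrefs feature: the keyword lists in `PATTERNS` are hypothetical examples you’d adapt to your niche, and URL keywords are only a rough signal, so you’ll still want to eyeball the pages themselves.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical mapping of link-building patterns to URL keywords; adapt to your niche.
PATTERNS = {
    "podcast": ("podcast", "episode"),
    "guest post": ("guest-post", "contributor"),
    "interview": ("interview",),
}

def tag_backlink(referring_url: str) -> str:
    """Return the first pattern whose keyword appears in the URL path, else "other"."""
    path = urlparse(referring_url).path.lower()
    for pattern, keywords in PATTERNS.items():
        if any(keyword in path for keyword in keywords):
            return pattern
    return "other"

def count_patterns(referring_urls: list[str]) -> Counter:
    """Count how many backlinks fall under each pattern."""
    return Counter(tag_backlink(url) for url in referring_urls)

# Made-up referring URLs for illustration.
urls = [
    "https://example.fm/podcast/ep-12-seo-tools",
    "https://another.example/episode/link-building-talk",
    "https://blog.example.org/interview-with-an-seo",
    "https://news.example.net/seo-tools-roundup",
]
print(count_patterns(urls).most_common())
```

A cluster of "podcast" tags like this is the kind of signal that points to a repeatable tactic rather than a one-off link.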

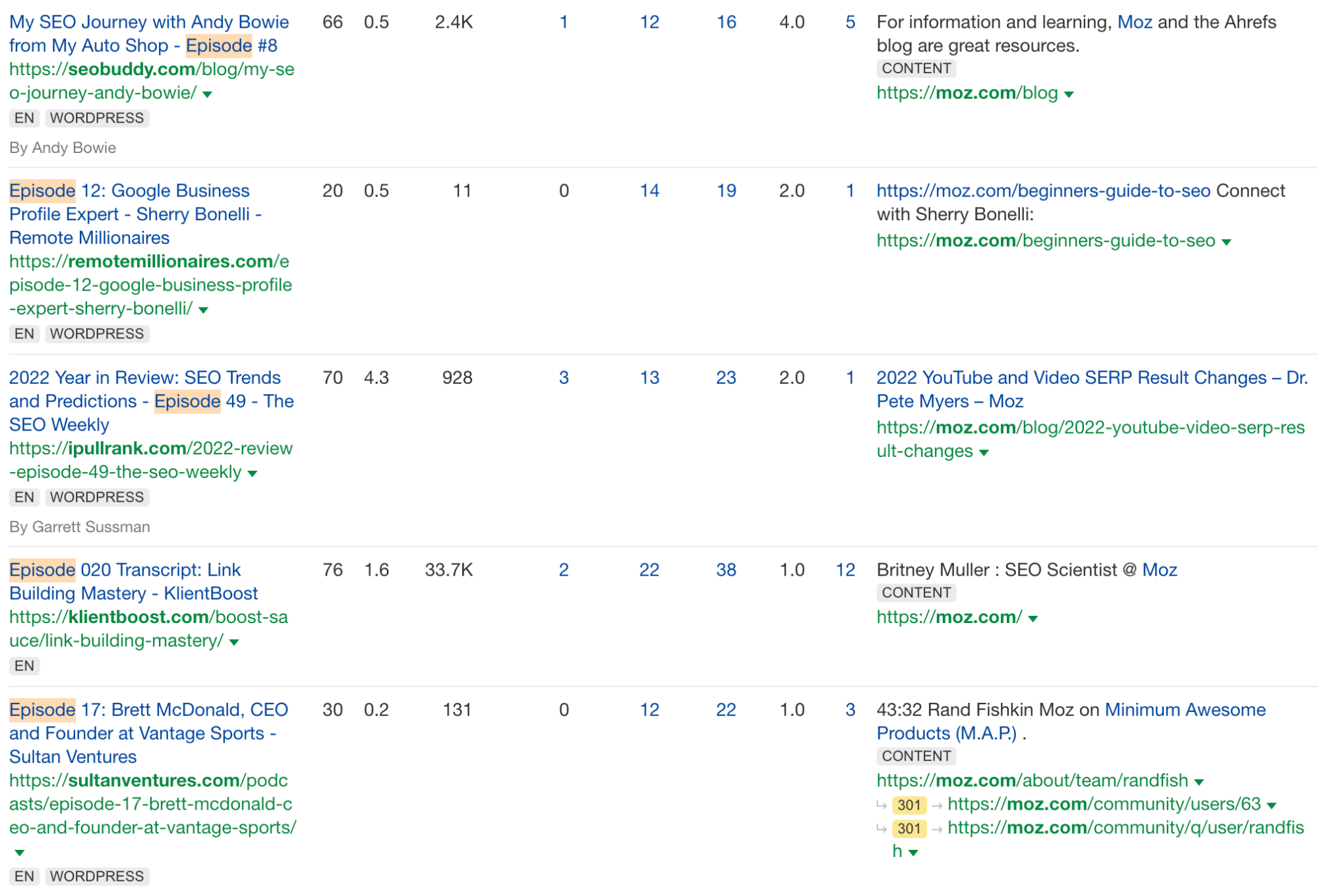

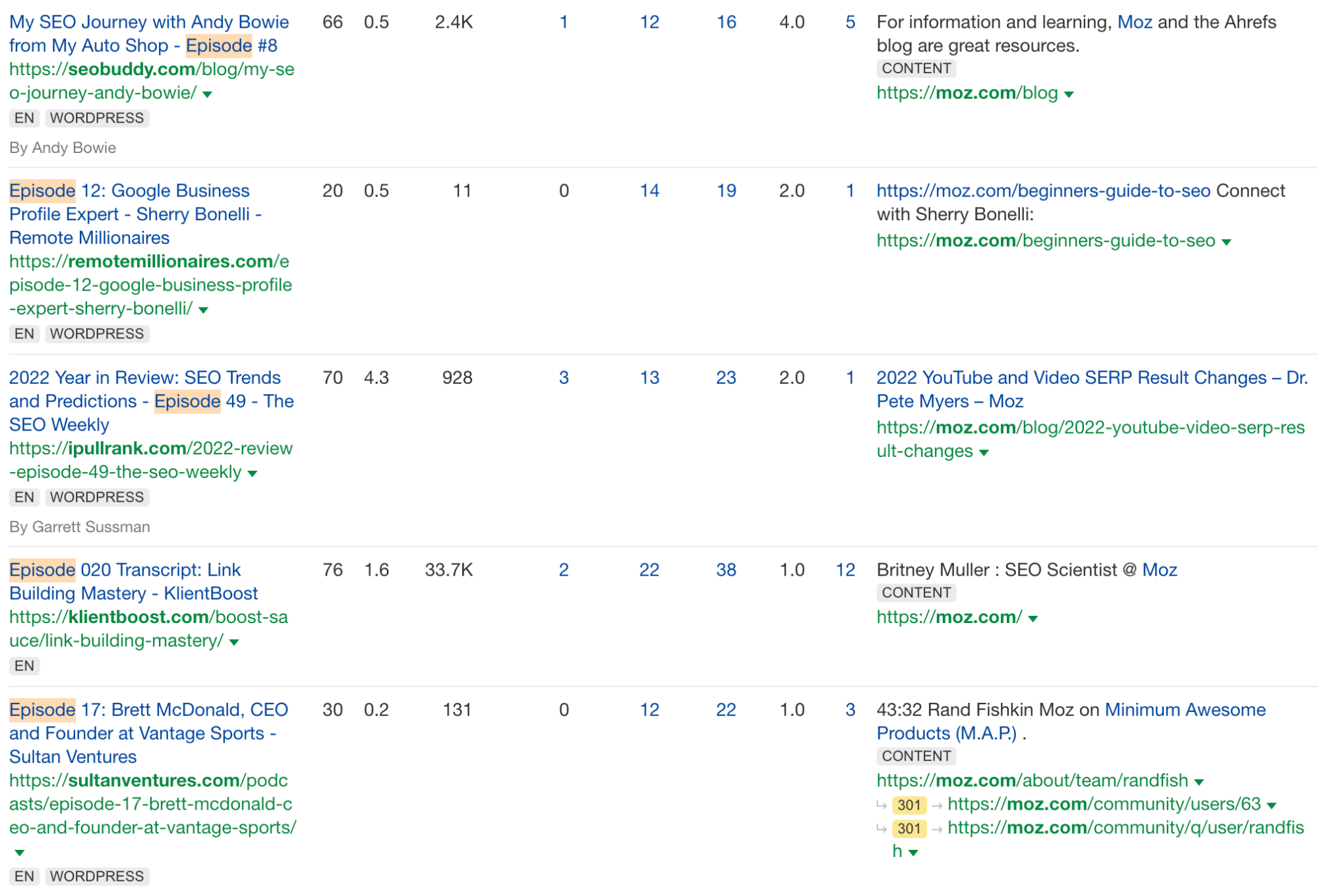

For example, we see that the Moz team has appeared on multiple podcasts:

This tells us that podcast link building works in our industry, so we can do it too. We can find podcast opportunities by looking for shows our competitors have already appeared on, and we can find even more by following the process in this video:

Guest posting is where you write a post for someone else’s website.

How to do it

The best websites to write for are those that already get lots of traffic to content on topics similar to the one you want to write about. Here’s how to find them:

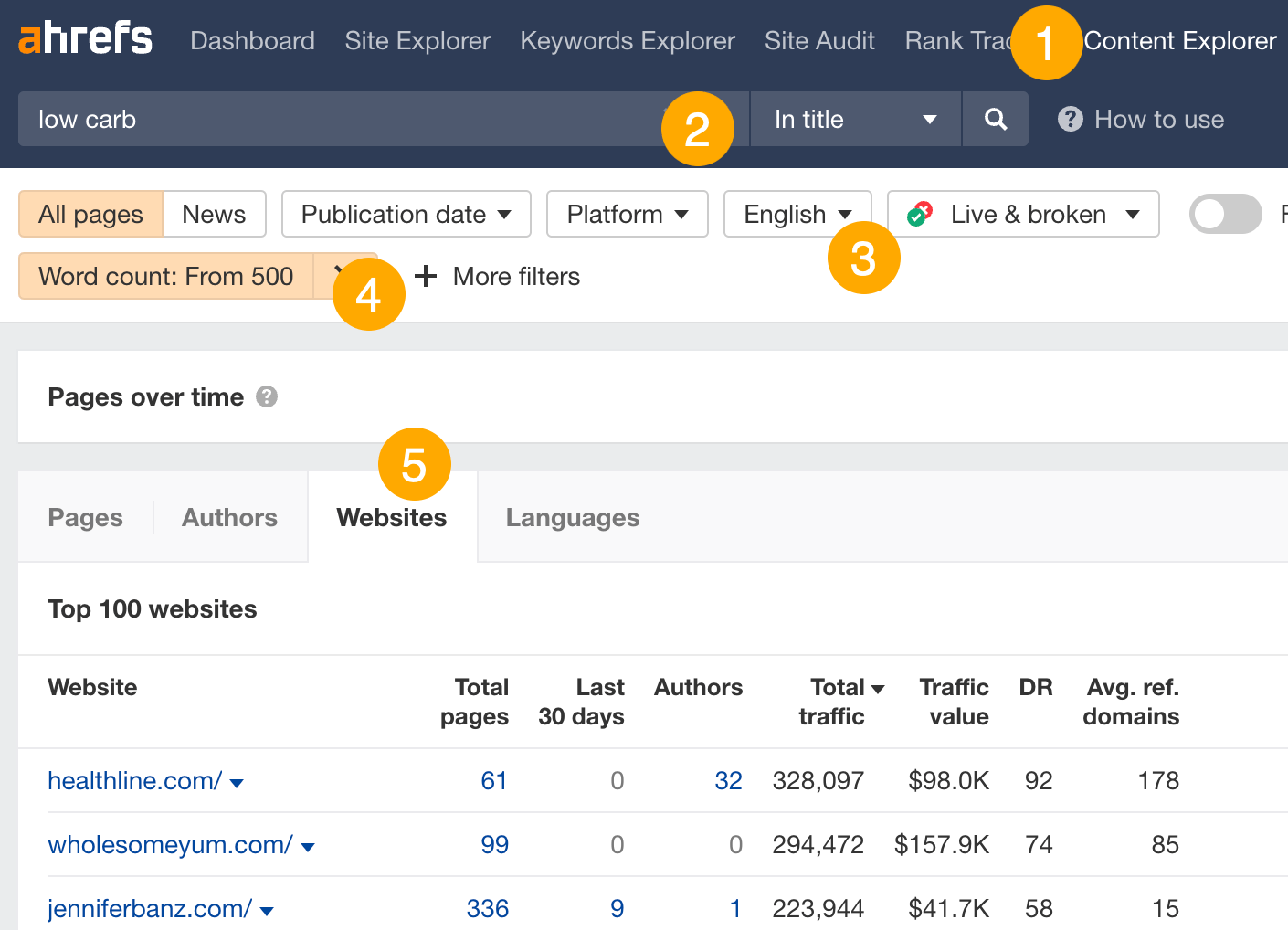

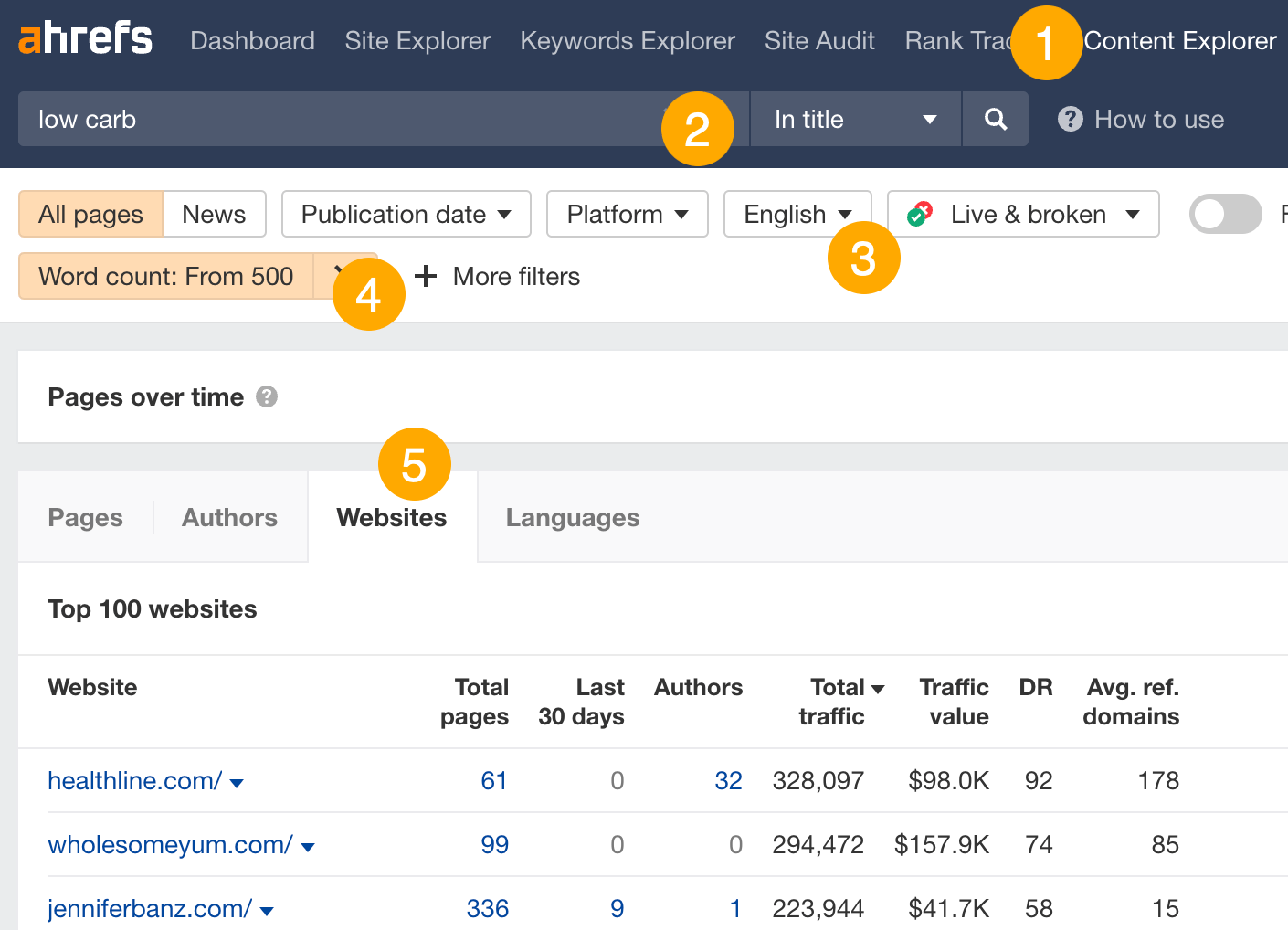

- Open Ahrefs’ Content Explorer

- Enter a related topic and change the dropdown to “In title”

- Filter for English results

- Filter for results with 500+ words

- Go to the “Websites” tab

This shows you the websites getting the most organic search traffic to content about your topic.

From here, eyeball the Authors column and prioritize websites with multiple authors, as this often indicates that they accept guest posts.

For example, Perfect Keto gets an estimated 19K monthly search visits from 20 authors for the topic “low carb”:

It’s likely that some of these are guest authors, so this could be a good site to pitch.
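If you export the Websites tab, the same prioritization can be sketched in a few lines of Python. The `Website` fields and the `min_authors=2` threshold are assumptions for illustration, not Ahrefs’ export format. The Perfect Keto figures (19K visits, 20 authors) come from the example above; the other two sites are made up.

```python
from dataclasses import dataclass

@dataclass
class Website:
    domain: str
    monthly_traffic: int  # estimated organic search traffic to pages on the topic
    authors: int          # distinct authors of those pages

def guest_post_candidates(sites: list[Website], min_authors: int = 2) -> list[Website]:
    """Keep multi-author sites (often a sign of guest posts), highest traffic first."""
    multi_author = [site for site in sites if site.authors >= min_authors]
    return sorted(multi_author, key=lambda site: site.monthly_traffic, reverse=True)

sites = [
    Website("perfectketo.com", 19_000, 20),         # figures from the example above
    Website("solo-keto-blog.example", 25_000, 1),   # made up
    Website("lowcarb-news.example", 8_000, 3),      # made up
]
for site in guest_post_candidates(sites):
    print(site.domain, site.monthly_traffic, site.authors)
```

Note that the single-author site is dropped even though it has the most traffic, because it’s unlikely to take outside contributors.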

Reactive PR is where you react quickly to time-sensitive opportunities to earn links.

How to do it

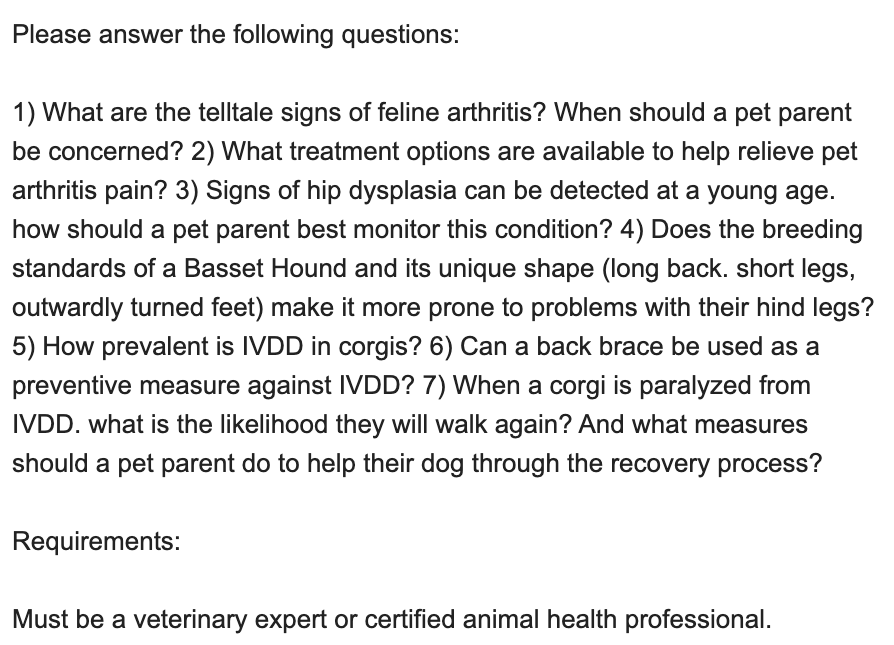

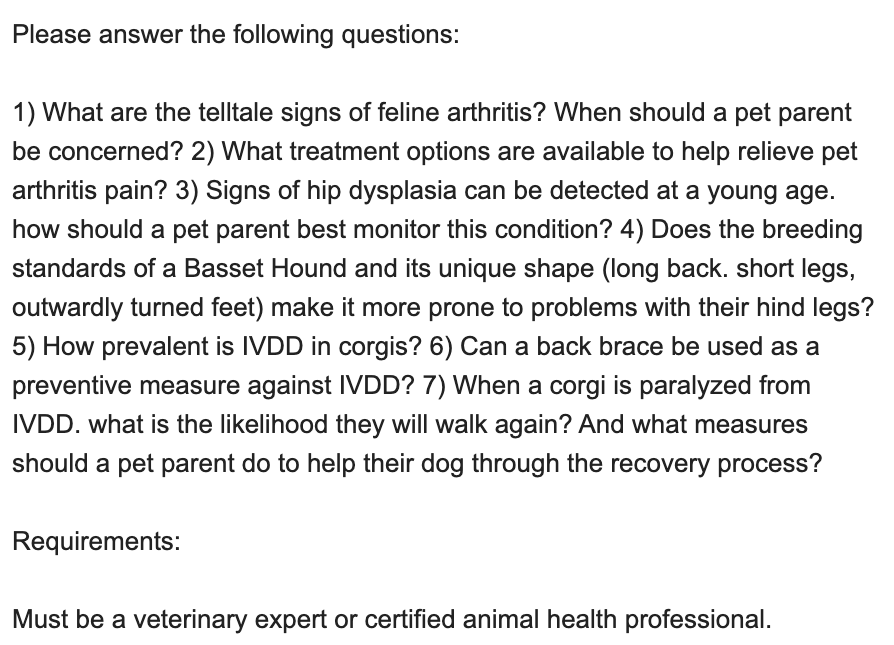

Approaches vary by industry, but a common one is to use Help a Reporter Out (HARO). This platform connects journalists looking for quotes and feedback with expert sources. You can sign up for free and respond to any queries you can answer.

For example, a journalist at HandicappedPets.com put out a query:

Dr. Jonathan Roberts, a veterinarian at PetKeen.com, responded and earned a link:

After you sign up for HARO, you’ll receive three emails a day.

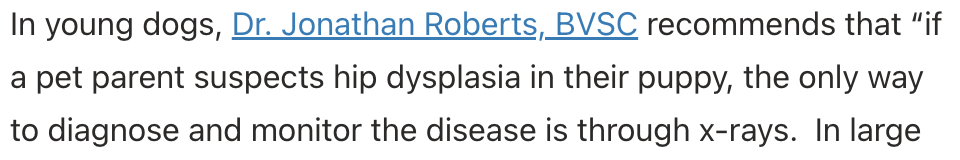

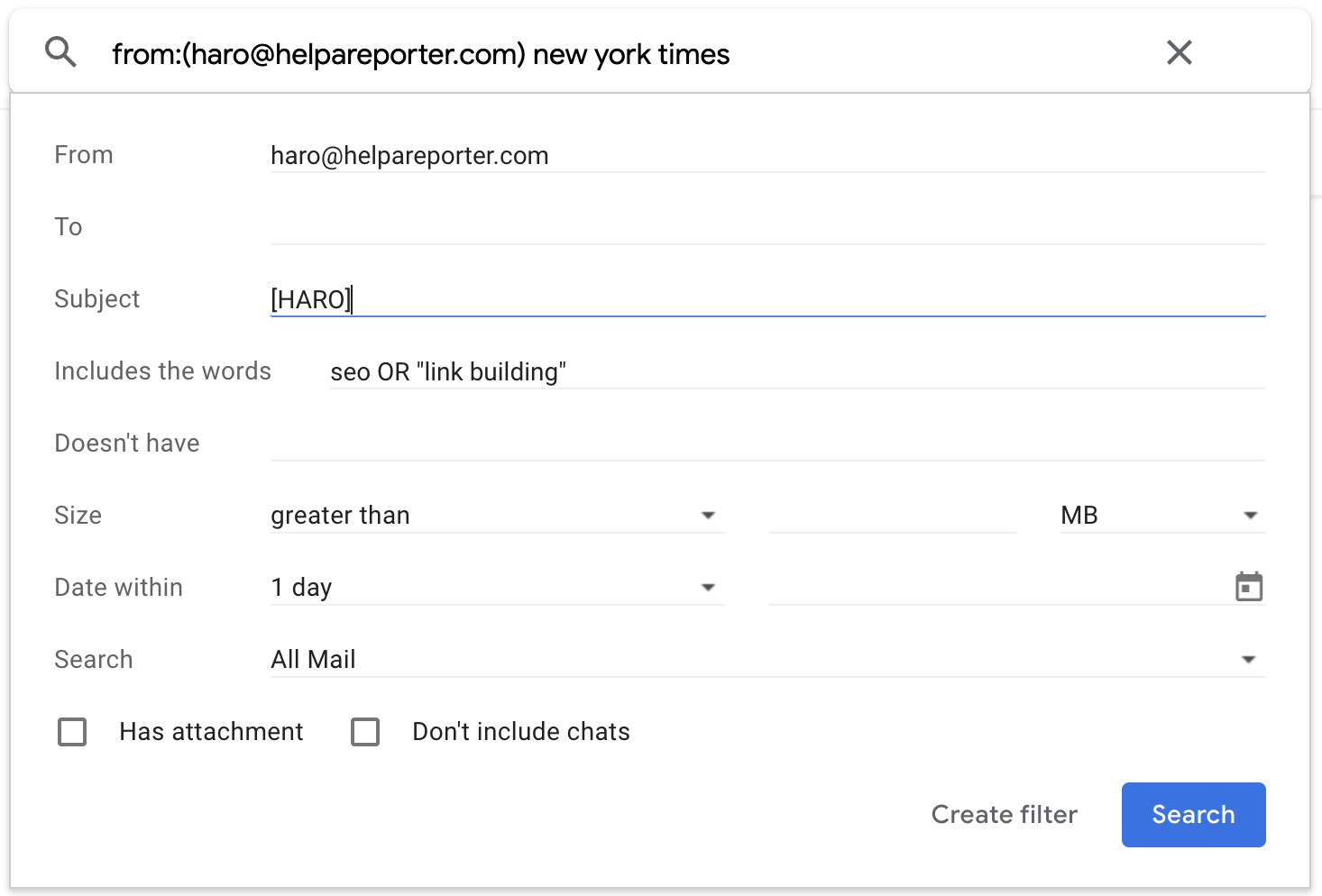

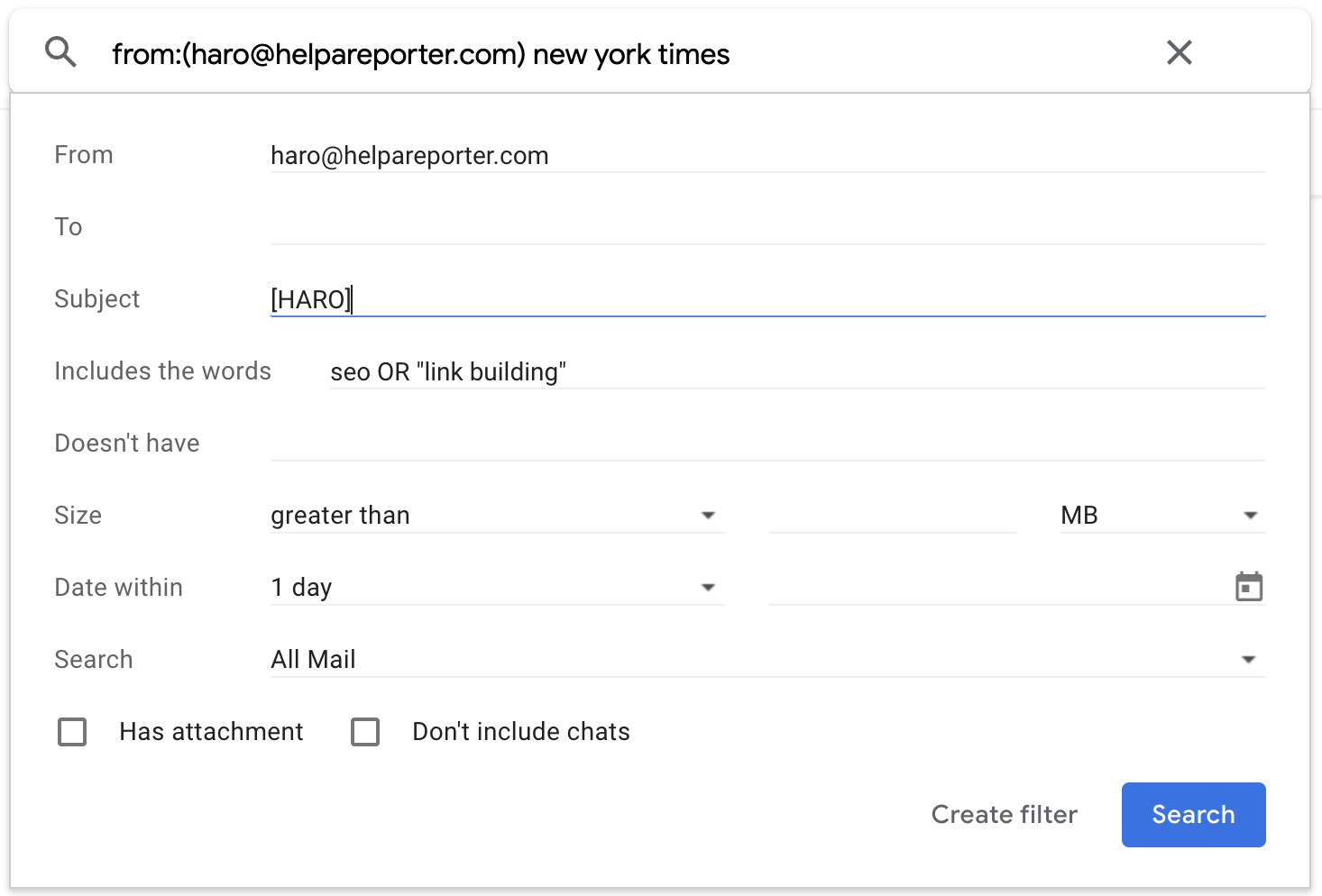

Since you should only respond to relevant queries that you have the expertise to answer, I recommend setting up some Gmail filters to keep irrelevant opportunities out of your inbox.

Here’s how to do it:

- Click the search options caret in the Gmail search bar

- Set the “From” field to HARO’s sending address

- Set the “Subject” to “[HARO]”

- Set “Has the words” to keywords you want to monitor (you can use the OR operator to list multiple keywords here)

Hit search and check that everything’s correct. If all looks good, hit the search options caret again and click “Create filter.”
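The filter fields above correspond to Gmail search operators (`from:`, `subject:`, uppercase `OR`, and parentheses for grouping), so you could also build the equivalent query as a single string, paste it into the search bar, and check it via search options before clicking “Create filter.” Here’s a minimal Python sketch; `haro-sender@example.com` is a hypothetical placeholder for HARO’s real sending address, and the keywords are just examples.

```python
def haro_gmail_query(sender: str, keywords: list[str]) -> str:
    """Build a Gmail search string equivalent to the filter fields above."""
    # Wrap multi-word keywords in quotes so Gmail matches them as exact phrases.
    terms = [f'"{kw}"' if " " in kw else kw for kw in keywords]
    # Gmail requires OR in uppercase; parentheses group the alternatives.
    has_words = "(" + " OR ".join(terms) + ")"
    return f'from:{sender} subject:"[HARO]" {has_words}'

# "haro-sender@example.com" is a placeholder, not HARO's real address.
print(haro_gmail_query("haro-sender@example.com", ["keto", "low carb", "SEO"]))
# from:haro-sender@example.com subject:"[HARO]" (keto OR "low carb" OR SEO)
```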

Broken link building is where you:

- Find a dead page with links

- Create your own page on the topic

- Ask people linking to the dead page to link to you instead

How to do it

First, you’ll need to find a dead page with links. Here’s how:

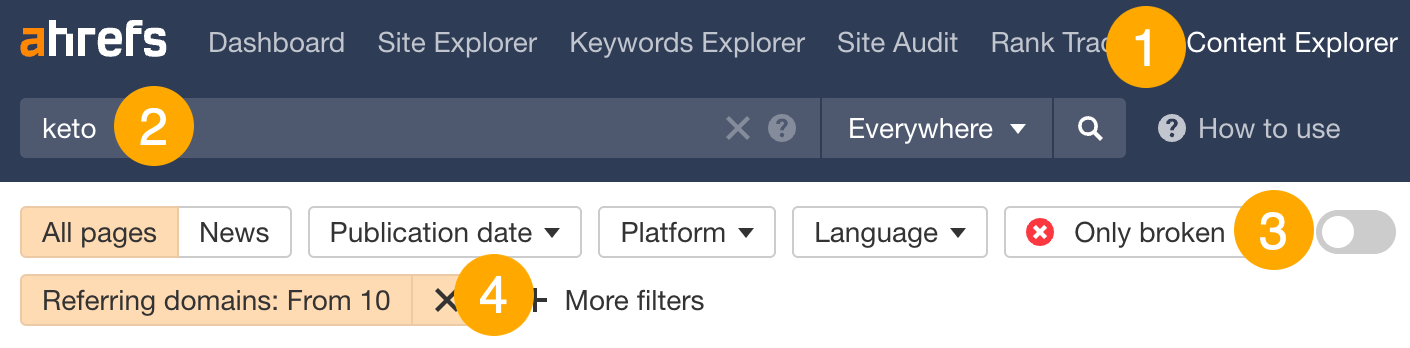

- Go to Ahrefs’ Content Explorer

- Search for a topic

- Filter for broken pages only

- Filter for pages with 10+ referring domains
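If you’d rather work from an export, the two filters above boil down to a simple rule. This Python sketch assumes hypothetical `Page` records with an HTTP status and a referring-domain count, and treats any 4xx or 5xx status as “broken,” which may not match exactly how Ahrefs defines it. The 104-referring-domain keto page mirrors the example below; the URLs are made up.

```python
from typing import NamedTuple

class Page(NamedTuple):
    url: str
    http_status: int        # status code returned when the page is crawled
    referring_domains: int  # unique domains linking to the page

def broken_link_targets(pages: list[Page], min_ref_domains: int = 10) -> list[Page]:
    """Dead pages (assumed: any 4xx/5xx status) with enough referring domains, most-linked first."""
    dead = [p for p in pages if p.http_status >= 400 and p.referring_domains >= min_ref_domains]
    return sorted(dead, key=lambda p: p.referring_domains, reverse=True)

pages = [
    Page("https://a.example/keto-guide", 404, 104),
    Page("https://b.example/keto-recipes", 200, 250),
    Page("https://c.example/old-keto-post", 410, 3),
    Page("https://d.example/keto-snacks", 404, 12),
]
for page in broken_link_targets(pages):
    print(page.url, page.referring_domains)
```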

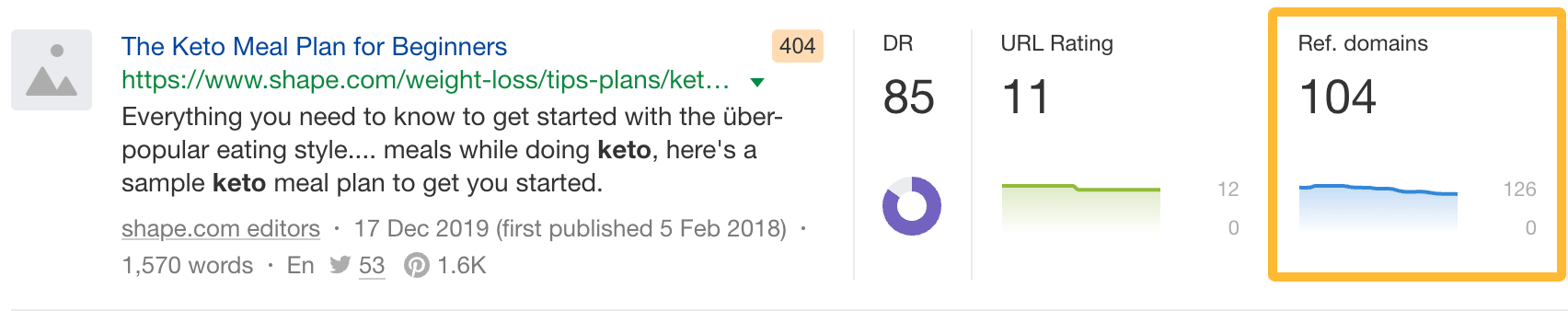

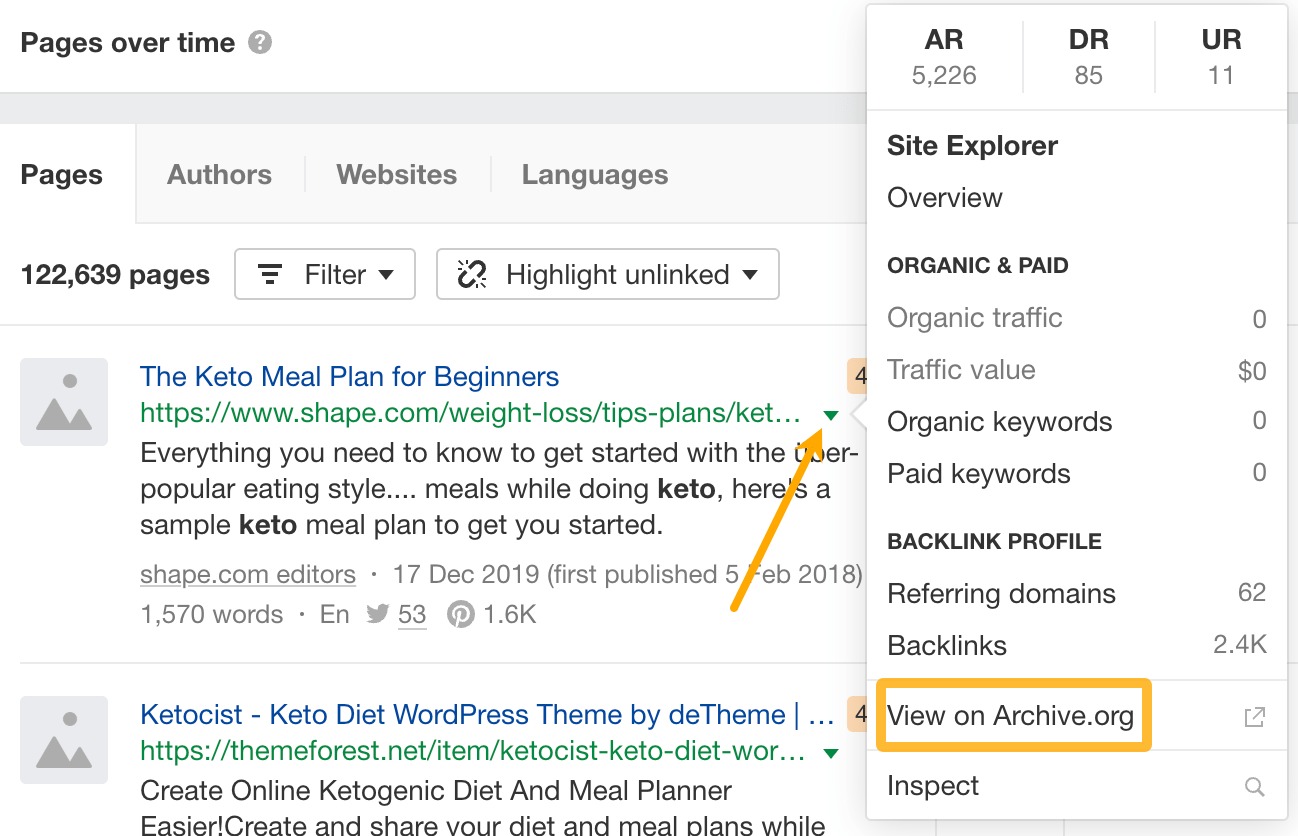

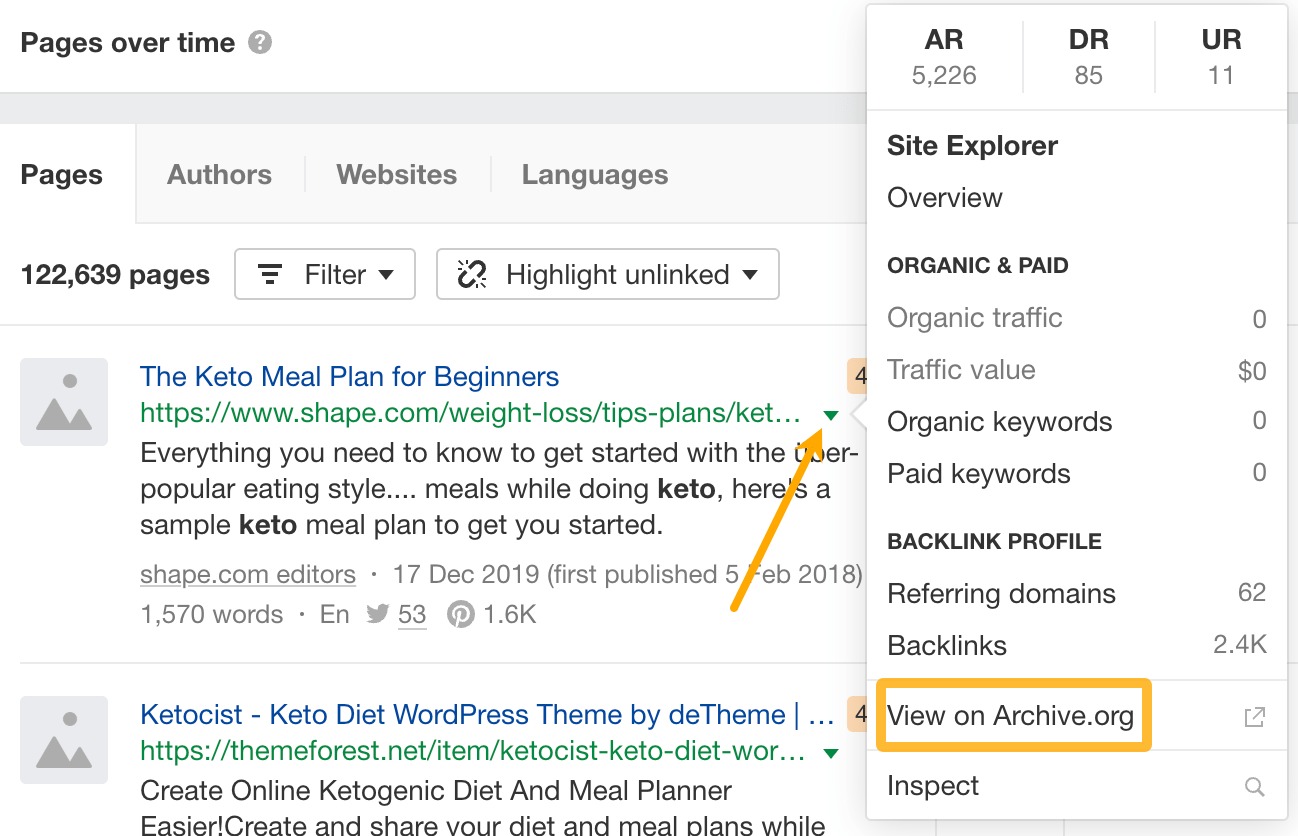

For example, searching for “keto” finds this page with 104 referring domains:

If you visit the page, you’ll see that it is indeed dead:

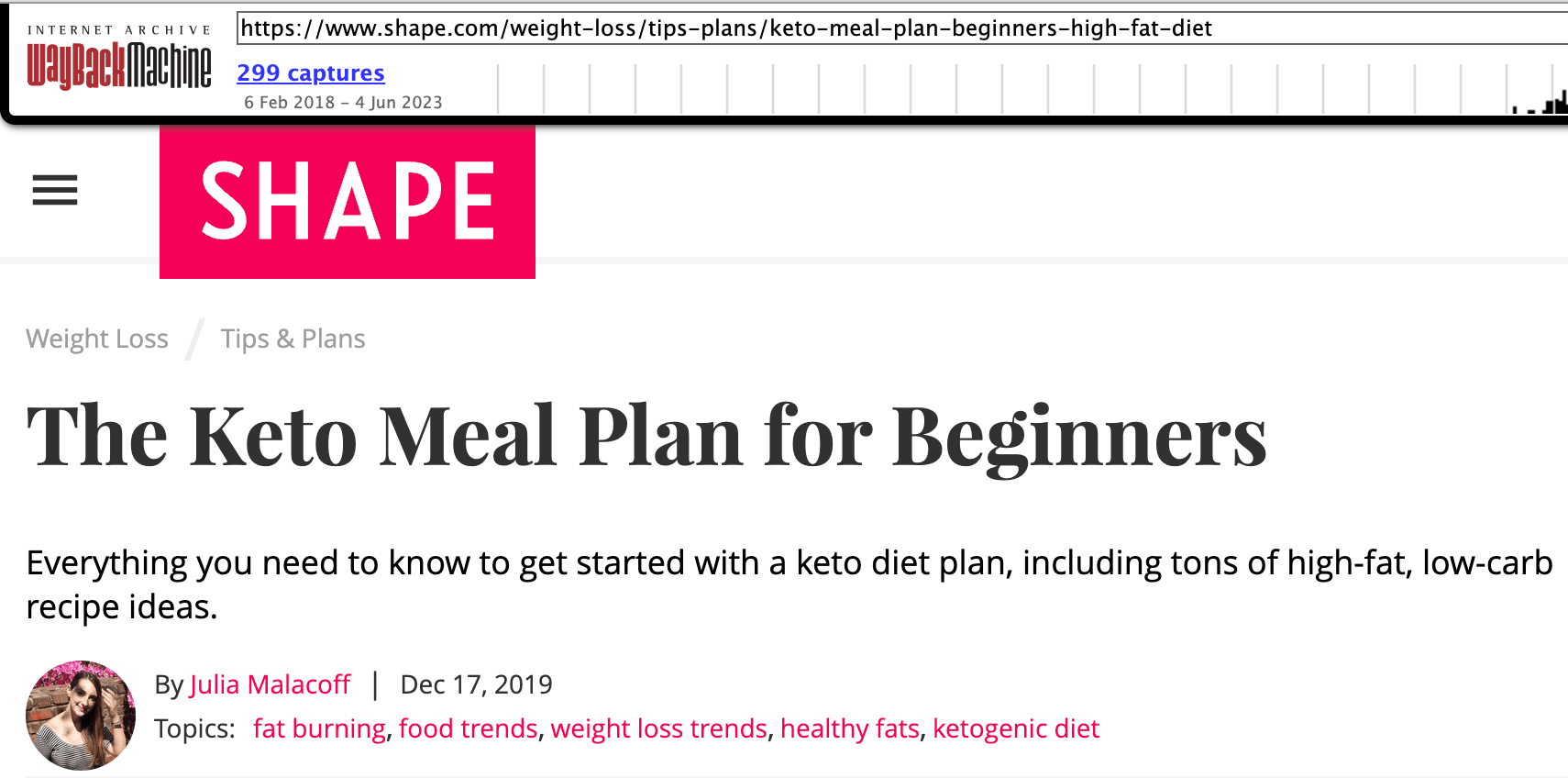

Next, click on the caret next to the URL and click to view it on archive.org. This will show you how the page looked before it disappeared.
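You can also reach the archived copy directly. The Wayback Machine lists every capture of a URL at `https://web.archive.org/web/*/` followed by that URL, and its public availability API at `https://archive.org/wayback/available` returns the closest capture as JSON. Here’s a minimal Python sketch; the second function makes a network request, and the page URL in the example is made up.

```python
import json
from typing import Optional
from urllib.parse import urlencode
from urllib.request import urlopen

def wayback_calendar_url(dead_url: str) -> str:
    """Wayback Machine page listing every capture of dead_url."""
    return f"https://web.archive.org/web/*/{dead_url}"

def closest_snapshot(dead_url: str, timestamp: str = "") -> Optional[str]:
    """Query the Wayback availability API; return the closest capture's URL, or None.

    Makes a network request. timestamp is optional, in YYYYMMDDhhmmss form (may be shortened).
    """
    params = {"url": dead_url}
    if timestamp:
        params["timestamp"] = timestamp
    with urlopen("https://archive.org/wayback/available?" + urlencode(params), timeout=10) as resp:
        data = json.load(resp)
    closest = data.get("archived_snapshots", {}).get("closest")
    if closest and closest.get("available"):
        return closest["url"]
    return None

print(wayback_calendar_url("https://a.example/keto-guide"))
# https://web.archive.org/web/*/https://a.example/keto-guide
```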

If recreating this content makes sense for your site, do it, then reach out to all the people linking to the dead page and pitch yours as a replacement.

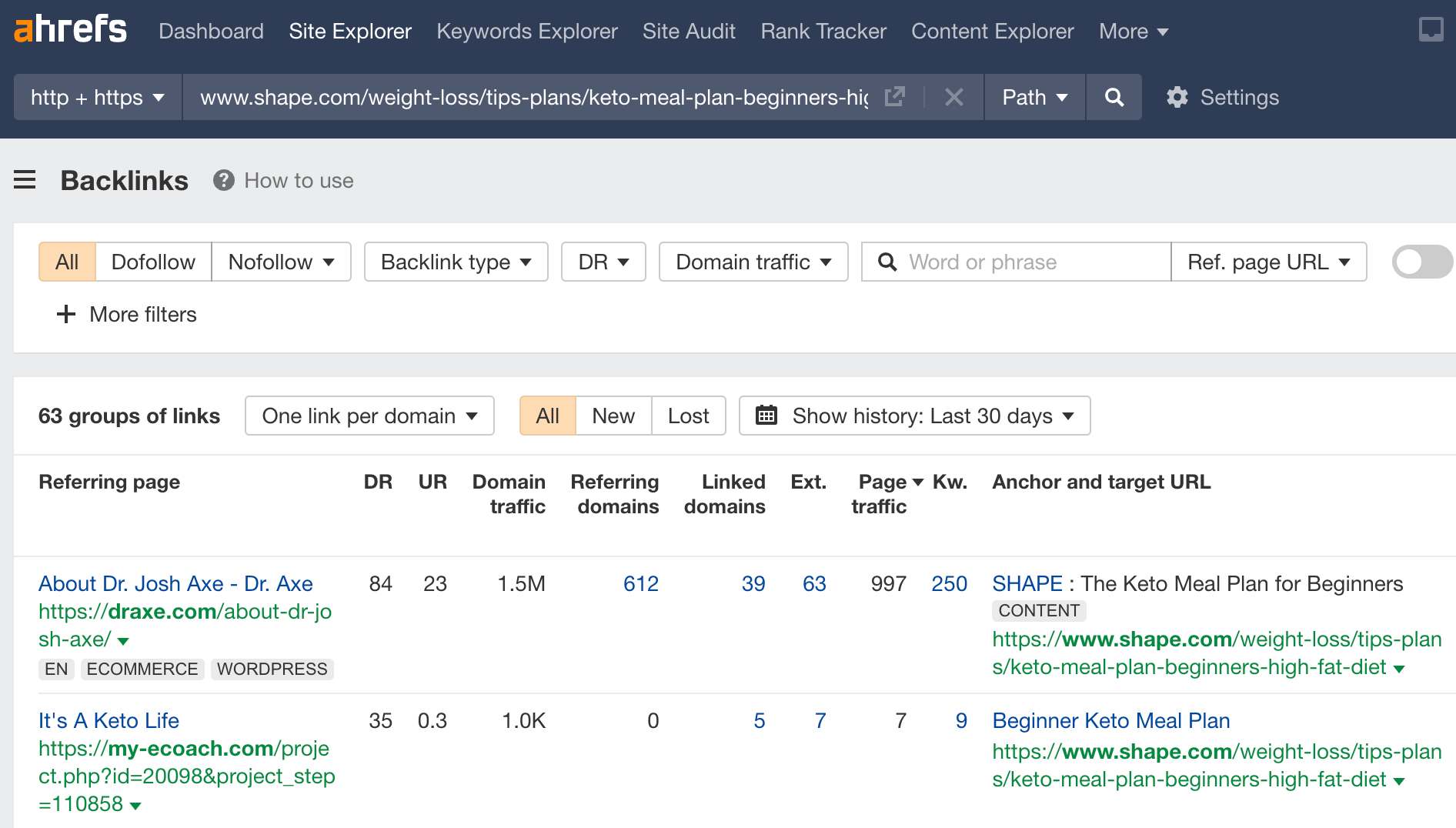

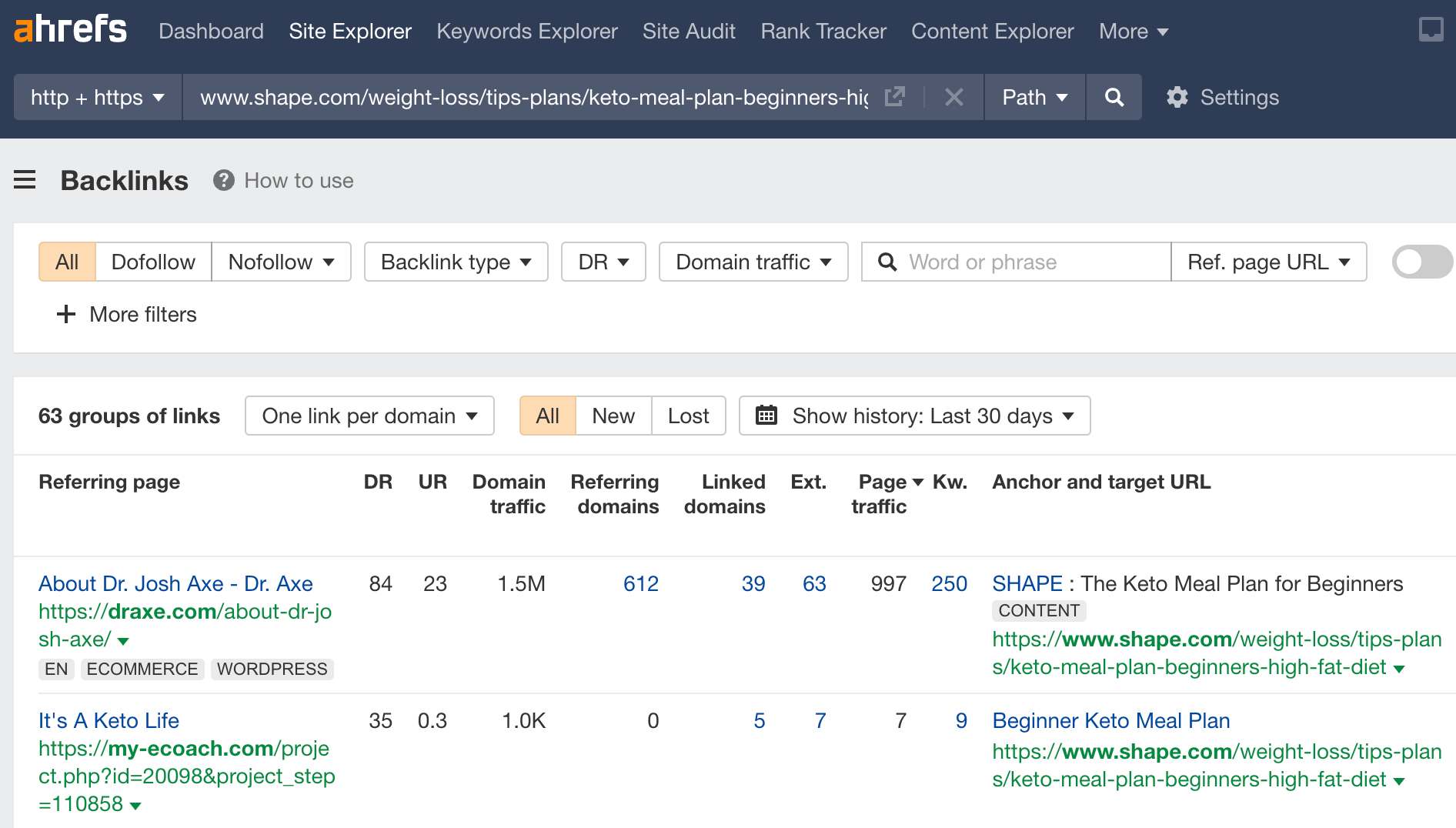

You can find these linkers by entering the dead page’s URL into Site Explorer and going to the Backlinks report.

This technique focuses on turning unlinked mentions of your brand into linked ones.

How to do it

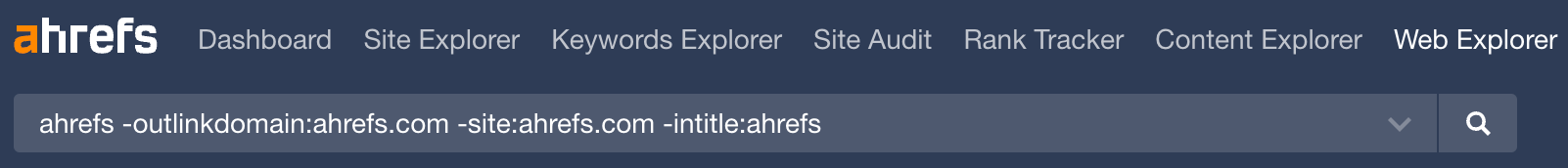

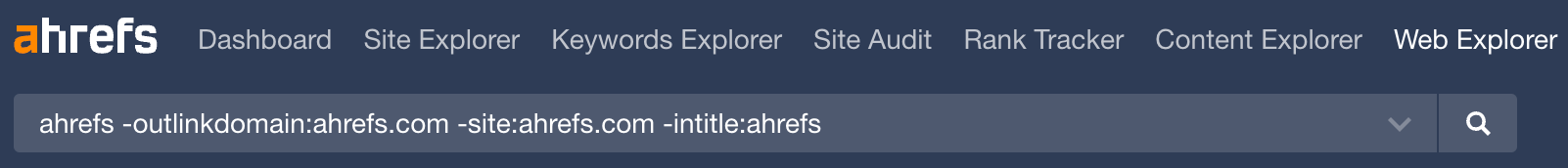

First, you need to find your unlinked mentions. You can do this by running the following search in Ahrefs’ Web Explorer:

[your brand] -outlinkdomain:[yourwebsite].com -site:[yourwebsite].com -intitle:[your brand]

This searches billions of pages for those that mention your brand but don’t link to you.
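If you track several brands or domains, the template is easy to generate. This Python sketch only fills in the operators from the query above; it assumes a single-word brand name, and how Web Explorer handles multi-word brands isn’t covered here.

```python
def unlinked_mentions_query(brand: str, domain: str) -> str:
    """Fill in the Web Explorer template above for a single-word brand and its domain."""
    return " ".join([
        brand,                        # pages that mention the brand...
        f"-outlinkdomain:{domain}",   # ...that don't link to your domain
        f"-site:{domain}",            # ...that aren't on your own site
        f"-intitle:{brand}",          # ...and don't have the brand in their title
    ])

print(unlinked_mentions_query("ahrefs", "ahrefs.com"))
# ahrefs -outlinkdomain:ahrefs.com -site:ahrefs.com -intitle:ahrefs
```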

For example, if you wanted to find unlinked mentions for Ahrefs.com, you’d search for this:

In this case, the search finds over 59 million results:

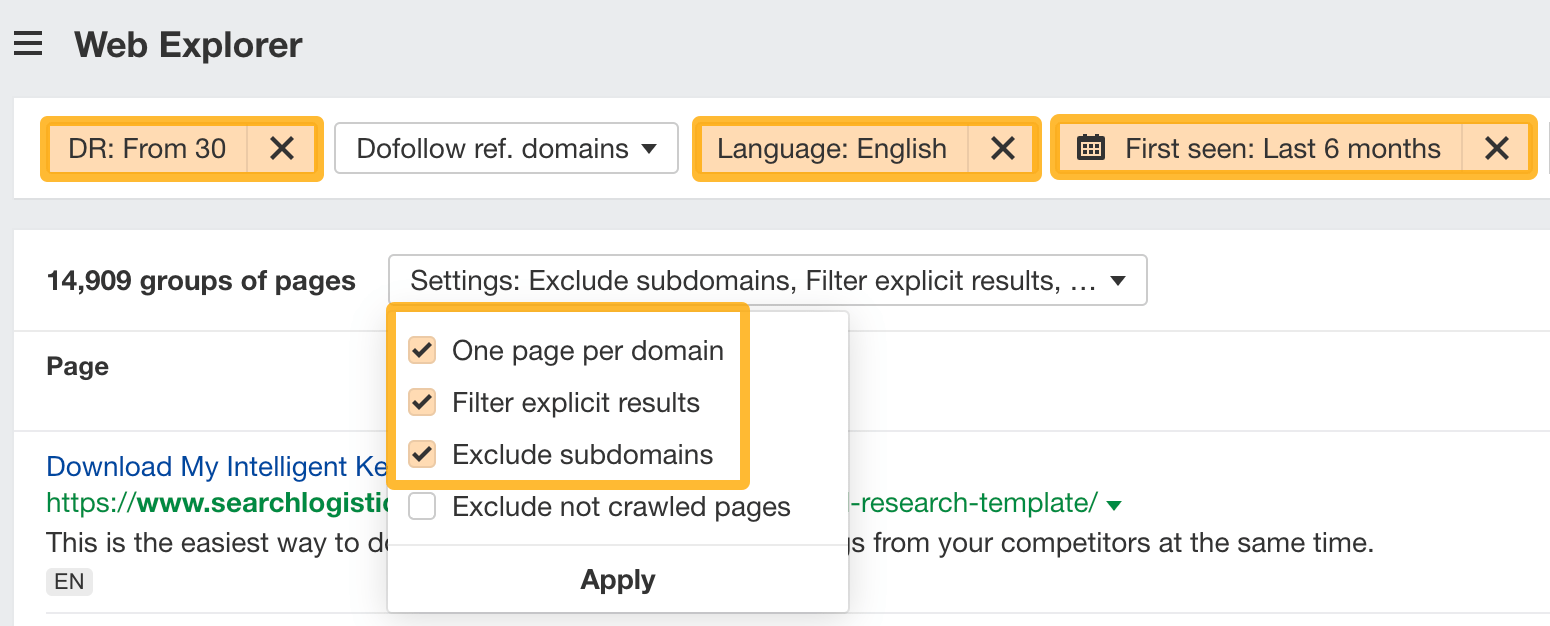

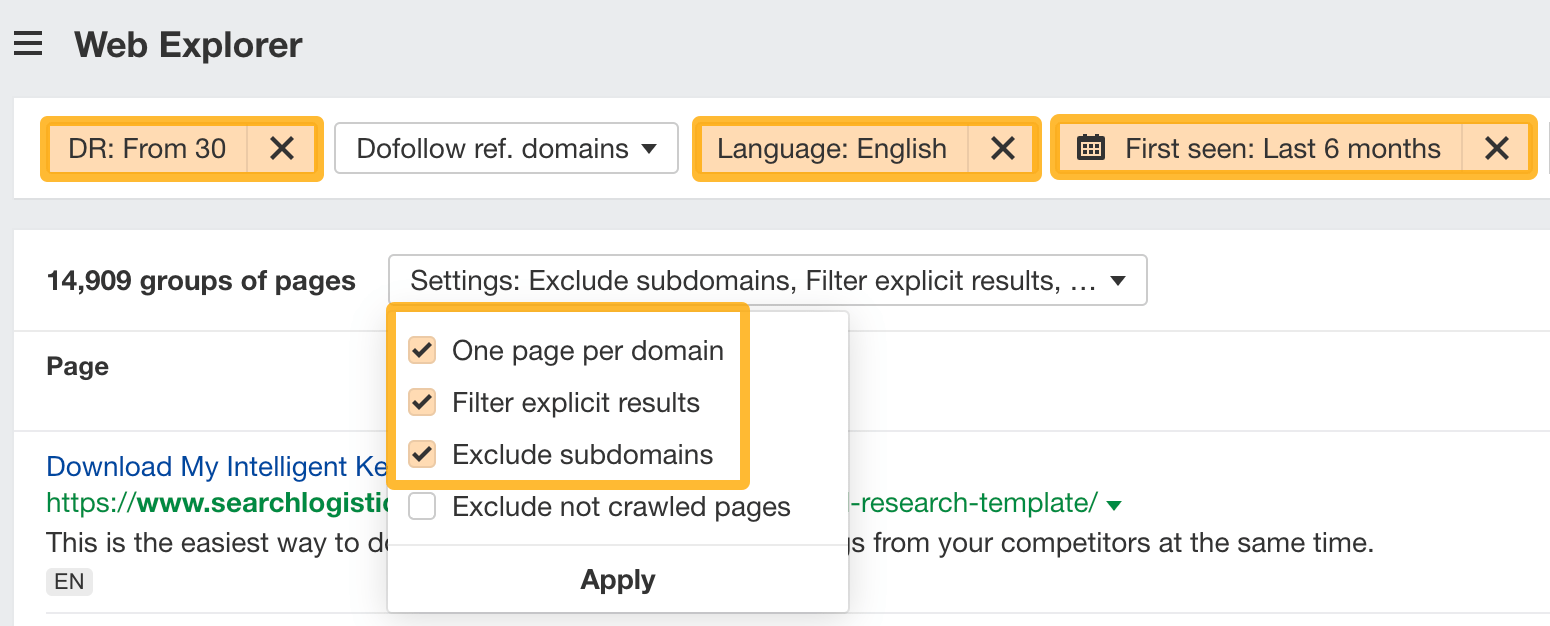

That’s too many to sift through, so I recommend adding these filters:

- Domain Rating: 30+ to remove low-authority websites.

- Language: English to remove those who won’t understand your pitch.

- First seen: 6 months to remove old pages people don’t care about updating.

- One page per domain to remove duplicate websites.

- Filter explicit results to remove results your boss won’t want to see.

- Exclude subdomains to reduce spammy results.
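Those filters live in Web Explorer’s interface, but if you export the results, the same shortlist logic looks roughly like this in Python. The `Result` fields are hypothetical, “6 months” is approximated as 183 days, “one page per domain” keeps the first matching page it sees, and the explicit-content filter is left to the tool because it can’t be reproduced from these fields.

```python
from datetime import date, timedelta
from typing import NamedTuple

class Result(NamedTuple):
    url: str
    domain: str
    domain_rating: int
    language: str       # e.g. "en"
    first_seen: date
    is_subdomain: bool

def shortlist(results: list[Result], today: date,
              min_dr: int = 30, max_age_days: int = 183) -> list[Result]:
    """Apply DR, language, recency, subdomain, and one-page-per-domain filters."""
    seen_domains: set[str] = set()
    kept = []
    for r in results:
        if r.domain_rating < min_dr or r.language != "en":
            continue  # low authority or not English
        if today - r.first_seen > timedelta(days=max_age_days):
            continue  # first seen more than ~6 months ago
        if r.is_subdomain or r.domain in seen_domains:
            continue  # subdomain, or a domain we already kept a page from
        seen_domains.add(r.domain)
        kept.append(r)
    return kept

# Made-up results for illustration.
today = date(2024, 6, 1)
results = [
    Result("https://a.example/p1", "a.example", 50, "en", date(2024, 5, 1), False),
    Result("https://a.example/p2", "a.example", 50, "en", date(2024, 4, 1), False),
    Result("https://b.example/p1", "b.example", 20, "en", date(2024, 5, 1), False),
    Result("https://c.example/p1", "c.example", 60, "de", date(2024, 5, 1), False),
    Result("https://d.example/p1", "d.example", 70, "en", date(2023, 1, 1), False),
    Result("https://blog.e.example/p1", "blog.e.example", 45, "en", date(2024, 5, 1), True),
    Result("https://f.example/p1", "f.example", 35, "en", date(2024, 3, 15), False),
]
print([r.url for r in shortlist(results, today)])
```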

From there, look through the results for unlinked mentions that make sense to pitch.

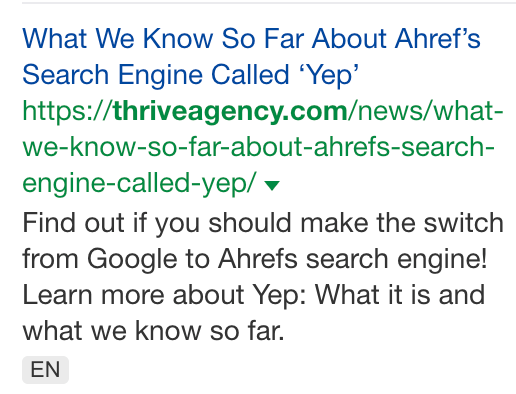

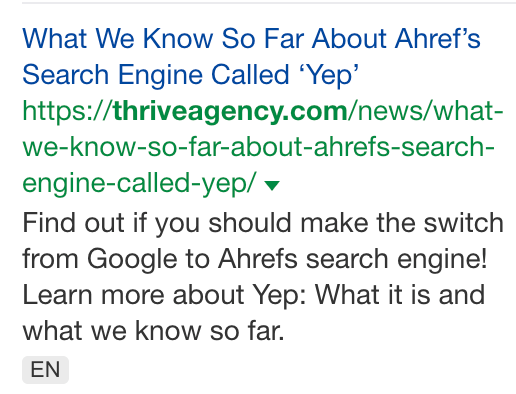

For example, this page discusses our new search engine:

Although the article links to our search engine (Yep.com), it doesn’t link to Ahrefs. That’s especially odd as it mentions “ahrefs” 16 times and talks about us in the intro:

Given that adding a link here would be helpful to readers who aren’t familiar with us, this is an unlinked mention worth chasing.

Looking for another way to earn brand mentions?

Try getting your brand included in listicles.

For example, if you run a cafe in London and you’re not featured in lists of the best cafes in London, that’s an opportunity.

To find relevant listicles, Google this query:

best [business type] in [location] -"[your business name]"
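Here’s a minimal Python sketch that fills in that template and turns it into a Google search URL. The `-"..."` part uses Google’s exclusion operator with an exact phrase, so pages containing your business name are left out. The business type and location in the usage example are illustrative.

```python
from urllib.parse import urlencode

def listicle_query(business_type: str, location: str, business_name: str) -> str:
    """Fill in the template: best [business type] in [location] -"[your business name]"."""
    return f'best {business_type} in {location} -"{business_name}"'

def google_search_url(query: str) -> str:
    """URL-encode the query as a Google search link."""
    return "https://www.google.com/search?" + urlencode({"q": query})

q = listicle_query("chinese restaurants", "london", "Chinese Gourmet")
print(q)  # best chinese restaurants in london -"Chinese Gourmet"
print(google_search_url(q))
```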

For example, if you’re the owner of Chinese Gourmet in London, you might search for something like this:

If you find listicles that don’t include your restaurant (like the one below), reach out and see if they’d be willing to add you.

Just keep in mind that your pitch needs to make sense. If the author of the listicle has never eaten at your restaurant, they’re unlikely to add you just because you asked. You’ll have a better chance of success if you invite them down for a meal and go from there.

This technique could mean various things, so I’ll focus on the “news” part. Essentially, it means promoting newsworthy brand information (e.g., your company’s upcoming IPO) and getting publications to cover it.

How to do it

This technique is the remit of my colleague, Daria Samokish. She successfully got TechCrunch to cover our new search engine, Yep.

She shared everything she did in this X (Twitter) thread:

Keep learning

If you’re looking for even more link-building techniques that work, check out these resources:

Any questions or comments? Let me know on X (Twitter).
