
Google Tag Manager Contains Hidden Data Leaks & Vulnerabilities

Researchers uncover data leaks in Google Tag Manager (GTM) as well as security vulnerabilities, arbitrary script injections and instances of consent for data collection enabled by default. A legal analysis identifies potential violations of EU data protection law.

There are many troubling revelations including that server-side GTM “obstructs compliance auditing endeavors from regulators, data protection officers, and researchers…”

GTM, developed by Google in 2012 to assist publishers in implementing third-party JavaScript scripts, is currently used on as many as 28 million websites. The research study evaluates both versions of GTM, the Client-side and the newer Server-side GTM that was introduced in 2020.

The analysis, undertaken by researchers and legal experts, revealed a number of issues inherent to the GTM architecture.

An examination of 78 Client-side Tags, 8 Server-side Tags, and two Consent Management Platforms (CMPs) revealed hidden data leaks, instances of Tags bypassing GTM permission systems in order to inject scripts, and consent set to enabled by default without any user interaction.

A significant finding pertains to the Server-side GTM. Server-side GTM works by loading and executing tags on a remote server, which creates the perception of the absence of third parties on the website.

However, the study showed that this architecture allows tags running on the server to clandestinely share users’ data with third parties, circumventing browser restrictions and security measures like the Content-Security-Policy (CSP).
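To make the CSP point concrete, here is a minimal sketch of why a browser-enforced policy cannot see what a server forwards. It is a simplified model, not GTM code: the `Hop` type, the hostnames, and the allowlist are all hypothetical, and a real CSP has many more directives than a single list of origins.

```typescript
// Simplified model: CSP is enforced by the browser, so it only sees browser hops.
// All hostnames are hypothetical placeholders.
type Hop = { sender: "browser" | "server"; destination: string };

// Origins the page's CSP would let the browser contact (assumed policy).
const cspAllowlist: string[] = ["https://example.com", "https://sgtm.example.com"];

function blockedByCsp(hop: Hop, allowlist: string[]): boolean {
  // Requests made by a remote server are never evaluated against the page's CSP.
  if (hop.sender !== "browser") {
    return false;
  }
  return allowlist.indexOf(hop.destination) === -1;
}

// A data flow reaches its final destination only if no hop is blocked.
function flowSucceeds(flow: Hop[], allowlist: string[]): boolean {
  return flow.every((hop) => !blockedByCsp(hop, allowlist));
}

// Client-side tag: the browser contacts the third party directly.
const clientSideFlow: Hop[] = [
  { sender: "browser", destination: "https://tracker.example.net" },
];

// Server-side tag: the browser contacts a first-party endpoint, which forwards the data.
const serverSideFlow: Hop[] = [
  { sender: "browser", destination: "https://sgtm.example.com" },
  { sender: "server", destination: "https://tracker.example.net" },
];

const clientSideLeaks = flowSucceeds(clientSideFlow, cspAllowlist); // false
const serverSideLeaks = flowSucceeds(serverSideFlow, cspAllowlist); // true
```

In this model the same third-party destination is blocked when the browser contacts it directly, but reached when a first-party server relays the data, because the second hop never passes through the browser.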

Methodology Used In Research On GTM Data Leaks

The researchers are from Centre Inria de l’Université, Centre Inria d’Université Côte d’Azur, Centre Inria de l’Université, and Utrecht University.

The methodology used by the researchers was to buy a domain and install GTM on a live website.

The research paper explains in detail:

“To conduct experiments and set up the GTM infrastructure, we bought a domain – we call it example.com here – and created a public website containing one basic webpage with a paragraph of text and an HTML login form. We have included a login form since Senol et al. …have recently found that user input is often leaked from the forms, so we decided to test whether Tags may be responsible for such leakage.

The website and the Server-side GTM infrastructure were hosted on a virtual machine we rented on the Microsoft Azure cloud computing platform located in a data center in the EU.

…We used the ‘profiles’ functionality of the browser to start every experiment in a fresh environment, devoid from cookies, local storage and other technologies than maintain a state.

The browser, visiting the website, was run on a computer connected to the Internet through an institutional network in the EU.

To create Client- and Server-side GTM installations, we created a new Google account, logged into it and followed the suggested steps in the official GTM documentation.”

The results of the analysis contain multiple critical findings, including that the “Google Tag” facilitates collecting multiple types of users’ data without consent and at the time of analysis it presented a security vulnerability.

Data Collection Is Hidden From Publishers

Another discovery was the extent of data collection by the “Pinterest Tag,” which garnered a significant amount of user data without disclosing it to the Publisher.

What some may find disturbing is that publishers who deploy these tags may be unaware of the data leaks, and that the tools they rely on to monitor data collection don’t notify them of these issues.

The researchers documented their findings:

“We observe that the data sent by the Pinterest Tag is not visible to the Publisher on the Pinterest website, where we logged in to observe Pinterest’s disclosure about collected data.

Moreover, we find that the data collected by the Google Tag about form interaction is not shown in the Google Analytics dashboard.

This finding demonstrates that for such Tags, Publishers are not aware of the data collected by the Tags that they select.”

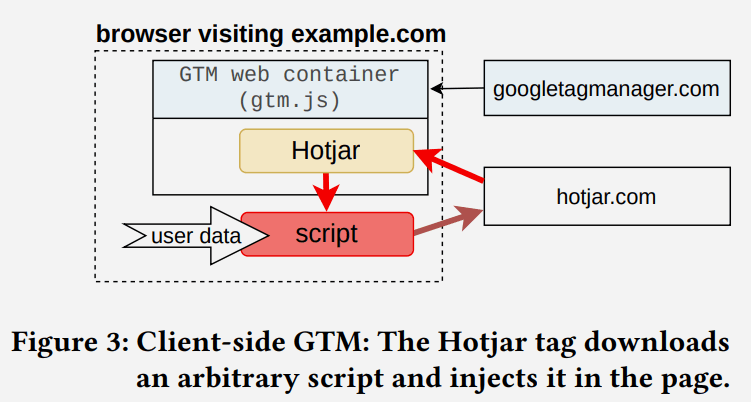

Injections of Third Party Scripts

Google Tag Manager has a feature for controlling tags, including third-party tags, called Web Containers. Tags can run inside a sandbox that limits their functionality. The sandbox also uses a permission system, with one permission called inject_script that allows a tag to download and run any (arbitrary) script outside of the Web Container.

The inject_script permission allows the tag to bypass the GTM permission system and gain access to all browser APIs and the DOM.

Screenshot Illustrating Script Injection

The researchers analyzed 78 officially supported Client-side tags and discovered 11 tags that don’t have the inject_script permission but can inject arbitrary scripts. Seven of those eleven tags were provided by Google.

They write:

“11 out of 78 official Client-side tags inject a third-party script into the DOM bypassing the GTM permission system; and GTM “Consent Mode” enables some of the consent purposes by default, even before the user has interacted with the consent banner.”

The situation is even worse because it’s not just a privacy vulnerability, it’s also a security vulnerability.

The research paper explains the meaning of what they uncovered:

“This finding shows that the GTM permission system implemented in the Web Container sandbox allows Tags to insert arbitrary, uncontrolled scripts, thus opening potential security and privacy vulnerabilities to the website. We have disclosed this finding to Google via their Bug Bounty online system.”
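The kind of check behind this finding can be sketched as a comparison between what a tag declares and what it is observed to do. This is only an illustration, not the researchers' tooling: the `TagObservation` record, the tag names, and the URLs are hypothetical, and it assumes an auditor has already recorded which external scripts each tag added to the page.

```typescript
// Hypothetical record of one tag, as an auditor might note it down.
type TagObservation = {
  name: string;
  declaredPermissions: string[]; // permissions listed for the tag
  injectedScriptUrls: string[]; // external scripts observed being added to the DOM
};

// Return the names of tags that injected a script without declaring inject_script.
function findUndeclaredInjectors(tags: TagObservation[]): string[] {
  return tags
    .filter(
      (tag) =>
        tag.injectedScriptUrls.length > 0 &&
        tag.declaredPermissions.indexOf("inject_script") === -1
    )
    .map((tag) => tag.name);
}

// Made-up observations for three placeholder tags.
const observations: TagObservation[] = [
  {
    name: "Tag A",
    declaredPermissions: ["inject_script"],
    injectedScriptUrls: ["https://cdn.example.net/a.js"],
  },
  {
    name: "Tag B",
    declaredPermissions: ["send_pixel"],
    injectedScriptUrls: ["https://cdn.example.net/b.js"],
  },
  { name: "Tag C", declaredPermissions: ["send_pixel"], injectedScriptUrls: [] },
];

const flagged = findUndeclaredInjectors(observations); // ["Tag B"]
```

Only "Tag B" is flagged here: it injects a script while declaring no inject_script permission, which is the pattern the study reports for 11 of the 78 official tags.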

Consent Management Platforms (CMP)

Consent Management Platforms (CMPs) are a technology for managing the consent users have granted for the use of their data. They are a way to manage ad personalization, user data storage, analytics data storage and so on.

Google’s documentation for CMP usage states that setting the consent mode defaults is the responsibility of the marketers and publishers who use GTM.

The defaults can be set to deny ad personalization by default, for example.

The documentation states:

“Set consent defaults

We recommend setting a default value for each consent type you are using. The consent state values in this article are only examples. You are responsible for making sure that default consent mode is set for each of your measurement products to match your organization’s policy.”
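As a rough illustration of what setting a default for each consent type can look like, the sketch below builds a deny-by-default configuration. It assumes a policy of denying everything until the user chooses, which may not match every organization's policy, and it only covers the five GTM consent variables named in this article. The helper name `denyAllDefaults` is hypothetical. On a real page the resulting object would be passed to Google's `gtag("consent", "default", ...)` call before any tags fire.

```typescript
type ConsentState = "granted" | "denied";

// The five GTM consent variables named in this article; a real setup may use more.
const consentTypes: string[] = [
  "ad_storage",
  "analytics_storage",
  "functionality_storage",
  "personalization_storage",
  "security_storage",
];

// Build an explicit default for every listed consent type (assumed policy: deny all).
function denyAllDefaults(types: string[]): Record<string, ConsentState> {
  const defaults: Record<string, ConsentState> = {};
  types.forEach((type) => {
    defaults[type] = "denied";
  });
  return defaults;
}

// Arguments as they would be passed to gtag("consent", "default", ...) on a page.
const consentDefaultCommand: [string, string, Record<string, ConsentState>] = [
  "consent",
  "default",
  denyAllDefaults(consentTypes),
];
```

Generating the object from a single list of consent types makes it harder to leave one of them without a default by accident.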

What the researchers discovered is that CMPs for Client-side GTMs are loaded in an undefined state on the webpage and that becomes problematic when a CMP does not load default variables (referred to as undefined variables).

The problem is that GTM considers undefined variables to mean that users have given their consent to all of the undefined variables, even though the user has not consented in any way.

The researchers explained what’s happening:

“Surprisingly, in this case, GTM considers all such undefined variables to be accepted by the end user, even though the end user has not interacted with the consent banner of the CMP yet.

Among two CMPs tested (see §3.1.1), we detected this behavior for the Consentmanager CMP.

This CMP sets a default value to only two consent variables – analytics_storage and ad_storage – leaving three GTM consent variables – security_storage, personalization_storage, functionality_storage – and consent variables specific to this CMP – e.g., cmp_purpose_c56 which corresponds to the “Social Media” purpose – in undefined state.

These extra variables are hence considered granted by GTM. As a result, all the Tags that depend on these four consent variables get executed even without user consent.”
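The behavior described in the quote can be modeled in a few lines. This is a sketch of the reported logic, not GTM's actual implementation: the function names are hypothetical, and the two defaults set by the CMP are assumed to be "denied" because the quoted passage does not state their values.

```typescript
type ConsentState = "granted" | "denied";
type ConsentDefaults = Record<string, ConsentState | undefined>;

// Reported behavior: a consent variable with no default is treated as granted.
function effectiveState(defaults: ConsentDefaults, variable: string): ConsentState {
  const state = defaults[variable];
  return state === undefined ? "granted" : state;
}

// List the variables that have no explicit default and so fall through to granted.
function unsetVariables(defaults: ConsentDefaults, required: string[]): string[] {
  return required.filter((variable) => defaults[variable] === undefined);
}

// Defaults as described for the tested CMP (values assumed to be "denied").
const cmpDefaults: ConsentDefaults = {
  analytics_storage: "denied",
  ad_storage: "denied",
};

// Consent variables named in the quoted passage.
const requiredVariables: string[] = [
  "analytics_storage",
  "ad_storage",
  "security_storage",
  "personalization_storage",
  "functionality_storage",
  "cmp_purpose_c56",
];

const missing = unsetVariables(cmpDefaults, requiredVariables);
// ["security_storage", "personalization_storage", "functionality_storage", "cmp_purpose_c56"]
```

A check like `unsetVariables` could run before a container is published, so that any variable left without a default is caught before it is silently treated as consent.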

Legal Implications

The research paper notes that European Union privacy laws, namely the General Data Protection Regulation (GDPR) and the ePrivacy Directive (ePD), regulate the processing of user data and the use of tracking technologies, for example by requiring consent for the storage of cookies and other tracking technologies, and impose significant fines for violations.

A legal analysis of the Client-Side GTM flagged a total of seven potential violations.

Seven Potential Violations Of Data Protection Laws

- Potential violation 1. CMP scanners often miss purposes.

- Potential violation 2. Mapping CMP purposes to GTM consent variables is not compliant.

- Potential violation 3. GTM purposes are limited to client-side storage.

- Potential violation 4. GTM purposes are not specific nor explicit.

- Potential violation 5. Defaulting consent variables to “accepted” means that Tags run without consent.

- Potential violation 6. Google Tag sends data independently of user’s consent decisions.

- Potential violation 7. GTM allows Tag Providers to inject scripts exposing end users to security risks.

Legal analysis of Server-Side GTM

The researchers write that the findings raise legal concerns about GTM in its current state. They assert that the system introduces more legal challenges than resolutions, complicating compliance efforts and posing a challenge for regulators to monitor effectively.

These are some of the factors that caused concern about the ability to comply with regulations:

- Complying with data subject rights is hard for the Publisher

For both Client- and Server-Side GTM there is no easy way for a publisher to comply with a request for access to collected data as required by Article 15 of the GDPR. The publisher would have to manually track down every Data Collector to comply with that legal request.

- Built-in consent raises trust issues

When using tags with built-in consent, publishers are forced to trust that Tag Providers actually implement the built-in consent within the code. There’s no easy way for a publisher to review the code to verify whether the Tag Provider is actually ignoring the consent and collecting user information. Reviewing the code is impossible for official tags that are sandboxed within the gtm.js script. The researchers state that reviewing the code for compliance “requires heavy reverse engineering.”

- Server-side GTM is invisible for regulatory monitoring and auditing

The researchers write that Server-side GTM obstructs compliance auditing because the data collection occurs remotely on a server.

- Consent is hard to configure on GTM Server Containers

Consent management tools are missing in GTM Server Containers, which prevents CMPs from displaying the purposes and the Data Collectors as required by regulations.

Auditing is described as highly difficult:

“Moreover, auditing and monitoring is exclusively attainable by only contacting the Publisher to grant access to the configuration of the GTM Server Container.

Furthermore, the Publisher is able to change the configuration of the GTM Server Container at any point in time (e.g., before any regulatory investigation), masking any compliance check.”

Conclusion: GTM Has Pitfalls And Flaws

The researchers gave GTM poor marks for security and for its non-compliant defaults, stating that it introduces more legal issues than solutions while complicating compliance with regulations and making it hard for regulators to monitor for compliance.

Read the research paper:

Google Tag Manager: Hidden Data Leaks and its Potential Violations under EU Data Protection Law

Download the PDF of the research paper here.

Featured Image by Shutterstock/Praneat
