
Twitter Is Looking to Re-Open its Account Verification Process, Seeks Feedback on New Guidelines

Get ready to state your case – after shutting down its account verification application process back in 2017, Twitter says that it’s now looking to re-open applications for account verification, which could give you a chance to get your own blue checkmark, making you infinitely more important than others on the platform.

We’re planning to relaunch verification in 2021, but first we want to hear from you.

Help us shape our approach to verification on Twitter by letting us know what you think. Take a look at our draft policy and submit your #VerificationFeedback here: https://t.co/0vmrpVtXGJ

— Twitter Support (@TwitterSupport) November 24, 2020

But hang on – as you can see, the actual policy around what verification means isn’t set in stone yet.

As Twitter notes, it’s currently seeking feedback from the community on what people expect the blue checkmark to represent, in order to revamp its previous, flawed process. That process ended up being a mess because Twitter employees applied the qualifiers inconsistently, with no shared understanding of who should be approved and who should not.

That’s what led to the initial pause on the public application process – because there was confusion around what the blue checkmark meant, no one really knew who should be approved for one and who should not. That led to Twitter verifying the profile of a reported white supremacist leader, despite, at that time, looking to take more action against hate speech. Because there was uncertainty over whether the badge signified ‘identity’ or ‘endorsement’, Twitter shut the whole thing down, and while certain accounts have still been verified since then, public applications have been off the table entirely for three years.

Now they could be coming back – but Twitter first needs to ensure that there’s clear understanding about what verification actually means, both internally and externally.

In order to address this, Twitter’s published a proposed overview of which accounts should be considered for verification, along with a new survey to seek feedback on its process.

The first tier of profiles that Twitter says should be eligible for verification are ‘Notable Accounts’, with the blue tick signifying that the account is an authentic representation of that person or entity, serving an immediate public purpose.

The six types of accounts Twitter has filed under this heading are:

- Government

- Companies, Brands and Non-Profit Organizations

- News

- Entertainment

- Sports

- Activists, Organizers, and Other Influential Individuals

Those make sense – marking official accounts in these categories serves a clear purpose, and while its public application process has been paused, Twitter has continued to approve verification for accounts in these categories.

But the more complex and divisive arguments around verification come from the public, when people want their own blue checkmark. If you’ve got lots of followers and you spend a lot of time on Twitter, why shouldn’t you get your own checkmark, right?

This comes down to the core question around Twitter verification – does the blue checkmark simply signify identity, in which case anyone who can provide their ID documents should qualify? Or does it signify celebrity, which is a more nebulous and subjective criterion?

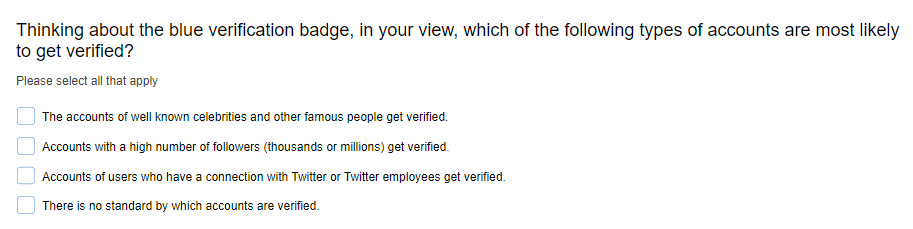

That’s what Twitter’s now trying to determine – in the public survey on expectations around verification, Twitter looks to glean insight into what people think the blue tick means.

Twitter also seeks to clarify whether people see the verification badge as a general qualifier of identity, or as an endorsement of that person from Twitter.

The distinction here is key – as noted, Twitter has previously given blue checkmarks to users of questionable background, and if people see that as Twitter lending its support to that person, as opposed to simply confirming their identity, then that’s a problem for the brand.

As such, Twitter needs to clarify what its badge actually represents – but even so, this doesn’t seem like the best way to address these issues and formulate a better policy.

Back in June, it seemed like Twitter was going to go with a new form of verification that would signify that a user had confirmed their identity, with reverse engineering expert Jane Manchun Wong uncovering this explanation.

That relates to ‘confirming’ your Twitter account, not verification exactly, which seemed like it could be a different form of profile badge. That would cater to those looking to confirm their identity, who were not considered to be in the top tier of qualifiers for account verification.

Maybe that’s what Twitter is looking to go with – as noted by Twitter:

“The blue verified badge isn’t the only way we are planning to distinguish accounts on Twitter. Heading into 2021, we’re committed to giving people more ways to identify themselves, such as new account types and labels. We’ll share more in the coming weeks.”

Maybe, then, Twitter will make account verification available to ‘Notable Accounts’ only, those which meet a strict set of criteria based on celebrity or status, while also adding another tier for accounts that have confirmed their ID documents, with a different type of badge.

That seems to cater to the key elements – and while there will still be debates over what counts as ‘notable’, the criteria listed above seem fairly clear. The ‘entertainment’ category could lead to questions, as could the ‘other influential individuals’ marker. But if Twitter has an internal review panel to approve such accounts, that could be a more workable solution.

Twitter has also been looking to add dedicated badges for bot accounts, to provide more transparency in interactions, and that could be another category it looks to implement.

So we could soon have three different types of account badges to signify who or what each is.

Will that clear things up? Probably not, especially given that many accounts which, under these revised guidelines, should never have been verified will still be circulating on the platform with their badges intact, maintaining a level of confusion over what the badge means.

But maybe, if Twitter revises the rules and removes the badges from those who no longer qualify based on the update, that could work.

Maybe. Still seems like it’ll cause a lot of headaches.

You can take the Twitter verification survey yourself here.
