The new app is called watchGPT and as I tipped off already, it gives you access to ChatGPT from your Apple Watch. Now the $10,000 question (or more accurately the $3.99 question, as that is the one-time cost of the app) is why having ChatGPT on your wrist is remotely necessary, so let’s dive into what exactly the app can do.

NEWS

Deep Science: Robot perception, acoustic monitoring, using ML to detect arthritis

Research papers come out far too rapidly for anyone to read them all, especially in the field of machine learning, which now affects (and produces papers in) practically every industry and company. This column aims to collect the most relevant recent discoveries and papers — particularly in but not limited to artificial intelligence — and explain why they matter.

The topics in this week’s Deep Science column are a real grab bag that range from planetary science to whale tracking. There are also some interesting insights from tracking how social media is used and some work that attempts to shift computer vision systems closer to human perception (good luck with that).

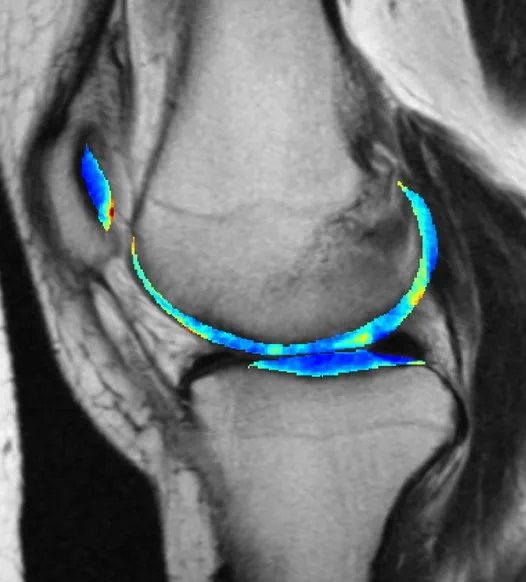

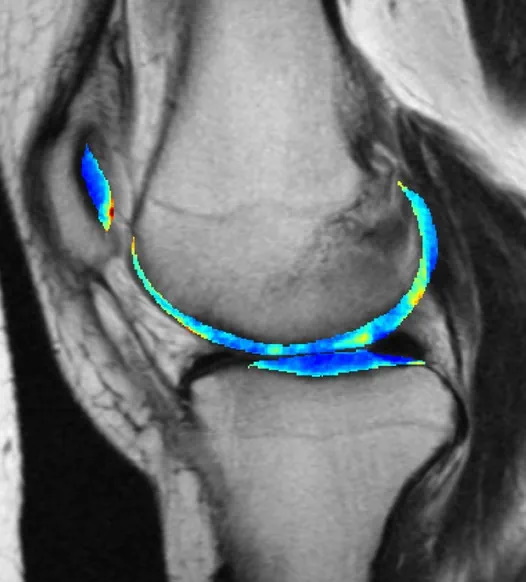

ML model detects arthritis early

One of machine learning’s most reliable use cases is training a model on a target pattern, say a particular shape or radio signal, and setting it loose on a huge body of noisy data to find possible hits that humans might struggle to perceive. This has proven useful in the medical field, where early indications of serious conditions can be spotted with enough confidence to recommend further testing.

This arthritis detection model looks at X-rays, just as the doctors who do that kind of work do. But by the time osteoarthritis is visible to human perception, the damage is already done. A long-running project that tracked thousands of people for seven years made for a great training set, making the nearly imperceptible early signs of osteoarthritis visible to the AI model, which predicted the condition with 78% accuracy three years out.

The bad news is that knowing early doesn’t necessarily mean it can be avoided, as there’s no effective treatment. But that knowledge can be put to other uses — for example, much more effective testing of potential treatments. “Instead of recruiting 10,000 people and following them for 10 years, we can just enroll 50 people who we know are going to be getting osteoarthritis … Then we can give them the experimental drug and see whether it stops the disease from developing,” said co-author Kenneth Urish. The study appeared in PNAS.

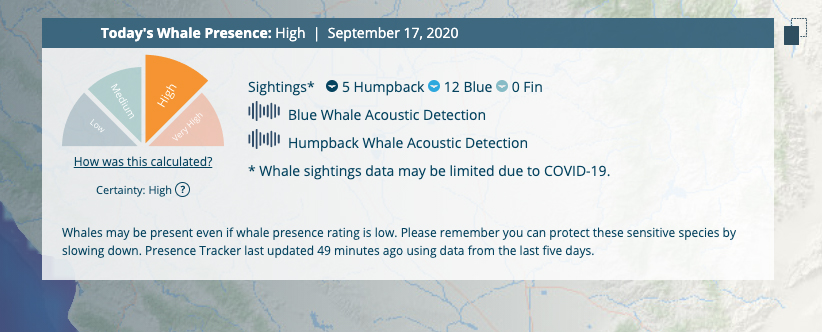

Using acoustic monitoring to preemptively save the whales

It’s amazing to think that ships still collide with and kill large whales on a regular basis, but it’s true. Voluntary speed reductions haven’t been much help, but a smart, multisource system called Whale Safe is being put in play in the Santa Barbara Channel that could hopefully give everyone a better idea of where the creatures are in real time.

The system uses underwater acoustic monitoring, near-real-time forecasting of likely feeding areas, actual sightings and a dash of machine learning (to identify whale calls quickly) to produce a prediction for whale presence along a given course. Large container ships can then make small adjustments well ahead of time instead of trying to avoid a pod at the last minute.

“Predictive models like this give us a clue for what lies ahead, much like a daily weather forecast,” said Briana Abrahms, who led the effort from the University of Washington. “We’re harnessing the best and most current data to understand what habitats whales use in the ocean, and therefore where whales are most likely to be as their habitats shift on a daily basis.”

Incidentally, Salesforce founder Marc Benioff and his wife Lynne helped establish the UC Santa Barbara center that made this possible.

Facebook Faces Yet Another Outage: Platform Encounters Technical Issues Again

Updated: It seems that today’s issues with Facebook haven’t affected as many users as the last time. A smaller group of people appears to be impacted this time around, which is a relief compared to the larger incident before. Nevertheless, it’s still frustrating for those affected, and hopefully the issues will be resolved soon by the Facebook team.

Facebook had another problem today (March 20, 2024). According to Downdetector, a website that shows when other websites are not working, many people had trouble using Facebook.

This isn’t the first time Facebook has had issues. Just a little while ago, there was another problem that stopped people from using the site. Today, when people tried to use Facebook, it didn’t work like it should. People couldn’t see their friends’ posts, and sometimes the website wouldn’t even load.

Downdetector, which watches out for problems on websites, showed that lots of people were having trouble with Facebook. People from all over the world said they couldn’t use the site, and they were not happy about it.

When websites like Facebook have problems, it affects a lot of people. It’s not just about not being able to see posts or chat with friends. It can also impact businesses that use Facebook to reach customers.

Since Facebook owns Messenger and Instagram, the problems with Facebook also meant that people had trouble using these apps. It made the situation even more frustrating for many users, who rely on these apps to stay connected with others.

During this recent problem, one thing is obvious: the internet is always changing, and even big websites like Facebook can have problems. While people wait for Facebook to fix the issue, it shows us how easily things online can go wrong. It’s a good reminder that we should have backup plans for staying connected online, just in case something like this happens again.

NEWS

We asked ChatGPT what will be Google (GOOG) stock price for 2030

Investors in Alphabet Inc. (NASDAQ: GOOG) stock have reaped significant benefits from the company’s robust financial performance over the last five years. Google’s dominance in the online advertising market has been a key driver of the company’s consistent revenue growth and impressive profit margins.

In addition, Google has expanded its operations into related fields such as cloud computing and artificial intelligence. These areas show great promise as future growth drivers, making them increasingly attractive to investors. Notably, Alphabet’s stock price has been rising due to investor interest in the company’s recent initiatives in the fast-developing field of artificial intelligence (AI), adding generative AI features to Gmail and Google Docs.

However, when it comes to predicting the future pricing of a corporation like Google, there are many factors to consider. With this in mind, Finbold turned to the artificial intelligence tool ChatGPT to suggest a likely pricing range for GOOG stock by 2030. Although the tool was unable to give a definitive price range, it did note the following:

“Over the long term, Google has a track record of strong financial performance and has shown an ability to adapt to changing market conditions. As such, it’s reasonable to expect that Google’s stock price may continue to appreciate over time.”

GOOG stock price prediction

When estimating a future price range, it is essential to consider a variety of measures in addition to the AI chat tool, including deep learning algorithms and the views of stock market experts.

To compare with ChatGPT’s projection, Finbold collected forecasts from CoinPriceForecast, a finance prediction tool that utilizes machine self-learning technology, to anticipate Google’s stock price by the end of 2030.

According to the most recent long-term estimate, which Finbold obtained on March 20, the price of Google will rise beyond $200 in 2030 and touch $247 by the end of that year, which would indicate a 141% gain from today’s price.

For a nearer-term time frame, the majority of Wall Street analysts have assigned Google a “strong buy” recommendation. Notably, 36 of the 48 analysts have recommended a “strong buy,” while seven have advocated a “buy.” The remaining five analysts have given a “hold” rating.

The average price projection for Alphabet stock over the last three months has been $125.32; this objective represents a 22.31% upside from its current price. It’s interesting to note that the maximum price forecast for the next year is $160, representing a gain of 56.16% from the stock’s current price of $102.46.
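For readers who want to check the percentages quoted above, here is a minimal sketch of the arithmetic. It assumes every figure is measured against the same $102.46 current price, which the article states for the analyst targets but not explicitly for the 2030 forecast. The function name `percent_change` and the dictionary labels are illustrative and do not come from Finbold.

```python
def percent_change(current: float, target: float) -> float:
    """Return the percentage change from the current price to the target price."""
    return (target - current) / current * 100


# Current GOOG price quoted in the article.
current_price = 102.46

# Price targets quoted in the article; the labels are illustrative.
targets = {
    "average analyst target": 125.32,
    "maximum analyst target": 160.00,
    "end-of-2030 forecast": 247.00,  # assumed to use the same base price
}

for label, target in targets.items():
    change = percent_change(current_price, target)
    print(f"{label}: ${target:.2f} -> {change:.2f}% upside")
```

Under that assumption, the results line up with the article's 22.31%, 56.16% and roughly 141% figures.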

While the outlook for Google stock may be positive, it’s important to keep in mind that some potential challenges and risks could impact its performance, including competition from ChatGPT itself, which could affect Google’s price.

Disclaimer: The content on this site should not be considered investment advice. Investing is speculative. When investing, your capital is at risk.

NEWS

This Apple Watch app brings ChatGPT to your wrist — here’s why you want it

ChatGPT feels like it is everywhere at the moment; the AI-powered tool is rapidly starting to feel like internet-connected home devices, where you are left wondering if your flower pot really needed Bluetooth. However, after hearing about a new Apple Watch app that brings ChatGPT to your favorite wrist computer, I’m actually convinced this one is worth checking out.
